Inspiration

Our dreams are not static images or linear videos; they are interactive, spatial, and inherently unstable memories. Most current generative AI tools stop at text-to-image or text-to-video and fail to capture the fluid nature of how we dream. With Dream Sculpt, we used Gemini 3 to create a medium where the user doesn’t just watch a dream but shapes and experiences it.

What it does

Dream Sculpt is a web-based immersive experience that transforms a user’s dream description into an explorable 3D world.

- Real-time Object Sculpting: The world is populated by the objects described in the user’s prompt. Their 3D models are converted into point clouds and displayed dynamically in the scene. Users can revise their dream, and the world updates coherently.

- Immersive Navigation: Users enter a first-person, navigable environment where objects assemble, flicker, and dissolve in point-cloud form as the user moves forward.

- Gesture-Based Interaction: Using hand tracking, users can physically manipulate the dream—pinching to reposition objects or using two-hand distance to scale elements.
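The writeup does not say how Dream Sculpt converts its 3D models into point clouds, so the following is only a minimal sketch of one common approach. It assumes a non-indexed triangle mesh supplied as a flat array of xyz coordinates (nine numbers per triangle). Points are sampled uniformly over the surface by choosing triangles in proportion to their area, and the result is rescaled into a unit cube so assets from different sources share one size. The names `samplePointCloud`, `normalizeToUnitCube` and the seeded PRNG are illustrative, not part of the project.

```typescript
// Hypothetical sketch: mesh -> point cloud by area-weighted surface sampling.
// Input: non-indexed triangle mesh as flat xyz coordinates, 9 numbers per triangle.

/** Deterministic PRNG (mulberry32) so a given seed always yields the same cloud. */
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

/** Samples `count` points uniformly over the mesh surface. */
function samplePointCloud(positions: ArrayLike<number>, count: number, seed = 1): Float32Array {
  const triCount = Math.floor(positions.length / 9);
  if (triCount === 0 || count <= 0) return new Float32Array(0);
  const rand = mulberry32(seed);

  // Running total of triangle areas; larger triangles receive more samples.
  const cumulative = new Float64Array(triCount);
  let total = 0;
  for (let i = 0; i < triCount; i++) {
    const o = i * 9;
    const ux = positions[o + 3] - positions[o];
    const uy = positions[o + 4] - positions[o + 1];
    const uz = positions[o + 5] - positions[o + 2];
    const vx = positions[o + 6] - positions[o];
    const vy = positions[o + 7] - positions[o + 1];
    const vz = positions[o + 8] - positions[o + 2];
    // Triangle area = half the length of the cross product of two edges.
    total += 0.5 * Math.hypot(uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx);
    cumulative[i] = total;
  }
  if (total === 0) return new Float32Array(0);

  const out = new Float32Array(count * 3);
  for (let k = 0; k < count; k++) {
    // Binary search: first triangle whose cumulative area exceeds the target.
    const target = rand() * total;
    let lo = 0;
    let hi = triCount - 1;
    while (lo < hi) {
      const mid = (lo + hi) >> 1;
      if (cumulative[mid] <= target) lo = mid + 1;
      else hi = mid;
    }
    // Uniform point inside the triangle: reflecting samples with r1 + r2 > 1
    // keeps the barycentric combination inside the triangle.
    let r1 = rand();
    let r2 = rand();
    if (r1 + r2 > 1) {
      r1 = 1 - r1;
      r2 = 1 - r2;
    }
    const o = lo * 9;
    for (let d = 0; d < 3; d++) {
      const a = positions[o + d];
      out[k * 3 + d] = a + r1 * (positions[o + 3 + d] - a) + r2 * (positions[o + 6 + d] - a);
    }
  }
  return out;
}

/** Centers the cloud at the origin and scales its longest side to 1. */
function normalizeToUnitCube(points: Float32Array): Float32Array {
  if (points.length === 0) return new Float32Array(0);
  const min = [Infinity, Infinity, Infinity];
  const max = [-Infinity, -Infinity, -Infinity];
  for (let i = 0; i < points.length; i += 3) {
    for (let d = 0; d < 3; d++) {
      min[d] = Math.min(min[d], points[i + d]);
      max[d] = Math.max(max[d], points[i + d]);
    }
  }
  const extent = Math.max(max[0] - min[0], max[1] - min[1], max[2] - min[2]) || 1;
  const out = new Float32Array(points.length);
  for (let i = 0; i < points.length; i += 3) {
    for (let d = 0; d < 3; d++) {
      out[i + d] = (points[i + d] - (min[d] + max[d]) / 2) / extent;
    }
  }
  return out;
}
```

In a Three.js scene, the input array could come from `geometry.toNonIndexed().attributes.position.array`, and the output could back a `THREE.Points` object. Three.js also ships a `MeshSurfaceSampler` add-on that performs the same kind of area-weighted sampling.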

How we built it

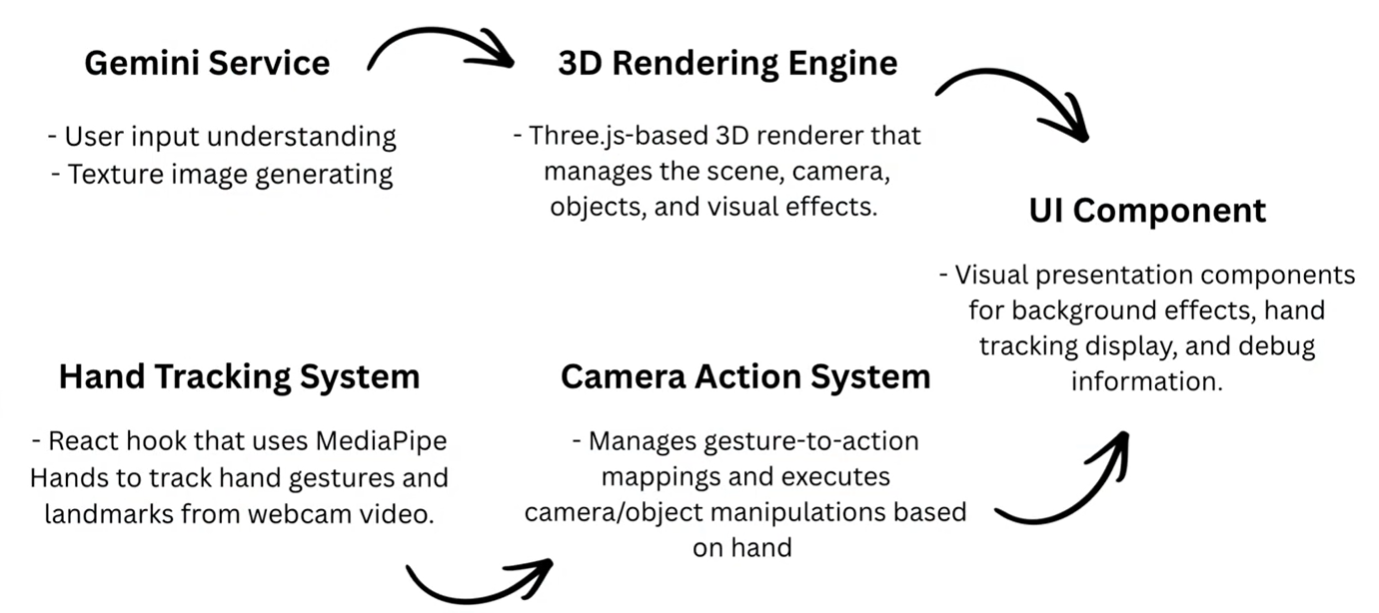

Dream Sculpt has the following main components:

- Gemini Service: Interfaces with Google Gemini AI to classify prompts, generate object files, parse scene descriptions, and generate sky/terrain textures.

- 3D Rendering Engine: Three.js-based 3D renderer that manages the scene, camera, objects, and visual effects.

- Hand Tracking System: React hook that uses MediaPipe Hands to track hand gestures and landmarks from webcam video.

- Camera Action System: Manages gesture-to-action mappings and executes camera/object manipulations based on hand gestures.
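The pinch and two-hand gestures can be derived from MediaPipe Hands output, which reports 21 landmarks per hand in normalized image coordinates (index 0 is the wrist, 4 the thumb tip, 8 the index fingertip, 9 the base of the middle finger). The sketch below is a hypothetical gesture-to-action mapper, not Dream Sculpt’s actual Camera Action System. It assumes a pinch means that the thumb-to-index distance, divided by hand size, falls below a tunable threshold of 0.35; the pinch midpoint’s frame-to-frame movement becomes a `move` action. With two hands visible, the distance between the index fingertips, relative to its value when both hands appeared, becomes a `scale` factor. `GestureMapper`, `Action` and the threshold are illustrative names and values.

```typescript
// Hypothetical gesture-to-action mapper over MediaPipe Hands landmarks.
// Landmark indices follow the MediaPipe 21-point hand model.

interface Landmark {
  x: number; // normalized [0, 1] image coordinates
  y: number;
  z: number;
}
type Hand = Landmark[];

const WRIST = 0;
const THUMB_TIP = 4;
const INDEX_TIP = 8;
const MIDDLE_MCP = 9;

type Action =
  | { kind: "none" }
  | { kind: "move"; dx: number; dy: number } // per-frame delta, image units
  | { kind: "scale"; factor: number }; // relative to the gesture's start

/** 2D distance; MediaPipe's z is relative depth, so it is ignored here. */
function dist2d(a: Landmark, b: Landmark): number {
  return Math.hypot(a.x - b.x, a.y - b.y);
}

/** Pinch = thumb and index tips close together relative to hand size. */
function isPinching(hand: Hand, threshold = 0.35): boolean {
  const handSize = dist2d(hand[WRIST], hand[MIDDLE_MCP]);
  return handSize > 0 && dist2d(hand[THUMB_TIP], hand[INDEX_TIP]) / handSize < threshold;
}

class GestureMapper {
  private lastPinch: { x: number; y: number } | null = null;
  private startSpread: number | null = null;

  /** Call once per video frame with the hands MediaPipe detected. */
  update(hands: Hand[]): Action {
    if (hands.length >= 2) {
      // Two hands: scale by how far apart the index fingertips are now
      // compared with when both hands first appeared.
      this.lastPinch = null;
      const spread = dist2d(hands[0][INDEX_TIP], hands[1][INDEX_TIP]);
      if (this.startSpread === null || this.startSpread === 0) {
        this.startSpread = spread;
        return { kind: "none" };
      }
      return { kind: "scale", factor: spread / this.startSpread };
    }
    this.startSpread = null;

    if (hands.length === 1 && isPinching(hands[0])) {
      // One pinching hand: drag by the movement of the pinch midpoint.
      const h = hands[0];
      const p = { x: (h[THUMB_TIP].x + h[INDEX_TIP].x) / 2, y: (h[THUMB_TIP].y + h[INDEX_TIP].y) / 2 };
      const prev = this.lastPinch;
      this.lastPinch = p;
      if (prev === null) return { kind: "none" };
      return { kind: "move", dx: p.x - prev.x, dy: p.y - prev.y };
    }
    this.lastPinch = null;
    return { kind: "none" };
  }
}
```

Dividing by hand size keeps the pinch threshold roughly independent of how far the hand is from the webcam. A caller would multiply an object’s scale at the start of the gesture by `factor`, and map `dx`/`dy` to a world-space offset for the selected object.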

Accomplishments that we're proud of

- We built an automated pipeline for creating interactive 3D scenes. It shifts generative AI from producing "generative artifacts" to enabling "interactive dream sculpture."

- Visually striking scenes with smooth, responsive interaction.

Challenges we ran into

- Spatial Reasoning: Translating vague natural language into precise 3D coordinates that feel "right" to a user was a significant hurdle.

- Asset Scalability: Building a 3D world usually requires extensive manual labor. Automating the conversion of diverse assets into a unified point-cloud style required complex scripting and parameter tuning.

- Maintaining Immersion during Updates: Ensuring the world could "regenerate" or update based on new prompts without breaking the user's sense of presence required careful management of the "dissolve and assemble" logic.
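The project’s "dissolve and assemble" logic is not described, but one way to implement it is to interpolate every point between a scattered position and its target position. The TypeScript sketch below assumes that approach. Each point gets a delay in [0, 1] so points arrive at different times, and smoothstep easing avoids abrupt starts and stops. During an update, the incoming object would be driven with `progress` and the outgoing one with `1 - progress`, so the old form dissolves while the new one assembles. `blendPositions`, `transitionProgress` and the `spread` parameter are hypothetical.

```typescript
// Hypothetical "dissolve and assemble" sketch: points travel between a
// scattered position and their target position, each on a staggered schedule.

/** Smoothstep easing: 0 for t <= 0, 1 for t >= 1, flat at both ends. */
function smoothstep(t: number): number {
  const x = Math.min(1, Math.max(0, t));
  return x * x * (3 - 2 * x);
}

/**
 * Writes blended xyz positions into `out`.
 * progress 0 = every point scattered (dissolved), 1 = every point on target (assembled).
 * delays[i] in [0, 1] staggers point i; `spread` is the share of the timeline
 * used for staggering (0 = all points move in lockstep), clamped to [0, 0.95].
 */
function blendPositions(
  target: Float32Array,
  scattered: Float32Array,
  delays: Float32Array,
  progress: number,
  spread: number,
  out: Float32Array,
): void {
  const s = Math.min(0.95, Math.max(0, spread));
  const span = 1 - s; // duration of each point's own move
  for (let i = 0; i < delays.length; i++) {
    const local = smoothstep((progress - delays[i] * s) / span);
    for (let d = 0; d < 3; d++) {
      const j = i * 3 + d;
      out[j] = scattered[j] + (target[j] - scattered[j]) * local;
    }
  }
}

/** Progress in [0, 1] of a transition that started at `startMs`. */
function transitionProgress(nowMs: number, startMs: number, durationMs: number): number {
  return durationMs <= 0 ? 1 : Math.min(1, Math.max(0, (nowMs - startMs) / durationMs));
}
```

Because every point’s local timeline ends by `progress = 1`, an object is guaranteed to be fully assembled when the transition finishes. In Three.js, `out` could be the `array` of a `Points` geometry’s position attribute, with the attribute’s `needsUpdate` flag set to `true` after each call.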

What we learned

- We discovered that multimodal models like Gemini 3 are surprisingly adept at spatial planning and atmospheric consistency when provided with the right structured framework.
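The writeup does not specify its "structured framework." As a hedged illustration, the model could be asked to reply with JSON in a fixed scene shape, and the app could validate that reply before building anything. The Gemini API’s structured-output mode (a JSON response MIME type plus a response schema) can make such replies more reliable. The TypeScript sketch below assumes a hypothetical format with a `sky` description and an `objects` list. It drops objects that are missing from the asset library or have malformed positions, and clamps coordinates and scale to assumed scene bounds with the ground at y = 0. This is one way to keep loosely specified placements inside a space that feels "right." `parseScene`, the field names and the bounds are illustrative.

```typescript
// Hypothetical scene format the model is asked to return, plus a validator
// that keeps only placements the renderer can actually build.

interface SceneObject {
  name: string; // must match an asset in the local library
  position: [number, number, number]; // x, y (up), z in scene units
  scale: number;
}

interface Scene {
  sky: string; // free-text mood, e.g. used to prompt a sky texture
  objects: SceneObject[];
}

const clamp = (v: number, lo: number, hi: number): number => Math.min(hi, Math.max(lo, v));
const isFiniteNumber = (v: unknown): v is number => typeof v === "number" && Number.isFinite(v);

/** Parses the model's JSON reply; `bound` is an assumed half-width of the scene. */
function parseScene(raw: string, library: ReadonlySet<string>, bound = 50): Scene {
  const data: unknown = JSON.parse(raw);
  if (typeof data !== "object" || data === null || Array.isArray(data)) {
    throw new Error("scene must be a JSON object");
  }
  const root = data as Record<string, unknown>;
  const skyValue = root["sky"];
  const list = root["objects"];
  const objects: SceneObject[] = [];
  if (Array.isArray(list)) {
    for (const item of list) {
      if (typeof item !== "object" || item === null) continue;
      const entry = item as Record<string, unknown>;
      const name = entry["name"];
      const pos = entry["position"];
      const scale = entry["scale"];
      // Drop assets the library cannot render and malformed coordinates.
      if (typeof name !== "string" || !library.has(name)) continue;
      if (!Array.isArray(pos) || pos.length !== 3 || !pos.every(isFiniteNumber)) continue;
      objects.push({
        name,
        // Clamp into the playable volume; y stays at or above the ground plane.
        position: [clamp(pos[0], -bound, bound), clamp(pos[1], 0, bound), clamp(pos[2], -bound, bound)],
        scale: isFiniteNumber(scale) ? clamp(scale, 0.1, 10) : 1,
      });
    }
  }
  return { sky: typeof skyValue === "string" ? skyValue : "", objects };
}
```

Validating rather than trusting the reply means a hallucinated object or an out-of-range coordinate degrades to a slightly sparser scene instead of a broken one.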

What's next for DreamSculpt

- Add more scene objects to the library

- Visualize a well-known book (say, Le Petit Prince)

Built With

- aistudio

- gemini

- mediapipe

- nanobanana

- react

- three.js

- typescript
