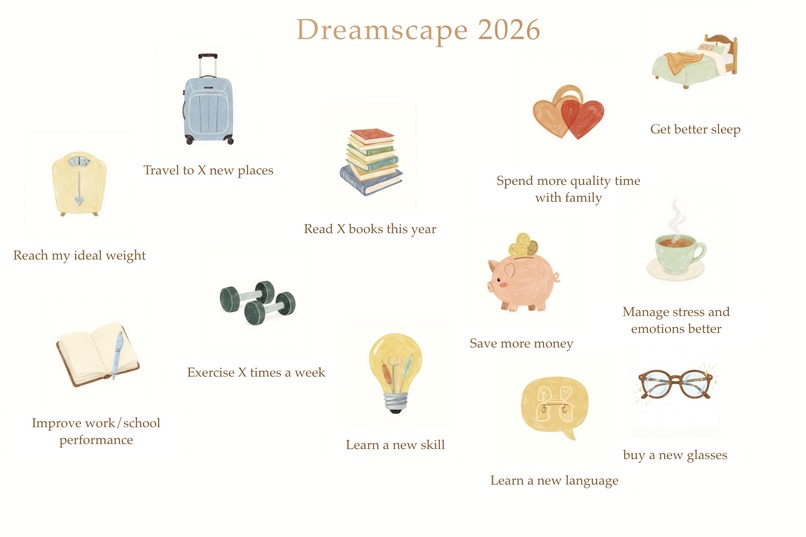

The Dreamscape Canvas: a dynamic vision board where every sticker, whether preset or AI-generated, shares the same cozy aesthetic.

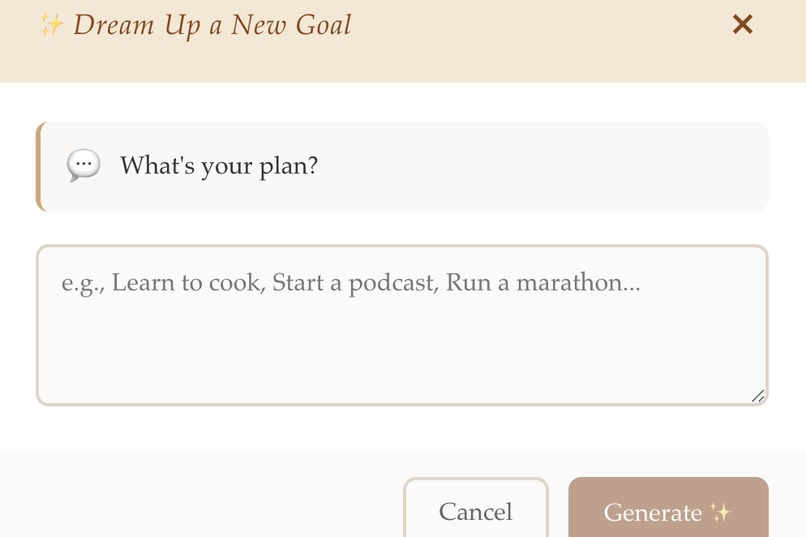

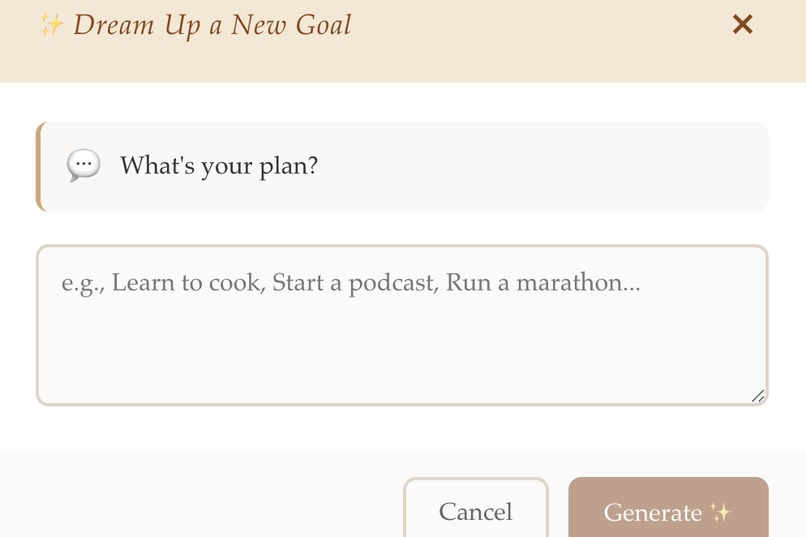

"Dream Up" Feature: We use Gemini 3 Flash to semantically understand abstract goals and Nano Banana Pro to generate the images.

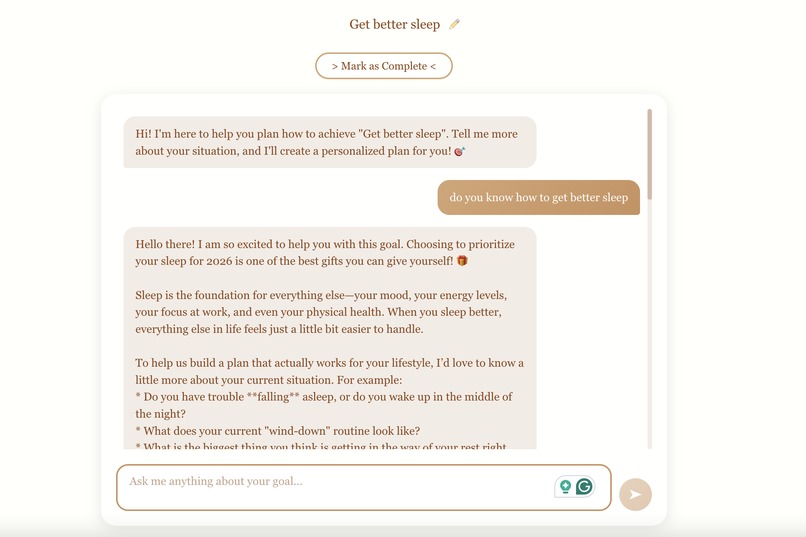

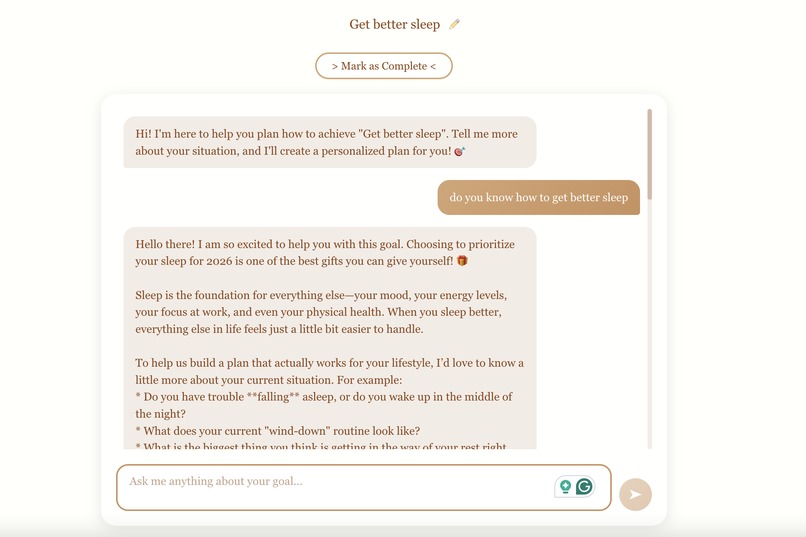

Smart Companion: Double-click any sticker to dive deeper and chat with Gemini 3 Flash for actionable advice on achieving that goal.

Inspiration

I’m a liberal arts student who taught myself to code because I wanted to build beautiful things. Last year, I launched my first iOS app, "Autumn Bucket List". It was simple: a digital board with a cozy, Pinterest-style aesthetic where users could pin seasonal activities. With zero marketing, it reached 440 downloads, which showed me that people want aesthetic, visual ways to track their lives.

But there was a problem. My app only had 40 hand-drawn stickers. Users wanted to add their own goals, like "Start a podcast" or "Make a magic potion", but I couldn't hand-draw a sticker for every possible wish. As soon as they added a custom text goal without a matching image, the aesthetic broke.

I realized Generative AI could solve this. I wanted to build a tool that preserves the cozy, hand-drawn vibe but allows for infinite creativity. That's how Dreamscape was born.

What it does

Dreamscape is a dynamic vision board powered by Gemini 3. It transforms abstract goals into visual reality.

- Curate with Presets: Users start from 11 curated preset goals, each with a hand-drawn sticker.

- ✨ Dream Up (The Core): Users type any goal they like (e.g., "Learn to cook"), and Dreamscape generates a unique, watercolor-style sticker for it in seconds.

- Smart Companion: Users can double-click any sticker to open a chat about that goal. Gemini 3 Flash acts as a mentor, breaking big dreams down into actionable steps.

- Local & Private: No login is required; all data is stored locally in the browser.
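Browser-only persistence like this is usually a thin layer over `localStorage`. The sketch below assumes a hypothetical storage key (`dreamscape-board`) and a hypothetical board shape (`{ stickers: [...] }`); the app's real schema isn't documented here. The storage object is injectable so the same functions work with a stand-in during tests.

```javascript
// Hypothetical key and board shape; Dreamscape's actual schema is not published.
const STORAGE_KEY = "dreamscape-board";
const EMPTY_BOARD = { stickers: [] };

// Serialize the whole board to JSON. `storage` defaults to the browser's
// localStorage but can be any object with getItem/setItem.
function saveBoard(board, storage = globalThis.localStorage) {
  storage.setItem(STORAGE_KEY, JSON.stringify(board));
}

// Read the board back, falling back to an empty board when nothing has been
// saved yet or the stored JSON is corrupted.
function loadBoard(storage = globalThis.localStorage) {
  const raw = storage.getItem(STORAGE_KEY);
  if (raw === null) return { ...EMPTY_BOARD, stickers: [] };
  try {
    return JSON.parse(raw);
  } catch {
    return { ...EMPTY_BOARD, stickers: [] };
  }
}
```

One design caveat: browsers typically cap `localStorage` at a few megabytes per origin, so boards holding many base64-encoded sticker images may eventually need IndexedDB instead.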

How we built it

This project was a journey of moving from a native iOS mindset to a modern Web AI stack.

- Frontend: Built with React and Vite for a fluid experience.

- Backend: Deployed on Vercel using Serverless Functions.

- The AI Pipeline (The Secret Sauce):

  1. User Input: The user types a goal (e.g., "Start a YouTube Channel").

  2. Semantic Translation (Gemini 3 Flash): We don't send the raw text straight to the image generator. Instead, Gemini 3 Flash "thinks" like an art director, translating the abstract goal into a concrete visual description (e.g., "A vintage video camera and editing film, watercolor style").

  3. Image Generation (Nano Banana Pro, gemini-3-pro-image-preview): This optimized prompt is sent to gemini-3-pro-image-preview via the Google AI SDK to generate the final sticker.
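The two-step pipeline can be sketched as a small orchestration function. This is an illustration under stated assumptions, not the project's code: `ART_DIRECTOR_INSTRUCTION` is a hypothetical prompt, and `translateGoal` / `generateSticker` are hypothetical injected async functions standing in for the Gemini 3 Flash call and the gemini-3-pro-image-preview call.

```javascript
// Hypothetical art-director instruction; Dreamscape's actual prompt is not published.
const ART_DIRECTOR_INSTRUCTION =
  "You are the art director of a cozy, hand-drawn watercolor sticker set. " +
  "Turn the user's goal into one concrete physical object or small scene. " +
  "Never use logos, brand names, or written text. " +
  "Reply with a single short visual description.";

// Wrap the raw goal in the art-director instruction for the text model.
function buildTranslationPrompt(goal) {
  return `${ART_DIRECTOR_INSTRUCTION}\n\nGoal: ${goal}`;
}

// translateGoal and generateSticker are hypothetical injected async functions
// (text model and image model, respectively).
async function dreamUp(rawGoal, { translateGoal, generateSticker }) {
  const goal = (rawGoal ?? "").trim();
  if (!goal) throw new Error("Goal must not be empty");

  // Step 1: abstract goal -> concrete visual description.
  const description = (await translateGoal(buildTranslationPrompt(goal))).trim();

  // Step 2: visual description -> sticker image.
  const image = await generateSticker(description);

  return { goal, description, image };
}
```

Injecting the two model calls keeps the pipeline logic testable with stubs and lets the real API calls live wherever the API key is safe, such as a serverless function.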

Challenges we ran into

The biggest challenge was Prompt Engineering for consistency.

1. The "Literal" Problem: Initially, when I fed user goals directly into the image generator, the results were too literal. If a user typed "Start a YouTube Channel", the AI would generate the YouTube logo or text that said "Channel". That's not a sticker; that's a logo.

   The Solution: I used Gemini 3 Flash as a "middleman", instructing it to extract the essence of the goal and turn it into a physical object. So "Start a YouTube Channel" becomes "A camera setup", and "Learn Japanese" becomes "A textbook and cherry blossoms". This semantic pivot was crucial.

2. The Style Consistency Problem: AI-generated images often look too realistic or disjointed, clashing with my original hand-drawn UI.

   The Solution: I created a composite "Reference Image" containing my original 11 hand-drawn stickers and passed it to the model, instructing it to strictly follow the reference's color palette, stroke width, and "cozy watercolor" vibe. This ensured every new sticker felt like it belonged in the same universe.
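Passing a reference image alongside the text prompt can be sketched as building a multimodal request. The sketch below follows the Gemini API's `contents` / `parts` shape (an `inlineData` part with `mimeType` and base64 `data`, plus a `text` part); `STYLE_INSTRUCTION` and `buildStickerRequest` are hypothetical names, and the wording of the instruction is an assumption rather than the project's actual prompt.

```javascript
// Hypothetical style instruction; Dreamscape's actual wording is not published.
const STYLE_INSTRUCTION =
  "The attached image shows the existing hand-drawn sticker set. " +
  "Draw ONE new sticker in exactly the same style: same color palette, " +
  "same stroke width, same cozy watercolor look, and no written text.";

// Build a Gemini-style `contents` array: the composite reference sheet as an
// inline PNG part, followed by a text part naming the new sticker's subject.
function buildStickerRequest(description, referencePngBase64) {
  if (!referencePngBase64) throw new Error("Reference image is required");
  return [
    {
      role: "user",
      parts: [
        { inlineData: { mimeType: "image/png", data: referencePngBase64 } },
        { text: `${STYLE_INSTRUCTION}\n\nSubject: ${description}` },
      ],
    },
  ];
}
```

In Node, a composite sheet saved as a hypothetical `reference.png` could be loaded with `fs.readFileSync("reference.png").toString("base64")` and passed as the second argument.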

Accomplishments that we're proud of

- Building a Full-Stack App as a Liberal Arts Student: I moved from Swift to React and successfully integrated complex AI APIs in a few days.

- The "Vibe" Check: We managed to make AI feel "cozy" and "personal" rather than robotic. The stickers genuinely look hand-crafted.

- Seamless Integration: Gemini 3 Flash's latency is low enough that the "Semantic Translation" step feels almost instant, keeping the user experience fluid.

What we learned

- AI needs Art Direction: You can't rely on the raw model alone. Providing reference images and using an LLM (Gemini 3 Flash) to refine prompts for the image model (gemini-3-pro-image-preview) produces much better results.

- Web Development: I learned how to use Vercel Serverless Functions to keep API keys secure on the server, moving away from client-side-only development.
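Keeping the key on the server means the browser calls your own function, and only that function talks to Gemini. Below is a minimal sketch of such a Vercel Node.js function, assuming a hypothetical file `api/translate.js`, a hypothetical `GEMINI_API_KEY` environment variable, and a JSON body of `{ goal }`. It calls the Gemini REST `generateContent` endpoint directly with `fetch`; a real version would also prepend the art-director instruction to the goal.

```javascript
// Hypothetical file: api/translate.js (Vercel Node.js runtime).
// Vercel parses JSON bodies into req.body and provides res.status()/res.json().
const GEMINI_URL =
  "https://generativelanguage.googleapis.com/v1beta/models/gemini-3-flash-preview:generateContent";

function createHandler({ fetchImpl = globalThis.fetch, apiKey = process.env.GEMINI_API_KEY } = {}) {
  return async function handler(req, res) {
    if (req.method !== "POST") {
      return res.status(405).json({ error: "Method not allowed" });
    }
    const goal = req.body && typeof req.body.goal === "string" ? req.body.goal.trim() : "";
    if (!goal) return res.status(400).json({ error: "Missing goal" });

    // The API key is only read here, on the server; it never reaches the browser.
    const upstream = await fetchImpl(GEMINI_URL, {
      method: "POST",
      headers: { "Content-Type": "application/json", "x-goog-api-key": apiKey },
      body: JSON.stringify({ contents: [{ role: "user", parts: [{ text: goal }] }] }),
    });
    if (!upstream.ok) return res.status(502).json({ error: "Upstream error" });

    // Pull the first candidate's text out of the generateContent response.
    const data = await upstream.json();
    const text = data?.candidates?.[0]?.content?.parts?.[0]?.text ?? "";
    return res.status(200).json({ text });
  };
}

module.exports = createHandler();
```

Injecting `fetchImpl` lets the handler be exercised without network access; in production it falls back to the global `fetch` available in Node 18 and later.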

What's next for Dreamscape

- Mobile Native: Porting the Gemini integration back to the original iOS app to reach my existing users.

- Social Sharing: Allowing users to export their finished "2026 Vision Board" as a high-res wallpaper.

- More Art Styles: Letting users switch between "Pixel Art" or "Minimalist" themes, all dynamically prompted by Gemini.

Built With

- gemini-3-api

- gemini-3-flash-preview

- gemini-3-pro-image-preview

- google-generative-ai

- javascript

- kiro

- nanobananapro

- react

- vercel

- vite