-

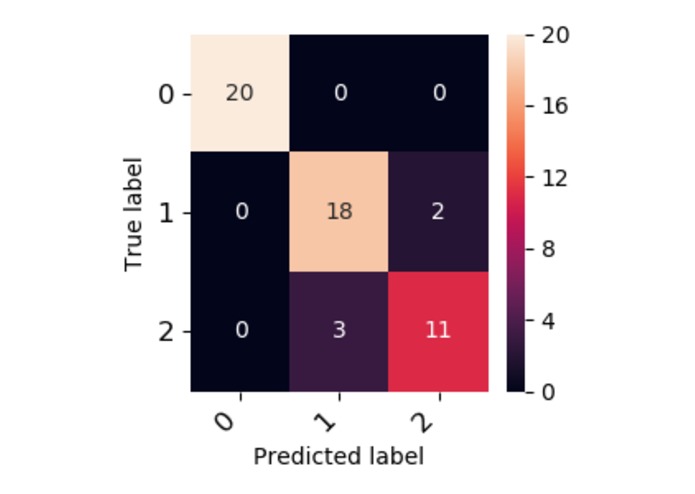

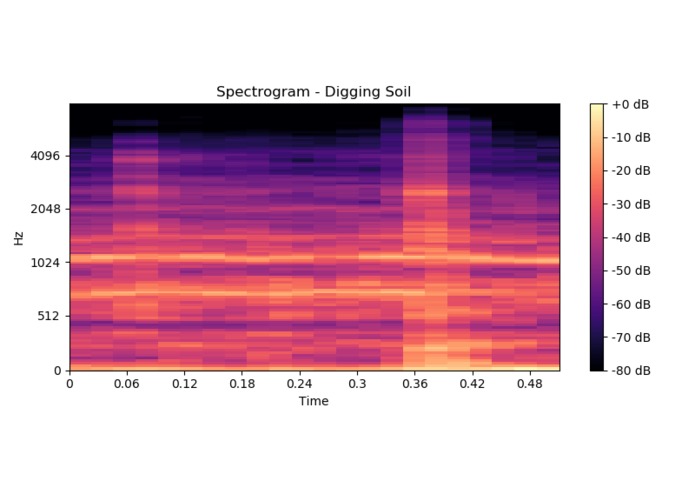

A confusion matrix showing the results of classifying unseen data.

-

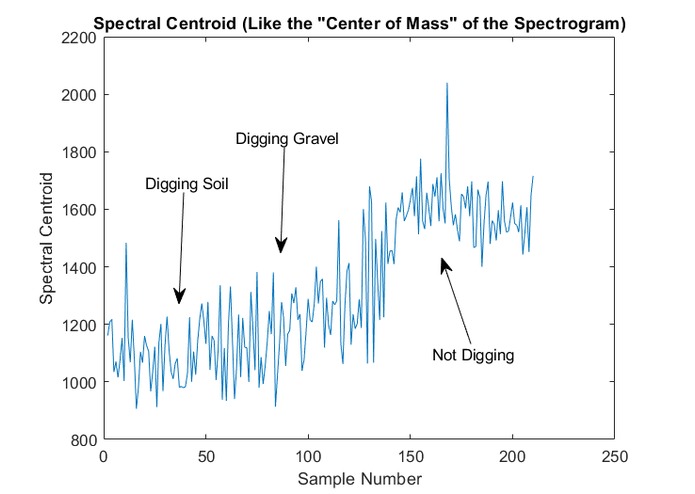

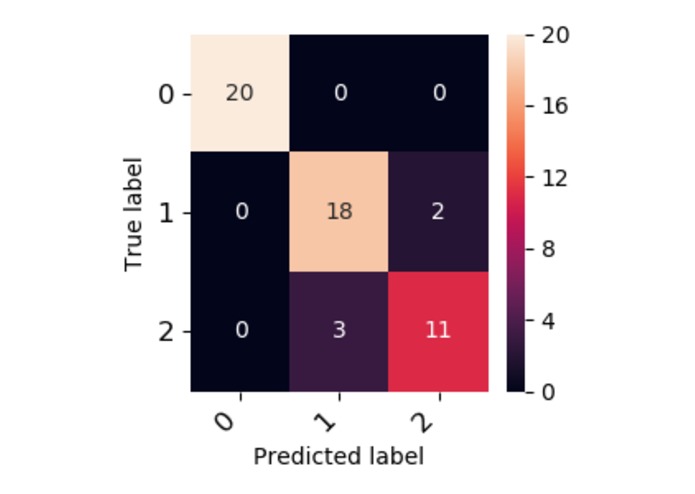

A plot of the Spectral Centroids of the testing data

-

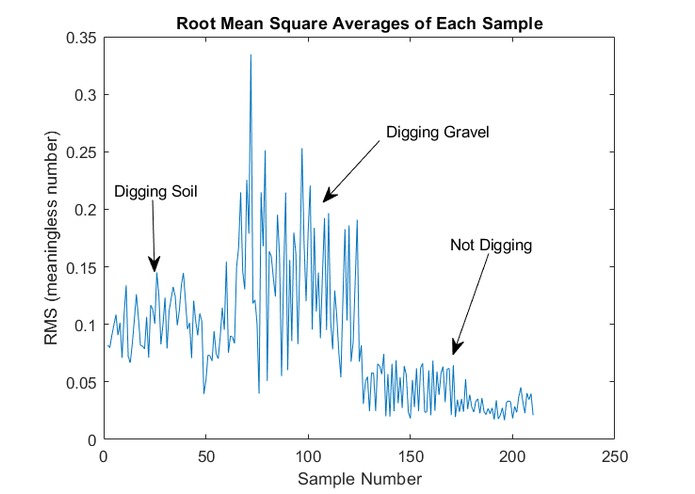

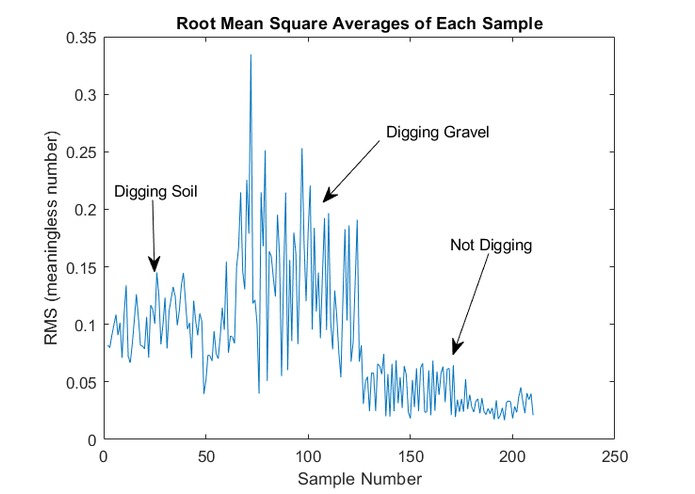

A plot of the Root Mean Squares of the testing data

-

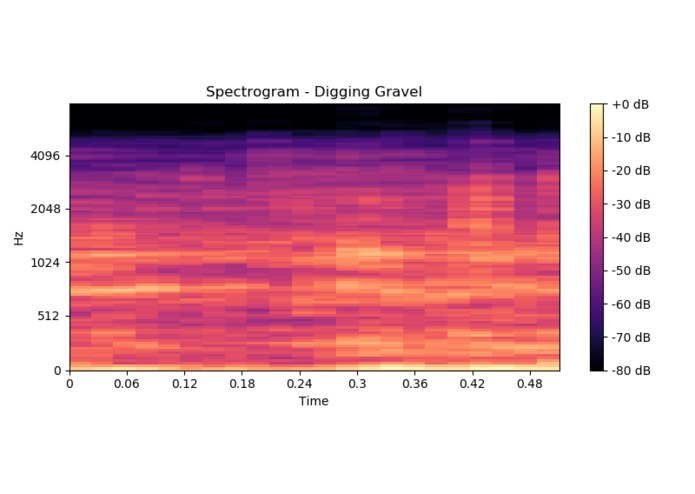

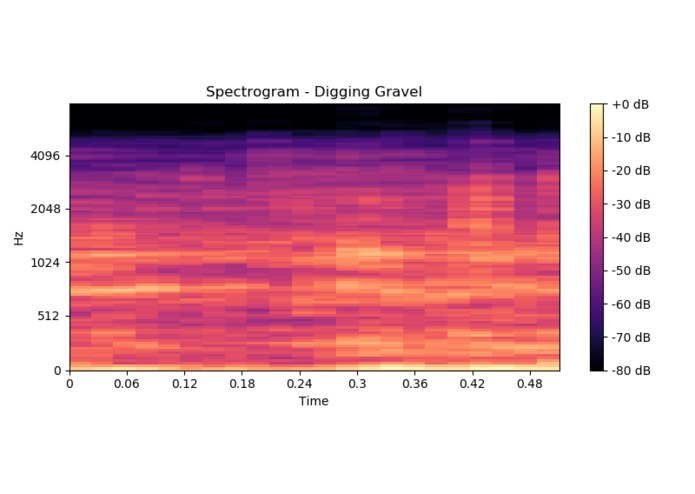

A spectrogram of excavating gravel

-

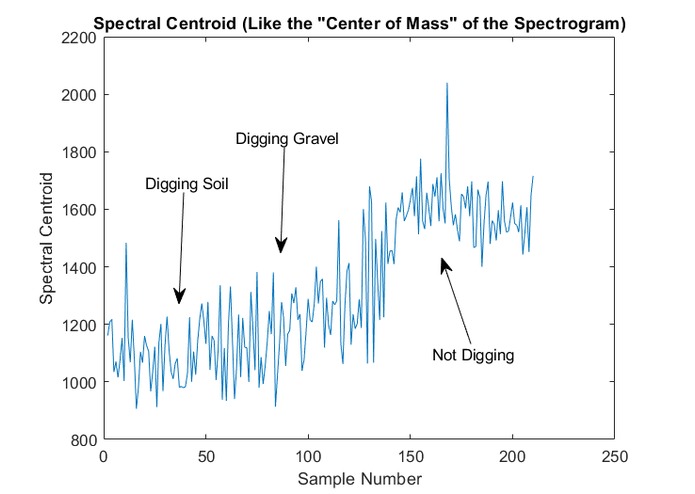

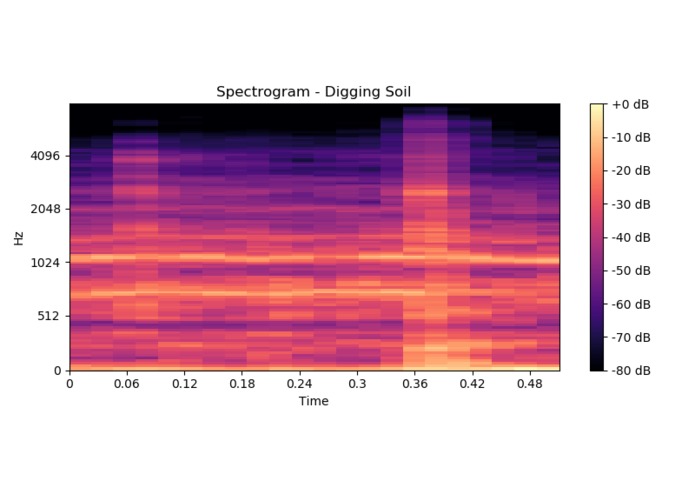

A spectrogram of excavating loose soil

-

The drum digging mechanism used to collect the data

-

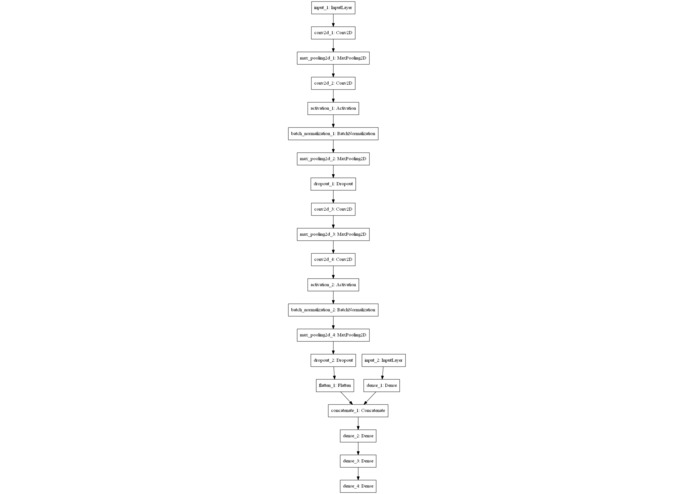

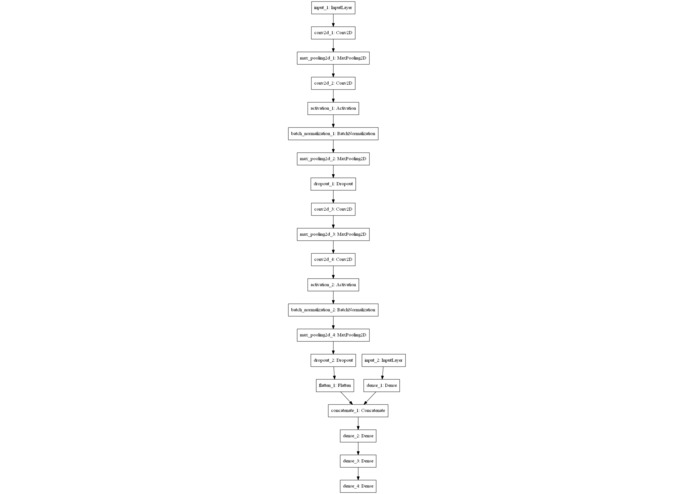

A visual representation of the machine learning model used

TL;DR

We used a convolutional neural network on contact microphone data to differentiate between types of soil being excavated by an autonomous mining robot used in a NASA competition.

Inspiration

We are members of the Utah Student Robotics Team. Our annual project is building an autonomous mining robot to compete in a collegiate NASA competition. This year we wanted to add another level of autonomy to the robot by using a neural network to determine what material the robot is mining based on the sound data from a contact microphone.

What it does

In the NASA competition, there is a layer of soft soil over a bed of gravel. The objective of the competition is to collect as much gravel as possible with a minimum of soil. The neural network we wrote allows the robot to differentiate between digging soil and gravel. In turn, this gives us the ability to program the behavior of the robot more efficiently, focusing on the gravel without wasting time on the soil.

How we built it

The first step was to collect as much data as we could from the digging mechanism. We then split the raw .wav files into 0.5-second pieces and used the librosa module in Python to create a spectrogram from each piece. We also extracted additional features, such as the average root mean square and the spectral centroid (think of it as the center of mass of the sound). We split the data into two subsets: 80% of the samples for training and the remaining 20% for testing and validation of the fitted model. We fed the spectrograms and other data into several convolutional layers before flattening the results and feeding them into a traditional neural network structure.
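The chunking, feature extraction, and 80/20 split described above can be sketched roughly as follows. This is a NumPy-only illustration on a synthetic tone, not our actual pipeline: the function names, the 8000 Hz sample rate, and the random seed are assumptions made for the example. In practice, librosa provides `librosa.feature.rms` and `librosa.feature.spectral_centroid`, which compute these values frame by frame.

```python
import numpy as np


def split_into_chunks(signal, sample_rate, chunk_seconds=0.5):
    """Cut a 1-D signal into equal, non-overlapping chunks; drop the remainder."""
    chunk_len = int(sample_rate * chunk_seconds)
    n_chunks = len(signal) // chunk_len
    return signal[: n_chunks * chunk_len].reshape(n_chunks, chunk_len)


def rms(chunk):
    """Root mean square of a chunk: a simple measure of loudness."""
    return float(np.sqrt(np.mean(chunk ** 2)))


def spectral_centroid(chunk, sample_rate):
    """Magnitude-weighted mean frequency: the 'center of mass' of the spectrum."""
    magnitudes = np.abs(np.fft.rfft(chunk))
    freqs = np.fft.rfftfreq(len(chunk), d=1.0 / sample_rate)
    total = magnitudes.sum()
    return float((freqs * magnitudes).sum() / total) if total > 0 else 0.0


def train_test_split_indices(n_samples, test_fraction=0.2, seed=0):
    """Shuffle sample indices and return (train_indices, test_indices)."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_test = int(round(n_samples * test_fraction))
    return order[n_test:], order[:n_test]


# Synthetic stand-in for a recording: 5 seconds of a 440 Hz tone (assumed values).
sample_rate = 8000
t = np.arange(sample_rate * 5) / sample_rate
signal = np.sin(2 * np.pi * 440.0 * t)

chunks = split_into_chunks(signal, sample_rate)
features = np.array([[rms(c), spectral_centroid(c, sample_rate)] for c in chunks])
train_idx, test_idx = train_test_split_indices(len(chunks))
```

For a pure tone, the spectral centroid lands on the tone's frequency, which makes the "center of mass" intuition easy to check by hand.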

To improve the model and reduce the chance of overfitting, we also normalized the data and applied dropout during training.
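As a rough illustration of these two techniques, the sketch below standardizes features using statistics from the training set only and applies "inverted" dropout to a layer's activations. Both choices are assumptions made for the example (z-score normalization and a 0.5 dropout rate are not necessarily what we used), and deep learning frameworks ship their own dropout layers, so this only shows the idea.

```python
import numpy as np


def fit_standardizer(train_features):
    """Return the per-column mean and standard deviation of the training set."""
    mean = train_features.mean(axis=0)
    std = train_features.std(axis=0)
    std = np.where(std == 0, 1.0, std)  # avoid dividing by zero for constant columns
    return mean, std


def standardize(features, mean, std):
    """Scale features to zero mean and unit variance using the given statistics."""
    return (features - mean) / std


def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero a random fraction of units and rescale the rest."""
    if not training or rate == 0.0:
        return activations
    keep_mask = rng.random(activations.shape) >= rate
    return activations * keep_mask / (1.0 - rate)


rng = np.random.default_rng(0)

# Made-up features: column 0 mimics an RMS value, column 1 a spectral centroid in Hz.
train = rng.normal(loc=[0.2, 1500.0], scale=[0.05, 300.0], size=(80, 2))
test = rng.normal(loc=[0.2, 1500.0], scale=[0.05, 300.0], size=(20, 2))

# Fit on the training set only, then reuse the same statistics for the test set.
mean, std = fit_standardizer(train)
train_norm = standardize(train, mean, std)
test_norm = standardize(test, mean, std)

hidden = np.ones((4, 8))
dropped = dropout(hidden, rate=0.5, rng=rng)
```

Reusing the training statistics on the test set keeps information about the held-out samples from leaking into the model.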

Challenges we ran into

We ran into our first challenge almost immediately: on the first data collection run, the robot's digging drum seized and broke its supporting frame. After a few minutes of debating whether we should come up with a new project idea, we were able to patch it back together with 2x4s and clamps.

On the programming side, our biggest struggle was a recurring issue with our data arrays having the wrong shape. Whenever we passed a misshapen array to a function, the resulting error message was extraordinarily misleading and unhelpful. We spent hours "coding in circles" before we realized that a simple call to .reshape would solve all of our problems.
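Here is a minimal sketch of the kind of shape fix involved, assuming the common case where a convolutional layer expects a trailing channel axis. The array sizes (32 samples, 128 frequency bins, 22 frames) and the helper name `add_channel_axis` are hypothetical. Checking the shape explicitly produces a readable error instead of a confusing one from deep inside a library.

```python
import numpy as np


def add_channel_axis(spectrograms):
    """Turn (samples, freq_bins, frames) into (samples, freq_bins, frames, 1)."""
    if spectrograms.ndim != 3:
        raise ValueError(
            "expected a 3-D array (samples, freq bins, frames), "
            f"got shape {spectrograms.shape}"
        )
    n_samples, n_bins, n_frames = spectrograms.shape
    return spectrograms.reshape(n_samples, n_bins, n_frames, 1)


# A batch of 32 hypothetical spectrograms, each 128 bins by 22 frames.
batch = np.zeros((32, 128, 22), dtype=np.float32)
model_input = add_channel_axis(batch)

# A single spectrogram needs a leading batch axis before the channel axis is added.
single_input = add_channel_axis(batch[0].reshape(1, 128, 22))
```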

Accomplishments that we're proud of

We are especially proud of several technical accomplishments. The first was the quality of the data we collected, thanks to the audio engineering skills of one of our team members. The next was our implementation of parallel processing: we ran four computers simultaneously to test dozens of combinations of model parameters in the limited time available. And of course, our proudest accomplishment is our success rate of over 90% in labeling new data.
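One simple way to divide a parameter sweep across several machines is sketched below. It is an illustration of the idea rather than a record of how we split the work: the parameter names, their values, and the round-robin assignment are all assumptions made for the example.

```python
from itertools import product


def build_grid(options):
    """Expand a dict of parameter lists into a list of parameter dicts."""
    names = sorted(options)
    combos = product(*(options[name] for name in names))
    return [dict(zip(names, combo)) for combo in combos]


def shard(configs, n_machines, machine_index):
    """Give each machine every n-th configuration (round-robin assignment)."""
    return configs[machine_index::n_machines]


# Hypothetical search space: 3 * 2 * 2 = 12 combinations.
options = {
    "learning_rate": [1e-2, 1e-3, 1e-4],
    "dropout_rate": [0.2, 0.5],
    "n_conv_layers": [2, 3],
}

grid = build_grid(options)
shards = [shard(grid, n_machines=4, machine_index=i) for i in range(4)]
```

Because every machine builds the same sorted grid and takes a different slice of it, no coordination between the machines is needed beyond agreeing on each one's index.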

What we learned

Paying closer attention to the mundane, "boring" sections of code would have saved many hours of debugging. Several times, all of our errors stemmed from passing incorrectly formatted parameters.

What's next for Diggatron 2000

We plan to continue developing the network with additional and more varied testing data to increase the accuracy and robustness of the model. We also plan to add the ability to sample data continuously on the robot's onboard computer with low latency, so it can operate without any outside help.

Our ultimate goal for the work we put in this weekend is to implement it as part of the autonomy system on our final competition robot at the Kennedy Space Center in Florida.
