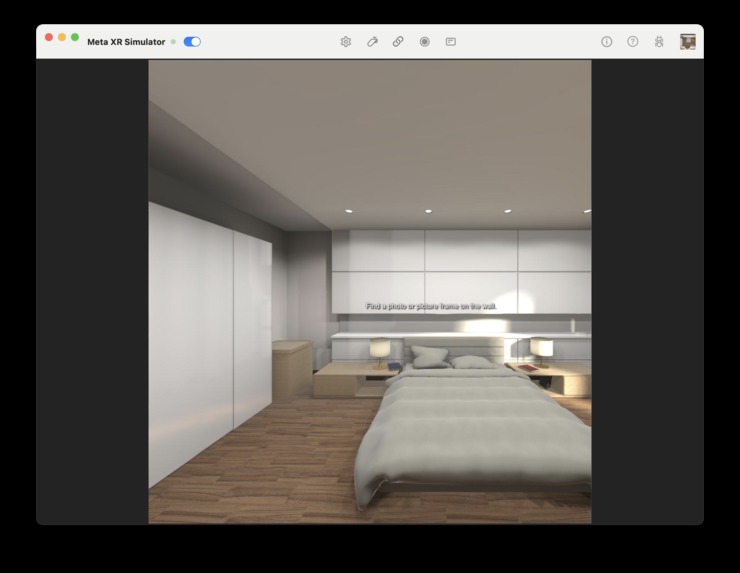

It's a Unity app (for VR glasses) or a phone app.

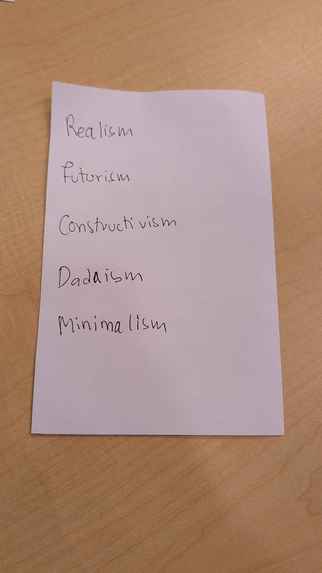

Let's say you need to learn a list of concepts like this one. That's hard to do without help! So we use the "method of loci" to help :)

We guide you through a journey with real "anchor" objects. For example: Realism is like painting reality.

Futurism = speed

Constructivism = like building a new world

Dadaism = art is meaningless (trash)

Minimalism = functionality is the only thing that matters

...and this helps you remember the whole list! Welcome to Déjà View, a better way to memorize :)

If walls could talk, what would they teach you?

We decided to find out.

How we came up with the idea

In school, they tell us to "pay attention," but studying from a book or a screen is unnatural. We are 3D creatures living in a 3D world, yet we try to force information into our brains through 2D text. It doesn't make sense.

We were studying Art History and finding it very difficult to remember which movement came after which. Was Dadaism before Surrealism? Who knows. We looked at our messy room and thought: "I wish I could just stick this information to my furniture."

And then we realized that with the Meta Quest 3, we actually can.

What is Deja-View?

Deja-View is a "cheat code" for your memory. It transforms your physical environment into an interactive museum of knowledge. It's a mixed reality app where you don't escape the real world; you annotate it.

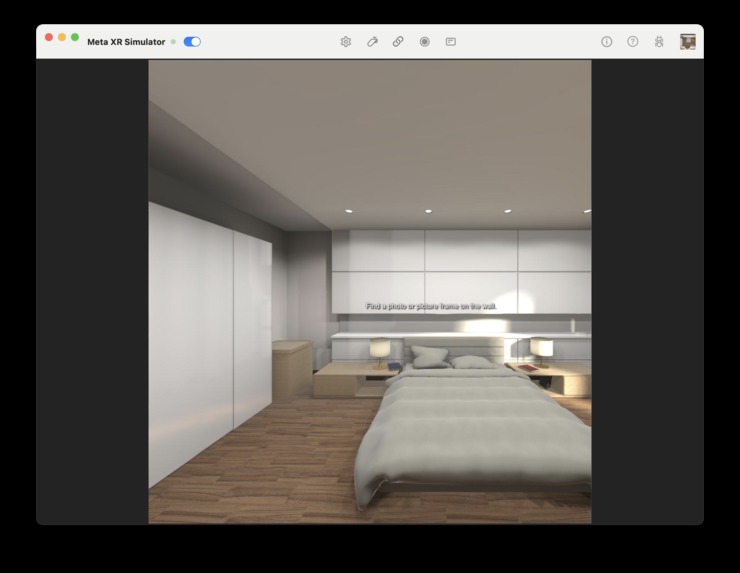

You walk around your room, and the app asks you to find specific items. When you find them, it uses that physical action to "lock" a piece of information in your brain.

- Find a lamp: Learn about the Enlightenment.

- Find a chaotic pile of clothes: Learn about Abstract Expressionism.

- Find a mirror: Learn about Self-Portraits.

Under the Hood: The AI Eyes

The core of this project is not just seeing objects, but understanding them. We used EyePop for this, and specifically their Visual Intelligence capabilities.

Standard object detection is boring. It just says "chair" or "TV." That wasn't enough for our storyline; we needed context.

In our demo, we had a very specific problem: we have digital screens (TVs) and canvases (paintings) in the room. To a basic AI model, they look almost the same; they are just rectangles on a wall. But for our game, one represents "Modern Media" and the other represents "Classical Art."

We configured a custom EyePop Pop using the eyepop.image-contents component, which lets us send natural language prompts to the visual engine. We literally ask the API: "Look at this rectangle. Is it a digital screen emitting light, or is it a painted canvas? Explain why."

The Visual Intelligence analyzes the texture, the reflections, and the context, and it gives us the correct answer. This level of "reasoning" on the image is what makes the experience feel responsive and smart.

How we built it

- The Eyes (Unity + Quest 3): We used the Meta MR Utility Kit to access the passthrough cameras. This was the hardest part because accessing raw images is restricted for privacy, so we had to jump through some hoops.

- The Brain (Python + EyePop): We stream the images to a Flask server. This server talks to EyePop. We use a "fast-check" with standard detection first, and if we need more detail (like the TV vs Painting example), we trigger the heavier Visual Intelligence prompt.

- The Glue: We return the data to Unity and spawn a 3D information card right next to the real object.
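The fast-check-then-escalate flow from the Brain and Glue steps can be sketched independently of Flask and the EyePop SDK by passing the two detectors in as functions. Everything here is hypothetical: `Detection`, `AMBIGUOUS`, `analyze`, and `to_unity_json` are illustrative names. We also assume the fast detector returns pixel bounding boxes that the heavier prompt can be scoped to:

```python
import json
from collections.abc import Callable
from typing import NamedTuple

Box = tuple[int, int, int, int]  # x, y, width, height in image pixels (assumed format)


class Detection(NamedTuple):
    label: str
    confidence: float
    box: Box


# Labels the cheap detector cannot reliably tell apart (assumed set for the demo room).
AMBIGUOUS = {"tv", "painting"}


def analyze(
    image: bytes,
    fast_detect: Callable[[bytes], list[Detection]],
    deep_ask: Callable[[bytes, Box], str],
    min_confidence: float = 0.5,
) -> list[tuple[str, Box]]:
    """Run the fast detector on every frame; call the slow prompt only for ambiguous boxes."""
    results = []
    for det in fast_detect(image):
        if det.confidence < min_confidence:
            continue  # skip weak detections rather than paying for a deep call
        label = det.label
        if label in AMBIGUOUS:
            label = deep_ask(image, det.box)  # heavier Visual Intelligence step, this region only
        results.append((label, det.box))
    return results


def to_unity_json(results: list[tuple[str, Box]]) -> str:
    """Serialize results for the headset, which places a card next to each box."""
    return json.dumps([{"label": label, "box": list(box)} for label, box in results])
```

In a Flask route, `fast_detect` and `deep_ask` would wrap the two EyePop calls. Keeping them as parameters means the escalation rule can be tested with stubs and no network access.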

Challenges

- The "Rectangle" Problem: As we mentioned, distinguishing between similar objects was hard until we switched to Visual Intelligence prompts.

- Latency vs. Experience: We want the user to feel instant feedback, but sending images to the cloud takes time (about 2-3 seconds). We hid this latency with a "scanning" animation in the UI, so the user thinks the headset is "thinking."

- Prompt Engineering: We learned that you have to be very specific with the AI. If you just ask "what is this?", it gives you a generic answer. If you ask "is this an X or a Y?", it is much more accurate.
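The scanning-animation trick from the latency challenge is a general pattern: start an indicator, await the slow request, and stop the indicator however the request ends. The real client is Unity/C#, so this is only a Python `asyncio` illustration of the same idea. `show`, `hide`, and `fake_cloud_call` are hypothetical stand-ins for UI callbacks and the network round trip:

```python
import asyncio
from collections.abc import Awaitable, Callable
from typing import TypeVar

T = TypeVar("T")


async def with_scanning_indicator(
    request: Awaitable[T],
    show: Callable[[int], None],
    hide: Callable[[], None],
    tick: float = 0.1,
) -> T:
    """Animate a 'scanning' indicator while `request` is in flight."""

    async def animate() -> None:
        frame = 0
        while True:
            show(frame)  # e.g. advance the scanning ring by one frame
            frame += 1
            await asyncio.sleep(tick)

    spinner = asyncio.create_task(animate())
    try:
        return await request
    finally:
        spinner.cancel()  # stop the animation whether the request succeeded or failed
        hide()


async def fake_cloud_call() -> str:
    await asyncio.sleep(0.3)  # stand-in for the 2-3 s round trip
    return "painting"


frames: list[int] = []
label = asyncio.run(with_scanning_indicator(fake_cloud_call(), frames.append, lambda: None))
print(label, len(frames))
```

Putting `hide()` in `finally` means a timeout or network error never leaves the headset stuck in "scanning" mode.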

What's Next?

We built this for Art History, but the system is content-agnostic. We imagine a version for:

- Language Learning: Label everything in your house in Spanish or Japanese.

- Alzheimer's Patients: Help people remember where they left things or what objects are for.

- Technical Training: Guide a mechanic to find the right tool in a workshop.

We believe that the future of learning is not in the cloud or in a book; it's right in front of you. You just need the right glasses to see it.

Tech Stack

- Unity 2022.3 (C#)

- Meta Quest 3 (MR Utility Kit)

- EyePop API (Visual Intelligence)

- Python 3.13 / Flask
