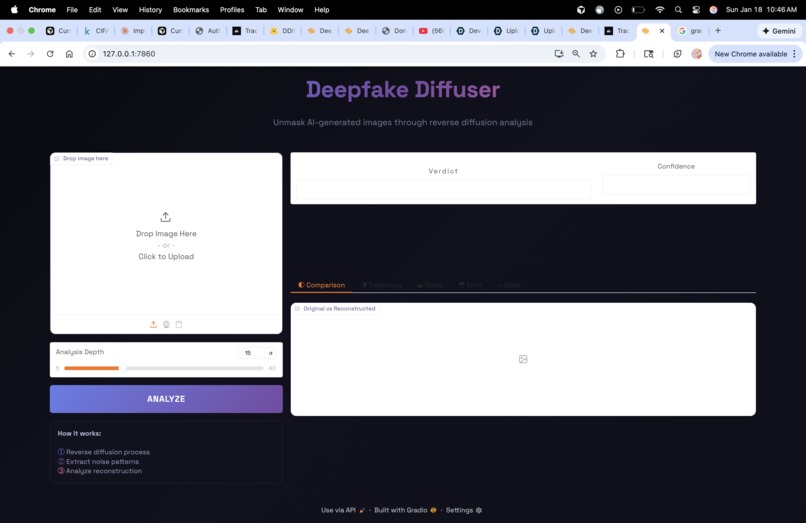

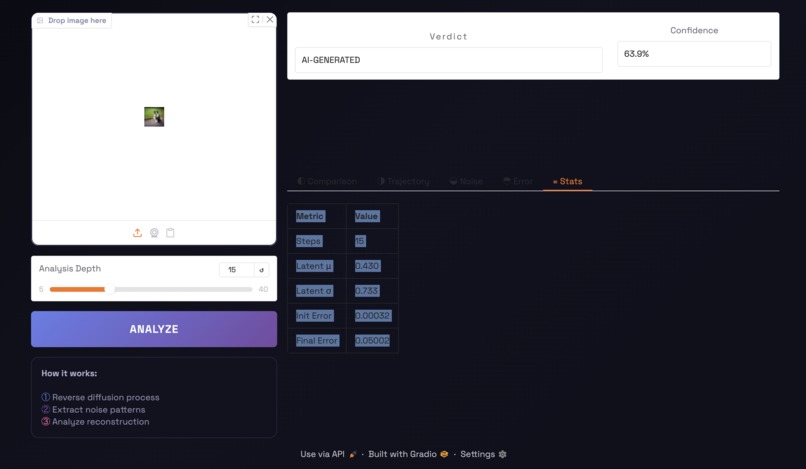

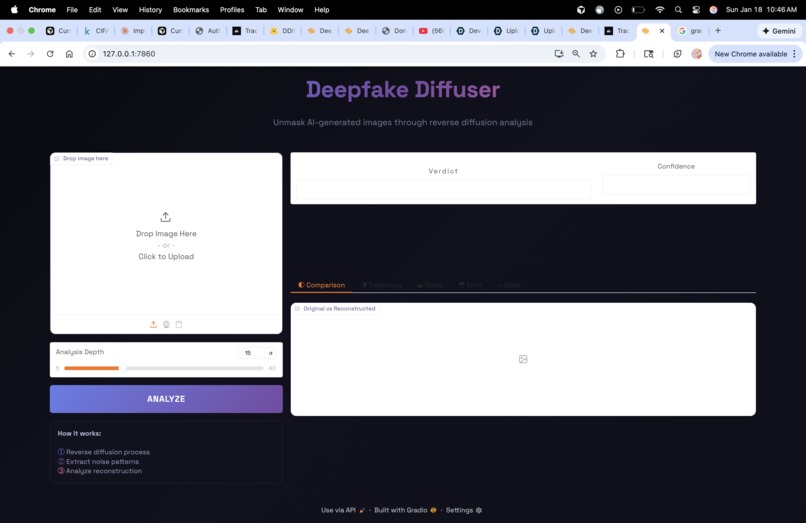

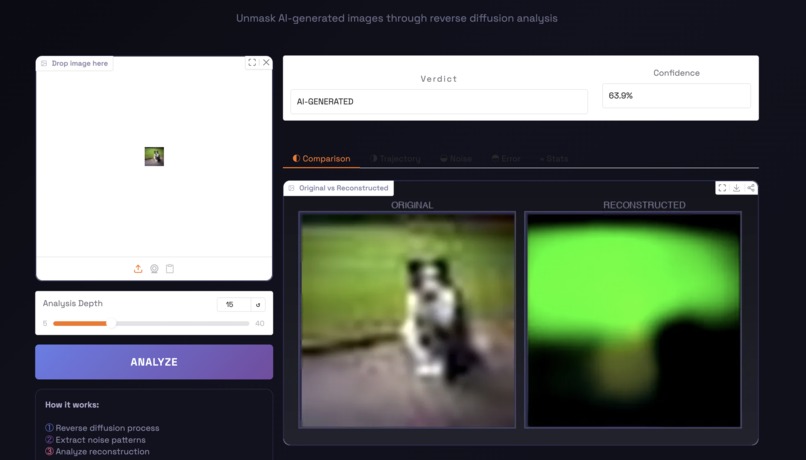

The UI of the webpage, showing the verdict, reconstruction, and iterations

-

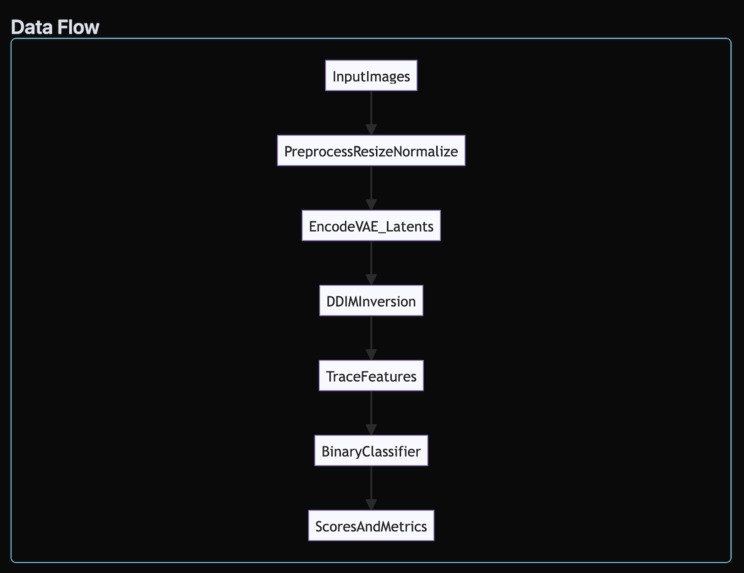

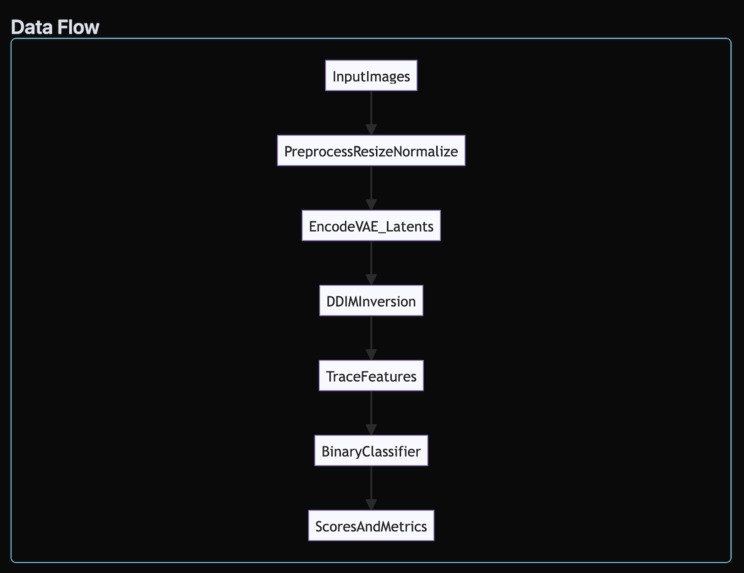

The data flow when planning and building the project

-

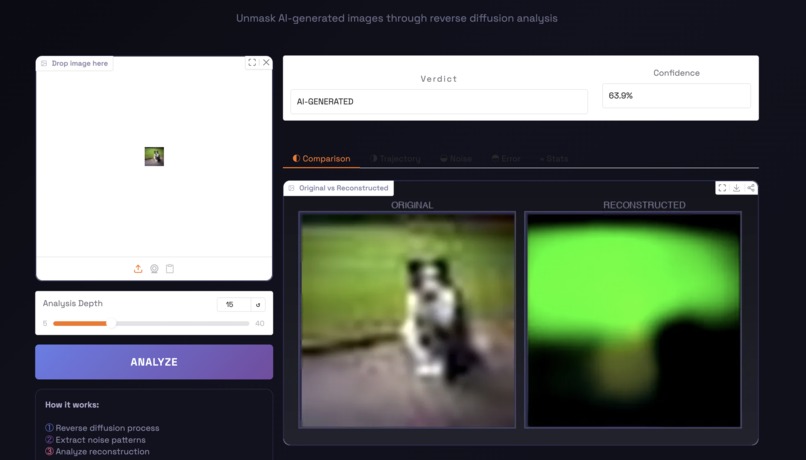

The classifier output, flagging this image as AI-generated

-

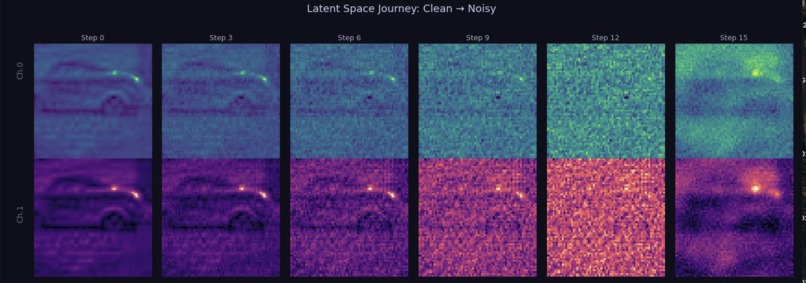

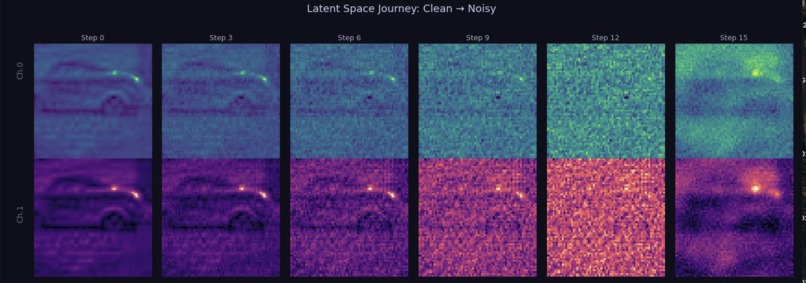

The latent space journey in 15 steps

-

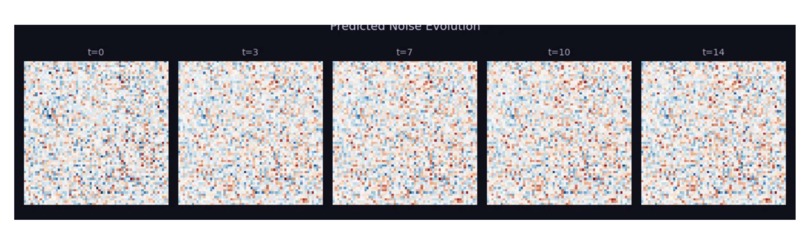

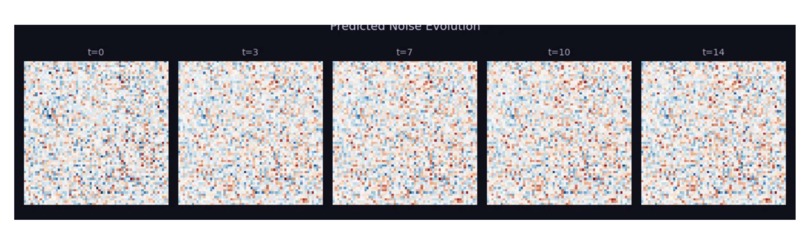

Evolution of the predicted-noise residuals

-

The step vs error graph (Loss)

-

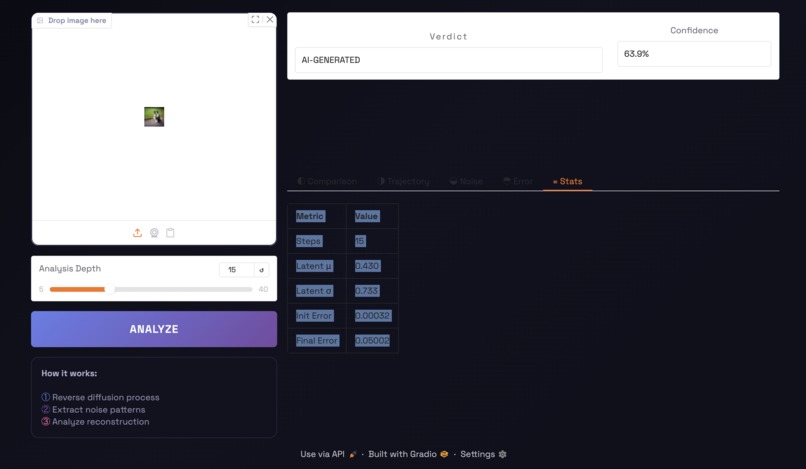

Some statistics provided

Project Story: Deepfake Diffuser

About the Project

Deepfake Diffuser began as an ethical hacking experiment. In a final project on image generation, I watched synthetic images become indistinguishable from real photos. Instead of building a more powerful generator, I asked the opposite question: how can we reverse‑engineer the traces these models leave behind? This project is my answer: a defensive forensic tool that “hacks” the diffusion process to expose AI‑generated images.

Inspiration

During my final project on generative images, I realized that realism was no longer a novelty; it was a risk. If images could fool me, they could fool anyone. That shifted my mindset from creation to verification. I wanted to approach the problem like an ethical hacker: understanding the system’s internals to uncover weaknesses and build trust.

What I Built

Deepfake Diffuser detects AI‑generated images by running diffusion in reverse. We take an input image, encode it into the Stable Diffusion VAE latent space, and perform DDIM inversion step‑by‑step to trace its noise trajectory. From that trajectory, we extract statistical features and feed them into a Random Forest classifier to distinguish real photos from AI images.

At a high level:

- DDIM inversion reconstructs the diffusion path (clean → noisy) and captures noise predictions per step.

- Feature extraction computes trajectory stats (noise norms, frequency energy, reconstruction error, latent consistency).

- Random Forest inference applies a decision boundary, learned during training, between real and AI traces.

- UI visualizes the reconstruction, noise evolution, and forensic metrics.
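The feature-extraction step can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names, the feature keys, the frequency cutoff, and the use of latent step-size variation as a stand-in for "latent consistency" are all assumptions made for the example. It expects a list of latents and a list of per-step noise predictions, each shaped `(C, H, W)`, plus a reconstructed latent to compare against the original.

```python
import numpy as np

def high_freq_energy_ratio(arr):
    """Fraction of spectral energy outside a central low-frequency disc.

    arr is a (C, H, W) array; the cutoff radius is an arbitrary illustrative choice.
    """
    spec = np.fft.fftshift(np.fft.fft2(arr, axes=(-2, -1)), axes=(-2, -1))
    power = np.abs(spec) ** 2
    h, w = arr.shape[-2:]
    yy, xx = np.ogrid[:h, :w]
    radius = np.hypot(yy - h // 2, xx - w // 2)
    high = radius > min(h, w) / 4          # True for "high-frequency" bins
    total = power.sum()
    return float((power * high).sum() / total) if total > 0 else 0.0

def trajectory_features(latents, noises, reconstruction):
    """Summarize an inversion trajectory as a small dict of statistics.

    latents        : list of T+1 arrays (clean latent first)
    noises         : list of T predicted-noise arrays
    reconstruction : latent recovered by denoising back from the final step
    All names and feature choices here are hypothetical.
    """
    latents = np.stack(latents)
    noises = np.stack(noises)
    # L2 norm of the predicted noise at each step
    noise_norms = np.linalg.norm(noises.reshape(len(noises), -1), axis=1)
    # Distance moved in latent space between consecutive steps
    steps = np.diff(latents, axis=0).reshape(len(latents) - 1, -1)
    step_sizes = np.linalg.norm(steps, axis=1)
    hf = [high_freq_energy_ratio(n) for n in noises]
    return {
        "noise_norm_mean": float(noise_norms.mean()),
        "noise_norm_std": float(noise_norms.std()),
        "high_freq_energy_mean": float(np.mean(hf)),
        "latent_step_std": float(step_sizes.std()),  # proxy for latent consistency
        "reconstruction_mse": float(np.mean((reconstruction - latents[0]) ** 2)),
    }
```

Reading the dict values out in a fixed key order gives a feature vector that a classifier such as scikit-learn's `RandomForestClassifier` could be trained on.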

How It Works (with Math)

Diffusion models generate images by gradually denoising random noise. We invert that process using DDIM. In simplified form, a noisy latent at step t relates to the clean latent and the noise as:

\[ x_t = \sqrt{\alpha_t} \, x_0 + \sqrt{1-\alpha_t} \, \epsilon \]

By estimating the noise \(\epsilon\) at each step, we reconstruct the path the image likely took. Real images tend to produce smoother, more consistent trajectories. AI images often show stronger deviations and different noise statistics.
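To make the inversion concrete, the relation above can be rearranged into the standard deterministic DDIM step. Given a noise estimate \(\epsilon_\theta(x_t, t)\), first estimate the clean latent, then re-noise it to the next, noisier level:

\[ \hat{x}_0 = \frac{x_t - \sqrt{1-\alpha_t} \, \epsilon_\theta(x_t, t)}{\sqrt{\alpha_t}}, \qquad x_{t+1} = \sqrt{\alpha_{t+1}} \, \hat{x}_0 + \sqrt{1-\alpha_{t+1}} \, \epsilon_\theta(x_t, t) \]

Below is a minimal NumPy sketch of that loop, assuming \(\alpha_t\) denotes the cumulative noise schedule. The names `ddim_invert` and `make_toy_eps_model` are hypothetical, the linear schedule is an arbitrary choice, and the toy noise predictor is only a placeholder for the Stable Diffusion UNet that a real pipeline would call.

```python
import numpy as np

def ddim_invert(x0, eps_model, alphas):
    """Deterministic DDIM inversion (clean -> noisy).

    x0        : clean latent as a NumPy array
    eps_model : callable (x, step_index) -> predicted noise, same shape as x
    alphas    : 1-D array of cumulative alpha values, decreasing from ~1 toward 0
    Returns the list of latents (len(alphas) entries) and the list of
    per-step noise predictions (len(alphas) - 1 entries).
    """
    x = np.asarray(x0, dtype=np.float64)
    latents, noises = [x], []
    for i in range(len(alphas) - 1):
        a_t, a_next = alphas[i], alphas[i + 1]
        eps = eps_model(x, i)
        # Clean-latent estimate implied by the current noise prediction
        x0_pred = (x - np.sqrt(1.0 - a_t) * eps) / np.sqrt(a_t)
        # Re-noise that estimate to the next (noisier) level with the same eps
        x = np.sqrt(a_next) * x0_pred + np.sqrt(1.0 - a_next) * eps
        latents.append(x)
        noises.append(eps)
    return latents, noises

def make_toy_eps_model(alphas):
    """Hypothetical stand-in for the diffusion UNet: the ideal noise estimate
    when clean latents are unit Gaussian. Not a trained model."""
    def eps_model(x, i):
        return np.sqrt(1.0 - alphas[i]) * x
    return eps_model

# 16 noise levels give 15 inversion steps; the schedule values are illustrative.
alphas = np.linspace(0.999, 0.05, 16)
# Random array standing in for a 4-channel VAE latent of a 512x512 image.
x0 = np.random.default_rng(0).standard_normal((4, 64, 64))
latents, noises = ddim_invert(x0, make_toy_eps_model(alphas), alphas)
print(len(latents), len(noises))
```

The returned `latents` and `noises` lists are the kind of per-step trajectory that the forensic statistics would be computed from.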

What I Learned

- Security through understanding: The best defenses come from knowing how the attack works.

- Diffusion models are measurable: Even when images look perfect, their latent trajectories aren’t.

- Classifiers are only as good as their features: Thoughtful extraction matters more than model size.

- UX matters: A detection tool is only useful if it explains its result clearly.

Challenges

- Model access: Some diffusion checkpoints require authentication, so I needed stable public baselines.

- Runtime cost: DDIM inversion is slow on CPU, so I optimized sampling and visualization.

- Explainability: Turning latent traces into interpretable forensic signals took iteration.

Why Ethical Hacking?

Deepfake Diffuser treats AI models like a black box to be audited—not exploited. The goal isn’t to break systems, but to understand them well enough to protect others. That’s the spirit of ethical hacking: learn deeply, test rigorously, and build responsibly.
