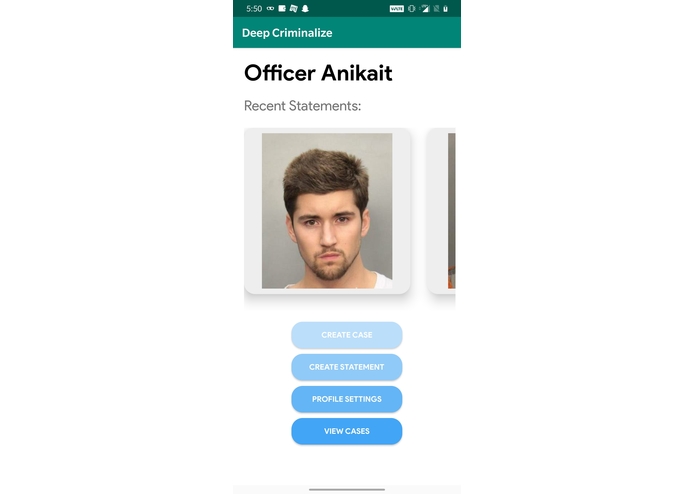

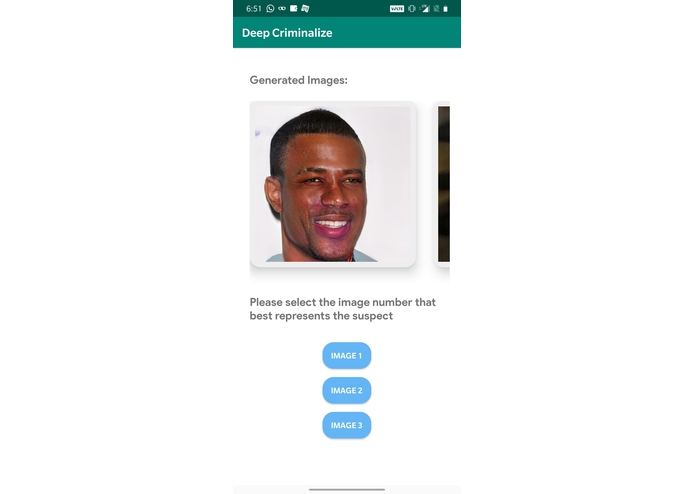

Our landing page

-

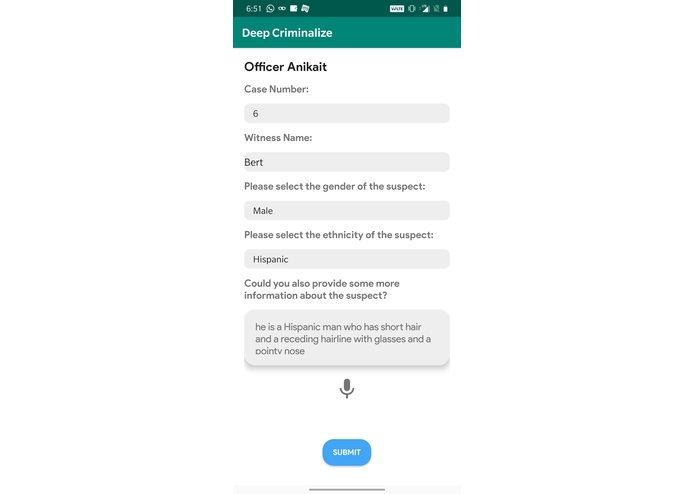

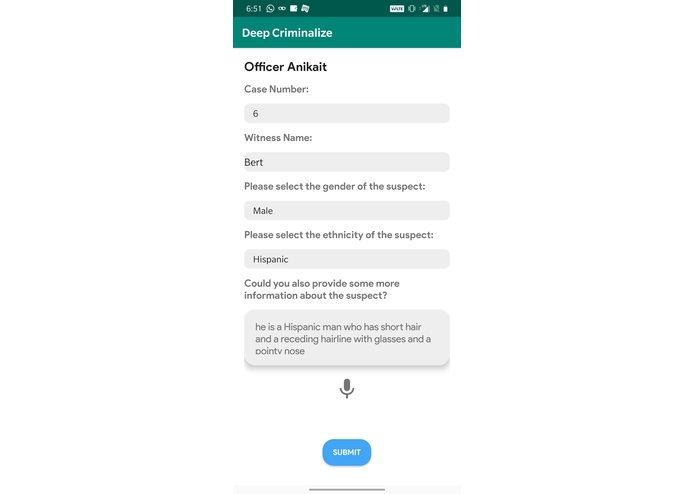

Case files, with speech-to-text functionality

-

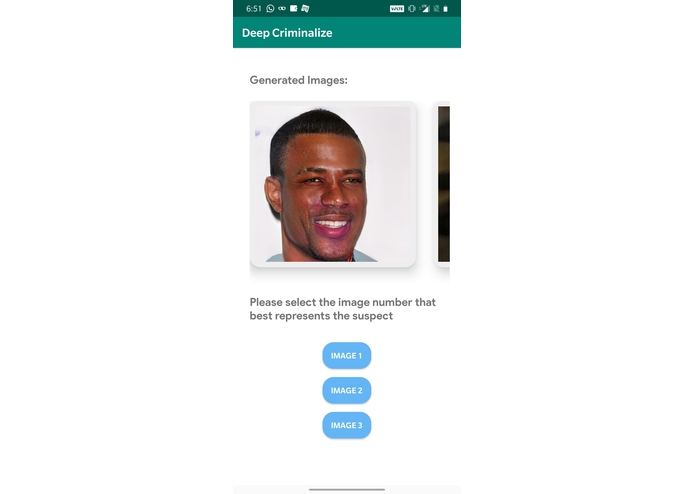

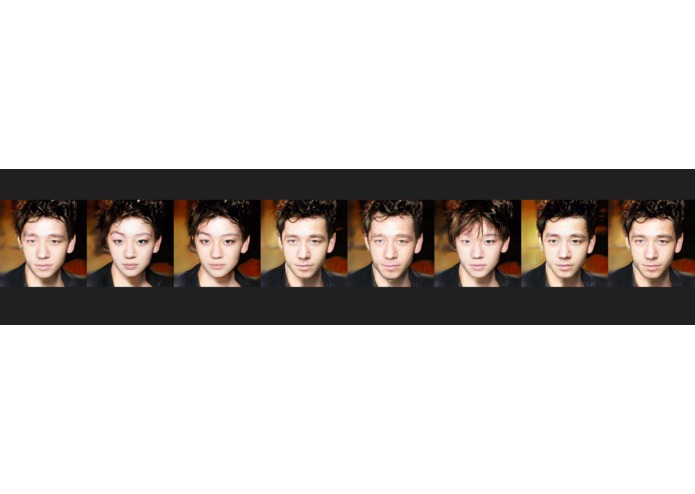

A set of three images generated by the GAN based on our case description, which can be updated and fine-tuned for further descriptions.

-

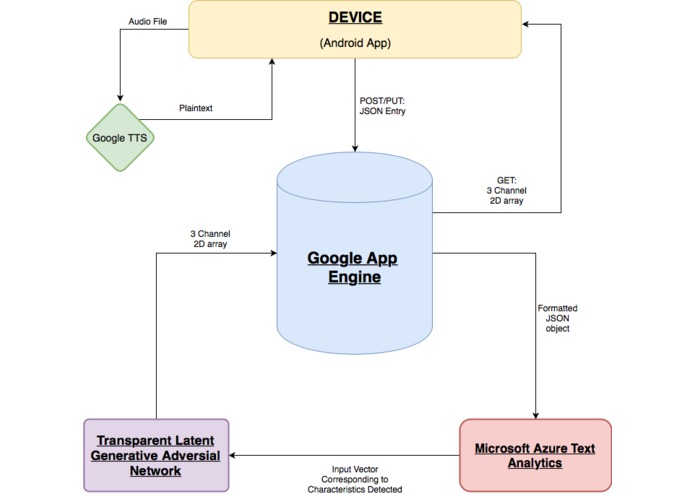

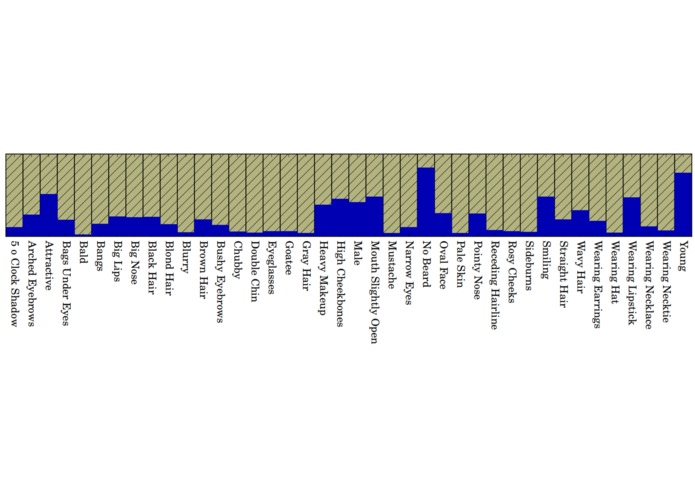

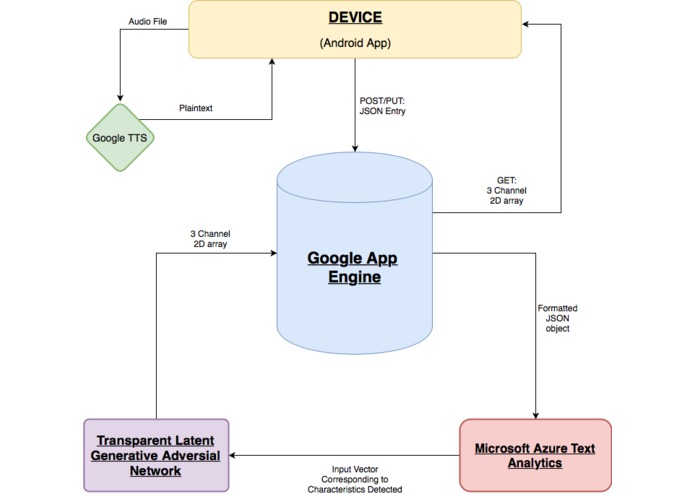

Visualizing our data flow

-

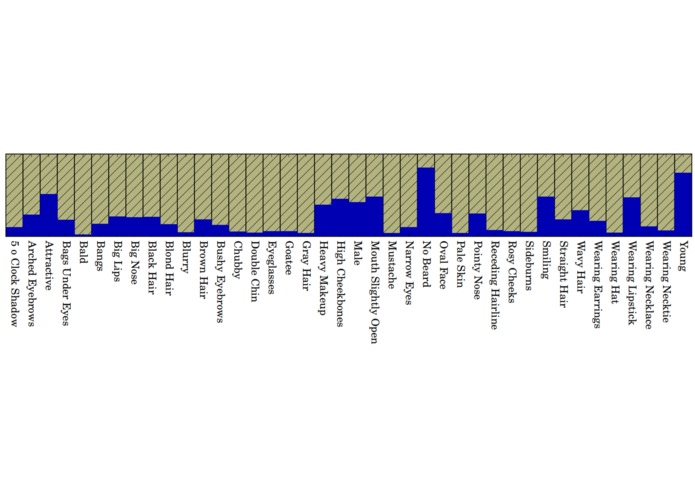

Visualizing our GAN training dataset.

-

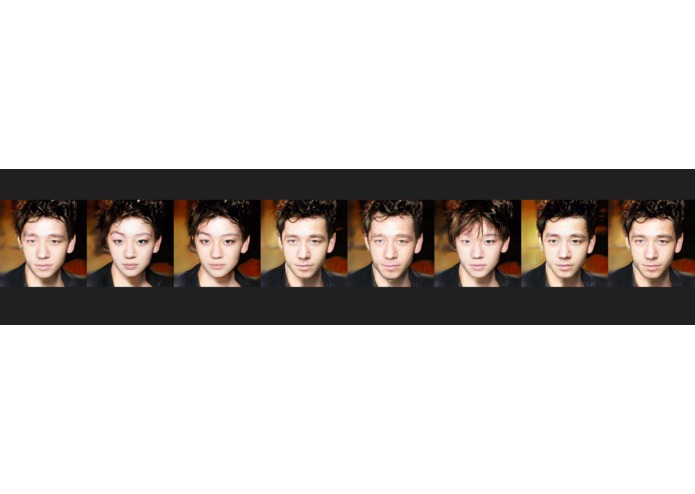

The set of images as case descriptions are being updated

-

Another set of images as case descriptions are being updated

Inspiration

Because of the rising number of criminal cases, sketch artists are often unavailable or backlogged. Sketches can also be highly unrealistic when the artist has too little information to work from. To address this, we created an Android app that uses a pg-GAN to render realistic images based on witness descriptions. The project is challenging to implement, but it could revolutionize criminal identification by providing realistic images that are more likely than a sketch to produce a match when searched against an existing criminal database.

What it does

The project has three parts: a mobile interface that lets police officers set up case files and record and transcribe witness testimony; a database that stores witness information, case testimonies, and the generated images; and a server-based backend that takes the transcribed testimony, extracts descriptions of facial features, and generates an image of the criminal from those descriptions. We are also working on a translation feature that accepts descriptions in any language. In the future we will include classification of specific features, such as tattoos, to link criminals to gangs, areas, and so on.
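As a rough illustration of the "extract descriptions of facial features" step, the sketch below pulls attribute labels out of a transcript with simple keyword matching. It is a stand-in for the NLP model, not the model itself: the vocabulary in `ATTRIBUTE_KEYWORDS`, the label names, and the three-word negation window are all assumptions made for this example.

```python
import re

# Hypothetical vocabulary (an assumption for this sketch): phrases a witness
# might say, mapped to attribute labels the image generator could consume.
ATTRIBUTE_KEYWORDS = {
    "beard": "beard",
    "mustache": "mustache",
    "moustache": "mustache",
    "glasses": "eyeglasses",
    "bald": "bald",
    "blond hair": "blond_hair",
    "blonde hair": "blond_hair",
    "black hair": "black_hair",
}

# Words that flip an attribute to "absent" when they appear just before it.
NEGATIONS = ("no", "not", "without")


def extract_attributes(transcript):
    """Return {label: present?} for every known phrase found in the transcript."""
    text = transcript.lower()
    found = {}
    for phrase, label in ATTRIBUTE_KEYWORDS.items():
        pattern = r"\b" + re.escape(phrase) + r"\b"
        for match in re.finditer(pattern, text):
            # Inspect up to three words immediately before the phrase.
            preceding = re.findall(r"[a-z']+", text[:match.start()])[-3:]
            negated = any(word in NEGATIONS for word in preceding)
            found[label] = not negated
    return found


if __name__ == "__main__":
    print(extract_attributes("He had a beard and black hair, but no glasses."))
```

A keyword lookup like this misses paraphrases and long-range negation, which is one reason a trained NLP model is the better fit for real testimony.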

How we built it

Between the four of us, we set up Compute Engine and Firebase, integrated the NLP model, compiled data visualizations, and incorporated the GAN. We also created an intuitive, easy-to-use UI to make the app as seamless as possible to use in time-sensitive circumstances. Because the project has many moving parts, it relied heavily on REST APIs and other integration techniques.

Challenges we ran into

In the beginning, we wanted to create a GAN that takes in labels of facial features and outputs images of faces with those features. However, it became apparent that sufficient datasets for this task did not already exist. Furthermore, training conditional GANs on data as complex as facial features was incredibly hard, and not something we could accomplish in 36 hours. After reading about tweaking the facial attributes of GAN outputs by changing the latent variable, we decided to use NVIDIA's pre-trained pg-GAN to generate random faces and then align the facial features with our description after image generation.

What's next for DeepCriminalize

To validate the usefulness of our app, we want to work with a local police department to compare our application against sketches done by a professional sketch artist, measuring the clearance rate and the time it took to identify the criminal in each case. We would collect this data over a one-year period and add it to our datasets to increase accuracy. We would also want to measure usage of the application remotely, to determine what to improve for a full-fledged version of DeepCriminalize.

Currently we use NVIDIA's pre-trained pg-GAN model to generate face images, and we move the noise vector along feature axes in the latent space to tweak facial features according to the witness description. However, NVIDIA's model was trained on the CelebA dataset, which is not an unbiased sample because it favors certain groups of people over others. Going forward, we plan to develop and train our own GAN that takes labeled facial features directly as inputs and outputs images of faces with those features.
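Moving the noise vector along a feature axis amounts to computing z' = z + αn, where n is a unit direction associated with one attribute and α controls how strongly it is applied. The sketch below shows only that arithmetic, under stated assumptions: the 512-dimensional latent size, the function names, and the random stand-in for the feature axis are illustrative, and no generator is called. How the feature axes were actually obtained is not described here.

```python
import math
import random

LATENT_DIM = 512  # assumed size of the generator's input noise vector


def random_latent(rng, dim=LATENT_DIM):
    """Sample a noise vector from a standard normal distribution."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def normalize(vector):
    """Scale a vector to unit length so `strength` has a consistent meaning."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


def shift_latent(z, direction, strength):
    """Return z + strength * unit(direction); z itself is left unchanged."""
    if len(z) != len(direction):
        raise ValueError("latent vector and direction must have the same length")
    unit = normalize(direction)
    return [zi + strength * ui for zi, ui in zip(z, unit)]


if __name__ == "__main__":
    rng = random.Random(0)
    z = random_latent(rng)
    # Stand-in axis: a real one would be derived from labeled generator outputs.
    beard_axis = random_latent(rng)
    z_more_beard = shift_latent(z, beard_axis, strength=2.0)
    z_less_beard = shift_latent(z, beard_axis, strength=-2.0)
    print(len(z_more_beard), len(z_less_beard))
```

Positive and negative values of `strength` push the same face in opposite directions along the attribute, so one axis covers both "add" and "remove" edits.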

Citation

Tero Karras, Timo Aila, Samuli Laine, and Jaakko Lehtinen. 2017. Progressive Growing of GANs for Improved Quality, Stability, and Variation.

Built With

- azure

- flask

- google-compute-engine

- java

- microsoft

- python

- tensorflow
