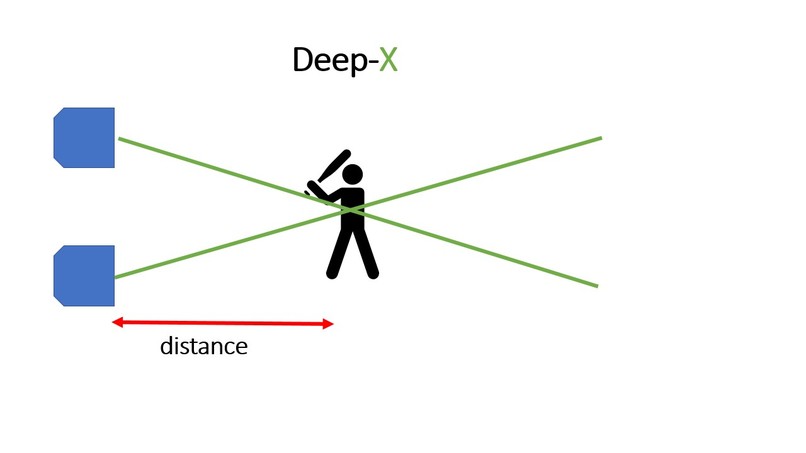

Deep-X - Seeing with your ears

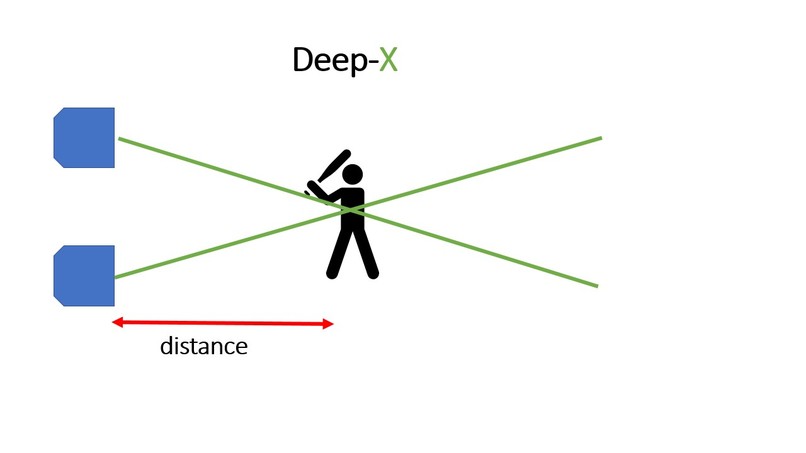

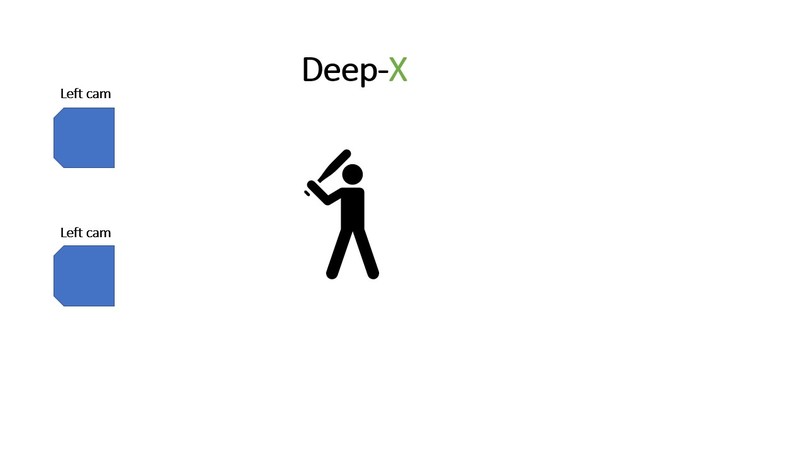

Stereo feed

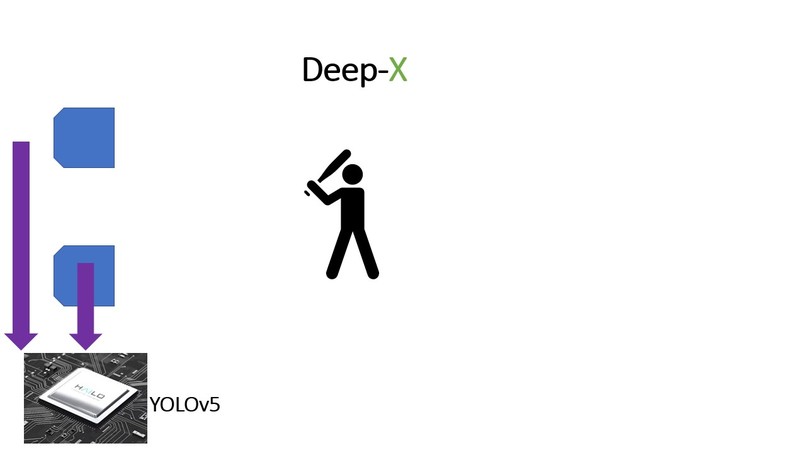

Hailo8 hardware running the YOLOv5 object detection network

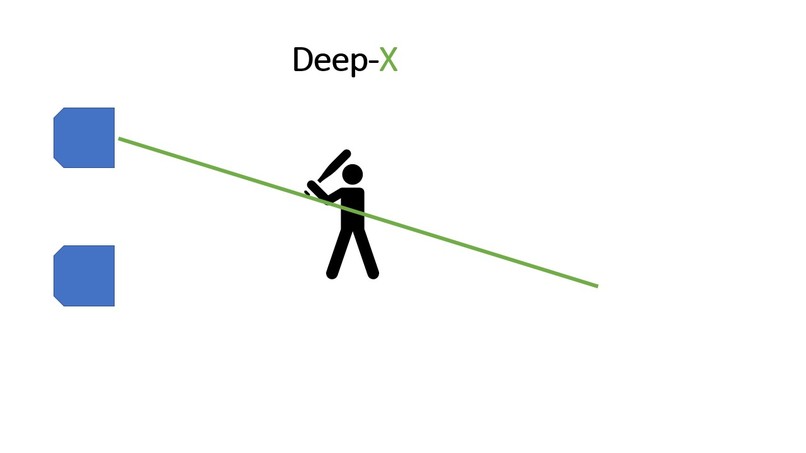

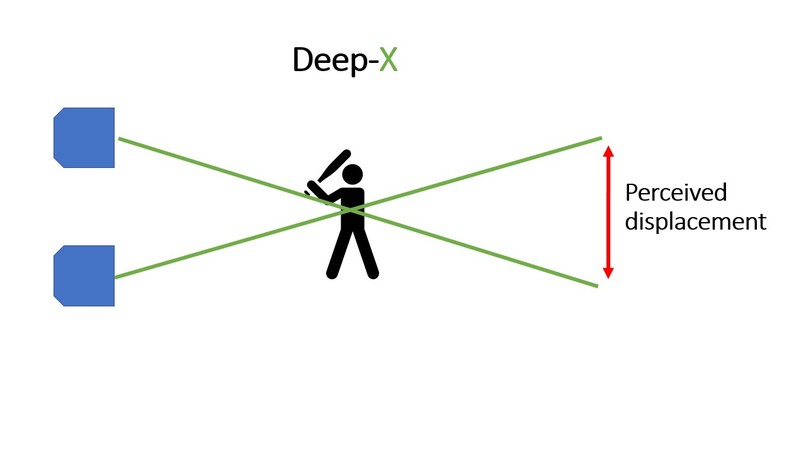

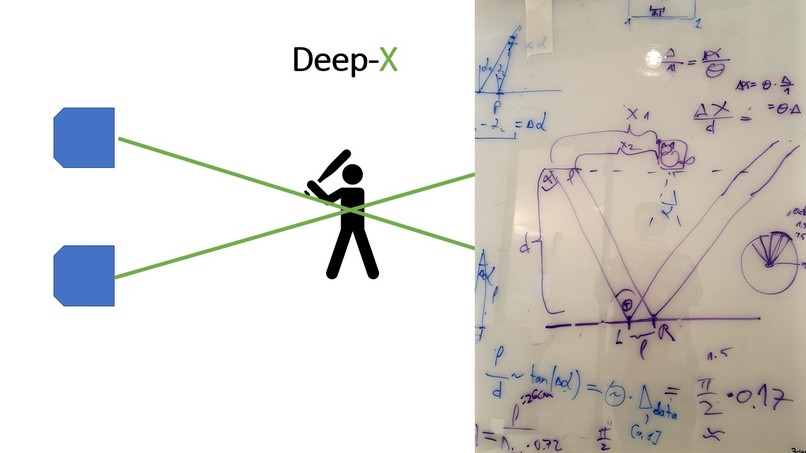

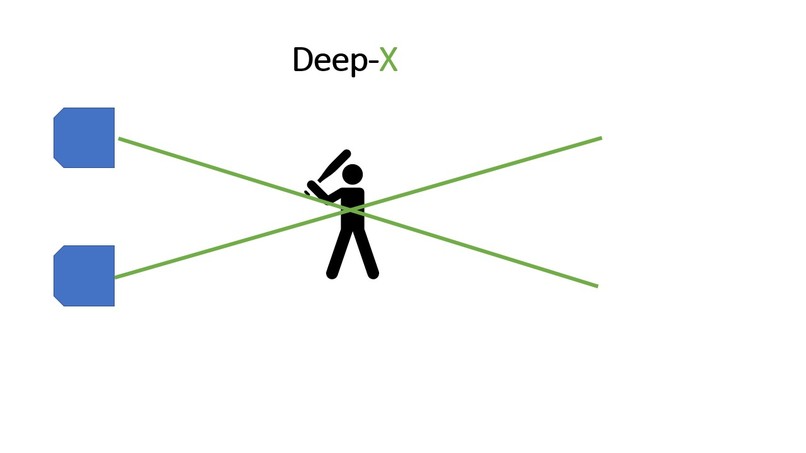

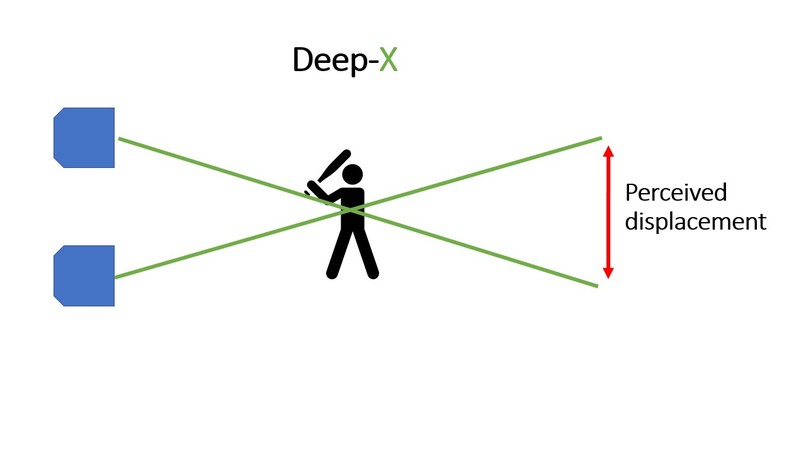

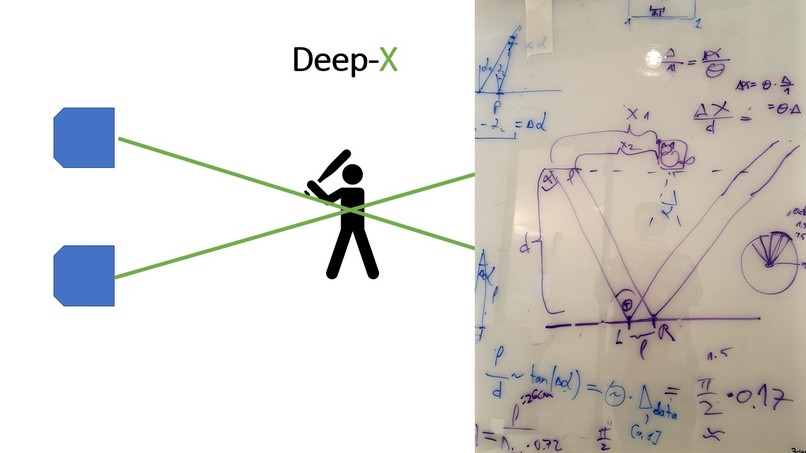

Calculate the perceived displacement of objects between the two frames

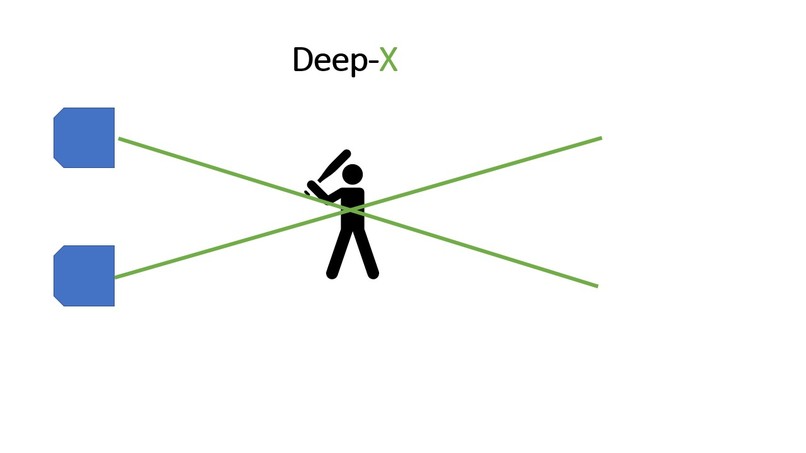

Triangulate

Calculate object distance from the system

Output a voice alert about object class, distance and direction

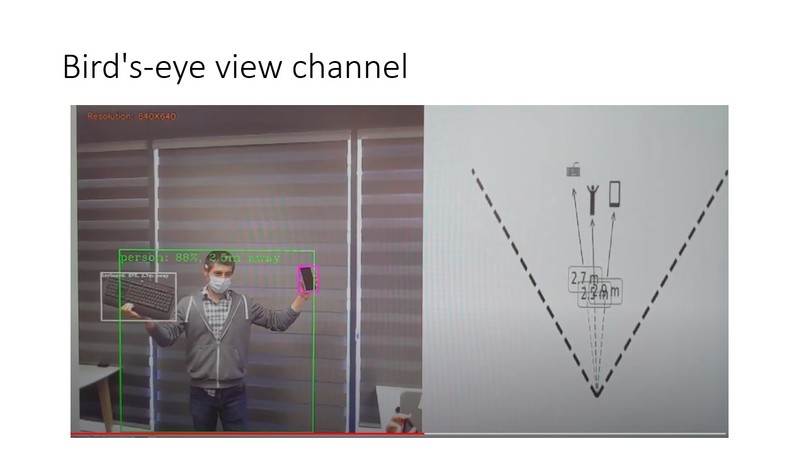

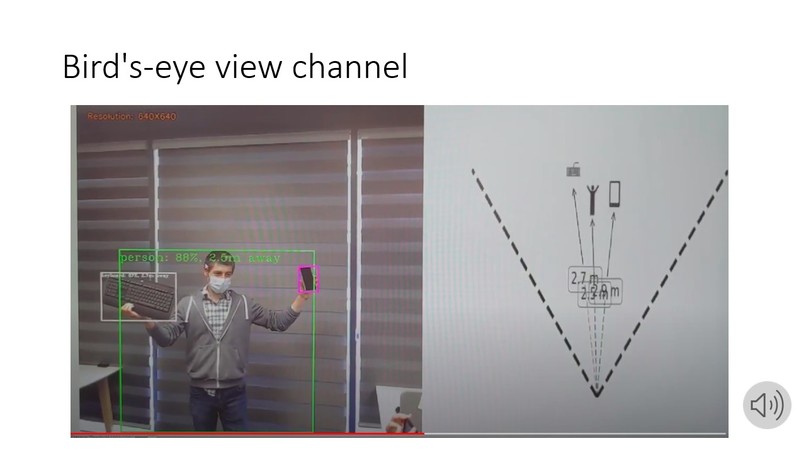

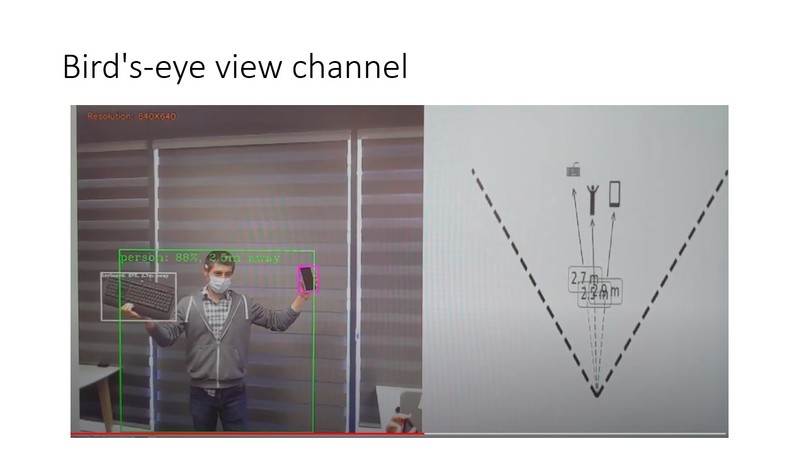

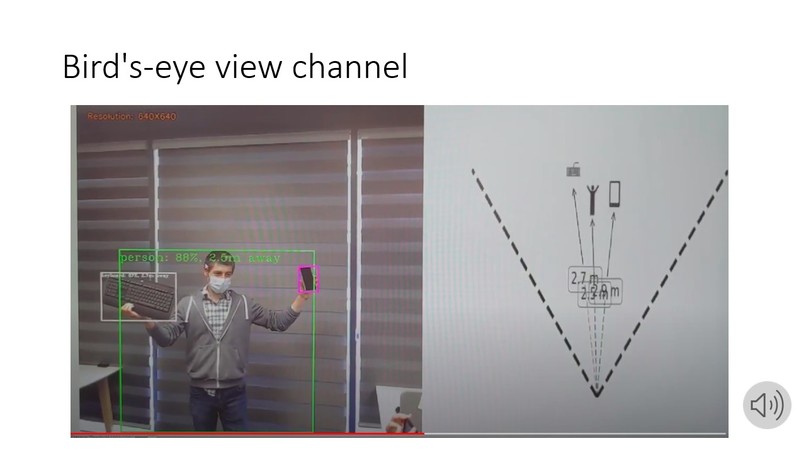

Create a bird's-eye view of the scene for low-resource analysis.

The team members with the stereo prototype

Project Description

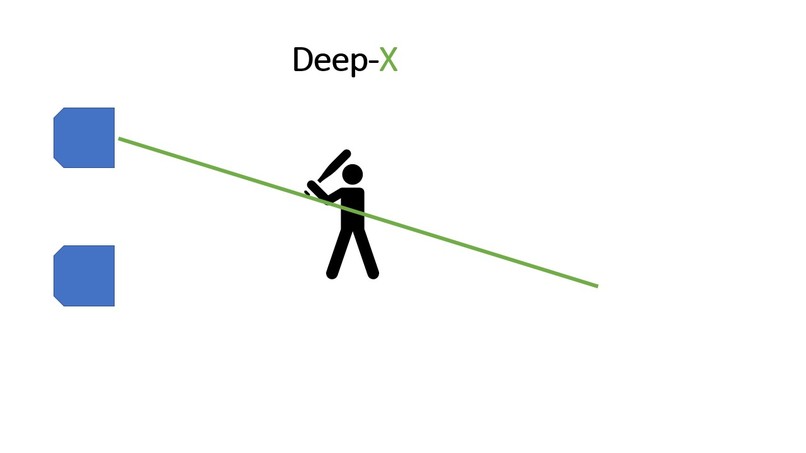

We use an aligned dual-camera setup and run a strong object detection network (YOLOv5) on both the left and right images, with small high-level additions to the demo infrastructure. We then use the disparity between the bounding-box coordinates detected in the left and right views to infer the distance to each object via (simplified) triangulation.
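The simplified triangulation can be illustrated with a minimal sketch. It is not the project's actual code: it assumes rectified, horizontally aligned cameras, a known focal length in pixels and a known baseline in meters, and that the left and right boxes have already been matched to the same object. The names `Box`, `distance_from_disparity`, `focal_px` and `baseline_m` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Box:
    """A detection reduced to its label and horizontal extent in pixels."""
    label: str
    x_min: float
    x_max: float

    @property
    def center_x(self) -> float:
        return 0.5 * (self.x_min + self.x_max)


def distance_from_disparity(
    left: Box, right: Box, focal_px: float, baseline_m: float
) -> Optional[float]:
    """Estimate distance with the pinhole stereo relation Z = f * B / d.

    d is the horizontal shift of the box center between the left and right
    views. Returns None when the shift is not positive, since the relation
    is undefined there (object at infinity or a mismatched pair).
    """
    disparity_px = left.center_x - right.center_x
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px


# Illustrative values only: 800 px focal length, 10 cm baseline.
left_box = Box("person", 400.0, 440.0)
right_box = Box("person", 360.0, 400.0)
distance_m = distance_from_disparity(left_box, right_box, focal_px=800.0, baseline_m=0.1)
```

Because the distance is inversely proportional to the disparity, a one-pixel error in the box center matters more for distant objects, which is one reason box quality is important here.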

The distance-augmented detections are presented to the user as a multi-sensory experience: the normal demo video stream is augmented with a "Radar", or bird's-eye view, that plots the positions of objects on the office floor. In addition, as a sneak peek at upcoming wearable applications, we give audible alerts and navigation tips.
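As a rough sketch of how a detection with a known distance could be placed on the "Radar" view and turned into alert text, consider the following. It assumes a pinhole camera with the principal point at the image center and treats the estimated distance as forward depth. The function names, the 10-degree "ahead" threshold and the message format are all hypothetical, and the text-to-speech step is left out.

```python
import math
from typing import Tuple


def bird_eye_position(
    center_x_px: float, distance_m: float, focal_px: float, image_width_px: float
) -> Tuple[float, float]:
    """Return (lateral_m, forward_m) on the floor plane.

    Lateral offset follows the pinhole relation X = Z * (u - c_x) / f,
    with c_x assumed to be the horizontal image center.
    """
    principal_x = image_width_px / 2.0
    lateral_m = distance_m * (center_x_px - principal_x) / focal_px
    return lateral_m, distance_m


def alert_text(label: str, lateral_m: float, forward_m: float) -> str:
    """Build a short message with object class, distance and direction."""
    angle_deg = math.degrees(math.atan2(lateral_m, forward_m))
    if angle_deg > 10.0:  # hypothetical threshold
        direction = "to the right"
    elif angle_deg < -10.0:
        direction = "to the left"
    else:
        direction = "ahead"
    range_m = math.hypot(lateral_m, forward_m)
    return f"{label}, {range_m:.1f} meters, {direction}"


# Illustrative values only: a 640 px wide image and an 800 px focal length.
lateral, forward = bird_eye_position(480.0, 2.0, focal_px=800.0, image_width_px=640.0)
message = alert_text("person", lateral, forward)
```

The resulting `(lateral, forward)` pairs can be drawn as points on a 2D scatter plot to form the bird's-eye view, and the message string can be passed to any speech synthesizer.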

Usefulness

This significantly upgrades the basic object detection proto-app, enabling a bird's-eye view of the scene (for the first time at Hailo). Technologically, it does not rely on a specialized depth estimation network, which may free up resources for other tasks or for stronger object detection. That can be an advantage in cases where only the distance to the objects matters, not a full depth map.

Down the road, it can help visually impaired people, as well as office robots, navigate indoor (or outdoor) environments.

User Adoption

Our code is lean and high-level, with no significant changes to the underlying infrastructure, which makes adoption easy.

Industry and Market

Internal: Hailo demos. Wearables: for visually impaired persons and augmented attention (think OrCam's products). Office robots.

The Hailo Difference

The underlying technology relies on strong object detection networks, for which the Hailo chip is an enabler (compared to competing hardware that can only run mobilenet_ssd, whose box quality is not sufficient for this application). Coupled with smooth performance through low latency, high FPS and, of course, low power consumption suitable for wearables and small robots, this supports our basic claims.

The resulting object-detection-2.0 is a step forward for Hailo's demos, enabling a bird's-eye view of the scene for the first time.

Built With

- cardboard

- hailo8

- matplotlib

- opencv

- tappas

- tesa
