-

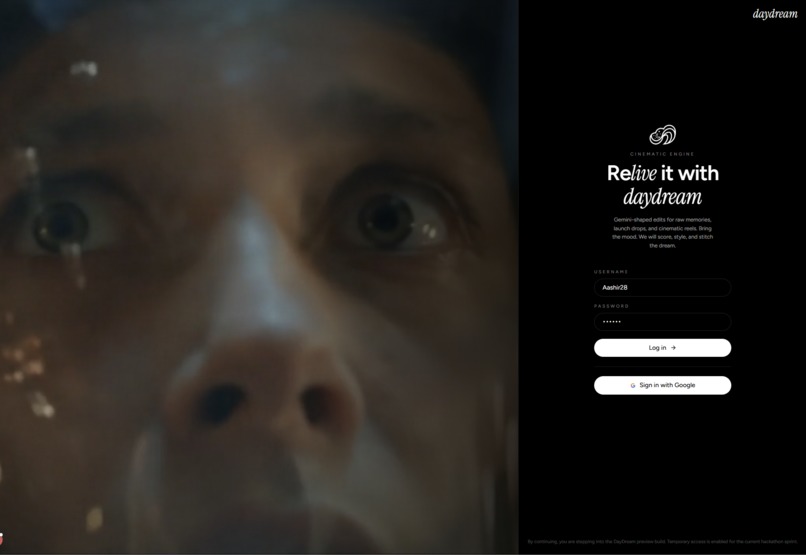

The login gate. A looping cinematic render greets you before entering the DayDream studio.

-

Fully responsive mobile login. Enter the liminal cinematic engine and direct your visions from any device.

-

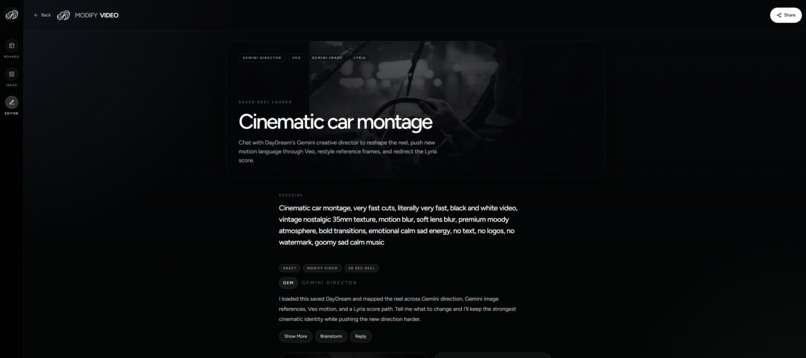

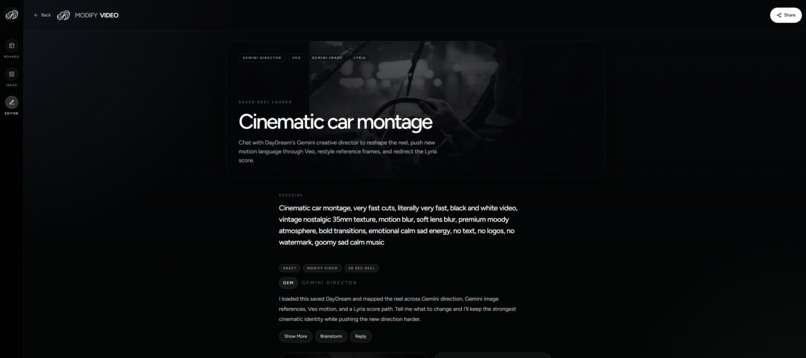

The Studio. A minimal, dark film lab UI. No complex timelines: just type your vibe or upload your camera roll, set your pace, and direct.

-

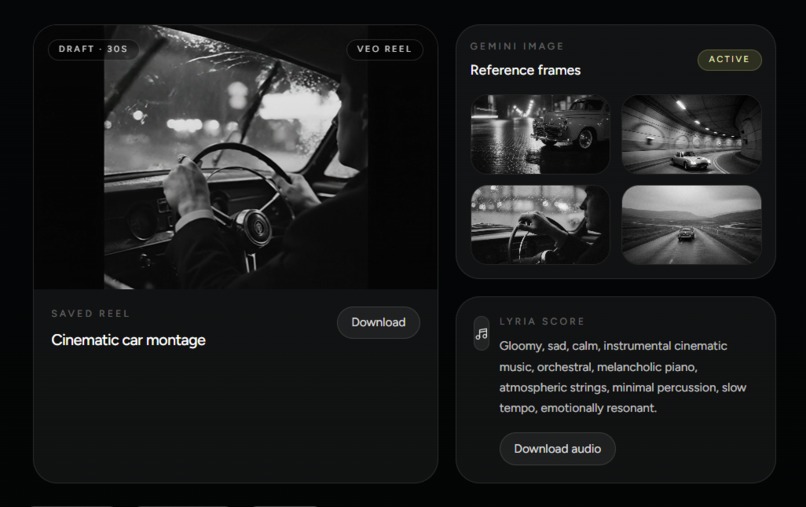

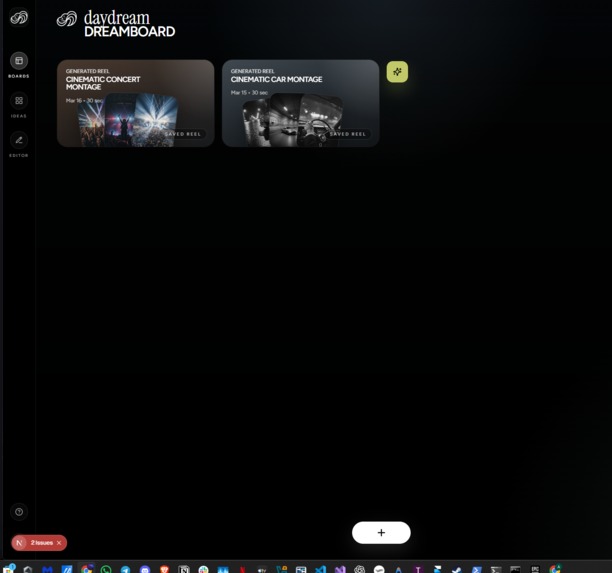

A saved draft in your DreamBoard. Access your generated reels instantly and prepare them for compute-safe remixing.

-

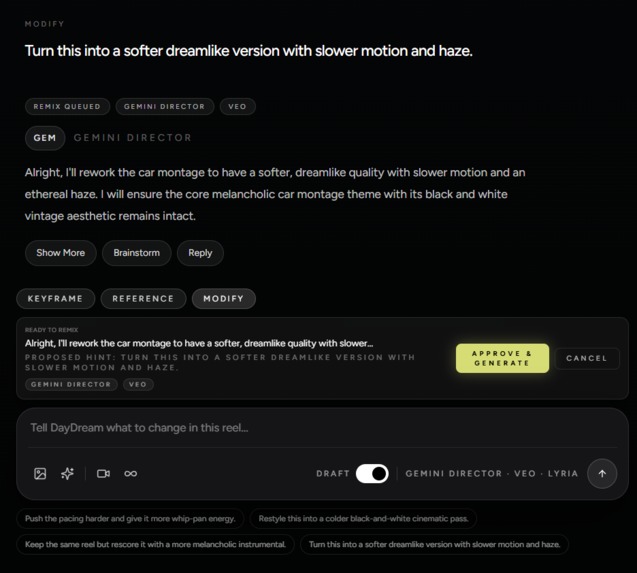

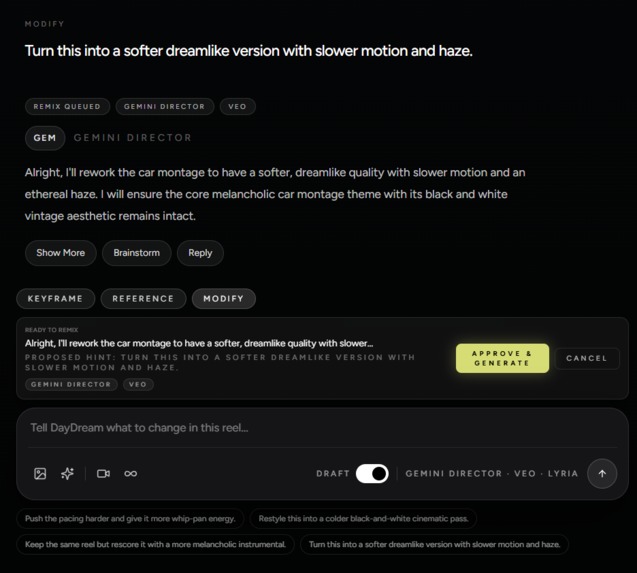

Compute-safe remixing. Chat with the Gemini Director to modify your reel. Approve its proposed plan before triggering new generations.

-

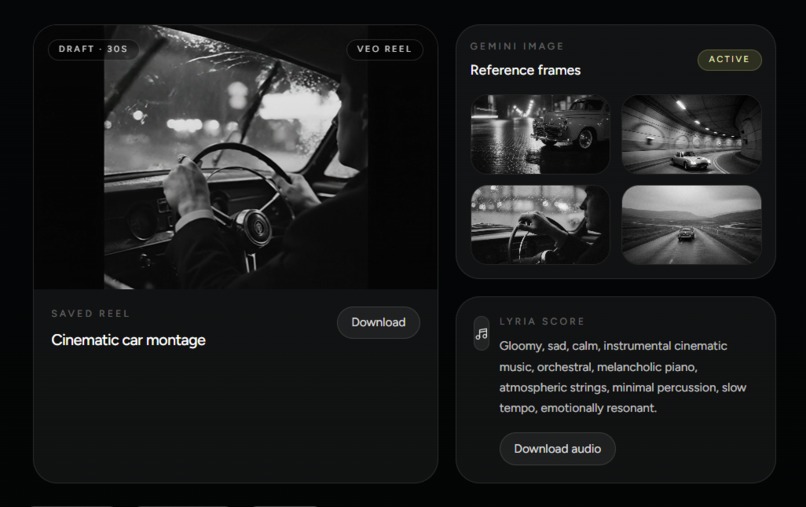

Full asset extraction. Every Lyria audio track, Nano Banana 2 hero frame, and Veo video clip is rendered and fully downloadable.

-

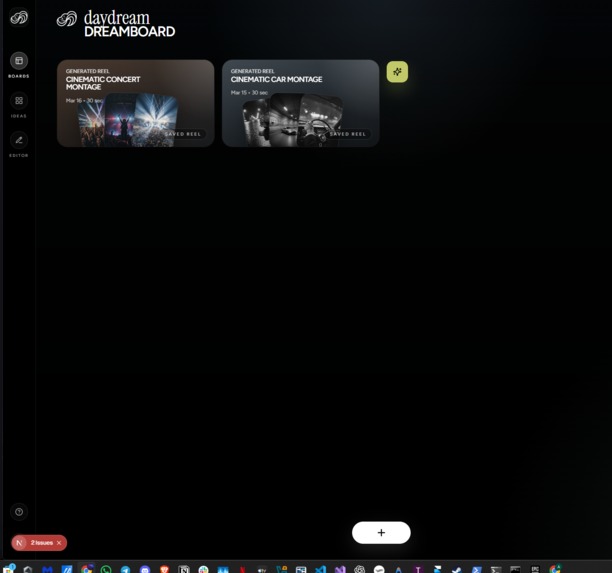

The DreamBoard. Your personal gallery of generated reels and the gateway to chat with the Gemini Director for modifications.

-

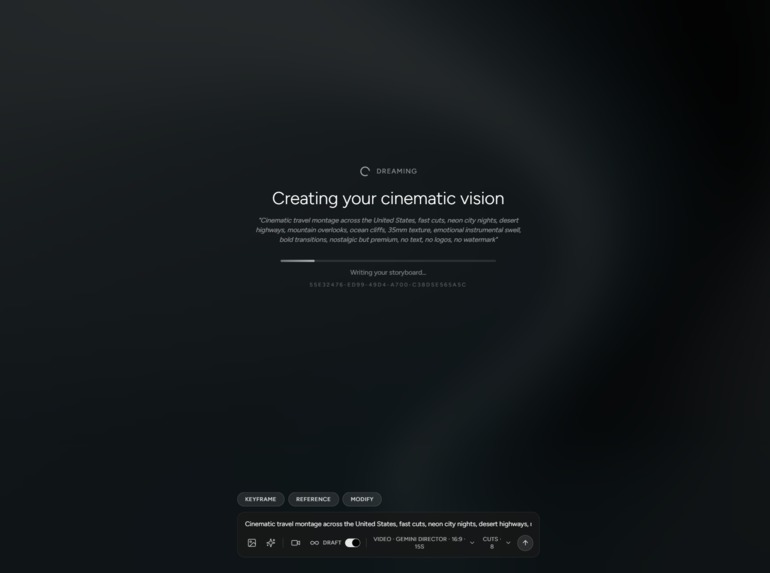

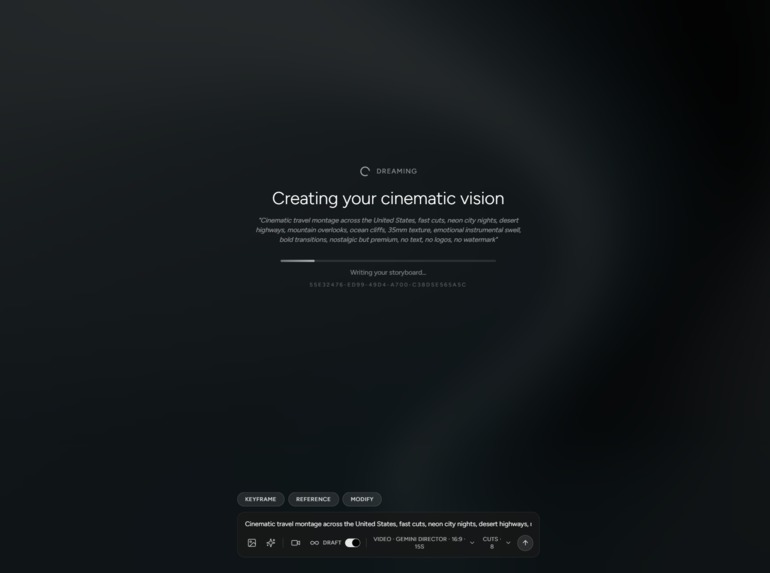

The "Dreaming" overlay. Watch the multimodal pipeline orchestrate Gemini, Veo, and Lyria in real time.

-

The final render. A flawless, beat-synced masterpiece forged by the engine, ready to be exported or remixed in the Studio.

-

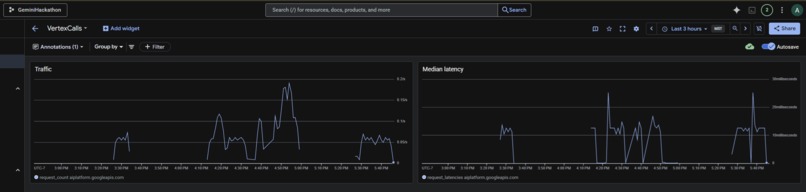

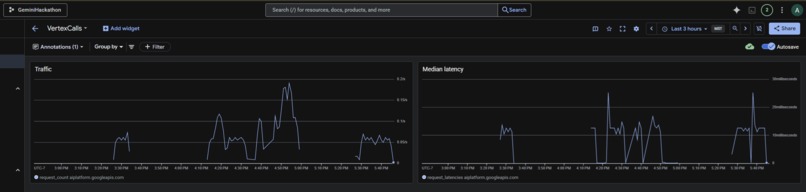

Vertex AI dashboard monitoring our sustained API orchestration, traffic spikes, and median latencies.

-

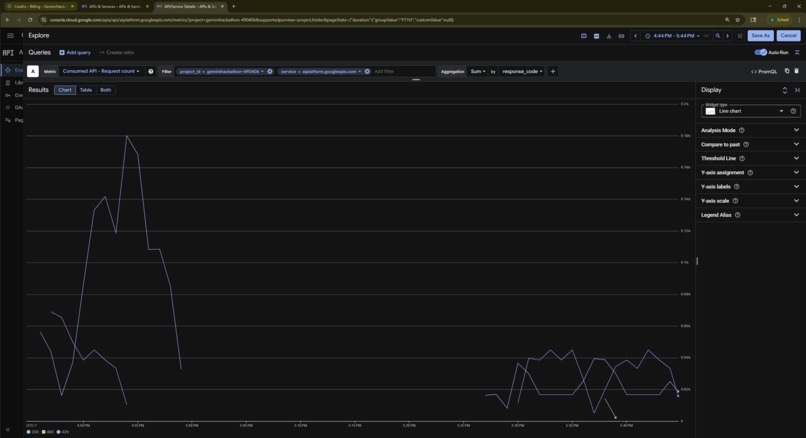

Proof of work: Live request counts showing successful (200 OK) API calls to Vertex AI during our generation loop.

-

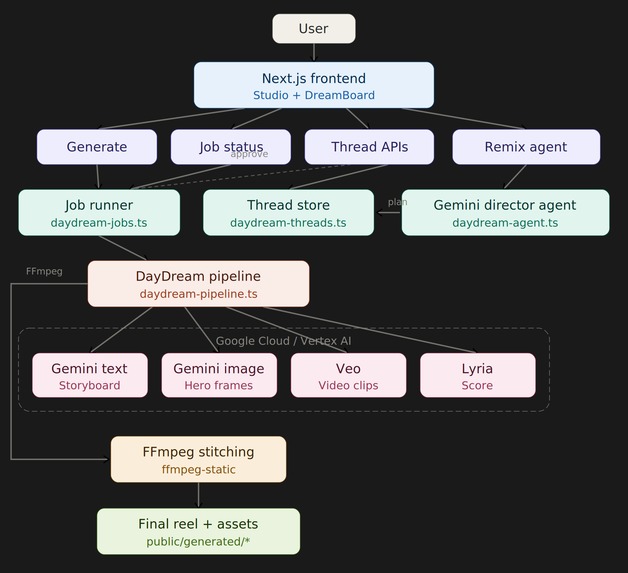

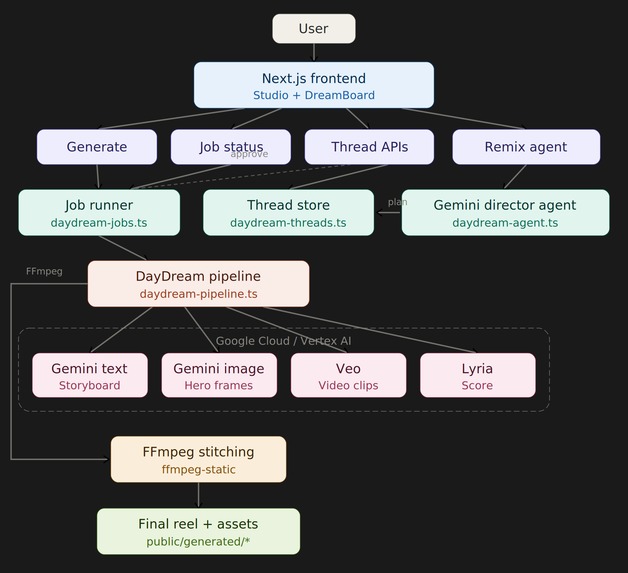

The DayDream architecture.

Inspiration 💡

Right now, creating a cinematic, beat-synced video requires one of two things: hours of manual labor in Premiere Pro, or settling for disjointed, generic slop from standard text-to-video generators. Even with Gemini, Veo, Lyria, and Nano Banana, there is no middle ground for creators who just want to direct a feeling with all the models combined, rather than generating assets separately and stitching them together. I wanted to build the death of the editing timeline: a true "Creative Storyteller" that doesn't just generate clips but acts as a fully autonomous AI film director and an agentic editing engine.

What it does 🎬

DayDream is a cinematic engine that turns a single vibe prompt into a finished, beat-synced short film. You land in a dark, minimal film lab UI. You type a vibe (e.g., "nostalgic 35mm night drive") and select your pacing (like "Velocity Mode" for rapid TikTok-style cuts).

DayDream then orchestrates a massive multimodal pipeline:

- 🎭 The Director: Gemini parses the vibe, writes a storyboard, and passes strict style guidance down the stack.

- 🎵 The Audio Backbone: Lyria 3 generates a high-fidelity instrumental track matching the pacing.

- 📸 The Cinematographer: Nano Banana 2 generates cohesive "Hero Frames" based on the storyboard.

- 🎞️ The Animator: Veo animates each frame into short, fluid clips.

- 🔗 The Synthesizer: The engine stitches the Veo clips together, hard-syncing the scene transitions directly to the tempo of the Lyria track.
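The stage order above can be sketched as a simple data flow. This is a minimal illustration, not DayDream's actual code: the `Reel` dataclass, `run_pipeline`, and the five stage callables are hypothetical names, and each callable is a stand-in for a model call rather than a real SDK function.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Reel:
    """Accumulates the artifact each stage produces (all fields are illustrative)."""
    vibe: str
    pacing: str
    storyboard: List[str] = field(default_factory=list)
    audio: str = ""
    frames: List[str] = field(default_factory=list)
    clips: List[str] = field(default_factory=list)
    final: str = ""


def run_pipeline(
    reel: Reel,
    direct: Callable[[str], List[str]],
    compose: Callable[[str], str],
    shoot: Callable[[str], str],
    animate: Callable[[str], str],
    stitch: Callable[[List[str], str], str],
) -> Reel:
    # Every callable is a placeholder for a model call; none is a real API.
    reel.storyboard = direct(reel.vibe)                  # Director: vibe -> scenes
    reel.audio = compose(reel.pacing)                    # Audio backbone
    reel.frames = [shoot(s) for s in reel.storyboard]    # one hero frame per scene
    reel.clips = [animate(f) for f in reel.frames]       # one clip per hero frame
    reel.final = stitch(reel.clips, reel.audio)          # Synthesizer
    return reel


# Usage with stub stages that only build descriptive strings.
reel = run_pipeline(
    Reel(vibe="nostalgic 35mm night drive", pacing="velocity"),
    direct=lambda vibe: [f"{vibe} / scene {i}" for i in range(1, 4)],
    compose=lambda pacing: f"track[{pacing}]",
    shoot=lambda scene: f"frame({scene})",
    animate=lambda frame: f"clip({frame})",
    stitch=lambda clips, audio: f"{len(clips)} clips over {audio}",
)
print(reel.final)
```

Passing the stages in as callables keeps the ordering logic testable without touching any paid endpoint.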

Everything is saved to your DreamBoard. If you want to change a video, you open the Modify Studio and chat directly with the Gemini Director agent. Instead of blindly regenerating and burning compute, the agent acts like a senior editor, proposing a text-based "Remix Plan" and waiting for your explicit approval before surgically triggering new API calls.
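The plan-then-approve flow can be sketched as a small gate in front of the generation calls. This is an assumption-laden illustration: `RemixStep`, `RemixPlan`, and `execute_plan` are hypothetical names, and `generate` stands in for whatever function would actually hit a model endpoint.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RemixStep:
    target: str   # what to change, e.g. "scene 2 hero frame"
    action: str   # how to change it, e.g. "regenerate with a warmer palette"


@dataclass
class RemixPlan:
    steps: List[RemixStep]
    approved: bool = False  # flipped only by an explicit user action

    def summary(self) -> str:
        """Text-only plan shown to the user before any compute is spent."""
        return "\n".join(
            f"{i}. {s.target}: {s.action}" for i, s in enumerate(self.steps, 1)
        )


def execute_plan(plan: RemixPlan, generate: Callable[[RemixStep], str]) -> List[str]:
    # Refuse to call any generator until the user has approved the plan.
    if not plan.approved:
        raise PermissionError("Remix plan has not been approved by the user")
    return [generate(step) for step in plan.steps]


# Usage: count how many (stubbed) generation calls are made.
calls: List[str] = []

def fake_generate(step: RemixStep) -> str:
    calls.append(step.target)
    return f"regenerated {step.target}"

plan = RemixPlan([
    RemixStep("scene 2 hero frame", "regenerate with a warmer palette"),
    RemixStep("scene 2 clip", "re-animate from the new frame"),
])

try:
    execute_plan(plan, fake_generate)
    blocked = False
except PermissionError:
    blocked = True

plan.approved = True
results = execute_plan(plan, fake_generate)
```

Only the steps listed in the approved plan trigger calls, so untouched scenes cost nothing.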

How I built it 🛠️

I architected the core production environment using TypeScript for a seamless, highly responsive UI and fast API routing, while leveraging Python on the backend to handle the heavy AI orchestration and media stitching.

The entire engine relies on Google's multimodal stack. I used the Gemini Live API as the core reasoning engine to route payloads between the Lyria API (for audio), the Nano Banana 2 API (for stylistic image generation), and the Veo API (for high-fidelity video rendering).

Architecture Diagram 🏗️

Refer to the last slide of the image gallery.

Challenges I ran into 🧗

My biggest hurdle was the 30-second output goal. Veo isn't designed to output a 30-second continuous shot out of the box. I had to engineer a backend loop that combines Veo's short clips with my own scene-stitching logic, ensuring the visual cuts landed on the audio drops generated by Lyria.
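One way to picture the stitching plan: turn a list of cut timestamps into per-clip time windows and check that no window is longer than a single generated clip. The sketch below is illustrative only. `plan_segments` is a hypothetical helper, and the 8-second clip limit is an assumed value for the example, not a figure from this project.

```python
from typing import List, Tuple


def plan_segments(
    cuts: List[float], total: float, max_clip: float
) -> List[Tuple[float, float]]:
    """Turn cut timestamps into (start, end) windows, one per generated clip."""
    # Keep only cuts strictly inside the reel, then add the two outer bounds.
    bounds = [0.0] + sorted(c for c in cuts if 0.0 < c < total) + [total]
    segments = list(zip(bounds, bounds[1:]))
    for start, end in segments:
        if end - start > max_clip:
            raise ValueError(
                f"segment {start}-{end}s exceeds the {max_clip}s clip limit"
            )
    return segments


# Usage: a 30-second reel cut every 6 seconds, assuming clips of at most 8 seconds.
segments = plan_segments([6.0, 12.0, 18.0, 24.0], total=30.0, max_clip=8.0)
for start, end in segments:
    print(f"clip covers {start:>4}s to {end:>4}s")
```

Each window would then be filled by one clip trimmed to `end - start` seconds before concatenation.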

Additionally, I realized that allowing users to simply click "regenerate" on heavy multimodal pipelines was going to burn through API quotas instantly. That is what led to the plan-and-approve flow in the Modify Studio.

Accomplishments that I'm proud of 🏆

I am incredibly proud of the "Compute-Safe Remixing" via the Modify Studio. Forcing the Gemini agent to propose a modification plan and await user approval before hitting the Veo or Nano Banana endpoints is a massive leap in UX and resource management. It gives the user complete creative control without the compute burn.

Proof of Work: Live Vertex AI Orchestration ⚡

Refer to the second-to-last and third-to-last slides in the image gallery.

What I learned 📚

I learned that the secret to high-quality AI video isn't just a better video model; it's multimodal orchestration. By generating the Lyria audio track first and using its metadata to dictate the Veo video transitions, the final output feels intentionally directed rather than randomly generated.
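To make the audio-first idea concrete, here is a minimal sketch of deriving cut timestamps from a track's tempo. It assumes the audio metadata exposes a BPM value, which the write-up does not specify; `beat_cuts` and `beats_per_cut` (a stand-in for the pacing mode) are hypothetical names.

```python
from typing import List


def beat_cuts(bpm: float, duration: float, beats_per_cut: int) -> List[float]:
    """Return cut timestamps (seconds) that fall on every Nth beat of the track."""
    if bpm <= 0 or beats_per_cut < 1:
        raise ValueError("bpm must be positive and beats_per_cut at least 1")
    step = 60.0 / bpm * beats_per_cut  # seconds between consecutive cuts
    cuts: List[float] = []
    k = 1
    while k * step < duration:         # stop before the end of the track
        cuts.append(round(k * step, 3))
        k += 1
    return cuts


# Usage: a 30-second track at 120 BPM.
slow = beat_cuts(bpm=120, duration=30.0, beats_per_cut=8)   # a cut every 4 seconds
fast = beat_cuts(bpm=120, duration=30.0, beats_per_cut=2)   # a cut every second
print(slow)
print(len(fast))
```

A faster pacing mode simply lowers `beats_per_cut`, which produces more, shorter scenes over the same track.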

What's next for DayDream 🚀

I want to expand the engine's inputs beyond text and photos. I envision DayDream as a fully agentic AI editing tool with masking, filters, camera architecture, and every advanced editing feature: production-ready, so that people can use Gemini's abilities in a few shots.

Built With

- gemini

- lyria

- nano-banana-2

- node.js

- python

- react

- typescript

- veo
