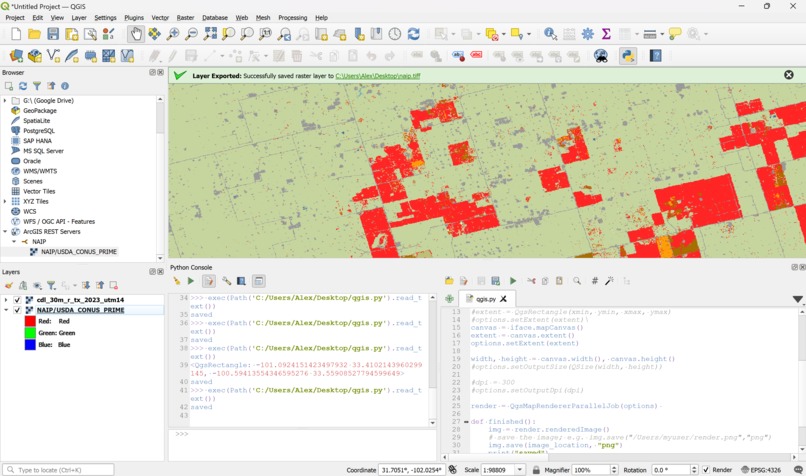

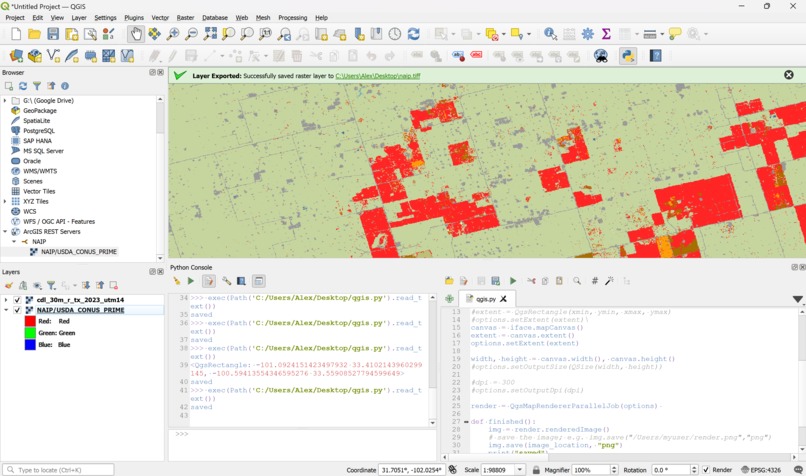

NAIP and CDL datasets overlaid. Green is cotton production area; red is all other land use (details removed for simplicity).

Creating a region of interest (ROI) export for an area on the map.

Frontend using the Leaflet map API to select the location on which to run image segmentation.

QGIS, open-source software for loading and displaying maps and other features.

Inspiration

Because crop classification has a wide range of applications, we knew we wanted to participate in the TAMIDS crop classification challenge.

What it does

This program is a model that takes satellite imagery as input and outputs a mask image indicating which type of crop grows in each area of the image.

How we built it

Designing the Dataset

The first step we took when designing this project was finding a dataset to train our model on. Unfortunately, we couldn't find a large enough dataset to train the neural network on. Because of this, we had to make our own.

To make the dataset, we used Google Earth Engine (GEE), since it was the most comprehensive API library of GIS (geographic information system) data we found. We selected NAIP (the National Agriculture Imagery Program) as our imagery source because its resolution (0.6 meters per pixel) was high enough to recognize cotton crops from above. We selected the Cropland Data Layer (CDL) from the National Agricultural Statistics Service (NASS) as our label source, since it records which type of crop or ground cover occupies each area of the United States. This was our mask layer.

We used QGIS to render subsets of each of these datasets and export them as usable images. Then we used NumPy arrays to segment each image into squares with standardized dimensions and to convert the CDL images into usable training masks. Finally, we used the images to train our custom convolutional neural network, built in PyTorch.
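As a rough illustration of the tiling and mask-conversion step, here is a minimal NumPy sketch. It is not our exact code: the function names `tile_image` and `cdl_to_mask` are hypothetical, and it assumes the CDL export is a rendered RGB image in which cotton is drawn in green and everything else in red, as in the overlay screenshot above.

```python
import numpy as np


def tile_image(image: np.ndarray, tile: int) -> np.ndarray:
    """Split an (H, W, C) array into non-overlapping (tile, tile, C) squares.

    Rows and columns that do not fill a whole tile are dropped.
    """
    h, w, c = image.shape
    rows, cols = h // tile, w // tile
    cropped = image[: rows * tile, : cols * tile]
    # (rows, tile, cols, tile, C) -> (rows, cols, tile, tile, C)
    tiles = cropped.reshape(rows, tile, cols, tile, c).swapaxes(1, 2)
    return tiles.reshape(rows * cols, tile, tile, c)


def cdl_to_mask(cdl_rgb: np.ndarray) -> np.ndarray:
    """Turn a rendered CDL image into a binary mask (1 = cotton, 0 = other).

    Assumption: cotton pixels are the ones where green is the dominant channel.
    """
    r, g, b = cdl_rgb[..., 0], cdl_rgb[..., 1], cdl_rgb[..., 2]
    return ((g > r) & (g > b)).astype(np.uint8)
```

Tiling the imagery and the mask with the same tile size keeps each image square aligned with its label square, which is what the training loop needs.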

UI

For the frontend, we used Next.js and Tailwind. We also used Leaflet, an open-source mapping library, to provide a user interface for selecting the location to send to our neural network. On the backend, we used Flask to host a small server that receives requests from the frontend and runs our image segmentation functionality.
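A backend of this kind can be very small. The sketch below shows roughly what such a Flask server might look like; the `/segment` route, the `lat`/`lng` payload fields, and the `run_segmentation` stub are all hypothetical names chosen for illustration, not the ones in our project.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


def run_segmentation(lat: float, lng: float) -> dict:
    """Hypothetical placeholder for fetching imagery and running the model."""
    return {"lat": lat, "lng": lng, "mask": None}


@app.route("/segment", methods=["POST"])
def segment():
    # The frontend is assumed to POST JSON such as {"lat": 33.5, "lng": -101.8}.
    payload = request.get_json(silent=True) or {}
    try:
        lat = float(payload["lat"])
        lng = float(payload["lng"])
    except (KeyError, TypeError, ValueError):
        return jsonify(error="expected numeric 'lat' and 'lng'"), 400
    return jsonify(run_segmentation(lat, lng))
```

During development, a server like this can be started with `flask run`, and the Leaflet click handler would send the selected coordinates to it.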

Segmentation Neural Network Functionality

The segmentation network has an encoding channel and a decoding channel. First, the original image is sent through the encoding channel, which extracts features such as crop fields from the image. This channel passes the image's RGB layers through two convolutional layers, each followed by a ReLU layer that introduces non-linearity so the network can account for more complex patterns. Each convolutional layer has different kernels that extract different details from the image, such as edges and textures. After the two convolutional layers, the output is sent through a max pooling layer, which further compresses it and keeps the critical features that are essential for segmentation.

Next comes the decoding channel, which takes the output of the encoding channel and reconstructs it back to the original image size. This is done with a transposed convolutional layer, which upsamples the feature map and so reverses the downsampling done by the encoding channel. Lastly, the result is sent through a convolutional layer with a kernel size of 1, which applies a transformation point by point across the image's spatial dimensions and yields a segmented output that matches the original image's resolution.
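To make the layer order concrete, here is a minimal PyTorch sketch of an encoder-decoder like the one described. It is an illustration under assumptions, not our trained model: the class name `SmallSegNet`, the channel width of 16, the 3x3 kernels, and the two output classes (cotton and other) are all choices made for this example.

```python
import torch
from torch import nn


class SmallSegNet(nn.Module):
    """Two conv layers + max pool (encoder), then transposed conv + 1x1 conv (decoder)."""

    def __init__(self, in_channels: int = 3, num_classes: int = 2, width: int = 16):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Conv2d(in_channels, width, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(width, width, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2),  # halves height and width
        )
        # Stride-2 transposed convolution doubles height and width again.
        self.upsample = nn.ConvTranspose2d(width, width, kernel_size=2, stride=2)
        # 1x1 convolution maps each pixel's features to per-class scores.
        self.classifier = nn.Conv2d(width, num_classes, kernel_size=1)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.classifier(self.upsample(self.encoder(x)))
```

For an input batch of shape `(N, 3, H, W)` with even `H` and `W`, the output has shape `(N, num_classes, H, W)`; taking `argmax` over the class dimension gives the predicted mask.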

Challenges we ran into

This was the first time we had tried to build an image segmentation model, and it was made especially hard by the high volume of data we had to sort through. Google Earth Engine also proved much less clear than we expected, and figuring out that API took most of the time we spent on this project. Our original plan was to create a dynamic backend on Google Cloud that would use GEE to fetch and stream an image dataset on demand, but we did not have enough time to accomplish this.

Accomplishments that we're proud of

The dataset generation was especially difficult and took up the majority of our time.

What we learned

We learned a lot about working with and normalizing large amounts of data efficiently.

What's next for Cropland Segmentation Using CNNs and Google Earth Engine

We would like to fully integrate the frontend with our neural network and find more diverse data to train it on.
