Inspiration

We were inspired to build a system that quickly detects credit card fraud, a growing problem for banks and customers. The goal was an efficient, real-time solution built on cloud technologies and machine learning.

What it does

The project detects fraudulent credit card transactions in real time using Google Cloud. It processes transaction data, runs fraud detection algorithms, and sends instant email alerts if fraud is detected.

How we built it

We used Google Cloud services like:

- Pub/Sub for real-time data streaming.

- BigQuery for storage.

- Vertex AI for machine learning models.

- Cloud Functions to send email alerts.

Toolbox 🧰

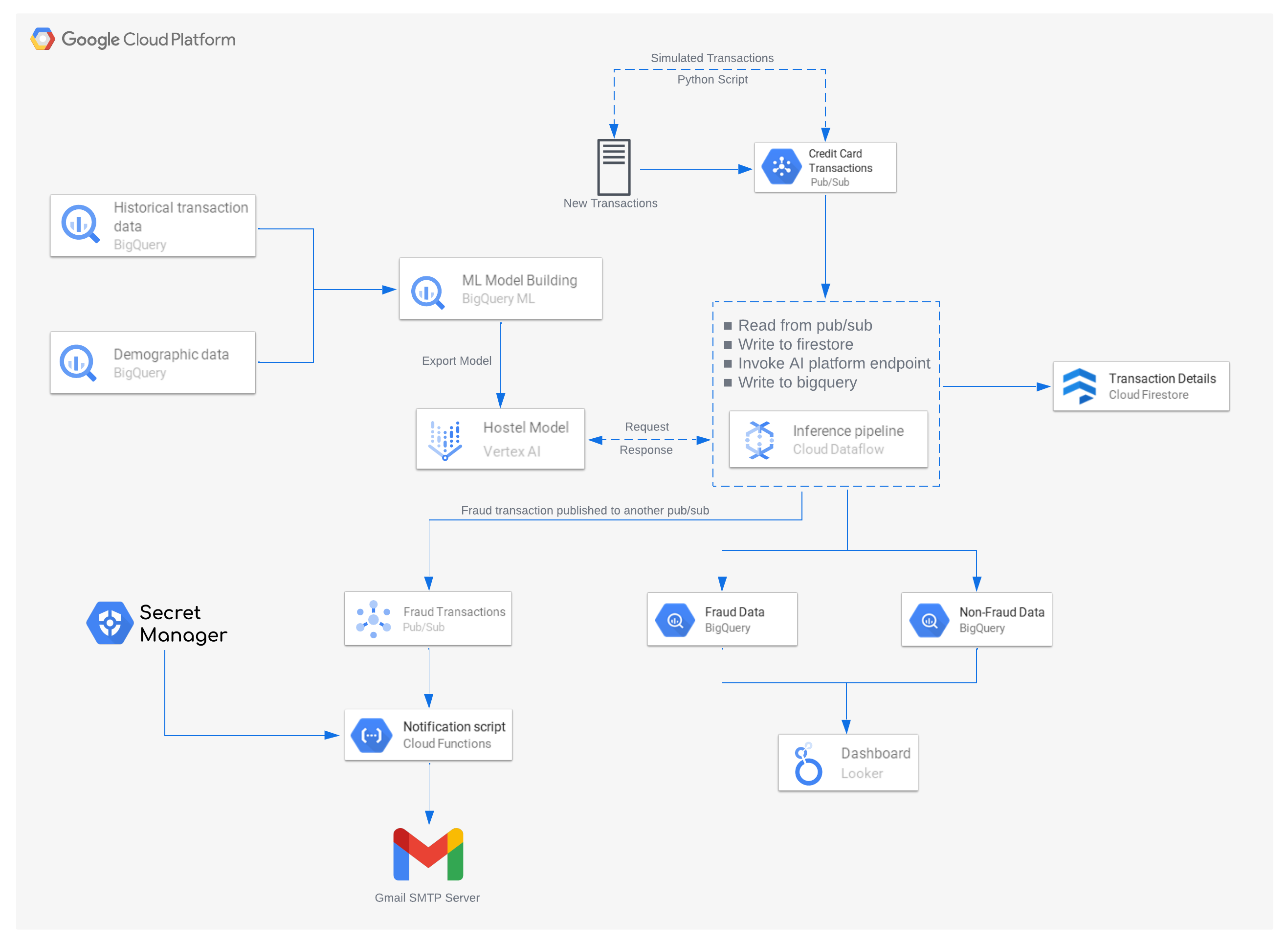

Architecture Diagram

Workflow of the Project

1. Data Preparation

Data Collection: Historical and demographic transaction data stored in BigQuery is used to train the fraud detection model.

Feature Engineering:

Key features include transaction type, transaction amount, balance changes, and origin/destination accounts. Features like type, amount, oldbalanceOrig, newbalanceOrig, oldbalanceDest, and newbalanceDest play a vital role in model training.
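To illustrate the balance-change features mentioned above, here is a minimal sketch that derives them from the raw columns. The raw column names come from the dataset; the derived field names (`origDelta`, `destDelta`, `origMismatch`) are hypothetical and only show one way such features could be computed.

```python
def add_balance_features(txn: dict) -> dict:
    """Return a copy of the transaction with derived balance-change features."""
    out = dict(txn)
    # Change in the origin account balance (negative when money leaves).
    out["origDelta"] = txn["newbalanceOrig"] - txn["oldbalanceOrig"]
    # Change in the destination account balance.
    out["destDelta"] = txn["newbalanceDest"] - txn["oldbalanceDest"]
    # Hypothetical consistency flag: True when the origin balance does not
    # drop by exactly the transaction amount.
    out["origMismatch"] = abs(out["origDelta"] + txn["amount"]) > 1e-6
    return out


example = {
    "type": "TRANSFER",
    "amount": 500.0,
    "oldbalanceOrig": 500.0,
    "newbalanceOrig": 0.0,
    "oldbalanceDest": 100.0,
    "newbalanceDest": 600.0,
}
features = add_balance_features(example)
```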

Preprocessing: Data is cleaned to handle missing values, remove anomalies, and normalize numeric features. The dataset is highly imbalanced, with far fewer fraudulent transactions than legitimate ones. BigQuery ML handles smoothing and one-hot encoding of categorical features automatically during training.

2. Model Training

BigQuery ML:

Used to train models such as KMEANS (clustering), LOGISTIC_REG (logistic regression), and BOOSTED_TREE_CLASSIFIER. The supervised models learn patterns of fraudulent behavior from the labeled data in the isFraud column.

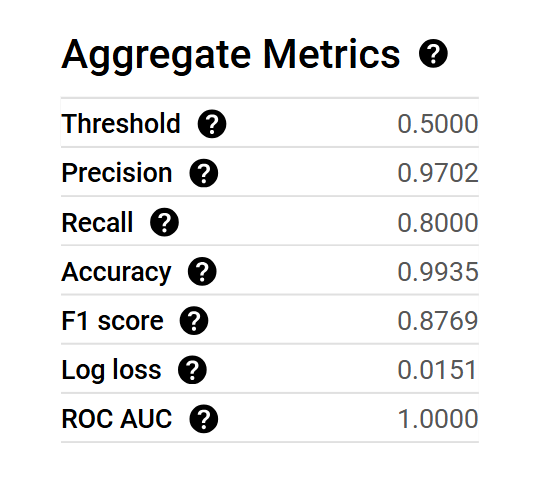
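As a rough sketch of what the training step could look like, the Python helper below assembles a BigQuery ML `CREATE MODEL` statement. The dataset, table, and model names are hypothetical, and `auto_class_weights` is shown only as one possible way to address the class imbalance; the write-up does not confirm which options were actually used.

```python
def build_create_model_sql(model_name: str, source_table: str, model_type: str) -> str:
    """Build a BigQuery ML CREATE MODEL statement for a fraud classifier."""
    feature_cols = [
        "type", "amount",
        "oldbalanceOrig", "newbalanceOrig",
        "oldbalanceDest", "newbalanceDest",
    ]
    # The label column is selected alongside the features.
    select_list = ", ".join(feature_cols + ["isFraud"])
    return (
        f"CREATE OR REPLACE MODEL `{model_name}`\n"
        f"OPTIONS (\n"
        f"  model_type = '{model_type}',\n"
        f"  input_label_cols = ['isFraud'],\n"
        f"  auto_class_weights = TRUE\n"  # assumption: reweight the rare fraud class
        f") AS\n"
        f"SELECT {select_list}\n"
        f"FROM `{source_table}`"
    )


# Hypothetical names, for illustration only.
sql = build_create_model_sql(
    "fraud_ds.fraud_model", "fraud_ds.transactions", "BOOSTED_TREE_CLASSIFIER"
)
print(sql)
```

The resulting statement could then be run from the BigQuery console or a client library; that step is omitted here.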

Evaluation Metrics:

Precision, recall, and F1-score are used to evaluate the model. Because fraud cases are rare, these metrics are more informative than overall accuracy.
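For reference, these metrics can be computed from confusion-matrix counts as in the sketch below. The counts in the example are made up purely for illustration and are not the project's results.

```python
def classification_metrics(tp: int, fp: int, fn: int) -> dict:
    """Compute precision, recall, and F1 from true/false positive and false negative counts."""
    # Precision: share of flagged transactions that were actually fraud.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: share of actual fraud cases that were flagged.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Illustrative counts only (not from the project).
metrics = classification_metrics(tp=80, fp=20, fn=40)
```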

Below are the metrics for the BOOSTED_TREE_CLASSIFIER after training.

3. Model Deployment

- The trained model is deployed on Vertex AI, creating an API endpoint for real-time scoring.

- Vertex AI offers scalability and ensures low-latency predictions.

- Each transaction is scored in real time, generating a fraud probability.

4. Real-Time Data Pipeline

Data Ingestion: Transactional data from on-prem systems is streamed into a Pub/Sub topic in real time. Data is converted to JSON format for processing.
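The JSON conversion step might look roughly like the sketch below. Pub/Sub message payloads are bytes, so the transaction is serialized to UTF-8 encoded JSON. The helper names are hypothetical, and the actual publish call is left out.

```python
import json


def to_pubsub_payload(txn: dict) -> bytes:
    """Serialize a transaction to UTF-8 JSON bytes suitable for a Pub/Sub message body."""
    return json.dumps(txn, sort_keys=True).encode("utf-8")


def from_pubsub_payload(data: bytes) -> dict:
    """Decode a Pub/Sub message body back into a transaction dict."""
    return json.loads(data.decode("utf-8"))


txn = {"type": "CASH_OUT", "amount": 1200.5, "oldbalanceOrig": 1200.5, "newbalanceOrig": 0.0}
payload = to_pubsub_payload(txn)
restored = from_pubsub_payload(payload)
```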

Firestore: Recent transaction data is stored in Firestore for efficient monitoring and retrieval.

Dataflow Pipeline: A Dataflow pipeline processes the Pub/Sub messages, applies transformations, and routes the data for storage and prediction. Each transaction is scored by the Vertex AI model, and predictions are appended to the data.
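The per-message transformation described above can be sketched as a plain function that decodes a message, scores it, and appends the prediction. The `fraudProbability` field name is an assumption, and `stub_scorer` is a toy placeholder standing in for the call to the Vertex AI endpoint, not the real model.

```python
import json
from typing import Callable


def score_message(data: bytes, scorer: Callable[[dict], float]) -> dict:
    """Decode a Pub/Sub message, score it, and append the prediction."""
    txn = json.loads(data.decode("utf-8"))
    enriched = dict(txn)
    # Hypothetical field name for the model output.
    enriched["fraudProbability"] = scorer(txn)
    return enriched


def stub_scorer(txn: dict) -> float:
    """Toy placeholder for the Vertex AI endpoint call (not the real model)."""
    return 0.9 if txn["amount"] > 10000 else 0.1


message = json.dumps({"type": "TRANSFER", "amount": 25000.0}).encode("utf-8")
scored = score_message(message, stub_scorer)
```

In an Apache Beam pipeline, a function like this could be applied to each element with `beam.Map`.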

5. Fraud Detection and Alerts

Fraudulent Transactions: Transactions that the model flags as fraudulent are published to a separate Pub/Sub topic.

Cloud Function:

- Sends email notifications to customers and banks.

- Logs the fraud incident in an internal system for further analysis.

- Sensitive credentials like email SMTP configurations are securely fetched from Secret Manager.
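A minimal sketch of the alert-building part of such a function is shown below. It assumes the event carries the message as base64-encoded JSON under a `data` key, as in Pub/Sub-triggered background functions, and that the scored transaction has a `fraudProbability` field. The addresses are placeholders, and sending the message over SMTP with credentials from Secret Manager is omitted.

```python
import base64
import json
from email.message import EmailMessage


def build_alert_email(event: dict, sender: str, recipient: str) -> EmailMessage:
    """Build a fraud alert email from a Pub/Sub-style event."""
    # Assumption: the payload is base64-encoded JSON under event["data"].
    txn = json.loads(base64.b64decode(event["data"]).decode("utf-8"))
    msg = EmailMessage()
    msg["Subject"] = f"Fraud alert: suspicious {txn['type']} of {txn['amount']:.2f}"
    msg["From"] = sender
    msg["To"] = recipient
    msg.set_content(
        "A transaction was flagged with fraud probability "
        f"{txn['fraudProbability']:.2f}."
    )
    return msg


# Simulated event, for illustration only.
body = {"type": "TRANSFER", "amount": 25000.0, "fraudProbability": 0.93}
event = {"data": base64.b64encode(json.dumps(body).encode("utf-8")).decode("ascii")}
alert = build_alert_email(event, "alerts@example.com", "customer@example.com")
```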

6. Data Storage

- Transactions are categorized based on predictions and stored in two BigQuery tables:

- Fraudulent Transactions Table: Used for further investigation, analytics, and notification purposes.

- Non-Fraudulent Transactions Table: Used for general analysis and reporting.

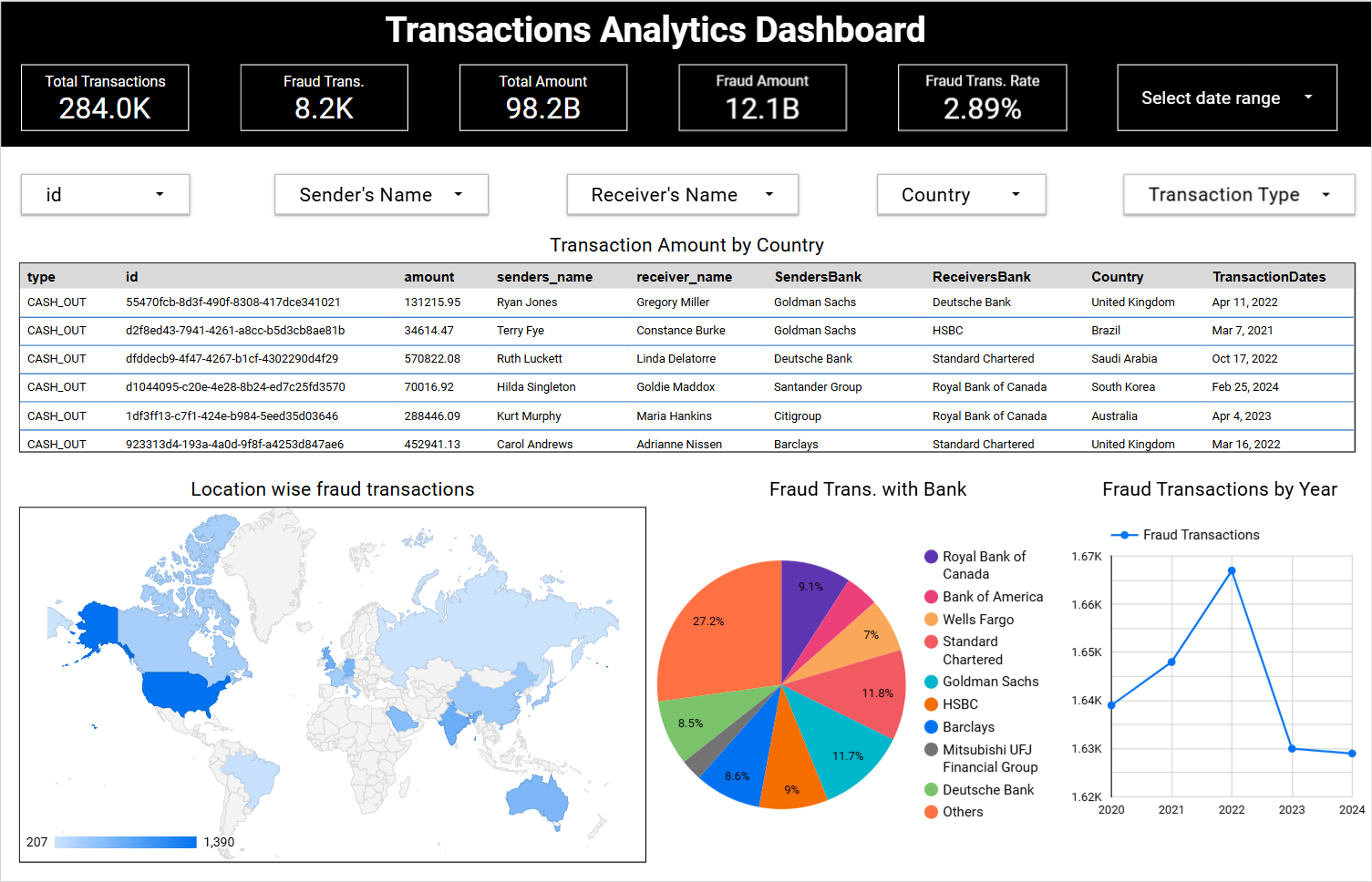
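The split into two tables can be sketched as a simple routing rule on the model output. The table names, the `fraudProbability` field, and the 0.5 threshold are all assumptions made for illustration.

```python
# Hypothetical table names.
FRAUD_TABLE = "fraud_ds.fraudulent_transactions"
NON_FRAUD_TABLE = "fraud_ds.non_fraudulent_transactions"


def target_table(scored_txn: dict, threshold: float = 0.5) -> str:
    """Pick the destination table for a scored transaction."""
    if scored_txn["fraudProbability"] >= threshold:
        return FRAUD_TABLE
    return NON_FRAUD_TABLE


flagged = target_table({"amount": 25000.0, "fraudProbability": 0.93})
cleared = target_table({"amount": 40.0, "fraudProbability": 0.02})
```

Lowering the threshold catches more fraud at the cost of more false alerts, so the value would normally be tuned against the precision and recall targets.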

7. Dashboards and Visualization

Looker Studio Dashboards:

- The interactive dashboard, built on Looker Studio, provides insights into the transaction data:

- Trends in fraudulent transactions over time.

- Region-wise distribution of fraud cases.

- Real-time monitoring and actionable intelligence.

- Live Dashboard

Accomplishments that we're proud of

- Successfully built a real-time fraud detection system that processes over 280,000 (2.8 lakh) transactions.

- Sends fraud alerts instantly and provides a visual dashboard for monitoring fraud trends and supporting decision-making.

Challenges we ran into

- Dealing with large transaction datasets.

- Debugging machine learning model errors.

- Setting up a real-time data pipeline.

- Spending three days debugging the code to get everything working smoothly.

What we learned

- Gained hands-on experience integrating Google Cloud services for real-time data pipelines.

- Worked with machine learning models for fraud detection.

- Learned data engineering techniques and how to use cloud platforms effectively.

Built With

- cloud-function

- cloud-pubsub

- dataflow

- firestore

- google-bigquery

- google-cloud

- looker-studio

- python

- vertex-ai
