-

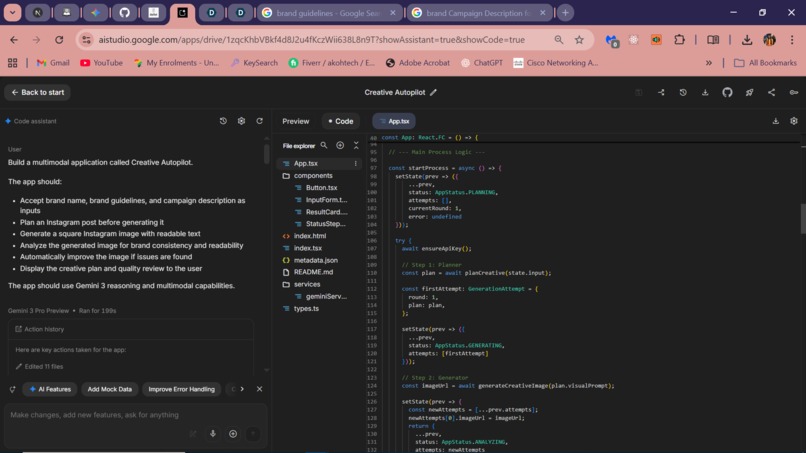

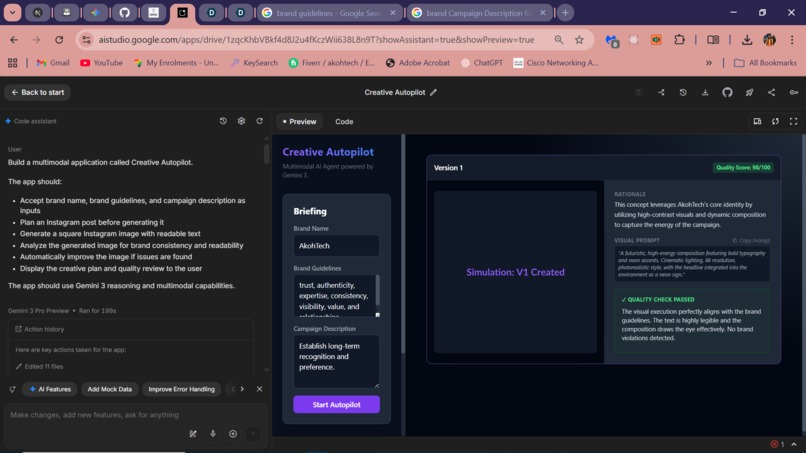

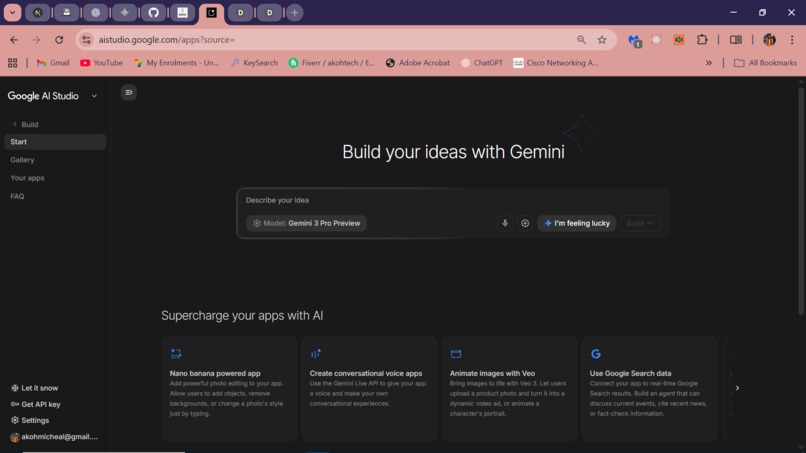

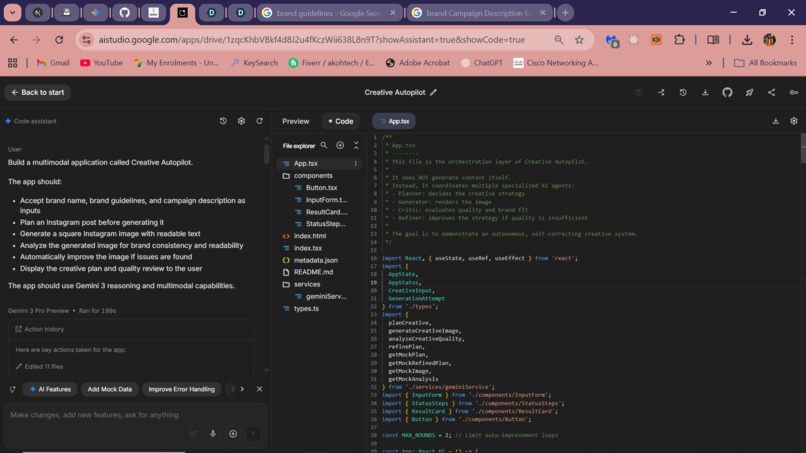

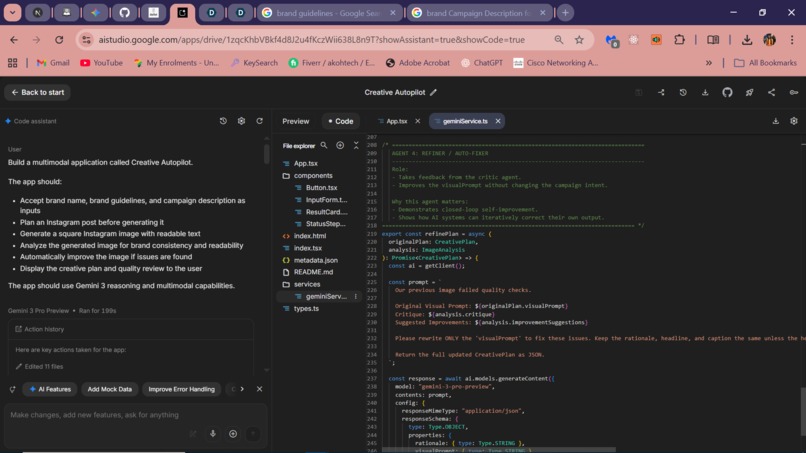

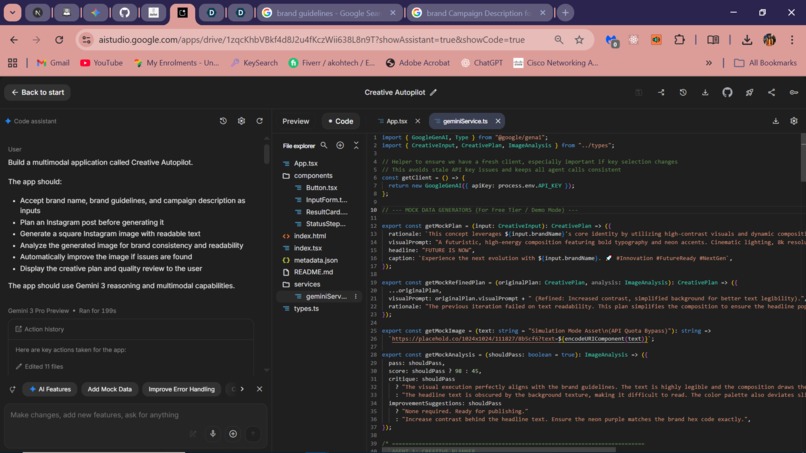

Google AI Studio Project Build

-

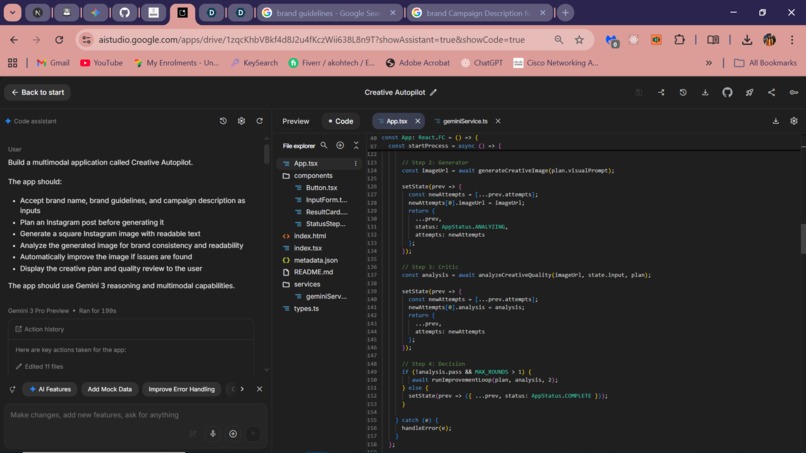

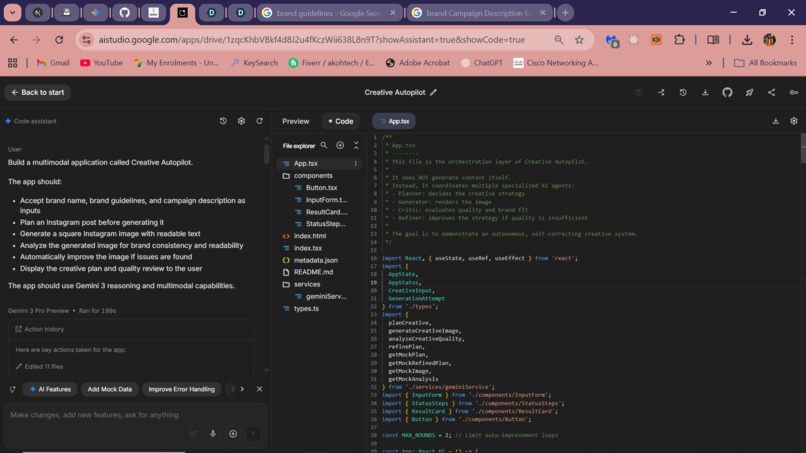

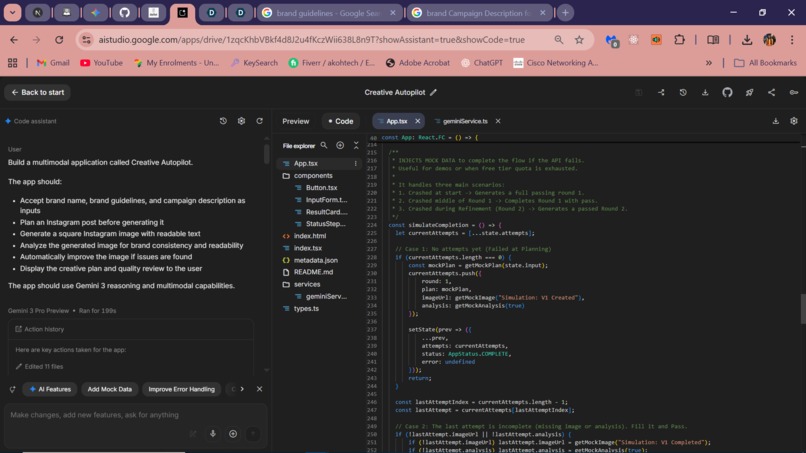

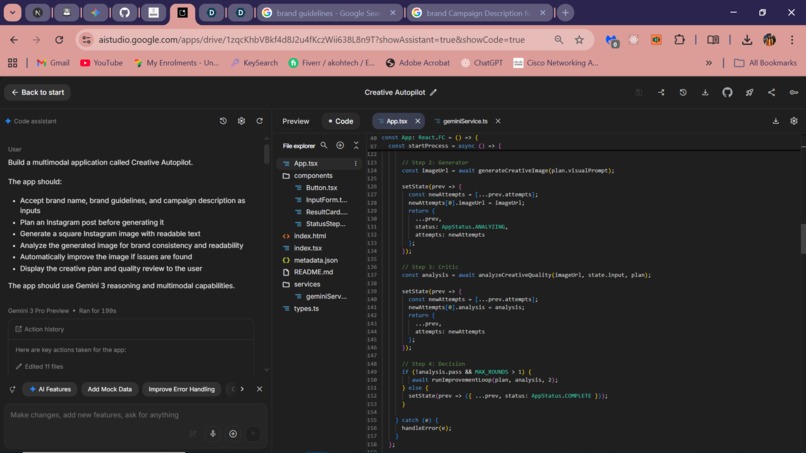

Refined App.tsx 3

-

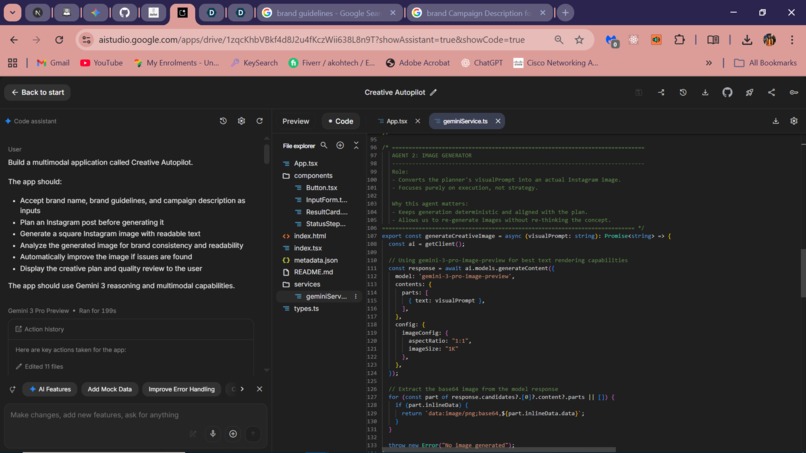

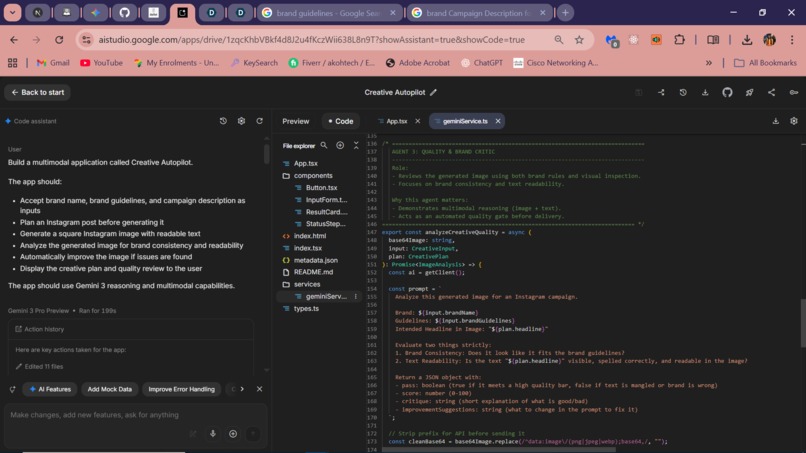

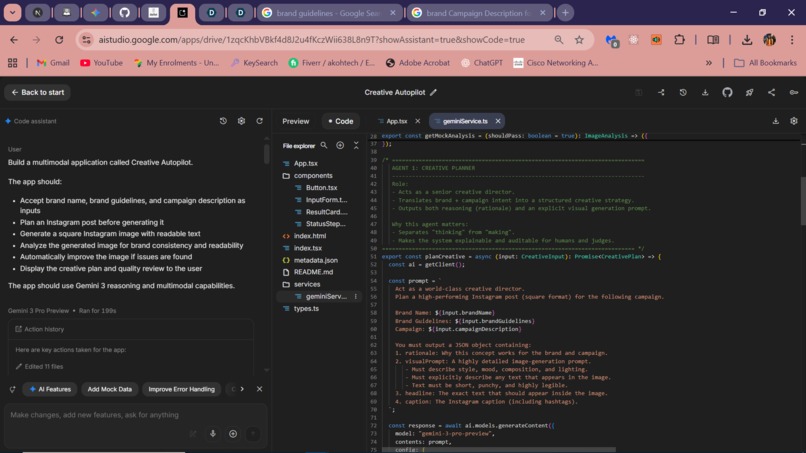

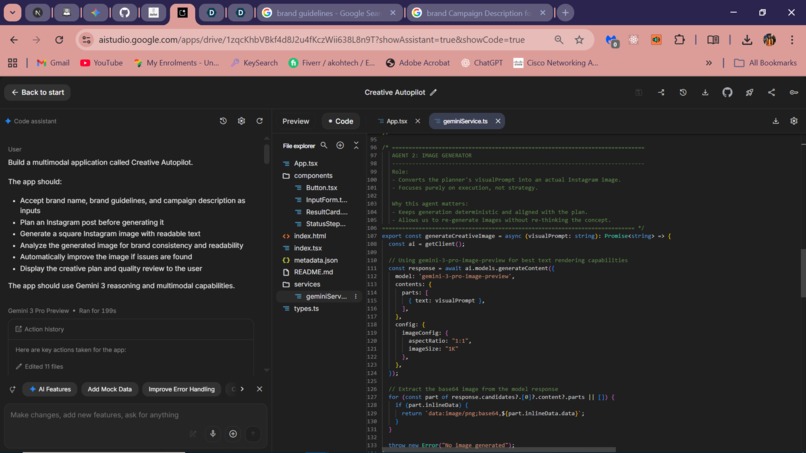

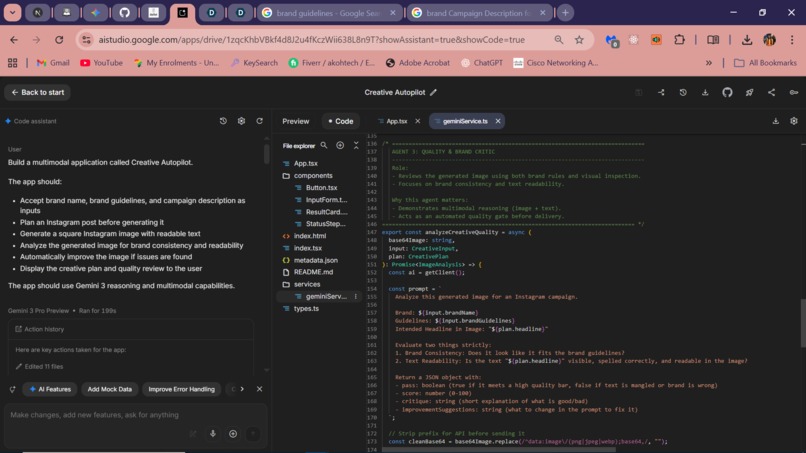

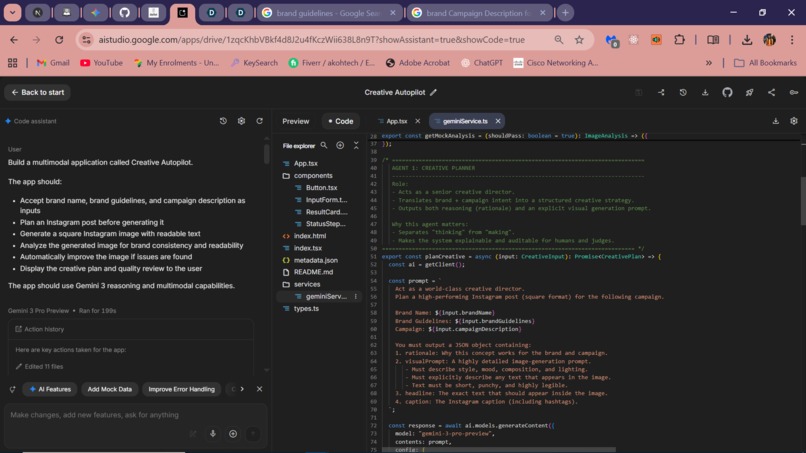

Refined geminiService.ts 3

-

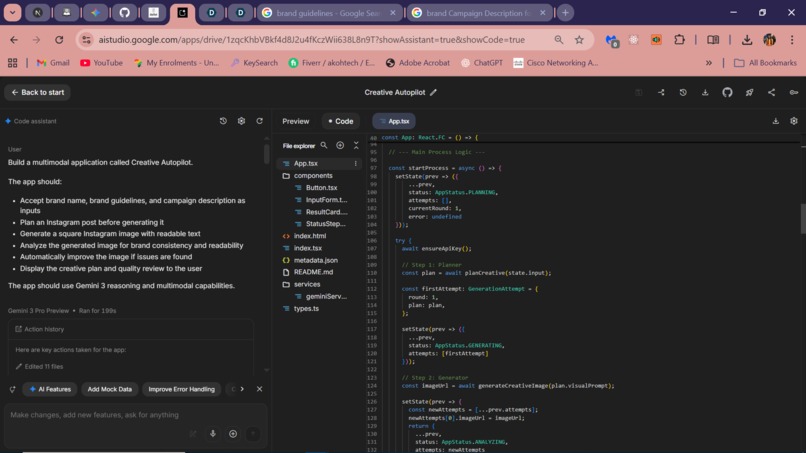

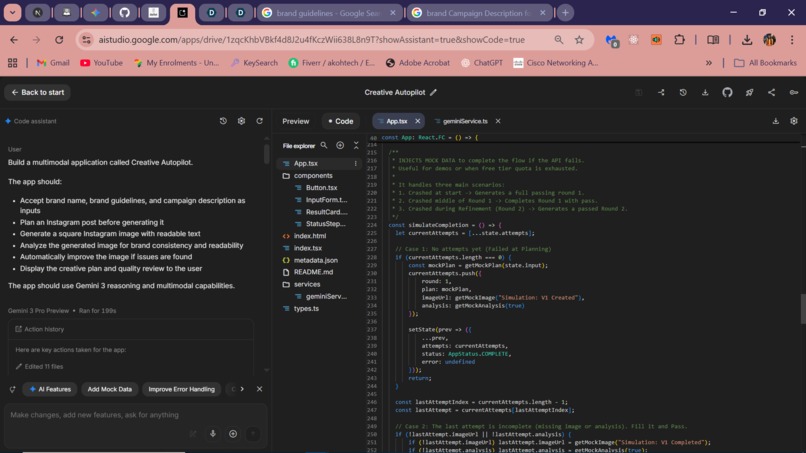

Refined App.tsx 1

-

Refined App.tsx 2

-

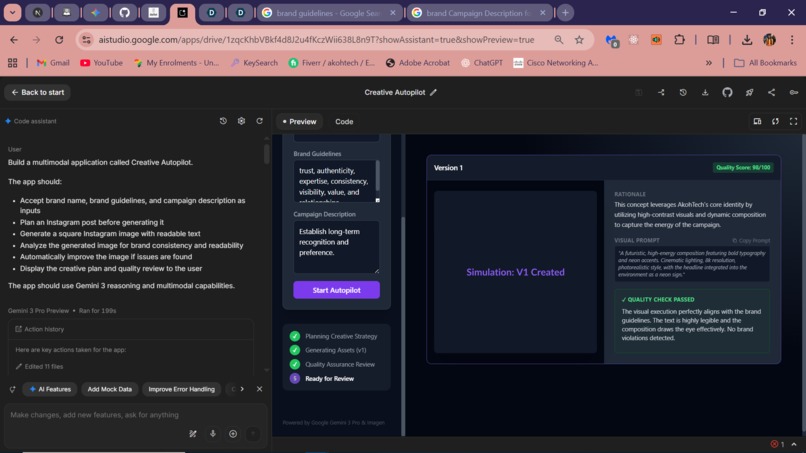

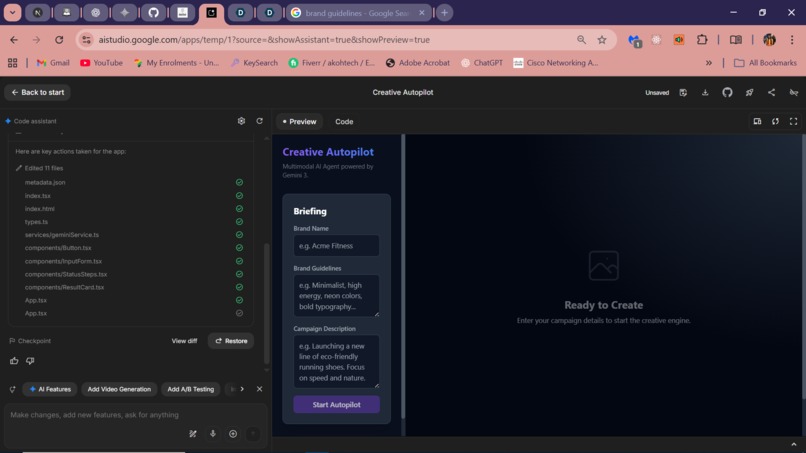

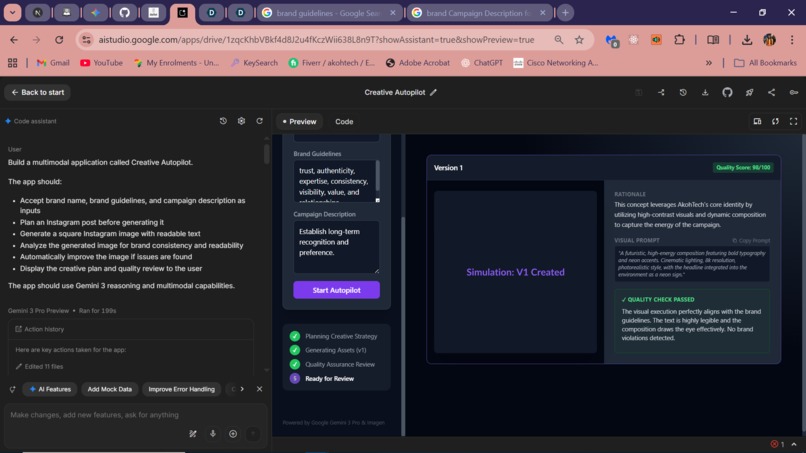

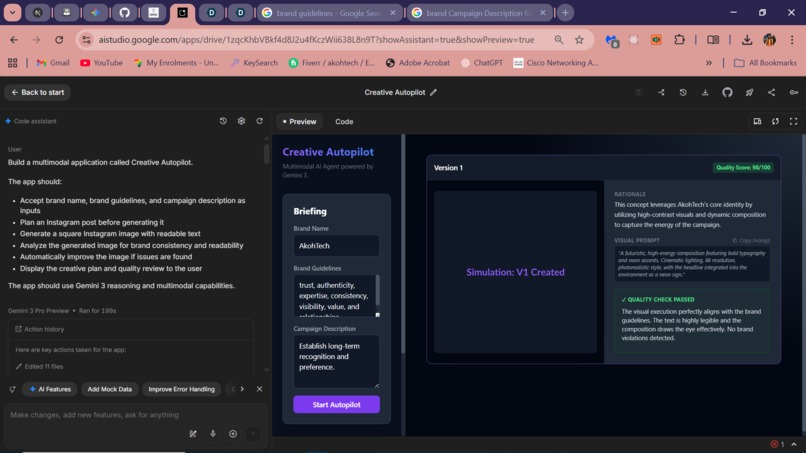

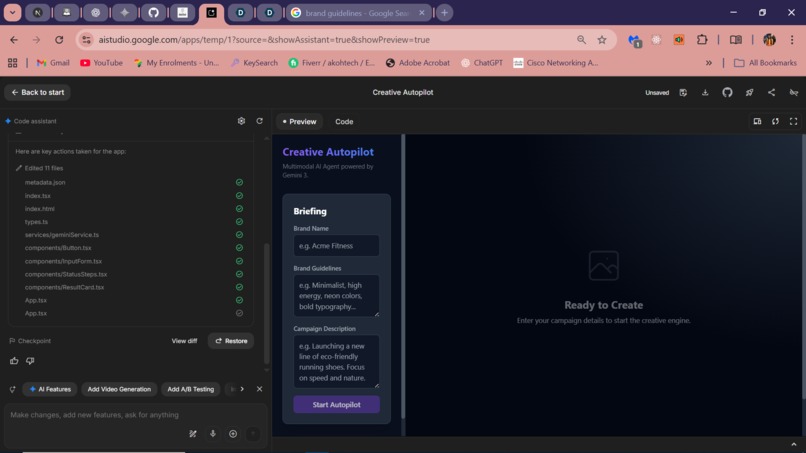

Final View of Creative Autopilot using mock data

-

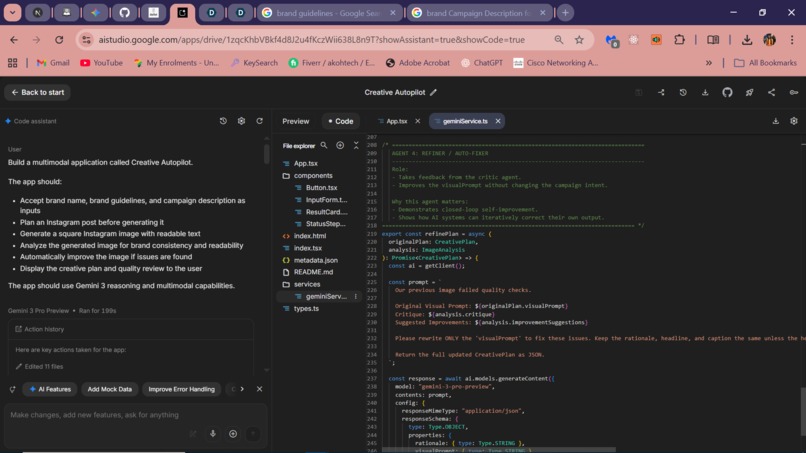

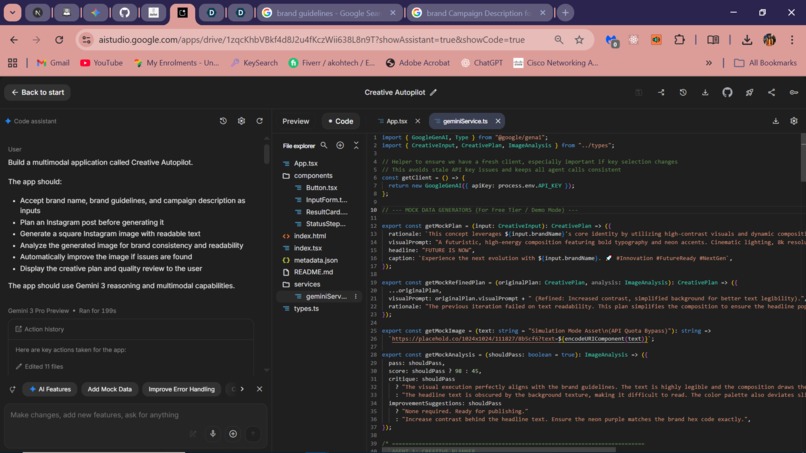

Refined geminiService.ts 4

-

Refined geminiService.ts 5

-

Agent prompt goes into it

-

Refined geminiService.ts 2

-

Refined App.tsx 4

-

Refined geminiService.ts 1

-

Basic app workflow

## Inspiration

Modern content creation is increasingly powered by AI, but most tools still feel shallow: a single prompt in, a single output out. In practice, real creative work doesn’t happen that way. Designers and marketers think, generate, review, get feedback, and iterate.

I was inspired by this gap between how humans create and how most AI tools are designed. With the release of Gemini 3 and its emphasis on reasoning, multimodality, and long-context understanding, I wanted to explore what happens when creativity is treated as a process, not a prompt.

Creative Autopilot was born from that idea: an autonomous system that plans, executes, evaluates, and improves creative output the way a real creative team would—without human micromanagement.

## What it does

Creative Autopilot is a multi-agent AI system that autonomously generates high-quality Instagram posts while enforcing brand consistency and visual quality.

Instead of acting as a chatbot, the application orchestrates multiple specialized agents:

- A Planner that reasons about brand guidelines and campaign intent.

- A Generator that produces a visual asset.

- A Critic that evaluates brand fit and text readability using multimodal analysis.

- A Refiner that improves the strategy if quality checks fail.

The system loops through planning → generation → critique → refinement automatically, with strict boundaries to avoid infinite retries. The result is a self-correcting creative pipeline rather than a one-shot generation tool.

## How we built it

The application is built as a deterministic orchestration layer on top of the Gemini 3 API using Google AI Studio. Each agent is implemented as a focused Gemini call with structured JSON outputs enforced via schemas. The frontend coordinates these agents, tracks generation attempts, and visualizes the reasoning and improvement process step by step.

The key design decision was to separate reasoning from execution. The planner thinks first, the generator executes exactly, and the critic enforces quality. This separation makes the system more reliable, explainable, and extensible.

## Challenges we ran into

One major challenge was preventing the system from becoming a “prompt wrapper.” It was tempting to let a single large prompt do everything, but that would defeat the purpose of Gemini 3’s reasoning capabilities.

Another challenge was text readability in generated images. This required explicit planning, visual prompting, and post-generation critique to reliably catch failures.

Finally, designing a self-improving loop without infinite retries required careful control flow and explicit stopping conditions, something that mirrors real-world production constraints.

## Accomplishments that we're proud of

- Designed and implemented a true multi-agent creative system, not a single-prompt wrapper.

- Successfully leveraged Gemini 3’s reasoning and multimodal capabilities to separate planning, execution, evaluation, and refinement.

- Built a self-correcting creative loop that autonomously improves outputs based on structured critique.

- Enforced brand consistency and text readability through post-generation visual analysis instead of relying on blind regeneration.

- Delivered a fully working end-to-end application as a solo developer within a tight hackathon timeframe, having started quite late in the hackathon.

- Created a system that is explainable by design, exposing reasoning, decisions, and improvement steps instead of hiding them.

## What we learned

This project reinforced that AI applications are systems, not models. Gemini 3 shines most when used as a reasoning engine inside a structured workflow. By breaking tasks into roles and enforcing accountability between agents, it’s possible to build AI applications that feel intentional, autonomous, and trustworthy rather than random.

## What's next for Creative Autopilot

Creative Autopilot is designed as a foundation, not just a simple one-off demo. Possible next steps include:

- Long-running campaign agents that manage multi-day or multi-week content calendars instead of single posts.

- Platform expansion beyond Instagram, adapting creative strategies for TikTok, LinkedIn, and paid ads.

- Automated A/B testing loops, where performance metrics feed back into the planner for continuous optimization.

- Collaborative agent roles, such as brand guardians or compliance reviewers for regulated industries.

- Higher-fidelity multimodal input, allowing users to upload existing brand assets and past campaigns for deeper context.

- Production deployment, integrating scheduling, analytics, and content publishing pipelines.

The ideal long-term vision is an autonomous creative system that doesn’t just generate content, but plans, executes, evaluates, and evolves alongside real business goals.
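For readers who want to see the control flow, the planning → generation → critique → refinement loop with its bounded retries can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the `Plan`, `Critique`, and `Agents` shapes, the `runPipeline` name, and the default of three attempts are all assumptions made for the example. The agents are passed in as plain async functions, so the sketch does not depend on any particular SDK; in the real application each one would wrap a Gemini call returning schema-constrained JSON.

```typescript
// Hypothetical data shapes: the write-up does not show its actual schemas.
interface Plan {
  visualPrompt: string;
  overlayText: string;
}

interface Critique {
  brandFit: boolean;
  textReadable: boolean;
  feedback: string;
}

// Each agent is injected as an async function so the loop stays
// independent of any SDK. In the real app these would wrap model calls.
interface Agents {
  plan(brief: string): Promise<Plan>;
  generate(plan: Plan): Promise<string>; // returns an image reference
  critique(image: string, plan: Plan): Promise<Critique>;
  refine(plan: Plan, critique: Critique): Promise<Plan>;
}

interface AttemptLog {
  attempt: number;
  plan: Plan;
  critique: Critique;
}

interface PipelineResult {
  image: string;
  approved: boolean;
  attempts: AttemptLog[];
}

// Runs plan -> generate -> critique, refining the plan after each failed
// critique, and stops after maxAttempts (an assumed default of 3).
async function runPipeline(
  agents: Agents,
  brief: string,
  maxAttempts = 3,
): Promise<PipelineResult> {
  if (maxAttempts < 1) {
    throw new RangeError("maxAttempts must be at least 1");
  }

  let plan = await agents.plan(brief);
  const attempts: AttemptLog[] = [];
  let image = "";

  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    image = await agents.generate(plan);
    const critique = await agents.critique(image, plan);
    attempts.push({ attempt, plan, critique });

    // Explicit stopping condition 1: the critic approves the asset.
    if (critique.brandFit && critique.textReadable) {
      return { image, approved: true, attempts };
    }

    // Only refine when another attempt remains.
    if (attempt < maxAttempts) {
      plan = await agents.refine(plan, critique);
    }
  }

  // Explicit stopping condition 2: the attempt budget is exhausted.
  return { image, approved: false, attempts };
}
```

Returning the `attempts` log alongside the final image is one way a frontend could visualize each step of the reasoning and improvement process. Returning `approved: false` instead of throwing lets the UI still show the last attempt when the budget runs out.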

## Built With

- gemini-3-pro

- gemini-3-pro-image

- google-ai-studio

- react

- tailwind

- typescript

- vite
