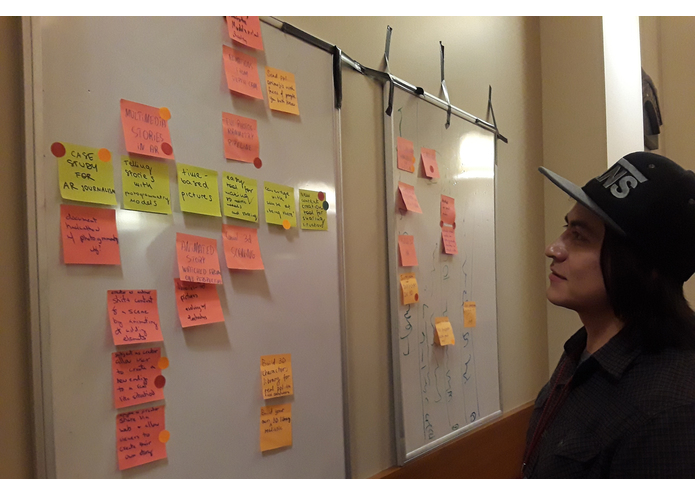

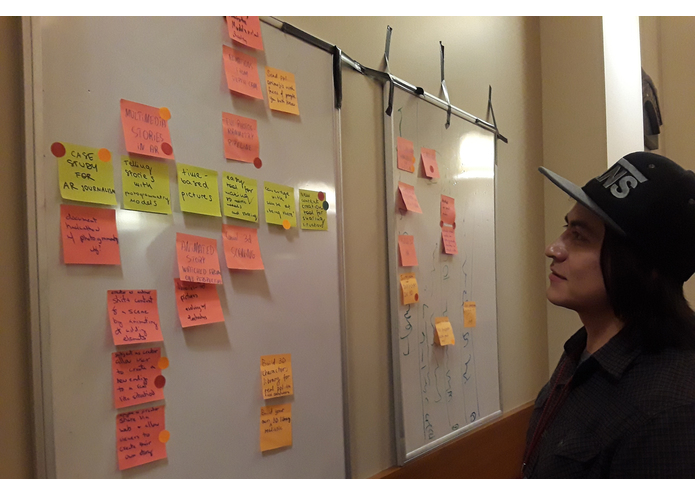

Collaborative product ideation: sticky-note brainstorm and dot voting.

The "winning" idea is not fleshing out... Back to the drawing board--heavy contemplation.

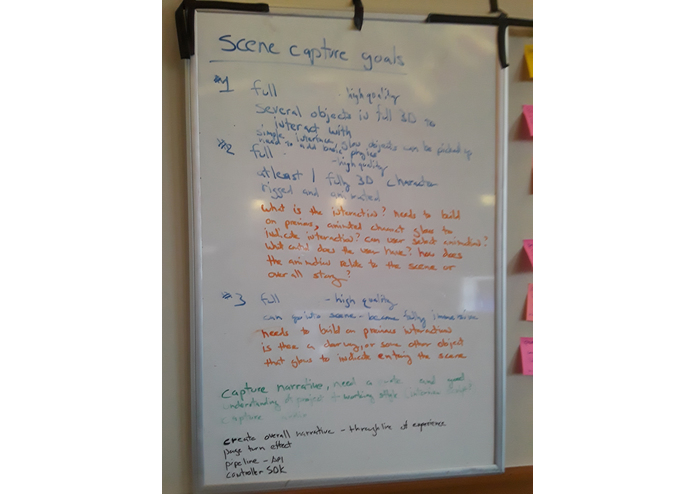

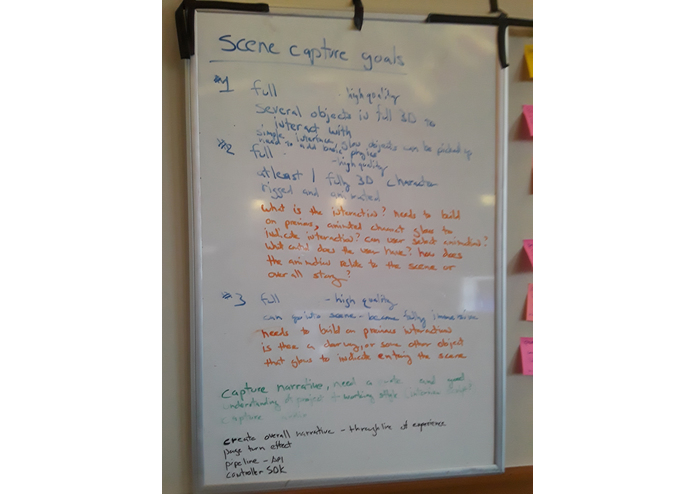

Everyone is on board, in agreement, and in action.

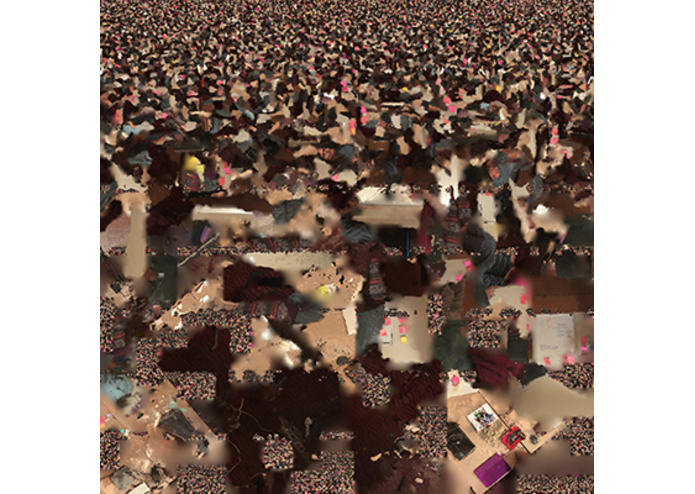

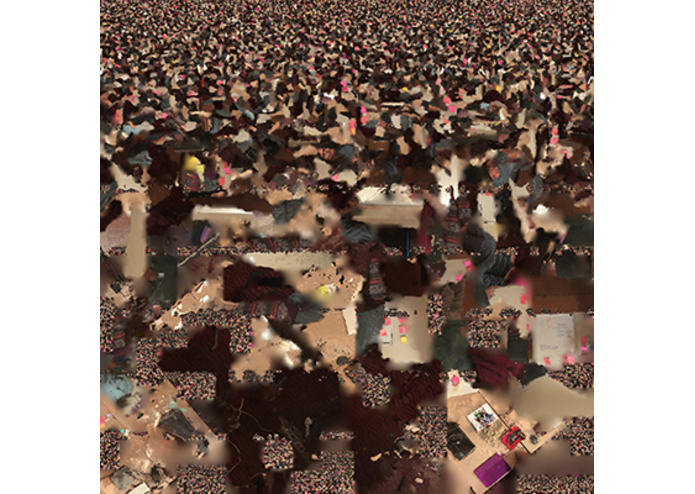

Sample photogrammetry texture map.

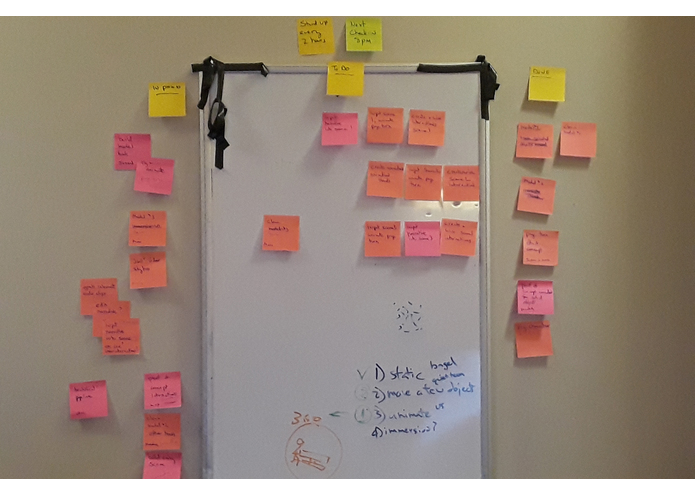

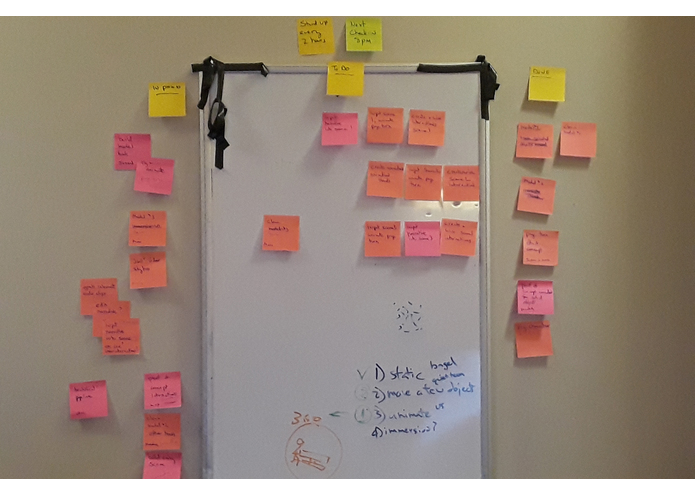

Our scrum board in full swing--stand-up every 4 hours.

Awards ceremony with Steven Max Patterson, left to right: Samantha, Susan, Steven, Adam, Maria, Max.

Inspiration

Photogrammetry, a technology for creating 3-dimensional models from 2-dimensional images, increases the sense of presence and emotional connection to a memory. We wanted to explore the limits of current immersive technology, using photogrammetric models to maximize this sense of presence. To make this technology available to the widest possible audience, we also strove to create a simple-to-use process built on mobile phone technology.

What it does

We created an open source photogrammetry pipeline: you capture images of a still object, character, or scene with your cell phone, upload them to a self-hosted server, and get back a photogrammetric model.

We also created a VR-simulated AR experience in which you can visit the three scenes we documented during the Hackathon and explore what's possible in this photogrammetry-powered future. In the last scene, we rigged one of the models for animation and provided a vehicle for entering the scene, for a totally immersive experience.

How we built it

We are the reAnimators, a team of 5 creatives. Together we took a multidimensional journey from journalism to UX design to coding, animation, and game design.

We used our smartphones to capture photogrammetry scenes and objects: taking videos, separating the frames, and then assembling them into 3D assets with Agisoft PhotoScan (Trial). Then we cleaned up the models and created the page-turning animation with Blender. Mixamo was used for auto-rigging and character animation. That’s how we were able to turn photorealistic 3D content into the photogrammetry album XR experience.
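The "separating the frames" step can be sketched roughly as follows. This is a minimal illustration, not the script we used during the Hackathon: it assumes the `ffmpeg` command-line tool is installed and on the PATH, and the function names, the `frame_%05d.jpg` naming pattern, and the default sampling rate of 2 frames per second are all hypothetical choices.

```python
import subprocess
from pathlib import Path


def build_ffmpeg_command(video_path, output_dir, frames_per_second=2):
    """Return the ffmpeg argument list that samples still frames from a video.

    The sampling rate and file naming pattern are illustrative assumptions.
    """
    output_pattern = str(Path(output_dir) / "frame_%05d.jpg")
    return [
        "ffmpeg",
        "-i", str(video_path),               # input video from the phone
        "-vf", f"fps={frames_per_second}",   # keep N frames per second of video
        "-qscale:v", "2",                    # high JPEG quality for feature matching
        output_pattern,
    ]


def extract_frames(video_path, output_dir, frames_per_second=2):
    """Run ffmpeg and return the sorted list of extracted frame files."""
    Path(output_dir).mkdir(parents=True, exist_ok=True)
    command = build_ffmpeg_command(video_path, output_dir, frames_per_second)
    subprocess.run(command, check=True)  # raises if ffmpeg exits with an error
    return sorted(Path(output_dir).glob("frame_*.jpg"))
```

Calling `extract_frames("clip.mp4", "frames")` would write the sampled stills into `frames/`, ready to be loaded into a photogrammetry tool. Sampling fewer frames per second keeps the image set small, which matters given how slow reconstruction can be.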

We used Unity to render the photogrammetry models and create the VR environment on HTC Vive, SteamVR for the headset connection and VRTK to streamline basic model interactions. Music: www.bensound.com

For the prototype photogrammetry pipeline, we used COLMAP, OpenMVS, OpenMVG, Swift, the FFmpeg library, C#, Python, and Agisoft PhotoScan.
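As a rough idea of what the server side of such a pipeline might look like, here is a sketch of a sparse reconstruction driven through the COLMAP command-line interface. It is an assumption about one possible arrangement, not a description of our prototype: it covers only COLMAP (not OpenMVG or OpenMVS), assumes the `colmap` executable is on the PATH, and the function names and the `database.db` and `sparse` workspace layout are hypothetical.

```python
import subprocess
from pathlib import Path


def build_colmap_commands(image_dir, workspace_dir):
    """Return the COLMAP CLI calls for a basic sparse reconstruction.

    The workspace layout (database.db, sparse/) is an illustrative assumption.
    """
    workspace = Path(workspace_dir)
    database = str(workspace / "database.db")
    sparse_dir = str(workspace / "sparse")
    images = str(image_dir)
    return [
        # 1. Detect and describe keypoints in every image.
        ["colmap", "feature_extractor",
         "--database_path", database, "--image_path", images],
        # 2. Match features between all image pairs.
        ["colmap", "exhaustive_matcher",
         "--database_path", database],
        # 3. Recover camera poses and a sparse point cloud.
        ["colmap", "mapper",
         "--database_path", database, "--image_path", images,
         "--output_path", sparse_dir],
    ]


def run_sparse_reconstruction(image_dir, workspace_dir):
    """Run the three steps in order and return the sparse output folder."""
    sparse = Path(workspace_dir) / "sparse"
    sparse.mkdir(parents=True, exist_ok=True)
    for command in build_colmap_commands(image_dir, workspace_dir):
        subprocess.run(command, check=True)  # stop at the first failing step
    return sparse
```

Exhaustive matching compares every image pair, so it is only practical for the small image sets a short phone clip produces. Dense reconstruction and meshing would follow as separate steps.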

Challenges we ran into

- Vive headset not working and requiring a special adapter--thanks to Jad Boniface.

- Capturing multiple figures across a wide range of lighting environments was difficult.

- Learning and using Blender in one day.

- Difficulty exporting Blender mesh animation with a lattice to Unity.

- Mixamo auto-rigging tool is very picky about the models.

- Learning and using VRTK in one day.

- Processing and rendering large mesh objects is computationally heavy and time-consuming.

- Unity doesn't have good team collaboration solutions, so only 1 person could work in Unity at one time.

Accomplishments that we're proud of

- We kept an open and honest collaborative environment with regular stand-ups, sync-ups, and feature demos.

- We took many risks during the Hackathon: taking a long time to brainstorm so that everyone was heard and we had a solid concept, working with bleeding-edge technology and early-version software, and working with tools and workflows we were not familiar with. We still got a satisfying result in the end.

- Tested the process of creating a fully rigged and animated character out of just a smartphone video clip.

- Created a new VR UX pattern for scene transition--leaning into a scene.

- Dancing on the table.

What we learned

- Sleeping is optional. For a great idea, people are willing to stay late, change schedules, etc.

- Creating a low-def proof of concept as early as possible really speeds up the process and lowers the pressure level.

- For a Hackathon, quick manual solutions sometimes are better than fully developed toolkits/libraries.

- No one person can do it all, no matter how talented or experienced. Ongoing teamwork, collaboration, and communication are key to making the most of everyone's talent.

What's next for Memories

- Happy hour!

- More explorations of mobile, open source solutions for photogrammetry.

- Cleaning up some of the roughness and creating a more polished finish.
