Inspiration

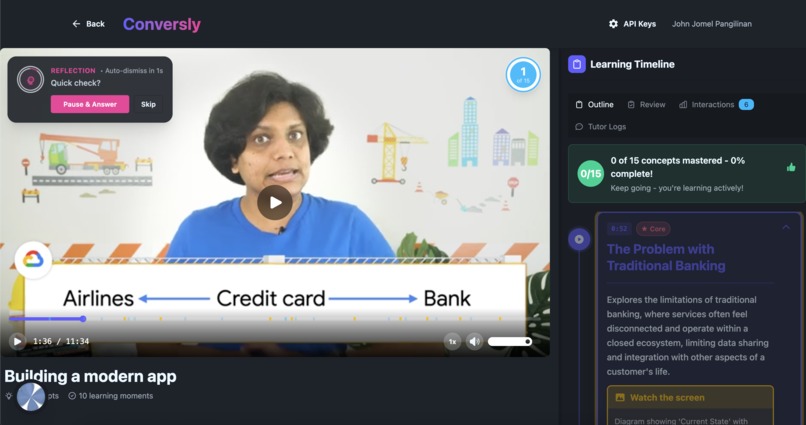

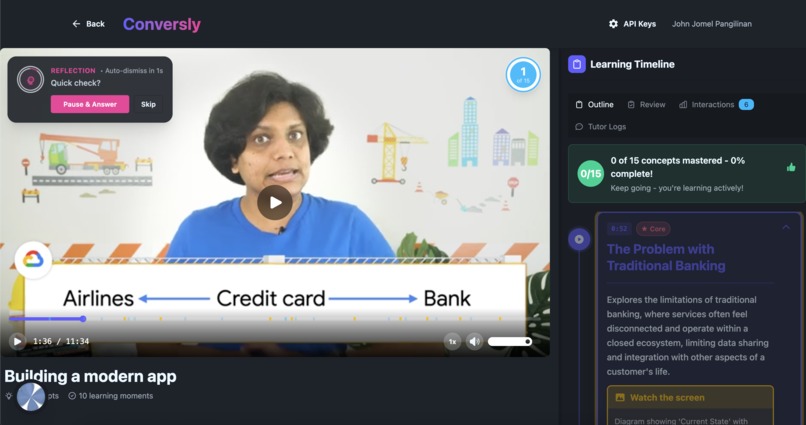

Online learning has a retention crisis. Students watch hours of video content, click through quizzes, and walk away remembering less than 10%. The problem isn't the content—it's the format. Passive consumption doesn't create understanding. We envisioned a world where every learner has an AI tutor who understands the exact video they're watching, responds in natural conversation, and can measure real comprehension through dialogue—not multiple choice. Conversly transforms video learning from a broadcast medium into an interactive conversation.

What it does

Conversly is an AI-powered learning platform that transforms any educational video into an interactive, voice-first learning experience with a context-aware AI tutor.

Core Features:

- Context-Aware Voice Tutor: Ask questions about what's happening on screen using natural voice. The AI tutor has watched the entire video and understands every concept, timestamp, and visual element.

- Intelligent Checkpoints: AI-generated conversation points pause the video at critical moments to verify understanding through natural dialogue—not quizzes.

- Adaptive Explanations: The tutor adjusts its language and depth based on your responses, providing visual references, replaying sections, and offering multiple explanations until you grasp the concept.

- Visual Timeline: An interactive timeline shows all key concepts with timestamps, allowing learners to navigate by topic rather than scrubbing blindly.

- Progress Analytics: Track comprehension patterns, identify struggle points, and see personalized recommendations for review.

- Hands-Free Learning: Fully voice-controlled experience—pause, replay, seek, and ask questions without touching a keyboard.

For Creators:

- Upload any video and get instant AI analysis

- Automatic concept extraction with timestamps

- Learning Engagement Rate Analysis: AI evaluates your video's effectiveness across engagement, accessibility, pedagogical quality, and active/passive balance

- AI Refinement Engine: Get intelligent suggestions to improve concepts, checkpoints, and content based on engagement metrics

- Zero manual tagging or quiz creation

- Real-time analytics on where students struggle

- Deploy courses with a built-in AI tutor in minutes

How we built it

Architecture:

- Frontend: React 19 + TypeScript with real-time voice interface using ElevenLabs React SDK, Material-UI components, and Three.js for visual effects. Deployed on Firebase Hosting.

- Backend: Express server with TypeScript, handling video uploads, AI orchestration, and real-time progress tracking. Deployed on Google Cloud Run.

- AI Pipeline: Gemini 2.0 Flash Experimental for multimodal video analysis (visual + audio), extracting transcripts, identifying on-screen code/diagrams, and generating contextual checkpoints.

- Database: Cloud Firestore for video metadata, user progress, conversation history, and checkpoint responses with real-time sync.

- Storage: Google Cloud Storage for secure video hosting with signed URLs and CDN delivery.

- Voice AI: ElevenLabs Conversational AI with custom client-side tools enabling the agent to control video playback, access video context, and provide timestamp-specific explanations.

Key Technical Innovations:

- Multimodal Context System: Videos are uploaded to Cloud Storage, then processed by Gemini's File API to analyze both visual elements (code, diagrams, slides) and audio content simultaneously.

- Client-Side Tool Architecture: Built custom ElevenLabs tools (`getContext`, `seekToTime`, `pauseVideo`, etc.) that give the voice agent direct control over the learning experience.

- Progressive Processing Pipeline: Real-time status updates (uploading → processing → analyzing) keep users informed during the 30-90 second AI analysis phase.

- Struggle Detection Algorithm: Analyzes conversation patterns, checkpoint failures, and replay frequency to identify concepts requiring intervention.

- Learning Engagement Rate System: Multi-dimensional AI analysis scoring videos on engagement (0-100), accessibility barriers, pedagogical quality, and active/passive balance with timestamped attention retention graphs.

- Self-Refining Content Engine: AI analyzes existing content structure and suggests improvements—adding missing concepts, repositioning checkpoints, improving prompt clarity—all grounded in engagement metrics and learning science.
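
To make the client-side tool idea concrete, here is a minimal sketch of what tools like `getContext`, `seekToTime`, and `pauseVideo` could look like. Only those three tool names come from the project; the `Concept` fields, the `PlayerLike` interface, the `createVideoTools` factory, and the string return values are assumptions made for illustration. The sketch deliberately stops short of calling the ElevenLabs SDK, so how the tool map is registered with the agent is left open.

```typescript
// Hypothetical shape of one analyzed concept; field names are illustrative,
// not the project's actual schema.
interface Concept {
  title: string;
  startSec: number;
  endSec: number;
  summary: string;
  visualElements: string[]; // descriptions of on-screen code, diagrams, slides
}

// Minimal player surface the tools need (assumed interface).
interface PlayerLike {
  currentTime: number;
  readonly duration: number;
  pause(): void;
}

// Each tool receives the agent's parameters and returns a string result.
type ClientTool = (params: Record<string, unknown>) => string;

interface VideoTools {
  getContext: ClientTool;
  seekToTime: ClientTool;
  pauseVideo: ClientTool;
}

function createVideoTools(player: PlayerLike, concepts: Concept[]): VideoTools {
  return {
    // Return the concept covering the current playhead, so the agent can
    // answer from analyzed video content rather than guessing.
    getContext: () => {
      const t = player.currentTime;
      const hit = concepts.find((c) => t >= c.startSec && t < c.endSec);
      return JSON.stringify(hit ?? { note: "no analyzed concept at this timestamp" });
    },
    // Move the playhead, clamped to the bounds of the video.
    seekToTime: (params) => {
      const requested = Number(params.seconds);
      if (!Number.isFinite(requested)) return "error: 'seconds' must be a number";
      const clamped = Math.min(Math.max(requested, 0), player.duration);
      player.currentTime = clamped;
      return `seeked to ${clamped}s`;
    },
    // Pause playback, for example while the tutor explains something.
    pauseVideo: () => {
      player.pause();
      return "paused";
    },
  };
}
```

Keeping the tools behind a small player interface means they can be exercised with a fake player in tests, and the same map can later be handed to whichever voice SDK drives the conversation.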

Tech Stack:

- Google Cloud: Firestore, Cloud Storage, Cloud Run, Gemini 2.0 Pro/Flash, Vertex AI

- ElevenLabs: Conversational AI, Voice SDK

- Frontend: React, Vite, TypeScript, React Player, Zustand, Material-UI

- Backend: Node.js, Express, Firebase Admin SDK

Challenges we ran into

- Grounding AI Responses: Ensuring the voice tutor only references actual video content and doesn't hallucinate information. Solved with structured Gemini prompts that extract timestamped concepts and enforce citation requirements.

- Low-Latency Voice Interactions: Maintaining conversational flow while fetching video context and generating responses. Implemented client-side caching and predictive context loading.

- Conversational Assessment Design: Moving beyond "quiz questions" to measure genuine understanding. Developed multi-turn dialogue checkpoints that adapt based on student responses and allow for partial credit.

- Visual Element Recognition: Getting Gemini to reliably identify and describe on-screen code, diagrams, and slides. Refined prompts to extract `visualElements` fields with natural language descriptions.

- Real-Time Progress Tracking: Synchronizing video playback state, voice interactions, and Firestore updates without race conditions. Built a unidirectional data flow with optimistic updates.
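
The unidirectional flow with optimistic updates mentioned under Real-Time Progress Tracking can be illustrated with a small reducer. This is a sketch under stated assumptions, not the project's code: the `ProgressState` shape, the action names, and the use of increasing write ids are all hypothetical. The idea is that the UI shows a new position immediately, and a later acknowledgement or failure from the backend either confirms it or rolls it back.

```typescript
// Hypothetical state: what the UI shows, what the backend has acknowledged,
// and the writes still in flight (ids assumed to increase over time).
interface PendingWrite {
  id: number;
  positionSec: number;
}

interface ProgressState {
  positionSec: number;
  confirmedSec: number;
  pending: PendingWrite[];
}

type Action =
  | { type: "localUpdate"; id: number; positionSec: number }
  | { type: "writeConfirmed"; id: number }
  | { type: "writeFailed"; id: number };

function reduce(state: ProgressState, action: Action): ProgressState {
  switch (action.type) {
    case "localUpdate":
      // Optimistic step: show the new position before the write completes.
      return {
        ...state,
        positionSec: action.positionSec,
        pending: [...state.pending, { id: action.id, positionSec: action.positionSec }],
      };
    case "writeConfirmed": {
      const write = state.pending.find((p) => p.id === action.id);
      if (!write) return state; // stale ack for a superseded write: ignore
      // A confirmed write supersedes every older in-flight write.
      const pending = state.pending.filter((p) => p.id > action.id);
      return { ...state, confirmedSec: write.positionSec, pending };
    }
    case "writeFailed": {
      if (!state.pending.some((p) => p.id === action.id)) return state;
      const pending = state.pending.filter((p) => p.id !== action.id);
      // Fall back to the newest remaining write, else the confirmed value.
      const last = pending[pending.length - 1];
      return { ...state, positionSec: last ? last.positionSec : state.confirmedSec, pending };
    }
  }
}
```

Because every change passes through one pure function, late or out-of-order acknowledgements cannot overwrite newer state, which is one way to avoid the race conditions described above.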

Accomplishments that we're proud of

- Full Production Demo: Complete end-to-end platform deployed on Google Cloud with real user flows from upload to voice-based learning.

- AI-Powered Content Refinement: Creators get actionable suggestions to improve their educational content based on real engagement metrics and learning science principles.

- Learning Engagement Rate Scoring: Pioneered a comprehensive video effectiveness scoring system that measures engagement, accessibility, and pedagogical quality on 0-100 scales.

- True Conversational Learning: Not a chatbot with video—the AI tutor actively controls playback, references specific timestamps, and can replay sections during explanations.

- Zero Creator Friction: Upload a video, wait about a minute for analysis (typically 30-90 seconds), and you have a course with an AI tutor. No manual quiz creation or concept tagging required.

- Dual Market Product: Clear value propositions for both individual learners (instant tutoring) and enterprises (compliance training with comprehension verification).

- Seamless Multi-Product Integration: Gemini analyzes, ElevenLabs responds, Firebase stores, Cloud Storage serves—all working together in a cohesive learning experience.

- Open-Source Extensibility: Built a CLI tool system for ElevenLabs agent configuration, making the voice interface modular and customizable.

- Real Comprehension Analytics: Track not just completion rates, but understanding depth through conversation analysis and checkpoint performance.
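
As a rough illustration of how checkpoint performance, replay frequency, and conversation signals might be combined to flag concepts that need review, consider the sketch below. The writeup does not describe the actual scoring, so everything here is an assumption: the `ConceptSignals` fields, the weights, the saturation at three replays or questions, and the 0.5 threshold are invented for the example.

```typescript
// Hypothetical per-concept signals gathered during a learning session.
interface ConceptSignals {
  concept: string;
  checkpointAttempts: number;
  checkpointFailures: number;
  replays: number;
  clarifyingQuestions: number;
}

// Illustrative weights and threshold; not values from the project.
const WEIGHTS = { failureRate: 0.5, replays: 0.3, questions: 0.2 };
const STRUGGLE_THRESHOLD = 0.5;

// Combine the signals into a score in [0, 1]; higher means more struggle.
function struggleScore(s: ConceptSignals): number {
  const failureRate =
    s.checkpointAttempts > 0 ? s.checkpointFailures / s.checkpointAttempts : 0;
  // Replays and questions saturate at 3 so one signal cannot dominate.
  const replayLoad = Math.min(s.replays / 3, 1);
  const questionLoad = Math.min(s.clarifyingQuestions / 3, 1);
  return (
    WEIGHTS.failureRate * failureRate +
    WEIGHTS.replays * replayLoad +
    WEIGHTS.questions * questionLoad
  );
}

// Return the concepts at or above the threshold, most severe first.
function conceptsNeedingReview(signals: ConceptSignals[]): string[] {
  return signals
    .map((s) => ({ concept: s.concept, score: struggleScore(s) }))
    .filter((x) => x.score >= STRUGGLE_THRESHOLD)
    .sort((a, b) => b.score - a.score)
    .map((x) => x.concept);
}
```

A weighted score like this is easy to explain to creators and to tune against real session data; a production system could replace it with a learned model without changing the surrounding interface.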

What we learned

Voice changes learning behavior fundamentally. When students speak their questions instead of typing, they:

- Ask more "why" questions and fewer lookup questions

- Reveal misunderstandings faster (tone, hesitation, incorrect phrasing)

- Stay engaged longer—conversation prevents the "zone out" problem

- Retain information better through verbal articulation (the "generation effect")

Multimodal AI unlocks new pedagogical patterns. Gemini's ability to "watch" the video means the AI can reference visual elements ("that diagram at 2:34") and temporal relationships ("remember when we covered X earlier?")—something impossible with transcript-only systems.

The best EdTech feels invisible. Students don't think "I'm using AI"—they just feel like they have a knowledgeable tutor. The technology succeeds when it disappears into the learning experience.

What's next for Conversly

Near-Term (Q1 2026):

- Multi-language support (Gemini + ElevenLabs both support 40+ languages)

- Mobile apps (iOS/Android) for on-the-go learning

- Creator marketplace—let educators publish courses and monetize access

- Enhanced analytics dashboard with comprehension heatmaps and cohort comparisons

Mid-Term (Q2-Q3 2026):

- Enterprise compliance pilots with Fortune 500 companies

- Advanced comprehension analytics using Vertex AI for predictive intervention

- Live course mode—real-time voice tutoring during instructor-led sessions

- Integration with LMS platforms (Canvas, Moodle, Blackboard)

- Collaborative learning rooms where multiple students interact with one AI tutor

Long-Term Vision:

- Open platform for any creator to launch AI-tutored courses

- Adaptive learning paths that sequence content based on demonstrated understanding

- Peer learning features where the AI facilitates student-to-student explanation

- Accreditation partnerships to offer verified certificates

- Voice-first assessment standards for measuring soft skills (communication, reasoning, critical thinking)

Research Goals:

- Publish studies on voice-based learning retention vs. traditional video

- Develop comprehension measurement standards for conversational AI

- Partner with universities to validate pedagogical effectiveness

Built With

- elevenlabs

- gcp

- gemini

- react

- typescript

- vite
