-

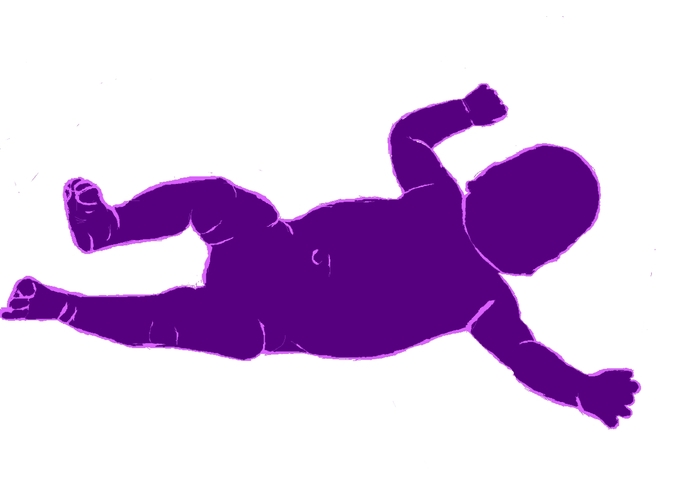

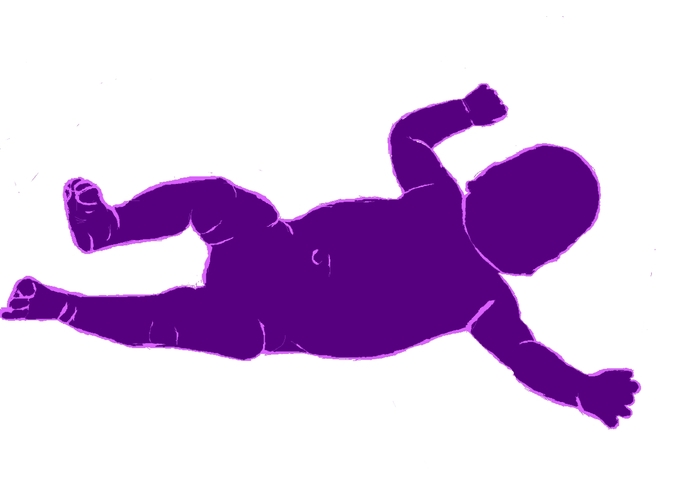

A body type for a small child

-

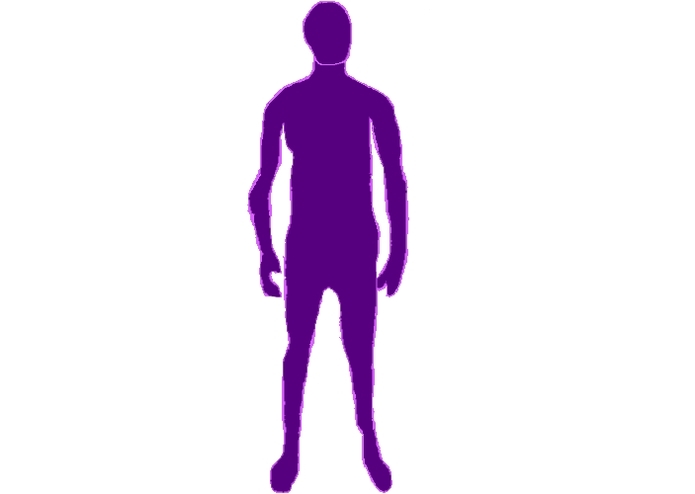

A body type for an adult

-

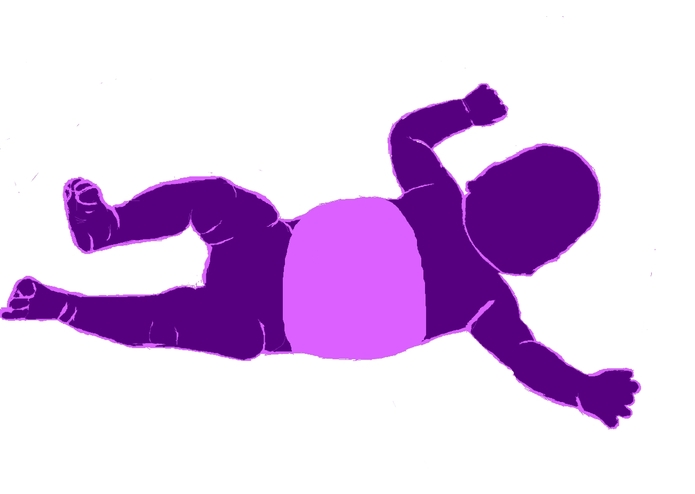

Small child body type with torso selected

-

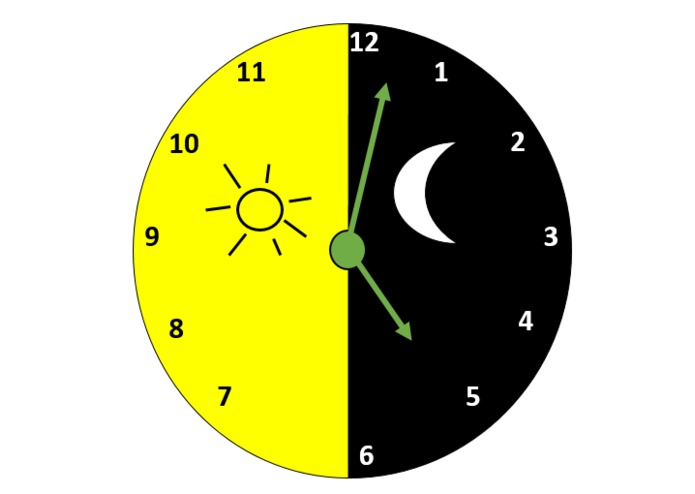

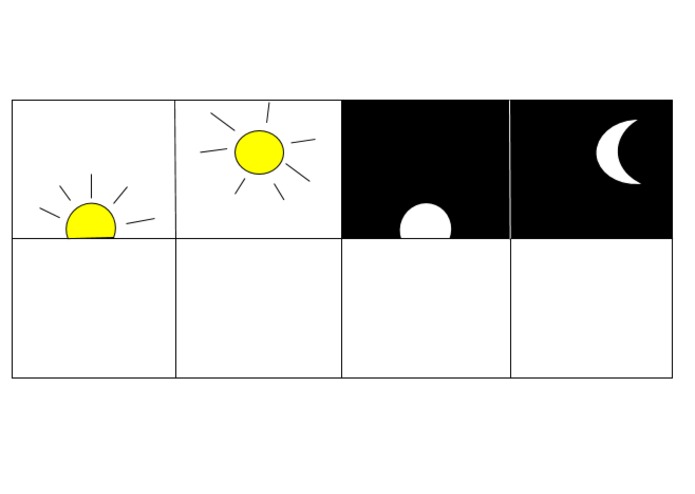

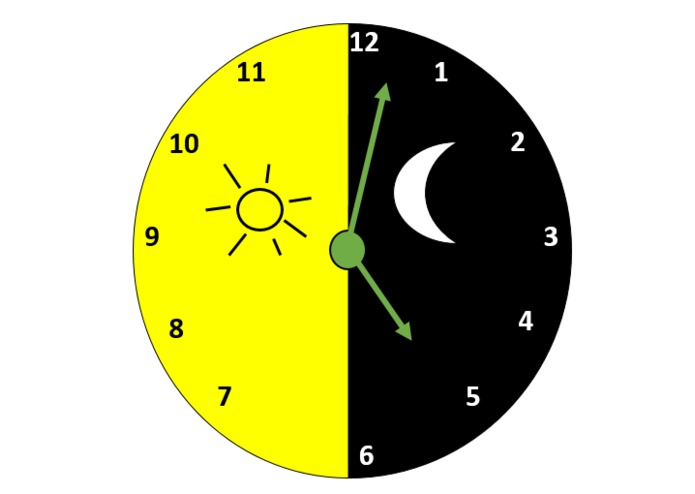

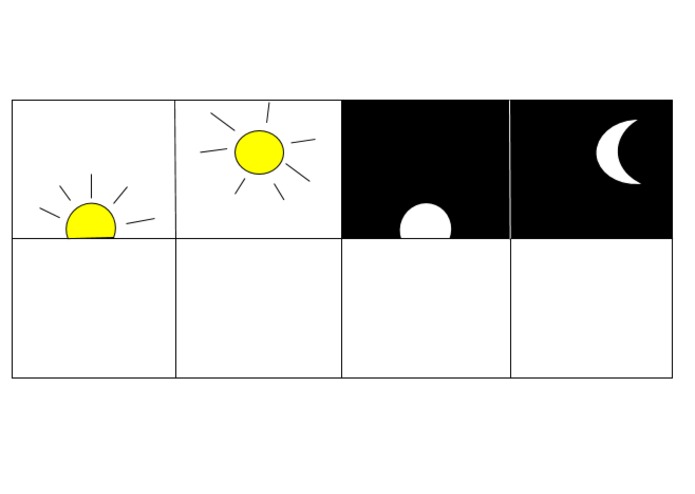

Day/night clock icon for representing how long or how often a symptom has been occurring

-

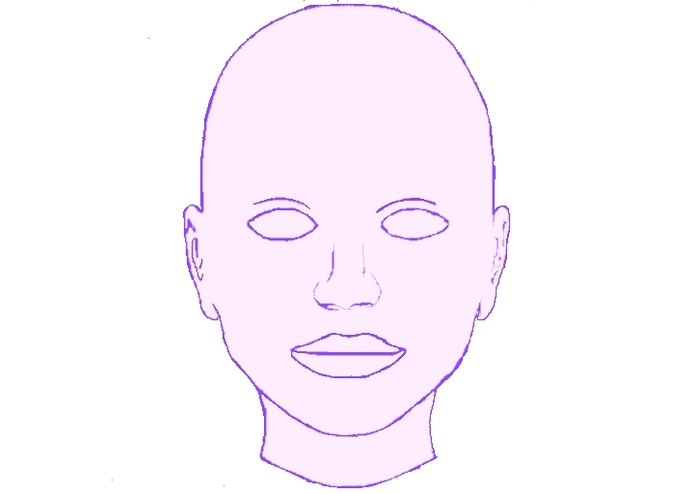

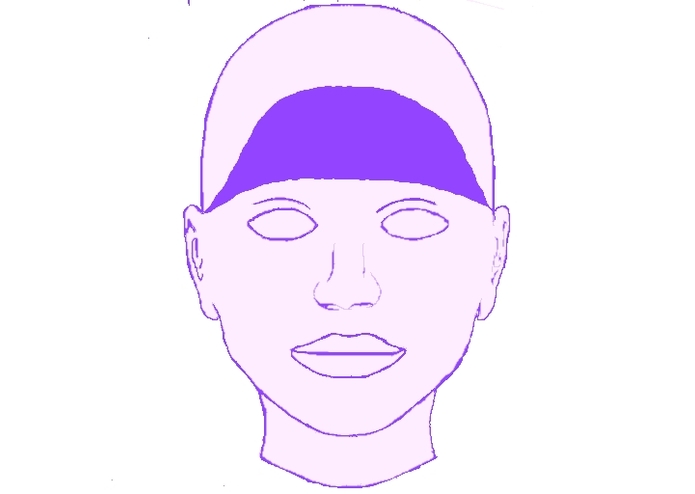

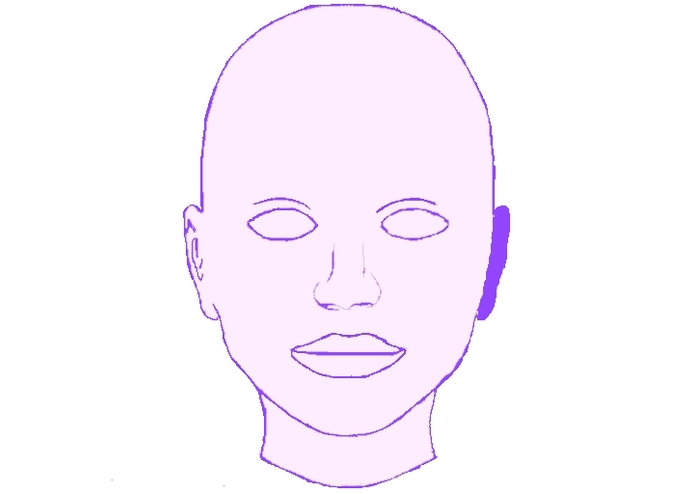

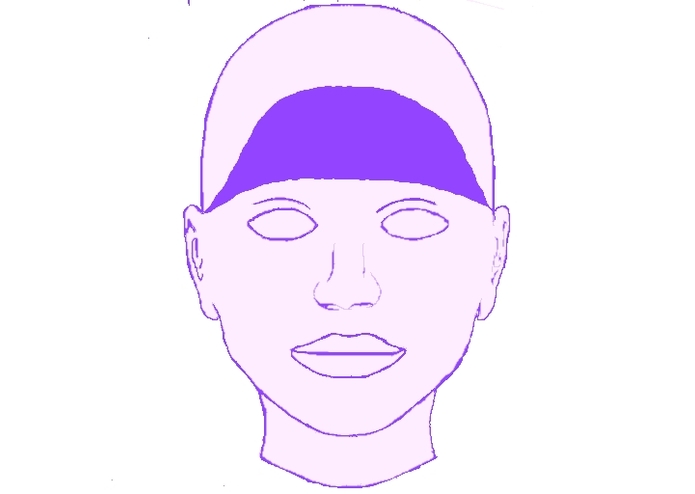

Up-close view of head

-

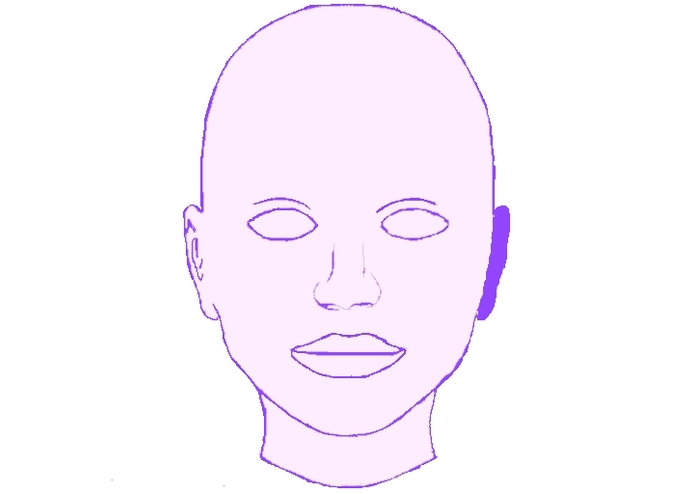

Head with ear selected

-

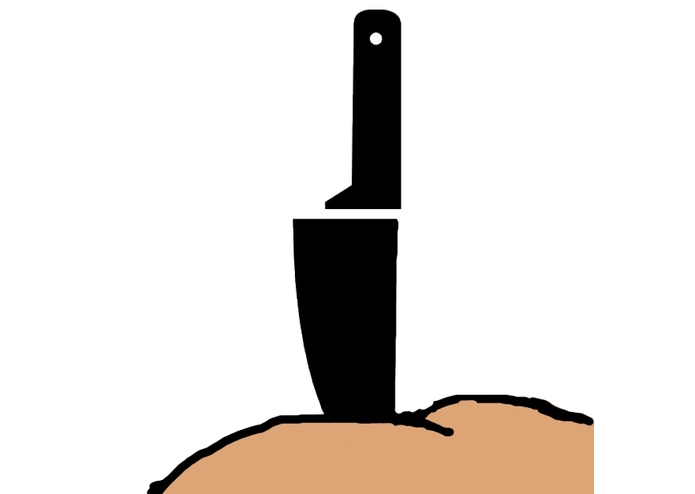

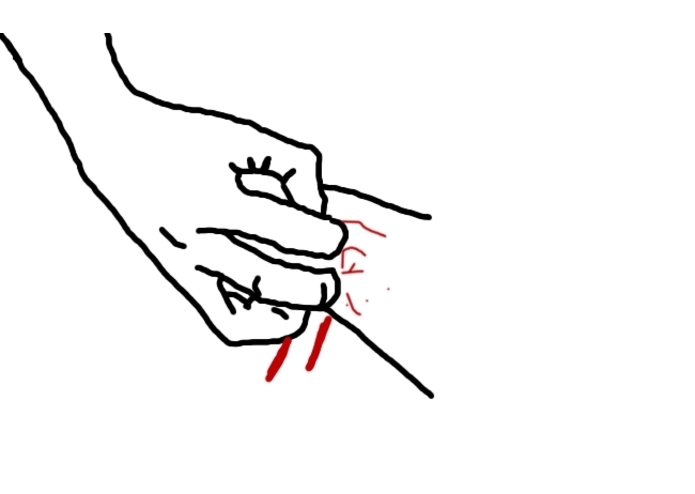

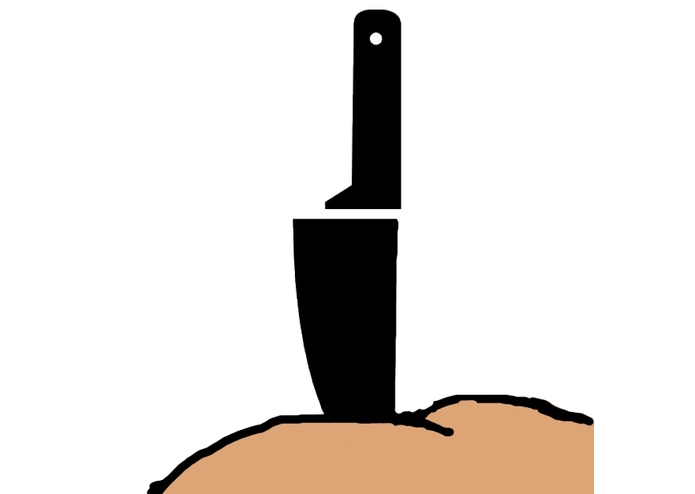

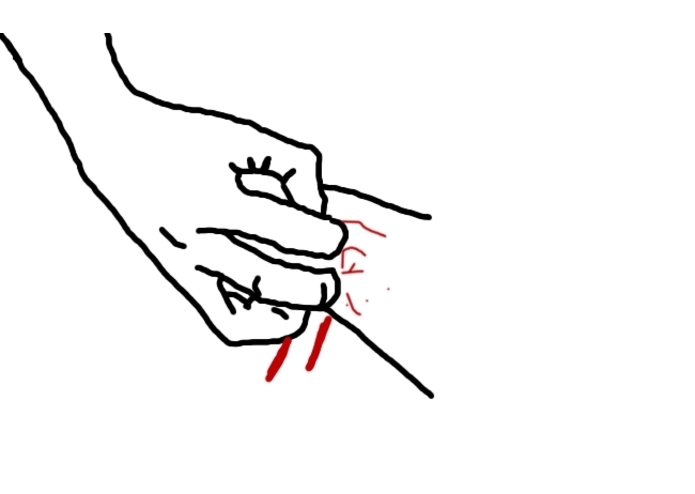

Representation of stabbing pain

-

General representation of pain

-

Some icons to help demonstrate how long a symptom has been occurring

-

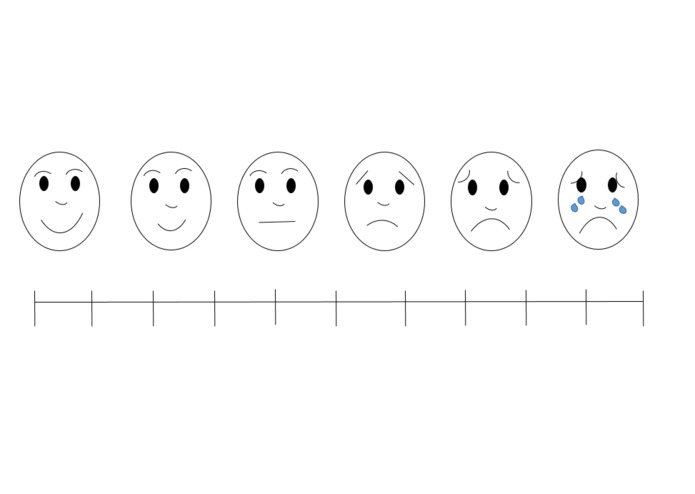

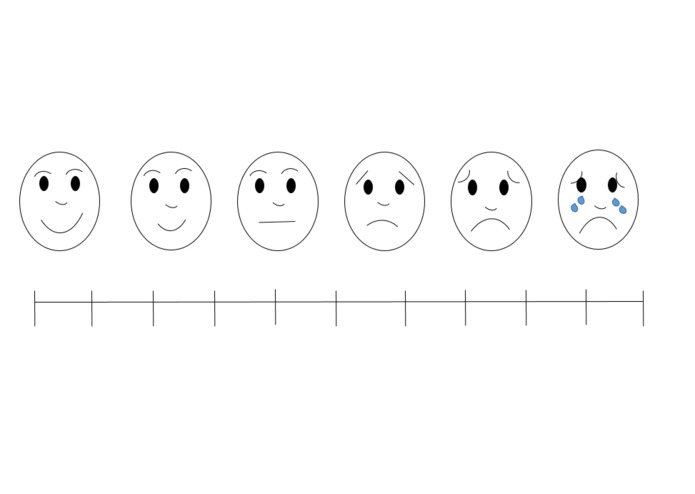

Our depiction of the Wong-Baker pain scale

-

Head with forehead highlighted

-

Our logo!

-

An icon representing itchiness

Inspiration

We observed that large hospitals and other organizations often have translation resources available for situations in which patients and doctors cannot easily communicate. Smaller organizations do not have those resources on hand, yet they may still face occasional language barriers. We wanted to create a tool that lets doctors and patients communicate critical symptom information when they speak different languages, and even when the patient cannot read or write.

What it does

The patient selects the body type that most accurately reflects their size and gender identity. Then, the patient selects the body parts with which they associate their symptoms. Selecting a body part displays a more detailed view of that part, where the patient can select the specific area where the symptom is occurring. After selecting that area, the patient can choose from a list of visual icons representing common symptoms that might be associated with that area of the body. After selecting a symptom, the patient can convey details such as pain intensity, duration, and frequency through visually oriented sliders and diagrams. The patient can then go back to the body screen, select another part for a new symptom, and repeat the process. A doctor standing by can take note of the information the patient is conveying and interpret it to produce a diagnosis and eventually a solution.
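The flow above amounts to building up a list of symptom entries for one patient. The following is a minimal sketch of how that record might be modeled in Java. Every name in it (`SymptomReport`, `Entry`, the field names, the 0 to 10 pain range, and the day and per-day units) is an assumption made for illustration, not something taken from the Connect Health code.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/**
 * Hypothetical record of one patient session. All names and units are
 * illustrative assumptions, not taken from the Connect Health source.
 */
final class SymptomReport {

    /** One symptom attached to a specific area of a body part. */
    static final class Entry {
        final String bodyPart;   // chosen on the full-body screen, e.g. "head"
        final String area;       // chosen on the detailed view, e.g. "ear"
        final String symptom;    // chosen from the icon list, e.g. "stabbing pain"
        final int painLevel;     // assumed slider range: 0 (none) to 10 (worst)
        final int durationDays;  // how long the symptom has been occurring (assumed unit)
        final int timesPerDay;   // how often it occurs (assumed unit)

        Entry(String bodyPart, String area, String symptom,
              int painLevel, int durationDays, int timesPerDay) {
            if (painLevel < 0 || painLevel > 10) {
                throw new IllegalArgumentException("painLevel must be 0..10");
            }
            if (durationDays < 0 || timesPerDay < 0) {
                throw new IllegalArgumentException("duration and frequency must be non-negative");
            }
            this.bodyPart = bodyPart;
            this.area = area;
            this.symptom = symptom;
            this.painLevel = painLevel;
            this.durationDays = durationDays;
            this.timesPerDay = timesPerDay;
        }
    }

    private final String bodyType;
    private final List<Entry> entries = new ArrayList<>();

    SymptomReport(String bodyType) {
        this.bodyType = bodyType;
    }

    String bodyType() {
        return bodyType;
    }

    /** Called each time the patient finishes describing one symptom. */
    void add(Entry entry) {
        entries.add(entry);
    }

    /** Read-only view of the entries, e.g. for a doctor-facing summary. */
    List<Entry> entries() {
        return Collections.unmodifiableList(entries);
    }
}
```

In this sketch, returning to the body screen and picking another part simply means calling `add` again with a new `Entry`, so the doctor ends up with one ordered list per patient.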

How we built it

We used Android Studio to design an Android application that performs the tasks described above. We made resources by researching common visual guides that medical professionals use to help diagnose patients, such as the Wong-Baker pain scale. We modeled our images on these designs and also made our own illustrations to best suit our purposes.
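As an illustration of how a face-based pain picker could feed a numeric value back to the doctor, here is a small Java sketch. It assumes the standard Wong-Baker layout of six faces scored 0, 2, 4, 6, 8, and 10. The class name `PainScale`, its methods, and the choice to round odd slider values up are all hypothetical and are not taken from the app.

```java
/**
 * Hypothetical helper for a six-face pain picker. The 0 to 10 scoring in
 * steps of 2 follows the Wong-Baker layout; everything else is assumed.
 */
final class PainScale {
    static final int FACE_COUNT = 6;

    private PainScale() {
        // Static helpers only.
    }

    /** Score for the face at the given index (0 = no pain, 5 = worst pain). */
    static int scoreForFace(int faceIndex) {
        if (faceIndex < 0 || faceIndex >= FACE_COUNT) {
            throw new IllegalArgumentException("faceIndex must be 0..5");
        }
        return faceIndex * 2;
    }

    /** Face index for a 0..10 slider value; odd values round up to the next face. */
    static int faceForScore(int score) {
        if (score < 0 || score > 10) {
            throw new IllegalArgumentException("score must be 0..10");
        }
        return (score + 1) / 2;
    }
}
```

Rounding odd values up is one possible design choice: when a slider lands between two faces, the sketch shows the more severe one so that pain is not understated.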

Challenges we ran into

We had a lot of difficulty finding usable data about patient symptoms and how they are used in diagnoses. Much of the data was not publicly available, and the general overviews we could find were not very useful to us. We also did not have much experience designing applications or working with Android Studio, so we ran into many hurdles while designing our project and implementing our ideas.

Accomplishments that we're proud of

We are proud of the broad perspective we took when considering what information doctors and patients would need to communicate and the many ways in which they might choose to convey it. We were aware that people from different parts of the world do not necessarily associate the same visual cues with certain symptoms as we do, so we tried to design visual aids that could be used universally instead of just choosing images that we and our friends could understand. We are also proud that we all come from different disciplines, such as computer science, public health, social work, mathematics, and environmental sustainability. We each contributed very different ideas to the project and brought them together to create something unique.

What we learned

We learned a lot about troubleshooting, Android Studio, and using GitHub. We also learned a lot about creating images and health communication.

What's next for Connect Health

We would like to get the app to a workable state, add many more symptoms, and cover additional situations such as mental health and preexisting conditions.
