-

-

Alexa-enabled Compost Status Tablet

-

High Level Architecture for the Compost Professor Alexa skill and device cloud

-

Example gauge chart that provides additional detail

-

Automated probe and 3D printed enclosure for IoT probe

-

Automated probe and 3D printed enclosure for IoT probe (top open)

-

PCBs used (in conjunction with Adafriut Feathers) to record and upload temperature and moisture data

-

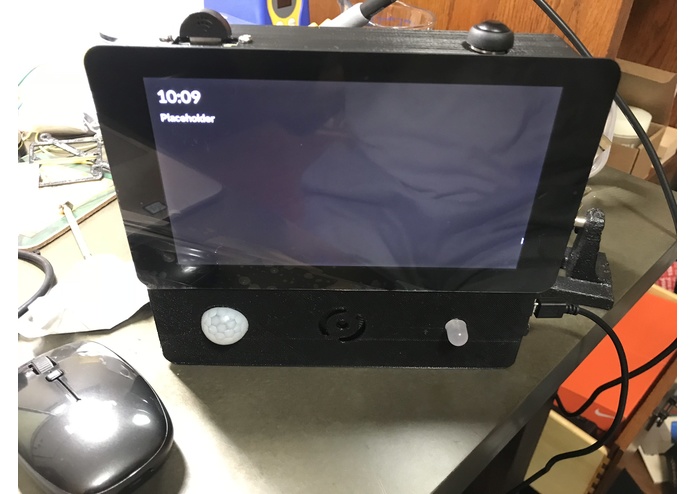

Work in progress - Alexa-enabled tablet

-

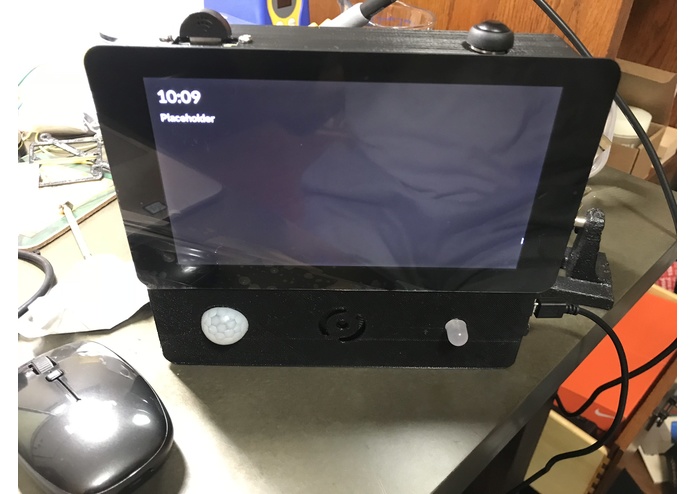

Back of the Alexa-enabled tablet

Inspiration

Per the EPA, food scraps and yard waste make up 20-30% of what we throw away. These materials could instead be composted, keeping waste out of landfills and reducing methane gas emissions.

Unfortunately, most people do not compost, whether because they are unaware of the benefits, unsure what can be composted, or unwilling to manage a compost pile.

The Compost Professor is a smart composting system that helps to address these issues by making the science of composting simple for today’s homeowner. The Compost Professor uses analytics to help anyone successfully create compost with minimal effort.

There are two main types of composting: hot composting (using heat and air to break down the materials) and cold composting (materials decompose on their own over a longer period of time). Hot composting is more beneficial: compost is created in a shorter timeframe and less methane is released into the atmosphere. This skill is built to help users "hot" compost.

What it does

The Compost Professor gives customized recommendations on making compost. The system uses the temperature, moisture, and weather forecast to give recommendations on what to add and when to turn the compost. It can also tell you to hydrate (water) the compost. Finally, the Compost Professor can track when your compost is ready for use.

Background

The Compost Professor is a bit of a passion project for me. I made three versions before this Alexa-enabled one, each slightly better but harder to implement (due to cost and complexity). I wanted to create a solution that was simple to use and customizable for more advanced users.

This version of the Compost Professor is the simplest yet: all you need is Alexa, a thermometer, and your hands. The thermometer is used to get the temperature (duh!); you can use your hands to judge the moisture level. You give this information to Alexa, and from there you get customized recommendations for your compost.

I also wanted to stay true to my "maker/builder" roots, and provide "upgrade" options. More adventurous users can build a system that automatically measures the temperature and moisture and uploads this data to the Compost Professor cloud (a series of APIs and DynamoDB tables).

How I built it

The key inputs for analyzing compost are temperature and moisture. I wanted to create a low barrier for using the system, so these values can be provided verbally (via Alexa). Alternatively, a user can create a simple temperature/moisture monitor that can automatically provide the required information to Alexa.

A: Obtaining key user information:

As stated above, the Compost Professor needs temperature and moisture readings in order to provide a recommendation. Additional user information is needed to refine the analysis and experience:

- Postal Code - this is used to determine the precipitation forecast

- Email - used to send the user detailed instructions on how to take measurements

- Given Name - used to personalize the experience

- Temperature Units - C or F (used when confirming the temperature input)

B: Saving User Attributes, Times Accessed, Getting required information, etc.:

I wanted to separate the "compost analysis" functionality from the Alexa skill. The Alexa skill is primarily responsible for interpreting the requests from the user (via intents) and calling the appropriate API. In addition, the Alexa skill is responsible for:

- Obtaining the information listed in Step A

- Saving the number of times a user has accessed the system (used to provide contextual welcome and help)

- Saving status of setup (also used to provide contextual welcome and help)

- Sending instructional emails (via the Amazon Simple Email Service)

- Creating and storing the API key (used to access the Compost Professor APIs, done via the Amazon API Gateway API)

- Randomizing greetings and messages ("Hello", "Hi", "Welcome back, Darian.", etc.).

Metrics and user data are stored in a DynamoDB table. User variables are updated every time the user logs in (on the off chance that the user changes their first name or zip code).
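As a rough illustration of the contextual, randomized welcome described above, here is a minimal sketch. The phrase lists, the `pickGreeting` name, and the rule of greeting first-time users differently are assumptions for illustration, not the skill's actual code.

```javascript
// Hypothetical greeting text; the skill's real phrases are not shown in this write-up.
const FIRST_VISIT = "Welcome to the Compost Professor.";
const RETURNING = ["Hello", "Hi", "Welcome back"];

// Pick a greeting based on how many times the user has opened the skill.
// `rand` is injectable so the random choice can be pinned down in tests.
function pickGreeting(timesAccessed, givenName, rand = Math.random) {
  if (timesAccessed === 0) {
    return FIRST_VISIT;
  }
  const phrase = RETURNING[Math.floor(rand() * RETURNING.length)];
  // Personalize only when a given name is available.
  return givenName ? `${phrase}, ${givenName}.` : `${phrase}.`;
}
```

The access count would come from the DynamoDB record mentioned above; passing it in as a plain number keeps the greeting logic independent of storage.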

I used Dialog Management and Entity Resolution to simplify the setup process:

- User is asked the data gathering method (DIY or manual), the type of bin (open or closed), and if they want instructions emailed.

- Entity resolution is used to match any variations of "do it yourself" (diy, electronics, etc) and manual (verbal, manually provide, etc).

I also used Dialog Management and Entity Resolution for data gathering:

- User needs to provide temperature and moisture

- Moisture is described qualitatively (wet, dry, moist, etc.), so entity resolution is used to map the description to a moisture level

- Users do not need to provide the temperature scale (F or C); this data is derived from the Customer Settings API; however, users can "override" that if they verbally provide the scale

- I confirm the temperature and moisture before processing
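The data-gathering steps above could look something like the sketch below. It reads the canonical value from the `resolutions.resolutionsPerAuthority` structure that Alexa attaches to a slot, then normalizes the temperature. The moisture scale (1 to 3), the function names, and the choice to store Celsius internally are assumptions made for the example.

```javascript
// Hypothetical mapping from canonical slot values to a numeric moisture level.
const MOISTURE_LEVELS = new Map([["dry", 1], ["moist", 2], ["wet", 3]]);

// Return the canonical value Alexa resolved for a slot, or null if nothing matched.
function resolveSlot(slot) {
  const authorities =
    (slot && slot.resolutions && slot.resolutions.resolutionsPerAuthority) || [];
  for (const authority of authorities) {
    if (authority.status.code === "ER_SUCCESS_MATCH") {
      return authority.values[0].value.name;
    }
  }
  return null;
}

// Map a spoken description ("soggy", "bone dry", ...) to a moisture level, or null.
function moistureLevel(slot) {
  const name = resolveSlot(slot);
  return MOISTURE_LEVELS.has(name) ? MOISTURE_LEVELS.get(name) : null;
}

// A spoken scale overrides the stored preference; the result is always Celsius.
function toCelsius(value, spokenScale, defaultScale) {
  const scale = (spokenScale || defaultScale).toUpperCase();
  return scale === "F" ? ((value - 32) * 5) / 9 : value;
}
```

For example, `toCelsius(140, null, "F")` uses the stored Fahrenheit preference, while `toCelsius(60, "C", "F")` honors the scale the user said out loud.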

C: Performing Analysis

The Compost Professor "cloud" is a series of Lambda functions (with associated DynamoDB tables) that are exposed via API Gateway. The APIs require a key, which serves two purposes: 1) it links the Compost Professor cloud to the Alexa skill, and 2) it forces registration to use the skill (and allows user limits to be implemented).

The Lambda functions use the Dark Sky API to determine the precipitation forecast. This is needed if the compost is dry and the bin is exposed to the environment. If rain is forecast, no action is needed (the user does not need to hydrate the compost manually).
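A minimal sketch of that decision, assuming a forecast object shaped like a Dark Sky response (`daily.data[]` entries with a `precipProbability` between 0 and 1). The 50% threshold, the two-day window, and the return labels are illustrative assumptions, not values from the project.

```javascript
// Assumed threshold: treat a day as "rain expected" at or above this probability.
const RAIN_PROBABILITY_THRESHOLD = 0.5;

// True if any of the next `days` daily forecasts meets the rain threshold.
function rainExpected(forecast, days = 2) {
  const daily = (forecast && forecast.daily && forecast.daily.data) || [];
  return daily
    .slice(0, days)
    .some((day) => day.precipProbability >= RAIN_PROBABILITY_THRESHOLD);
}

// Decide whether the user needs to water the compost.
function hydrationAdvice(moisture, binType, forecast) {
  if (moisture !== "dry") return "none";
  // Rain only helps when the bin is open to the weather.
  if (binType === "open" && rainExpected(forecast)) return "wait-for-rain";
  return "water";
}
```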

The Regression-JS library is used to determine trend data. Specific actions are needed if compost is drying out, getting wetter, warming up, or cooling down.
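To show the idea behind the trend check, here is a hand-rolled least-squares slope used as a stand-in for the Regression-JS call (the library's linear fit would supply the slope instead). The tolerance value, the labels, and the function names are assumptions for illustration.

```javascript
// Least-squares slope of y over x for an array of [x, y] pairs.
function slope(points) {
  const n = points.length;
  const meanX = points.reduce((sum, [x]) => sum + x, 0) / n;
  const meanY = points.reduce((sum, [, y]) => sum + y, 0) / n;
  let numerator = 0;
  let denominator = 0;
  for (const [x, y] of points) {
    numerator += (x - meanX) * (y - meanY);
    denominator += (x - meanX) ** 2;
  }
  // No spread in x (or no data) means no measurable trend.
  return denominator === 0 ? 0 : numerator / denominator;
}

// Label a series as rising, falling, or steady; `tolerance` is an assumed dead band.
function classifyTrend(points, risingLabel, fallingLabel, tolerance = 0.1) {
  const m = slope(points);
  if (m > tolerance) return risingLabel;
  if (m < -tolerance) return fallingLabel;
  return "steady";
}
```

The same helper covers both series: `classifyTrend(temps, "warming up", "cooling down")` for temperature and `classifyTrend(moisture, "getting wetter", "drying out")` for moisture, where each point is `[hoursSinceStart, reading]`.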

D: Creating Charts

The Compost Professor collects quite a bit of data (especially if a DIY hardware solution is used). I wanted to expose some of this data back to the user. Google Charts provides simple, HTML-rendered charts. I needed to convert the charts (rendered in HTML) to images, so I used PhantomJS to take headless screenshots of them. The screenshots are saved as JPG images to an S3 bucket. The images are then displayed on a screen-enabled device or in the Alexa app (when the user asks about the temperature, moisture, battery, or days until the compost is ready).

The PhantomJS-embedded Lambda function takes a few seconds to spin up, so I used AWS CloudWatch Events to keep the Lambda function "warm". You can read more about PhantomJS here, and about keeping Lambda warm here.
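One common way to implement the warm-up is to have the handler recognize the scheduled ping and return before doing any real work. The sketch below assumes the ping arrives as a standard CloudWatch scheduled event (`source` of `aws.events`, `detail-type` of `Scheduled Event`); `makeHandler` and `renderChart` are hypothetical names standing in for the actual screenshot code.

```javascript
// True when the invocation came from a CloudWatch Events schedule, not a real request.
function isWarmUpEvent(event) {
  return (
    Boolean(event) &&
    event.source === "aws.events" &&
    event["detail-type"] === "Scheduled Event"
  );
}

// Wrap the expensive chart renderer so warm-up pings skip it entirely.
// `renderChart` is a hypothetical stand-in for the PhantomJS screenshot step.
function makeHandler(renderChart) {
  return async function handler(event) {
    if (isWarmUpEvent(event)) {
      return { warmed: true };
    }
    return renderChart(event);
  };
}
```

Returning early keeps each scheduled ping cheap while still keeping a container, and the PhantomJS binary loaded in it, ready for real requests.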

E: Automated Do It Yourself Temperature and Moisture Reader

I designed the Compost Professor to support verbal recording of measurements and automated data updates. A simple IoT (Internet of Things) probe can be created (for about $25-50) to simplify the measurement recording process. The IoT probe measures the temperature and moisture every hour and sends the data to the Compost Professor (via the same APIs that the Alexa skill uses).
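To make the shape of an hourly upload concrete, here is a sketch of the request a client might build. It is written in JavaScript for consistency with the rest of the stack rather than as probe firmware. The endpoint URL and the payload field names are assumptions; `x-api-key` is the header API Gateway uses for API keys.

```javascript
// Hypothetical endpoint; the real Compost Professor API URL is not given here.
const READINGS_URL = "https://example.com/readings";

// Build a request description that can be passed to fetch(url, options).
function buildReadingRequest(apiKey, temperatureC, moisturePercent, takenAt = new Date()) {
  return {
    url: READINGS_URL,
    options: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        "x-api-key": apiKey, // API Gateway reads the API key from this header
      },
      // Field names below are illustrative, not the project's actual schema.
      body: JSON.stringify({
        temperatureC,
        moisturePercent,
        takenAt: takenAt.toISOString(),
      }),
    },
  };
}
```

Because the Alexa skill and the probe share the same APIs, both paths could reuse a builder like this, with only the source of the readings differing.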

Instructions to build the probe are posted on Hackster.io.

F: Alexa-enabled Compost Status Tablet

As a bonus, I decided to create a standalone display that lets a user quickly get the status of their compost without asking Alexa (all the user needs to do is push a button on the device). I remixed and merged two Raspberry Pi tablet and computer projects from Adafruit. Given that I was using a Raspberry Pi, I decided to make the tablet Alexa-enabled. Here's a high-level overview of the tablet:

- Screen displays Temperature and Time (similar to Echo Show)

- User is able to get compost details with a button click

- Motion detector is used to turn on/off screen

- LED indicator to provide status of Alexa response, if Alexa is muted, etc

- Provide displays for weather, and simple questions (that have a card - e.g. "Who is Barack Obama")

Challenges I ran into

The touchscreen, Raspberry Pi, and speaker required more amps than expected. I originally designed the tablet to be battery powered, but kept getting brownouts. I moved to a more robust plug, which temporarily mitigated the problem.

I also struggled to get the templates to display on the screen. I ended up writing a hack in the AVS C code that outputs the display card JSON to a file; a Node.js app continuously looks for new files and renders them via a Node/HTML app. Going forward, I would write the GUI in C.
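The file-watching half of that workaround might look roughly like the sketch below: a poller that scans a directory and hands each new card to a renderer exactly once. The directory layout, the `.json` naming, and the `createCardPoller` name are assumptions; the actual app may work differently.

```javascript
const fs = require("fs");
const path = require("path");

// Returns a poll() function that calls onCard once for each new JSON file in `dir`.
function createCardPoller(dir, onCard) {
  const seen = new Set();
  return function poll() {
    for (const name of fs.readdirSync(dir).sort()) {
      if (!name.endsWith(".json") || seen.has(name)) continue;
      let card;
      try {
        card = JSON.parse(fs.readFileSync(path.join(dir, name), "utf8"));
      } catch (err) {
        // The writer may still be mid-write; leave the file unseen and retry next poll.
        continue;
      }
      seen.add(name);
      onCard(card);
    }
  };
}
```

A caller would run it on a timer, for example `setInterval(createCardPoller(cardDir, render), 500)`, where `cardDir` and `render` are whatever directory and rendering function the app uses.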

Finally, I wanted to add physical buttons for volume and muting, but struggled to get the AVS code to compile once I added code to enable the physical buttons. This is something I plan to look into at a later time.

Other tidbits

- Leveraging Amazon Alexa Customer APIs for key information - Amazon just released a number of APIs that simplified getting information about the user (units of measurement, email address, name, etc). In the past, I would need to implement account linking to get this information. Using the new APIs reduces user "friction" and simplifies getting the required inputs.

- Using APIs extensively - Most of my Alexa skills are self contained; this skill - given that there are multiple ways to input and receive data - needed to allow multiple points of interaction. Building APIs was the route I took.

- This is my first video-enabled skill. I provide instructions for users with video-enabled devices: Manual Instructions, DIY Instructions

What's next for Compost Professor

I'd like to update the probe to incorporate:

- solar power

- light measurement

- ambient temperature measurements

In addition, I'd like to integrate the Compost Professor hardware into a rotating tumbler, and look into auto hydration and auto turning of the compost.

Finally, using Polly voices seems like a natural evolution for the skill.

Built With

- adafruit

- alexa-skills-kit

- alexa-voice-service

- amazon-dynamodb

- lambda

- node.js

- raspberry-pi
