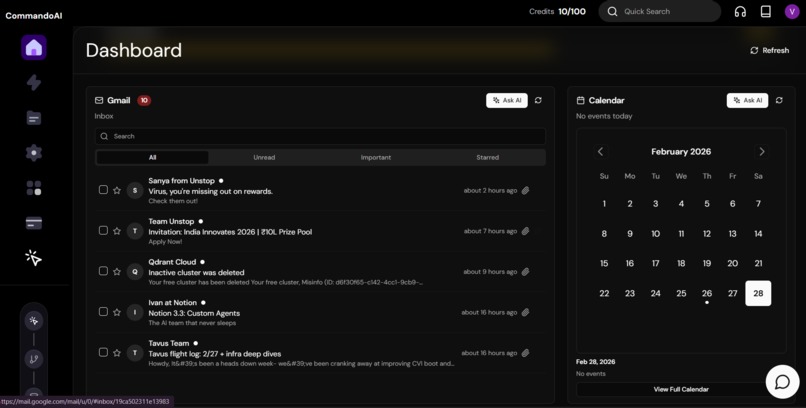

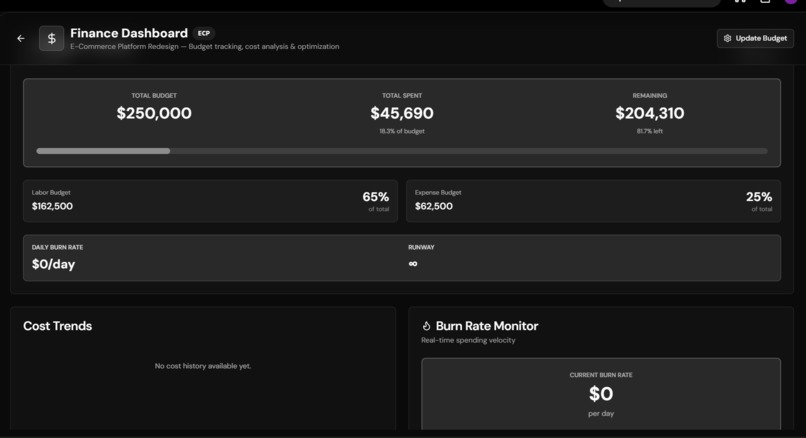

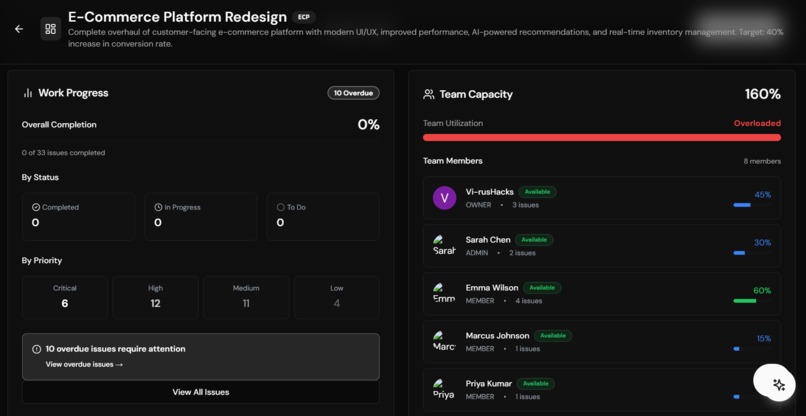

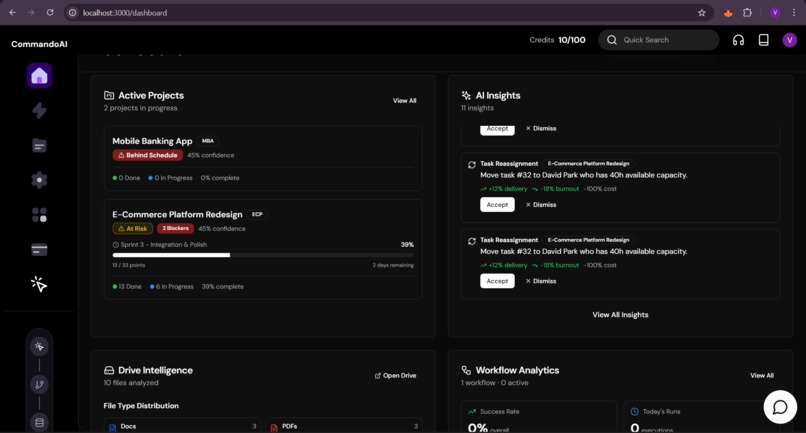

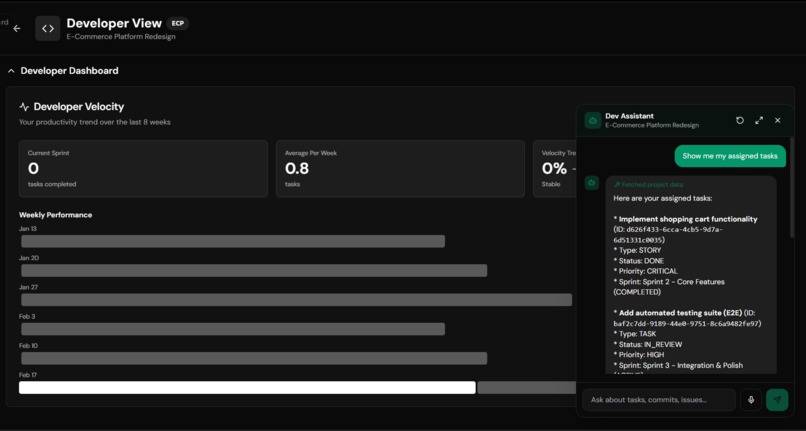

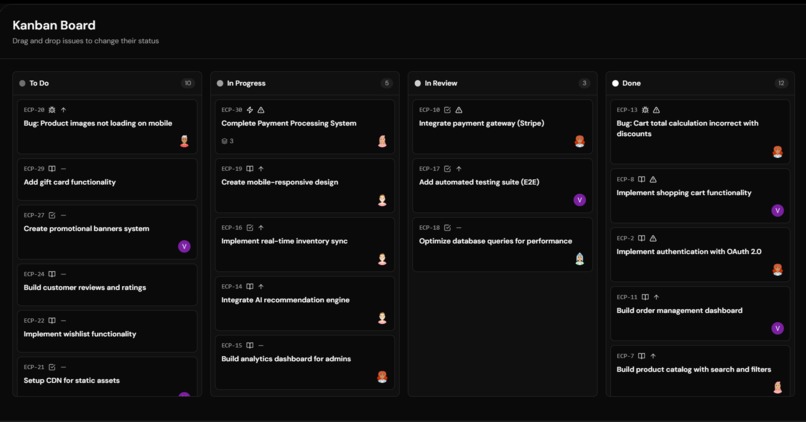

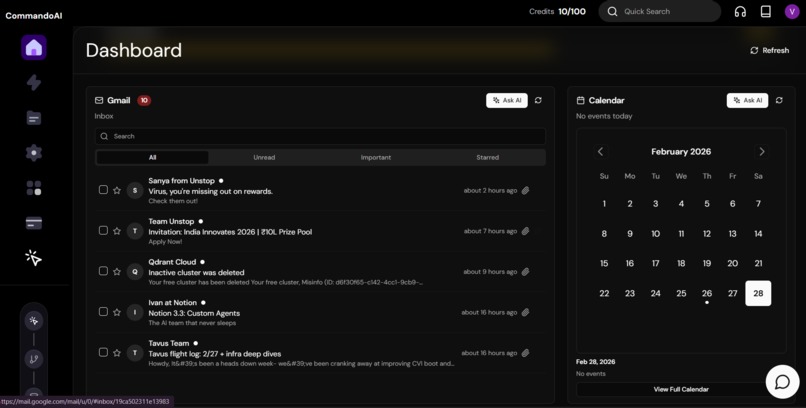

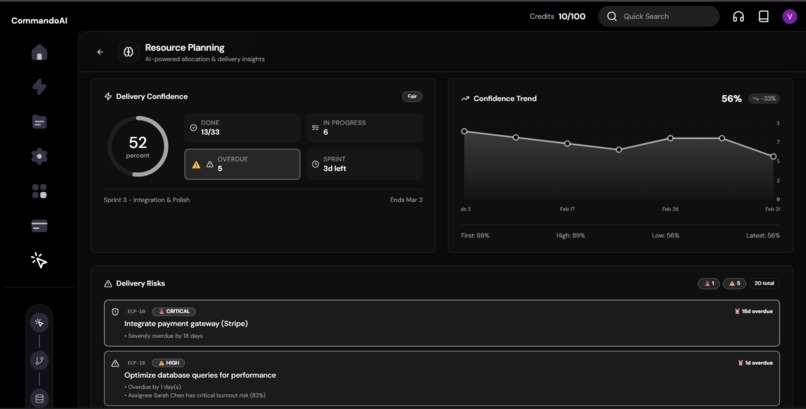

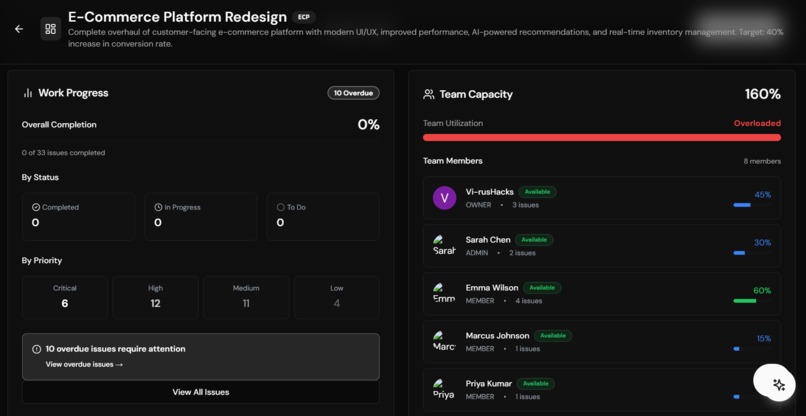

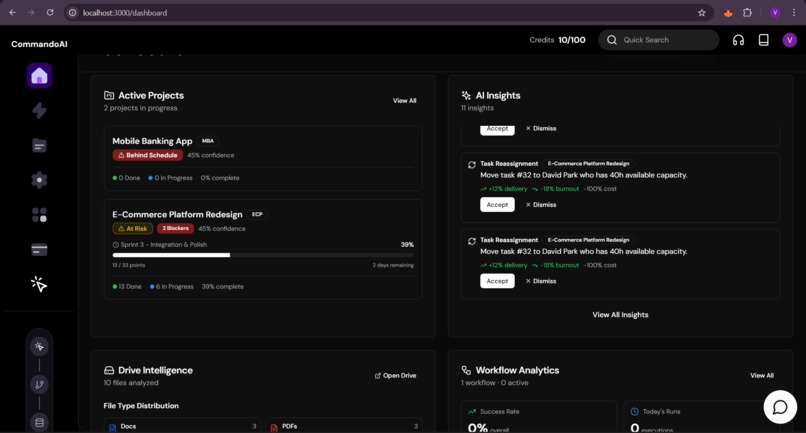

Opening Dashboard containing everything you need

What-If Scenarios as Stochastic Events

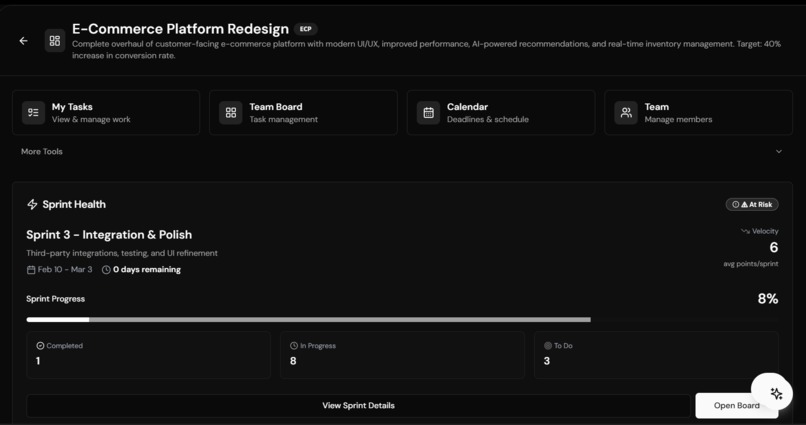

Running a Partial Markov Decision Process for Resource Allocation

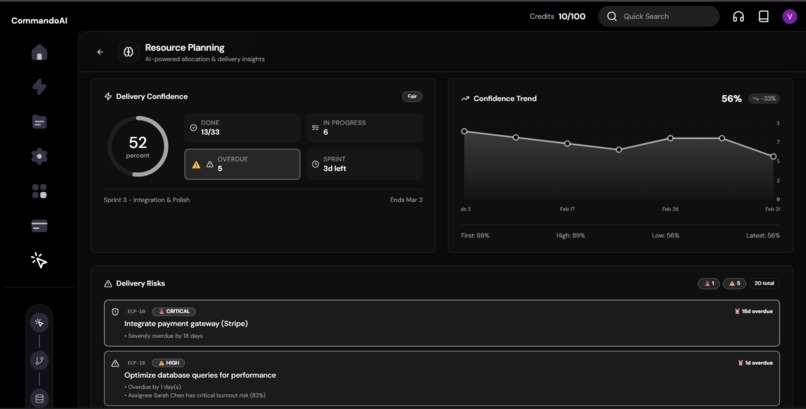

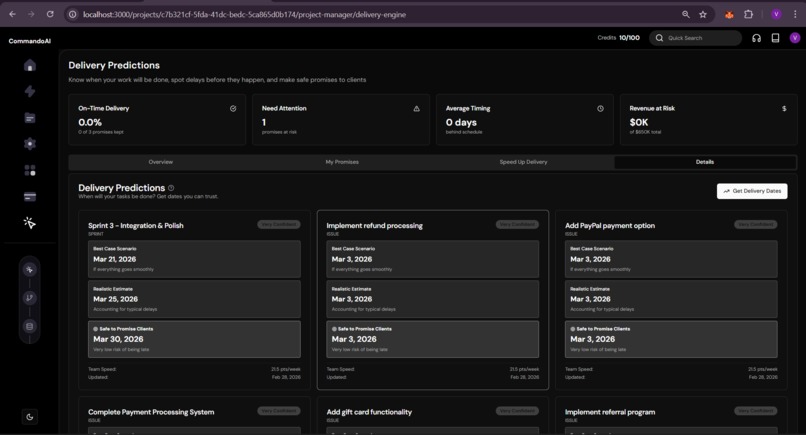

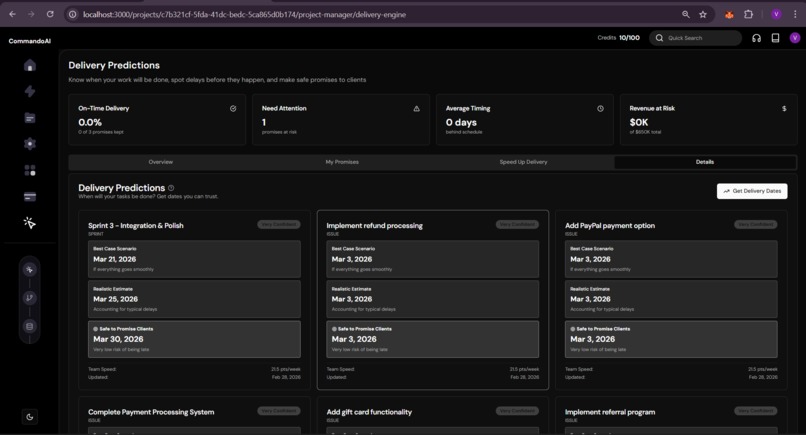

Monte Carlo Simulation based Delivery Handling

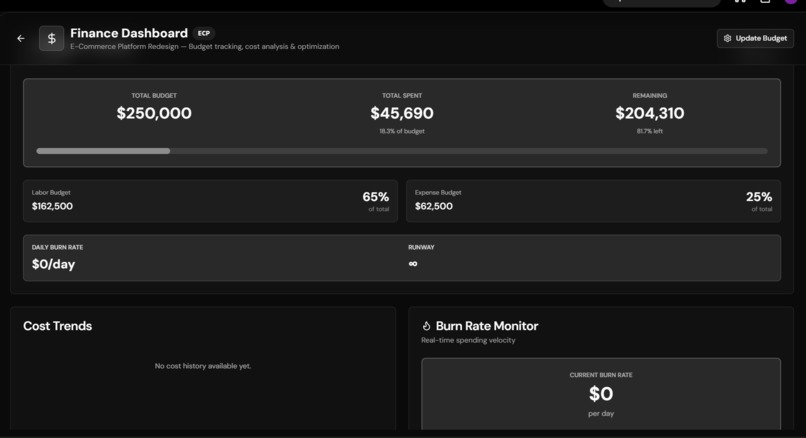

Sales and Cost Forecasting Using Reactive Analysis

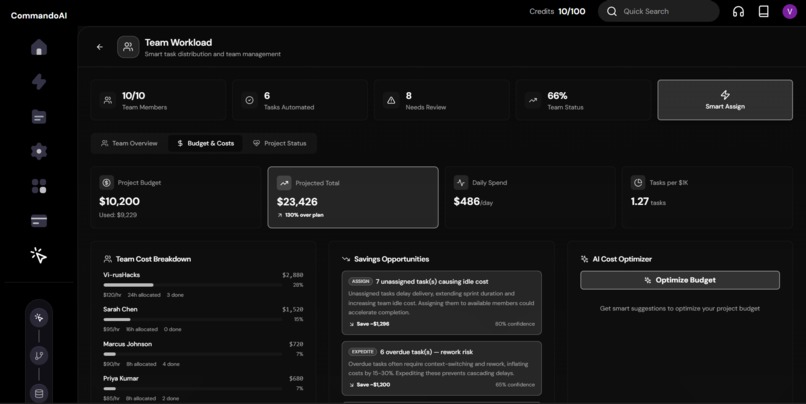

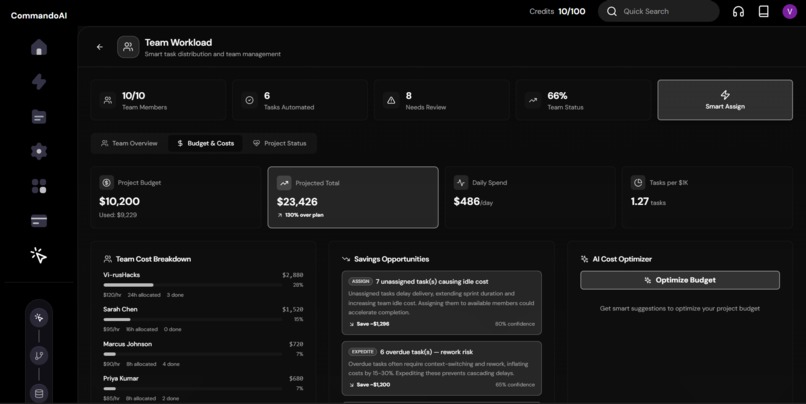

Proactive Suggestions For Reducing Budgets

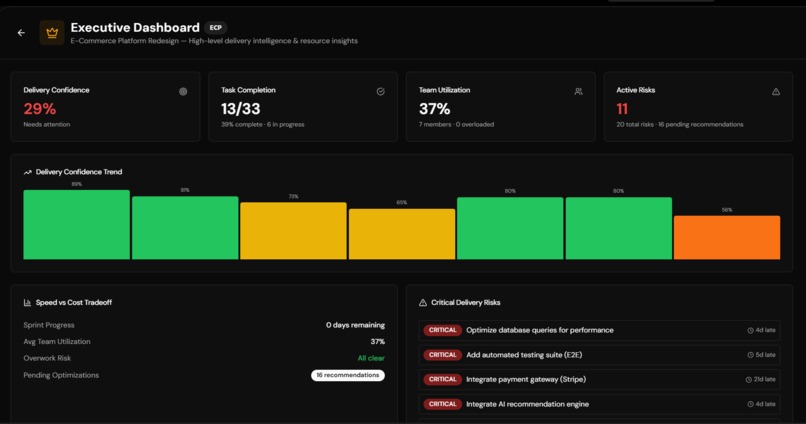

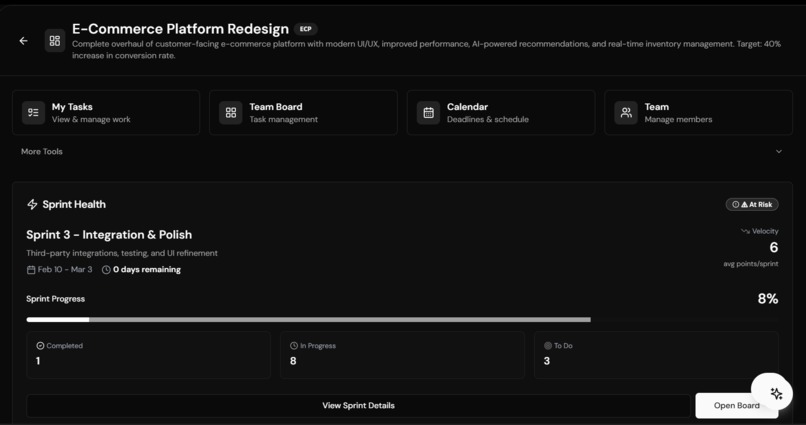

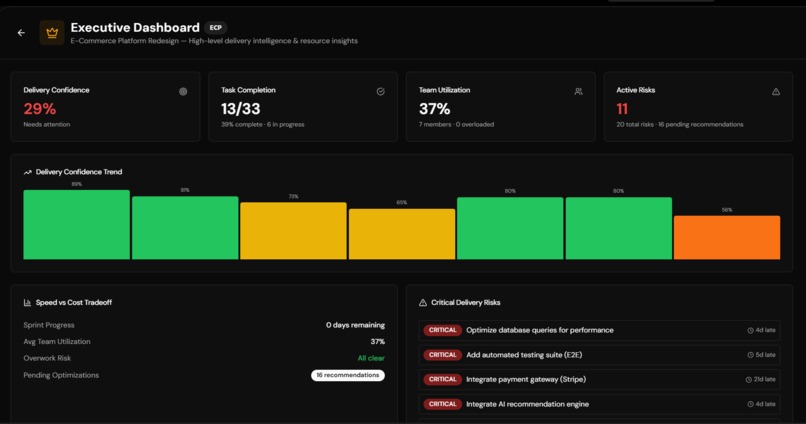

Executive Dashboard for Top-Level Decision Making

All Your Insights in One Place

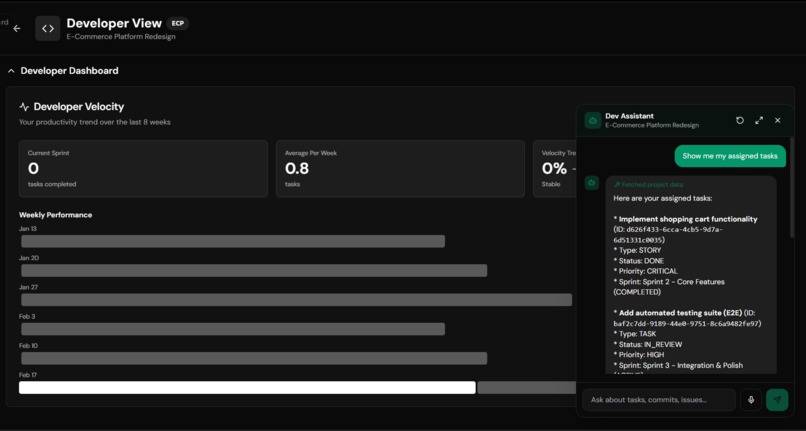

Developer CLI for All-Purpose Events

Multi Agent Communication for better context handling

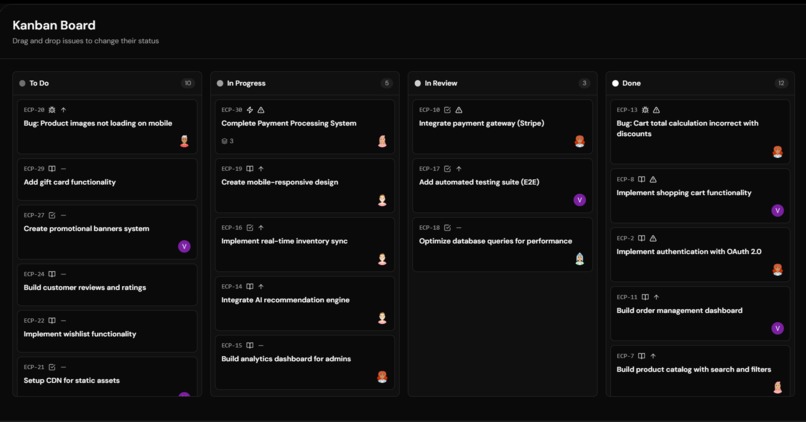

Bringing Jira to Generative AI

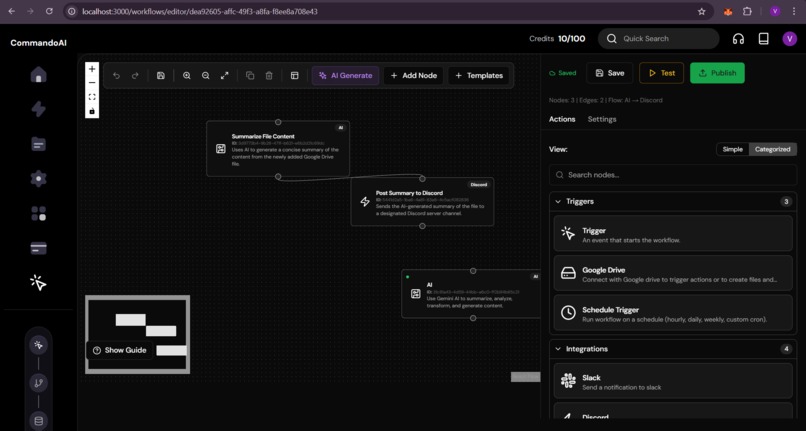

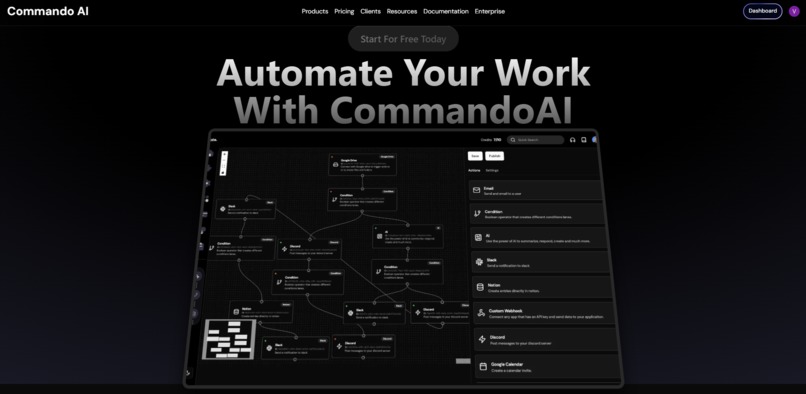

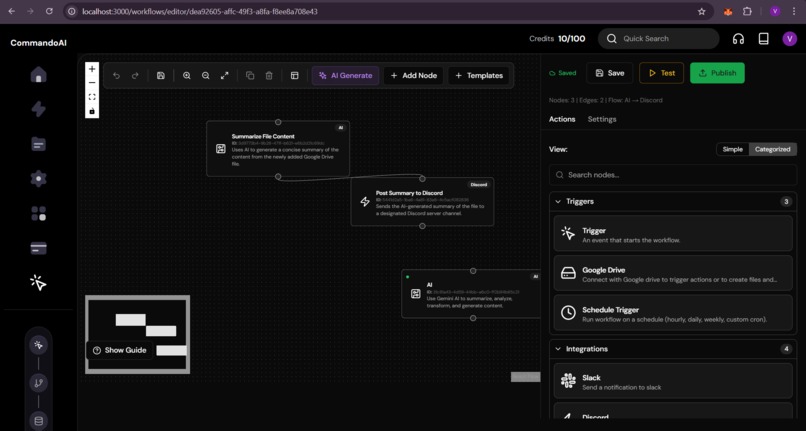

Building Automated Workflows Just On Your Command

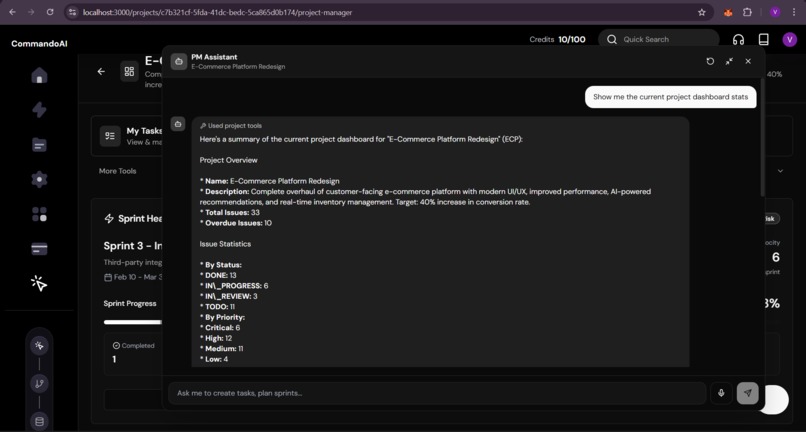

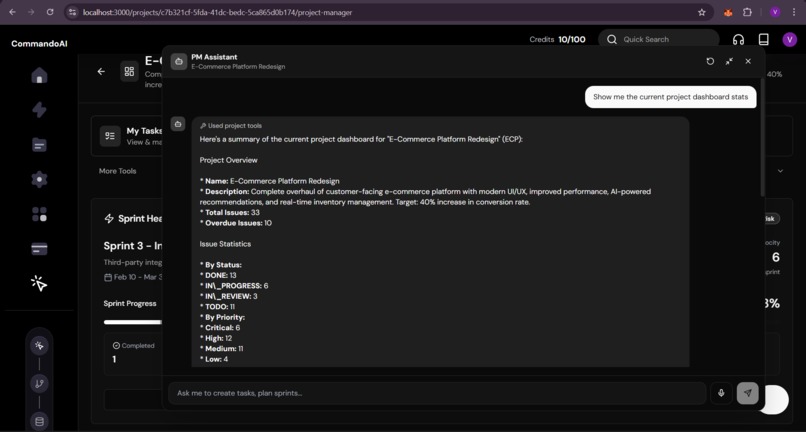

Model Context Protocol Based PM Assistant

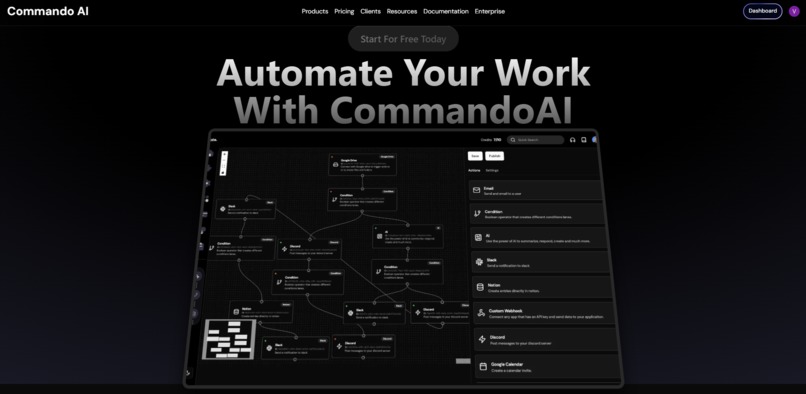

Landing Page

Inspiration

Most software teams don't fail because they lack skill. They fail because they're navigating blind.

In the high-stakes world of software engineering, we’ve come to accept an uncomfortable truth: most projects are managed by gut feel disguised as spreadsheets. When asked "Will we ship on time?", the honest answer is usually a guess backed by optimism. Data is everywhere (Slack, Discord, Jira, Notion, GitHub), yet clarity is nowhere.

Commando AI is our answer to one of enterprise software's oldest unsolved problems: shipping with confidence, not hope.

We saw projects fail not because of a lack of skill, but because of a systemic lack of foresight. Traditional tools like PERT and CPM provide point estimates that hide risk. Jira provides velocity charts that analyze the past but cannot predict the future. We built Commando AI to eliminate the "Fog of War" in project management. We shifted the paradigm from reactive damage control to proactive failure prevention, engineering a platform that optimizes for certainty and ensures the highest probability of success.

| User Persona | The Pain Today | The Commando AI Advantage |
|---|---|---|
| Developer | Cognitive load from context-switching across 5+ tabs. | Unified Context: All tasks, docs, and implementation plans delivered directly to the IDE via the MCP Server. |
| Team Lead / PM | Managing by hope; no way to quantify "deadline risk." | Probabilistic Foresight: Real-time confidence scoring based on 10,000 Monte Carlo iterations per cycle. |
| Executive | Status reports that are high-level, subjective, and outdated. | Health Intelligence: An automated, data-driven brief on project trajectory, budget burn, and systemic friction. |
| Non-Profit / Student | Scale without the budget for professional PM oversight. | Embedded Best-Practices: Adaptive intelligence that identifies bottlenecks and burnout before they derail the team. |

What it does

Commando AI is an AI-native project intelligence platform that connects your existing tools — GitHub, Slack, Jira, Notion — and turns raw activity signals into probabilistic delivery forecasts, autonomous agent actions, and IDE-native context.

1. Predictive Delivery Engine

We replace "estimated dates" with probabilistic forecasting. Using 10,000 Monte Carlo iterations, the system samples your team’s real velocity, adjusts for burnout trajectories, and factors in dependency-induced stalls. You don't just see a date; you see a Delivery Confidence Curve.

- What-If Simulation: Instantly model the impact of adding developers, cutting scope, or authorizing overtime. Every scenario is scored for cost, feasibility, and its impact on your P70/P90 commitments.

2. Adaptive Resource Intelligence

The system doesn't just flag overload; it optimizes for throughput. Our engine identifies systemic risks—burnout, workload inequality (Gini coefficient analysis), and blocked critical-path tasks.

- Reinforcement Learning Interventions: Every recommendation (reassignment, delay, rebalancing) is powered by a Thompson Sampling bandit. The system learns which interventions your team actually follows and adapts its advice to your team's specific culture and capacity.

3. Autonomous Agent Orchestration

Commando AI deploys persistent AI agents that think, communicate, and act on your behalf.

- Optimizer Agents identify systemic inequality.

- Manager Agents coordinate cross-team interventions.

- Developer Agents proactively flag blockers and negotiate task swaps.

Agents operate with five levels of autonomy and earn "Trust Scores" based on the approval rate of their decisions.

4. IDE-Native Project Context (MCP)

We eliminate the context-switching tax. Our 22-tool MCP Server brings the entire project management suite into Cursor, Claude, or Windsurf. Developers generate implementation plans, query coding standards, and update task status without ever leaving their editor.

5. Native Workflow Automation

A drag-and-drop visual engine with 20+ nodes (Gmail, Drive, Slack, GitHub, Notion) that turns project signals into actions. Describe an automation in plain English—"Notify Slack if the P70 date slips by 2 days"—and Gemini generates the graph.

Competitive Moat

| Feature | Legacy Tools (Jira/Monday) | Commando AI |
|---|---|---|
| Forecasting | Reactive Velocity Charts (The Past) | Probabilistic Simulations (The Future) |
| Workload | Simple Capacity Heatmaps | Gini Inequality & Burnout Trajectories |
| Recommendations | Static Rules | RL-Based (Thompson Sampling) Learning |
| Developer UX | Browser-Based (Context Switch) | IDE-Native (MCP Server) |
| Intelligence | Chatbot Assistants | Autonomous Agent Orchestration |

Technical Underpinnings

Monte Carlo Forecasting: We model velocity $v$ as a Gaussian distribution with mean $\mu_v$ and standard deviation $\sigma_v$. For each of the 10,000 runs, we draw two uniform samples $u_1, u_2 \in (0, 1)$ and obtain a standard normal variate using the Box-Muller transform:

$$z_0 = \sqrt{-2 \ln u_1} \cos(2\pi u_2)$$

This is scaled to a velocity sample, $v_{sampled} = \mu_v + \sigma_v z_0$. The sampled velocity is then adjusted for real-world friction:

$$v_{adjusted} = v_{sampled} \cdot (1 - 0.3 \cdot \text{burnout\_risk})$$

$$\text{Weeks} = \left( \frac{\text{Complexity}}{v_{adjusted}} \right) \cdot (1 + \text{deps} \cdot \text{stochastic\_delay})$$
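To make the formulas above concrete, here is a minimal TypeScript sketch of one forecasting pass. It is an illustration under stated assumptions, not the production engine: the `ForecastInput` shape, the seeded `mulberry32` generator, the uniform model for the stochastic delay, and the velocity floor are all hypothetical choices made so the example runs on its own.

```typescript
// Seeded PRNG (mulberry32) so runs are reproducible; any uniform source works.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Box-Muller: turns two uniform draws into one standard normal draw (z_0).
function standardNormal(rand: () => number): number {
  const u1 = 1 - rand(); // in (0, 1], avoids ln(0)
  const u2 = rand();
  return Math.sqrt(-2 * Math.log(u1)) * Math.cos(2 * Math.PI * u2);
}

// Hypothetical input shape for this sketch.
interface ForecastInput {
  complexity: number;     // remaining work, in velocity units
  meanVelocity: number;   // work units per week
  velocityStdDev: number;
  burnoutRisk: number;    // 0..1
  deps: number;           // number of open dependencies
  maxDelayPerDep: number; // assumption: delay factor is uniform in [0, maxDelayPerDep)
}

// Returns the simulated durations in weeks, sorted ascending.
function simulateWeeks(input: ForecastInput, runs: number, rand: () => number): number[] {
  // Assumption: floor the velocity so a rare negative Gaussian draw cannot
  // produce a negative or infinite duration.
  const minVelocity = 0.1 * input.meanVelocity;
  const results: number[] = [];
  for (let i = 0; i < runs; i++) {
    const sampled = input.meanVelocity + input.velocityStdDev * standardNormal(rand);
    const adjusted = Math.max(minVelocity, sampled * (1 - 0.3 * input.burnoutRisk));
    const stochasticDelay = rand() * input.maxDelayPerDep;
    results.push((input.complexity / adjusted) * (1 + input.deps * stochasticDelay));
  }
  return results.sort((x, y) => x - y);
}

// Nearest-rank percentile on an ascending array; p is in [0, 1].
function percentile(sorted: number[], p: number): number {
  const idx = Math.min(sorted.length - 1, Math.max(0, Math.ceil(p * sorted.length) - 1));
  return sorted[idx] ?? NaN;
}

const weeks = simulateWeeks(
  { complexity: 100, meanVelocity: 10, velocityStdDev: 2, burnoutRisk: 0.5, deps: 2, maxDelayPerDep: 0.1 },
  10_000,
  mulberry32(42),
);
console.log("P70 weeks:", percentile(weeks, 0.7), "P90 weeks:", percentile(weeks, 0.9));
```

Reading P70 and P90 off the sorted samples is what turns a single estimated date into a confidence curve: the P70 value is the duration that 70% of the simulated runs finish within.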

Adaptive Recommendations: We use Thompson Sampling to learn team preferences. Each intervention's acceptance rate is modeled with a Beta distribution $\text{Beta}(\alpha, \beta)$. Upon human feedback, we apply the conjugate update:

$$\alpha_{new} = \alpha_{old} + \text{accepted}, \quad \beta_{new} = \beta_{old} + \text{rejected}$$

The system balances exploration and exploitation by sampling from this posterior each cycle and recommending the intervention with the highest draw.
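The update rule and the sampling step can be sketched in a few lines of TypeScript. This is an illustrative sketch, not the shipped recommender: the `Arm` type and function names are hypothetical, and it assumes each intervention starts from a Beta(1, 1) prior so that $\alpha$ and $\beta$ stay positive integers. That assumption lets a Gamma draw be built from a sum of exponential draws; non-integer parameters would need a general Gamma sampler instead.

```typescript
type Rand = () => number;

// Hypothetical shape: one bandit arm per intervention type.
interface Arm {
  name: string;
  alpha: number; // 1 + number of accepted recommendations
  beta: number;  // 1 + number of rejected recommendations
}

// Gamma(k, 1) for a positive integer k, as a sum of k exponential draws.
function gammaInt(k: number, rand: Rand): number {
  let sum = 0;
  for (let i = 0; i < k; i++) sum -= Math.log(1 - rand());
  return sum;
}

// Beta(alpha, beta) via the ratio X / (X + Y) of two Gamma draws.
function sampleBeta(alpha: number, beta: number, rand: Rand): number {
  const x = gammaInt(alpha, rand);
  const y = gammaInt(beta, rand);
  return x + y === 0 ? 0.5 : x / (x + y);
}

// Thompson Sampling: draw once from each posterior, recommend the highest draw.
function pickIntervention(arms: Arm[], rand: Rand = Math.random): Arm {
  const first = arms[0];
  if (first === undefined) throw new Error("at least one intervention is required");
  let best: Arm = first;
  let bestDraw = -1;
  for (const arm of arms) {
    const draw = sampleBeta(arm.alpha, arm.beta, rand);
    if (draw > bestDraw) {
      best = arm;
      bestDraw = draw;
    }
  }
  return best;
}

// Conjugate update: acceptance increments alpha, rejection increments beta.
function recordFeedback(arm: Arm, accepted: boolean): Arm {
  return accepted ? { ...arm, alpha: arm.alpha + 1 } : { ...arm, beta: arm.beta + 1 };
}

let reassign: Arm = { name: "reassign", alpha: 1, beta: 1 };
reassign = recordFeedback(reassign, true);
console.log(pickIntervention([reassign, { name: "delay", alpha: 1, beta: 1 }]).name);
```

Arms with little feedback have wide posteriors, so they still win some draws. This is where the exploration comes from, and why early recommendations look broad before the counts accumulate.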

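Inequality Detection: Systemic workload imbalance is quantified using the Gini Coefficient:

$$G = \frac{\sum_{i=1}^n \sum_{j=1}^n |x_i - x_j|}{2n^2 \bar{x}}$$

Here $x_i$ is the workload of team member $i$, $n$ is the team size, and $\bar{x}$ is the mean workload. $G = 0$ means a perfectly even split, and larger values mean the work is concentrated on fewer people. This allows the Optimizer agent to detect "Silent Overload" even when average utilization looks healthy.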
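The formula translates directly into code. The sketch below is a straightforward $O(n^2)$ implementation for illustration; the function name and the choice to return 0 for an empty or all-zero team are assumptions of this example.

```typescript
// Gini coefficient of a list of non-negative workloads (e.g. open story points per person).
function gini(workloads: number[]): number {
  const n = workloads.length;
  const total = workloads.reduce((sum, x) => sum + x, 0);
  if (n === 0 || total === 0) return 0; // assumption: no work means no inequality
  const mean = total / n;
  let diffSum = 0;
  for (const xi of workloads) {
    for (const xj of workloads) {
      diffSum += Math.abs(xi - xj);
    }
  }
  return diffSum / (2 * n * n * mean);
}

console.log(gini([4, 4, 4, 4, 4]));  // evenly split team
console.log(gini([2, 2, 2, 2, 12])); // same average load, one overloaded person
```

Both teams in the usage lines average 4 units per person, but the first scores 0 and the second scores 0.4. That gap is the "Silent Overload" signal that an average-utilization view misses.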

Commando AI by the Numbers

- 10,000 iterations per Monte Carlo delivery simulation.

- 3 days: Average historical accuracy gap of P70 predictions.

- 22 professional tools exposed via the IDE-native MCP Server.

- 75% increase in recommendation accuracy over time via RL.

- 1,422 lines of custom logic powering the workflow executor.

- 20+ automation nodes for deep toolchain integration.

How we built it

Domains: Artificial Intelligence & Machine Learning · Enterprise Systems & SaaS · Developer Tools & APIs

The Stack: Next.js 14, TypeScript, Prisma (30+ models), Inngest, Vercel.

- Custom Simulation Logic: We wrote the Monte Carlo engine from scratch, utilizing a Box-Muller transform for Gaussian sampling and a stochastic model for dependency cascade propagation.

- Bayesian Recommendation Engine: Thompson Sampling maintains a beta distribution for intervention types, ensuring the system exploits proven wins while exploring new strategies for team optimization.

- Hierarchical Agent Flow: Using Inngest, we established a strict "Optimizer → Manager → Developer" reasoning cycle. This ensures high-level project health data informs individual agent decisions, preventing myopic task choices.

- Resilient Workflow Executor: We built a recursive template resolver and topological sorter to handle complex, asynchronous automation DAGs natively.
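As a rough illustration of the workflow executor's two core pieces, the sketch below orders a DAG with Kahn's algorithm and recursively resolves templates in a node's configuration. It is not the project's executor: the `WorkflowNode` shape, the `{{nodeId.field}}` placeholder syntax, and the function names are hypothetical.

```typescript
// Hypothetical node shape: each node lists the ids it depends on.
interface WorkflowNode {
  id: string;
  dependsOn: string[];
}

// Kahn's algorithm: repeatedly emit nodes whose dependencies have all run.
function topologicalOrder(nodes: WorkflowNode[]): string[] {
  const indegree = new Map<string, number>();
  const dependents = new Map<string, string[]>();
  for (const node of nodes) {
    indegree.set(node.id, node.dependsOn.length);
    for (const dep of node.dependsOn) {
      const list = dependents.get(dep) ?? [];
      list.push(node.id);
      dependents.set(dep, list);
    }
  }
  const ready = nodes.filter((n) => n.dependsOn.length === 0).map((n) => n.id);
  const order: string[] = [];
  while (ready.length > 0) {
    const id = ready.shift() as string;
    order.push(id);
    for (const next of dependents.get(id) ?? []) {
      const remaining = (indegree.get(next) ?? 0) - 1;
      indegree.set(next, remaining);
      if (remaining === 0) ready.push(next);
    }
  }
  if (order.length !== nodes.length) {
    throw new Error("Workflow graph contains a cycle or an unknown dependency");
  }
  return order;
}

type Json = string | number | boolean | null | Json[] | { [key: string]: Json };

// Recursively replaces {{nodeId.field}} placeholders (hypothetical syntax) with
// values produced by earlier nodes; unknown placeholders are left untouched.
function resolveTemplates(value: Json, outputs: Record<string, Record<string, Json>>): Json {
  if (typeof value === "string") {
    return value.replace(/\{\{(\w+)\.(\w+)\}\}/g, (match, nodeId: string, field: string) => {
      const found = outputs[nodeId]?.[field];
      return found === undefined ? match : String(found);
    });
  }
  if (Array.isArray(value)) return value.map((v) => resolveTemplates(v, outputs));
  if (value !== null && typeof value === "object") {
    const out: { [key: string]: Json } = {};
    for (const [key, v] of Object.entries(value)) out[key] = resolveTemplates(v, outputs);
    return out;
  }
  return value;
}
```

Running nodes in topological order guarantees that every placeholder a node references has already been produced by the time its configuration is resolved.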

Challenges we ran into

- Calibration vs. Implementation: Coding the math was simple; making it truthful was the trial. We spent days tuning the "burnout penalty" and "dependency decay" variables against real historical data until the system's P70 predictions actually mattered.

- The RL Cold-Start: Thompson Sampling is a long-game strategy. Early recommendations are broad; it takes 15+ decisions to see the system "wake up" and align with team culture. We chose transparency over "faked" early intelligence.

- Agent Stability: Managing persistent state across dozens of agents required strict per-agent throttling and message expiration policies to prevent runaway feedback loops and "agent hallucination" in the message bus.
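The throttling and expiration policies mentioned above can be sketched as a small in-memory message bus. This is an assumption-laden illustration rather than the actual implementation: the `ThrottledBus` class, its sliding-window limit, and the per-message `ttlMs` field are hypothetical, and the clock is passed in explicitly to keep the behavior deterministic.

```typescript
// Hypothetical message shape for this sketch.
interface AgentMessage {
  from: string;
  to: string;
  body: string;
  sentAt: number; // ms timestamp
  ttlMs: number;  // message is dropped once it is older than this
}

class ThrottledBus {
  private queue: AgentMessage[] = [];
  private sentTimes = new Map<string, number[]>();
  private readonly maxPerWindow: number;
  private readonly windowMs: number;

  constructor(maxPerWindow: number, windowMs: number) {
    this.maxPerWindow = maxPerWindow;
    this.windowMs = windowMs;
  }

  // Returns false when the sender has used up its quota for the current window.
  publish(msg: Omit<AgentMessage, "sentAt">, now: number): boolean {
    const recent = (this.sentTimes.get(msg.from) ?? []).filter((t) => now - t < this.windowMs);
    if (recent.length >= this.maxPerWindow) {
      this.sentTimes.set(msg.from, recent);
      return false;
    }
    recent.push(now);
    this.sentTimes.set(msg.from, recent);
    this.queue.push({ ...msg, sentAt: now });
    return true;
  }

  // Drops expired messages, then hands the recipient its pending messages.
  receive(agentId: string, now: number): AgentMessage[] {
    this.queue = this.queue.filter((m) => now - m.sentAt < m.ttlMs);
    const mine = this.queue.filter((m) => m.to === agentId);
    this.queue = this.queue.filter((m) => m.to !== agentId);
    return mine;
  }
}
```

Capping each sender independently means one agent stuck in a loop cannot flood the bus, and the TTL ensures that stale requests are never acted on after the project state has moved on.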

Accomplishments that we're proud of

- Proving the Math: Validating the P70 dates against a completed project and seeing a 3-day delta. The system would have warned of a disaster a month before the human team noticed.

- Autonomous Coordination: Watching three agents—Optimizer, Manager, and Developer—negotiate a workload rebalance without a single human keystroke.

- Dogfooding: We managed the entire development of Commando AI within Commando AI. Every feature was tested in the fires of its own creation.

What we learned

- Probabilistic thinking is a superpower. A range of dates is fundamentally more actionable than a single false promise.

- Reliability > Intelligence. In multi-agent systems, the orchestration (throttle rates, execution order) is more important than the LLM's raw reasoning.

- The "Context Switch" is the hidden killer. Bringing project data into the IDE via MCP did more for developer velocity than any "AI assistant" chatbot.

What's next for Commando AI

- Outcome-Aware RL: Closing the loop from "recommendation accepted" to "did the project actually speed up?"

- Per-Developer Velocity: Tighter prediction bands based on personalized historical throughput.

- Compound Memory: Agents that learn developer nuances—like underestimating backend work by 20%—and adjust their planning in real-time.

Built With

- Language: TypeScript

- Framework: Next.js 14 (App Router)

- Database: PostgreSQL, Prisma

- AI: GPT-4o-mini (Agents), Gemini 2.5 Flash (MCP / Workflows)

- Orchestration: Inngest

- Data Flow: React Flow (Visual Workflows)

- Deployment: Vercel

The destination is a platform where routine friction vanishes, risks are detected before they become failures, and teams finally have the Certainty required to ship their best work.

Built With

- huggingface

- langchain

- langraph

- markov-decision-proccess

- monte-carlo

- nextjs

- openai

- postgresql

- thompson-sampling

- trl
