-

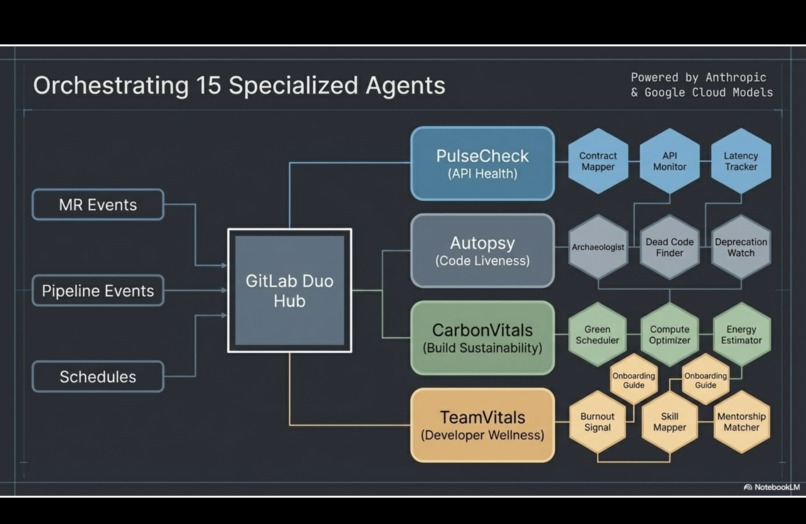

CodeVitals - Proactive, Autonomous Health for Your Code.

-

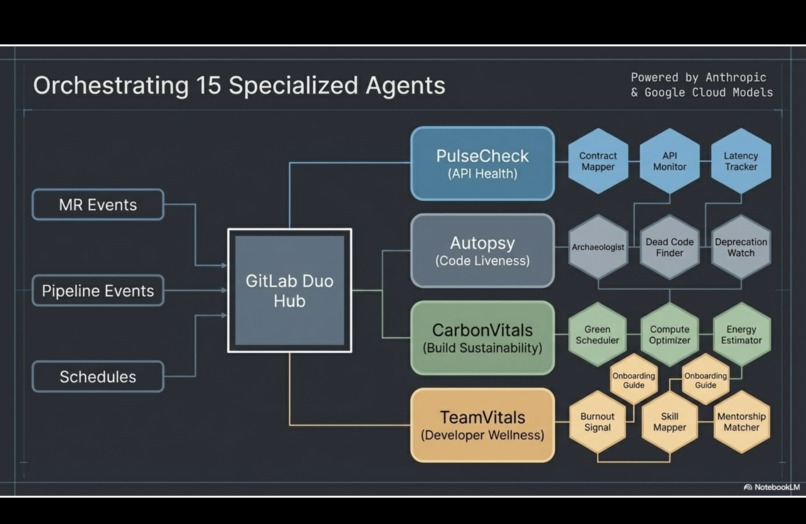

15 specialised agents orchestrated for CodeVitals

-

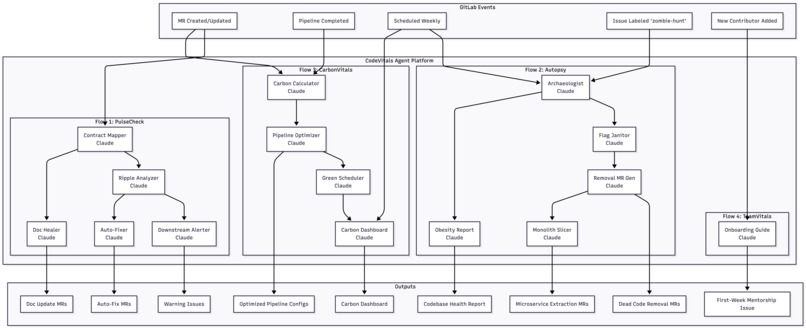

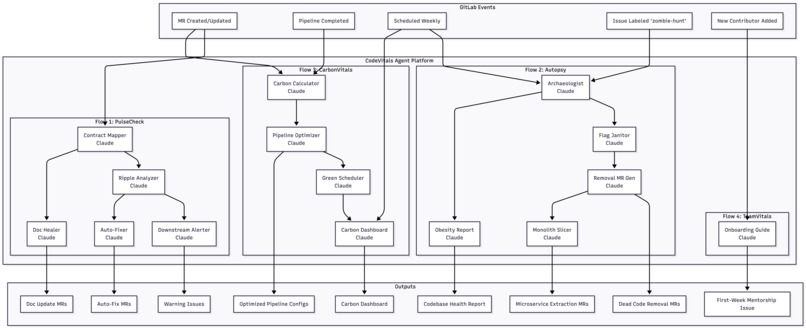

CodeVitals Architecture

-

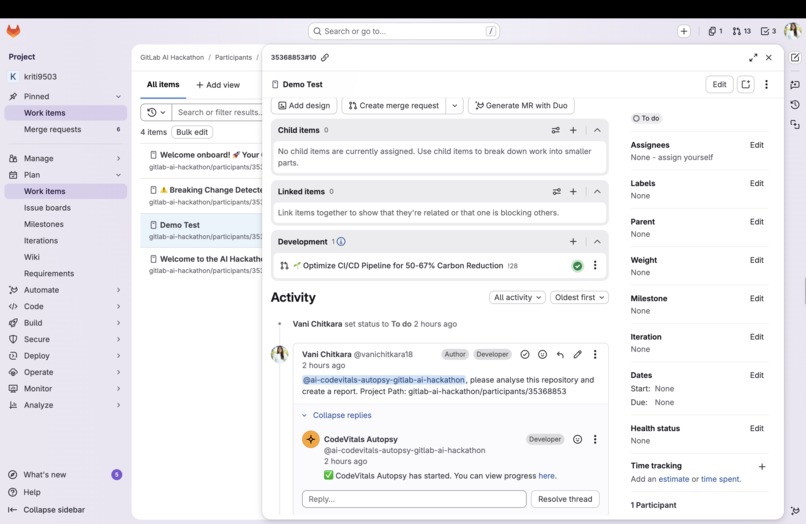

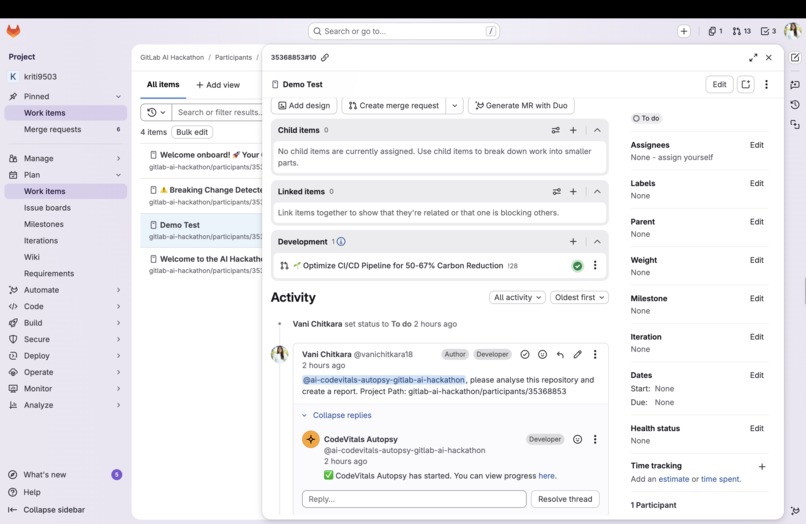

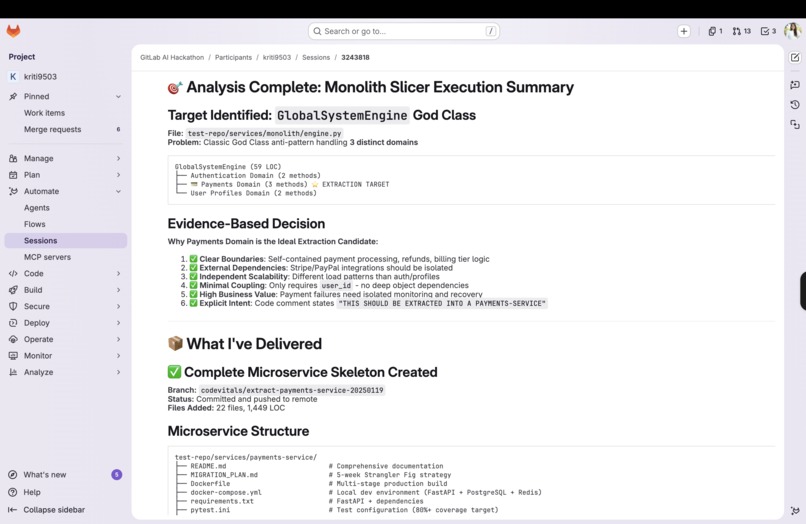

CodeVitals Autopsy triggered

-

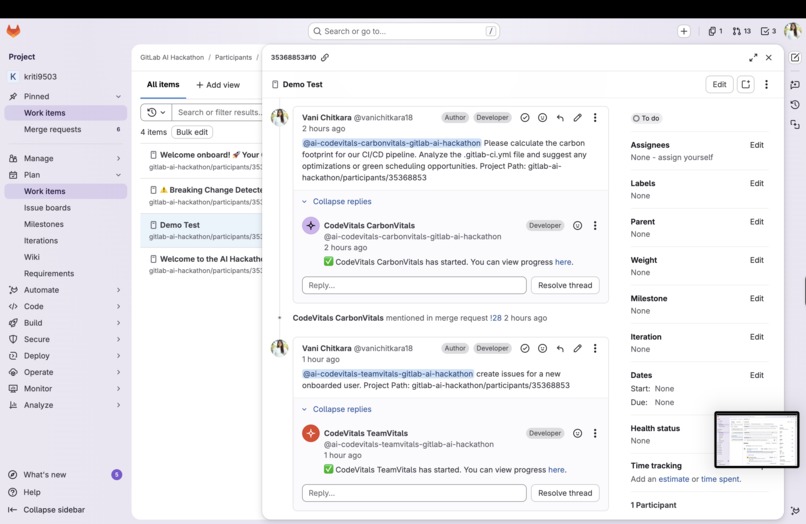

CodeVitals CarbonVitals and TeamVitals triggered

-

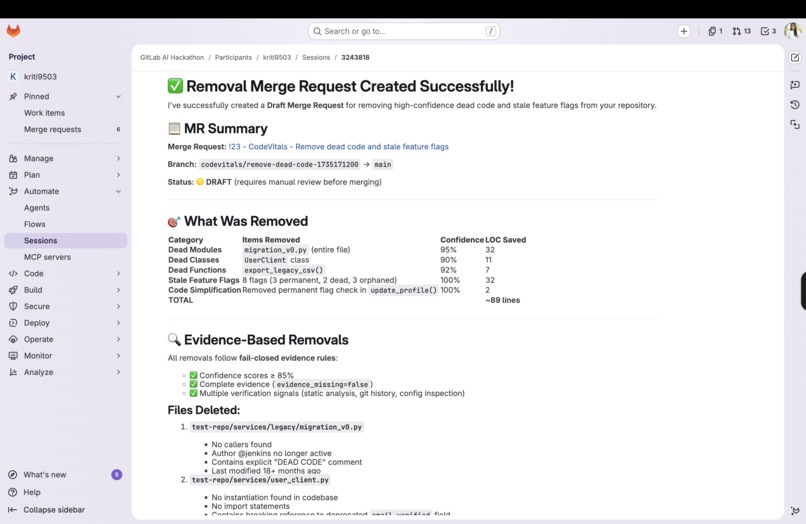

CodeVitals Autopsy in action - Agents raised MR to remove dead code

-

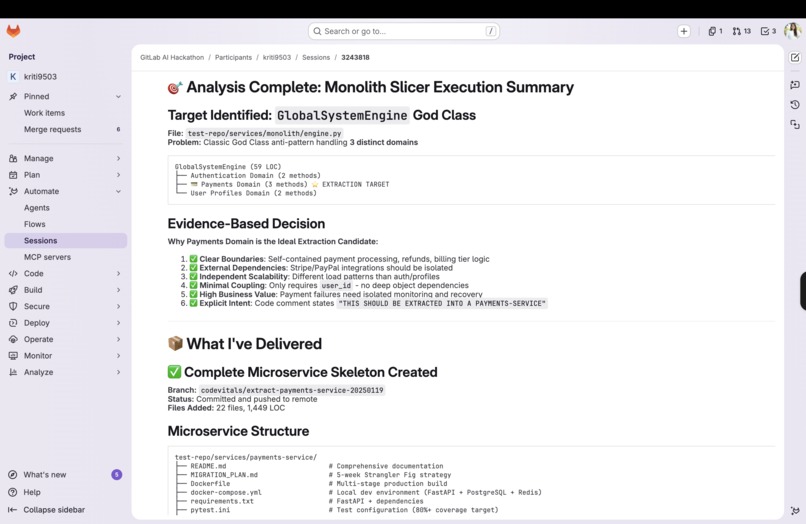

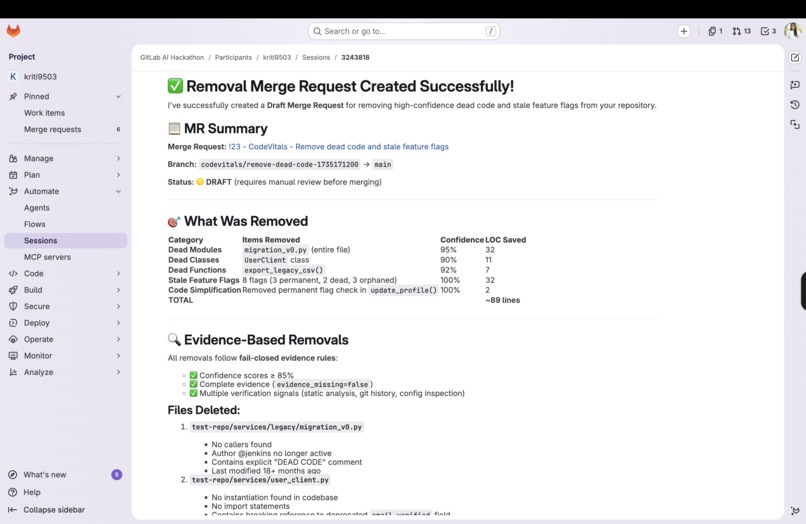

CodeVitals Autopsy in action - Monolith slicer raised MR to break down large modules

-

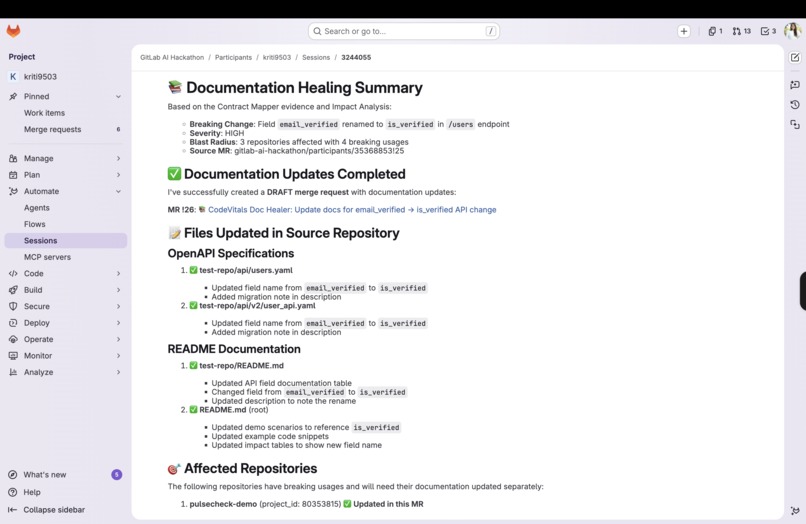

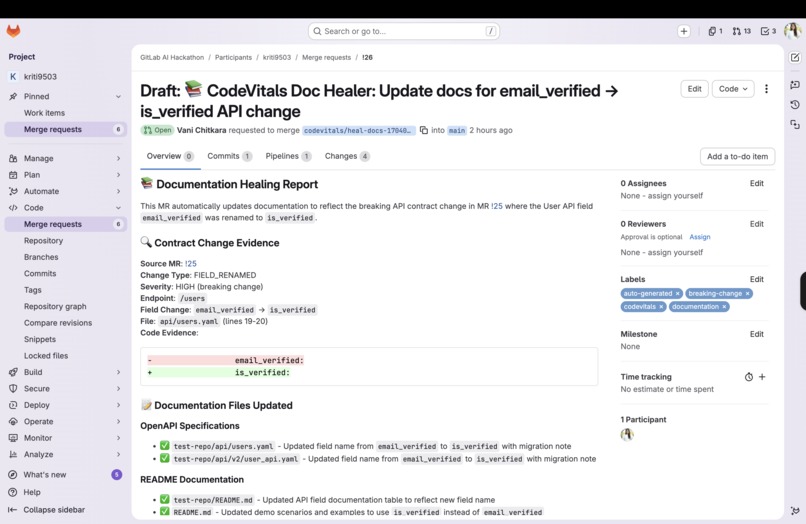

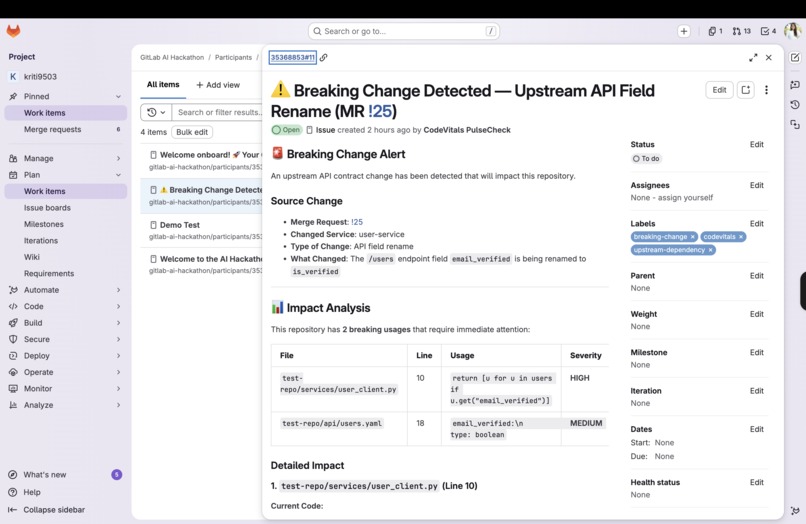

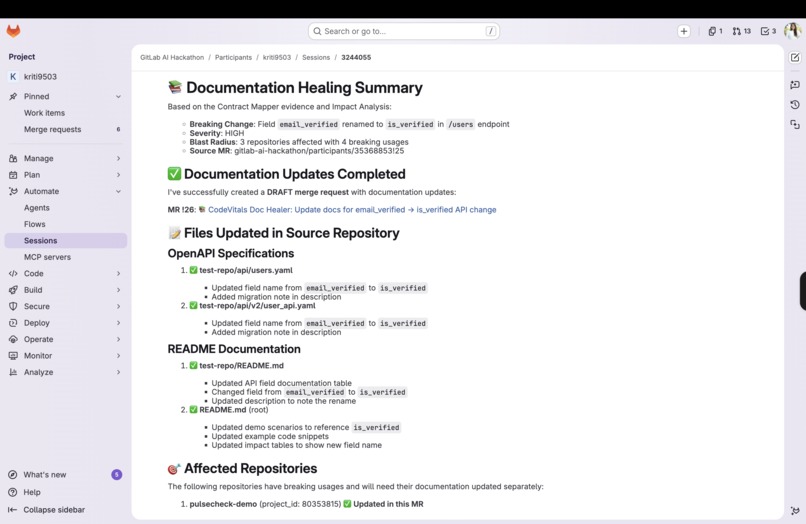

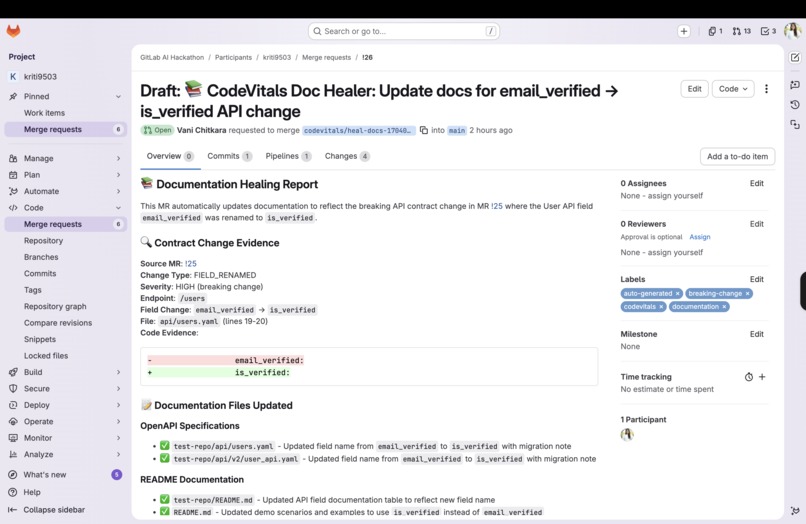

CodeVitals PulseCheck in action - Document healer captures document updates upon API contract changes

-

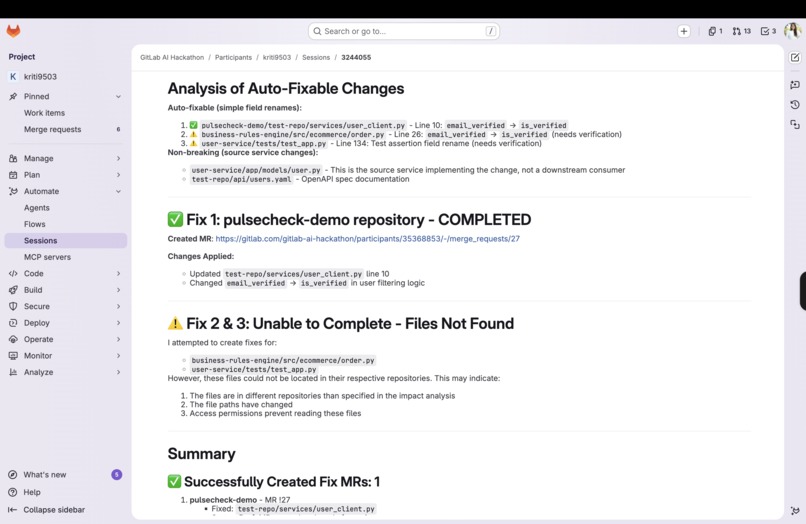

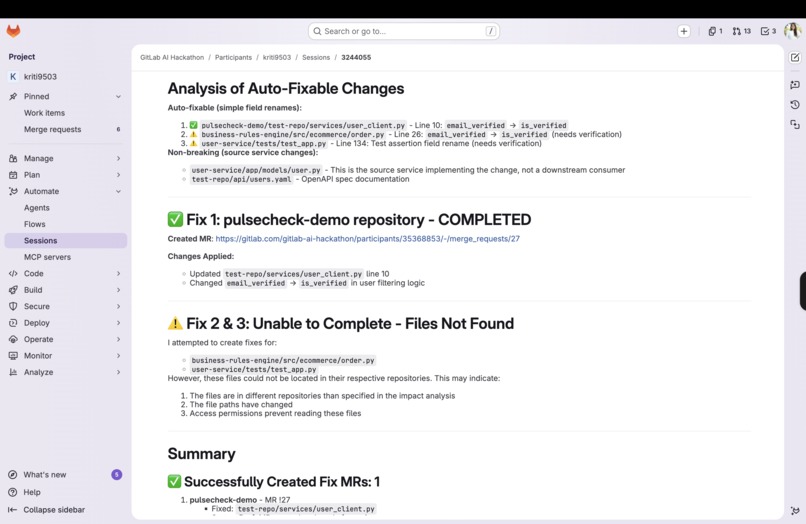

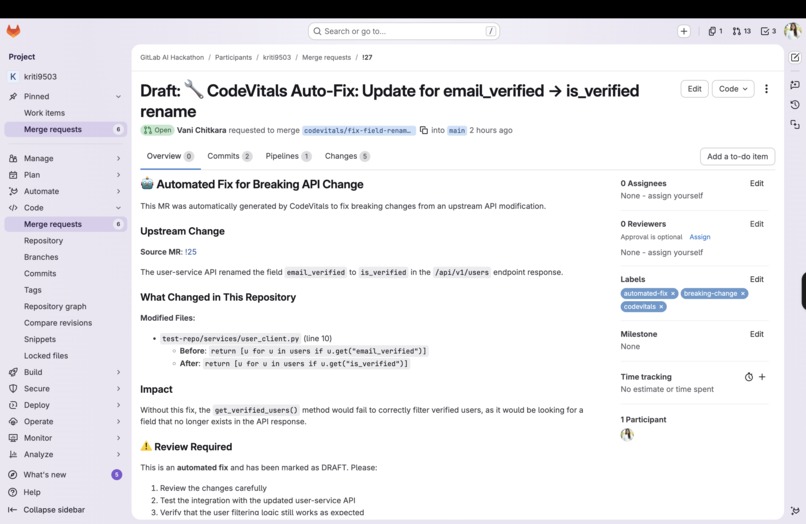

CodeVitals PulseCheck in action - Agents raised MR to fix the broken API contract in multiple repositories

-

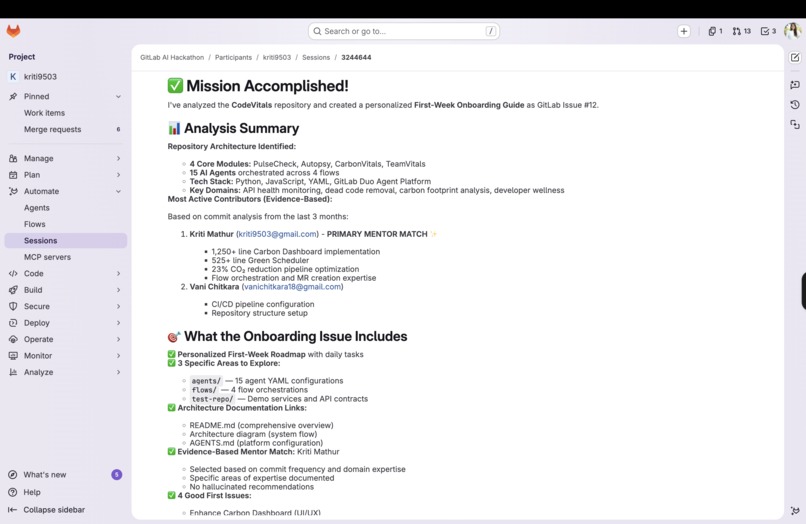

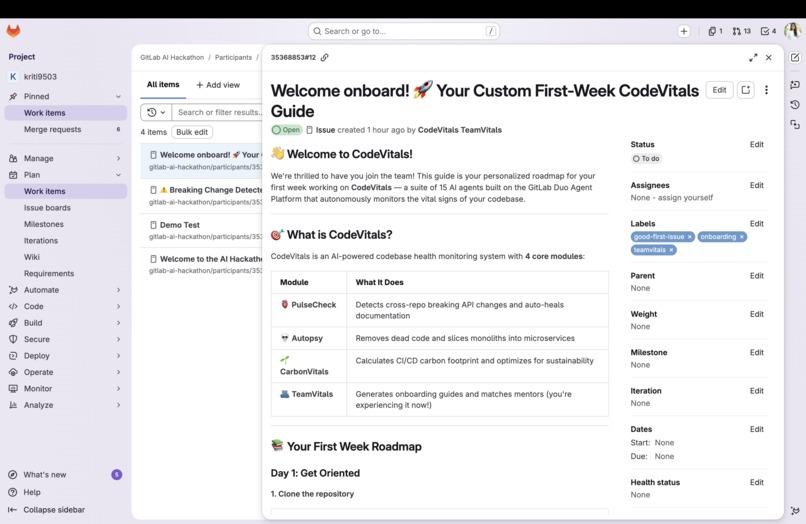

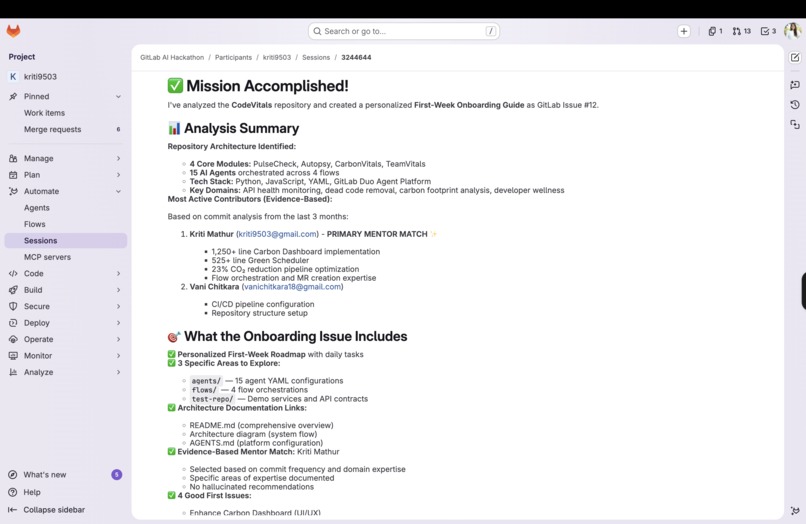

CodeVitals TeamVitals in action - Agents created an onboarding plan for the new user with good first issues and mentors assigned

-

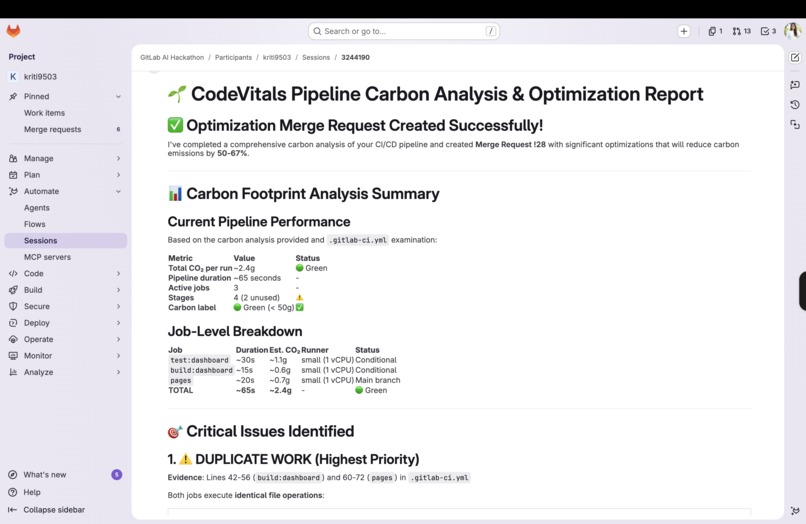

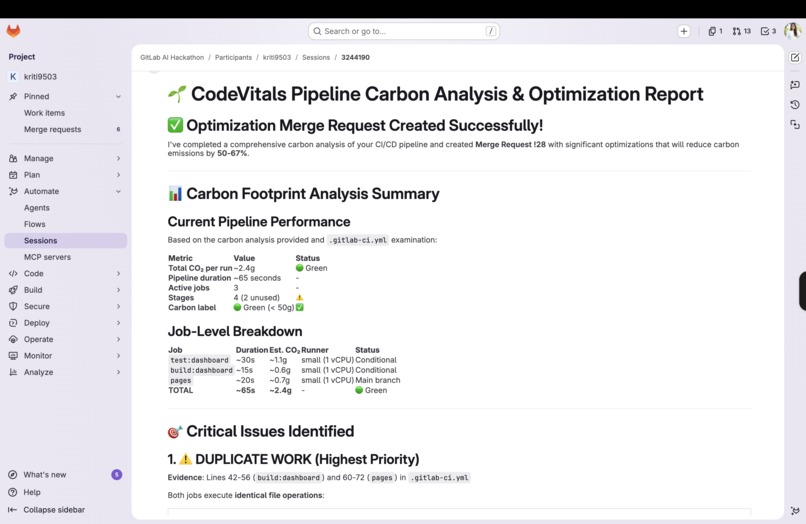

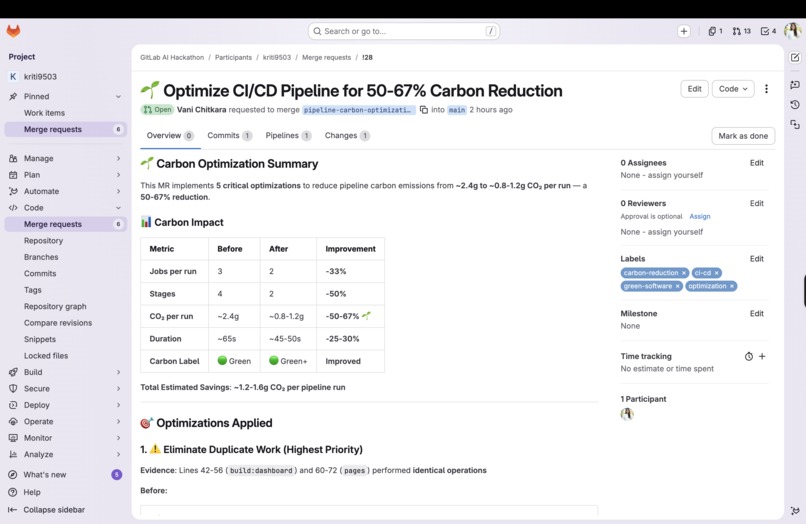

CodeVitals CarbonVitals in action - Agents ran carbon analysis on the pipelines

-

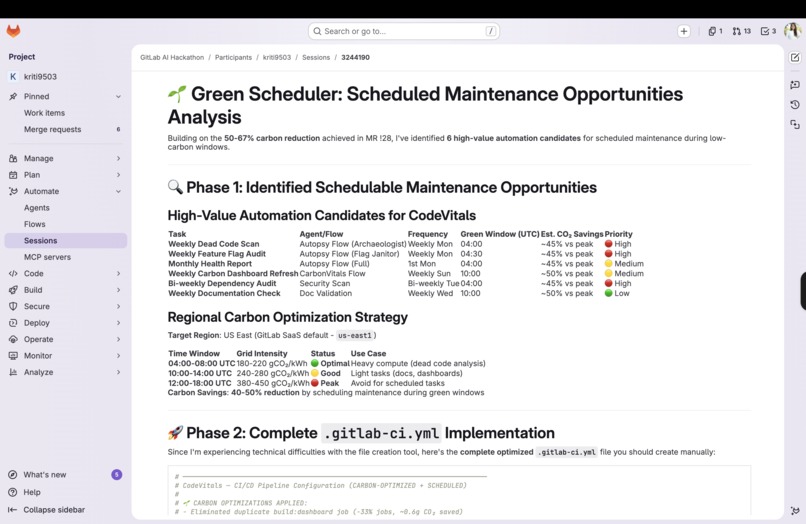

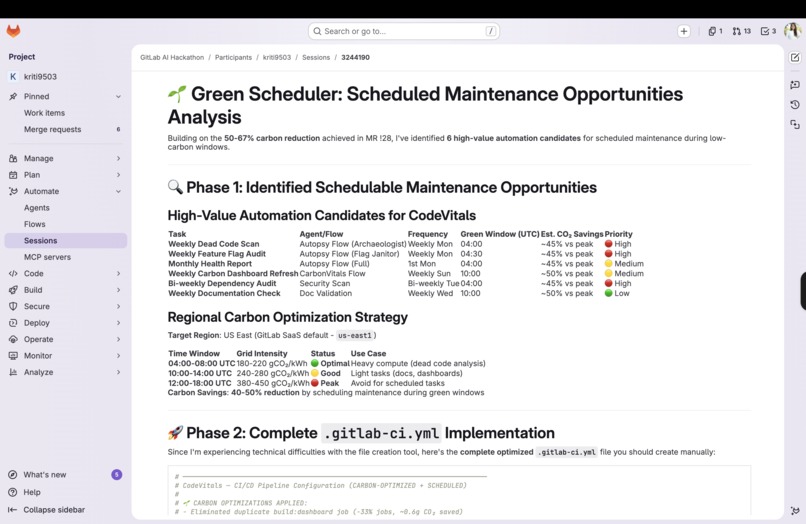

CodeVitals CarbonVitals in action - Scheduled maintenance opportunities assessed

-

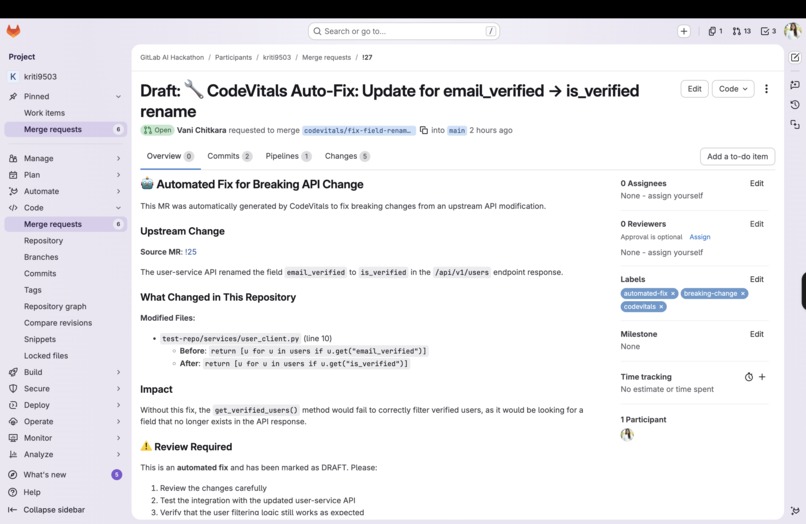

Merge Request raised by PulseCheck flow to fix the docs for the API field change

-

Merge Request raised by PulseCheck to fix the broken API contract at every API usage across services in multiple repositories

-

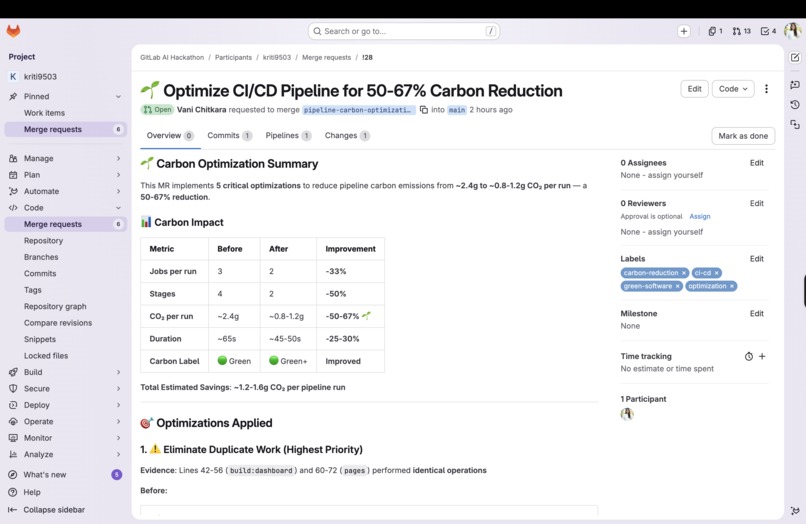

Merge Request raised by CarbonVitals to reduce carbon emissions from pipeline runs

-

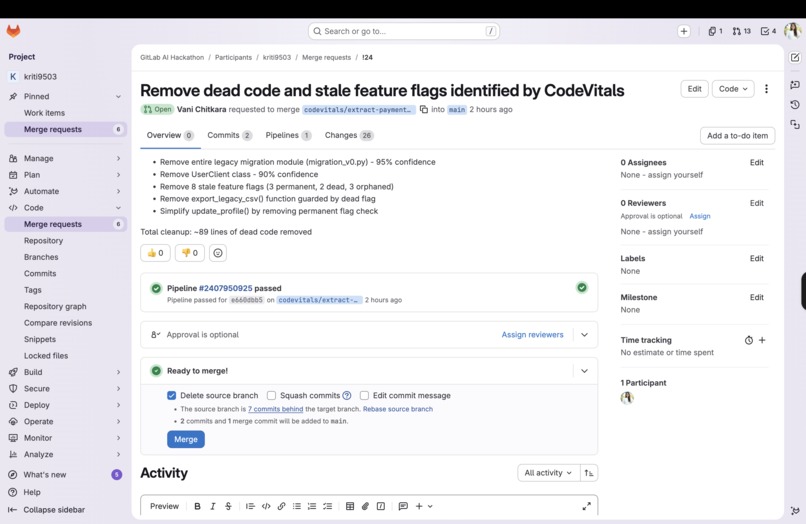

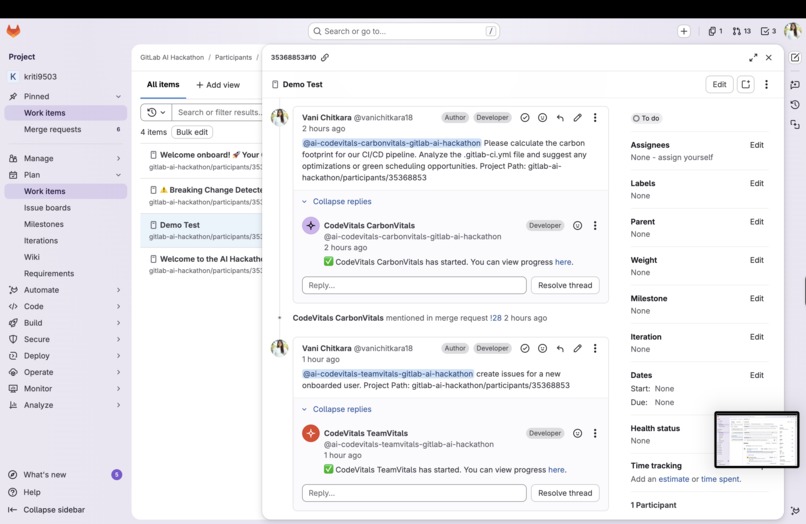

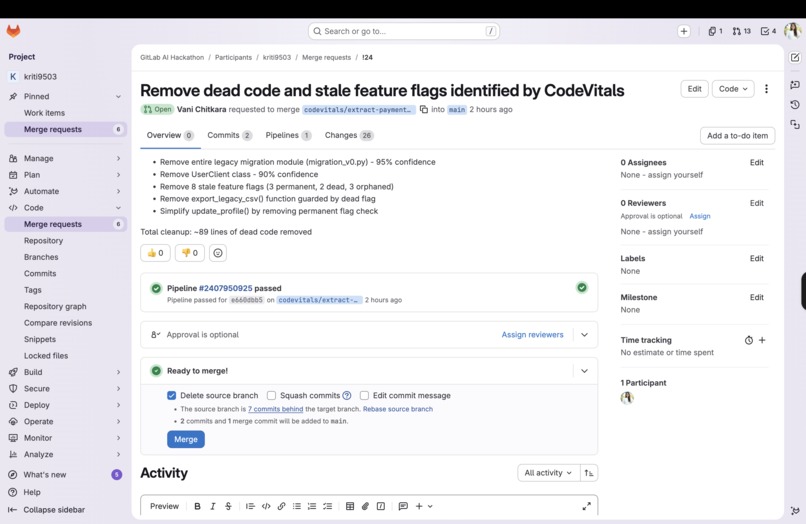

Merge Request raised by Autopsy to remove dead code and stale feature flags

-

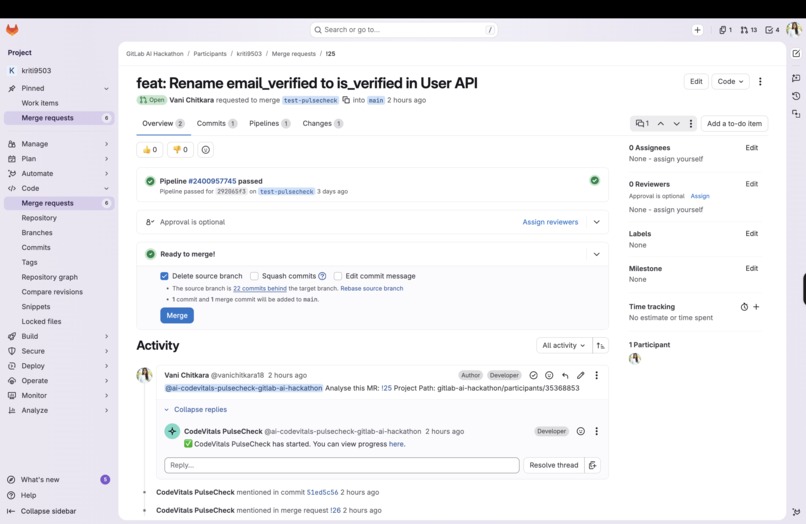

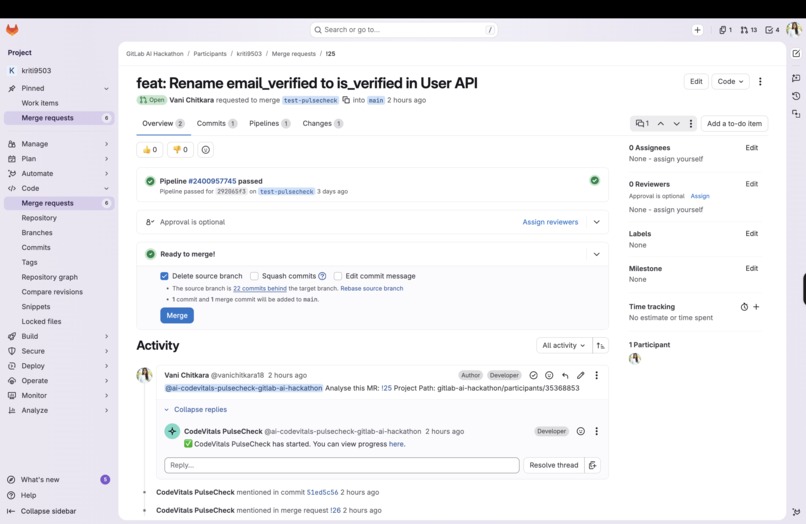

Merge Request raised by PulseCheck to fix the broken API contract across services

-

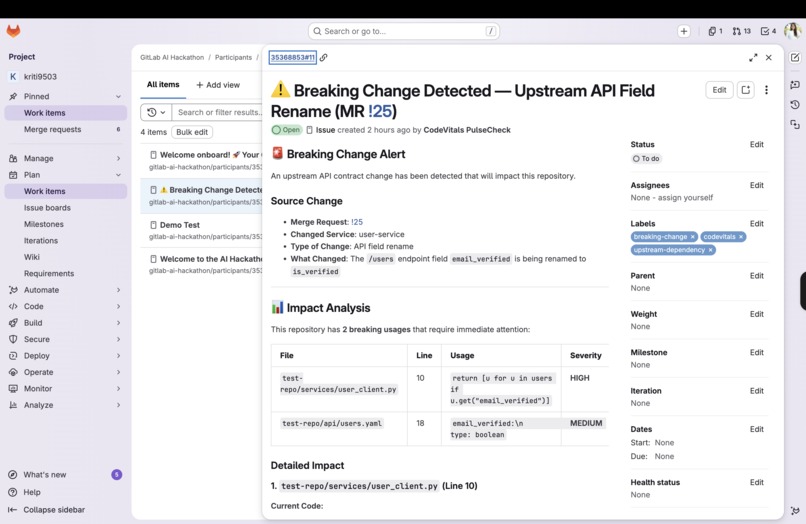

Issue raised by Autopsy upon identifying the breaking API changes

-

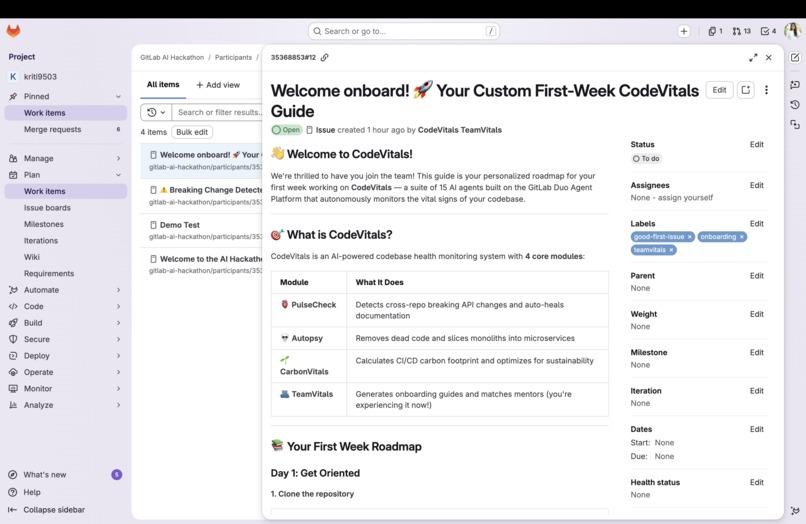

Issue raised by TeamVitals to onboard new members by assigning day-wise tasks, good first issues and mentors

Inspiration

Every day, development teams silently suffer from three compounding problems that no existing tool fully solves. AI is getting better at writing code, but nobody is watching over the health of the code that already exists. Breaking API changes ripple across microservices and surface as production incidents weeks later. Dead code accumulates until nobody dares touch it. And CI/CD pipelines quietly burn carbon with zero visibility or accountability. We were inspired by the idea of treating a codebase like a living organism, one with vital signs that can be monitored, diagnosed, and healed autonomously. That's how CodeVitals was born.

What It Does

CodeVitals is a suite of 15 AI agents organized into 4 autonomous flows, built on the GitLab Duo Agent Platform, that monitors four critical "vital signs" of your codebase:

- 🫀 PulseCheck — Detects cross-repository breaking API changes the moment an MR is opened. It finds all downstream consumers across your entire GitLab group, creates warning issues in affected repos, generates auto-fix MRs for simple changes, and heals stale documentation automatically.

- 💀 Autopsy — Hunts dead code, stale feature flags, and bloated monoliths. It builds call graphs, assigns confidence scores to dead code candidates, and generates DRAFT removal MRs safely, with the original author tagged for review. It also extracts God Classes into microservice skeletons.

- 🌱 CarbonVitals — Calculates the CO2 footprint of every CI/CD pipeline run, identifies caching and parallelization optimizations, shifts non-urgent pipelines to low-carbon time windows, and publishes a live carbon dashboard to GitLab Pages.

- 🫂 TeamVitals — When a new developer joins, it automatically generates a personalized first-week onboarding guide tailored to their background and auto-matches them with a mentor from the most active component authors.

All of this runs with zero manual toil, triggered by MR events, pipeline completions, scheduled scans, issue labels, and contributor additions.
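To make the PulseCheck idea concrete, here is a minimal Python sketch of breaking-change detection between two versions of an API response schema. It is an illustration under stated assumptions, not the CodeVitals implementation: the flat `field -> type` schema format, the `BreakingChange` type, and the function name `find_breaking_changes` are all hypothetical. It treats removed fields and changed types as breaking, and newly added fields as safe.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BreakingChange:
    field: str
    kind: str    # "removed" or "type_changed"
    detail: str


def find_breaking_changes(old_schema: dict[str, str],
                          new_schema: dict[str, str]) -> list[BreakingChange]:
    """Compare two flat field->type schemas (hypothetical format).

    A field that disappears or changes type is reported as breaking.
    Fields that only exist in the new schema are ignored.
    """
    changes = []
    for field, old_type in sorted(old_schema.items()):
        if field not in new_schema:
            changes.append(
                BreakingChange(field, "removed", f"{old_type} field no longer present"))
        elif new_schema[field] != old_type:
            changes.append(
                BreakingChange(field, "type_changed", f"{old_type} -> {new_schema[field]}"))
    return changes


# Hypothetical example: `user_name` was renamed and `id` changed type.
old = {"id": "integer", "user_name": "string", "email": "string"}
new = {"id": "string", "username": "string", "email": "string"}

changes = find_breaking_changes(old, new)
for change in changes:
    print(f"{change.kind}: {change.field} ({change.detail})")
```

A rename shows up here as a removal, which is the conservative reading: any downstream consumer still reading `user_name` would break.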

How We Built It

CodeVitals was built entirely on the GitLab Duo Agent Platform using Custom Agents and Custom Flows defined as YAML files. Each of the 15 agents is powered by Claude (via GitLab's AI integration with Google Cloud and Anthropic), and the 4 flows orchestrate agents in sequence based on GitLab events.

Key technical components:

- Agent definitions in `agents/` — one YAML per agent with prompts, tools, and behavior

- Flow orchestrations in `flows/` — 4 flows wiring agents together with conditional branching

- External API integration — Electricity Maps API via MCP for real-time carbon grid intensity data

- Carbon Dashboard — Static HTML/JS with Chart.js, deployed to GitLab Pages

- Demo scripts — Bash scripts to seed demo repos with realistic breaking changes and dead code for live demonstrations

The architecture is fully event-driven: MR creation triggers PulseCheck and CarbonVitals; scheduled jobs trigger Autopsy weekly; issue labels trigger on-demand zombie hunts; new contributors trigger TeamVitals.
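The event-to-flow wiring described above can be pictured as a simple routing table. The Python sketch below is only a mental model, since the real wiring lives in the flow YAML files. The event names are hypothetical, and mapping the issue-label trigger to Autopsy is an assumption based on Autopsy being the dead-code module.

```python
# Hypothetical event names; the flow names come from the write-up.
FLOW_TRIGGERS: dict[str, list[str]] = {
    "merge_request_opened": ["PulseCheck", "CarbonVitals"],
    "weekly_schedule": ["Autopsy"],
    "issue_labeled": ["Autopsy"],      # assumption: on-demand hunts run Autopsy
    "contributor_added": ["TeamVitals"],
}


def flows_for_event(event_type: str) -> list[str]:
    """Return the flows to start for an event, or an empty list if none apply."""
    return list(FLOW_TRIGGERS.get(event_type, []))


print(flows_for_event("merge_request_opened"))
print(flows_for_event("tag_pushed"))
```

Returning an empty list for unknown events keeps the dispatcher safe by default: an event nobody registered for starts nothing.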

Challenges We Ran Into

- Cross-repo awareness — Having an agent reason meaningfully across an entire GitLab group (not just a single repo) required careful prompt engineering to ensure the Ripple Analyzer could search, parse, and contextualize findings from multiple codebases simultaneously.

- Confidence scoring for dead code — Determining whether code is truly dead (vs. dynamically called, reflection-based, or conditionally used) is genuinely hard. Building a multi-signal scoring system with appropriate weights to minimize false positives, while still being aggressive enough to be useful, required significant iteration.

- Carbon estimation without hardware telemetry — Real carbon data requires runner hardware specs and live grid intensity. We had to design a practical estimation model that produces meaningful results using only pipeline metadata and the Electricity Maps API.

- Safety guardrails — Ensuring that agents never auto-merge removal MRs, always tag original authors, and always create DRAFT MRs required deliberate design. Autonomous agents acting on production codebases demand conservative defaults.

- Flow orchestration complexity — Chaining 5 agents sequentially (for example, PulseCheck's Contract Mapper to Ripple Analyzer to Alerter to Auto-Fixer to Doc Healer) while passing context between steps cleanly was one of the most technically demanding parts of working with the Duo Agent Platform.
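As a rough illustration of the carbon estimation challenge, the Python sketch below estimates emissions from pipeline duration, an assumed runner power draw, and grid carbon intensity, then picks the lowest-intensity window from a forecast. The formula is the standard energy-times-intensity calculation, not necessarily the model CodeVitals uses. The function names, the 100 W power figure, and the forecast values are all hypothetical.

```python
def estimate_pipeline_co2_grams(duration_seconds: float,
                                runner_power_watts: float,
                                grid_intensity_g_per_kwh: float) -> float:
    """Estimate grams of CO2 for one pipeline run.

    energy (kWh) = hours * kilowatts; emissions = energy * grid intensity.
    The power draw is an assumed constant, e.g. derived from a TDP estimate.
    """
    energy_kwh = (duration_seconds / 3600) * (runner_power_watts / 1000)
    return energy_kwh * grid_intensity_g_per_kwh


def greenest_window(forecast: dict[str, float]) -> str:
    """Return the time slot with the lowest forecast grid intensity."""
    return min(forecast, key=forecast.get)


# Hypothetical inputs: a 10-minute job on a 100 W runner, grid at 400 gCO2/kWh.
co2 = estimate_pipeline_co2_grams(600, 100, 400)
print(f"{co2:.2f} g CO2")  # prints "6.67 g CO2"

# Hypothetical intensity forecast in gCO2/kWh, keyed by start time.
forecast = {"02:00": 180.0, "10:00": 420.0, "14:00": 310.0}
best = greenest_window(forecast)
print(f"Shift non-urgent pipelines to {best}")
```

In practice the grid intensity would come from the Electricity Maps API rather than a hard-coded value, and the power figure is the weakest part of the estimate.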

Accomplishments That We're Proud Of

- Designed and implemented 15 fully-specified AI agents across 4 coherent problem domains, each with a clear responsibility, input, and output.

- Built what we believe is the first CI/CD carbon footprint tracker natively integrated into GitLab, complete with a live dashboard, green scoring, and pipeline shift recommendations.

- Created a cross-repository breaking change detection system that can identify downstream impact across an entire GitLab group in about 30 seconds, something that previously required manual auditing.

- Designed a dead code confidence scoring system with 6 independent signals that prioritizes safety and transparency over aggression.

- Shipped a fully working demo environment with setup scripts that let judges and reviewers experience all four modules against realistic codebases, not just a slide deck.
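The dead code confidence score mentioned above can be sketched as a weighted sum of boolean signals. The write-up does not name the six signals or their weights, so everything in this Python example is a hypothetical stand-in: the signal names, the integer weights (which sum to 100), and the threshold of 85 are assumptions chosen only to show the shape of such a system.

```python
# Hypothetical signals and weights (percent points, summing to 100).
SIGNAL_WEIGHTS: dict[str, int] = {
    "no_static_callers": 30,
    "no_dynamic_lookup_by_name": 20,
    "no_test_references": 15,
    "not_modified_recently": 15,
    "not_exported_publicly": 10,
    "no_config_references": 10,
}


def dead_code_confidence(signals: dict[str, bool]) -> int:
    """Sum the weights of every signal that fired; missing signals count as False."""
    return sum(weight for name, weight in SIGNAL_WEIGHTS.items()
               if signals.get(name, False))


def should_open_draft_mr(signals: dict[str, bool], threshold: int = 85) -> bool:
    """Only propose removal (as a DRAFT MR) when confidence clears the threshold."""
    return dead_code_confidence(signals) >= threshold


# A candidate with every signal firing except the dynamic-lookup check.
candidate = {name: True for name in SIGNAL_WEIGHTS}
candidate["no_dynamic_lookup_by_name"] = False

score = dead_code_confidence(candidate)
print(score, should_open_draft_mr(candidate))  # prints "80 False"
```

Treating missing signals as `False` leans toward safety: code that could not be fully analyzed never scores high enough for a removal MR.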

What We Learned

- The GitLab Duo Agent Platform is genuinely powerful for event-driven, multi-step AI workflows. The declarative YAML approach for defining agents and flows is well-suited for teams who want to ship agents without building custom infrastructure.

- Prompt engineering at the agent level is very different from prompt engineering for one-off queries. Agents need to be robust to unexpected inputs, gracefully handle partial results, and produce outputs that downstream agents can reliably parse.

- Autonomous agents acting on codebases need conservative defaults. The instinct is to make agents as capable as possible, but the right instinct is to make them as safe as possible first. DRAFT MRs, human-in-the-loop reviews, and confidence thresholds matter enormously for real-world trust.

- Carbon visibility changes behavior. Simply measuring and displaying CO2 per pipeline run, even as an estimate, creates accountability that didn't exist before. You don't improve what you don't measure.

- Scoping 4 ambitious modules into a hackathon timeline required ruthless prioritization. The architecture and agent definitions are complete; production-hardening each agent would be the natural next step.
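The lesson about producing outputs that downstream agents can reliably parse can be illustrated with a small validation step between agents. This Python sketch assumes, hypothetically, that each agent emits a JSON object with `agent`, `status`, and `findings` keys. The actual hand-off format on the Duo Agent Platform may differ.

```python
import json

# Hypothetical contract every agent output is expected to satisfy.
REQUIRED_KEYS = {"agent", "status", "findings"}


def parse_agent_output(raw: str) -> dict:
    """Parse and validate one agent's output before handing it to the next agent."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"agent output is not valid JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("agent output must be a JSON object")
    missing = REQUIRED_KEYS - payload.keys()
    if missing:
        raise ValueError(f"agent output is missing keys: {sorted(missing)}")
    return payload


# Hypothetical output from the Ripple Analyzer step.
raw_output = '{"agent": "ripple-analyzer", "status": "ok", "findings": []}'
parsed = parse_agent_output(raw_output)
print(parsed["agent"], parsed["status"])
```

Failing loudly at the boundary means a malformed or partial result stops the chain instead of being silently misread by the next agent.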

What's Next for CodeVitals

- GitLab Marketplace listing — Package CodeVitals as a one-click installable agent suite for any GitLab group, with a configuration wizard.

- Real runner telemetry for CarbonVitals — Integrate with GitLab Runner metadata to get actual CPU/GPU utilization rather than TDP estimates, making carbon calculations significantly more accurate.

- PulseCheck multi-language support — Extend API contract parsing beyond OpenAPI/protobuf/GraphQL to cover REST conventions inferred from framework patterns (FastAPI, Spring Boot, Rails).

- Autopsy ML model — Train a classifier on historical "safe to delete" vs "surprisingly still used" cases to improve confidence scoring over time.

- TeamVitals expansion — Add recurring check-in issues for new developers at week 2, week 4, and week 8, with sentiment analysis on their MR comments to flag early signs of disengagement.

- Carbon offsetting integrations — Connect CarbonVitals to carbon offset APIs so teams can automatically purchase offsets for pipelines that exceed a configurable threshold.

Built With

- anthropic

- claude

- duo

- gitlab
