Guard Agent: Pre-Initialization Consent Architecture for AI

Stop Giving AI Full Access | Guard Agent Demo

I Built an AI That Asks Permission First

A secure AI system using Auth0 Token Vault, Action Contracts, and Selective Scope Authorization

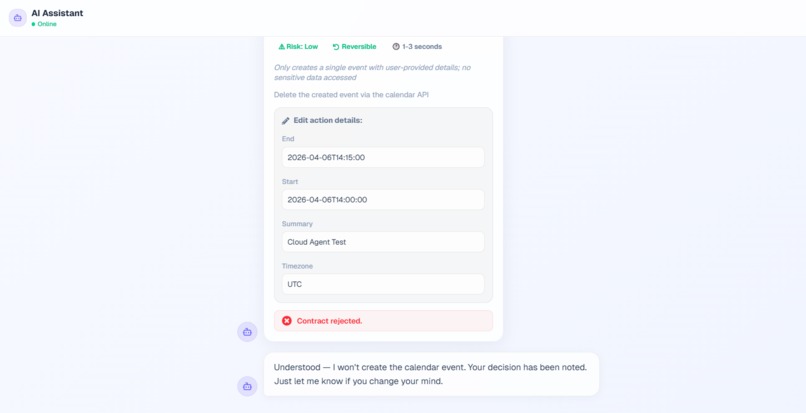

When a Permission Is Overused, Access Is Denied

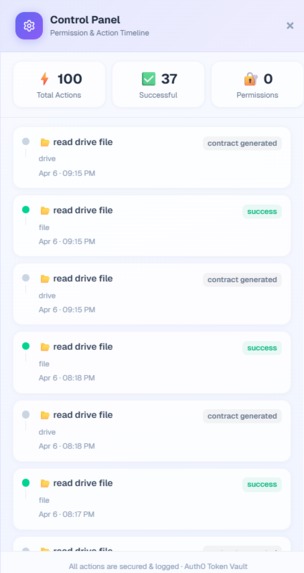

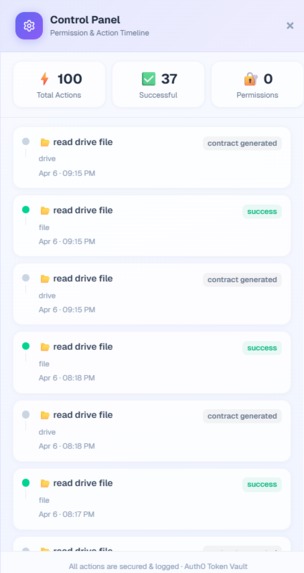

Logs of AI (Guard Agent)

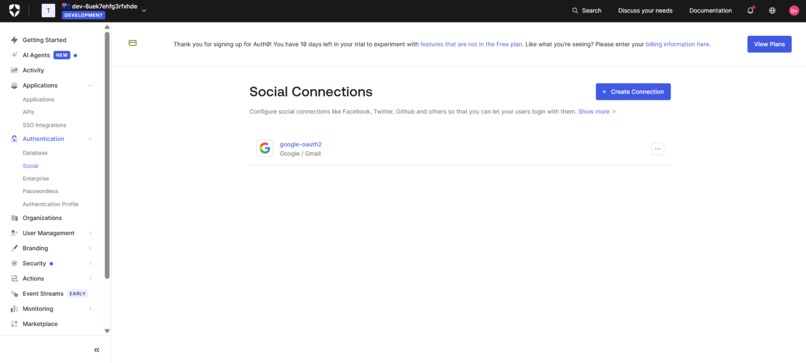

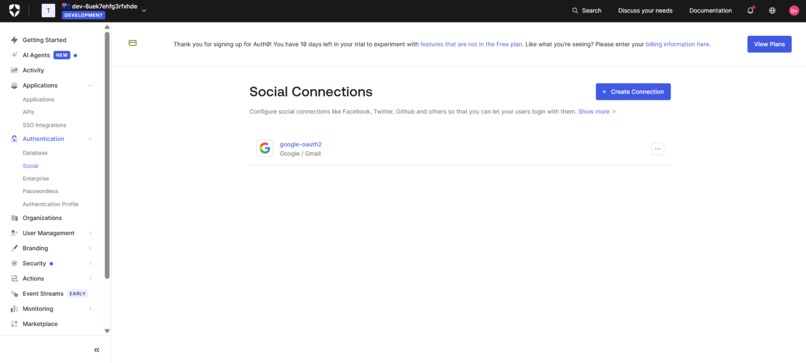

Auth0 Social Connect

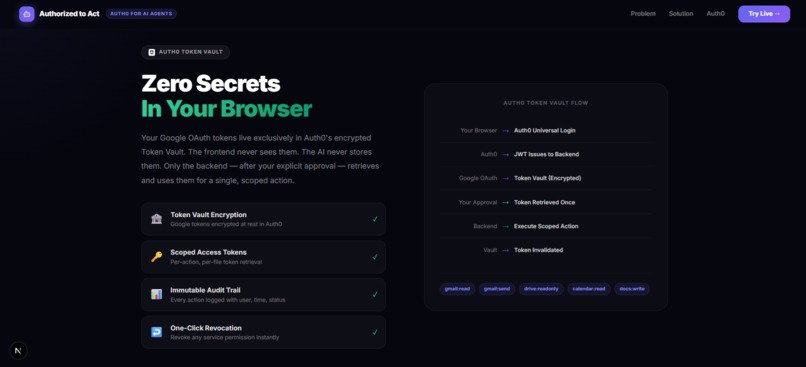

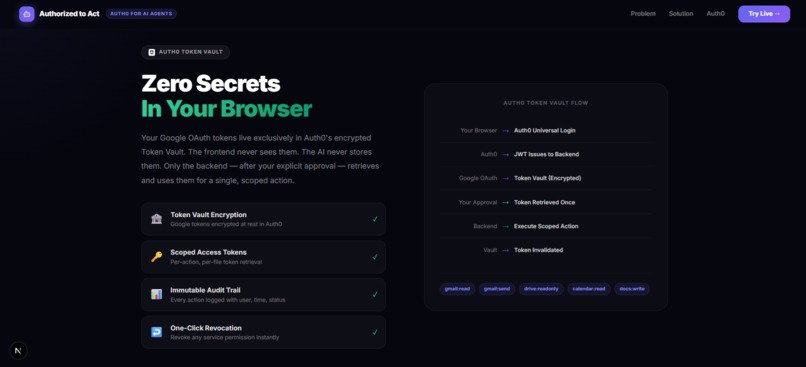

Zero Secrets In Your Browser

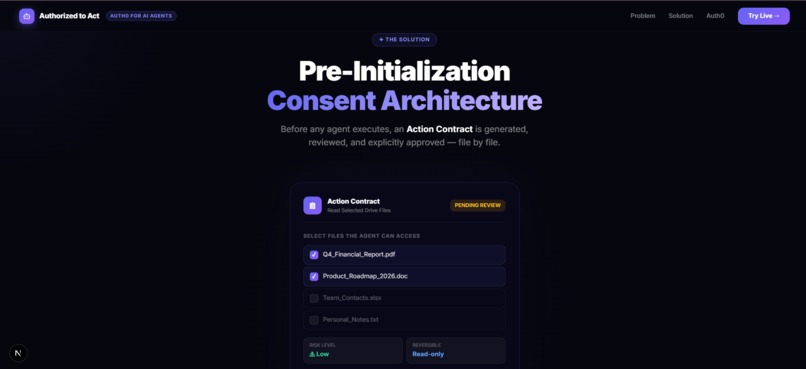

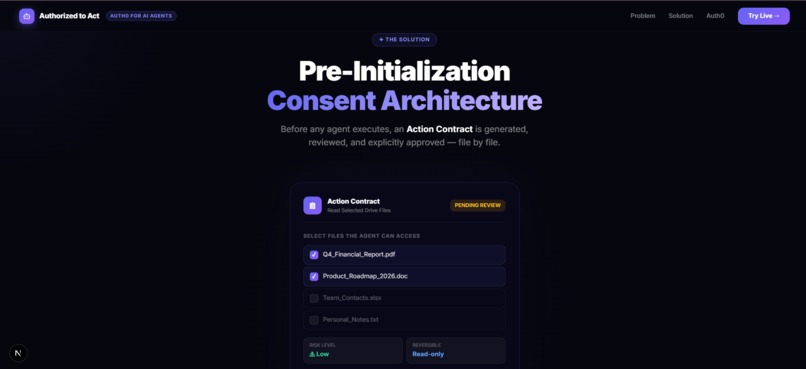

Pre-Initialization Consent Architecture

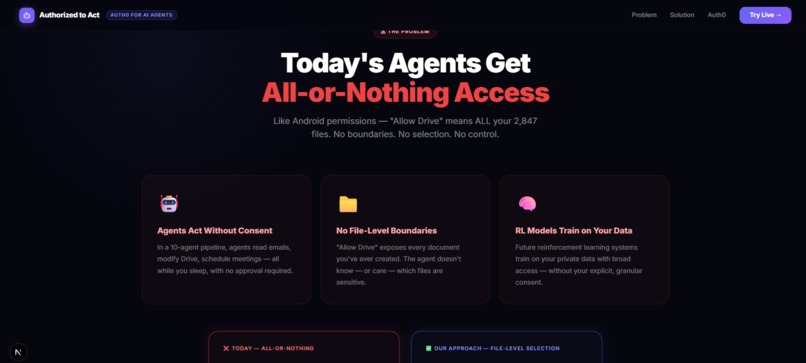

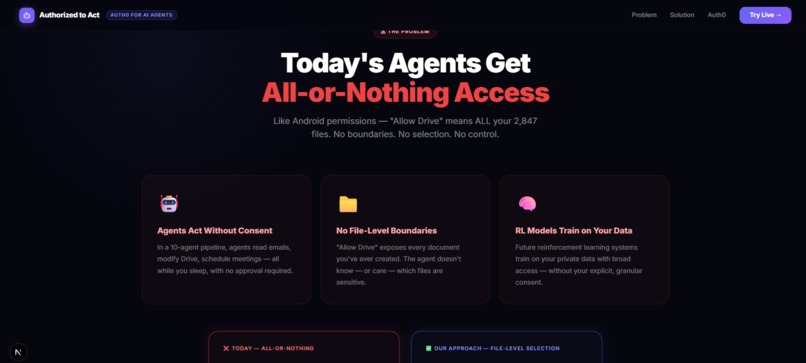

Today's Agents Get All-or-Nothing Access

Guard Agent: Who Controls Your AI Agents?

Guard Agent: The Trust Layer for AI

AI agents today are often granted far more access to your data than a task needs. Guard Agent changes that by enforcing explicit, per-action user consent before anything happens.

Inspiration

The current paradigm of "all-or-nothing" OAuth authorization is fundamentally unsafe for autonomous AI agents. When an agent requests access to a tool like Google Drive, it is often granted full control (read, write, and delete) across thousands of files. As AI systems become more capable, the potential damage from a compromised or hallucinating agent grows with them.

Guard Agent was born from a simple but critical idea: AI should not be trusted with unrestricted access. Instead, it should operate through a Trust Orchestrator, a secure intermediary that enforces granular, explicit human consent before any sensitive action is performed. Our goal is to let users benefit from AI automation without ever losing control of their data.

What it does

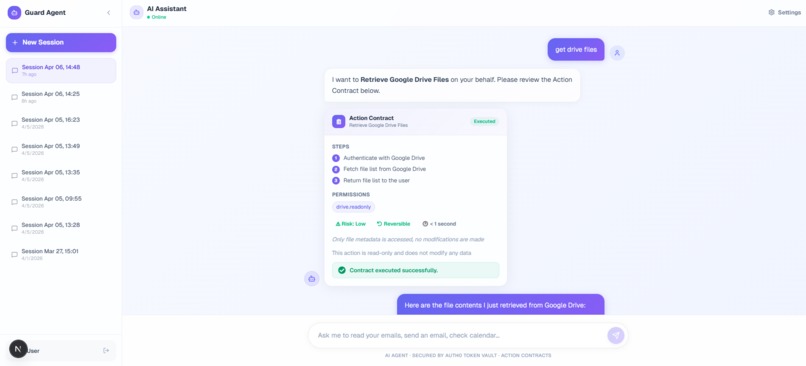

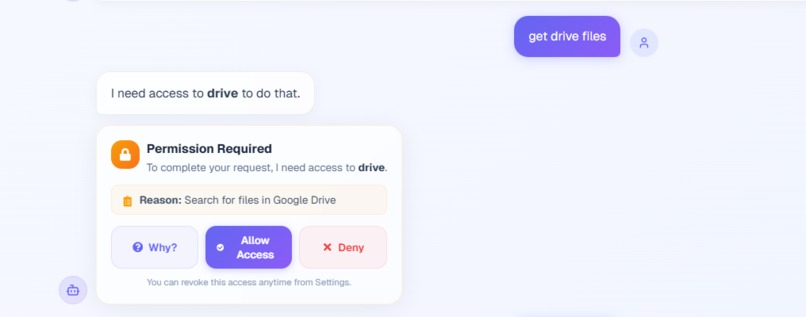

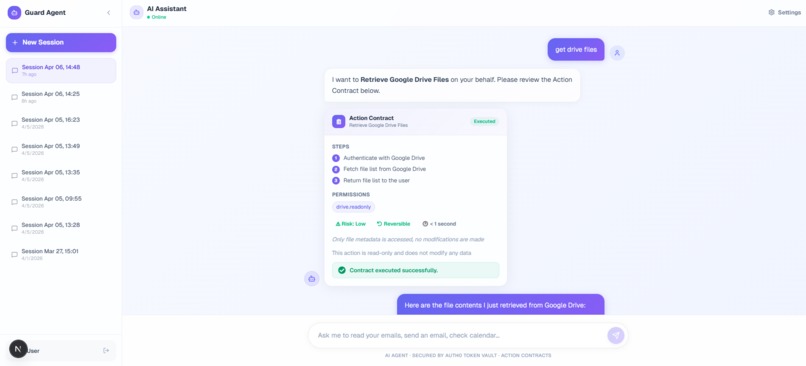

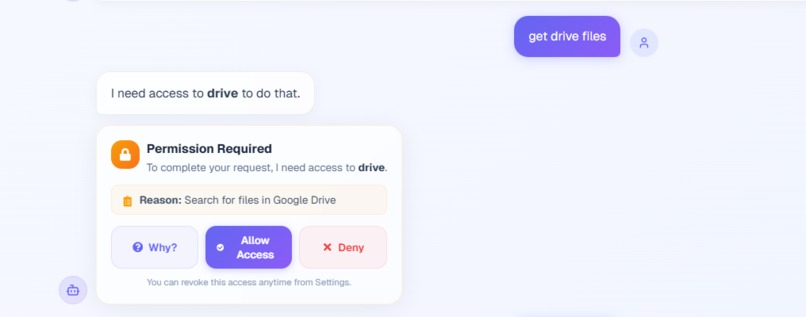

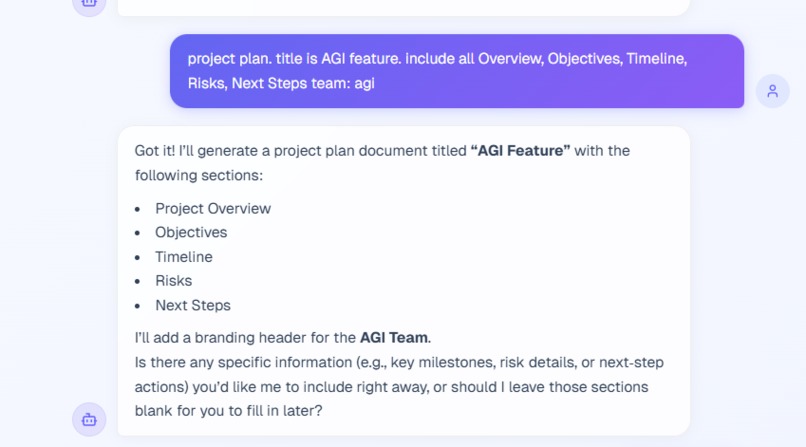

Guard Agent is a high-security AI orchestration platform that acts as a protective layer between users and third-party APIs. It analyzes user intent, plans actions safely, and introduces a mandatory approval step before execution.

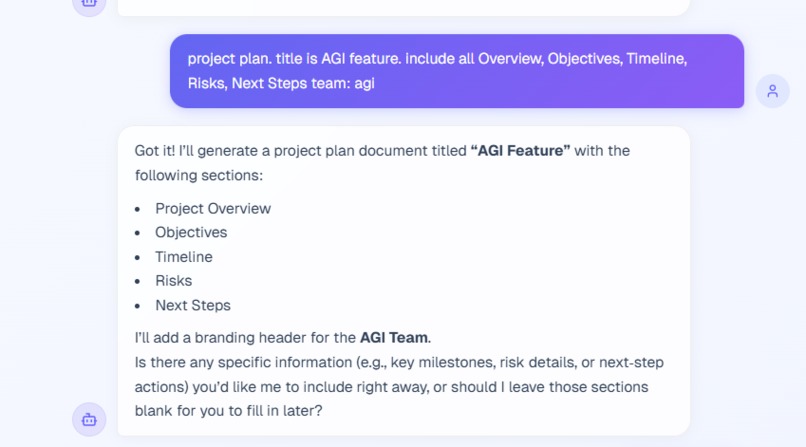

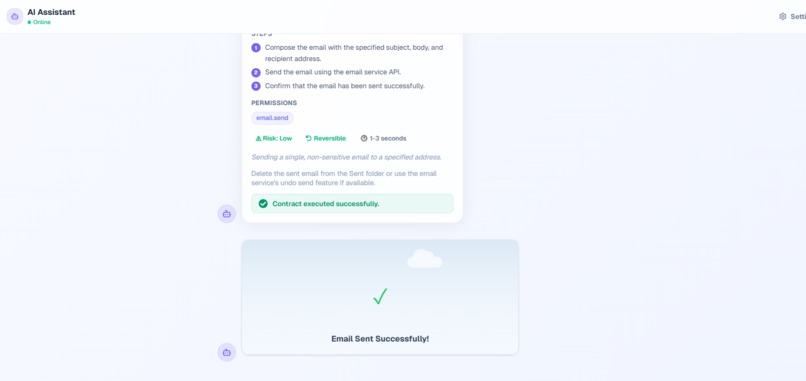

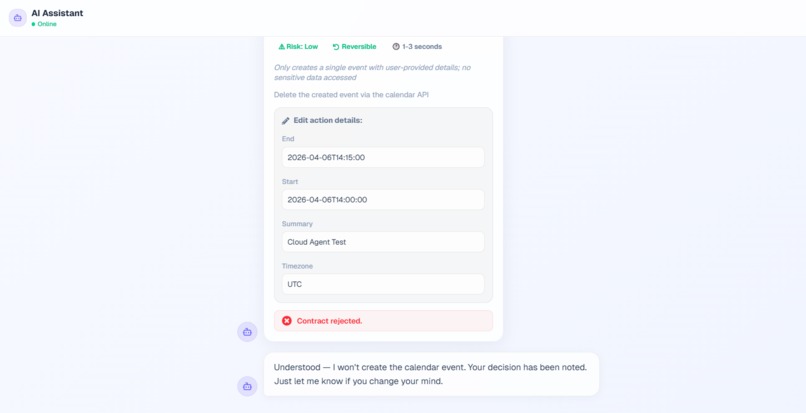

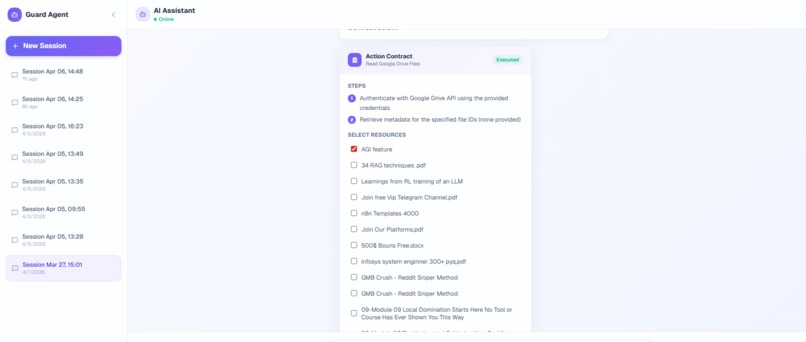

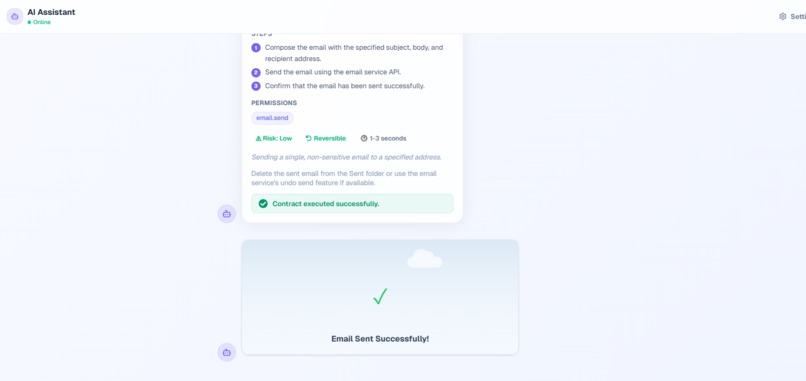

Instead of blindly executing tasks, the system generates an Action Contract: a clear, human-readable summary of what the AI intends to do, the associated risk level, and the exact data it needs. Users can then approve or reject the action.
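As a rough illustration, an Action Contract could be modeled as a small immutable record. This is a minimal sketch, not the project's actual schema: the class name `ActionContract`, the `RiskLevel` values, and the field names are all assumptions chosen to mirror the three parts described above (intent, risk level, required data).

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    # Hypothetical risk tiers; the real system may use different ones.
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class ActionContract:
    """Hypothetical shape of the contract shown to the user."""
    summary: str                # what the agent intends to do
    risk_level: RiskLevel       # associated risk level
    resources: tuple[str, ...]  # exact data the action needs

    def render(self) -> str:
        """Build the human-readable text presented for approval."""
        lines = [
            f"Action: {self.summary}",
            f"Risk: {self.risk_level.value}",
            "Needs access to:",
        ]
        lines += [f"  - {r}" for r in self.resources]
        return "\n".join(lines)


contract = ActionContract(
    summary="Summarize one document",
    risk_level=RiskLevel.LOW,
    resources=("file:q3-report",),  # illustrative resource identifier
)
print(contract.render())
```

Freezing the dataclass means the contract the user approved cannot be mutated afterwards, which keeps the approved text and the executed action in sync.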

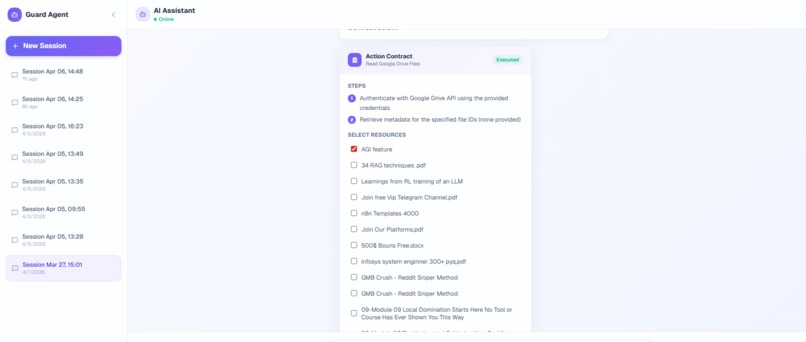

To solve the problem of over-permissioned access, we built Selective Scope Authorization (SSA), which allows users to grant access only to specific files or resources for a single task, rather than entire accounts. Once approved, the system securely executes the action using short-lived tokens and logs every step in a transparent permission timeline.
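The combination of a single-task grant and a permission timeline can be sketched as follows. This is an assumption-laden illustration rather than the project's code: `TaskGrant`, `PermissionTimeline`, and `access` are hypothetical names, and time is passed in explicitly so the behavior is easy to follow.

```python
from dataclasses import dataclass, field


@dataclass
class PermissionTimeline:
    """Append-only record of which resources were used or denied, and when."""
    events: list[tuple[float, str, str]] = field(default_factory=list)

    def record(self, kind: str, resource: str, now: float) -> None:
        self.events.append((now, kind, resource))


@dataclass(frozen=True)
class TaskGrant:
    """Hypothetical grant: specific resources only, valid for a limited time."""
    resources: frozenset[str]
    expires_at: float

    def allows(self, resource: str, now: float) -> bool:
        # Both conditions must hold: the grant is still live and the
        # resource was explicitly approved for this task.
        return now < self.expires_at and resource in self.resources


def access(grant: TaskGrant, timeline: PermissionTimeline,
           resource: str, now: float) -> bool:
    """Check a request against the grant and log the outcome either way."""
    ok = grant.allows(resource, now)
    timeline.record("used" if ok else "denied", resource, now)
    return ok


timeline = PermissionTimeline()
grant = TaskGrant(resources=frozenset({"file:a"}), expires_at=100.0)
access(grant, timeline, "file:a", now=10.0)   # approved and in time
access(grant, timeline, "file:b", now=11.0)   # never approved
access(grant, timeline, "file:a", now=100.0)  # grant has expired
```

Logging denials as well as successful uses is a deliberate choice in this sketch: the timeline then shows what the agent attempted, not only what it was allowed to do.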

How we built it

Guard Agent is powered by a stateful multi-agent architecture built with LangGraph in Python, structured into three stages: IntentNode, ContractNode, and ExecutorNode. This enables the system to plan, pause, and execute tasks in a controlled and observable manner.
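The three-stage flow can be pictured with plain Python functions that pass a shared state along. This sketch deliberately avoids LangGraph itself and only mirrors the stage names; the state fields, the keyword-based intent detection, and the `plan`/`execute` helpers are hypothetical simplifications.

```python
from typing import TypedDict


class AgentState(TypedDict, total=False):
    # Hypothetical state fields carried between stages.
    request: str
    intent: str
    contract: str
    approved: bool
    result: str


def intent_node(state: AgentState) -> AgentState:
    """Stage 1: derive an intent (a toy keyword check stands in for the LLM)."""
    text = state["request"].lower()
    intent = "summarize" if "summar" in text else "unknown"
    return {**state, "intent": intent}


def contract_node(state: AgentState) -> AgentState:
    """Stage 2: describe the planned action for the user."""
    return {**state, "contract": f"The agent will {state['intent']} the requested item."}


def executor_node(state: AgentState) -> AgentState:
    """Stage 3: act only if the user approved the contract."""
    if not state.get("approved", False):
        return {**state, "result": "skipped: not approved"}
    return {**state, "result": f"executed: {state['intent']}"}


def plan(request: str) -> AgentState:
    """Run the first two stages and stop before execution."""
    return contract_node(intent_node({"request": request}))


def execute(state: AgentState, approved: bool) -> AgentState:
    """Resume from the saved state with the user's decision."""
    return executor_node({**state, "approved": approved})


pending = plan("Please summarize my notes")
done = execute(pending, approved=True)
```

Splitting the run into `plan` and `execute` is what makes the approval step mandatory in this sketch: nothing reaches the executor without an explicit decision.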

To ensure fast and responsive performance, we integrated Groq running Llama 3 (70B), significantly reducing planning latency. For identity and security, we leveraged Auth0’s Token Vault, ensuring that all sensitive credentials are handled server-side and never exposed to the browser, achieving a Zero Secrets architecture.

The backend is built with FastAPI and handles secure token exchange, while the frontend is developed using Next.js, Tailwind CSS, and Framer Motion. The interface is designed to feel cinematic and intuitive, turning complex security flows into a seamless user experience.

Challenges we ran into

One of the biggest challenges was implementing true human-in-the-loop execution. We needed the system to pause mid-flow, present the Action Contract to the user, wait for input, and then resume execution without breaking the agent state. Achieving this required precise control over LangGraph’s state management and asynchronous workflows.
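The pause-and-resume idea can be demonstrated with a standard-library generator. This is only an analogy under stated assumptions, not LangGraph's API: `guarded_task` and `resume` are hypothetical names, and an in-process generator does not survive a restart, whereas a production system would need to persist the paused state.

```python
from typing import Generator


def guarded_task(request: str) -> Generator[str, bool, str]:
    """Yield an approval prompt, pause, then continue with the decision."""
    plan = f"plan for: {request}"        # state built before the pause
    approved = yield f"Approve? {plan}"  # execution stops here
    # Local variables such as `plan` are still intact after resuming.
    if not approved:
        return "rejected, nothing executed"
    return f"executed {plan}"


def resume(task: Generator[str, bool, str], decision: bool) -> str:
    """Send the user's decision into the paused task and collect its result."""
    try:
        task.send(decision)
    except StopIteration as finished:
        return finished.value
    raise RuntimeError("task paused again unexpectedly")


task = guarded_task("summarize report")
prompt = next(task)            # runs until the first yield, then pauses
outcome = resume(task, True)   # continues from exactly where it stopped
```

The key property shown here is that the work done before the pause is not recomputed or lost when the decision arrives.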

Another challenge was integrating modern frontend frameworks with strict dependency requirements alongside Auth0’s SDKs. We resolved this by shifting to a secure server-side authentication model.

Additionally, since external APIs like Google do not support dynamic, file-level OAuth scopes, we built an application-layer enforcement system to guarantee that only user-approved resources are accessed.
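One way to picture application-layer enforcement is a thin wrapper that checks every file ID against the approved set before the upstream call is made. The sketch below is an assumption, not the project's implementation: `ScopedClient`, `ScopeViolation`, and the injected `fetch` callable are hypothetical, and `fetch` stands in for whatever function actually calls the external API.

```python
from typing import Callable


class ScopeViolation(PermissionError):
    """Raised when the agent asks for a resource the user did not approve."""


class ScopedClient:
    """Hypothetical wrapper that only forwards reads for approved file IDs."""

    def __init__(self, fetch: Callable[[str], bytes], approved_ids: set[str]):
        self._fetch = fetch                      # stand-in for the real API call
        self._approved = frozenset(approved_ids)  # fixed for this task

    def read(self, file_id: str) -> bytes:
        # The check runs before the upstream call, so an unapproved ID
        # never reaches the external API even if the token would permit it.
        if file_id not in self._approved:
            raise ScopeViolation(f"{file_id} was not approved for this task")
        return self._fetch(file_id)


calls: list[str] = []


def fake_fetch(file_id: str) -> bytes:
    """Test double that records which IDs were actually requested upstream."""
    calls.append(file_id)
    return f"contents of {file_id}".encode()


client = ScopedClient(fake_fetch, approved_ids={"doc-1"})
data = client.read("doc-1")
try:
    client.read("doc-2")
except ScopeViolation:
    pass  # blocked locally; fake_fetch was never called for doc-2
```

Because the agent only ever holds the wrapper, the broader OAuth token stays behind it and the approved set becomes the effective scope.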

Accomplishments that we're proud of

We successfully implemented a Zero Secrets in the Browser architecture using Auth0 Token Vault, eliminating direct exposure of sensitive credentials. We designed and built the Action Contract and SSA interface, transforming complex security concepts into a simple and intuitive user experience.

Our multi-agent system runs efficiently without noticeable delays, and we delivered a highly polished, cinematic interface that makes security feel like a core feature rather than a barrier.

What we learned

Building Guard Agent taught us that AI security requires a fundamentally different approach than traditional applications. Agents need ephemeral, highly scoped, and verifiable access rather than persistent permissions.

Auth0’s Token Vault proved to be a critical component, abstracting away the complexity and risk of managing third-party tokens. We also gained deep experience in combining asynchronous AI workflows with modern server-rendered frontend systems to create a seamless and secure user experience.

What's next for Guard Agent

We plan to expand integrations beyond Google Workspace to platforms like Notion, Slack, GitHub, and Jira. We are also working on proactive AI auditing, where the system can detect anomalies in real time and automatically revoke access if behavior deviates from the approved contract.

Another key direction is introducing distinct identities for different agents within a system, allowing more granular control over capabilities. Finally, we aim to extend Guard Agent to support sovereign AI models, enabling secure interaction between locally running models and external services.

Bonus Blog Post

Building Guard Agent completely reshaped our perspective on AI security. Early on, we recognized a critical flaw: AI agents today are powerful but dangerously over-permissioned. A simple request like summarizing a document could unintentionally expose an entire data repository.

This led us to ask a fundamental question: what if AI had to earn trust for every action?

Implementing this idea introduced significant technical challenges. One of the hardest problems was enabling a seamless pause-and-resume mechanism in a multi-agent system. Using LangGraph, we built a stateful architecture where the agent could stop execution, generate an Action Contract, wait for user approval, and continue without losing context.

Auth0’s Token Vault played a key role in securing the system. By ensuring that tokens are never exposed to the frontend, we established a Zero Secrets foundation that greatly reduces risk.

We are particularly proud of our Selective Scope Authorization layer. Since most APIs lack fine-grained permission controls, we engineered our own enforcement system to guarantee that only explicitly approved resources are accessed.

Guard Agent represents our vision for the future of AI: one where power is balanced with trust, and every action is transparent, controlled, and secure.

Built With

- ai-agents

- ai-automation

- ai-consent-system

- ai-demo

- ai-hackathon-project

- ai-innovation

- ai-orchestration

- ai-platform

- ai-privacy

- ai-security

- ai-tools-2026

- auth0

- auth0-token-vault

- cloud-apis

- fastapi

- fastapi-ai-backend

- framer-motion

- google-drive-api-security

- groq

- groq-llama3

- human-in-the-loop-ai

- jwt

- langgraph

- llama-3

- multi-agent-system

- multi-agent-systems

- next.js

- next.js-ai-project

- node.js

- oauth-2.0

- oauth-security

- python

- react

- render

- rest-apis

- saas

- saas-security-ai

- secure-ai

- secure-token-management

- tailwind-css

- token-vault

- typescript

- vercel

- zero-trust-ai
