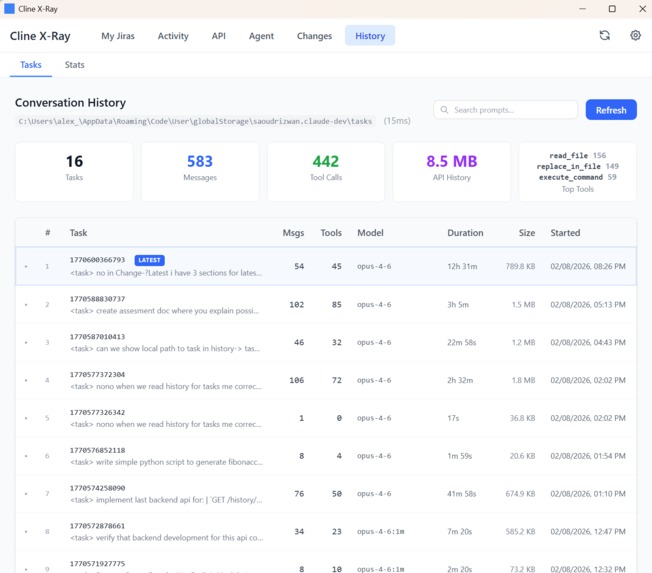

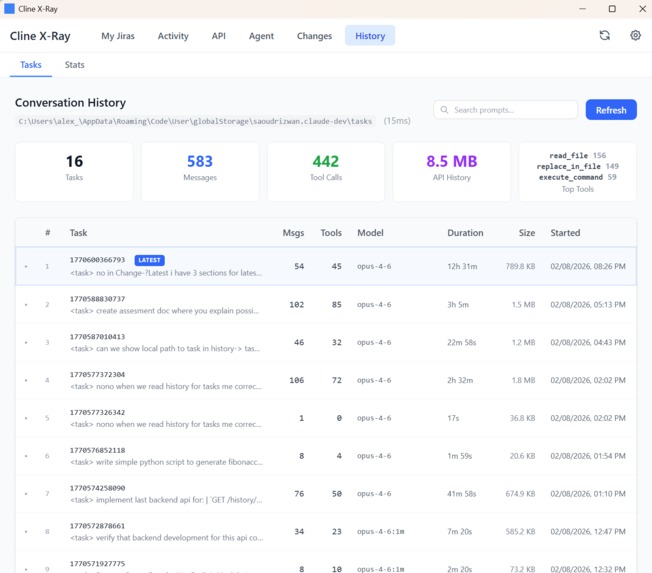

Cline task history

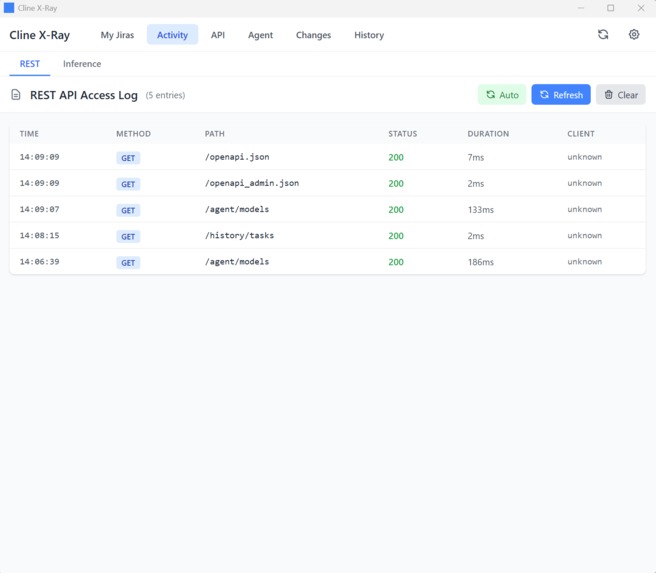

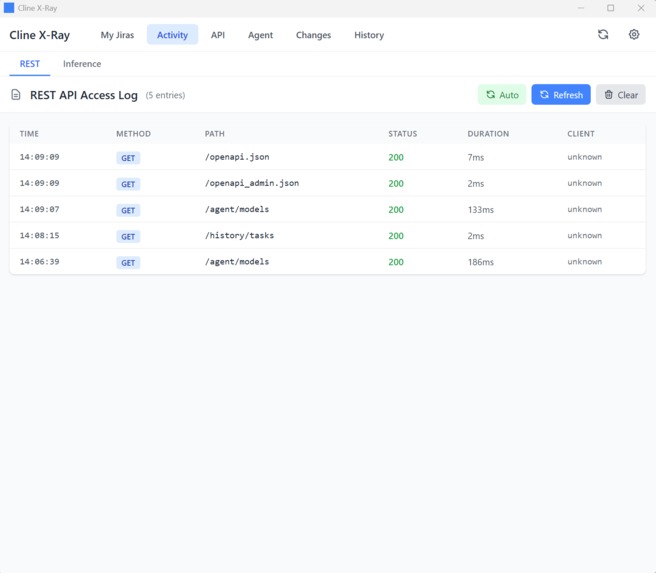

List of REST API endpoints (backing the Changes and History functionality)

REST API activity log

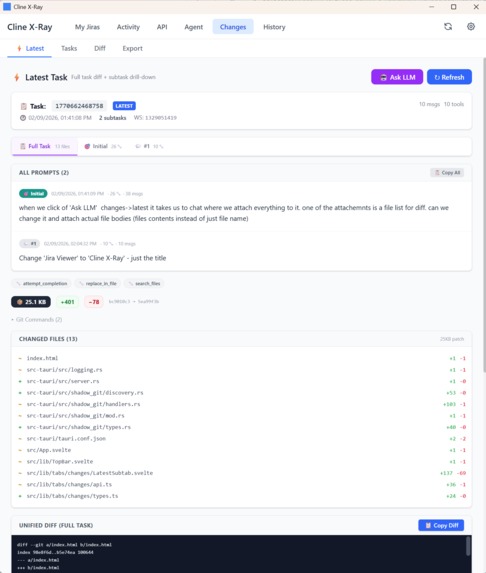

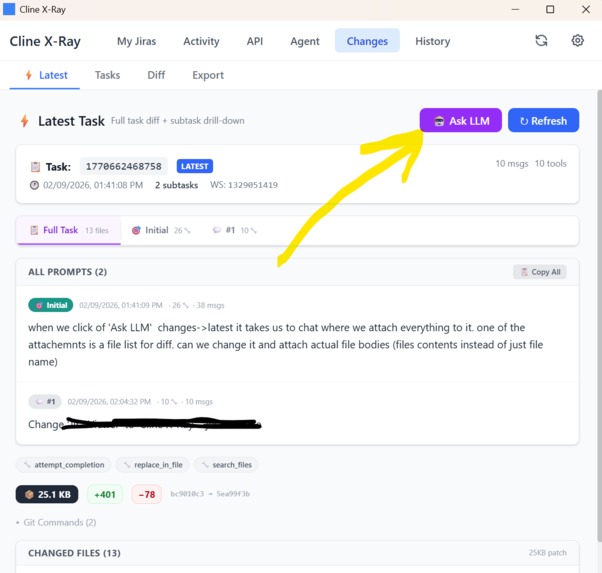

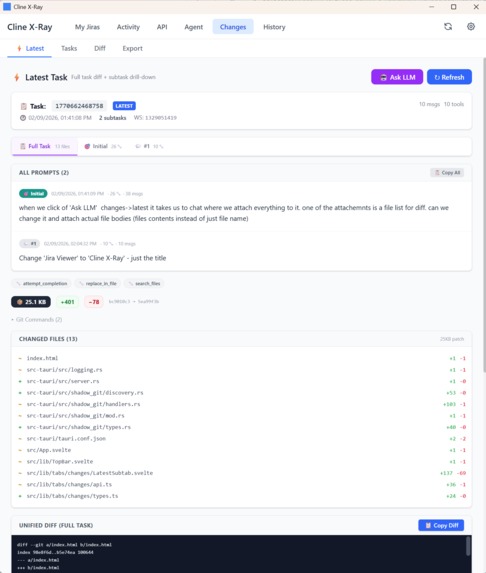

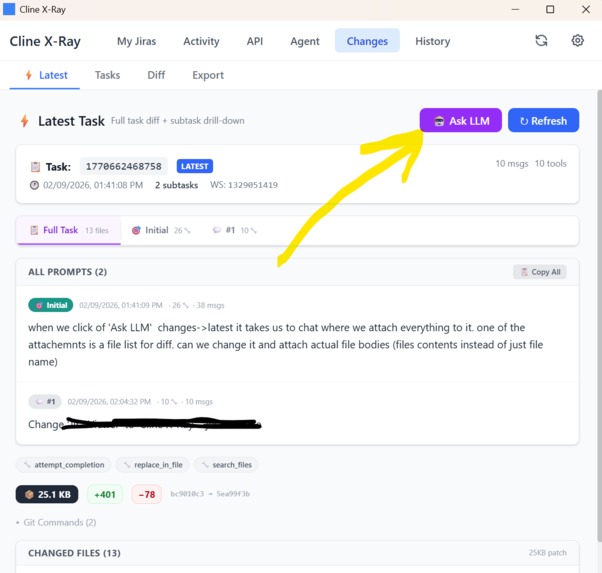

Latest task dashboard with the prompt, the list of changed files, and the full diff from Cline's shadow Git workspace

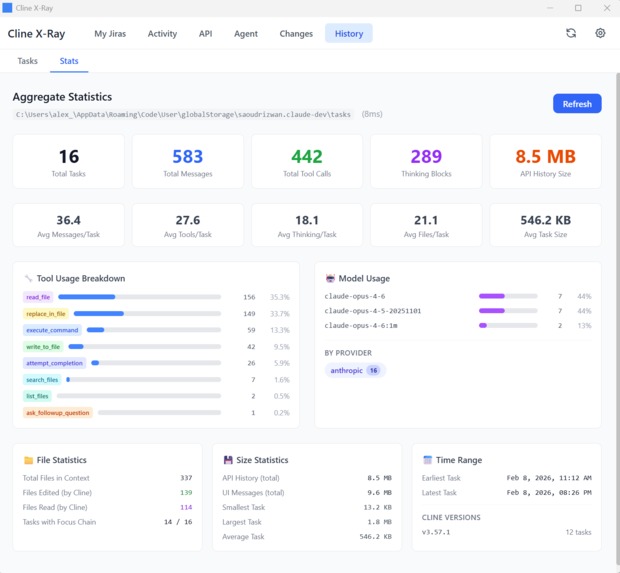

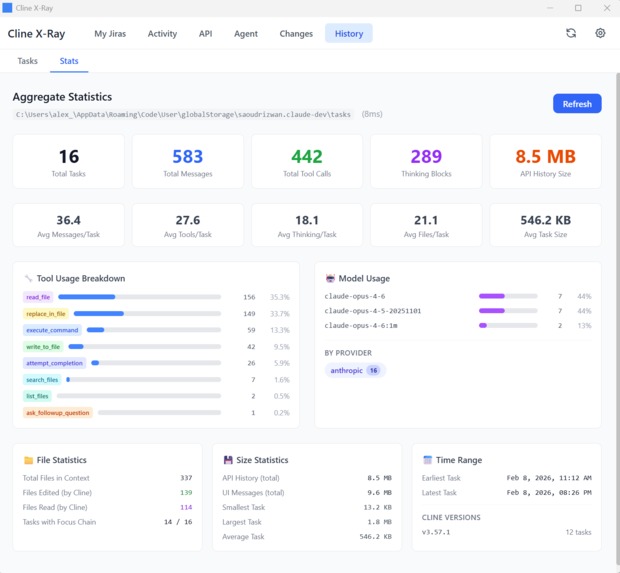

Cline workspace task history dashboard

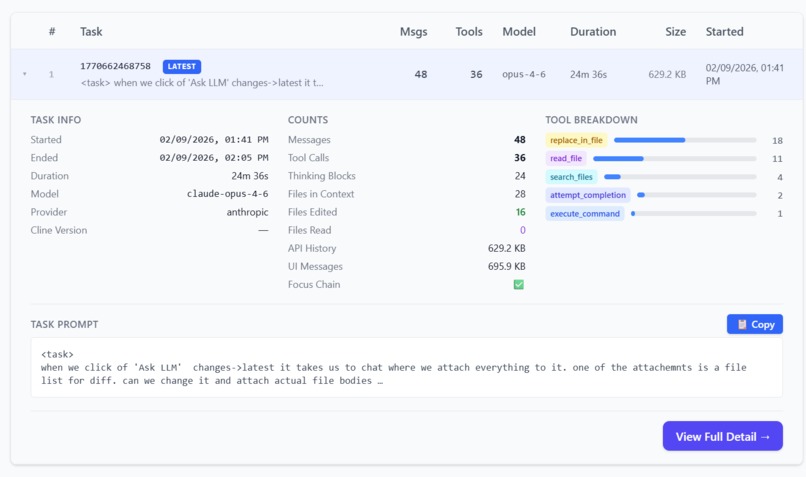

Cline history task summary dashboard

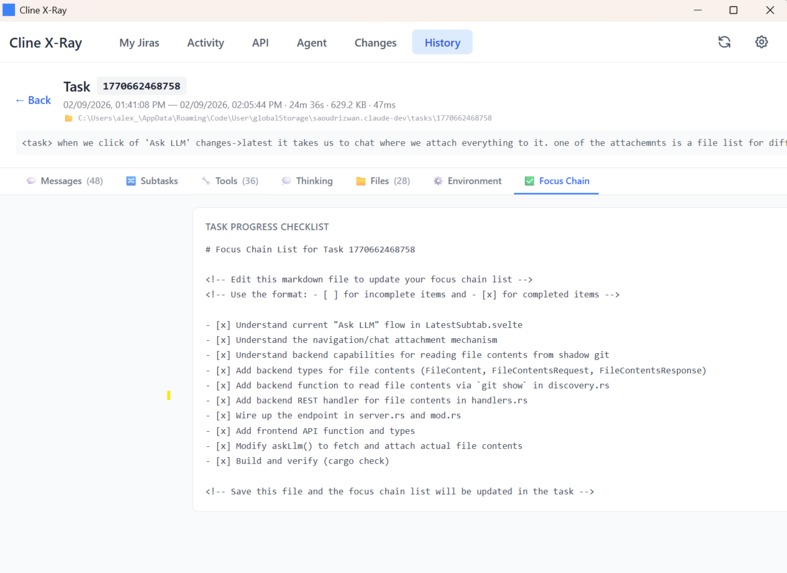

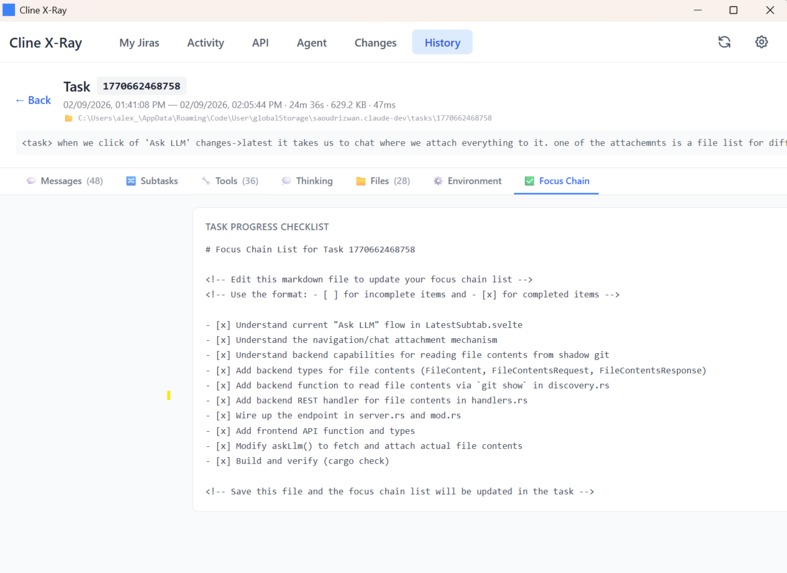

Cline history task focus chain

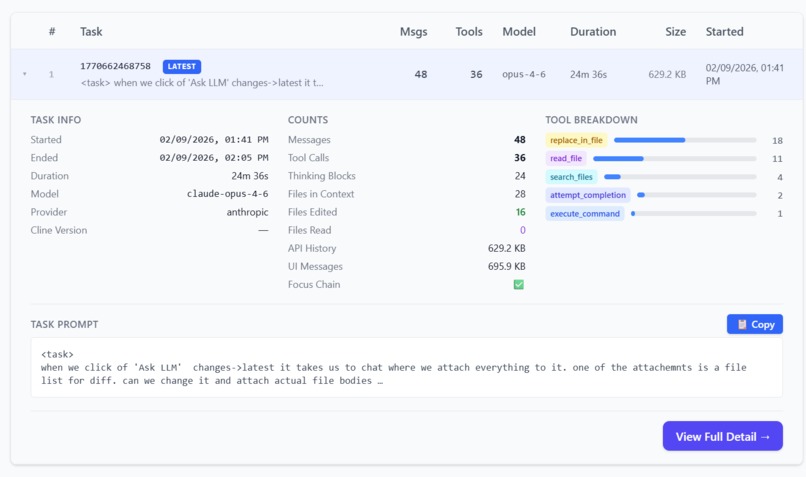

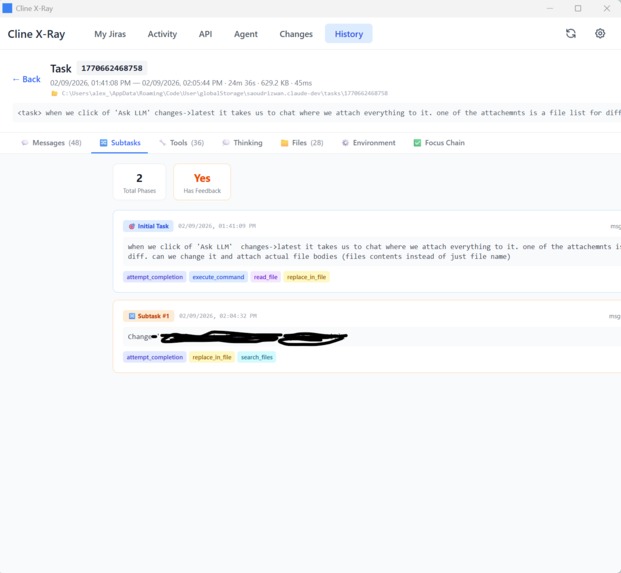

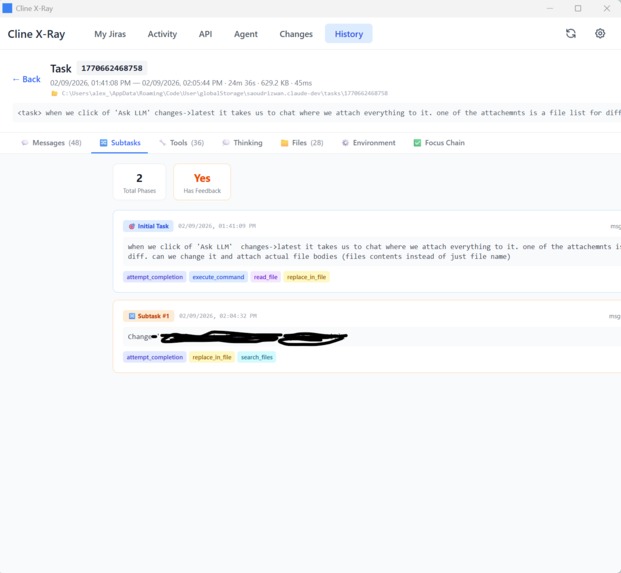

Cline task subtask list

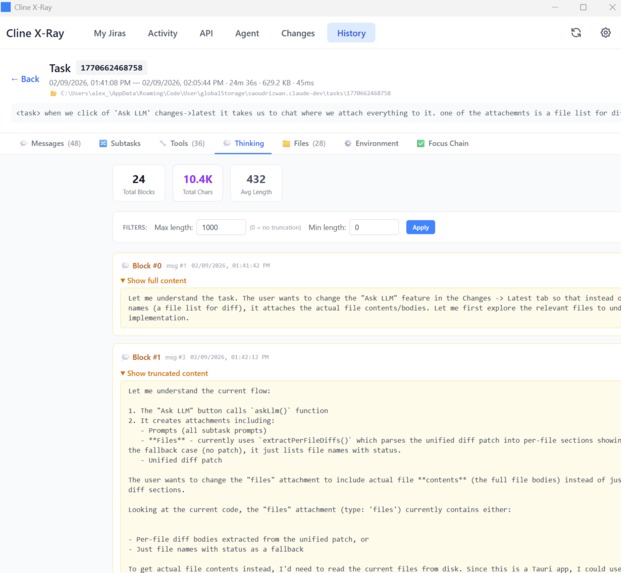

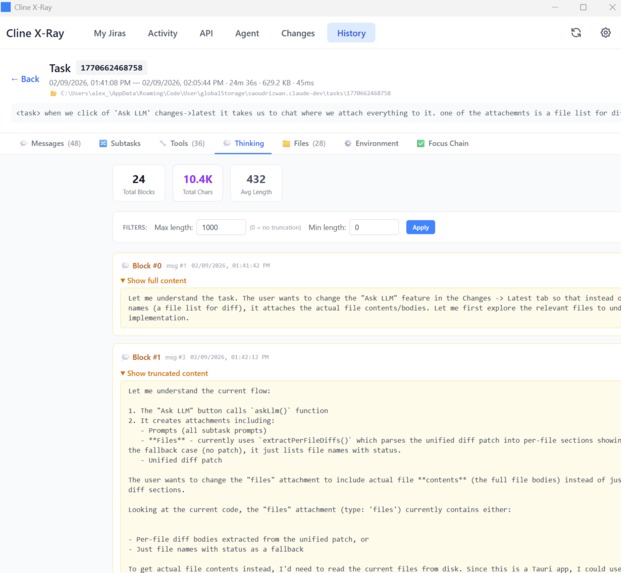

Cline task thinking log

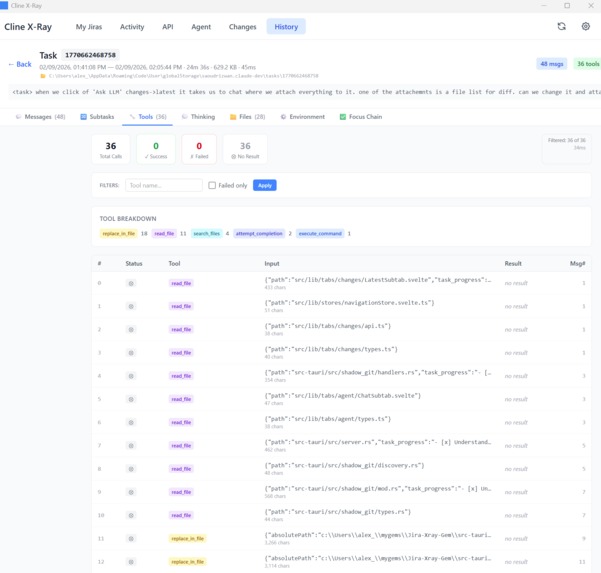

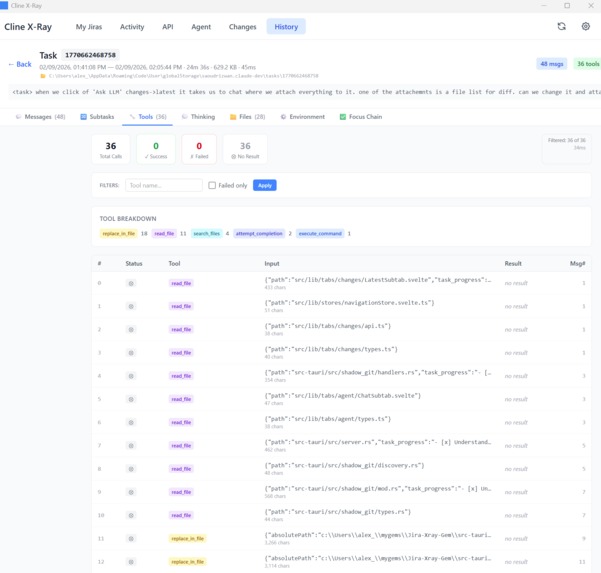

Cline task tools log

Cline task diff "Ask LLM" button

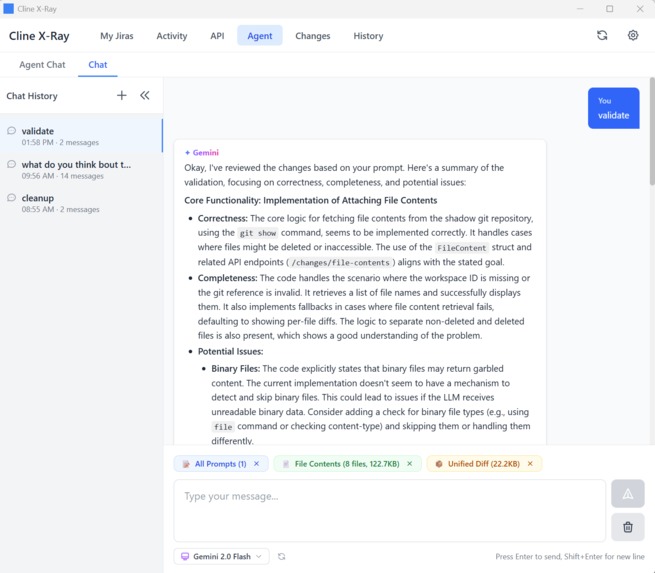

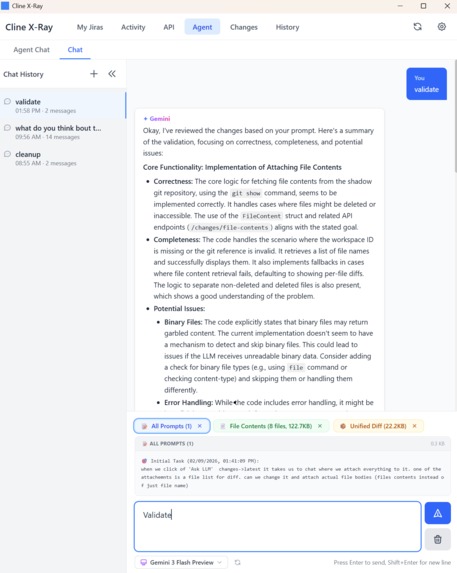

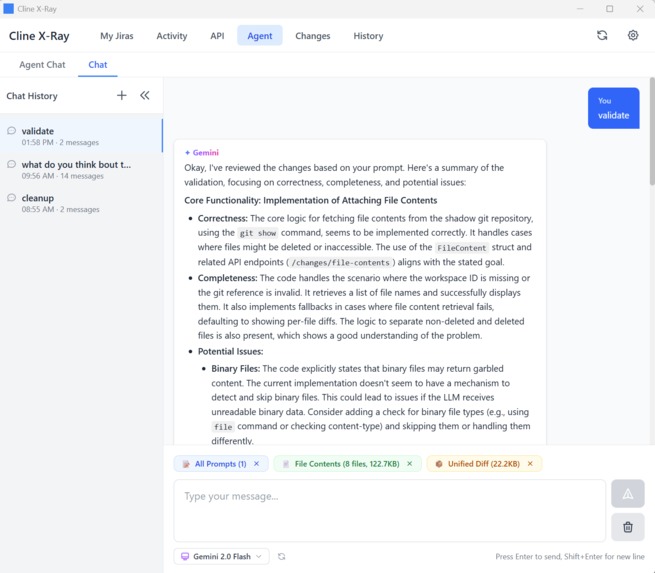

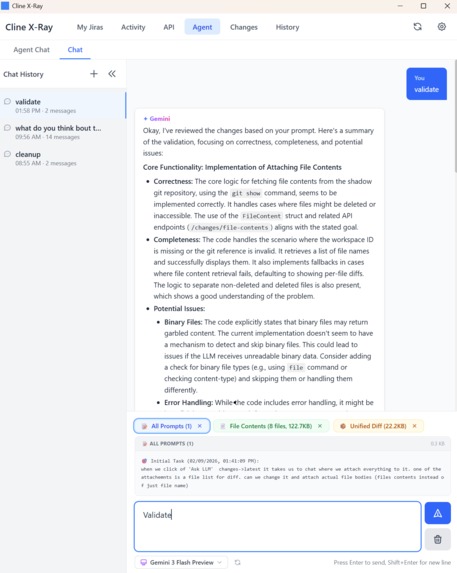

Gemini 3 validating a Cline shadow diff

Inspiration

Cline X-Ray was built specifically for Gemini 3.

Gemini 3 introduces a new class of reasoning models — capable of analyzing complex systems, long timelines, and multi-step causality. While experimenting with Gemini 3 during agent-assisted coding, we discovered a critical limitation:

Gemini’s reasoning power is only as good as the structure of the evidence it receives.

Modern AI coding agents like Cline generate large, multi-step changes across files, tools, and models. After execution, most of this context is flattened into summaries or explanations — exactly the kind of abstraction that weakens Gemini’s ability to reason precisely.

Cline X-Ray was inspired by a simple question:

What if Gemini 3 could inspect agent runs the same way developers inspect Git history — using diffs, timelines, and concrete artifacts instead of summaries?

Instead of asking agents to explain themselves, Cline X-Ray exposes the artifacts they already produce — commits, diffs, logs, and metadata — and reconstructs execution in a form that Gemini 3 can reason over directly.

What it does

Cline X-Ray is a post-execution explorer for agentic coding sessions, designed to act as a structured evidence layer for Gemini 3.

It reads Cline’s on-disk work artifacts — shadow-Git repositories, task metadata, message history, and tool logs — and presents them as a navigable execution history.

Rather than summarizing behavior, it shows:

- which files were read and edited

- how changes evolved across tasks and subtasks

- which prompts and tool calls produced which diffs

- how models, execution size, and timing varied from task to task
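One way to picture the link between prompts, tool calls, and diffs is a timestamp join between recorded events and shadow-Git checkpoints. The sketch below is illustrative only: the `Event` and `Checkpoint` shapes, the field names, and the `align` function are assumptions made for this example, not Cline's on-disk format or the project's actual code.

```rust
/// A prompt or tool call recorded during a task (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
struct Event {
    ts_ms: u64,
    label: String,
}

/// A shadow-Git checkpoint commit (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
struct Checkpoint {
    ts_ms: u64,
    commit: String,
}

/// Group events under the first checkpoint whose timestamp is at or after
/// the event. Both slices must already be sorted by `ts_ms`.
/// Returns (commit, event labels) pairs in checkpoint order, plus the labels
/// of any events that happened after the last checkpoint.
fn align(
    events: &[Event],
    checkpoints: &[Checkpoint],
) -> (Vec<(String, Vec<String>)>, Vec<String>) {
    let mut grouped = Vec::new();
    let mut i = 0;
    for cp in checkpoints {
        let mut labels = Vec::new();
        // Consume every event that occurred up to and including this checkpoint.
        while i < events.len() && events[i].ts_ms <= cp.ts_ms {
            labels.push(events[i].label.clone());
            i += 1;
        }
        grouped.push((cp.commit.clone(), labels));
    }
    // Whatever is left has no checkpoint yet.
    let trailing = events[i..].iter().map(|e| e.label.clone()).collect();
    (grouped, trailing)
}
```

Because both inputs are walked once, the join is linear in the number of events plus checkpoints, which matters when a single run contains thousands of messages.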

Because this output is grounded in concrete artifacts, Gemini 3 can be used as an independent reasoning model to:

- explain why changes happened

- identify risky diffs or scope creep

- suggest safer follow-up refactors

- reason about agent behavior across multiple tasks

How we built it

Cline X-Ray is implemented as a single-process Tauri application with a privileged Rust backend.

- The backend parses Cline’s existing artifacts directly from disk.

- An embedded localhost REST API exposes the data via a stable OpenAPI contract.

- The UI consumes the API the same way an external system or LLM would.

- Parsing is done on demand and aligned with task boundaries, diffs, and timestamps.
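The on-demand parsing point can be sketched as a small cache that parses a task only the first time it is requested. This is a minimal illustration using the standard library only; `TaskSummary`, `TaskStore`, and the injected `parse` closure are hypothetical names, and the real backend (built on axum, serde, and tokio) may be organized quite differently.

```rust
use std::collections::HashMap;

/// Parsed view of one task (hypothetical; the real fields are not described here).
#[derive(Debug, Clone, PartialEq)]
struct TaskSummary {
    task_id: String,
    message_count: usize,
}

/// Parses a task only when it is first requested, then serves it from memory.
struct TaskStore<F: Fn(&str) -> Option<TaskSummary>> {
    parse: F,
    cache: HashMap<String, TaskSummary>,
    /// Number of times the parser was actually invoked.
    parse_calls: usize,
}

impl<F: Fn(&str) -> Option<TaskSummary>> TaskStore<F> {
    fn new(parse: F) -> Self {
        TaskStore { parse, cache: HashMap::new(), parse_calls: 0 }
    }

    /// Return the summary for `task_id`, parsing on first access only.
    /// Returns `None` if the parser cannot produce a summary.
    fn get(&mut self, task_id: &str) -> Option<&TaskSummary> {
        if !self.cache.contains_key(task_id) {
            self.parse_calls += 1;
            let parsed = (self.parse)(task_id)?;
            self.cache.insert(task_id.to_string(), parsed);
        }
        self.cache.get(task_id)
    }
}
```

In this sketch a request handler would call `store.get(task_id)` per request. Failed parses are deliberately not cached, so a task whose artifacts are still being written can be retried later.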

This architecture allows Gemini 3 to be integrated cleanly as an independent reasoning layer, without coupling inspection logic to any single agent or UI.

Challenges we ran into

The primary challenge was structural scale.

Agent runs generate thousands of messages, multi-megabyte JSON histories, and many small diffs across steps. Early designs collapsed too much logic into large files and endpoints, which made both human review and Gemini-based reasoning brittle and inefficient.

Gemini performs best when context is well-structured. To support that, we redesigned the system around diff-aware and execution-aware boundaries, splitting parsing, handlers, and views by responsibility rather than convenience.
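As an illustration of a diff-aware boundary, the sketch below splits the text of a `git diff` into one chunk per file and counts added and removed lines, so each file can be reviewed or sent to a model on its own. It is a simplified example, not the project's parser: `FileDiff` and `split_diff` are hypothetical names, and quoted paths, renames, and binary patches are not handled.

```rust
/// One file's slice of a unified diff, with simple size counters.
#[derive(Debug, Clone, PartialEq)]
struct FileDiff {
    path: String,
    added: usize,
    removed: usize,
    body: String,
}

/// Split `git diff` output into per-file chunks.
/// A new chunk starts at each `diff --git a/<path> b/<path>` header.
fn split_diff(diff: &str) -> Vec<FileDiff> {
    let mut files: Vec<FileDiff> = Vec::new();
    // Only count +/- lines inside hunks, so the `---`/`+++` file headers
    // are never mistaken for content changes.
    let mut in_hunk = false;
    for line in diff.lines() {
        if let Some(rest) = line.strip_prefix("diff --git ") {
            // Use the text after the last " b/" as the path.
            let path = rest.rsplit(" b/").next().unwrap_or(rest).to_string();
            files.push(FileDiff { path, added: 0, removed: 0, body: String::new() });
            in_hunk = false;
        } else if line.starts_with("@@") {
            in_hunk = true;
        }
        if let Some(current) = files.last_mut() {
            if in_hunk {
                if line.starts_with('+') {
                    current.added += 1;
                } else if line.starts_with('-') {
                    current.removed += 1;
                }
            }
            current.body.push_str(line);
            current.body.push('\n');
        }
    }
    files
}
```

Keeping each chunk's raw `body` alongside its counters means a caller can rank files by size first and only load the full text for the ones worth a closer look.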

Accomplishments that we're proud of

- Turning opaque agent runs into verifiable execution timelines

- Aligning prompts, tool calls, and diffs into a single coherent view

- Enabling Gemini 3 to reason over agent behavior using real artifacts

- Making AI-generated code reviewable without relying on self-explanations

- Designing an inspection layer that scales as agents and models improve

What we learned

Gemini 3 reinforced an important truth: stronger reasoning models amplify both good and bad structure.

Large files, monolithic handlers, and implicit boundaries don’t just hurt maintainability — they actively degrade LLM reasoning quality. Evidence-first workflows built on diffs, logs, and timelines dramatically improve how models like Gemini analyze complex systems.

Inspection is not optional infrastructure for AI systems — it is foundational.

What's next for Cline X-Ray

Next, we want to move from inspection into orchestration using Gemini 3:

- Generate diff-aware follow-up prompts for refactors and fixes

- Use Gemini to flag risky changes and explain complex diffs

- Compare agent runs over time to detect regressions or drift

- Enable governance and policy checks driven by execution history
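A diff-aware follow-up prompt could be assembled from per-file change statistics, for example as below. This is a speculative sketch of planned work rather than existing functionality: `ChangeStat`, `review_prompt`, the `[LARGE]` marker, and the churn threshold are all assumptions chosen for illustration, and the wording of the prompt is arbitrary.

```rust
/// Size of one file's change within a task (hypothetical shape).
struct ChangeStat {
    path: String,
    added: usize,
    removed: usize,
}

/// Build a review prompt that lists the changed files and marks any file
/// whose churn (added + removed lines) exceeds `max_churn`.
fn review_prompt(task_prompt: &str, stats: &[ChangeStat], max_churn: usize) -> String {
    let mut out = String::new();
    out.push_str("Original task prompt:\n");
    out.push_str(task_prompt);
    out.push_str("\n\nChanged files:\n");
    for s in stats {
        // Flag unusually large changes so the reviewer looks at them first.
        let flag = if s.added + s.removed > max_churn { " [LARGE]" } else { "" };
        out.push_str(&format!("- {} (+{} / -{}){}\n", s.path, s.added, s.removed, flag));
    }
    out.push_str("\nExplain whether each change is within the scope of the task prompt.");
    out
}
```

A line-count threshold is only a crude proxy for risk. It is used here because it can be computed from the diff alone, without any model call.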

Our long-term goal is to make agent-written code as inspectable, explainable, and governable as human-written code, with Gemini 3 acting as a first-class reasoning partner — not just a chat interface.

Built With

- axum

- gemini

- git

- openapi

- rust

- serde

- tauri

- tokio

- typescript

- vscode
