
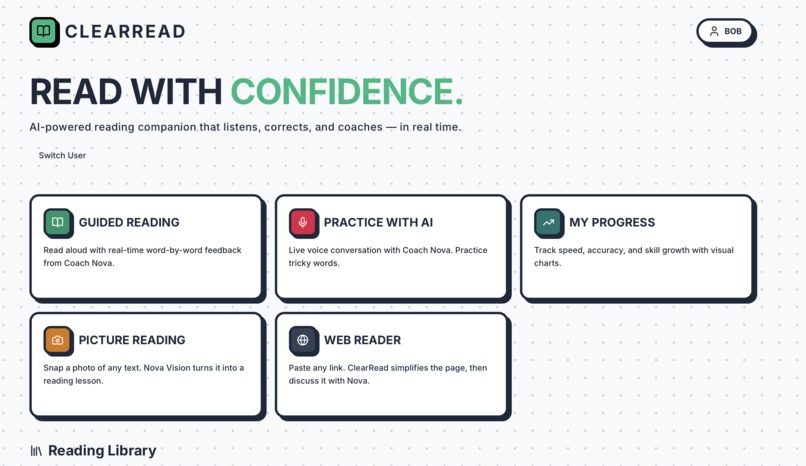

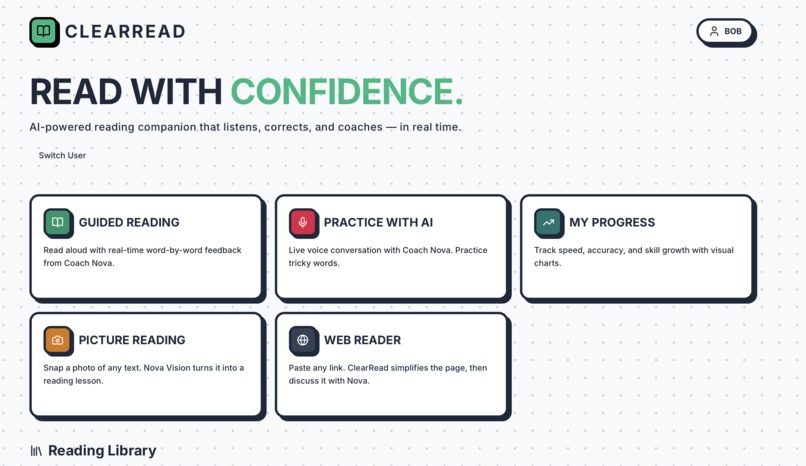

Home page of ClearRead showing all features

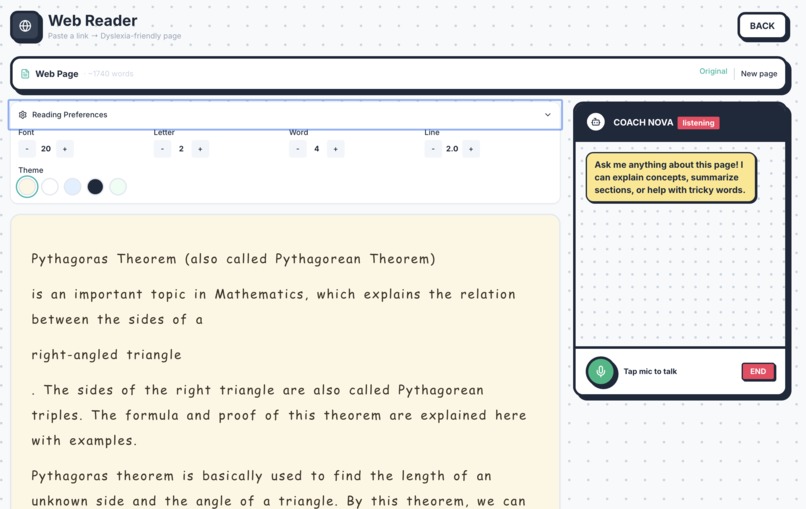

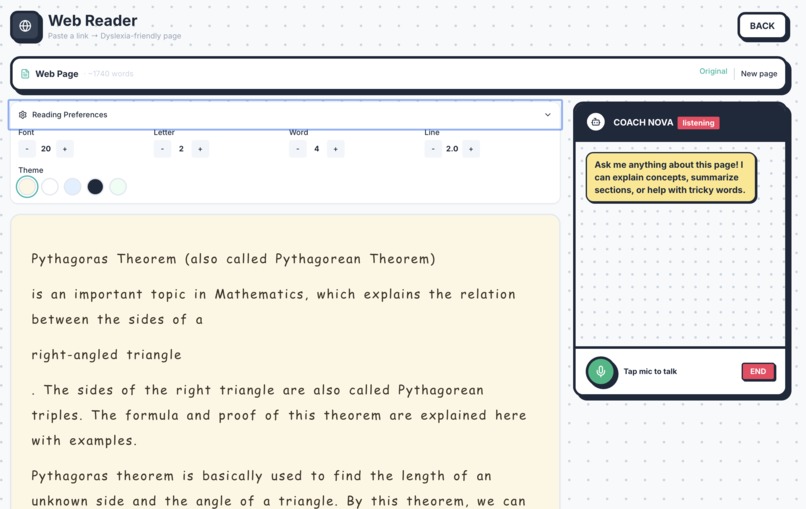

Web Reader: Simplify any webpage into dyslexia-friendly content and discuss it with Nova

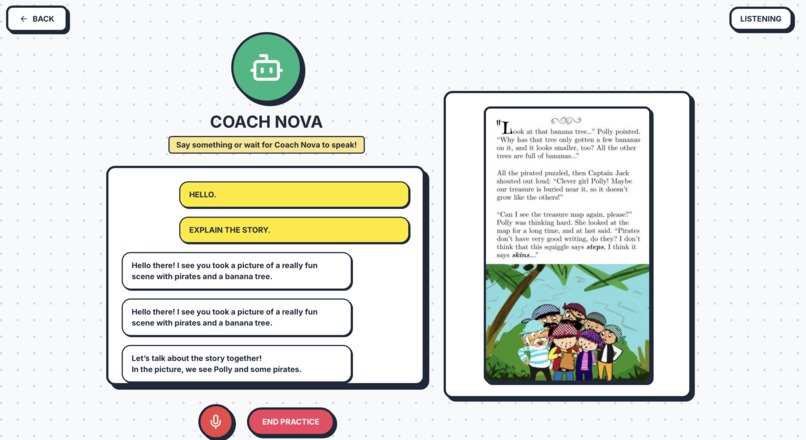

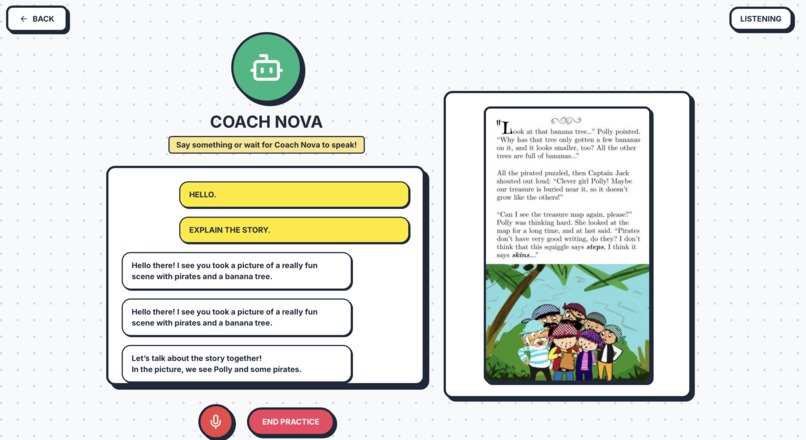

Picture Reading: Upload or take pictures and talk about them

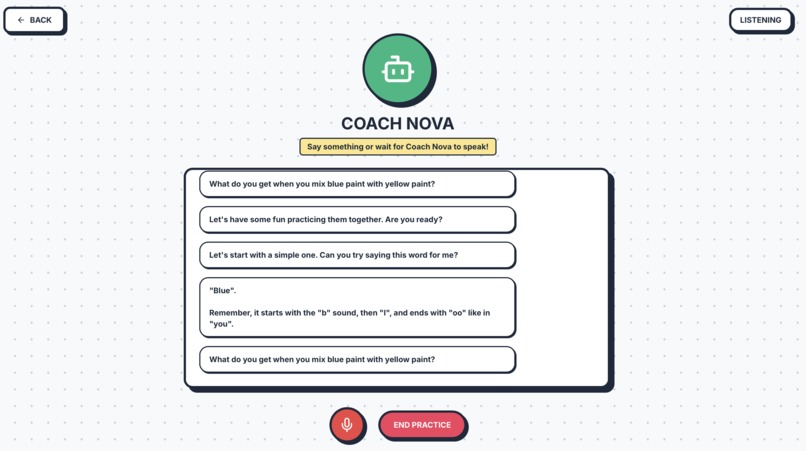

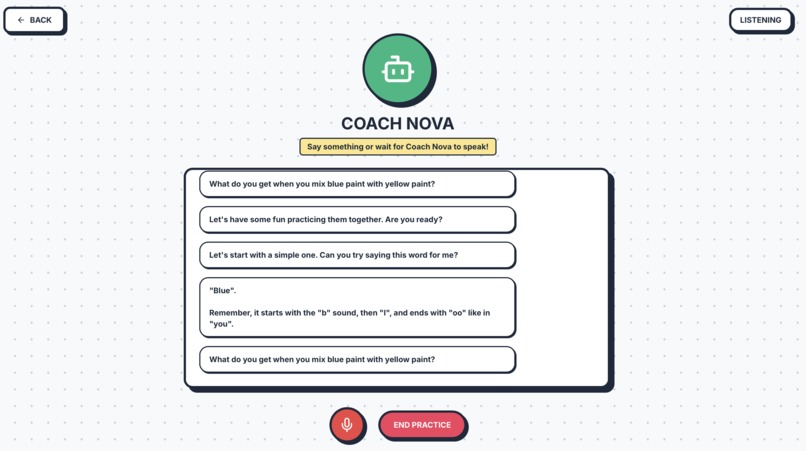

Practice with the Nova Tutor on the user's weaknesses

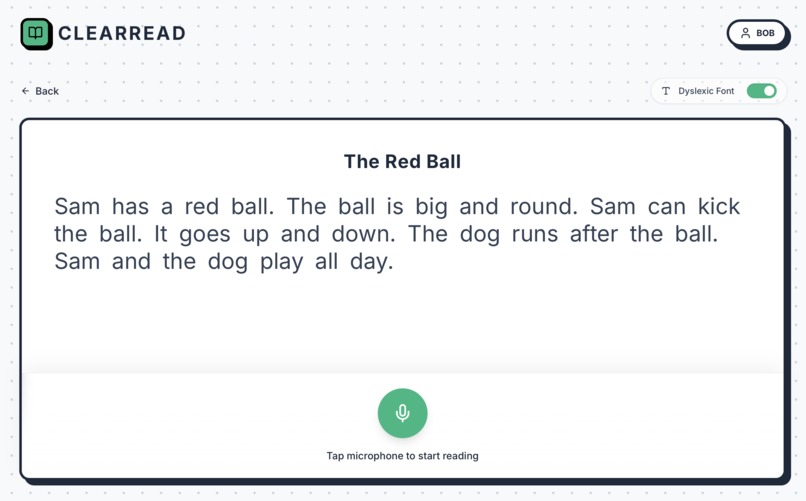

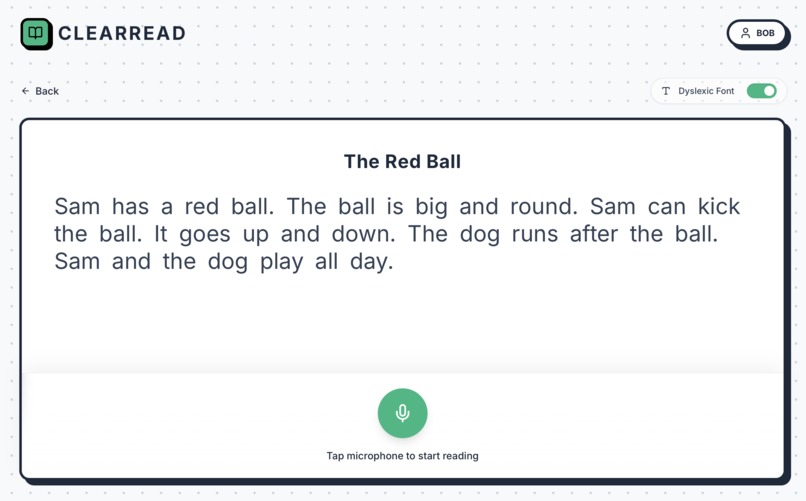

Practice reading paragraphs to get your scores

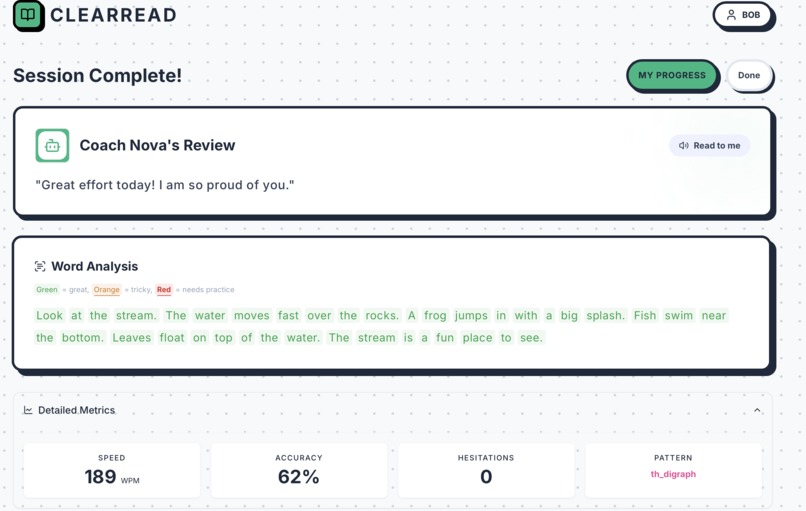

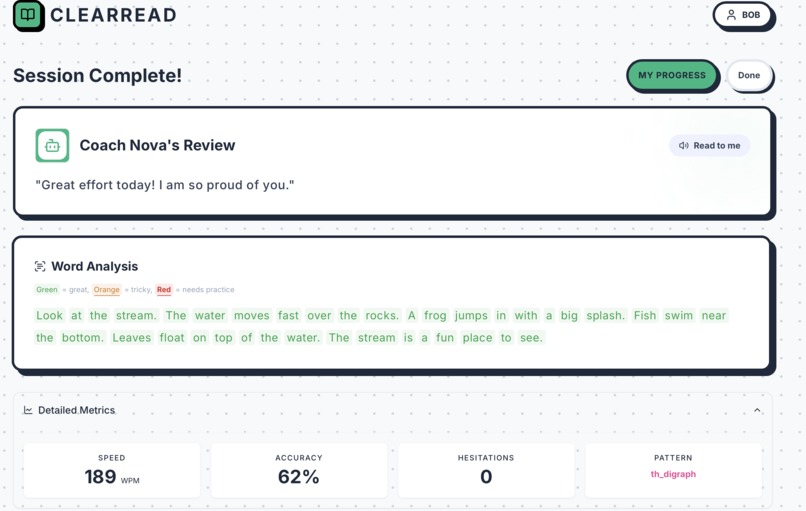

Practice session metrics and feedback

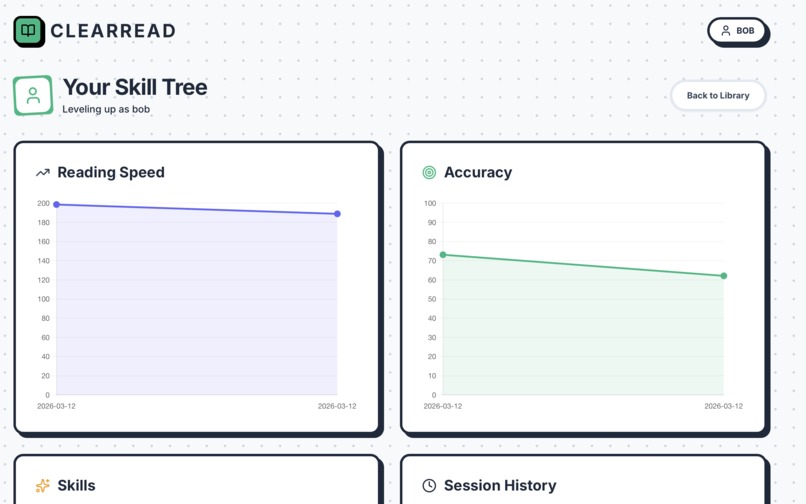

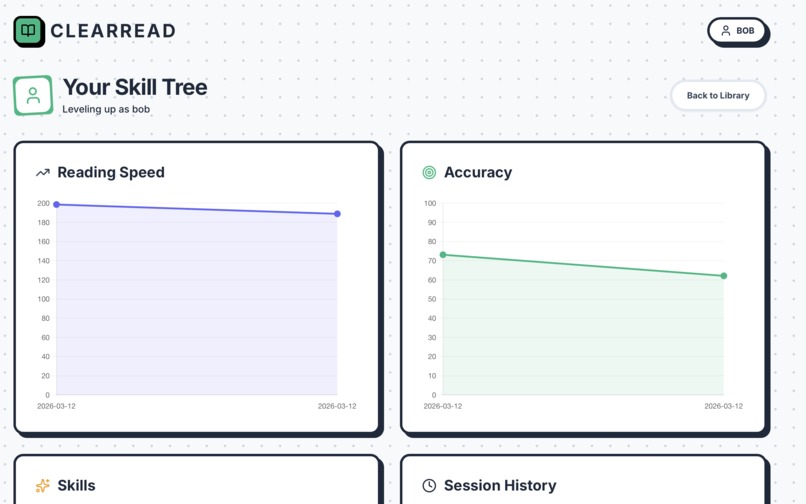

Progress charts covering all user performance metrics

Inspiration

Dyslexia affects a massive portion of students, but most reading accessibility tools on the market are either overly clinical, generic, or just simple text-to-speech readers. I noticed that learners get frustrated because these tools read for them rather than teaching them how to overcome their specific reading hurdles. We wanted to build something that acts like a real tutor: an application that actually listens, understands exactly where a learner struggles, and dynamically adjusts the curriculum to help them improve. Our goal was to leverage the real-time multimodal capabilities of Amazon Nova, specifically Nova 2 Sonic, to make reading accessible and personalized anywhere, from images to complex web pages.

What it does

ClearRead is an adaptive, multimodal reading tutor built specifically for dyslexic learners. It provides real-time, interactive reading practice across multiple formats.

The platform offers a few core features:

- Adaptive Practice: Kids read passages out loud. The system uses audio transcription to evaluate their fluency, tracking specific phonics errors and building a dynamic cognitive error profile over time.

- Simplified Web Reader: Users can paste any URL. ClearRead strips away the clutter, simplifies the text to their reading level, and presents a simplified redesign of the page. A voice assistant discusses the page content with the student, clarifies their doubts, teaches concepts, and corrects their mistakes.

- Multimodal Nova Vision Reading: Students can snap or upload a photo of anything they want to learn about or discuss. The system extracts the text using the multimodal Nova 2 Lite model and instantly turns it into an interactive reading lesson.

- Metrics & Feedback: All reading sessions feed into a metrics engine that tracks reading speed, accuracy, and specific skill growth, allowing learners to actually see their improvement over time.
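As a rough illustration of the kind of computation a metrics engine like this performs, here is a minimal Python sketch that scores one read-aloud session for accuracy and words correct per minute (WCPM), a common oral-reading-fluency measure. It assumes the passage text and the transcript of the learner's reading are available as plain strings and that the session duration is known; `score_session` and the `difflib`-based word alignment are illustrative choices, not ClearRead's actual implementation.

```python
# Hypothetical sketch: accuracy and WCPM for one read-aloud session.
# Assumes a plain-text passage and transcript; difflib alignment is an illustrative choice.
import re
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class SessionMetrics:
    words_in_passage: int
    words_correct: int
    accuracy: float                  # fraction of passage words read correctly
    words_correct_per_minute: float  # reading speed counting only correct words


def _words(text: str) -> list[str]:
    # Lowercase and drop punctuation so "mat." in the passage matches "mat" in the transcript.
    return re.findall(r"[a-z']+", text.lower())


def score_session(passage: str, transcript: str, seconds: float) -> SessionMetrics:
    expected = _words(passage)
    spoken = _words(transcript)
    matcher = SequenceMatcher(a=expected, b=spoken, autojunk=False)
    # Passage words the learner said in order; skipped or substituted words
    # do not appear in any matching block.
    correct = sum(block.size for block in matcher.get_matching_blocks())
    accuracy = correct / len(expected) if expected else 0.0
    wcpm = correct * 60.0 / seconds if seconds > 0 else 0.0
    return SessionMetrics(len(expected), correct, accuracy, wcpm)


if __name__ == "__main__":
    m = score_session("The cat sat on the mat.", "the cat sat on mat", seconds=4.0)
    print(m.words_correct, round(m.accuracy, 2), m.words_correct_per_minute)
```

In this example the learner skips the second "the", so five of six passage words count as correct. A real engine would also need to handle self-corrections and repeated words, which this simple alignment treats as extra words in the transcript.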

How we built it

We leaned heavily into the Amazon Nova family of models to handle the complex multimodal requirements of the application.

- Backend & Adaptation Engine: The core intelligence is the dynamic cognitive error profile.

  - After every session, our backend parses the raw acoustic trace from Nova Sonic, detecting specific hesitation durations, skipped words, and phoneme struggles.

  - It then feeds this structured trace into Nova 2 Lite, which acts as a diagnostic reasoning engine, inferring whether the child struggled with visual tracking, working memory, or specific decoding patterns.

  - Finally, the backend uses an Exponential Moving Average (EMA) to update the learner's long-term profile, giving more weight to their most recent sessions.

- Real-time Voice Interaction: We implemented bidirectional WebSockets combined with Nova 2 Sonic for the interactive practice mode and the Web Reader. This allows the user to have low-latency, natural voice conversations with "Coach Nova", where the AI listens to their reading, gently corrects pronunciation, and dynamically teaches them based on their specific error profile.

- Image Processing: We integrated Nova's multimodal capabilities to process images taken by the user. The model acts as an OCR engine with contextual understanding, extracting text from physical media and structuring it for the digital reading practice UI.
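The adaptation loop described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the `WordEvent` shape of the parsed trace, the 1-second hesitation threshold, the category names, and the smoothing factor `alpha = 0.3` are all hypothetical. In the pipeline described above, the diagnostic categories come from Nova 2 Lite; simple counting stands in for that step here. The EMA itself is the standard recurrence `new = alpha * session + (1 - alpha) * old`, so a larger `alpha` makes the profile react faster to the latest session.

```python
# Hypothetical sketch of the adaptation loop: trace -> session scores -> EMA profile.
# WordEvent, the thresholds, and the category names are illustrative assumptions.
from dataclasses import dataclass


@dataclass
class WordEvent:
    """One word from a parsed acoustic trace (hypothetical shape)."""
    word: str
    skipped: bool
    pause_before_s: float  # silence before the word was attempted


def session_scores(events: list[WordEvent], hesitation_s: float = 1.0) -> dict[str, float]:
    """Turn a word-level trace into per-category error rates in [0, 1]."""
    if not events:
        return {}
    n = len(events)
    skipped = sum(1 for e in events if e.skipped)
    hesitations = sum(1 for e in events if not e.skipped and e.pause_before_s >= hesitation_s)
    return {"skipped_words": skipped / n, "hesitations": hesitations / n}


def update_profile(profile: dict[str, float], session: dict[str, float],
                   alpha: float = 0.3) -> dict[str, float]:
    """Blend this session into the long-term profile with an EMA.

    Each observed category becomes alpha * session + (1 - alpha) * old,
    so recent sessions carry more weight than older ones. Categories not
    observed this session keep their previous value.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    updated = dict(profile)
    for category, score in session.items():
        if category in updated:
            updated[category] = alpha * score + (1 - alpha) * updated[category]
        else:
            updated[category] = score  # first observation seeds the average
    return updated


if __name__ == "__main__":
    trace = [
        WordEvent("the", False, 0.1),
        WordEvent("splash", False, 1.6),  # long pause before a blend
        WordEvent("street", True, 0.0),   # skipped
        WordEvent("is", False, 0.2),
    ]
    profile = {"skipped_words": 0.10, "hesitations": 0.40}
    print(update_profile(profile, session_scores(trace)))
```

Returning a new dictionary instead of mutating the stored profile keeps the previous profile available, which is convenient if progress charts need the history.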

Challenges we ran into

Handling real-time multimodal streaming was our biggest technical hurdle. Synchronizing audio chunks over WebSockets while streaming them into the Nova Sonic model required careful state management to maintain a natural conversational flow, ensuring the AI could pause, listen, and not talk over the user.
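One way to keep the AI from talking over the learner is a small turn-taking state machine on the server, sitting between the browser's WebSocket and the model stream. The Python sketch below is a hypothetical illustration, not ClearRead's code: it assumes each incoming microphone chunk has already been labeled as speech or silence by some voice-activity detector, and that the server decides per chunk whether to forward model audio to the client. The `TurnManager` class, its states, and the silence threshold are all assumptions.

```python
# Hypothetical turn-taking sketch for a bidirectional voice session.
# Assumes an upstream voice-activity detector labels each mic chunk as speech or not.
from enum import Enum, auto


class TurnState(Enum):
    LISTENING = auto()           # nobody is speaking; the model may respond
    USER_SPEAKING = auto()       # learner is reading; hold back model audio
    ASSISTANT_SPEAKING = auto()  # model audio is being forwarded to the client


class TurnManager:
    def __init__(self, end_of_turn_silence_chunks: int = 5) -> None:
        self.state = TurnState.LISTENING
        self._silent_chunks = 0
        self._end_of_turn = end_of_turn_silence_chunks

    def on_mic_chunk(self, is_speech: bool) -> None:
        """Update state for one microphone chunk received over the WebSocket."""
        if is_speech:
            # Learner speech always takes the floor, including barge-in
            # while the assistant is talking.
            self._silent_chunks = 0
            self.state = TurnState.USER_SPEAKING
        elif self.state is TurnState.USER_SPEAKING:
            self._silent_chunks += 1
            if self._silent_chunks >= self._end_of_turn:
                # Enough consecutive silence: treat the learner's turn as finished.
                self.state = TurnState.LISTENING
                self._silent_chunks = 0

    def should_forward_model_audio(self) -> bool:
        """Return True if a model audio chunk may be sent to the client now."""
        if self.state is TurnState.USER_SPEAKING:
            return False  # hold it back so the AI does not talk over the learner
        self.state = TurnState.ASSISTANT_SPEAKING
        return True

    def on_model_turn_complete(self) -> None:
        """Call when the model signals the end of its spoken response."""
        if self.state is TurnState.ASSISTANT_SPEAKING:
            self.state = TurnState.LISTENING
```

A short pause mid-sentence (fewer silent chunks than the threshold) keeps the learner's turn open, which matters for readers who hesitate before a difficult word; the threshold would need tuning against the real chunk size.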

Another major challenge was getting the prompt engineering right for the educational aspect. We had to heavily tune the Nova models' output through prompt iteration so they wouldn't just give away answers or act like a standard chatbot. We needed the model to act like a teacher: waiting for the user, focusing strictly on the specific phonics patterns identified in the backend database, and discussing web page content naturally without being overly pedantic.
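To make the "teacher, not chatbot" behaviour concrete, a backend might assemble the system prompt from the learner's stored profile at the start of each session. The sketch below is hypothetical: the `build_tutor_prompt` helper, the profile format (pattern name mapped to an error score), and the instruction wording are illustrative, not the prompts ClearRead actually uses.

```python
# Hypothetical sketch: build a tutoring system prompt from a stored error profile.
# The profile format and the instruction wording are illustrative assumptions.


def build_tutor_prompt(profile: dict[str, float], top_k: int = 2) -> str:
    """Focus the tutor on the learner's top_k weakest phonics patterns."""
    # Highest error score first; sorted() is stable, so ties keep insertion order.
    weakest = sorted(profile, key=lambda name: profile[name], reverse=True)[:top_k]
    focus = ", ".join(name.replace("_", " ") for name in weakest) or "general fluency"
    rules = [
        "You are Coach Nova, a patient reading tutor for a learner with dyslexia.",
        f"Focus practice and corrections on these patterns: {focus}.",
        "Wait until the learner has finished reading before you respond.",
        "Do not read the passage for the learner or give away answers; give hints instead.",
        "Correct one mistake at a time, in short, encouraging sentences.",
    ]
    return "\n".join(rules)


if __name__ == "__main__":
    print(build_tutor_prompt({"consonant_blends": 0.6, "vowel_teams": 0.2, "silent_e": 0.4}))
```

Generating the focus line from data, rather than hard-coding it, is what lets the same prompt template follow the learner as their profile changes.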

Accomplishments that we're proud of

We are incredibly proud of the adaptive learning pipeline we built. The system can:

- listen to a student read and identify, through data, that they specifically struggle with consonant blends,

- update their profile in the backend, and

- then instruct the Nova Sonic voice model to specifically prioritize words with consonant blends in the very next session.

That closed loop is huge: it moves the project from a generic wrapper to a truly personalized educational engine. Integrating it seamlessly with Nova's multimodal capabilities and arbitrary internet articles via the Web Reader makes it a complete ecosystem.
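The consonant-blend example suggests a simple selection step before the next session: rank candidate words so that those containing the learner's weak pattern come first, then pass them into the voice model's instructions. The Python sketch below is a hypothetical illustration that detects only word-initial blends from spelling; the blend list, `has_initial_blend`, and `pick_practice_words` are assumptions, and a spelling check is only a rough stand-in for phoneme-level analysis.

```python
# Hypothetical sketch: prioritize practice words that start with a consonant blend.
# Spelling-based only; the blend list below is an illustrative, incomplete set.

INITIAL_BLENDS = (
    "scr", "spl", "spr", "str",
    "bl", "br", "cl", "cr", "dr", "fl", "fr", "gl", "gr", "pl", "pr",
    "sk", "sl", "sm", "sn", "sp", "st", "sw", "tr", "tw",
)


def has_initial_blend(word: str) -> bool:
    # str.startswith accepts a tuple and returns True if any prefix matches.
    return word.lower().startswith(INITIAL_BLENDS)


def pick_practice_words(candidates: list[str], n: int) -> list[str]:
    """Return n words, blend words first, otherwise keeping the original order."""
    # sorted() is stable and False sorts before True, so blend words move to the front.
    ranked = sorted(candidates, key=lambda w: not has_initial_blend(w))
    return ranked[:n]


if __name__ == "__main__":
    print(pick_practice_words(["cat", "stop", "dog", "tree", "fish", "blue"], 4))
```

Falling back to non-blend words when there are too few blend words keeps the session length fixed, so the practice set still fills up on passages with few targets.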

What we learned

We learned a lot about managing low-latency audio streaming and working with bidirectional model APIs. On the product side, we learned that accessibility requires very deliberate technical and design choices—from the frontend hierarchy down to the specific personality and prompt structures we feed into the language models. AI in education works best when it is highly opinionated, context-aware, and data-driven.

What's next for ClearRead

The immediate next step is expanding our metrics dashboard to provide actionable reports for teachers and parents, allowing them to monitor the exact phonics patterns a student is struggling with. We also plan to implement offline caching and a PWA wrapper so students can practice reading their physical books via the camera without needing a constant high-speed internet connection.
