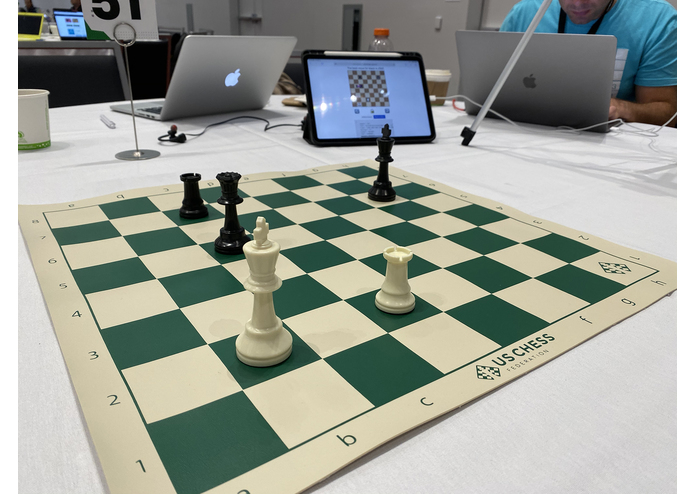

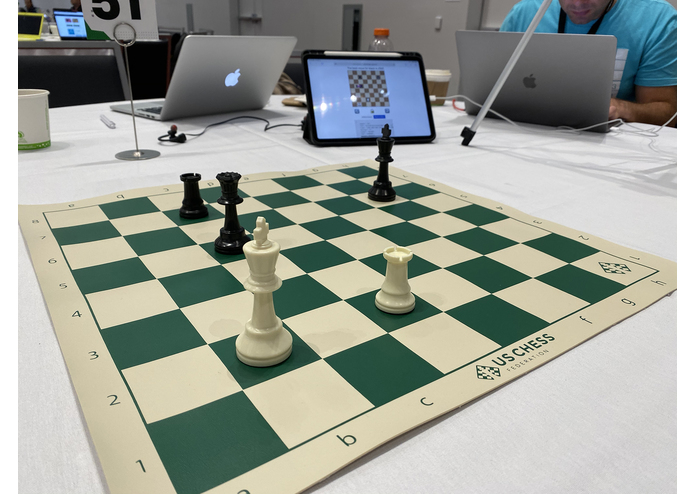

The iPhone captures images and runs a machine learning model to determine which pieces are on the board and where they are.
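The model reports what each piece is and where it is, so the app has to turn those detections into a board. Below is a minimal TypeScript sketch of that step. It assumes a hypothetical `Detection` shape: a FEN-style piece letter plus a normalized bounding box, measured on a photo already cropped to the board with White at the bottom. `detectionsToGrid` and the 0.5 confidence cutoff are illustrative and do not come from Chess Boss's actual code.

```typescript
// Hypothetical detector output (an assumption, not the project's real format).
// The label is a FEN piece letter (uppercase = White, lowercase = Black).
// Coordinates are normalized to [0, 1] with the origin at the top-left of an
// image cropped to the board, White at the bottom.
interface Detection {
  label: string;
  x: number; // left edge of the box
  y: number; // top edge of the box
  width: number;
  height: number;
  confidence: number;
}

// Returns grid[row][col] where row 0 is rank 8 and col 0 is the a-file.
// An empty string marks a square with no accepted detection.
function detectionsToGrid(detections: Detection[], minConfidence = 0.5): string[][] {
  const grid: string[][] = [];
  const bestConfidence: number[][] = [];
  for (let r = 0; r < 8; r++) {
    grid.push(["", "", "", "", "", "", "", ""]);
    bestConfidence.push([0, 0, 0, 0, 0, 0, 0, 0]);
  }

  for (const d of detections) {
    if (d.confidence < minConfidence) continue; // drop weak guesses

    // Assign the piece to the square under the center of its box, clamped so
    // a center exactly on the right or bottom edge stays on the board.
    const centerX = d.x + d.width / 2;
    const centerY = d.y + d.height / 2;
    const col = Math.min(7, Math.max(0, Math.floor(centerX * 8)));
    const row = Math.min(7, Math.max(0, Math.floor(centerY * 8)));

    // If two boxes land on the same square, keep the more confident one.
    if (d.confidence > bestConfidence[row][col]) {
      bestConfidence[row][col] = d.confidence;
      grid[row][col] = d.label;
    }
  }
  return grid;
}
```

Using the box center is a simplification. When the camera is not directly overhead, tall pieces appear to lean into the neighboring rank, so a point nearer the bottom of each box may map better. Apple's Vision framework also reports normalized boxes with the origin at the bottom-left, so `y` would need flipping before this step.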

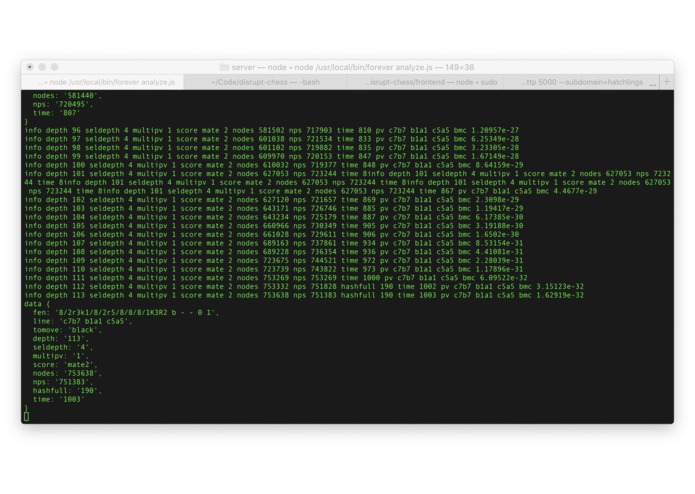

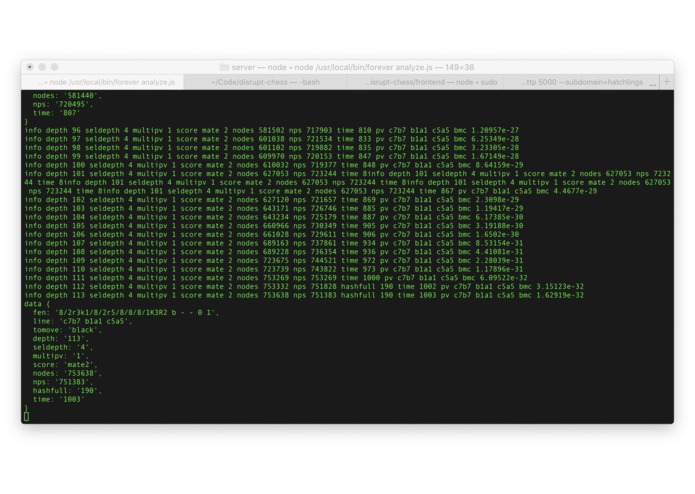

Our Node.js server receives that board state, runs it through the Stockfish chess engine, and passes the results to the web client.
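Stockfish is driven through the text-based UCI protocol. The caller sends `position fen <FEN>` followed by a `go` command, and the engine eventually answers with a `bestmove` line. Below is a minimal sketch of the string handling a Node server could do. It assumes the server receives only the piece-placement field of a FEN from the phone. The helper names, the default side to move, and the search depth are hypothetical choices.

```typescript
// A camera sees where pieces stand but not whose turn it is, castling rights,
// or en passant targets. This sketch fills those FEN fields with defaults
// (an assumption; a real app would have to track or ask for them).
function buildFen(placement: string, sideToMove: "w" | "b" = "w", castling = "-"): string {
  // Fields: placement, side to move, castling, en passant square, halfmove clock, fullmove number.
  return `${placement} ${sideToMove} ${castling} - 0 1`;
}

// Lines to write to the engine's stdin (each terminated by "\n").
function analysisCommands(fen: string, depth = 15): string[] {
  return [`position fen ${fen}`, `go depth ${depth}`];
}

// The search ends with e.g. "bestmove e2e4 ponder e7e5". Stockfish prints
// "bestmove (none)" when the side to move has no legal moves.
function parseBestMove(line: string): string | null {
  const match = /^bestmove\s+(\S+)/.exec(line.trim());
  if (match === null || match[1] === "(none)") return null;
  return match[1];
}

// "info" lines carry the evaluation as "score cp <centipawns>" or
// "score mate <moves>", from the point of view of the side to move.
function parseScore(line: string): { kind: "cp" | "mate"; value: number } | null {
  const match = /\bscore (cp|mate) (-?\d+)/.exec(line);
  if (match === null) return null;
  return { kind: match[1] as "cp" | "mate", value: Number(match[2]) };
}
```

In Node, these strings could be written to the `stdin` of a Stockfish process started with `child_process.spawn`. The server would then read its `stdout` line by line until `parseBestMove` returns a move. The usual UCI handshake is to send `uci` and `isready`, then wait for `readyok` before the first `position` command.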

The iPad displays the best next move via a web interface.
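To show the suggestion on a board, the web client has to turn a UCI move such as `e2e4` (or `e7e8q` for a promotion) into squares it can highlight. Below is a sketch of that conversion. It assumes a hypothetical 64-cell board rendered row by row from rank 8 down to rank 1, a-file first. The type and function names are illustrative only.

```typescript
// Hypothetical board layout: cell 0 is a8, cell 63 is h1, rows run from rank 8 to rank 1.
interface SquareRef {
  name: string; // algebraic name, e.g. "e4"
  index: number; // 0..63 in the layout above
}

interface HighlightedMove {
  from: SquareRef;
  to: SquareRef;
  promotion?: string; // "q", "r", "b" or "n" when a pawn promotes
}

function squareRef(name: string): SquareRef {
  const col = name.charCodeAt(0) - "a".charCodeAt(0); // a-file = 0
  const row = 8 - Number(name.charAt(1)); // rank 8 = row 0
  return { name, index: row * 8 + col };
}

// Parses a move in UCI long algebraic notation into the cells to highlight.
function moveToHighlight(uciMove: string): HighlightedMove {
  if (!/^[a-h][1-8][a-h][1-8][qrbn]?$/.test(uciMove)) {
    throw new Error(`not a UCI move: ${uciMove}`);
  }
  const move: HighlightedMove = {
    from: squareRef(uciMove.slice(0, 2)),
    to: squareRef(uciMove.slice(2, 4)),
  };
  if (uciMove.length === 5) move.promotion = uciMove.charAt(4);
  return move;
}
```

In standard chess, UCI sends castling as the king's move (for example `e1g1`). A client that also wants to highlight the rook would need to handle those moves separately.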

The whole thing is controlled by the state of the physical chess board.
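Because the physical board is the only input, the app has to notice when the position on it has changed. One way to do that is to expand two consecutive board states to 64 cells and list the squares that differ. The sketch below assumes each stable board state arrives as the placement field of a FEN. The function names are hypothetical.

```typescript
// Assumption: board states arrive as FEN piece-placement strings
// (e.g. "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR"). Names are hypothetical.

// Expands a placement into 64 cells, a8 first and h1 last; "." marks an empty square.
function expandPlacement(placement: string): string[] {
  const cells: string[] = [];
  for (const ch of placement.split("")) {
    if (ch === "/") continue;
    if (ch >= "1" && ch <= "8") {
      for (let i = 0; i < Number(ch); i++) cells.push(".");
    } else {
      cells.push(ch);
    }
  }
  if (cells.length !== 64) throw new Error("placement does not describe 64 squares");
  return cells;
}

// Converts a cell index (0 = a8, 63 = h1) to its algebraic name.
function squareName(index: number): string {
  return "abcdefgh".charAt(index % 8) + String(8 - Math.floor(index / 8));
}

// Lists the squares whose contents differ between two board states.
function changedSquares(before: string, after: string): string[] {
  const a = expandPlacement(before);
  const b = expandPlacement(after);
  const changed: string[] = [];
  for (let i = 0; i < 64; i++) {
    if (a[i] !== b[i]) changed.push(squareName(i));
  }
  return changed;
}

// A normal move, capture or promotion changes 2 squares, en passant 3 and castling 4.
// Zero means nothing moved; other counts more likely mean a misread or a move in progress.
function isPlausibleMove(changed: string[]): boolean {
  return changed.length >= 2 && changed.length <= 4;
}
```

A server could then request a fresh Stockfish analysis only when `isPlausibleMove` holds for the change, and ignore all other frames.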

The output of the model isn't perfect, but it's passable!
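Individual frames can be misread, and a hand can cover part of the board mid-move, so it helps not to act on every prediction. One common smoothing approach is to accept a new position only after the model has predicted it for several consecutive frames. The sketch below assumes each frame yields a FEN placement string. `StableBoard` and its default of 3 frames are hypothetical and are not taken from the project.

```typescript
// Hypothetical smoothing step: a predicted position is accepted only after the
// model has produced the same prediction for `required` frames in a row.
class StableBoard {
  private readonly required: number;
  private candidate: string | null = null; // most recent prediction
  private streak = 0; // consecutive frames the candidate has been seen
  private accepted: string | null = null; // last position passed downstream

  constructor(required = 3) {
    this.required = required;
  }

  // Feed one per-frame prediction. Returns the placement when a new position
  // becomes stable, or null when nothing new should be analyzed.
  push(prediction: string): string | null {
    if (prediction === this.candidate) {
      this.streak++;
    } else {
      this.candidate = prediction;
      this.streak = 1;
    }
    if (this.streak >= this.required && prediction !== this.accepted) {
      this.accepted = prediction;
      return prediction;
    }
    return null;
  }

  // The most recently accepted position, or null before any is stable.
  current(): string | null {
    return this.accepted;
  }
}
```

The trade-off is latency. A new position reaches the engine only after at least `required` frames, so the window size should depend on how fast the model runs on the phone.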

Inspiration

We love chess! But manually entering a board position into a chess engine for analysis, and recording your moves by hand during a game, are both a slog.

What it does

We use a machine learning model to automatically understand the state of a chess board from a smartphone camera! We then connect it to the Stockfish chess engine and display the board analysis. It brings the best of computer chess into the physical world.

How we built it

We collected and labeled pictures of a chess board and used them to train a machine learning model to recognize the current state of the board. We then pass that information to Stockfish for analysis and display the results in real time via a web interface!
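Stockfish expects positions in Forsyth-Edwards Notation (FEN). The first field of a FEN lists each rank from 8 down to 1 and uses digits to count runs of empty squares. Below is a sketch of encoding a recognized board into that field. It assumes a hypothetical 8x8 grid of FEN piece letters, with empty strings for empty squares and row 0 as rank 8. `toFenPlacement` is an illustrative name, not the project's code.

```typescript
// Hypothetical input: grid[0] is rank 8 and grid[7] is rank 1; each row runs
// from the a-file to the h-file. Cells hold a FEN piece letter
// (uppercase = White, lowercase = Black) or "" for an empty square.
function toFenPlacement(grid: string[][]): string {
  if (grid.length !== 8 || grid.some((row) => row.length !== 8)) {
    throw new Error("expected an 8x8 grid");
  }
  const ranks: string[] = [];
  for (const row of grid) {
    let rank = "";
    let emptyRun = 0;
    for (const cell of row) {
      if (cell === "") {
        emptyRun++;
        continue;
      }
      if (!/^[PNBRQKpnbrqk]$/.test(cell)) {
        throw new Error(`unknown piece label: ${cell}`);
      }
      if (emptyRun > 0) {
        rank += String(emptyRun); // FEN writes a run of empty squares as one digit
        emptyRun = 0;
      }
      rank += cell;
    }
    if (emptyRun > 0) rank += String(emptyRun);
    ranks.push(rank);
  }
  return ranks.join("/");
}
```

A photo cannot reveal the remaining five FEN fields: side to move, castling rights, en passant square, and the two move counters. Those would have to be supplied separately before the position is sent to the engine.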

Challenges we ran into

Training machine learning models takes a long time... the model we trained isn't perfect yet! But it does a pretty good job considering the time constraints; we're working on a custom model that should do much better soon.

Accomplishments that we're proud of

Getting a machine learning project working end to end in such a short period of time... including collecting and labeling training data at the hackathon!

What we learned

There's lots of room for improvement in bringing computer vision pipelines to mobile... Keras and CoreML don't play nice.

What's next for Chess Boss

We plan to launch a Kickstarter campaign to get funding so we can turn this into a real product that enhances chess for people playing in the real world.

Built With

- ai

- coreml

- firebase

- javascript

- machine-learning

- swift

- vision
