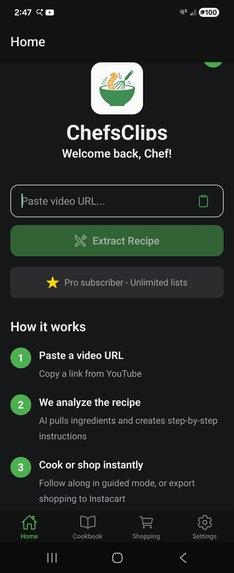

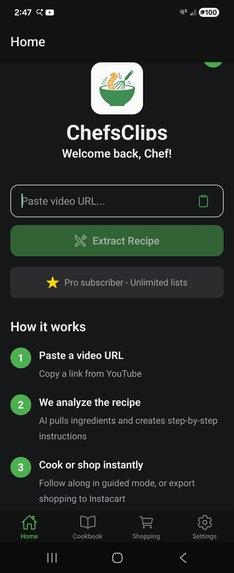

Extract YouTube recipes

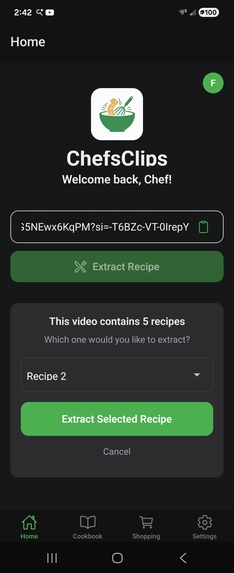

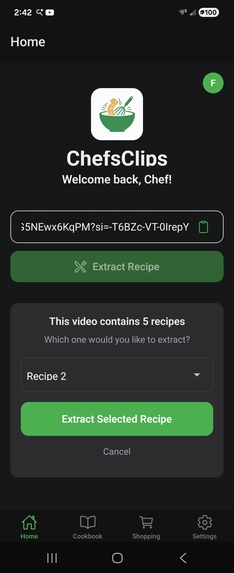

Pick the recipe you want from videos with multiple recipes

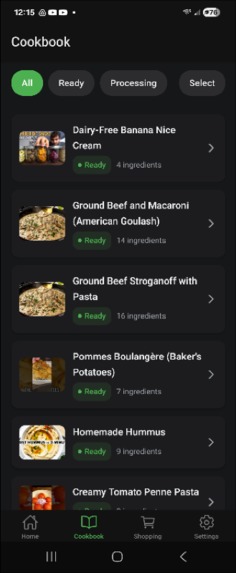

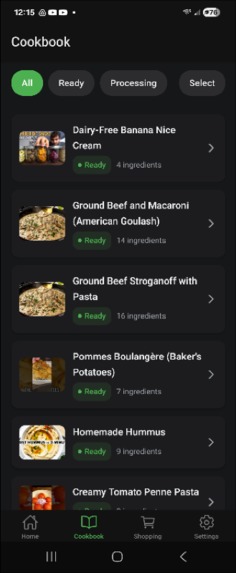

Collect and save your favorite recipes

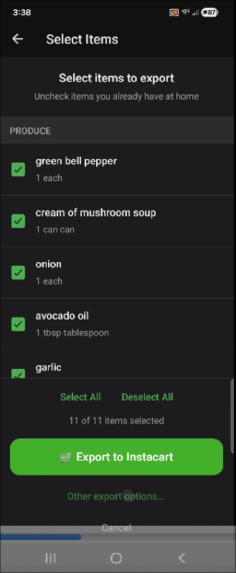

Create smart shopping lists

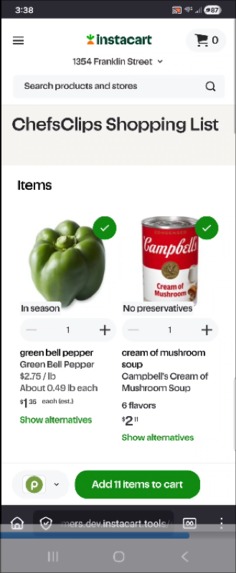

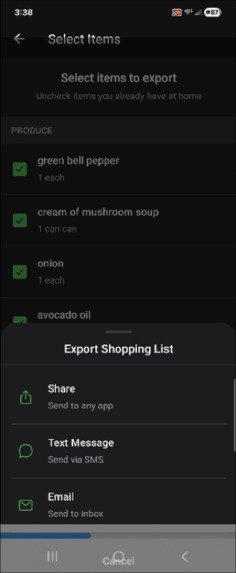

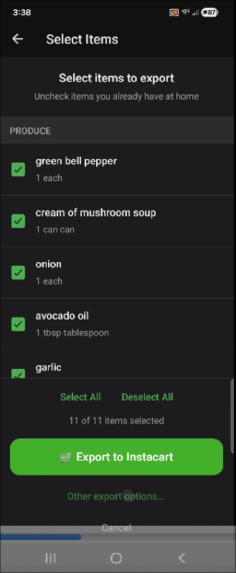

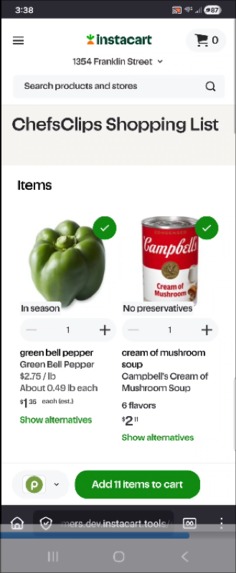

Export shopping lists to Instacart

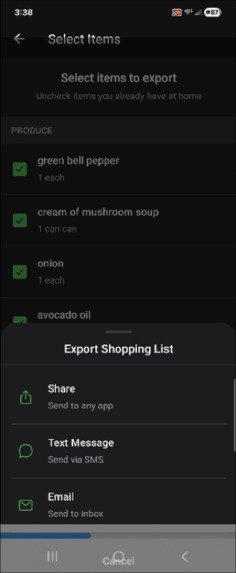

Export shopping lists by text message, email, or to other apps such as your phone's built-in note-taking app

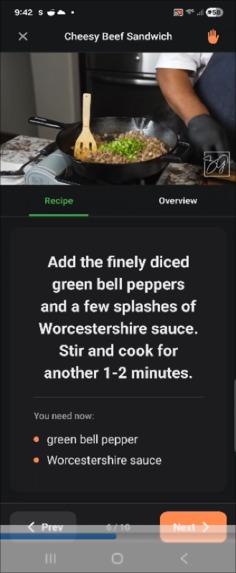

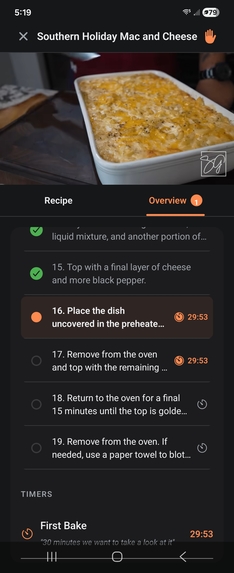

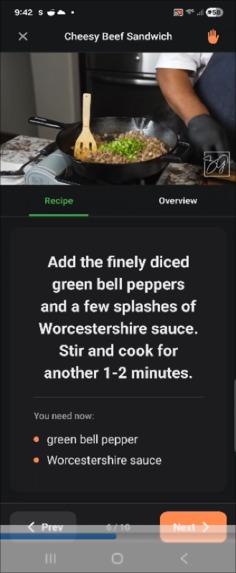

Guided cooking gives you a step-by-step walkthrough while you watch the video on YouTube

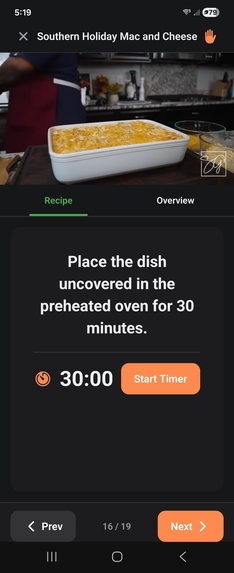

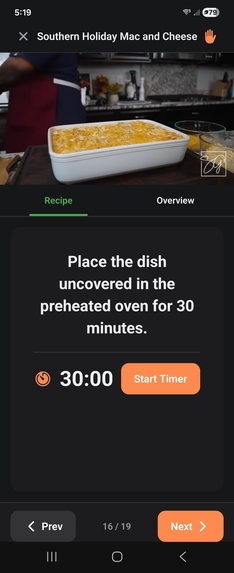

Guided cooking extracts timers for long-running tasks so you can move on to other steps while they run

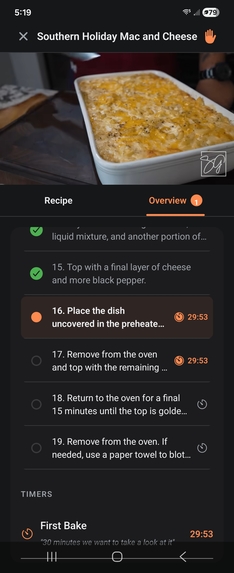

Guided cooking includes an overview that shows which step each in-progress timer relates to (that is, which step is waiting on the timer)

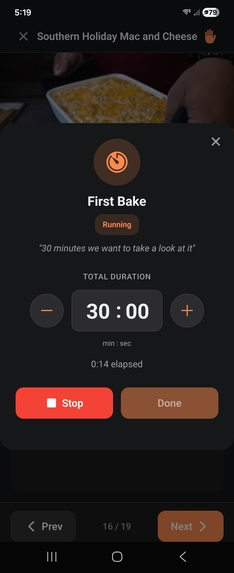

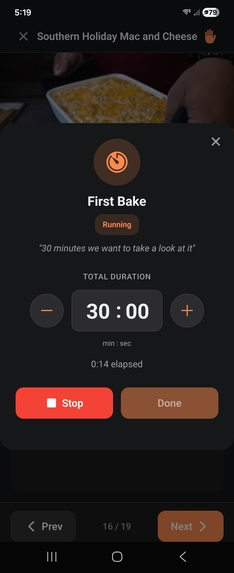

Guided cooking - timers themselves are configurable (in case you want to customize how long your noodles boil, for example)

Inspiration

Last year I was diagnosed with Celiac Disease. On one hand, relief: I finally understood what had been causing me, for years, to live in fear of being more than ten minutes from a bathroom. On the other hand, it meant a pretty massive overhaul of how I eat.

I'm a full-time software consultant with a beautiful but rambunctious two-year-old, and my wife and I both work to keep things going. Before the diagnosis we leaned hard on delivery apps and fast food. Afterward, most of that just wasn't safe anymore.

So I started turning to YouTube to learn how to actually cook. And look — there's amazing content out there. But the process of cooking from a video is awful. You pause, rewind, scribble down ingredients on whatever's nearby, lose your place, rewind again, realize you forgot to buy something, and eventually just pick one recipe for the week and call it done. That was my life for months.

When I read Eitan's brief — reduce the friction of getting his audience back into the kitchen — I didn't see a hackathon prompt. I saw my own Tuesday night. It resonated immediately and honestly made me wonder why I hadn't thought of it before.

I wanted to answer two things: How do you make it as convenient for a YouTube-taught cook to get into the kitchen as it is to just order delivery? And can you do that without screwing over the creators whose content makes the whole thing work?

What it does

ChefsClips keeps things simple. You don't need help getting started — just install the app, pick a video, get the groceries delivered, hit "Let's Cook" and follow the steps. That's it.

Here's what's under the hood:

Recipe Extraction — Paste a YouTube link. The AI analyzes the video and pulls out a structured recipe — ingredients, quantities, categories, steps. A lot of cooking videos actually have multiple recipes in one video, so the app detects that and lets you pick which one you want before moving forward.
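A minimal sketch of what a structured extraction result could look like, assuming the model is prompted to return JSON shaped like `{"recipes": [...]}` so that a multi-recipe video simply yields several entries. The class names, the field names (`start_s`, `end_s`, `category`), and the `parse_recipes` / `choose_recipe` helpers are hypothetical illustrations, not the app's actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Ingredient:
    name: str
    quantity: float | None  # None for "to taste" style entries
    unit: str | None
    category: str  # store section, e.g. "produce" or "dairy"


@dataclass
class Step:
    text: str
    start_s: float  # offset into the video, in seconds
    end_s: float


@dataclass
class Recipe:
    title: str
    ingredients: list[Ingredient] = field(default_factory=list)
    steps: list[Step] = field(default_factory=list)


def parse_recipes(payload: dict) -> list[Recipe]:
    """Turn a decoded model response into Recipe objects (hypothetical schema)."""
    return [
        Recipe(
            title=r["title"],
            ingredients=[Ingredient(**i) for i in r.get("ingredients", [])],
            steps=[Step(**s) for s in r.get("steps", [])],
        )
        for r in payload.get("recipes", [])
    ]


def choose_recipe(recipes: list[Recipe], index: int | None = None) -> Recipe:
    """Skip the picker for single-recipe videos; otherwise require a user choice."""
    if not recipes:
        raise ValueError("no recipes found in video")
    if len(recipes) == 1:
        return recipes[0]
    if index is None:
        raise ValueError(f"video contains {len(recipes)} recipes; ask the user to pick one")
    return recipes[index]
```

Returning a list even for single-recipe videos keeps one code path; the picker only appears when the list has more than one entry.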

Virtual Cookbook — Every recipe you extract gets saved with the thumbnail, title, and ingredients. Over time it becomes a personal cookbook built entirely from videos you already trust.

Smart Shopping Lists — Combine ingredients across multiple recipes into one list. Export straight to Instacart for delivery or pickup, send it as a text, email, or save it to whatever list app you already use.
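Combining ingredients across recipes can be sketched as a simple group-and-sum. This assumes each ingredient is a dict with hypothetical `name`, `quantity`, `unit`, and `category` keys, and it only merges items whose normalized name and unit both match; unit conversion (cups vs. grams) is deliberately out of scope here.

```python
from __future__ import annotations

from collections import defaultdict


def merge_shopping_list(recipes: list[list[dict]]) -> list[dict]:
    """Merge ingredient lists from several recipes into one grocery list."""
    totals: dict[tuple[str, str], float] = defaultdict(float)
    categories: dict[tuple[str, str], str] = {}
    for ingredients in recipes:
        for item in ingredients:
            # Normalize so "Garlic" and "garlic " collapse into one entry.
            key = (item["name"].strip().lower(), (item.get("unit") or "").strip().lower())
            # "To taste" items have no quantity; they still appear, with 0.
            totals[key] += item.get("quantity") or 0.0
            categories.setdefault(key, item.get("category", "other"))
    merged = [
        {"name": name, "unit": unit or None, "quantity": qty, "category": categories[(name, unit)]}
        for (name, unit), qty in totals.items()
    ]
    # Group by store section, then alphabetically within each section.
    merged.sort(key=lambda i: (i["category"], i["name"]))
    return merged
```

Keying on the unit as well as the name is the conservative choice: it never adds "2 cloves" to "1 head" of garlic incorrectly, at the cost of occasionally showing two lines for the same ingredient.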

Guided Cooking — This is the thing I'm most excited about. ChefsClips builds a step-by-step walkthrough synced directly to the creator's original video. Two modes:

- Guided — the video stops after each step and waits for you to hit "Next." You never lose your place. Trust me, this is a lifesaver, especially with YouTube Shorts where everything moves fast.

- Streamlined — the video just plays through like normal, and the app updates the instructions as the video progresses. Better for longer content where you want the flow.
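Both modes reduce to mapping the player's current position onto the extracted step timestamps. A minimal sketch, assuming steps are stored as sorted start and end offsets in seconds; `current_step` and `should_pause` are hypothetical helper names, and how the app actually reads the player position is not shown.

```python
from __future__ import annotations

from bisect import bisect_right


def current_step(step_starts: list[float], position_s: float) -> int:
    """Streamlined mode: index of the step whose segment contains position_s.

    step_starts must be sorted ascending. Before the first step starts we
    return 0 so the UI still shows the opening instruction.
    """
    return max(bisect_right(step_starts, position_s) - 1, 0)


def should_pause(step_ends: list[float], step_index: int, position_s: float) -> bool:
    """Guided mode: True once playback reaches the end of the active step.

    The app would then pause the video and wait for the user to tap "Next".
    """
    return position_s >= step_ends[step_index]
```

A binary search keeps the lookup cheap even if it runs on every playback tick, and using `bisect_right` means a position exactly on a step boundary counts as the start of the next step.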

Smart Timers — When a step involves waiting (boiling, baking, resting), the app extracts those timers automatically. Each timer tracks which step kicked it off and which step needs it done. So when you're chopping vegetables and the pasta is boiling, you're not trying to remember when you started it or how long it's been.
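A timer that remembers which step started it and which step depends on it could be modeled roughly like this. `StepTimer` and its fields are hypothetical; the clock is passed in explicitly (for example from `time.monotonic()`) so the logic stays easy to test.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class StepTimer:
    label: str
    duration_s: float  # user-editable, e.g. to boil noodles a bit longer
    started_by_step: int  # step that kicks the timer off ("drop the pasta")
    needed_by_step: int  # step that waits on it ("drain and toss")
    started_at: float | None = None  # clock reading; None until started

    def start(self, now: float) -> None:
        self.started_at = now

    def remaining(self, now: float) -> float:
        """Seconds left, clamped at zero; full duration if not started yet."""
        if self.started_at is None:
            return self.duration_s
        return max(self.duration_s - (now - self.started_at), 0.0)

    def blocks(self, step_index: int, now: float) -> bool:
        """True if step_index depends on this timer and it hasn't finished."""
        return step_index == self.needed_by_step and self.remaining(now) > 0
```

Storing a start time instead of counting down in a loop means the remaining time stays correct even if the app is backgrounded while you chop vegetables.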

I've been using this thing myself throughout development and it dramatically reduces the mental load of cooking from a video. You just stop worrying about losing your place and actually cook. It's a completely different experience.

How this works WITH creators, not against them

OK, this part matters and I want to explain it properly because it shaped the entire project.

Early on, I tried pulling video data directly using yt-dlp and got blocked after my second attempt. My heart sank — I thought I'd picked a brief that couldn't be built without violating someone's terms of service.

But then I found the path through Google's Gemini API. Since YouTube and Gemini are both Google products, I can analyze video content through legitimate channels. No scraping, no sketchy third-party services, and no trying to bypass bot protections, which would put any real chance of scaling at serious risk the moment YouTube caught on.

Here's the key detail: the only information users see outside of the video player is the ingredient list — and ingredients are facts, not copyrightable expressions. Anytime the user views actual cooking instructions, it's displayed alongside the creator's video. Every recipe view in guided cooking is a view of the creator's content. That means ChefsClips actually increases viewership for creators while making their content way more useful.

This is what I believe moves ChefsClips from a simple hackathon hack to a monetizable platform that is grounded in business ethics and genuine innovation.

How we built it

I learned about this challenge two weeks before it closed. Between my day job and family, there was absolutely no way I was building this to my own standards without AI. And I'll be honest — I'd been slow to adopt these tools. This project changed that. I learned a lot about them, their limitations, and the way that they reduce my own.

The stack:

- Mobile: Expo SDK 54 (React Native), Android-first

- Backend: FastAPI on Vultr, Caddy reverse proxy, SQLite

- Auth: Clerk

- Subscriptions: RevenueCat

- AI: Google Gemini API — not chosen because it's trendy. It was the only approach I found that let me analyze YouTube videos without violating platform terms. This was a business decision as much as a technical one.

Claude Code did most of the actual code generation once I had a detailed spec. But getting to that spec? That took days of conversation, thinking through edge cases, designing directory structure, mapping data flow. More on that in "What we learned."

Challenges we ran into

Legal is the big one. Copyright, YouTube ToS, Instacart API compliance, creator rights — I spent more time on legal research than I expected to spend on this entire project. The yt-dlp incident early on genuinely made me think the brief was impossible to satisfy. Finding the Gemini path was a huge relief.

Timestamp accuracy. Getting Gemini to produce step timestamps that actually line up with the video required a lot of prompt engineering work. At one point the AI was subtracting time for sponsor segments that are baked into the video timeline, which meant every step after minute two was off by about two full minutes. Debugging something nondeterministic is a special kind of fun.

Time. Two weeks. Solo. Full-time job. Toddler. I need more coffee.

Accomplishments that we're proud of

- It works. Like, for real. I've already been using it in my kitchen and it's awesome. That's not a sales pitch — I'm genuinely surprised at how much easier it makes things.

- The ethical stuff is resolved. It's not always easy, but I really do try to do the right thing. Beyond the legal details, I believe this is a win-win-win for platforms, creators, and viewers. Plus maybe one more win for me.

- The fact that I got a product name that rhymes with "chef's kiss" is, for me, chef's kiss.

What we learned

Ever notice how wizards in Harry Potter still need to know how to read?

What you can do with LLMs these days feels like magic, especially coming from a background of poking around in low-level C projects. But using magic still takes skill and forethought. I spent days conversing with Claude — describing architecture, directory structure, design patterns, data models — in extreme detail before I finally told it to generate a spec and submit it to an instance of Claude Code running on my machine. When I did? Basically just... done. Poof. No bugs. It was unbelievable.

But then I kept going — integration, database, testing, finishing multiple features simultaneously (something I would never attempt solo under normal circumstances). And I realized I had to keep a massive amount of context in my head while the AI chugged away at the lower layers. The mental engagement was the same as writing the code myself. The difference was that I could see further ahead than I'd normally dare to.

I also insisted on clean directory structure and intentional design so I could find my way around the codebase without spending tokens when I wanted to tweak something. And I can. Honestly, the code is better than what I would have written on a first try with twice as much time.

The other big lesson: AI output is nondeterministic in ways that actually matter. Gemini occasionally hands back malformed JSON or timestamps that violate basic arithmetic. You don't trust it. You build validation layers that catch the garbage before it reaches users. That's the deal.
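A validation layer in that spirit might look like the sketch below. It assumes the model returns `{"steps": [...]}` with hypothetical numeric `start_s` and `end_s` fields; the specific checks (parseable JSON, start before end, no overlap, nothing past the end of the video) are illustrative rather than the app's actual rules.

```python
from __future__ import annotations

import json


def validate_extraction(raw: str, video_duration_s: float) -> tuple[list, list[str]]:
    """Parse model output and collect problems instead of trusting it.

    Returns (steps, errors). Any error means the caller should retry the
    request or drop the bad steps rather than show them to the user.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [], [f"malformed JSON: {exc.msg}"]

    steps = data.get("steps") if isinstance(data, dict) else None
    if not isinstance(steps, list) or not steps:
        return [], ["response has no 'steps' list"]

    errors: list[str] = []
    prev_end = 0.0
    for i, step in enumerate(steps):
        if not isinstance(step, dict):
            errors.append(f"step {i}: not an object")
            continue
        start, end = step.get("start_s"), step.get("end_s")
        if not isinstance(start, (int, float)) or not isinstance(end, (int, float)):
            errors.append(f"step {i}: missing or non-numeric timestamps")
            continue
        if start >= end:
            errors.append(f"step {i}: starts at {start}s but ends at {end}s")
        if start < prev_end:
            errors.append(f"step {i}: overlaps the previous step")
        if end > video_duration_s:
            errors.append(f"step {i}: ends after the video does ({video_duration_s}s)")
        prev_end = end
    return steps, errors
```

Collecting every error rather than raising on the first one makes it easier to see patterns in bad responses, which helps when tuning the prompt.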

What's next for ChefsClips

- Dietary restriction awareness — Starting with gluten-free substitutions, because as a Celiac this is personal. Then expanding to nut allergies, dairy alternatives, and more.

- Smarter shopping lists — Reusable lists, importing items from text messages, better deduplication across recipes.

- iOS release — Built with Expo, so this is configuration and App Store registration, not a rebuild.

- Pantry tracking — If users shop through ChefsClips regularly, I can infer what they probably have on hand and suggest recipes they're already equipped to cook. This was actually the other half of Eitan's original brief — and ChefsClips is in a unique spot to deliver on it.

I'm planning to launch on the Play Store not long after this contest wraps. If you're interested, sign up on the website!

Built With

- clerk

- expo.io

- fastapi

- instacart

- kroger

- llm

- postgresql

- python

- react-native

- revenuecat

- sqlalchemy

- sqlite
