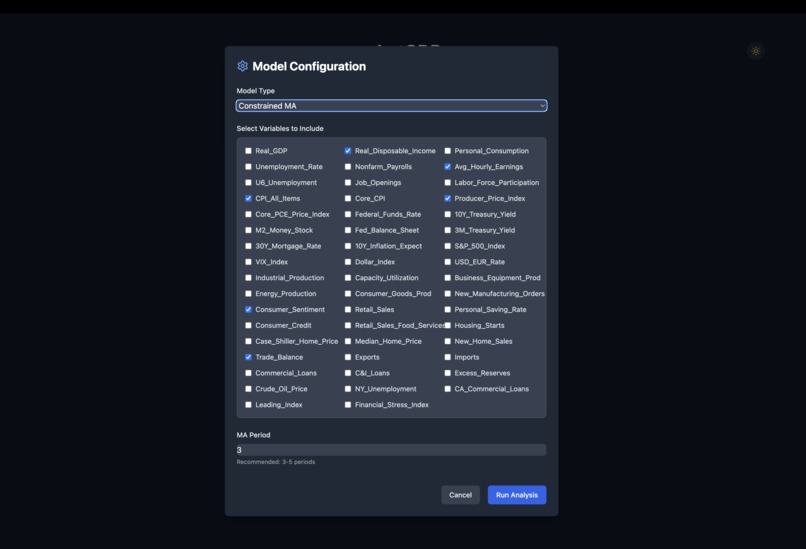

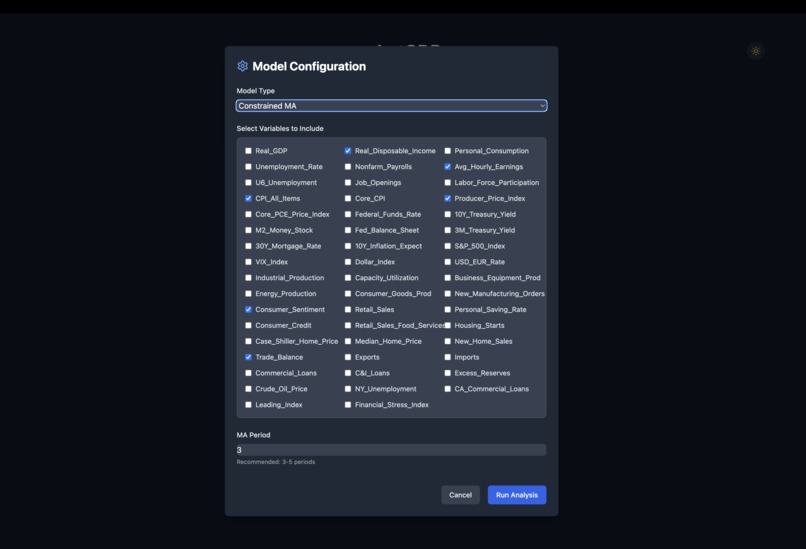

Model configuration using US economic data from 1947 to 2024, with a choice of model types and hyperparameters

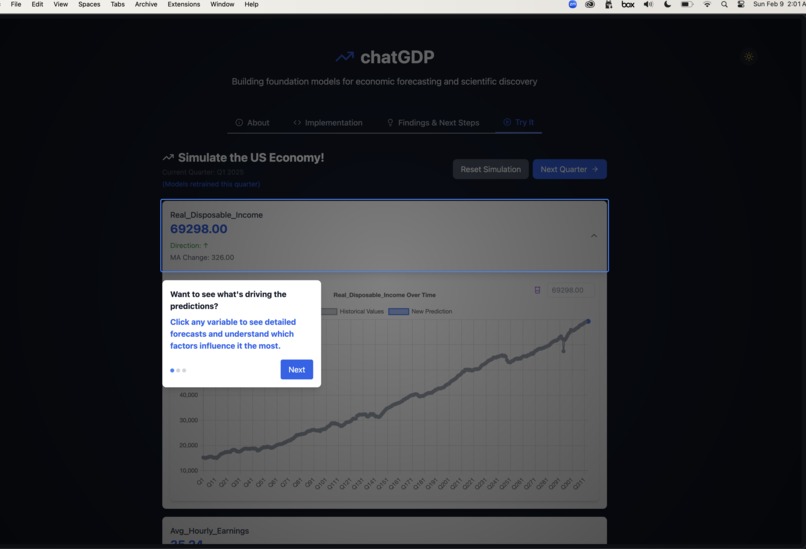

Tour of features, for accessibility and ease of use across disciplines

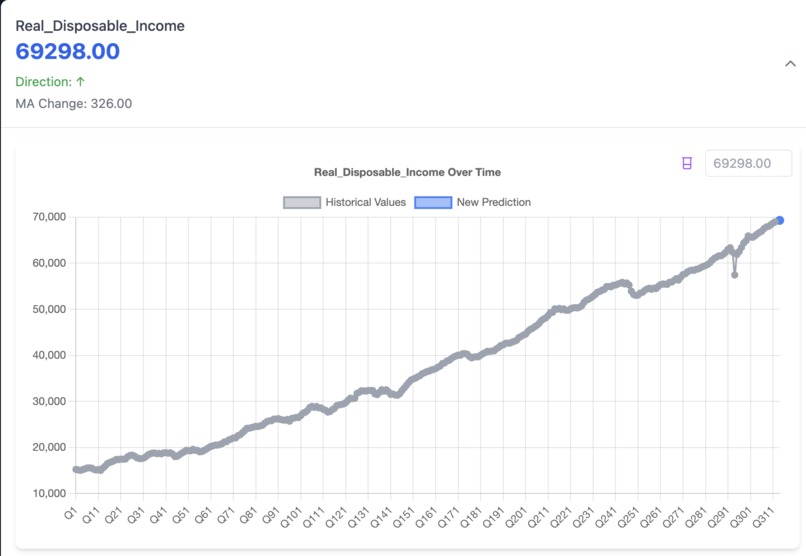

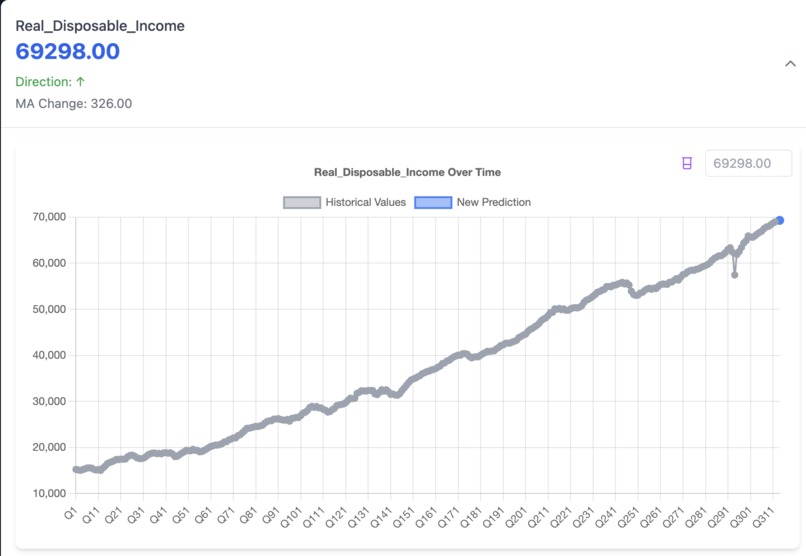

One step of the economy simulation, showing a new prediction and the button (beaker, top right) to run experiments in the simulation.

ChatGDP: A Generative Model of the Economy

Inspiration

Real-world economies are far more complex than traditional models assume. Instead of relying solely on broad theories, imagine a model that can predict the impact of a policy change (say, a 10% tariff on China) down to individual firms, communities, and people. Deep generative models of the economy could enable more efficient policies and a more equitable distribution of gains. ChatGDP is a toy prototype, built in under 24 hours, meant to spark ideas and further research in this area.

What It Does

ChatGDP takes quarterly macroeconomic data from any country and predicts the state of the economy in the next quarter by mapping every input feature forward. Key features include:

- Local Models: Each economic feature is predicted independently using data from the previous quarter.

- Multiple Forecasting Methods:

  - A volatile yet expressive autoregression model.

  - A simpler moving average model that aligns closely with historical data.

- Flexibility: Works with data of any resolution (quarterly, daily, etc.) and is adaptable to any economy.
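
To make the "local model" idea concrete, here is a minimal TypeScript sketch of one simulation step in which every feature is forecast independently from its own history. It is an illustration under stated assumptions, not the ChatGDP source: the names `Economy`, `movingAverageForecast`, `ar1Forecast`, and `stepEconomy`, the window of 4 periods, and the choice of a first-order autoregression fitted by least squares are all hypothetical.

```typescript
// Hypothetical types and names for illustration; not taken from the ChatGDP source.
type Series = number[];
type Economy = Record<string, Series>;

// Moving-average forecast: mean of the last `window` observations.
function movingAverageForecast(series: Series, window = 4): number {
  if (series.length === 0) throw new Error("series is empty");
  const recent = series.slice(-window);
  return recent.reduce((sum, x) => sum + x, 0) / recent.length;
}

// AR(1) forecast: fit x[t] = a + b * x[t-1] by ordinary least squares,
// then apply the fitted line to the latest observation.
function ar1Forecast(series: Series): number {
  const n = series.length - 1; // number of (previous, next) pairs
  if (n < 2) throw new Error("need at least three observations");
  const prev = series.slice(0, n);
  const next = series.slice(1);
  const meanPrev = prev.reduce((s, x) => s + x, 0) / n;
  const meanNext = next.reduce((s, x) => s + x, 0) / n;
  let cov = 0;
  let varPrev = 0;
  for (let i = 0; i < n; i++) {
    cov += (prev[i] - meanPrev) * (next[i] - meanNext);
    varPrev += (prev[i] - meanPrev) ** 2;
  }
  // A flat history has no slope to estimate; fall back to the mean.
  const b = varPrev === 0 ? 0 : cov / varPrev;
  const a = meanNext - b * meanPrev;
  return a + b * series[n];
}

// One simulation step: forecast every feature independently of the others.
function stepEconomy(
  economy: Economy,
  forecast: (s: Series) => number,
): Record<string, number> {
  const next: Record<string, number> = {};
  for (const [name, series] of Object.entries(economy)) {
    next[name] = forecast(series);
  }
  return next;
}
```

Because each forecaster only sees one series at a time, swapping `movingAverageForecast` for `ar1Forecast` changes the behavior of the whole simulation without touching `stepEconomy`. Nothing in the sketch depends on the data being quarterly.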

How It Was Built

The project followed a "vibes coding" approach, combining AI coding tools with iterative development:

- Backend & Frontend Integration: Initial challenges in linking the two were overcome using AI tools (like bolt.new and Cursor) and by translating Python code examples into TypeScript.

- Modeling: Early experiments with transformer models gave way to simpler, local models. Random forest regressors were first tried but tended to converge to historical averages, so they were refined by updating weights every simulated year. A constrained moving average model that predicts trend continuations or breaks proved more realistic.

- Frontend: The frontend was built step by step without prior frontend experience, using representative Python code as a guide for the TypeScript implementation.

Challenges

Modeling

- Data Resolution: Lack of high-resolution, microeconomic data made it hard to train deep models.

- Model Convergence: Early random forest approaches converged too quickly to historical averages.

- Simpler Alternatives: Constrained models focusing on the magnitude of change provided a more realistic simulation.
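
One way to read "constrained models focusing on the magnitude of change" is sketched below in TypeScript. This is a guess at the general shape, not the project's actual model: the function name `constrainedStep`, the continuation probability, the relative cap on the size of a move, and the injectable `random` source are all assumptions made for illustration.

```typescript
// Hypothetical sketch: the names, the continuation probability, and the cap
// rule are assumptions, not details taken from the ChatGDP source.
function constrainedStep(
  series: number[],
  window = 4,
  continueProb = 0.7,
  maxRelativeChange = 0.05,
  random: () => number = Math.random,
): number {
  if (series.length < 2) throw new Error("need at least two observations");
  const last = series[series.length - 1];
  // Period-over-period changes across the whole history.
  const changes = series.slice(1).map((x, i) => x - series[i]);
  const recent = changes.slice(-window);
  // Typical size of a recent move (mean absolute change).
  const typical = recent.reduce((s, c) => s + Math.abs(c), 0) / recent.length;
  // Constraint: a single step may not exceed a fixed fraction of the current level.
  const magnitude = Math.min(typical, maxRelativeChange * Math.abs(last));
  // Direction: continue the last move's sign, or break the trend.
  const lastSign = Math.sign(changes[changes.length - 1]) || 1;
  const sign = random() < continueProb ? lastSign : -lastSign;
  return last + sign * magnitude;
}
```

Separating the size of the move from its direction keeps the simulated path from drifting back to the historical mean, while the cap keeps any single step within a plausible range. Passing a seeded or fixed `random` function makes a run reproducible.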

Frontend Development

- Experience Gap: Limited frontend expertise made integration challenging.

- Tool Limitations: AI assistants specialized in frontend tasks often struggled with backend integration, necessitating a more modular approach.

Accomplishments

- Built a realistic model predicting future economic conditions.

- Developed interpretable models that allow interaction and experimentation.

- Demonstrated the potential of generative AI in economic modeling, inspiring further innovation.

Lessons Learned

- Deep Models: Require significant time, effort, and high-resolution data.

- Local Models: Can effectively capture empirical economic intuition when properly constrained.

- Development Order: Building the backend first is crucial when working with AI coding assistants.

What's Next

- Data Expansion: Incorporate microeconomic data (e.g., firm and community-level) to enhance resolution.

- Backend Enhancement: Develop a dedicated Python backend with GPU support to train deeper models.

- Validation & Backtesting: Implement automatic validation and backtesting to streamline model iteration.
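
As a rough illustration of what automatic backtesting could look like, the TypeScript sketch below runs a walk-forward evaluation: at each step the forecaster sees only the history before the target period, and the mean absolute error is accumulated. The names `backtest` and `lastValue` and the `minHistory` parameter are hypothetical, and the error metric is an assumption; the project does not specify one.

```typescript
// Hypothetical helper: walk-forward backtest of any one-step forecaster.
function backtest(
  series: number[],
  forecast: (history: number[]) => number,
  minHistory = 4,
): { mae: number; count: number } {
  let totalError = 0;
  let count = 0;
  for (let t = minHistory; t < series.length; t++) {
    // Only observations strictly before period t are visible to the model.
    const predicted = forecast(series.slice(0, t));
    totalError += Math.abs(predicted - series[t]);
    count++;
  }
  if (count === 0) throw new Error("series too short for backtest");
  return { mae: totalError / count, count };
}

// Naive baseline: predict that the next value equals the latest one.
const lastValue = (history: number[]): number => history[history.length - 1];

// Example: score the naive baseline on a short upward-trending series.
const result = backtest([1, 2, 3, 4, 5, 6], lastValue);
console.log(result.mae, result.count);
```

Comparing every candidate model against a naive baseline like `lastValue` on the same series gives a quick check of whether added complexity is actually improving the forecasts.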

Built With

- autoregressive-models

- chart.js

- deep-learning

- moving-average-models

- netlify

- papaparse

- random-forest-regressors

- react

- tailwindcss

- typescript

- vite
