Inspiration

We wanted to explain why a race car behaved the way it did by tracing setup changes and observations back to physical mechanisms. This is not a lap-time optimizer or a setup recommender. A Reddit post made the point that race engineers don’t struggle with data; they struggle with causality. After a setup change or a telemetry shift, the hardest question isn’t what changed but why the car behaved differently. Today, that knowledge lives in people’s heads and notebooks.

What it does

ChassisWhy

- Explains cause and effect in race car handling

- Translates setup changes, telemetry deltas, and driver feedback into physical mechanisms

- Structures reasoning around vehicle-dynamics concepts like:

  - Load transfer

  - Tire saturation

  - Compliance and damping

- Produces explanations that engineers can review, challenge, and trust

- Helps teams understand why a behavior appeared, not just what changed
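For a sense of the physics behind the first of these concepts, here is a textbook relation. It is not necessarily how ChassisWhy encodes load transfer. In steady-state cornering, treating the car as a rigid body with equal front and rear track, the total lateral load transfer from the inside to the outside tires is:

$$
\Delta F_z = \frac{m \, a_y \, h_{cg}}{t}
$$

Here $m$ is the car's mass, $a_y$ the lateral acceleration, $h_{cg}$ the center-of-gravity height, and $t$ the track width. How that total splits between the front and rear axles depends on roll stiffness distribution and roll-center geometry. This is why an anti-roll bar change can shift the car's balance without changing the total.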

What it doesn’t do

- Does not predict lap time

- Does not automatically recommend setups

- Does not replace race engineers or mechanics

- Does not claim perfect or universal correctness

- Does not use black-box machine learning on telemetry

ChassisWhy is a cause-and-effect explanation engine that helps race engineers and mechanics understand why a car’s handling changed, using structured vehicle-dynamics reasoning rather than black-box predictions. For example, an engineer uploads race video footage, and ChassisWhy explains the car’s behavior by tracing it back to setup changes.

ChassisWhy externalizes race-engineering reasoning so that its explanations can be reviewed, and it uses real engineering language. It scales from club racing to pro teams.

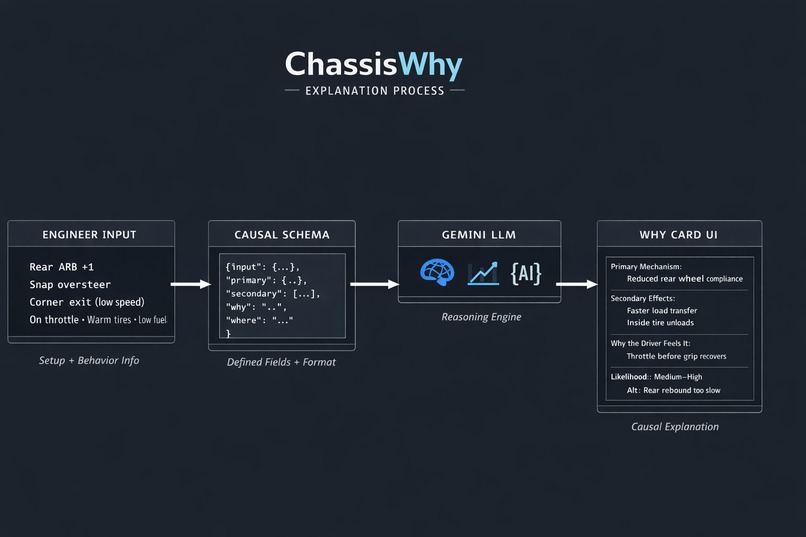

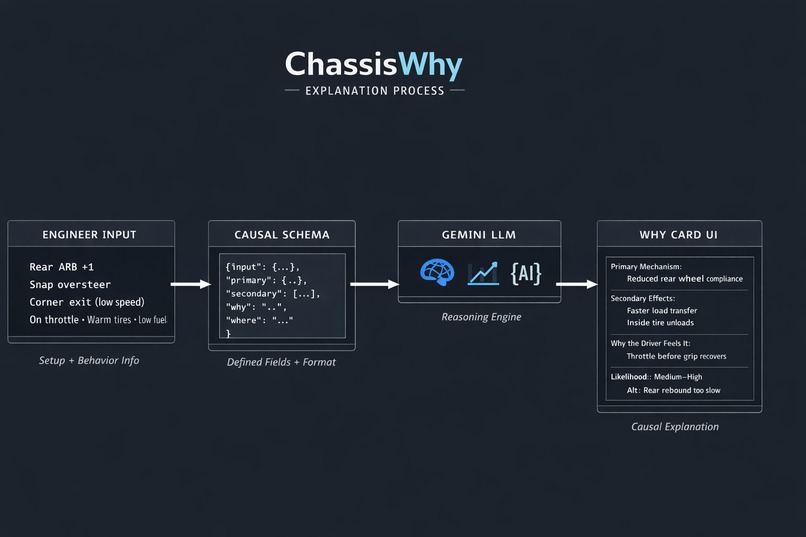

How we built it

We built a causal reasoning layer on top of a structured vehicle-dynamics schema covering mechanisms such as load transfer, tire saturation, and compliance. We then use Gemini 3 to generate explanations strictly within that schema. We chose Gemini because it can:

- Hold long session context

- Combine telemetry, setup data, and driver language

- Produce human-readable causal explanations

- Explain causal chains, not just outputs
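The following is a minimal sketch of one way to keep explanations "strictly within the schema." It assumes the schema is a fixed set of mechanism names that the prompt enumerates. `Mechanism`, `ChangeReport`, and `build_prompt` are hypothetical names used for illustration. The actual Gemini request is left out. The prompt asks for JSON so the answer can be checked against the schema afterwards.

```python
from dataclasses import dataclass
from enum import Enum


class Mechanism(str, Enum):
    """Hypothetical schema: the only mechanisms an explanation may cite."""
    LOAD_TRANSFER = "load_transfer"
    TIRE_SATURATION = "tire_saturation"
    COMPLIANCE = "compliance"
    DAMPING = "damping"


@dataclass
class ChangeReport:
    """Hypothetical input: what changed, what was observed, what the driver said."""
    setup_change: str
    observation: str
    driver_feedback: str = ""


def build_prompt(report: ChangeReport) -> str:
    """Restricts the model to schema mechanisms and asks for a JSON causal chain."""
    allowed = ", ".join(m.value for m in Mechanism)
    return (
        "Explain the car's behavior as a race engineer would.\n"
        f"Use only these mechanisms: {allowed}.\n"
        f"Setup change: {report.setup_change}\n"
        f"Observation: {report.observation}\n"
        f"Driver feedback: {report.driver_feedback or 'none'}\n"
        'Reply as JSON: {"chain": [{"mechanism": "...", "effect": "..."}]}'
    )


print(build_prompt(ChangeReport("Rear spring rate +10%", "Snap oversteer on corner exit")))
```

Generating the allowed list from the enum keeps the prompt and any later validation in sync, because both read from the same schema.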

We built the Why Card from three parts:

- A minimal input form

- A Gemini call

- The rendered Why Card UI
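The three parts above can be sketched end to end as follows. This is a hypothetical outline, not the project's code. `call_model` stands in for the Gemini request, the JSON response shape is an assumption, and the UI is reduced to plain text. The canned response is illustrative only.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class WhyCard:
    """Hypothetical Why Card: one change, one observation, one causal chain."""
    change: str
    observation: str
    chain: list[tuple[str, str]]  # (mechanism, effect) pairs in causal order


def make_why_card(change: str, observation: str,
                  call_model: Callable[[str], str]) -> WhyCard:
    """Form values -> model call -> parsed card.

    Assumes the model replies with JSON shaped like
    {"chain": [{"mechanism": "...", "effect": "..."}]}.
    """
    prompt = f"Setup change: {change}\nObservation: {observation}\nExplain why as JSON."
    data = json.loads(call_model(prompt))
    chain = [(step["mechanism"], step["effect"]) for step in data["chain"]]
    return WhyCard(change, observation, chain)


def render(card: WhyCard) -> str:
    """Plain-text rendering; a web UI would display the same fields."""
    lines = [f"Change: {card.change}", f"Observed: {card.observation}", "Why:"]
    for i, (mechanism, effect) in enumerate(card.chain, start=1):
        lines.append(f"  {i}. [{mechanism}] {effect}")
    return "\n".join(lines)


def fake_model(prompt: str) -> str:
    """Canned stand-in for a Gemini call, so the sketch runs offline."""
    return json.dumps({"chain": [
        {"mechanism": "load_transfer",
         "effect": "A stiffer front bar moves more lateral load transfer to the front axle."},
        {"mechanism": "tire_saturation",
         "effect": "Front grip drops relative to the rear, so the car understeers."},
    ]})


card = make_why_card("Front anti-roll bar +2 clicks", "Mid-corner understeer", fake_model)
print(render(card))
```

Passing the model call in as a function keeps parsing and rendering testable without network access.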

Challenges we ran into

We needed every explanation to stay consistent, running from the input change to the observed change in behavior. Fortunately, maintaining that mechanical consistency across explanations is something Gemini 3 does well.
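One way to enforce that consistency mechanically is to check each returned chain before rendering it. The sketch below is hypothetical: `Step`, `check_chain`, and `ALLOWED` are illustrative names, and the allowed set simply mirrors the schema concepts named earlier. It verifies that the chain starts at the input change, ends at the observed change, uses only schema mechanisms, and has no gaps between steps.

```python
from dataclasses import dataclass

# Hypothetical allowed mechanisms, mirroring the schema concepts.
ALLOWED = {"load_transfer", "tire_saturation", "compliance", "damping"}


@dataclass
class Step:
    cause: str
    mechanism: str
    effect: str


def check_chain(steps: list[Step], change: str, observation: str) -> list[str]:
    """Returns consistency problems; an empty list means the chain passes."""
    if not steps:
        return ["empty chain"]
    problems = []
    if steps[0].cause != change:
        problems.append("chain does not start at the input change")
    if steps[-1].effect != observation:
        problems.append("chain does not end at the observed change")
    for i, step in enumerate(steps):
        if step.mechanism not in ALLOWED:
            problems.append(f"step {i + 1}: mechanism '{step.mechanism}' is outside the schema")
        # Each step must start where the previous one ended.
        if i > 0 and step.cause != steps[i - 1].effect:
            problems.append(f"step {i + 1}: cause does not follow from step {i}")
    return problems


chain = [
    Step("front bar stiffer", "load_transfer", "more front lateral load transfer"),
    Step("more front lateral load transfer", "tire_saturation", "mid-corner understeer"),
]
print(check_chain(chain, "front bar stiffer", "mid-corner understeer"))  # []
```

Exact string matching keeps the sketch short. A real check would more likely compare structured identifiers than free text.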

Accomplishments that we're proud of

Our app explains cause and effect in vehicle dynamics. Given a change, such as a setup adjustment, a telemetry delta, or driver feedback, it explains the likely physical mechanisms behind the resulting behavior. It does not replace race engineers; think of it as a junior race engineer in software form.

What we learned

What's next for ChassisWhy

Built With

- gemini-3-flash-preview
