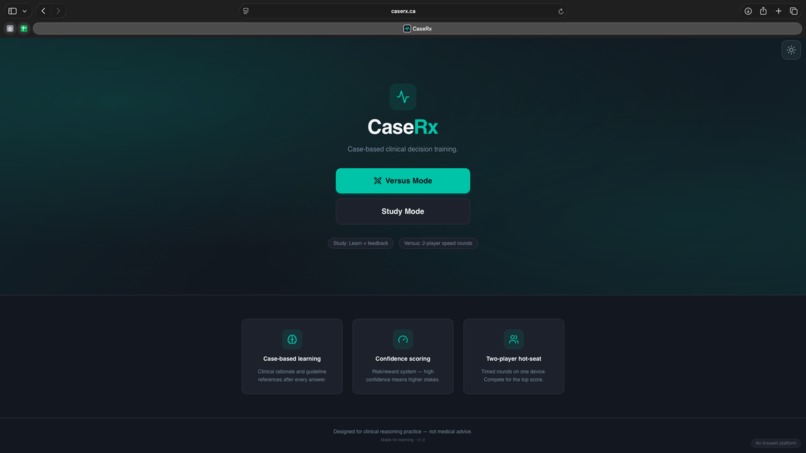

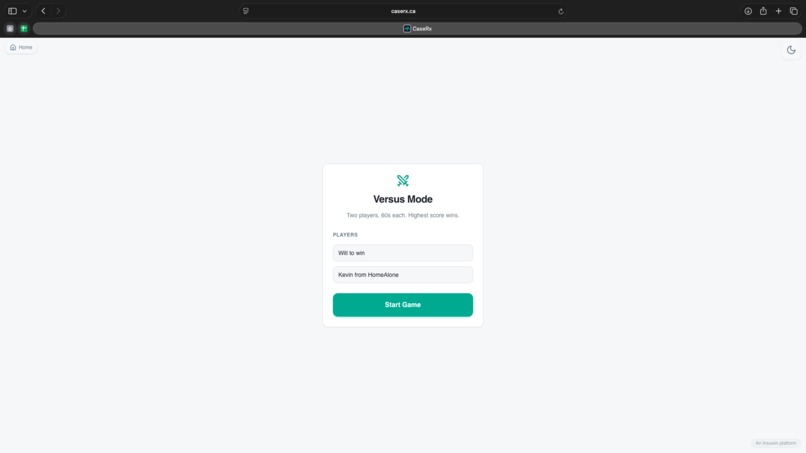

Sharpen your skills or play a friend

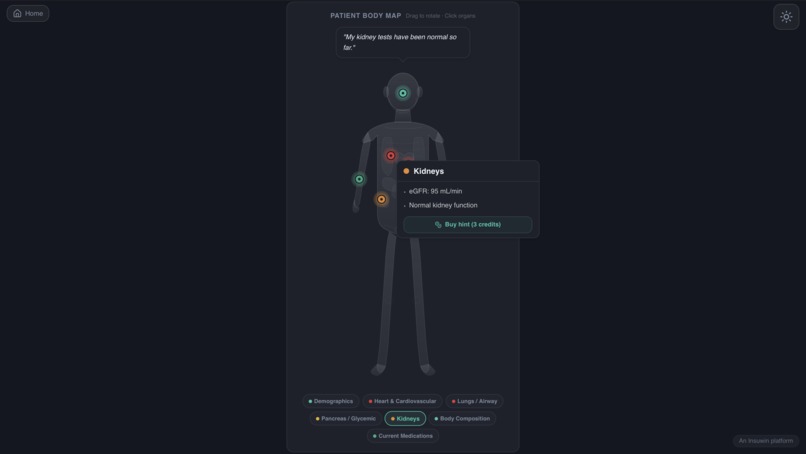

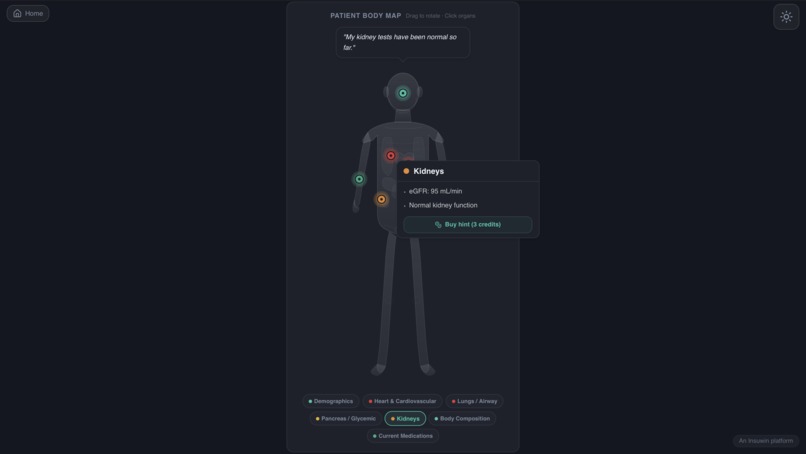

Visualize the patient with an interactive model and spend in-game currency to purchase hints!

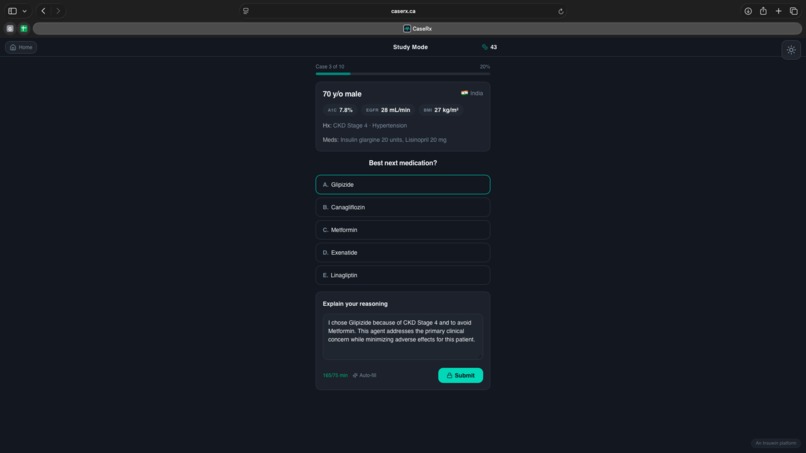

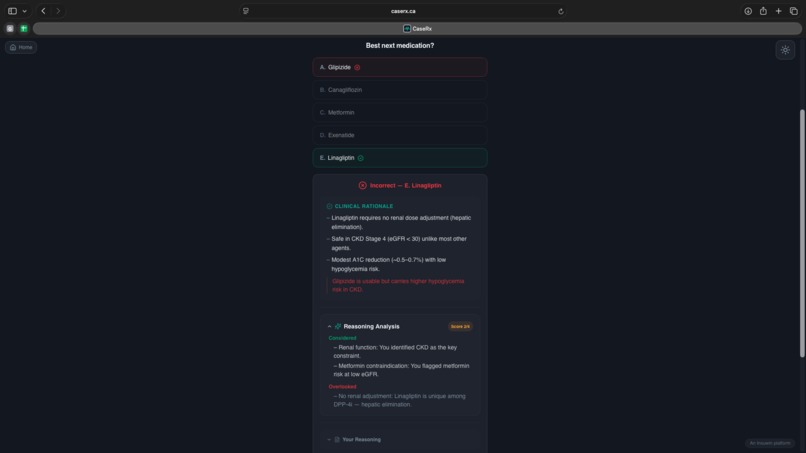

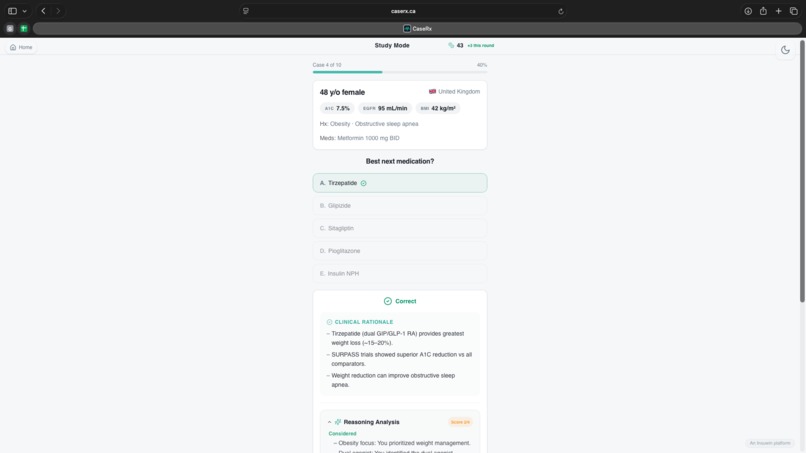

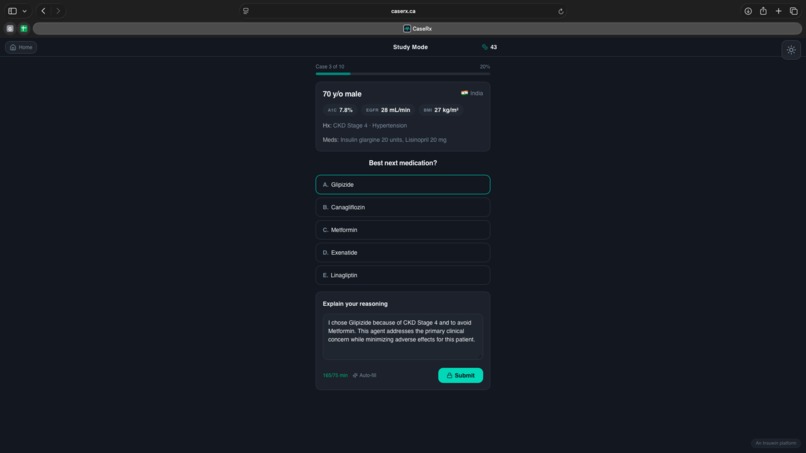

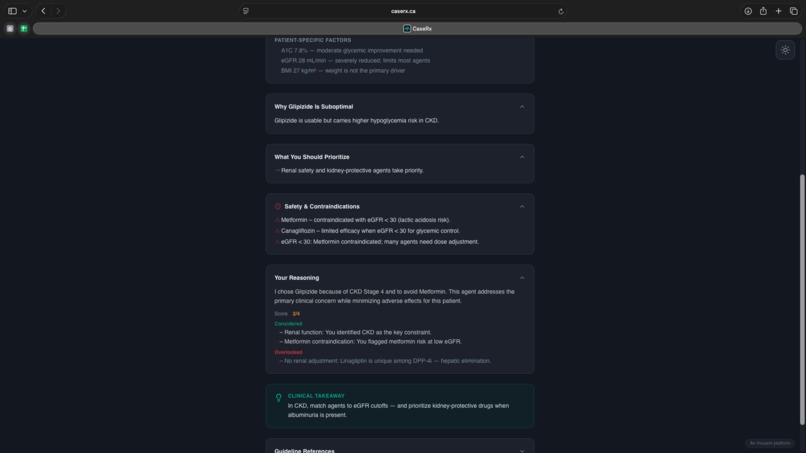

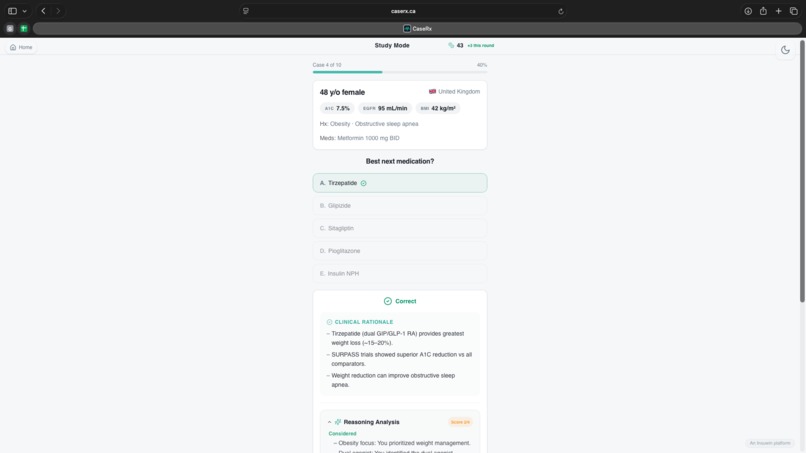

Provide justifications for clinical decisions to build confidence and receive tailored feedback

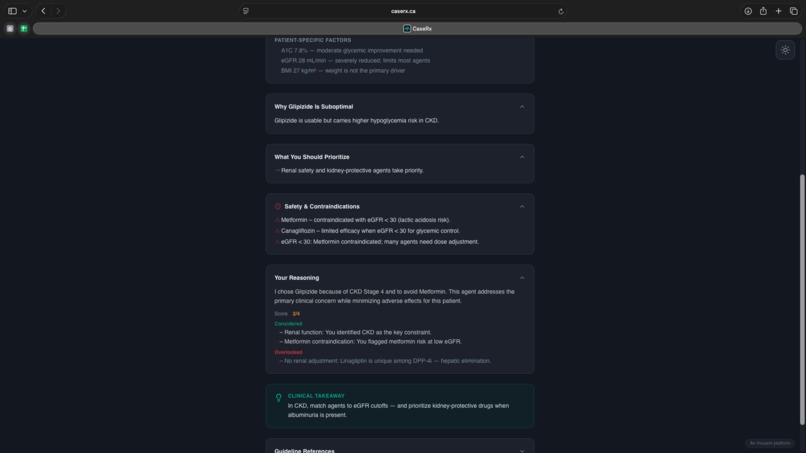

Delve deeper into clinical choices and strengthen justification-based decisions rather than recall and memorization

Discover flaws and holes in theory and diagnostic strategies

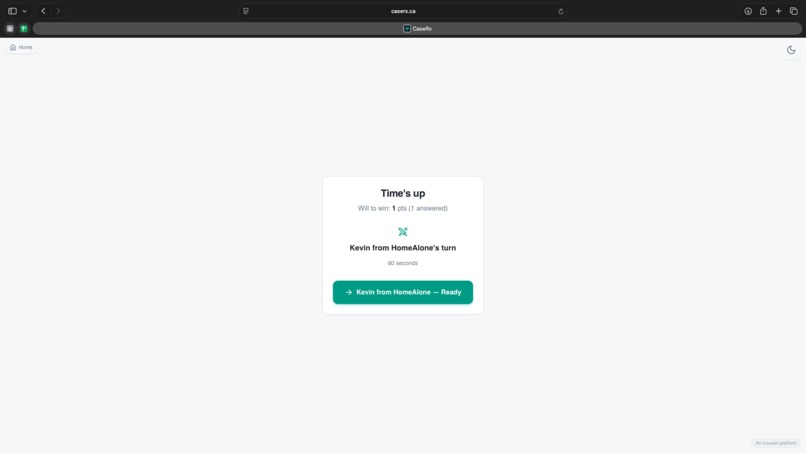

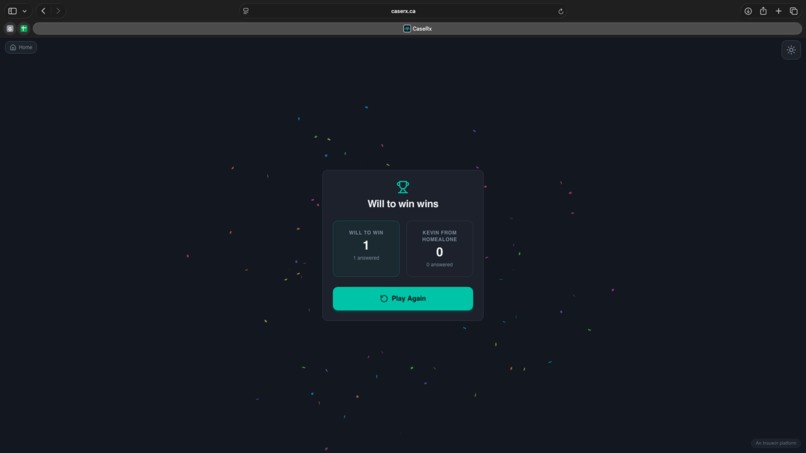

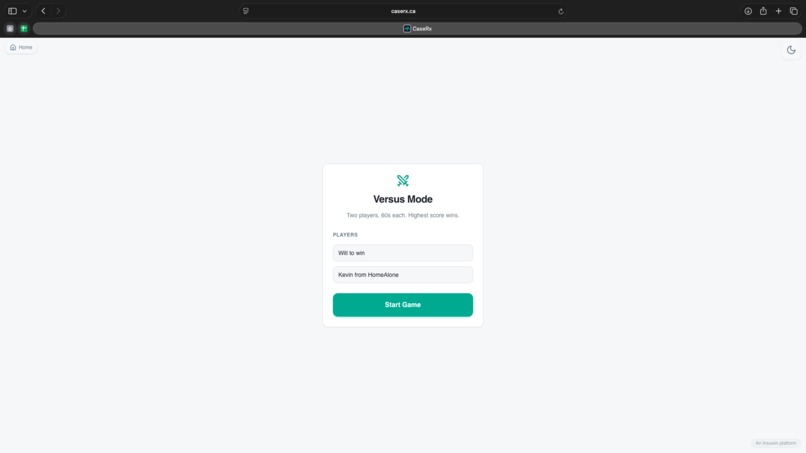

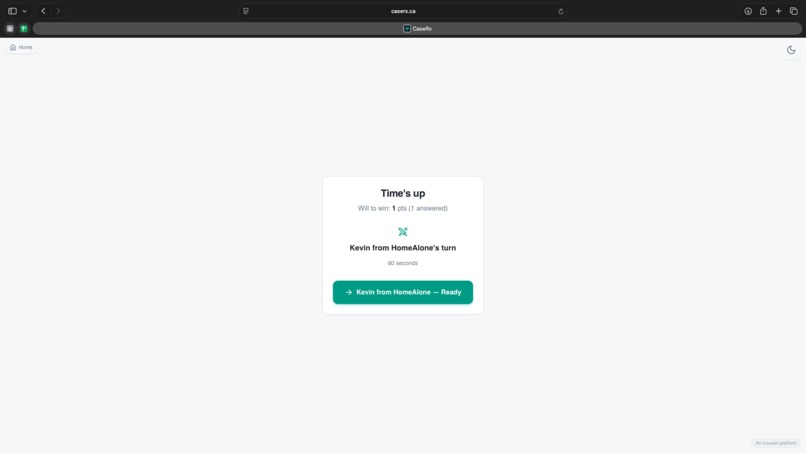

Play against a friend in a high-stakes, 60-second 1v1 to see who can score the most points

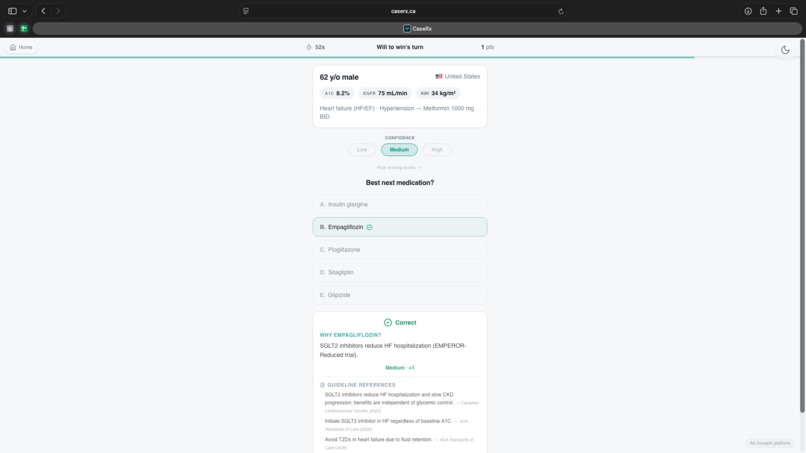

Submit answers with mandatory confidence reports, which weight your score and simulate real clinical diagnosis

Swap with your opponent

Ensure a true evaluation of understanding through identical case sets that eliminate difficulty variables

Celebrate your hard work!

Inspiration

Clinical education often rewards correctness without interrogating how decisions are made. As learners, we noticed a recurring gap: most tools train recognition and recall, but not clinical judgment under uncertainty. In real practice, clinicians must commit to decisions with incomplete information, justify their reasoning, and calibrate their confidence — yet very few learning systems simulate this pressure. We were inspired to build a platform that mirrors how medicine is actually practiced: decisions are time-pressured, socially accountable, and consequential. CaseRx was born from the question: What if learning medicine felt less like answering questions and more like making decisions?

What it does

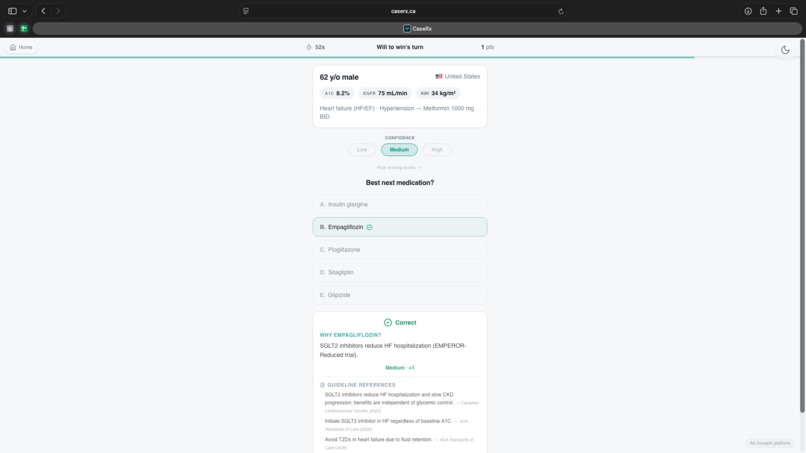

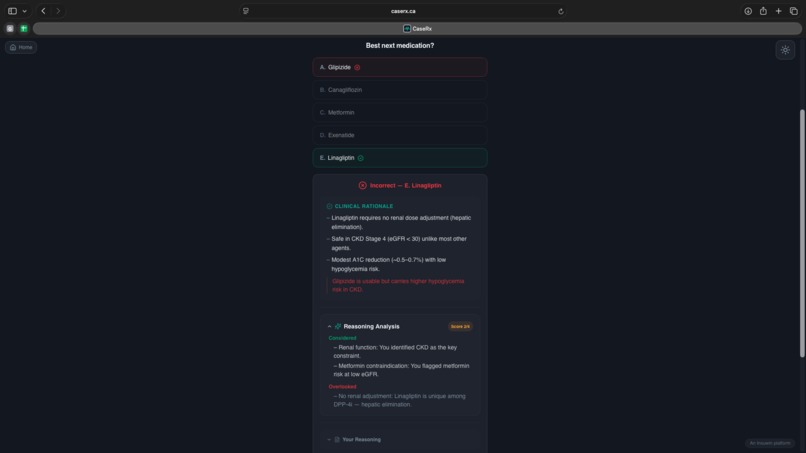

CaseRx is a competitive clinical reasoning simulator designed to train decision-making, confidence calibration, and explanatory reasoning, not just factual recall. Users work through realistic clinical cases and must:

- Commit to a single clinical action

- Declare their confidence level

- Defend their reasoning in writing

In Versus Mode, two learners face the same case under time pressure. Points are awarded based not only on correctness, but on how well confidence aligns with decision quality. High-confidence errors are penalized, while well-reasoned, appropriately confident decisions are rewarded.

After each case, learners receive structured feedback identifying:

- What they prioritized correctly

- What they overlooked

- Why alternative choices were superior

This transforms assessment into deliberate practice, reinforcing safe clinical habits and transferable reasoning skills.
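To make the confidence-weighted scoring idea concrete, here is a minimal TypeScript sketch. It is not CaseRx's actual scoring code: the `Submission` type, the three confidence levels, and every point value are assumptions chosen for illustration, and the reasoning-quality component described above is left out. It only shows the shape of a rule where a confident correct answer earns the most and a confident error costs the most.

```typescript
// Hypothetical types: the write-up does not describe CaseRx's real data model.
type Confidence = "low" | "medium" | "high";

interface Submission {
  correct: boolean;       // whether the committed action matched the preferred one
  confidence: Confidence; // declared by the learner before feedback is shown
}

// Assumed point values, picked only to illustrate the asymmetry:
// a confident correct answer earns the most, a confident error costs the most.
const REWARD: Record<Confidence, number> = { low: 1, medium: 2, high: 3 };
const PENALTY: Record<Confidence, number> = { low: 0, medium: -1, high: -3 };

// Score a single case submission.
function scoreSubmission(s: Submission): number {
  return s.correct ? REWARD[s.confidence] : PENALTY[s.confidence];
}

// Sum the scores across all cases in a round.
function totalScore(submissions: Submission[]): number {
  return submissions.reduce((sum, s) => sum + scoreSubmission(s), 0);
}
```

Under these assumed values, guessing with high confidence is a losing strategy over time: one confident miss cancels one confident hit, whereas a hedged miss costs nothing.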

How we built it

We designed CaseRx by letting learning theory drive system architecture, rather than layering pedagogy on top of a quiz engine. Key components include:

- A case engine built around real guideline-referenced scenarios

- A dynamic scoring system weighted by confidence and reasoning quality

- A feedback engine that explicitly surfaces cognitive errors

- A multiplayer Versus Mode to introduce social accountability and time pressure

The interface was carefully tuned to minimize friction and maximize focus on reasoning. Every design decision, from forced binary choices to delayed feedback, was intentional and grounded in educational theory.

Challenges we ran into

One of our biggest challenges was avoiding the “just another quiz app” trap. It was tempting to optimize for speed or content volume, but doing so would undermine the learning goals. Other challenges included:

- Designing a scoring system that rewards judgment, not guessing

- Creating feedback that is educational without being overwhelming

- Balancing competitive elements without trivializing clinical seriousness

- Ensuring the demo was reliable and polished under time constraints

Each challenge forced us to clarify our core philosophy: this tool must train reasoning, not recognition.

Accomplishments that we're proud of

- Built a fully functional end-to-end learning loop within the hackathon timeframe

- Implemented confidence-weighted scoring that meaningfully changes learner behavior

- Created a competitive mode that mirrors real clinical pressure without sacrificing educational value

- Designed structured feedback that teaches what not to do, not just what’s correct

- Validated the experience through informal user testing within the team, iterating rapidly based on learner behavior

Most importantly, we built something that made us say: “I wish I had this when I was learning.”

What we learned

We learned that effective educational technology is not about more features; it’s about intentional constraint. Forcing learners to commit, explain, and reflect creates far deeper learning than allowing unlimited retries or passive review. We also learned that:

- Competition can enhance learning when tied to reasoning, not speed

- Confidence calibration is a powerful and underutilized teaching lever

- Learning theory must shape product design from day one to be meaningful

What's next for CaseRx

Next steps include:

- Adaptive case selection based on individual reasoning gaps

- Longitudinal tracking of confidence calibration and decision quality

- Expanded multiplayer modes, including team-based and teaching rounds

- Integration into formal medical education settings

- Research studies evaluating improvements in reasoning, retention, and safety

Our long-term vision is for CaseRx to become a core training layer for clinical judgment, complementing existing content-heavy tools rather than replacing them.
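One possible way to approach the longitudinal tracking of confidence calibration mentioned in the next steps is the Brier score, a standard calibration metric: the mean squared difference between a stated probability and the actual outcome. The sketch below is an assumption about how such tracking could work, not a description of anything CaseRx currently does. The `Outcome` type is hypothetical, and it presumes confidence is recorded as a probability between 0 and 1.

```typescript
// Hypothetical record of one past decision; not taken from CaseRx.
interface Outcome {
  confidence: number; // assumed: learner's stated probability of being correct, 0..1
  correct: boolean;   // whether the decision turned out to be correct
}

// Brier score: mean of (stated probability - actual outcome)^2.
// 0 is perfect calibration on these cases; 1 is the worst possible score.
function brierScore(history: Outcome[]): number {
  if (history.length === 0) {
    throw new Error("history must not be empty");
  }
  const sum = history.reduce((acc, o) => {
    const actual = o.correct ? 1 : 0;
    const diff = o.confidence - actual;
    return acc + diff * diff;
  }, 0);
  return sum / history.length;
}
```

Tracking this value per learner over successive sessions would show whether stated confidence is moving closer to actual performance, with lower scores indicating better calibration.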

Built With

- chatgpt

- cloudflare

- github

- ionos

- lovable

- react

- replit

- tailwind
