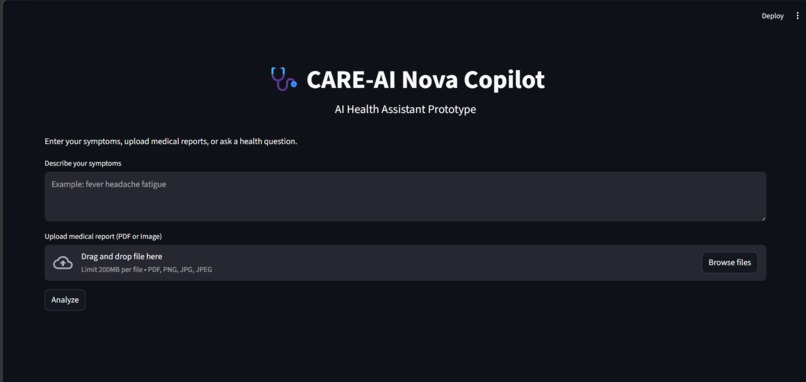

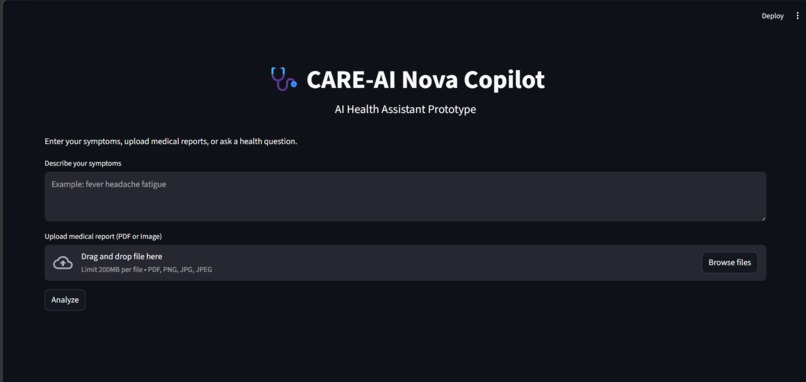

CARE-AI Nova Copilot main interface for entering symptoms and uploading medical reports.

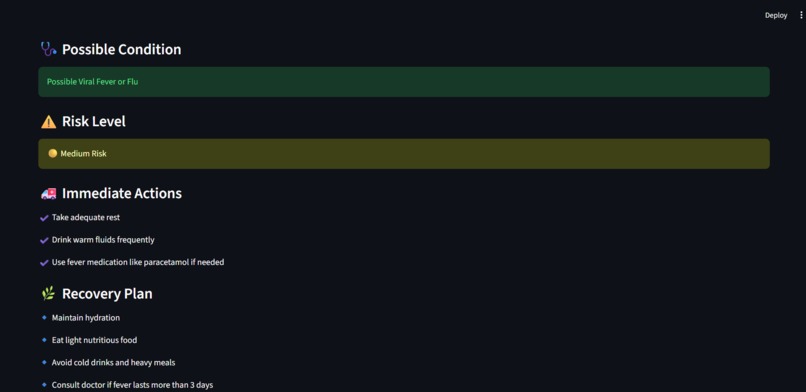

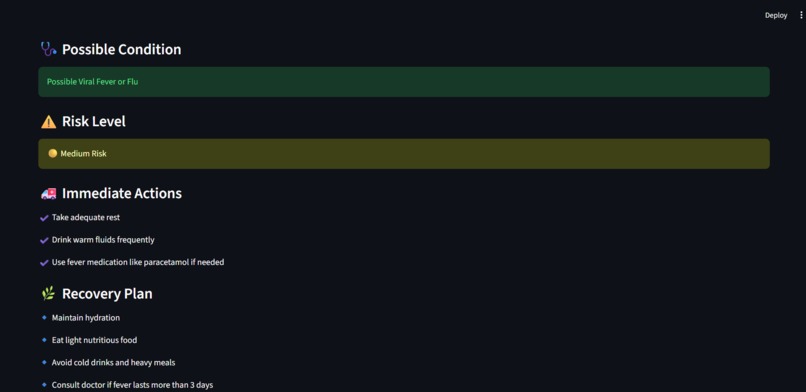

AI symptom analysis detecting possible health conditions based on user-described symptoms.

AI-powered symptom analysis providing possible health conditions, risk level, and recommended actions.

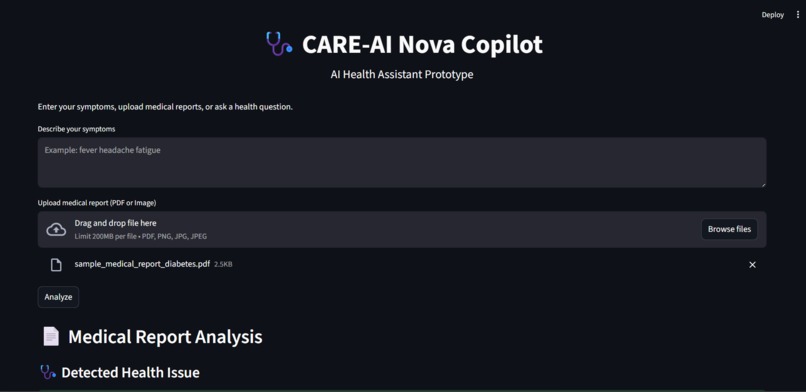

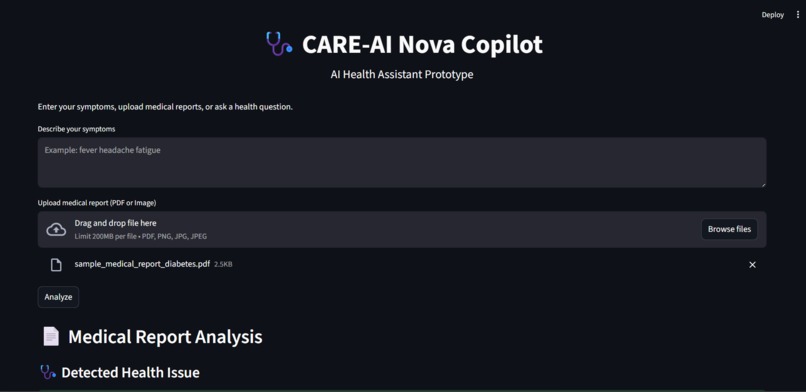

Uploading a medical report for automated health analysis using CARE-AI Nova Copilot.

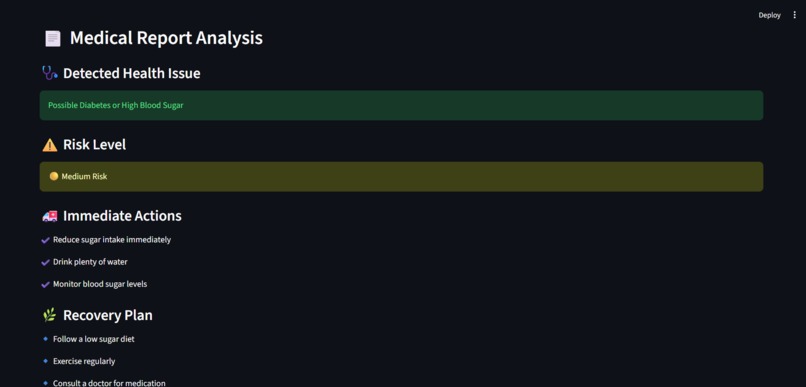

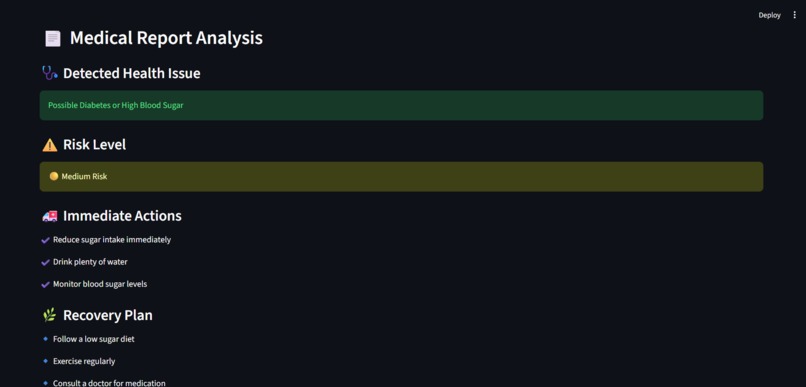

AI interpretation of medical reports highlighting potential health risks and suggested recovery actions.

About the Project

Inspiration

Access to timely and understandable healthcare information is still a major challenge for millions of people. Many individuals struggle to interpret medical reports, understand symptoms, or decide when to seek medical help. This problem is even more significant in rural and semi-urban areas where medical professionals may not always be easily accessible.

We were inspired to build a solution that empowers individuals with AI-driven health insights. By leveraging the capabilities of Amazon Nova foundation models, we wanted to create a smart assistant that can interpret medical information and provide simple, understandable guidance. Our goal was to bridge the gap between complex medical data and everyday users.

What Our Project Does

CARE-AI Nova Copilot is a multimodal AI health assistant designed to help users better understand their health information.

The system allows users to:

- Enter symptoms in natural language

- Upload medical reports or documents

- Ask health-related questions through text or voice

- Receive simplified explanations and guidance

Using Amazon Nova 2 Lite, the application analyzes user inputs and generates easy-to-understand insights. Voice interactions are powered by Amazon Nova 2 Sonic, enabling users to interact with the assistant naturally.

The goal is not to replace doctors, but to act as a health information copilot that helps users better understand their conditions and encourages early awareness.

How We Built It

The project was developed on AWS, combining generative AI capabilities with modern application frameworks.

Key components include:

- Frontend Interface: A simple web interface where users can enter symptoms, upload documents, and interact with the assistant.

- AI Processing Layer: Powered by Amazon Nova models to analyze text inputs, interpret uploaded information, and generate meaningful responses.

- Voice Interaction: Implemented using Amazon Nova 2 Sonic to enable real-time conversational experiences.

- Application Logic: Built using modern AI orchestration frameworks such as LangChain to manage prompts, workflows, and responses.

Together, these components create an interactive system that can process multiple types of inputs and generate helpful health explanations.
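As a rough illustration of the AI processing layer, here is a minimal sketch of how symptoms and an optional uploaded report might be sent to a Nova model through the Amazon Bedrock Converse API. It calls `boto3` directly rather than going through LangChain, purely to keep the example short. The model identifier, the system prompt wording, and the function names `build_converse_request` and `analyze` are assumptions for illustration, not the project's actual code.

```python
from typing import Optional

# Hypothetical model identifier: check the Amazon Bedrock console for the exact
# model ID or inference profile available in your region.
MODEL_ID = "amazon.nova-2-lite-v1:0"

# Illustrative system prompt, not the one used by the project.
SYSTEM_PROMPT = (
    "You are a health information assistant. Explain findings in plain language, "
    "do not give a diagnosis, and advise seeing a clinician for anything urgent."
)


def build_converse_request(symptoms: str, report_pdf: Optional[bytes] = None) -> dict:
    """Assemble keyword arguments for the Bedrock Runtime Converse API."""
    content = [{"text": symptoms}]
    if report_pdf is not None:
        # Converse accepts a document block (raw bytes) next to the text block.
        content.append(
            {
                "document": {
                    "format": "pdf",
                    "name": "medical-report",
                    "source": {"bytes": report_pdf},
                }
            }
        )
    return {
        "modelId": MODEL_ID,
        "system": [{"text": SYSTEM_PROMPT}],
        "messages": [{"role": "user", "content": content}],
        "inferenceConfig": {"maxTokens": 512, "temperature": 0.2},
    }


def analyze(symptoms: str, report_pdf: Optional[bytes] = None) -> str:
    """Send the request to Bedrock and return the model's text reply."""
    import boto3  # imported lazily so the request builder works without AWS set up

    client = boto3.client("bedrock-runtime")
    response = client.converse(**build_converse_request(symptoms, report_pdf))
    return response["output"]["message"]["content"][0]["text"]
```

Keeping the request builder separate from the network call makes the payload easy to inspect and test without AWS credentials; only `analyze` needs a configured Bedrock client.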

Challenges We Faced

Building a multimodal AI assistant presented several challenges.

One of the primary difficulties was ensuring that the AI responses remained clear, safe, and understandable for non-medical users. Medical information can be complex, and simplifying it without losing accuracy required careful prompt engineering and testing.
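One way to keep responses predictable, sketched here under assumptions, is to ask the model for a small JSON object and validate it before showing anything to the user. The field names (`conditions`, `risk_level`, `recommended_actions`) mirror the outputs shown in the screenshots, but the JSON schema, the allowed risk levels, and the fallback message are hypothetical choices for this example rather than the project's actual implementation.

```python
import json
from dataclasses import dataclass, field

# Assumed set of risk labels; the real application may use different ones.
ALLOWED_RISK_LEVELS = {"low", "moderate", "high"}


@dataclass
class Analysis:
    conditions: list = field(default_factory=list)
    risk_level: str = "unknown"
    recommended_actions: list = field(default_factory=list)


def _as_str_list(value) -> list:
    """Return a list of strings, or an empty list if the value is not a list."""
    return [str(item) for item in value] if isinstance(value, list) else []


def parse_analysis(raw: str) -> Analysis:
    """Parse the model's JSON reply, falling back to a cautious default."""
    fallback = Analysis(
        recommended_actions=["Please consult a healthcare professional."]
    )
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return fallback
    if not isinstance(data, dict):
        return fallback

    risk = str(data.get("risk_level", "")).lower()
    if risk not in ALLOWED_RISK_LEVELS:
        # An unexpected risk label is treated as an unusable reply.
        return fallback

    return Analysis(
        conditions=_as_str_list(data.get("conditions")),
        risk_level=risk,
        recommended_actions=_as_str_list(data.get("recommended_actions")),
    )
```

With this shape, a malformed or off-schema reply never reaches the interface as-is; the user sees a neutral suggestion to consult a professional instead.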

Another challenge was integrating multiple AI capabilities such as text analysis, document understanding, and voice interaction into a single seamless experience.

Finally, designing a user-friendly interface that allows people to easily interact with advanced AI technologies required several iterations and improvements.

What We Learned

This project helped us gain valuable experience in building real-world generative AI applications. We learned how to integrate foundation models, design multimodal workflows, and create AI systems that solve meaningful problems.

Working with Amazon Nova models also gave us deeper insights into how advanced AI reasoning and conversational capabilities can be applied to practical scenarios.

Future Improvements

In the future, we plan to enhance the system by:

- Supporting multiple languages to improve accessibility

- Integrating wearable health data

- Providing personalized health insights based on user history

Our vision is to transform CARE-AI Nova Copilot into a powerful health guidance companion that makes healthcare information more accessible to everyone.

Built With

- amazon-api-gateway

- amazon-nova

- amazon-nova-2-lite

- amazon-nova-2-sonic

- amazon-web-services

- amazon-web-services-(aws)

- aws-lambda

- css

- dynamodb

- html

- javascript

- langchain

- python

- streamlit
