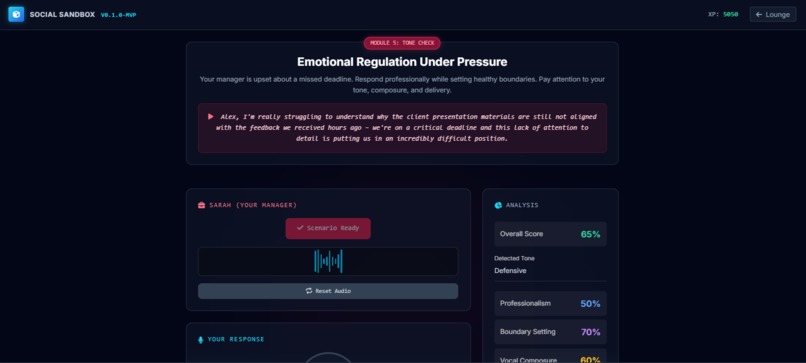

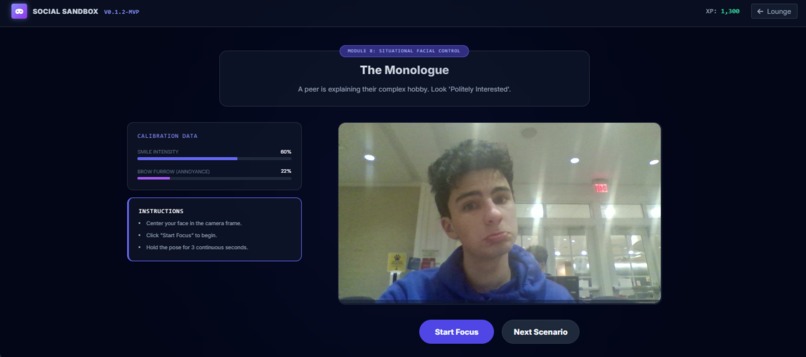

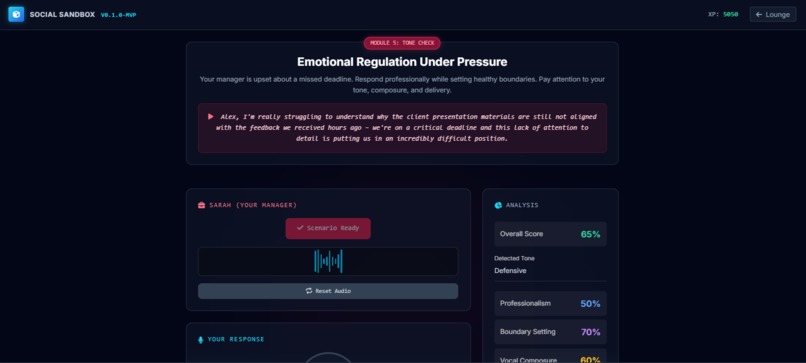

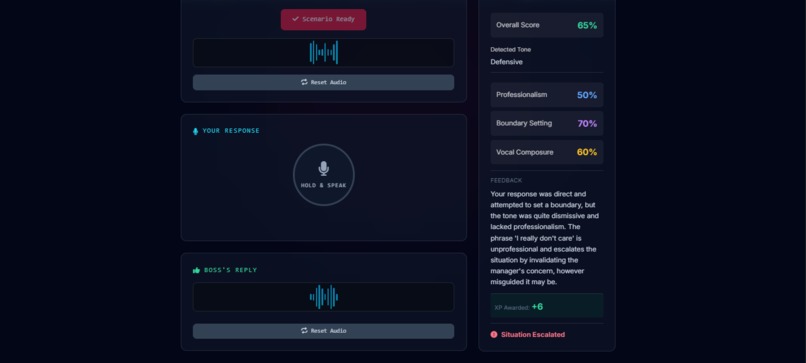

This part of the platform lets users simulate stressful situations.

-

This is another view of the stressful-situation simulation.

-

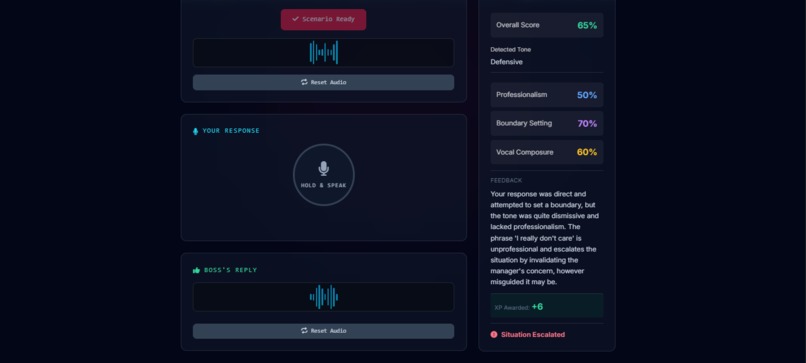

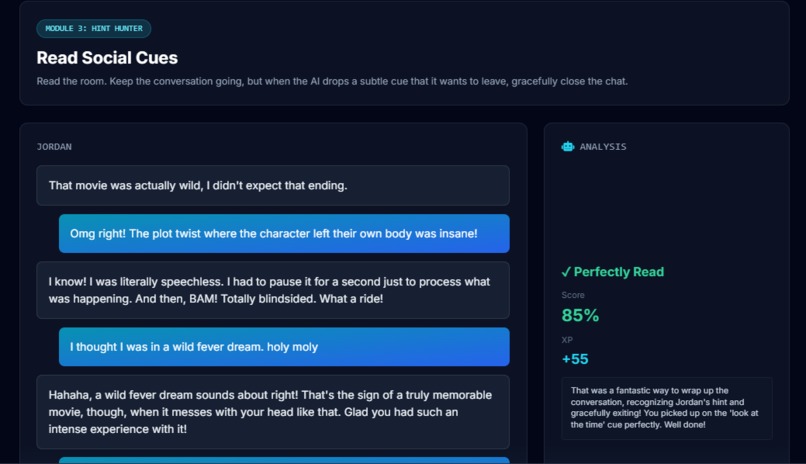

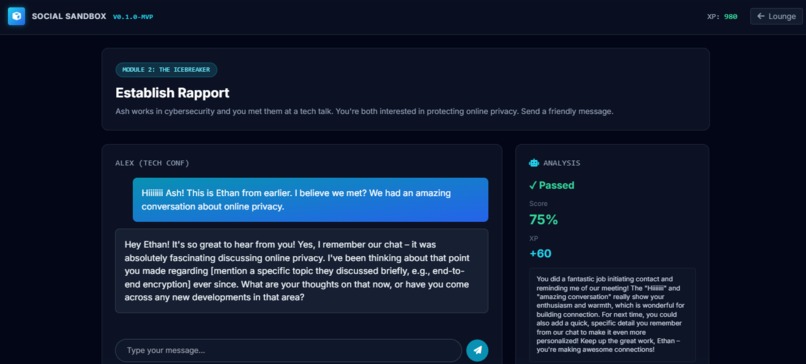

This is the simulated texting chat for social cues, where the user practices texting.

-

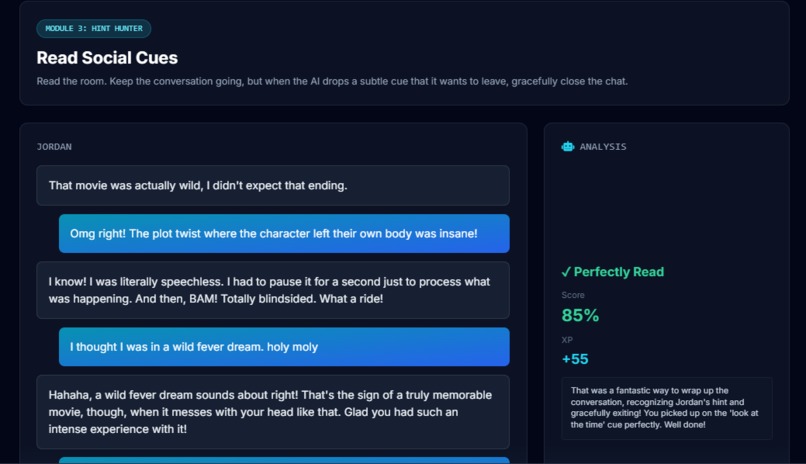

This helps the user practice introducing themselves over text.

-

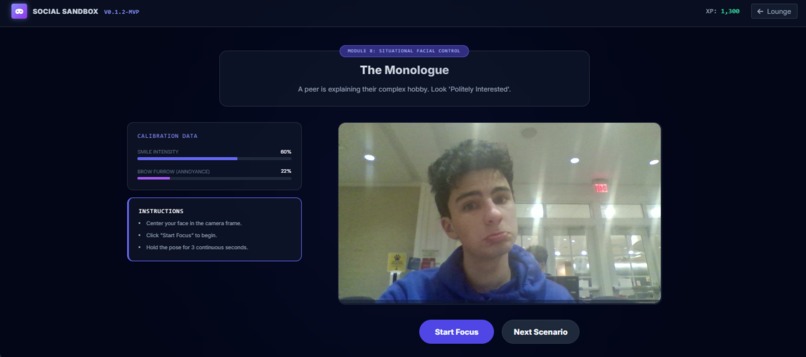

Facial recognition that checks whether the user's face shows the right emotion at the right time.

-

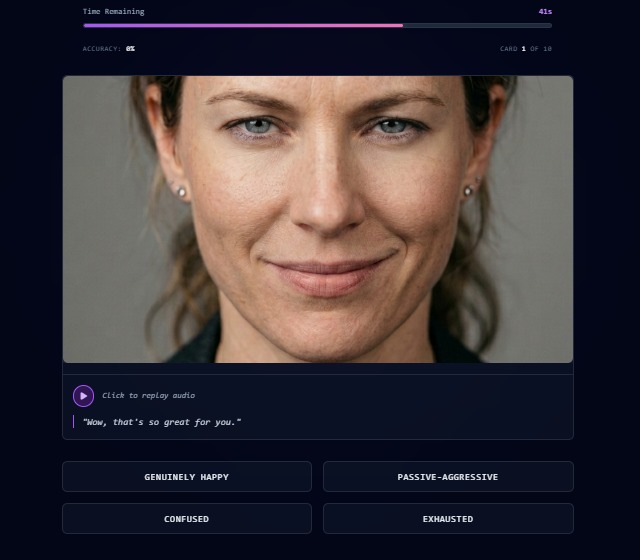

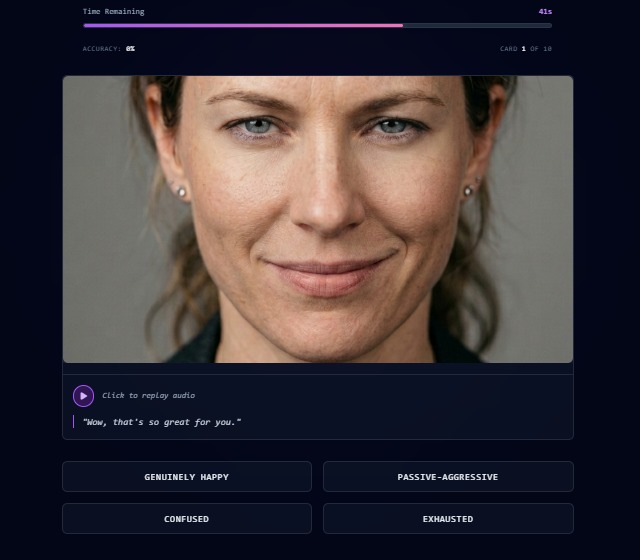

A quiz that asks the user to identify the emotion shown on a face.

-

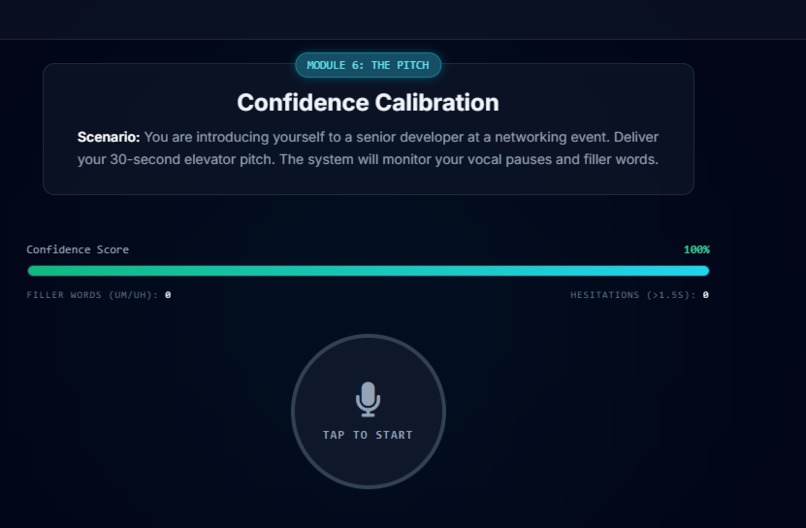

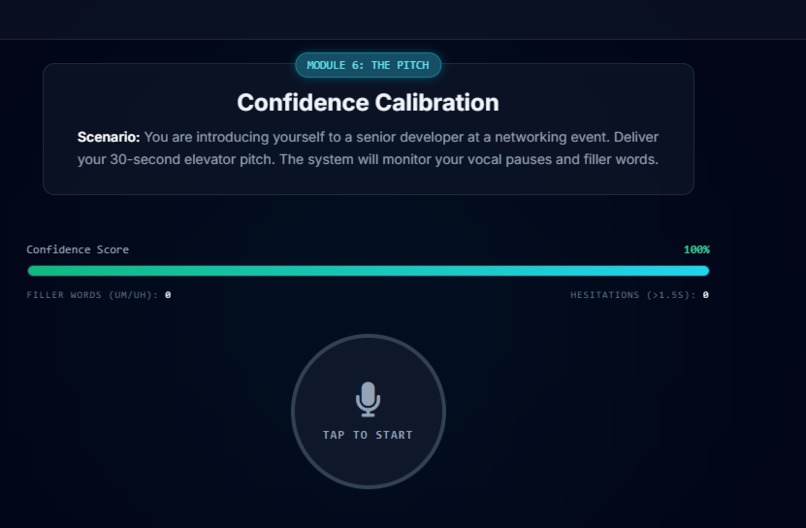

Self-introduction practice to build confidence. The user earns points based on how many filler words they use and on what they actually say.

-

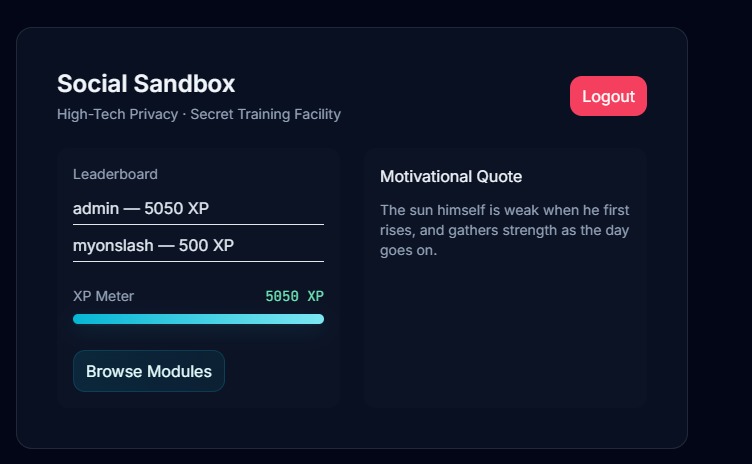

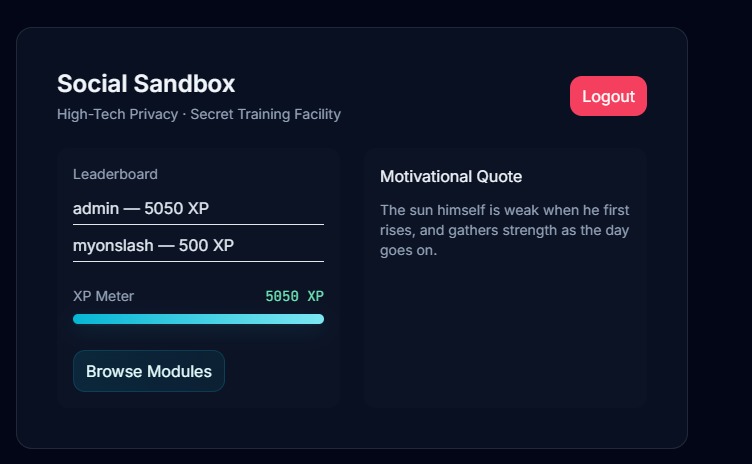

Dashboard Page

-

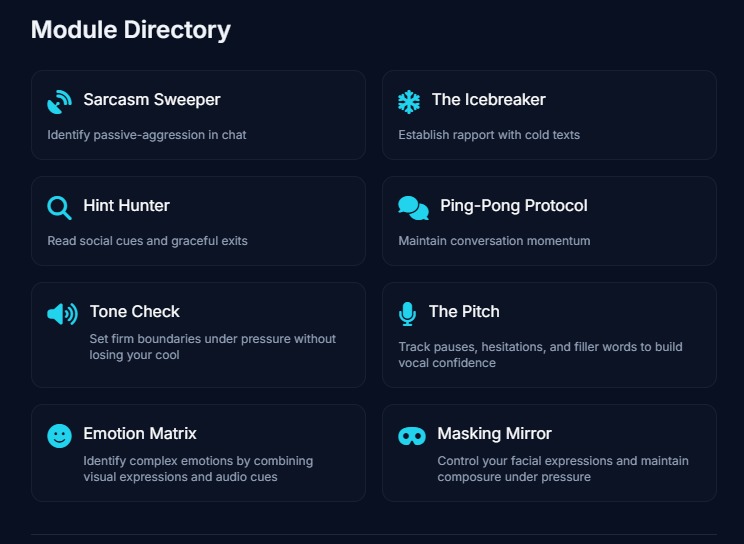

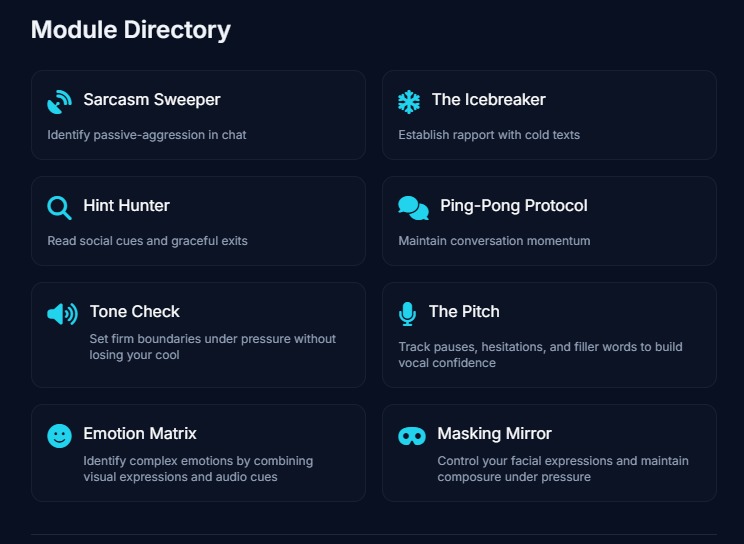

Our directory of 8+ exercises for the users to practice on.

🧊 Bridge Communications Co: Debugging Human Interaction

Tagline: A gamified, AI-powered training environment to rebuild the social skills eroded by the digital age. Welcome to the gym for emotional intelligence.

💡 The Inspiration (Our "Why")

We are living in a social recession. With the rise of remote work, hyper-digital communication, and prolonged screen time, an entire generation of young professionals is entering the workforce with a critical deficit in soft skills. We realized that while we have tools to debug our code, check our grammar, and track our physical fitness, we don't have a safe place to practice and "debug" human interaction.

We wanted to create a solution that didn't feel like a boring corporate HR training video. It needed to be fast, stressful (in a good way), and highly analytical.

Enter Bridge Communications: A cyber-aesthetic, gamified simulation suite that uses multimodal AI to pressure-test your communication, empathy, and conflict-resolution skills.

🚀 What It Does

Bridge Communications is divided into three distinct "Gyms," each targeting a specific pillar of human communication. Users earn XP by completing modules, unlocking higher-stakes scenarios as they level up.
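The XP gating described above could be sketched roughly as follows. This is an illustration only: the `GymModule` shape, the module ids, and the zero-XP thresholds are assumptions, and the only threshold taken from this write-up is the 5,000 XP gate on Tone Check.

```typescript
// Hypothetical module descriptor; not the project's real data model.
interface GymModule {
  id: string;
  requiredXp: number; // XP the user needs before the module unlocks
}

const gymModules: GymModule[] = [
  { id: "sarcasm-sweeper", requiredXp: 0 }, // assumed: open from the start
  { id: "the-pitch", requiredXp: 0 },       // assumed: open from the start
  { id: "tone-check", requiredXp: 5000 },   // threshold stated in this write-up
];

// Return the ids of every module the user can currently play.
function unlockedModuleIds(userXp: number, modules: GymModule[]): string[] {
  return modules.filter((m) => userXp >= m.requiredXp).map((m) => m.id);
}
```

Keeping the threshold on the module record means a new high-stakes scenario only needs a new entry, not new unlock logic.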

📱 GYM 1: The Digital Comm Suite

Focus: Asynchronous text communication and reading subtext.

Sarcasm Sweeper: A digital minefield where users scan group chats to flag passive-aggressive messages.

The Icebreaker & The Ping-Pong Protocol: Live-typing simulators where an LLM judges your ability to establish rapport and maintain conversational momentum without resorting to dead-end "yes/no" answers.

Hint Hunter: Users must read the room and gracefully exit a text conversation when the AI starts dropping subtle cues that it wants to leave.

🎙️ GYM 2: The Vocal Sandbox

Focus: Real-time verbal communication and conflict de-escalation.

The Pitch: A live confidence calibrator. Users hold a mic and deliver an elevator pitch while the system tracks their filler words ("um", "uh") and hesitations, calculating a live confidence score.
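One simple way to turn a transcript into a confidence score is sketched below. It is not the project's actual formula: the extra filler words, the `penaltyWeight` default, and the 0 to 100 scale are all assumptions, and hesitation timing is left out because it needs audio timestamps rather than text.

```typescript
// "um" and "uh" come from the write-up; "er" and "erm" are assumed additions.
const FILLER_WORDS: string[] = ["um", "uh", "er", "erm"];

interface PitchStats {
  totalWords: number;
  fillerCount: number;
  confidence: number; // 0 to 100, higher is better
}

// Start at 100 and subtract a penalty proportional to the share of words
// that are fillers. The penalty weight is an arbitrary illustrative value.
function scorePitch(transcript: string, penaltyWeight = 200): PitchStats {
  const words: string[] = transcript.toLowerCase().match(/[a-z']+/g) ?? [];
  const fillerCount = words.filter((w) => FILLER_WORDS.indexOf(w) !== -1).length;
  const fillerRatio = words.length === 0 ? 0 : fillerCount / words.length;
  const confidence = Math.max(0, Math.round(100 - fillerRatio * penaltyWeight));
  return { totalWords: words.length, fillerCount, confidence };
}
```

Because the score depends only on the running transcript, it can be recomputed on every interim speech result to give the "live" feel.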

Tone Check (The De-Escalator): Unlocked at 5,000 XP. A high-stress simulator where an angry boss (voiced by ElevenLabs) yells at the user. The user must verbally respond. We pass the raw audio to Gemini to evaluate not just what they said, but their vocal tone (shakiness, defensiveness) and boundary-setting ability.

🎭 GYM 3: The Facial Gym

Focus: Cognitive load and non-verbal cues.

The Emotion Matrix: A high-speed, zero-latency flashcard game. Users are bombarded with conflicting visual and auditory signals (e.g., a picture of a smiling face paired with a heavy, frustrated sigh generated by ElevenLabs) and must quickly identify the true underlying emotion.

⚙️ How We Built It

We built a highly scalable, modular architecture from the ground up in just a few hours.

Frontend: Built with Vue.js and Tailwind CSS. We designed a custom "Glassmorphism / Cyber-Corporate" UI. The UI is driven by a master JSON configuration object, allowing us to swap rulesets, disable typing, or inject new scenarios instantly without rewriting UI components.
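A configuration-driven setup of this kind might look like the sketch below. The field names (`input`, `typingDisabled`, `rules`) and the component names are hypothetical placeholders, since the real configuration schema is not shown here.

```typescript
// Hypothetical shape of one entry in the master configuration object.
type ScenarioInputKind = "checkboxes" | "text";

interface ScenarioConfig {
  title: string;
  input: ScenarioInputKind; // which widget the frontend should render
  typingDisabled: boolean;  // true for modules where the user only flags messages
  rules: string[];          // ruleset handed to the evaluator
}

const scenarioRegistry: Record<string, ScenarioConfig> = {
  "sarcasm-sweeper": {
    title: "Sarcasm Sweeper",
    input: "checkboxes",
    typingDisabled: true,
    rules: ["flag passive-aggressive messages"],
  },
  icebreaker: {
    title: "The Icebreaker",
    input: "text",
    typingDisabled: false,
    rules: ["avoid dead-end yes/no answers"],
  },
};

// Map a scenario id to the name of the component that should render it.
// "MessageFlagList" and "ChatComposer" are placeholder component names.
function componentFor(scenarioId: string): string {
  const config = scenarioRegistry[scenarioId];
  if (!config) throw new Error(`Unknown scenario: ${scenarioId}`);
  return config.input === "checkboxes" ? "MessageFlagList" : "ChatComposer";
}
```

In Vue, the returned name could feed a dynamic `<component :is="...">`, so adding a scenario means adding a registry entry rather than a new view.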

Backend & Database: We used MongoDB Atlas to store user profiles, XP, and detailed session logs (transcripts, tone analyses, and module scores), populating a global Lounge Dashboard.

The AI Brain (Gemini API): We relied heavily on the Gemini API. For text modules, it parses subtext and returns structured JSON feedback. For our advanced voice modules, we used Gemini's native multimodal audio capabilities, passing raw .webm audio blobs so the AI could analyze the user's actual vocal inflection and emotional state, not just a text transcript.
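Structured JSON feedback from a model is worth validating before it reaches the UI. The sketch below assumes a hypothetical feedback shape (`score`, `verdict`, `feedback`); the real schema is not given in this write-up.

```typescript
// Hypothetical feedback shape, for illustration only.
interface DebuggerFeedback {
  score: number;    // clamped to 0 to 100
  verdict: string;  // short headline
  feedback: string; // actionable explanation
}

// Parse a model reply defensively. Replies sometimes wrap the JSON in extra
// text, so take the outermost {...} span, then check each field's type.
function parseFeedback(raw: string): DebuggerFeedback | null {
  const start = raw.indexOf("{");
  const end = raw.lastIndexOf("}");
  if (start === -1 || end <= start) return null;

  let data: unknown;
  try {
    data = JSON.parse(raw.slice(start, end + 1));
  } catch {
    return null; // not valid JSON
  }
  if (typeof data !== "object" || data === null) return null;

  const { score, verdict, feedback } = data as Record<string, unknown>;
  if (typeof score !== "number" || typeof verdict !== "string" || typeof feedback !== "string") {
    return null;
  }
  return { score: Math.min(100, Math.max(0, score)), verdict, feedback };
}
```

Returning `null` instead of throwing lets the calling module show a retry prompt without crashing the session.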

The Voices (ElevenLabs): To make the simulations feel real, we integrated ElevenLabs. We tweaked the stability and similarity_boost parameters of specific voice models to create highly expressive, aggressive, or passive-aggressive antagonist voices.
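For reference, `stability` and `similarity_boost` travel in the `voice_settings` object of an ElevenLabs text-to-speech request. The sketch below only builds the request and does not send it. The function name, voice id, API key, and setting values are placeholders, not the values used in this project.

```typescript
// Voice settings sent with a text-to-speech request (both range from 0 to 1).
interface VoiceSettings {
  stability: number;        // lower values tend to give more expressive delivery
  similarity_boost: number; // how closely the output sticks to the source voice
}

interface TtsRequest {
  url: string;
  headers: Record<string, string>;
  body: string;
}

// Build (but do not send) a request for one antagonist line.
function buildTtsRequest(
  voiceId: string,
  apiKey: string,
  line: string,
  settings: VoiceSettings
): TtsRequest {
  const clamp = (n: number): number => Math.min(1, Math.max(0, n));
  return {
    url: `https://api.elevenlabs.io/v1/text-to-speech/${encodeURIComponent(voiceId)}`,
    headers: { "xi-api-key": apiKey, "Content-Type": "application/json" },
    body: JSON.stringify({
      text: line,
      voice_settings: {
        stability: clamp(settings.stability),
        similarity_boost: clamp(settings.similarity_boost),
      },
    }),
  };
}
```

The result can be passed to `fetch(req.url, { method: "POST", headers: req.headers, body: req.body })`, ideally from a backend so the API key never ships to the browser.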

😤 Challenges We Ran Into

The Web Speech API State: We initially struggled with InvalidStateError crashes when users rapidly clicked the microphone button. We had to build a strict, bulletproof state-tracking wrapper using try/catch blocks around the browser's async audio engines.
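A state-tracking wrapper along these lines is sketched below. It is not the project's actual wrapper: `RecognizerLike` and `SafeMic` are hypothetical names, and the sketch assumes the error comes from calling `start()` before the previous session has fired its end event.

```typescript
// Minimal subset of the browser's SpeechRecognition object used here.
interface RecognizerLike {
  start(): void;
  stop(): void;
}

type MicState = "idle" | "listening" | "stopping";

// Tracks the recognizer's lifecycle explicitly so rapid clicks cannot call
// start() while a previous session is still running or shutting down.
class SafeMic {
  private state: MicState = "idle";

  constructor(private recognizer: RecognizerLike) {}

  get current(): MicState {
    return this.state;
  }

  // Wire this to the microphone button's click handler.
  toggle(): MicState {
    try {
      if (this.state === "idle") {
        this.recognizer.start();
        this.state = "listening";
      } else if (this.state === "listening") {
        this.recognizer.stop();
        this.state = "stopping"; // wait for the end event before restarting
      }
      // Clicks while "stopping" are ignored on purpose.
    } catch (err) {
      // The engine disagreed with our bookkeeping (e.g. InvalidStateError):
      // ask it to stop and wait for the end event to reset the state.
      try {
        this.recognizer.stop();
      } catch {
        // Nothing more to do; the end handler resets the state.
      }
      this.state = "stopping";
    }
    return this.state;
  }

  // Call from the recognizer's end event handler.
  handleEnded(): void {
    this.state = "idle";
  }
}
```

The key design choice is the intermediate "stopping" state: `stop()` is asynchronous in the browser, so the wrapper refuses to restart until the end event confirms the session is over.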

Audio Blob Routing: Capturing raw audio from the browser via the MediaRecorder API and correctly formatting the payload to send to Gemini's native audio endpoints required incredibly precise data handling and MIME-type formatting.
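The payload formatting can be illustrated with the sketch below, which follows the public Gemini REST convention of sending binary data as a base64 `inline_data` part. The helper names are hypothetical, and the sketch assumes the endpoint wants a bare MIME type without the codec parameters that `MediaRecorder` often reports.

```typescript
// One part of a generateContent request: text or inline binary data.
type GeminiPart =
  | { text: string }
  | { inline_data: { mime_type: string; data: string } };

interface GeminiRequestBody {
  contents: { parts: GeminiPart[] }[];
}

// MediaRecorder often reports types such as "audio/webm;codecs=opus".
// Assumption: the API expects the bare type, so drop any parameters.
function bareMimeType(recorderMimeType: string): string {
  const semicolon = recorderMimeType.indexOf(";");
  const bare = semicolon === -1 ? recorderMimeType : recorderMimeType.slice(0, semicolon);
  return bare.trim().toLowerCase();
}

// FileReader.readAsDataURL yields "data:<type>;base64,<payload>".
// Only the payload after the first comma belongs in the request.
function stripDataUrlPrefix(dataUrl: string): string {
  const comma = dataUrl.indexOf(",");
  return comma === -1 ? dataUrl : dataUrl.slice(comma + 1);
}

// Pair an evaluation prompt with the recorded audio in one request body.
function buildAudioRequest(
  prompt: string,
  audioDataUrl: string,
  recorderMimeType: string
): GeminiRequestBody {
  return {
    contents: [
      {
        parts: [
          { text: prompt },
          {
            inline_data: {
              mime_type: bareMimeType(recorderMimeType),
              data: stripDataUrlPrefix(audioDataUrl),
            },
          },
        ],
      },
    ],
  };
}
```

Both helpers target the two most common failure modes in this kind of pipeline: a MIME type the API does not recognize, and a base64 string that still carries its data URL header.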

The Hackathon Clock: We went from ideation at 12:00 PM to having 6 fully functional, highly polished UI modules mapped to complex logic by 5:00 PM. Managing scope while maintaining a premium UI was a massive challenge.

🏆 Accomplishments That We're Proud Of

The Vue.js Config Pattern: We're incredibly proud of how scalable Gym 1 is. We can add 100 new text scenarios just by adding JSON objects to our registry—the Vue frontend dynamically adapts the UI (checkboxes vs. text inputs) based on the config.

Zero-Latency Multimodal (Module 7): By pre-generating ElevenLabs audio and coupling it with static images for the Emotion Matrix, we created a module that tests complex multimodal human perception without relying on live LLM generation, making it blazing fast.
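Because every asset is prepared ahead of time, grading can happen entirely on the client. The sketch below shows one possible card format and scoring rule. The file paths, field names, time limit, and point formula are all illustrative assumptions.

```typescript
// Hypothetical flashcard: a static image paired with a pre-generated clip.
interface EmotionCard {
  image: string;       // static face image
  audio: string;       // audio clip rendered ahead of time
  faceEmotion: string; // what the face alone appears to show
  trueEmotion: string; // what the combined signals actually convey
}

const emotionDeck: EmotionCard[] = [
  {
    image: "faces/smile-01.png",
    audio: "clips/frustrated-sigh.mp3",
    faceEmotion: "happy",
    trueEmotion: "frustrated",
  },
];

// Grade locally with no network or model call. Faster correct answers earn
// more points; wrong or late answers earn none.
function gradeAnswer(
  card: EmotionCard,
  answer: string,
  elapsedMs: number,
  limitMs = 3000
): number {
  const correct = answer.trim().toLowerCase() === card.trueEmotion;
  if (!correct || elapsedMs > limitMs) return 0;
  return Math.round(100 * (1 - elapsedMs / limitMs));
}
```

Storing `faceEmotion` next to `trueEmotion` also makes it possible to report when a user was fooled by the face rather than simply wrong.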

Actually Useful Feedback: We tuned the LLaMA 3 / Gemini system prompts so that the "Social Debugger" doesn't just say "Good job." It gives actionable feedback like, "Threat neutralized. 'No rush' combined with 'biggest client' is highly contradictory and indicates passive-aggression."

📚 What We Learned

We learned how powerful native audio processing is compared to standard Speech-to-Text. Hearing the "shake" in a user's voice completely changes the context of their words.

We mastered complex state management in Vue.js for real-time applications.

We learned that designing "stressful" UX requires a delicate balance of gamification (neon colors, XP bars, sound effects) so the user feels challenged, not discouraged.

🔮 What's Next for Bridge Communications

Gym 4 (The Body Language Lab): Integrating client-side computer vision (like MediaPipe) to track posture and eye contact during simulated video calls.

Multiplayer Sandbox: Allowing two users to enter a simulated negotiation, with the AI acting as the referee and grading both parties on their active listening and compromise.

Enterprise Integration: Packaging the sandbox for HR departments to use for onboarding and management training.
