BrandForge

AI-powered Creative Director that turns a single marketing idea into a complete multi-channel, publish-ready campaign in minutes.

Inspiration

Every marketer knows this pain. You have a product launch coming up soon, and you need to create content for LinkedIn, Instagram, Twitter, Facebook, TikTok, YouTube, email, newsletters, your blog, Reddit, Pinterest, Threads, Spotify, and more. That is already more than a dozen platforms, each with its own dimensions, tone, and format. Even with a solid brief and a solid brand kit, producing all this content takes days of drafting, designing, and context-switching, or thousands of dollars in agency fees.

When we came across the Gemini Live Agent Challenge on DevPost calling for developers to build an AI agent that can “think and create like a creative storyteller, seamlessly weaving together social copy, visuals, audio, and video in one go,” we realized Gemini’s multimodal capabilities could solve this problem end-to-end. What if there were an AI Creative Director that could take a single marketing brief and a brand kit, then create a complete, publish-ready campaign across every major platform? And what if you could control this entire creative process with your voice, completely hands-free?

That vision became BrandForge, our AI-powered Creative Director that turns a single marketing idea into a complete multi-channel, publish-ready campaign in minutes.

What It Does

BrandForge takes a single campaign brief (e.g., "Launch our new eco-friendly water bottle, EcoHydrate Pro, targeting young professionals who care about sustainability and an active lifestyle") and a brand kit (logo, product image, colors, fonts, and your brand tone of voice), and generates a complete marketing campaign across 14 channels with images, video, audio ads, and platform-specific marketing copy, all streamed in real time into a single mosaic canvas.

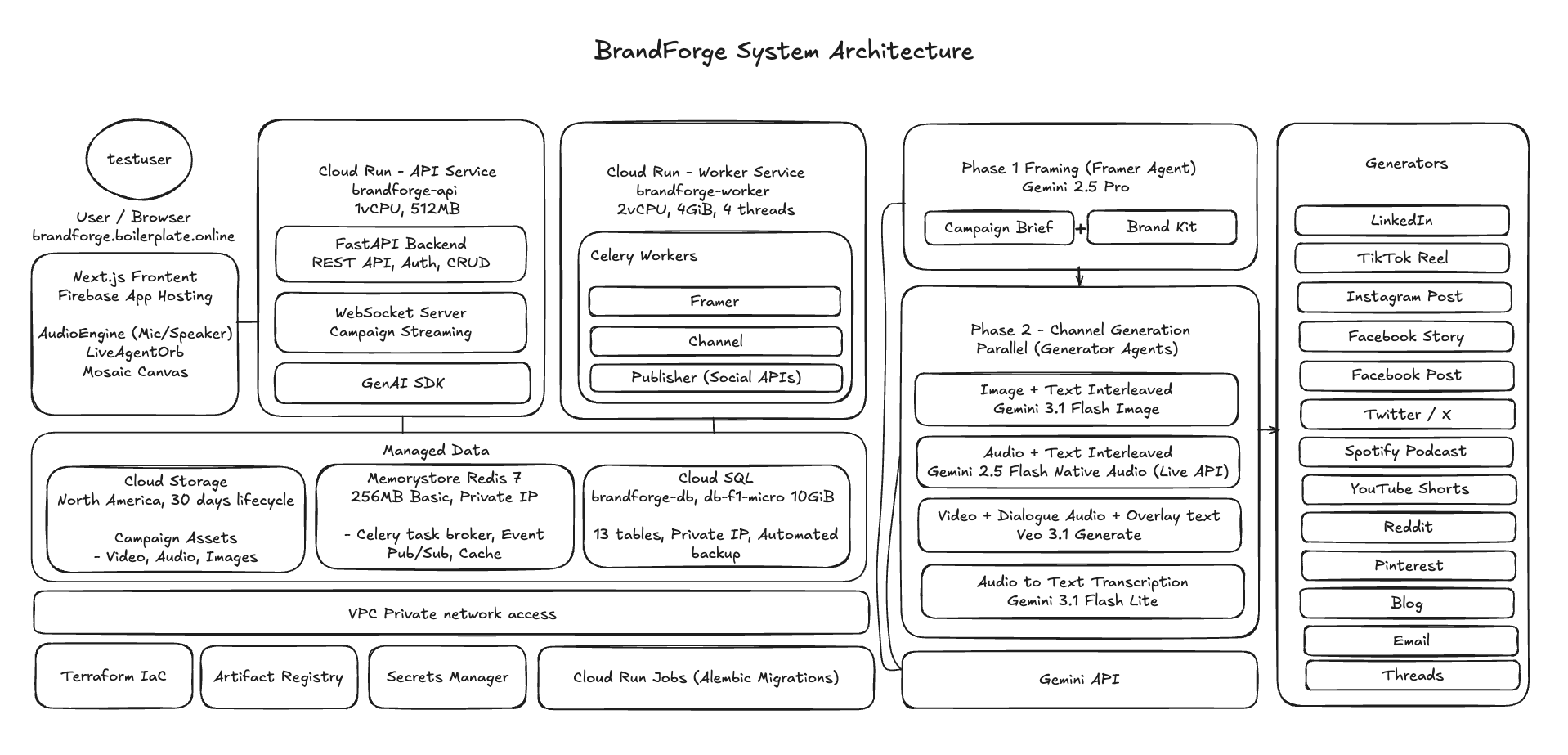

Leveraging Gemini multimodal models, the campaign creation pipeline has two stages:

Stage 1 - Framer Agent: First, the Framer Agent uses Gemini 3.1 Pro to analyze the brief and brand kit, then produces a structured creative plan with a headline, tagline, campaign story, and creative direction for all 14 channels, along with a hero image generated with Gemini 3 Pro Image (a.k.a. Nano Banana Pro). The 14 Channel Generator Agents are then dispatched in parallel.

Stage 2 - Channel Generator Agents:

- 9 image and text channels (LinkedIn, Twitter/X, Facebook Post, email, blog, Reddit, Pinterest, Threads, Instagram Post) use Gemini 3.1 Flash Image

- 4 video channels (YouTube, TikTok, Instagram Reel, Facebook Stories) use Veo 3.1 Generate

- 1 audio channel (Spotify) uses Gemini 2.5 Flash Native Audio to generate the spoken audio ad, while Gemini 2.5 Flash generates the cover image and automatic transcription for the podcast episode

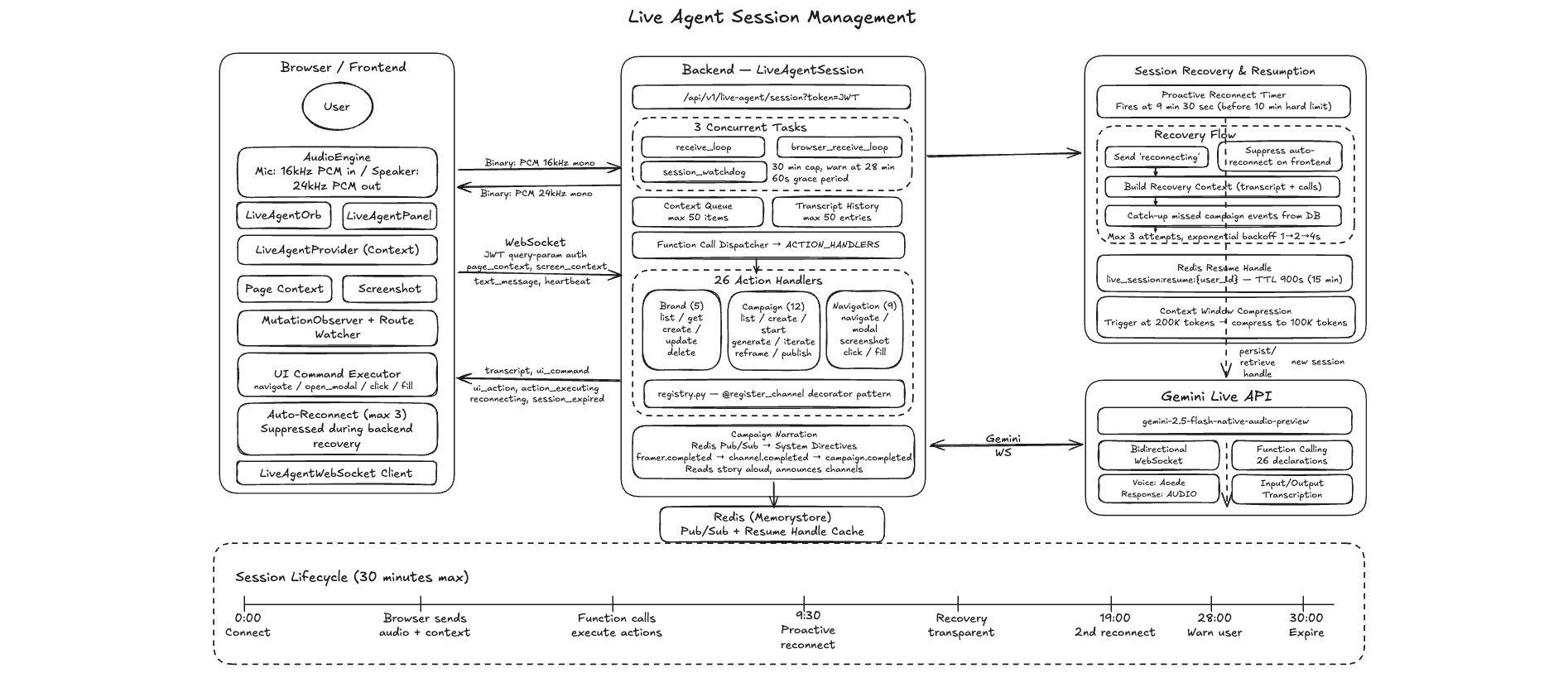
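The two-stage flow can be sketched roughly as follows. This is a minimal illustration, not the project's code: it uses `asyncio` in place of Celery, and `CreativePlan`, `frame_campaign`, `generate_channel`, and `run_pipeline` are hypothetical names whose bodies stand in for the real model calls.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class CreativePlan:
    """Hypothetical shape of the Framer Agent's output."""
    headline: str
    tagline: str
    directions: dict[str, str]  # channel name -> creative direction


async def frame_campaign(brief: str, channels: list[str]) -> CreativePlan:
    # Stage 1 stand-in: a real Framer Agent would call a Gemini model here.
    await asyncio.sleep(0)
    return CreativePlan(
        headline=f"Campaign: {brief}",
        tagline="One idea, every channel",
        directions={name: f"Adapt '{brief}' for {name}" for name in channels},
    )


async def generate_channel(name: str, plan: CreativePlan) -> dict[str, str]:
    # Stage 2 stand-in: each generator reads only the shared plan.
    await asyncio.sleep(0)
    return {
        "channel": name,
        "headline": plan.headline,
        "direction": plan.directions[name],
    }


async def run_pipeline(brief: str, channels: list[str]) -> list[dict[str, str]]:
    plan = await frame_campaign(brief, channels)
    # Fan out: every channel generator runs concurrently against the same plan.
    return await asyncio.gather(*(generate_channel(c, plan) for c in channels))
```

Calling `asyncio.run(run_pipeline("EcoHydrate Pro launch", ["linkedin", "tiktok", "spotify"]))` returns one result per channel, all sharing the headline produced in Stage 1.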

In addition, a voice-controlled Live Agent, built on the Gemini Live API with Gemini 2.5 Flash Native Audio, acts as our AI Creative Director. It exposes 26 functions across brand management, campaign lifecycle management, and UI navigation, letting you create brands, launch campaigns, iterate on specific channels, reframe entire campaigns, and navigate the app entirely through natural language conversation.

How We Built It

Backend

- FastAPI (Python 3.12) with asynchronous input/output

- PostgreSQL for data persistence

- Celery + Redis to queue and distribute generation tasks across all 14 channels

- Every AI call goes through the Google GenAI SDK, providing a unified client interface across all seven Gemini and AI models

- Redis pub/sub streams typed events from Celery workers to WebSocket endpoints

Frontend

- Next.js with TypeScript and Tailwind CSS

- Custom WebSocket client with exponential backoff reconnection to render assets in real time on a masonry mosaic canvas

- The Live Agent UI uses the Web Audio API for PCM audio capture and playback over a mixed binary/JSON WebSocket protocol, with a breathing orb animation that synchronises with voice activity
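The reconnection schedule behind exponential backoff is language-agnostic, so it is sketched here in Python even though the actual client is TypeScript. The function name `backoff_delay` and the base, factor, and cap values are illustrative assumptions, not the project's settings.

```python
def backoff_delay(
    attempt: int,
    base: float = 0.5,
    factor: float = 2.0,
    cap: float = 30.0,
) -> float:
    """Seconds to wait before reconnect attempt number `attempt` (0-based).

    The delay grows geometrically and is clamped at `cap` so that a long
    outage never produces an unbounded wait.
    """
    if attempt < 0:
        raise ValueError("attempt must be non-negative")
    return min(cap, base * factor ** attempt)
```

With these assumed defaults the first few waits are 0.5, 1, 2, 4, 8, and 16 seconds, after which every wait is capped at 30 seconds. A production client would usually add random jitter to avoid many clients reconnecting at the same instant.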

Architecture Highlights

Channel Registry Pattern: Each channel generator is a Python class that inherits from BaseChannelGenerator and is registered via a @register_channel("channel_name") decorator, which automatically wires it into the central registry.

Adding a new channel therefore requires only one new class, with no configuration file or factory updates.
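A decorator-based registry of this kind might look like the sketch below. Only `BaseChannelGenerator` and `@register_channel` come from the write-up; the `CHANNEL_REGISTRY` dictionary, the `generate` method signature, and the `LinkedInGenerator` class are assumptions made for illustration.

```python
from abc import ABC, abstractmethod


class BaseChannelGenerator(ABC):
    channel_name: str = ""

    @abstractmethod
    def generate(self, plan: dict) -> dict:
        """Produce this channel's asset from the shared creative plan."""


# Hypothetical central registry: channel name -> generator class.
CHANNEL_REGISTRY: dict[str, type[BaseChannelGenerator]] = {}


def register_channel(name: str):
    """Class decorator that records the generator under `name`."""
    def decorator(cls: type[BaseChannelGenerator]) -> type[BaseChannelGenerator]:
        if name in CHANNEL_REGISTRY:
            raise ValueError(f"channel {name!r} is already registered")
        cls.channel_name = name
        CHANNEL_REGISTRY[name] = cls
        return cls
    return decorator


@register_channel("linkedin")
class LinkedInGenerator(BaseChannelGenerator):
    def generate(self, plan: dict) -> dict:
        return {
            "channel": self.channel_name,
            "copy": f"{plan['headline']} (professional tone)",
        }
```

Because registration happens at class definition time, a dispatcher can look up `CHANNEL_REGISTRY["linkedin"]()` without any factory code knowing the class exists.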

Live Agent Session Management:

The Gemini Live API session manages bidirectional audio streaming (16 kHz mono in, 24 kHz mono out), dispatches function calls to 26 action handlers across three domains (brand, campaign, navigation), and narrates the campaign as it progresses via system directives. A Redis-backed session resumption handler enables seamless reconnection past the Gemini Live API's roughly 15-minute session limit, using proactive reconnection every 9.5 minutes and context window compression to support conversations of 30 minutes or more.
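The timing arithmetic behind proactive reconnection can be sketched as below. The 9.5-minute interval and 15-minute limit come from the description above; the function names are hypothetical, and the sketch covers only the scheduling decision, not the Redis storage or the Live API calls.

```python
RECONNECT_INTERVAL_S = 9.5 * 60   # proactive reconnect interval (from the write-up)
SESSION_LIMIT_S = 15 * 60         # approximate Live API session limit


def should_reconnect(session_started_at: float, now: float) -> bool:
    """True once the current session has been open for the reconnect interval."""
    return now - session_started_at >= RECONNECT_INTERVAL_S


def reconnect_offsets(conversation_s: float) -> list[float]:
    """Offsets in seconds at which a conversation of this length reconnects."""
    offsets = []
    t = RECONNECT_INTERVAL_S
    while t < conversation_s:
        offsets.append(t)
        t += RECONNECT_INTERVAL_S
    return offsets
```

For a 30-minute conversation, `reconnect_offsets(30 * 60)` gives three reconnects, at 570, 1140, and 1710 seconds, so no single underlying session ever approaches the 900-second limit.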

Infrastructure

- Local development: We host the PostgreSQL and Redis services via Docker Compose

- Live deployment: We use Cloud Run, Cloud SQL, Memorystore, and Cloud Storage on Google Cloud, with Terraform Infrastructure-as-Code

Challenges We Ran Into

1. Hex Color Codes Rendered as Text in Veo Video

We included hex values such as #2E8B57 in the video prompt, expecting the model to use them as a color guide. Instead, the Veo generation models rendered them as literal text visible in the video. So we built a hex-to-color-name sanitization layer that converts codes to human-readable names (e.g., #2E8B57 becomes Sea Green) before they are injected into the prompt. Now the Veo generation model renders the color accurately.
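One plausible way to build such a sanitization layer is to replace each hex code with the nearest entry in a table of named colors. The sketch below assumes a small hand-picked palette of CSS color values and a nearest-match rule by squared RGB distance; the names `NAMED_COLORS`, `nearest_color_name`, and `sanitize_prompt` are illustrative, and the project's actual mapping may differ.

```python
import re

# Small illustrative palette using standard CSS color values.
NAMED_COLORS = {
    "black": (0, 0, 0),
    "white": (255, 255, 255),
    "red": (255, 0, 0),
    "sea green": (46, 139, 87),
    "royal blue": (65, 105, 225),
    "gold": (255, 215, 0),
    "orange": (255, 165, 0),
    "slate gray": (112, 128, 144),
    "crimson": (220, 20, 60),
    "teal": (0, 128, 128),
}

# Matches six-digit hex codes such as #2E8B57.
HEX_RE = re.compile(r"#([0-9a-fA-F]{6})\b")


def nearest_color_name(hex_digits: str) -> str:
    """Return the palette name closest to the color in RGB space."""
    rgb = tuple(int(hex_digits[i:i + 2], 16) for i in (0, 2, 4))
    return min(
        NAMED_COLORS,
        key=lambda name: sum((a - b) ** 2 for a, b in zip(rgb, NAMED_COLORS[name])),
    )


def sanitize_prompt(prompt: str) -> str:
    """Replace every hex code in the prompt with a human-readable color name."""
    return HEX_RE.sub(lambda m: nearest_color_name(m.group(1)), prompt)
```

For example, `sanitize_prompt("Bottle in #2E8B57 on a #FFFFFF backdrop")` yields `"Bottle in sea green on a white backdrop"`, so no hex string is left for the video model to render as text.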

2. Live API Session Timeout After 15 Minutes

The Gemini Live API has an approximately 15-minute session timeout, but our creative direction sessions can last more than 30 minutes. To overcome this, we implemented a Redis-backed session resumption handler that proactively reconnects every 9.5 minutes, well before the timeout, and applies context window compression to preserve session continuity.

3. Transcript Artifacts from the Live API

Speech-to-text occasionally produces control tokens or noise transcriptions such as [EVENT], [MUSIC], and [APPLAUSE], as well as misdetected language fragments. We built a custom sanitization pipeline to strip these before they reach the transcript display or function call processing.
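A minimal version of the token-stripping step could look like this. It assumes the artifacts always appear as uppercase words in square brackets, as in the examples above; the pattern and the name `clean_transcript` are illustrative, and the sketch does not attempt to handle misdetected language fragments.

```python
import re

# Bracketed uppercase markers such as [EVENT], [MUSIC], [APPLAUSE].
CONTROL_TOKEN_RE = re.compile(r"\[[A-Z_ ]+\]")
WHITESPACE_RE = re.compile(r"\s+")


def clean_transcript(text: str) -> str:
    """Remove bracketed control tokens and collapse leftover whitespace."""
    without_tokens = CONTROL_TOKEN_RE.sub(" ", text)
    return WHITESPACE_RE.sub(" ", without_tokens).strip()
```

Running `clean_transcript("[MUSIC] Launch the  campaign [EVENT] now [APPLAUSE]")` returns `"Launch the campaign now"`, which is then safe to display or pass on for function call processing.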

4. Sharpening the Voice Personality

Voice interaction is fundamentally different from text chat. Users speak in fragments, interrupt themselves, and expect implicit context understanding. Getting the Creative Director persona to feel natural required a 238-line system instruction defining conversational flow rules, multi-step workflow protocols, when to ask follow-up questions versus proceed, and behavioural guardrails.

Accomplishments We're Proud Of

1. Orchestrating Seven Gemini Models Into One Coherent Pipeline

BrandForge coordinates multiple Gemini and Google AI models, each optimized for text, image, video, or audio generation, through the same Google GenAI SDK.

2. 14 Channels Generated in Parallel From a Single Brief

The two-stage architecture, with a Framer Agent for strategy and Channel Generator Agents for execution, ensures consistent creative direction across every channel while generation runs concurrently, allowing a full campaign to materialize in under 60 seconds.

3. A Voice-Controlled Creative Director That Works

Our Live Agent, with 26 function declarations, handles natural conversation, brand creation workflows, campaign generation with live narration, channel-specific iteration, and UI navigation entirely by voice, with session resumption that keeps the experience engaging across long-running workflows.

4. Arrival-Order Streaming Creates Compelling UX

Instead of waiting for all 14 channels to complete, each channel appears as soon as it is ready, filling the mosaic canvas organically and creating momentum that keeps users engaged in the creative process.
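Arrival-order delivery can be illustrated with `asyncio.as_completed`, which yields results as tasks finish rather than in submission order. This is a stand-in for the project's Celery and WebSocket path; `fake_generate` and the per-channel durations are made up to simulate generators of different speeds.

```python
import asyncio


async def fake_generate(channel: str, seconds: float) -> str:
    # Simulates a generator whose runtime depends on the channel type.
    await asyncio.sleep(seconds)
    return channel


async def stream_in_arrival_order(jobs: dict[str, float]) -> list[str]:
    tasks = [asyncio.create_task(fake_generate(c, s)) for c, s in jobs.items()]
    arrived = []
    for finished in asyncio.as_completed(tasks):
        # A real app would push each asset to the canvas at this point.
        arrived.append(await finished)
    return arrived
```

Given jobs where the video channel is slowest, for instance `{"youtube": 0.15, "linkedin": 0.05, "spotify": 0.10}`, the faster channels are delivered first regardless of the order in which they were submitted.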

What We Learned

1. Match the Model to the Task

We used Gemini Pro models for deep reasoning and complex tasks such as campaign strategy, Flash models for fast execution, image models for image generation, Veo for video, and native audio models for speech synthesis. The GenAI SDK makes switching easy because the same client is used with different model strings. However, output quality and latency vary significantly between models.

2. Multipart Prompting With Brand Assets Is Transformative

Sending the brand’s actual logo, product image, and key visual directive alongside the text prompt produces dramatically more brand-consistent output than trying to describe the brand in words. Gemini’s multimodal understanding sees the logo, understands the brand styles, and incorporates them naturally into the output.

3. Separate Planning From Execution

The Framer/Generator two-stage architecture ensures creative consistency through a shared strategic plan. The Framer Agent thinks deeply and plans the entire campaign, allowing the channel-specific generator agents to execute fast in parallel. The creative consistency comes from the shared plan, not from each channel generator agent's own interpretation of the brief.

4. Redis Pub/Sub Is the Right Abstraction for AI Streaming

It cleanly decouples generation from delivery. Each generator publishes events without knowing who is listening, and the WebSocket subscribes without knowing what is generating them. This separation made scaling and debugging straightforward.
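The decoupling can be shown with a tiny in-memory broker that mimics publish/subscribe semantics. This is only a stand-in for Redis: `InMemoryBroker`, the topic name, and the event fields are hypothetical, chosen to illustrate that publishers and subscribers share nothing but a topic and a serialized event.

```python
import json
from collections import defaultdict
from typing import Callable


class InMemoryBroker:
    """Toy pub/sub broker; a real deployment would use Redis instead."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[str], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[str], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the JSON-encoded event; return the number of receivers."""
        payload = json.dumps(event)
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


broker = InMemoryBroker()
received: list[str] = []

# Delivery side: knows the topic, not which generator produces events.
broker.subscribe("campaign:42", received.append)

# Generation side: knows the topic, not who (if anyone) is listening.
delivered = broker.publish(
    "campaign:42", {"type": "asset_ready", "channel": "linkedin"}
)
```

Publishing to a topic with no subscribers simply reaches zero receivers, which is why a generator can keep running even when no browser is connected.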

5. Voice Is a Fundamentally Different Interface

Users speak in fragments, change direction mid-sentence, and expect the agent to infer context. Building a natural voice-first experience required far more system instruction than text-based chat. Conversational flow, personality sharpening, when to ask versus when to act, and how to handle interruptions all shape whether the agent feels like a tool or a collaborator.

What's Next for BrandForge

1. Direct Platform Publishing

The OAuth-based publishing infrastructure is built. Platform integration with encrypted token storage, a pulsing job queue, and status tracking is already in place and tested with LinkedIn. Our next step is connecting the final mile to each platform-specific API, enabling one-click publishing from the mosaic canvas and natural language command publishing through the Live Agent.

2. Campaign Analytics

Tracking the performance of published campaigns and feeding engagement data back into the generation pipeline so BrandForge can learn which creative directions perform best for each brand and audience.

3. Collaborative Workspaces

Multi-user brand workspaces with role-based access, shared campaign libraries, and approval workflows, turning BrandForge from a solo creative tool into a collaborative team platform.

4. More Channels and Formats

The @register_channel decorator pattern makes adding new channels trivial. Examples of planned additions include Google Ads, WhatsApp Business, Snapchat, and long-form video content generation beyond the 8-second limit of current Veo generation models.

5. Fine-Tuned Brand Voice Models

Using brand kit history and approved campaigns to fine-tune generation so each brand's output becomes more distinctive and refined over time.

Built With

- celery

- cloudrun

- cloudsql

- docker

- fastapi

- firebase

- gemini

- github

- memorystore

- next.js

- postgresql

- python

- redis

- tailwind

- typescript
