-

The BlueSight device! You can see the 8MP camera on the front face of the device.

-

The device is based on the Raspberry Pi 3B+.

-

The device can be mounted on a backpack strap, as shown in this photograph.

-

The Neosensory Buzz haptic feedback interface. It contains four linear resonant actuators (LRAs) that stimulate mechanoreceptors in your skin.

-

The haptic feedback interface is worn on the wrist. Data from the Pi is sent to it via Bluetooth.

-

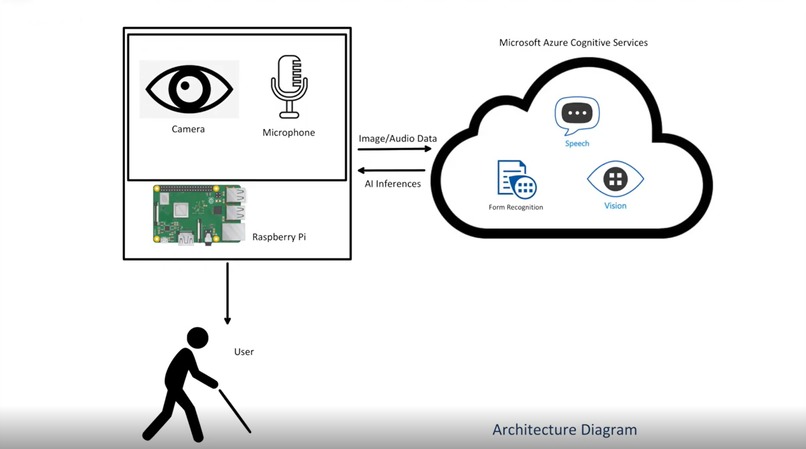

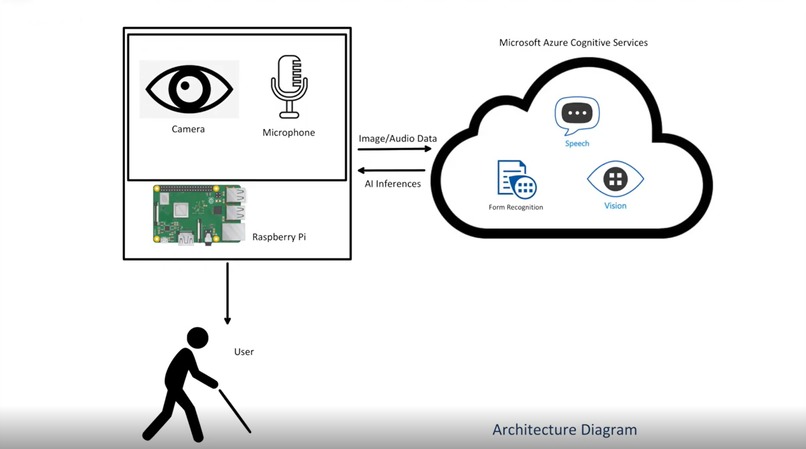

An architecture diagram of how the application works. Note: My first time making one of these; unusual stuff for a premed student.

Inspiration

As a premedical student, I’ve always been passionate about making a difference in the lives of others. I’ve particularly focused on developing solutions for fellow students and those with disabilities. When I came across the Microsoft Azure Hack of Accessibility, I thought it was the perfect opportunity to push myself to create something that could help both groups. In the past, I developed a tech-based solution for people with prosthetic arms, and this time I wanted to build something for the blind. I got my first bit of inspiration from my peers: one of my friends had previously worked on an assistant for the blind, and while it worked, I couldn’t help but feel that the device was far too large and impractical to carry around.

That’s when I came up with the idea of running computer vision inference in the cloud via Microsoft Azure. Doing so let me keep the device small, low-power, inexpensive, and much more practical for the user. After drafting some initial ideas, I created a prototype that combines a voice assistant with a haptic feedback interface (a device called the Neosensory Buzz) to assist blind students. I was lucky enough to get in touch with an amazing individual in the blind community, and she helped me connect with more people there. I gathered feedback on my idea from multiple sources over several days and polished it all up. A week later, BlueSight was born.

What it does

BlueSight is an assistant for blind students that uses a combination of computer vision, document processing, and haptic feedback to make both the learning process and physical places of education more accessible.

It accomplishes these tasks using several Azure services. Through Azure AI Form Recognizer, students can process handwritten or typed notes, documents, or homework and search them for keywords, which can help them study more efficiently. Through Azure Computer Vision, students can gain visual context about their environment, which could aid in navigating busy college campuses. Students can also be guided to specific objects through a combination of Azure Computer Vision and haptic feedback from a device called the Neosensory Buzz. The Buzz was developed by neuroscientists at the Baylor College of Medicine, and it can receive data from a computer or microcontroller via Bluetooth. Finally, all of these modules are tied together through the Azure Speech SDK, which handles communication between BlueSight and the user through both speech-to-text and text-to-speech.
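To make the keyword search concrete, here is a minimal sketch of the step that would run after text extraction. It assumes the recognized text has already been pulled out of the document as a list of lines; the function names `find_keyword` and `spoken_summary` and the sample notes are hypothetical, and this is not necessarily how BlueSight implements it.

```python
def find_keyword(lines, keyword):
    """Return (line_number, text) pairs for lines that mention the keyword.

    Matching is case-insensitive; line numbers start at 1.
    """
    needle = keyword.casefold()
    return [
        (number, text)
        for number, text in enumerate(lines, start=1)
        if needle in text.casefold()
    ]


def spoken_summary(hits, keyword):
    """Build a short sentence that could be handed to a text-to-speech engine."""
    if not hits:
        return f"I could not find {keyword} in this document."
    line_numbers = ", ".join(str(number) for number, _ in hits)
    return f"I found {keyword} on {len(hits)} line(s): {line_numbers}."


# Hypothetical text lines, standing in for the output of document recognition.
notes = [
    "Mitosis has four phases.",
    "Meiosis produces gametes.",
    "During MITOSIS the nucleus divides.",
]

hits = find_keyword(notes, "mitosis")
print(spoken_summary(hits, "mitosis"))
```

Returning line numbers along with the text keeps the spoken answer short while still letting the user ask for a specific line to be read back.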

How I built it

BlueSight is built on the Raspberry Pi 3B+ running the 64-bit version of Raspbian OS. A small portable battery powers the device. Haptic feedback is delivered via Bluetooth through a Neosensory Buzz worn on the user’s wrist. Small Bluetooth earbuds with a microphone let the user speak to BlueSight and hear its voice responses.

On the software side, the program is written in Python. It uses Azure Computer Vision, Form Recognizer, and the Speech SDK. I also used the Bleak library to communicate with the Neosensory Buzz over BLE.

Challenges I ran into

This was a pretty difficult project to pull off. I had trouble creating an algorithm that could relay the position of the queried object to the user in a way that was both effective and easy to understand. After consulting some people from the blind community, I decided that simplicity was best. I created a system where the user is alerted whether the object is straight ahead, to their right, or to their left via one of three unique vibrational patterns on their wrist. If the object is to their left, the pattern sweeps leftward; if it is to their right, the pattern sweeps rightward. The vibrational intensity of the pattern corresponds to the distance of the object from the user. This allows for intuitive perception of an object’s location in space relative to the user.
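The pattern logic described above can be sketched roughly as follows. This is an illustration under several assumptions, not the actual BlueSight code: all names are hypothetical, the share of the camera frame that the object's bounding box fills is used as a stand-in for distance, motor intensities are assumed to be on a 0 to 255 scale, motor index 0 is assumed to sit on the left side of the wrist, and the "ahead" pattern is assumed to be a single pulse of all four motors.

```python
from __future__ import annotations

NUM_MOTORS = 4        # the Buzz has four actuators
MAX_INTENSITY = 255   # assumption: 8-bit intensity scale
MIN_INTENSITY = 40    # assumption: weakest level that is still easy to feel


def direction_of(box_center_x: float, frame_width: float,
                 center_band: float = 0.2) -> str:
    """Classify an object as 'left', 'ahead', or 'right'.

    center_band is the fraction of the frame width, centered on the
    middle of the image, that counts as straight ahead.
    """
    offset = box_center_x / frame_width - 0.5   # -0.5 far left, +0.5 far right
    if abs(offset) <= center_band / 2:
        return "ahead"
    return "left" if offset < 0 else "right"


def intensity_for(box_area_fraction: float) -> int:
    """Map apparent size (a rough distance proxy) to a vibration intensity.

    A larger share of the frame means a closer object and a stronger buzz.
    """
    clamped = min(max(box_area_fraction, 0.0), 1.0)
    return round(MIN_INTENSITY + (MAX_INTENSITY - MIN_INTENSITY) * clamped)


def sweep_frames(direction: str, intensity: int) -> list[list[int]]:
    """Build the frames of a pattern; each frame lists the four motor levels."""
    if direction == "ahead":
        # Assumed pattern: pulse every motor once, then switch them off.
        return [[intensity] * NUM_MOTORS, [0] * NUM_MOTORS]
    # A rightward sweep lights motors 0..3 in turn; leftward goes 3..0.
    order = range(NUM_MOTORS) if direction == "right" else reversed(range(NUM_MOTORS))
    frames = []
    for motor in order:
        frame = [0] * NUM_MOTORS
        frame[motor] = intensity
        frames.append(frame)
    return frames


# Example: an object detected near the right edge of a 1000-pixel-wide frame.
pattern = sweep_frames(direction_of(900, 1000), intensity_for(0.25))
```

Keeping the direction, intensity, and frame-building steps separate makes each one easy to tune with user feedback, for example widening `center_band` if "ahead" triggers too rarely.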

Accomplishments that I’m proud of

I’m proud of having created something that I think is the start of a truly viable and effective solution for the blind. The device could use some work in specific areas, but the potential for impact is definitely there. I’m looking forward to working on it some more after the hackathon ends!

What I learned

This was my first time working with Azure, and going into this hackathon I thought it would be incredibly overwhelming to learn. To my surprise, it was actually quite simple to use. I had no trouble understanding what was going on, and I ended up learning a ton about how to leverage Azure services to add AI to my projects. I’ll definitely be using it in future projects.

What's next for BlueSight

Because of this hackathon, I gained access to a very helpful and friendly community of blind individuals. I think that it would be an absolute waste to forget about this project after this hackathon. I’ll definitely be working with the blind community further to improve this device.

Built With

- azure

- bleak

- neosensory-buzz

- python

- raspberry-pi
