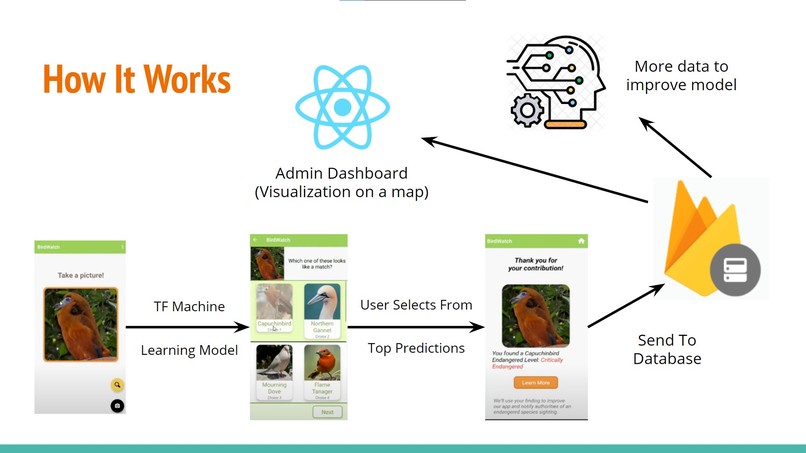

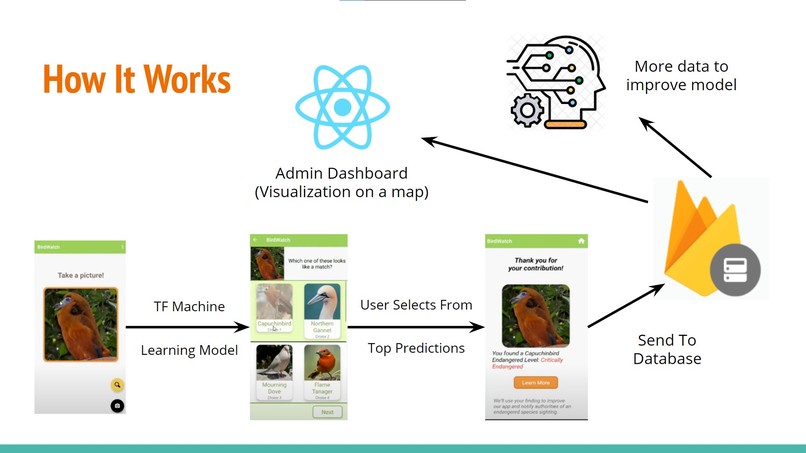

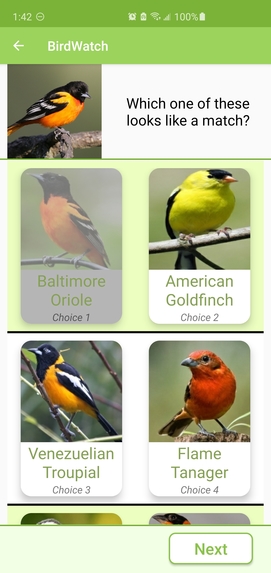

Flowchart of how our system works

The user takes a picture of the bird, and it is displayed in the app.

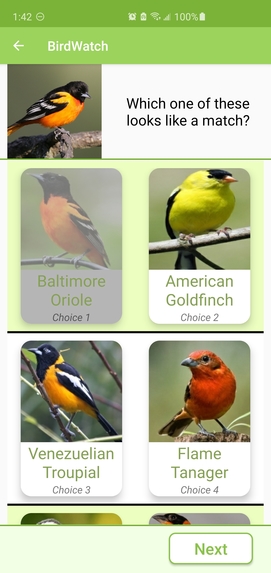

Our app uses our AI model to propose classifications for the input image, ranked by likelihood. The user chooses the closest match.

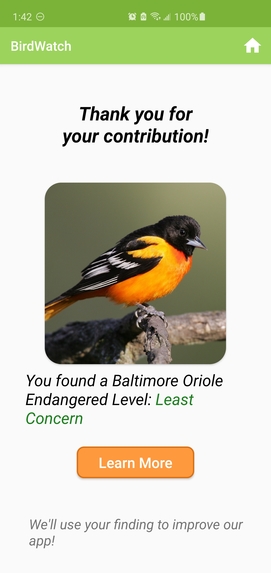

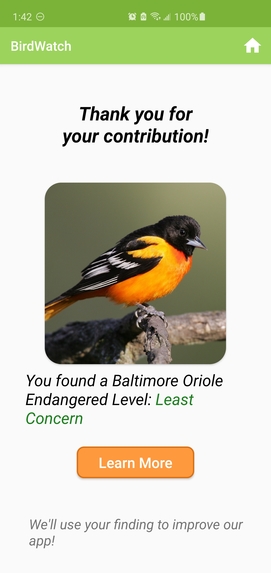

The app returns the IUCN status of the bird and a link for more info. Authorities are alerted and all the bird data is sent to our database.

Inspiration

According to the United Nations, approximately 150 species go extinct every day. The leading causes of extinction are the loss of habitat and overhunting. Before human interference, this extinction rate was estimated to be 1000 to 10000 times lower. The National Wildlife Federation says the best ways to protect endangered species are to raise awareness and identify endangered species’ habitats. However, a big challenge faced by experts working to preserve endangered species and protect their habitats is the difficulty of finding the species, with their ever-dwindling numbers. Moreover, existing animal identification apps only identify animals from images, but they don’t educate users or alert local authorities if the user has found an endangered species.

What it does

BirdWatch is a fast, easy-to-use app that lets eco-tourists classify the bird species they find, learn how endangered those species are, and crowdsource their sightings to improve the app and notify local authorities of endangered species in an area. It uses a deep learning model based on the MobileNetV2 architecture to classify the species of a bird photographed with the phone camera and displays the top 5 matches. With a tap, the user confirms which bird it is and then receives the bird's endangerment level and a link to additional articles. In this way, BirdWatch enriches ecotourism, teaches users about their environment, and raises awareness through sustainable experiences.

Behind the scenes, the bird's image, species, and location are sent to Firebase, where the model will continually train and improve on an ever-growing, crowdsourced dataset. Adding these sightings to published data makes the model more representative of a wide variety of bird images. Local authorities can use the web app we created to see a map with markers at the locations where birds were found. If an endangered bird is found, authorities are notified and know the area where it was located, so they can preserve the natural environment and protect these species.
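The alerting step described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the app's actual code: the `Sighting` record, its field names, the `ALERT_STATUSES` set, and the `sightings_needing_alert` function are all hypothetical, and alerting on the three IUCN "threatened" categories (Vulnerable, Endangered, Critically Endangered) is an assumed policy. The sample species are placeholders, not real data.

```python
from dataclasses import dataclass

# Assumption: authorities are alerted for the IUCN "threatened" categories
# (VU = Vulnerable, EN = Endangered, CR = Critically Endangered).
ALERT_STATUSES = {"VU", "EN", "CR"}


@dataclass(frozen=True)
class Sighting:
    """One crowdsourced record (hypothetical field names)."""
    species: str
    iucn_status: str  # IUCN category code, e.g. "LC" or "EN"
    latitude: float
    longitude: float
    timestamp: str    # ISO 8601 string


def sightings_needing_alert(sightings):
    """Return the sightings whose status is in ALERT_STATUSES, in input order."""
    return [s for s in sightings if s.iucn_status in ALERT_STATUSES]


# Placeholder records for illustration only.
sample = [
    Sighting("Placeholder species A", "LC", 40.0, -75.0, "2020-01-01T12:00:00Z"),
    Sighting("Placeholder species B", "EN", 41.0, -74.0, "2020-01-01T13:00:00Z"),
]
flagged = sightings_needing_alert(sample)
```

The same filter could drive both the notification and the map markers that authorities see, since each flagged record already carries its coordinates.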

How we built it

We used a dataset of 225 bird species and trained a custom model with a modified MobileNetV2 architecture to identify the species. We then converted the model to a TensorFlow Lite model and exported it to the Android app. To get the endangerment level of each species, we wrote a web scraper that retrieves its IUCN status. We linked the model's class labels to the scraper output in a LinkedHashMap.

In the Android app, we took the user's picture as input and ran it through the model. This gave us, for each of the 225 species, the probability that the bird belonged to that species. We used these probabilities to generate a list of the top 5 candidates and displayed their information in a ListView so that the user could select one. After the user selected a bird, we used the LinkedHashMap to look up its endangered status and displayed it with additional information.

We implemented a feature that retrieves the location (with the user's permission), the time, and the bird classification and adds them to our Firebase database. In our web app, we made API calls to the database to retrieve this data and plot it on an interactive map with filtering.
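The ranking and lookup steps can be illustrated with a short Python sketch. The app itself did this in Java with a LinkedHashMap and a ListView; here a plain `dict` stands in for the map, and the function name `top_candidates`, the label names, and the `"unknown"` fallback are assumptions made for the example.

```python
import heapq


def top_candidates(probabilities, labels, status_by_species, k=5):
    """Return up to k (species, probability, status) tuples, most likely first.

    probabilities[i] is the model's score for labels[i]. Species missing from
    status_by_species get the placeholder status "unknown" (an assumption).
    """
    if len(probabilities) != len(labels):
        raise ValueError("probabilities and labels must have the same length")
    # Pick the indices of the k largest scores without sorting the whole list.
    best = heapq.nlargest(k, range(len(probabilities)),
                          key=lambda i: probabilities[i])
    return [
        (labels[i], probabilities[i], status_by_species.get(labels[i], "unknown"))
        for i in best
    ]


# Placeholder labels and scores for illustration only.
labels = ["species_a", "species_b", "species_c"]
scores = [0.1, 0.7, 0.2]
statuses = {"species_a": "LC", "species_b": "EN"}
ranked = top_candidates(scores, labels, statuses, k=2)
```

With 225 classes and `k=5`, selecting the largest entries this way avoids a full sort, although at this size either approach would be fast enough on a phone.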

Challenges we ran into

Our biggest challenge was exporting the machine learning model so that we could use it to classify images in our mobile app. At first we used PyTorch, but we had problems exporting the model to a mobile-compatible format. We then switched to TensorFlow, but training failed at first because Google Colab, a free hosted Jupyter notebook service, had a RAM limit that kept us from creating the TensorFlow Lite model at the level we wanted. We also had trouble figuring out how to export certain models because the documentation was difficult to find. We solved this by training and exporting our model from Google Cloud instead.

Accomplishments that we're proud of

- We're proud of getting a working model loaded into Android Studio and having it predict with the same accuracy it achieved before export

- We're proud of creating a mobile application that takes a picture of a bird as input, classifies it, displays the results to the user, and crowdsources the data so that the app can continue to learn

- We're proud of the web scraping application we used to load in the conservation status of each bird rather than having to manually look up and input the data for 200+ birds

- We're proud of training a machine learning model that can classify so many different classes with such good accuracy

- We're proud of building a web application that brings all the data together in a clean and sleek way

What we learned

- We learned different ways to do transfer learning, which makes it much easier to train a model on a large number of classes

- We learned how to make our Android app interact with Firebase databases and how to send the data from the model's prediction to the database

- We learned how to take the list of confidence values returned by the model's prediction and turn it into a picture array

- We learned how to integrate a Firebase database with React.js

What's next for BirdWatch

To build on the app, we can train the model on other animal species as well so that it can identify a broader range of endangered species. Additionally, using Google App Engine would allow the model to be hosted and trained in the cloud, with automatic scaling as more users crowdsource their image data. We could also improve the web interface to provide more relevant information and statistics.

Built With

- android-studio

- firebase

- java

- keras

- react

- tensorflow
