BiaSight is an AI-powered tool that analyzes websites for gender bias, empowering content creators to build more inclusive online spaces. It uses Gemini via Vertex AI to score content in four categories (language, representation, stereotyping, and framing) and provides actionable suggestions to improve it. Think of it as PageSpeed Insights for inclusivity.

Part of the project was also to make it deployable and to create a live version. Try it yourself: biasight.com

Keep in mind: this is a prototype. I applied a daily limit to control GCP costs. In case of exceptions, get a coffee and try again ☕️.

All code is open-source on GitHub. The project uses 2 separate repositories:

- GitHub repository for the backend: https://github.com/vojay-dev/biasight

- GitHub repository for the frontend: https://github.com/vojay-dev/biasight-ui

tl;dr for the judges: instructions for local testing are in the very last section ⬇️

💡 Inspiration / Motivation

Words matter. In a world where gender inequality persists despite decades of progress, BiaSight addresses one of the most pervasive yet often overlooked aspects of discrimination: the language we use in our digital spaces. BiaSight uses the power of Google's cutting-edge AI, including Gemini, to analyze and improve the inclusivity of online content.

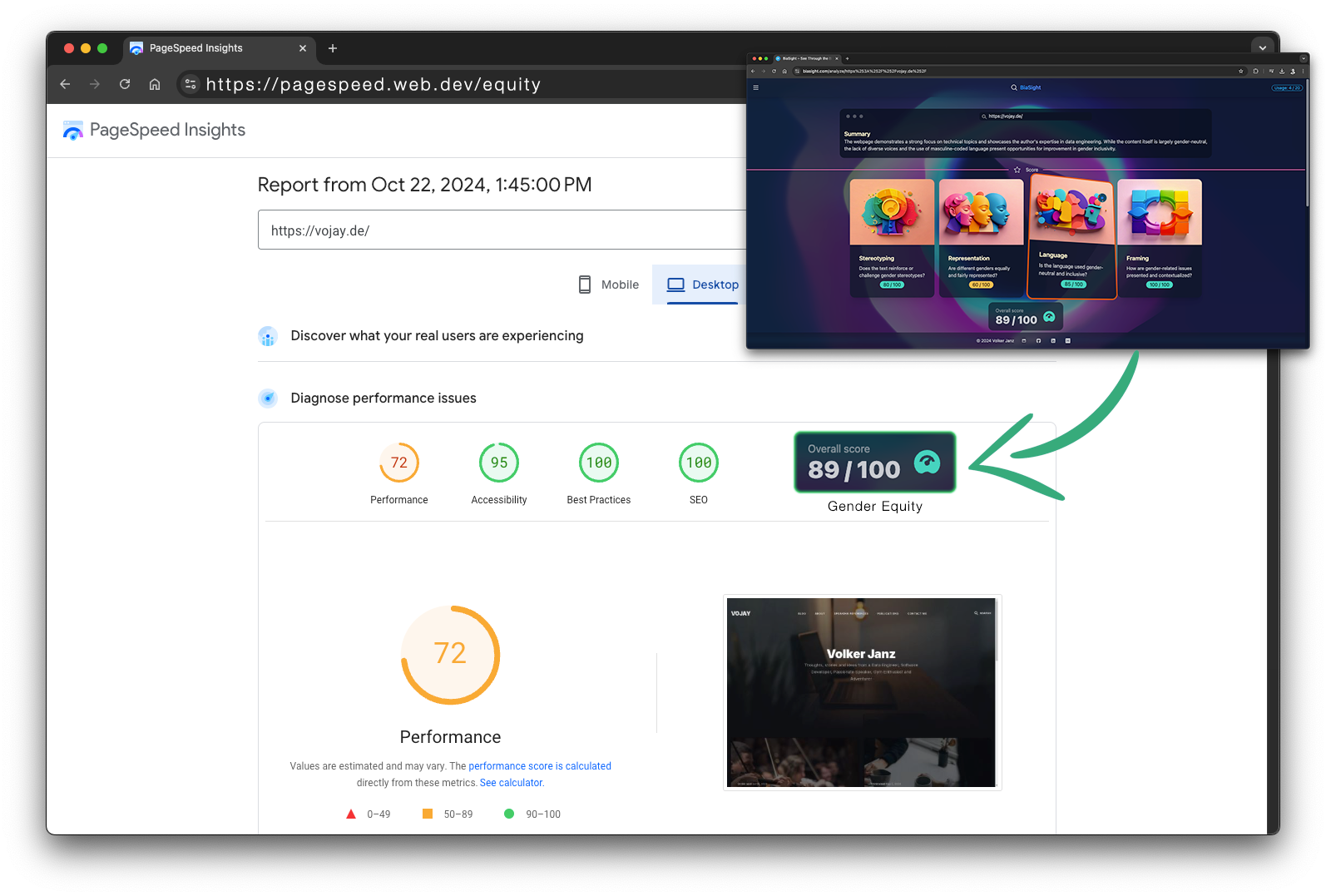

While content creators and website authors often focus on performance, usability, and visual appeal, the role words play in discrimination against women and girls, and therefore in equality, is frequently underestimated. BiaSight aims to change this by providing an intuitive, AI-driven analysis of web content across various equality categories, much like how Google PageSpeed Insights has become an indispensable tool for web performance optimization.

The vision of BiaSight is to make gender-inclusive language as integral to web development as responsive design or SEO, and to inspire creators to drive change. Remember, words matter. They shape perceptions, influence behaviors, and can either reinforce or challenge the gender inequalities that persist in our society.

Imagine a world where, alongside performance, accessibility, and SEO, gender equity is considered an essential part of web development. Envision a future where tools like Google PageSpeed Insights seamlessly integrate the BiaSight score, making gender-inclusive language the default for web content creation. This integration would serve as a powerful reminder that words have a profound impact on shaping perceptions and promoting equality.

For more context, please also read UN Sustainable Development Goal 5: "Achieve gender equality and empower all women and girls".

The 2024 UNESCO gender report "Technology on Her Terms" reveals a clear digital divide: 244 million fewer women than men have internet access, and women hold less than 25% of jobs in science, technology, engineering, and mathematics (STEM). This underrepresentation perpetuates harmful stereotypes in online content and even AI algorithms. For instance, AI-generated texts often describe women as "models" or "waitresses", while associating men with "business" and "career". These biases create a vicious cycle, discouraging girls from pursuing STEM fields and, in turn, shaping the very technologies that reinforce these stereotypes.

BiaSight aims to break this cycle by empowering content creators to identify and mitigate gender bias in their work—a concern easily overlooked in today's world of readily available AI content generation tools. By raising awareness of subtle prejudices, BiaSight helps build a more inclusive digital world that accurately reflects and empowers all genders.

Explore the full report to learn how we can create technology truly on her terms.

🎮 What it does

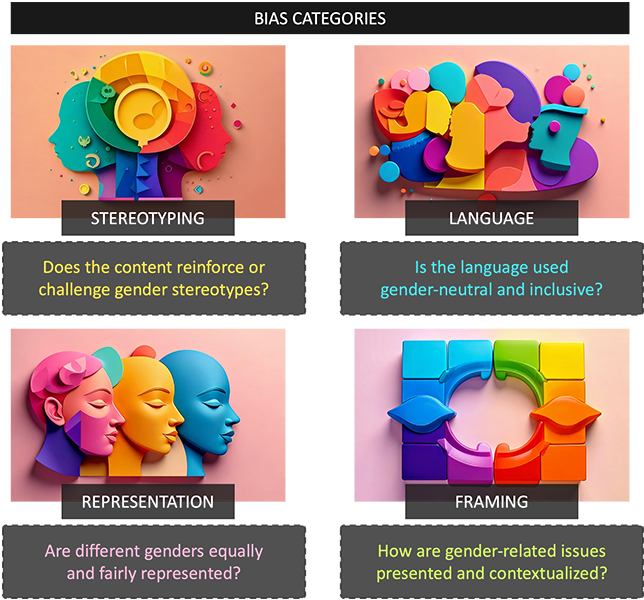

BiaSight empowers content creators to build a more inclusive online world by analyzing websites for gender bias and equity. Simply enter a website URL, and BiaSight, powered by Google Gemini, will deliver a comprehensive report highlighting potential areas for improvement. BiaSight analyzes websites across four key categories:

- Stereotyping: Are traditional gender roles being perpetuated? BiaSight examines how genders are portrayed in roles, occupations, behaviors, and characteristics to identify instances where harmful stereotypes may be present.

- Representation: Is there a balanced and diverse representation of genders on the webpage? BiaSight considers the frequency of male vs. female mentions, the visibility of women in images, and the inclusion of diverse perspectives and experiences.

- Language: Does the language used avoid gender bias? BiaSight detects gendered language, loaded words with stereotypical connotations, and the overall tone towards different genders. The analysis highlights opportunities to use more inclusive language.

- Framing: How are gender-related issues presented on the webpage? BiaSight checks for biases in perspective, looking for instances where the framing reinforces existing power structures or minimizes the experiences of any gender.

The report offers a detailed summary of identified biases, highlights positive aspects of the website, and provides feedback and actionable suggestions for improvement in each category.

To keep the evaluation reproducible, the overall score is calculated deterministically in the backend rather than by the LLM, which makes it less dependent on the AI's responses. The FastAPI backend is also designed to be easily integrated into other platforms. Imagine using BiaSight as a Chrome extension or even as a new element within Google PageSpeed Insights!

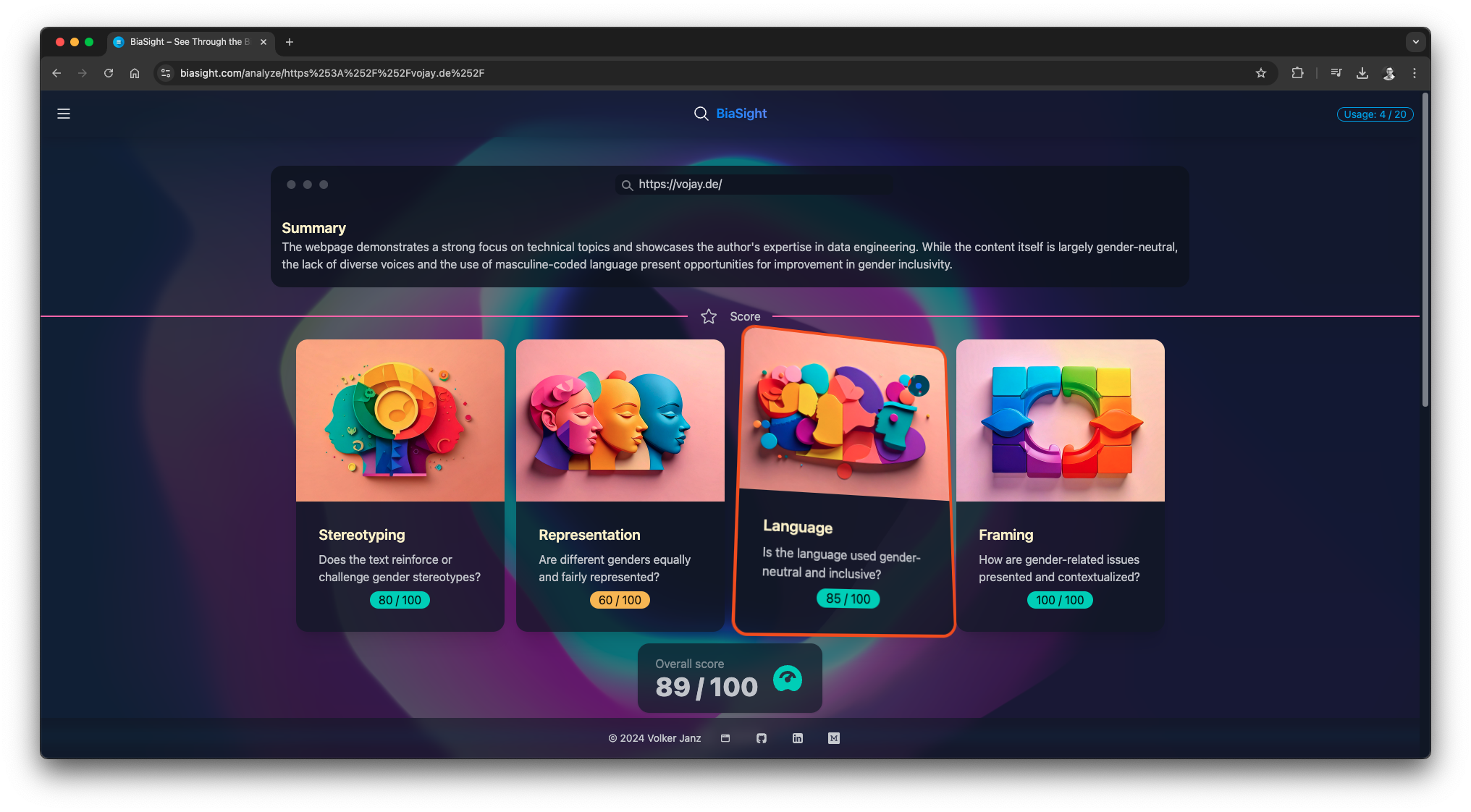

💻 Some examples:

Score

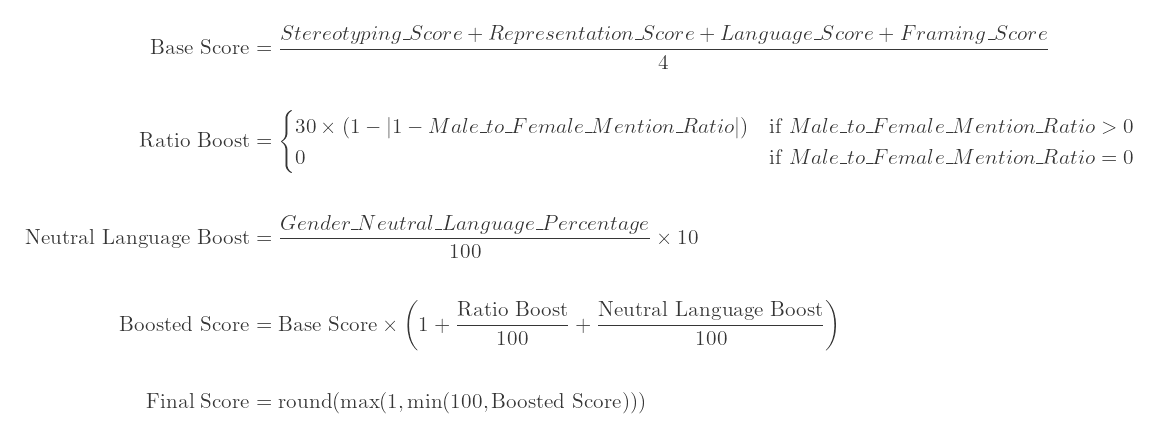

First, Gemini assigns a score to each of the four bias categories: stereotyping, representation, language, and framing. The base score is the average of these four category scores. Two boosts are then applied:

- Ratio Boost: A bonus is added to the base score based on the male-to-female mention ratio. The closer the ratio is to 1 (meaning equal mentions), the higher the bonus, with a maximum boost of 30% when the ratio is exactly 1.

- Neutral Language Boost: A bonus is added based on the percentage of gender-neutral language used in the text. The higher the percentage of gender-neutral language, the higher the bonus, with a maximum boost of 10% when the language is 100% gender-neutral.

These boosts aim to acknowledge and reward content with more balanced gender representation and inclusive language. Finally, the overall score is clamped to the range from 1 (extremely biased) to 100 (completely free of bias).
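The exact boost formulas are not spelled out above, so the following is only a minimal sketch under stated assumptions: the ratio boost scales linearly with min(r, 1/r) (so a ratio of 0.5 and a ratio of 2.0 count as equally unbalanced), the neutral-language boost scales linearly with the percentage, both boosts are added to a multiplier on the base score, and the result is rounded and clamped to 1..100. The function names are hypothetical, not BiaSight's actual code.

```python
from statistics import mean

MAX_RATIO_BOOST = 0.30    # 30% maximum bonus at a 1:1 male-to-female mention ratio
MAX_NEUTRAL_BOOST = 0.10  # 10% maximum bonus at 100% gender-neutral language


def ratio_balance(ratio: float) -> float:
    """Map a male-to-female mention ratio to [0, 1], where 1.0 means equal mentions.

    Assumption: min(r, 1/r) is used so that 0.5 and 2.0 are treated as equally unbalanced.
    """
    if ratio <= 0:
        return 0.0
    return min(ratio, 1 / ratio)


def calculate_score(
    category_scores: list[int], mention_ratio: float, neutral_percentage: float
) -> int:
    """Hypothetical overall score: average of category scores, boosted, clamped to 1..100."""
    base = mean(category_scores)
    ratio_boost = MAX_RATIO_BOOST * ratio_balance(mention_ratio)
    # Clamp the percentage defensively in case the LLM returns a value outside 0..100.
    neutral_share = min(max(neutral_percentage, 0.0), 100.0) / 100
    neutral_boost = MAX_NEUTRAL_BOOST * neutral_share
    boosted = base * (1 + ratio_boost + neutral_boost)
    return min(max(round(boosted), 1), 100)


# Stereotyping, representation, language, framing scores from the example response
print(calculate_score([95, 75, 85, 90], mention_ratio=0.1, neutral_percentage=80.0))  # prints 96
```

With the category scores, ratio, and neutral-language percentage from the example API response further down, this sketch yields 96, the same overall_score as in that response; that agreement does not confirm these are the formulas BiaSight actually uses.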

⚙️ How it was built

Tech stack

Backend

- Python 3.12 + FastAPI for API development

- Jinja templating for modular prompt generation

- Pydantic for data modeling and validation

- Poetry for dependency management

- Docker for deployment

- Gemini via VertexAI for evaluating web content

- BeautifulSoup for extracting content from web pages

- Ruff as linter and code formatter together with pre-commit hooks

- GitHub Actions to automatically run tests and linter on every push

Frontend

- VueJS 3.4 for frontend development

- Vite for frontend tooling

- Tailwind CSS as a utility-first CSS framework

- daisyUI as a component library for Tailwind CSS

System overview

Backend

The BiaSight backend is a powerful engine built with FastAPI and Python. It leverages BeautifulSoup to extract readable content from web pages, preparing it for analysis. Using Jinja templating, prompt generation is modularized, allowing seamless integration of web content into advanced prompts for Google’s Gemini LLM.
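A minimal sketch of that extraction-plus-templating step, assuming a simple hypothetical prompt template (BiaSight's actual templates and helper names are not reproduced here): BeautifulSoup drops script and style elements and returns the visible text, which Jinja then inserts into the prompt.

```python
from bs4 import BeautifulSoup
from jinja2 import Template

# Hypothetical prompt template; the real BiaSight templates are more detailed.
PROMPT_TEMPLATE = Template(
    "Analyze the following webpage text for gender bias.\n"
    "Return your analysis in JSON format.\n\n"
    "Webpage text:\n{{ text }}"
)


def extract_readable_text(html: str) -> str:
    """Remove non-content elements and collapse the remaining text into one string."""
    soup = BeautifulSoup(html, "html.parser")
    # Scripts, styles and noscript blocks carry no readable content for the analysis.
    for tag in soup(["script", "style", "noscript"]):
        tag.decompose()
    return soup.get_text(separator=" ", strip=True)


def render_prompt(html: str) -> str:
    """Fill the prompt template with the readable text of a page."""
    return PROMPT_TEMPLATE.render(text=extract_readable_text(html))


page = "<html><head><style>p {}</style></head><body><h1>Team</h1><p>Our engineers</p></body></html>"
print(render_prompt(page))
```

Keeping the prompt in a template rather than in Python string concatenation lets the wording change without touching the analysis code.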

To ensure both accurate and deterministic results, Gemini is configured to use JSON mode for structured output and a low-temperature setting is applied to minimize variability in its generation. Pydantic ensures robust data modeling and validation, while Poetry manages dependencies efficiently. Docker streamlines deployment, and Ruff, combined with GitHub Actions, maintains high code quality through automated testing and linting.

For optimal performance and user experience, the backend employs a TTLCache, reducing analysis time by caching recent results. This architecture fosters easy and secure extensibility, allowing for future enhancements and integrations as BiaSight continues to evolve.

Frontend

The frontend is powered by Vue 3 and Vite, supported by daisyUI and Tailwind CSS for efficient frontend development. Together, these tools provide users with a sleek and modern interface for seamless interaction with the backend.

Backend documentation

Github repository for backend: https://github.com/vojay-dev/biasight

One of the main challenges in today's AI/ML projects is data quality. This applies not only to ETL/ELT pipelines, which prepare datasets for model training or prediction, but also to the AI/ML application itself. Python, for example, usually enables Data Engineers and Scientists to get a reasonable result with little code, but because it is (mostly) dynamically typed, Python lacks data validation when used naively.

That is why, in this project, I combined FastAPI with Pydantic, a powerful data validation library for Python. The goal was to keep the API lightweight but strict when it comes to data quality and validation. Instead of plain dictionaries, the BiaSight API consistently uses custom classes inheriting from Pydantic's BaseModel. For example, this is the model for the result of an analysis:

```python
from typing import Optional

from pydantic import BaseModel


class AnalyzeResult(BaseModel):
    summary: str
    overall_score: Optional[int] = None  # calculated in Python, not returned by Gemini
    stereotyping_feedback: str
    stereotyping_score: int
    stereotyping_example: str
    representation_feedback: str
    representation_score: int
    representation_example: str
    language_feedback: str
    language_score: int
    language_example: str
    framing_feedback: str
    framing_score: int
    framing_example: str
    positive_aspects: str
    improvement_suggestions: str
    male_to_female_mention_ratio: float
    gender_neutral_language_percentage: float
```

The combination of up-to-date Python features and libraries such as FastAPI, Pydantic, and Ruff keeps the backend concise while remaining stable, and the strict validation ensures that the LLM output has the expected structure and types.

Makefile

The project includes a Makefile with common tasks such as setting up the virtual environment with Poetry, running the service locally and within Docker, running tests and the linter, and more. Simply run:

```shell
make help
```

to get an overview of all available tasks.

Configuration

Prerequisite

- GCP project with the Vertex AI API enabled and access to Gemini (recommended: gemini-1.5-flash-002 or gemini-1.5-pro-002)

- JSON credentials file for a GCP service account with Vertex AI permissions

The API is configured via environment variables. If a .env file is present in the project root, it will be loaded automatically. You can copy the .env.dist file from the repository as a basis.

The following variables must be set:

- GCP_PROJECT_ID: The ID of the Google Cloud Platform (GCP) project used for Vertex AI and Gemini.

- GCP_LOCATION: The location used for prediction processes.

- GCP_SERVICE_ACCOUNT_FILE: The path to the service account file used for authentication with GCP.

Gemini model

The default model used for Gemini is gemini-1.5-flash-002. To use a different model, adjust GCP_GEMINI_MODEL in the .env file. For this use case, the Flash model delivers good and cost-efficient results.

Project setup

(Optional) Configure poetry to use in-project virtualenvs:

```shell
poetry config virtualenvs.in-project true
```

Install dependencies:

```shell
poetry install
```

Run:

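Please check the Configuration section to ensure all requirements are met. Once the service is running (the Makefile provides a task for running it locally, see make help), you can call the API:

```shell
curl -s -X POST localhost:8000/analyze \
  -H 'Content-Type: application/json' \
  -d '{"uri": "https://womentechmakers.devpost.com/"}' | jq .
```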

Docker

All Docker commands are also encapsulated in the Makefile for convenience.

Build

```shell
docker build -t biasight .
```

Run

```shell
docker run -d --rm --name biasight -p 9091:9091 biasight

curl -s -X POST localhost:9091/analyze \
  -H 'Content-Type: application/json' \
  -d '{"uri": "https://womentechmakers.devpost.com/"}' | jq .

docker stop biasight
```

Save image for deployment

```shell
docker save biasight:latest | gzip > biasight_latest.tar.gz
```

Gemini interaction

Gemini interaction is encapsulated in the GeminiClient class. To ensure high-quality responses and avoid unnecessary parsing issues, GeminiClient uses Gemini's JSON mode.

See: https://ai.google.dev/gemini-api/docs/structured-output?lang=python

This ensures Gemini replies with valid JSON, while the expected schema is described in each individual prompt, for example:

```text
Return your analysis in this JSON format:
{
    "summary": str,
    "stereotyping_feedback": str,
    "stereotyping_score": int,
    "stereotyping_example": str,
    "representation_feedback": str,
    "representation_score": int,
    "representation_example": str,
    "language_feedback": str,
    "language_score": int,
    "language_example": str,
    "framing_feedback": str,
    "framing_score": int,
    "framing_example": str,
    "positive_aspects": str,
    "improvement_suggestions": str,
    "male_to_female_mention_ratio": float,
    "gender_neutral_language_percentage": float
}
```

This approach is then combined with Pydantic models to ensure the correctness of datatypes and the overall structure:

```python
from pydantic_core import from_json
from vertexai.generative_models import ChatSession


# Excerpt: method of the BiasAnalyzer class
def analyze(self, text: str) -> AnalyzeResult:
    prompt = self._render_template(text)
    chat: ChatSession = self.gemini_client.start_chat()
    chat_response: str = self.gemini_client.get_chat_response(chat, prompt)

    # Parse the JSON reply and validate it against the Pydantic model
    analyze_result = AnalyzeResult.model_validate(from_json(chat_response))

    # overall score is calculated via Python instead of using the LLM to ensure deterministic results
    analyze_result.overall_score = self._calculate_score(analyze_result)

    return analyze_result
```

This is a good example of how to interact with Gemini programmatically, ensure the quality of its responses, and use an LLM to cover core business logic.

Tests

The project also implements various tests, which especially cover the BiasAnalyzer, the class that interacts with Gemini and calculates the score based on the formula described above.

The project uses pytest for testing and Ruff for linting to ensure correctness and code quality.

To run the tests and linter, simply execute:

```shell
make check
```

Or alternatively:

```shell
poetry run python -m pytest tests/ -v -Wignore
poetry run ruff check --fix
```

Direct link to the test cases for the BiasAnalyzer: test_bias.py

Finally, GitHub Actions is configured with a workflow to automatically run the tests and linter on every push.
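One common way to test a class like BiasAnalyzer without calling Gemini is to inject a mocked client. The sketch below assumes a hypothetical, simplified TinyAnalyzer with a get_response method on the client (the real GeminiClient uses start_chat and get_chat_response) and averages the category scores without boosts; it is not taken from test_bias.py.

```python
import json
from unittest.mock import Mock

CATEGORIES = ("stereotyping", "representation", "language", "framing")


class TinyAnalyzer:
    """Hypothetical, simplified stand-in for BiasAnalyzer with an injectable Gemini client."""

    def __init__(self, gemini_client) -> None:
        self.gemini_client = gemini_client

    def analyze(self, text: str) -> dict:
        raw = self.gemini_client.get_response(f"Analyze this text for gender bias:\n{text}")
        result = json.loads(raw)
        scores = [result[f"{category}_score"] for category in CATEGORIES]
        result["overall_score"] = round(sum(scores) / len(scores))  # simplified: no boosts
        return result


def test_overall_score_is_computed_without_calling_gemini():
    fake_client = Mock()
    fake_client.get_response.return_value = json.dumps(
        {"stereotyping_score": 80, "representation_score": 60, "language_score": 90, "framing_score": 70}
    )
    result = TinyAnalyzer(fake_client).analyze("Our engineers and their teams")
    assert result["overall_score"] == 75
    fake_client.get_response.assert_called_once()
```

Because the client is injected, pytest can run this without GCP credentials, which also keeps the GitHub Actions workflow free of secrets.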

Example API interaction

```shell
curl -s -X POST localhost:8000/analyze \
  -H 'Content-Type: application/json' \
  -d '{"uri": "https://womentechmakers.devpost.com/"}' | jq .
```

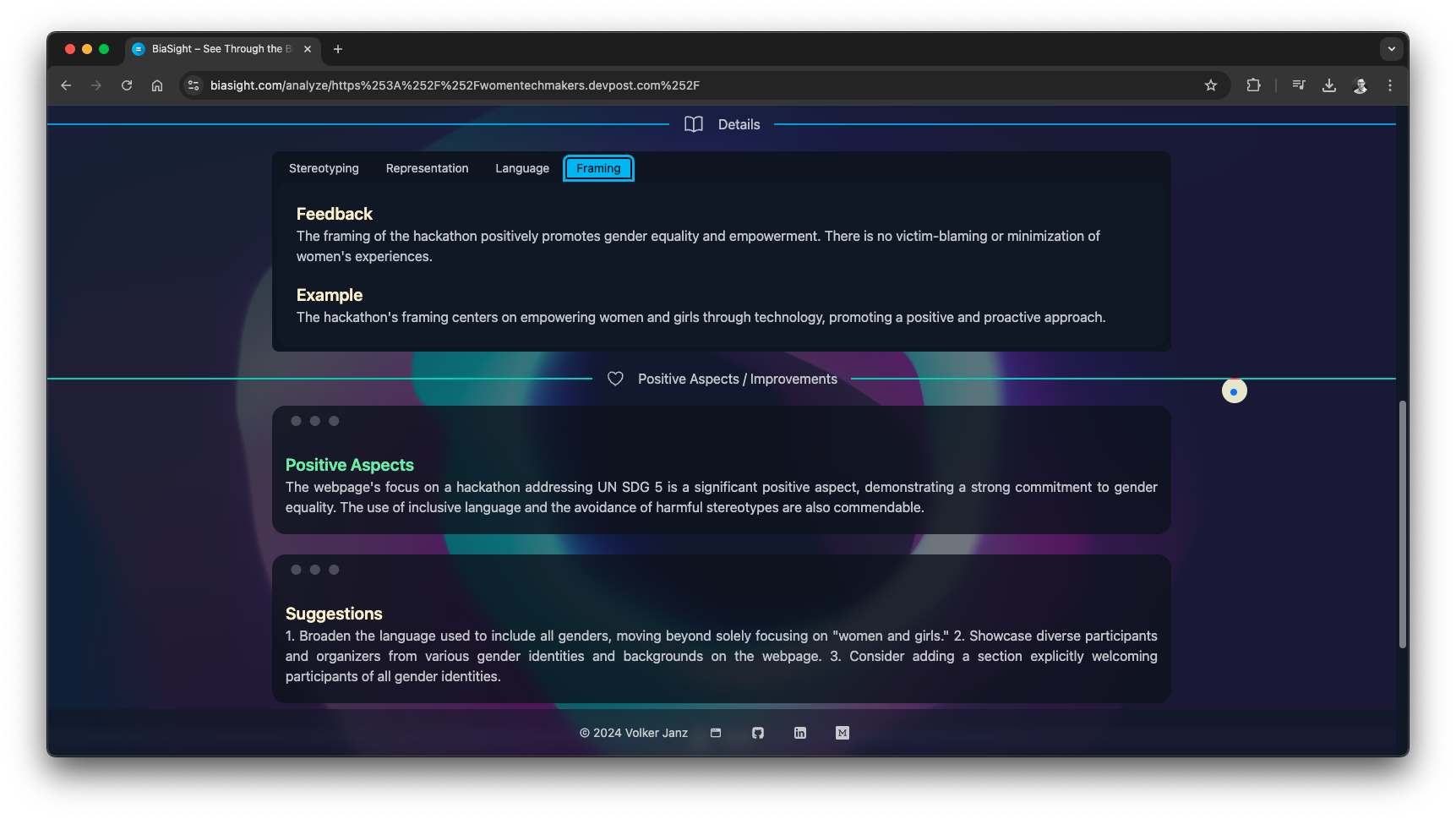

```json
{
  "uri": "https://womentechmakers.devpost.com/",
  "result": {
    "summary": "The webpage shows a strong commitment to gender equality through its focus on a hackathon addressing UN SDG 5. However, while the language used is largely inclusive, the high number of mentions related to women and girls compared to men could be perceived as unbalanced. Further improvements could enhance the overall inclusivity.",
    "overall_score": 96,
    "stereotyping_feedback": "The webpage avoids reinforcing traditional gender stereotypes. The focus is on addressing gender inequality, not perpetuating it.",
    "stereotyping_score": 95,
    "stereotyping_example": "The hackathon's theme directly challenges gender inequality by focusing on UN SDG 5.",
    "representation_feedback": "While the hackathon aims for inclusivity, the overwhelming focus on women and girls in the description might inadvertently overshadow the participation of other genders.",
    "representation_score": 75,
    "representation_example": "The repeated emphasis on \"women and girls\" in the description and prize categories.",
    "language_feedback": "The language used is largely gender-neutral and inclusive, using terms like \"participants\" instead of gendered terms. However, the frequent mention of \"women and girls\" could be balanced.",
    "language_score": 85,
    "language_example": "The use of \"participants\" instead of gender-specific terms like \"participants\" and the explicit statement that the hackathon is open to all genders.",
    "framing_feedback": "The framing of the hackathon positively promotes gender equality and empowerment. There is no victim-blaming or minimization of women's experiences.",
    "framing_score": 90,
    "framing_example": "The hackathon's focus on UN SDG 5 and its emphasis on addressing real-world challenges faced by women and girls.",
    "positive_aspects": "The webpage's clear commitment to gender equality through its focus on a hackathon addressing UN SDG 5 is commendable. The use of inclusive language and the explicit statement welcoming participants of all genders are positive steps.",
    "improvement_suggestions": "1. Balance the focus on women and girls with more inclusive language that acknowledges the participation and contributions of all genders. 2. Highlight success stories and contributions from participants of all genders in promotional materials. 3. Ensure that judging criteria are equally applicable and unbiased towards all participants regardless of gender.",
    "male_to_female_mention_ratio": 0.1,
    "gender_neutral_language_percentage": 80.0
  }
}
```
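The same request can be sent from Python using only the standard library. This is a minimal sketch that assumes the backend runs locally on port 8000 and returns the response shape shown above; the helper names and the 120-second timeout are hypothetical choices.

```python
import json
import urllib.request

API_BASE_URI = "http://localhost:8000"  # local default; the Docker example uses port 9091


def build_analyze_request(uri: str, base: str = API_BASE_URI) -> urllib.request.Request:
    """Build the POST /analyze request with a JSON body, mirroring the curl example."""
    payload = json.dumps({"uri": uri}).encode("utf-8")
    return urllib.request.Request(
        f"{base}/analyze",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def analyze(uri: str, base: str = API_BASE_URI, timeout: float = 120.0) -> dict:
    """Send the request and return the parsed JSON body (requires a running backend)."""
    with urllib.request.urlopen(build_analyze_request(uri, base), timeout=timeout) as response:
        return json.load(response)


# Usage, with the backend running locally:
# report = analyze("https://womentechmakers.devpost.com/")
# print(report["result"]["overall_score"])
```

A generous timeout is used because each uncached request includes a full Gemini round trip.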

Frontend documentation

Project setup

Make sure the correct API endpoint for local or live usage is configured in src/config.js. When you run the backend locally, set API_BASE_URI accordingly, for example:

```javascript
export const API_BASE_URI = 'http://localhost:8000'
```

Install dependencies:

```shell
npm install
```

Run

```shell
npm run dev
```

Build

```shell
npm run build
```

Components

The frontend is separated into the following components:

- 📚 Main Components
  - Init: Component to enter a valid URL
  - Analyze: Runs the actual analysis by talking to the API and presents the report

- 📄 Page Components
  - Home: Start page
  - About: About page with basic project information

- ⚙️ Utility Components
  - CustomCursor: Custom cursor implementation
  - LoadingAnimation: Loading animation with customizable loading text

⭐️ Value for gender equity

BiaSight addresses the urgent need to challenge unconscious bias in digital content, directly impacting gender equality. It goes beyond traditional language analysis, offering a comprehensive approach that examines:

- Stereotyping: Identifying and mitigating harmful representations of gender roles and traits.

- Representation: Assessing and encouraging balanced and diverse representation of genders.

- Language: Promoting inclusive and gender-neutral language.

- Framing: Highlighting and addressing biased perspectives and narratives.

This comprehensive analysis empowers content creators with actionable insights and concrete suggestions to build a more equitable online world.

BiaSight aims to make an impact in three ways:

- Raising Awareness: It increases understanding and awareness of unconscious bias.

- Empowering Creators: It equips creators with the tools to create more inclusive content.

- Promoting Equality: It contributes to dismantling harmful stereotypes and promoting a more just online environment.

BiaSight's innovative approach to identifying and mitigating bias makes it a powerful tool for creating a more equitable digital future.

📚 What I've learned

Building BiaSight has been an incredible journey, both technically and personally. On the technical side, I've delved deeper into the power of Google's Gemini API and its ability to process and analyze complex text. I've learned how to craft effective prompts that guide the AI's response, balancing creativity with structured output for optimal results. The combination of FastAPI, Vue.js, and advanced AI tools like Gemini has been a powerful and rewarding experience, pushing me to expand my skillset.

More importantly, this project has broadened my perspective on the role of language in shaping our world. I've learned that even subtle biases in online content can have a significant impact on how women are perceived and the opportunities they're given.

It's not just about the code; it's about using technology to make a positive difference.

🚀 What's next for BiaSight

- Visual component: Let the backend create and process screenshots of websites and analyze those with AI, to also identify gender bias in visual page aspects.

- PageSpeed Insights: Hi Google 😉, how about adding BiaSight or a more advanced version inspired by this project, to Google PageSpeed Insights and make it a new score category, so that gender equity becomes an integral part of creating web content? Would be glad to help 😉.

- Chrome extension: Create a Chrome extension that uses the score logic of BiaSight but evaluates pages with one or more Chrome built-in APIs to allow for a more seamless integration into the daily business of web content creators.

More ideas? Feedback welcome!

⚠️ Instructions for Judges

Prerequisite:

- Python 3.12

- Poetry (see: https://python-poetry.org/docs/#installation for installation instructions)

- GCP Service Account JSON key file to use Gemini via VertexAI (uploaded to this project as additional info for judges and organizers only)

- Node.js version 18+

- NPM

The project is split into:

- Backend / API (Python + FastAPI): https://github.com/vojay-dev/biasight

- Frontend (VueJS + Vite): https://github.com/vojay-dev/biasight-ui

Both repositories have detailed README.md files with instructions.

Notes backend:

The API is configured via environment variables. If a .env file is present in the project root, it will be loaded automatically. Create a .env file in the project root with the following content:

```text
GCP_PROJECT_ID=vojay-329716
GCP_LOCATION=us-central1
GCP_SERVICE_ACCOUNT_FILE=gcp-vojay-gemini.json
GCP_GEMINI_MODEL=gemini-1.5-flash-002
```

As mentioned, I provided the GCP_SERVICE_ACCOUNT_FILE as additional info for this project.

Everything else should be clear from the project README.

Notes frontend:

The frontend is already configured to use the API running on localhost. However, it is recommended to double-check the configuration in src/config.js: API_BASE_URI must match the host and port the API is running on. For the local default setup, this is:

```javascript
export const API_BASE_URI = 'http://localhost:8000'
```

Everything else should be clear from the project README.

Alternative

The project is also up and running at: https://biasight.com/, feel free to give it a try 👍.

WORDS MATTER

Built With

- beautiful-soup

- daisyui

- docker

- fastapi

- gemini

- google-cloud

- javascript

- jinja

- npm

- poetry

- pydantic

- python

- ruff

- tailwind

- vertexai

- vite

- vuejs
