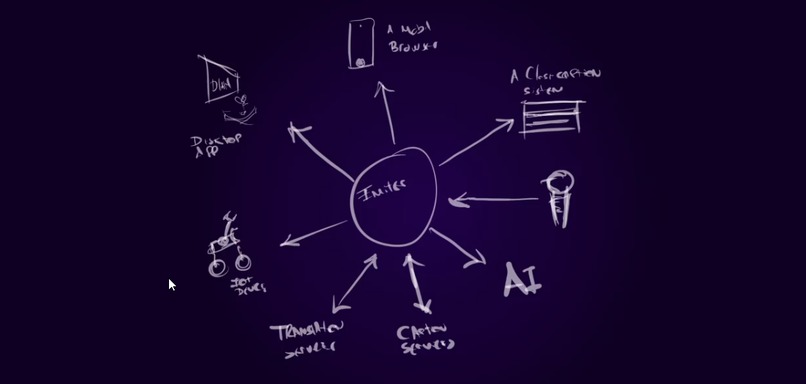

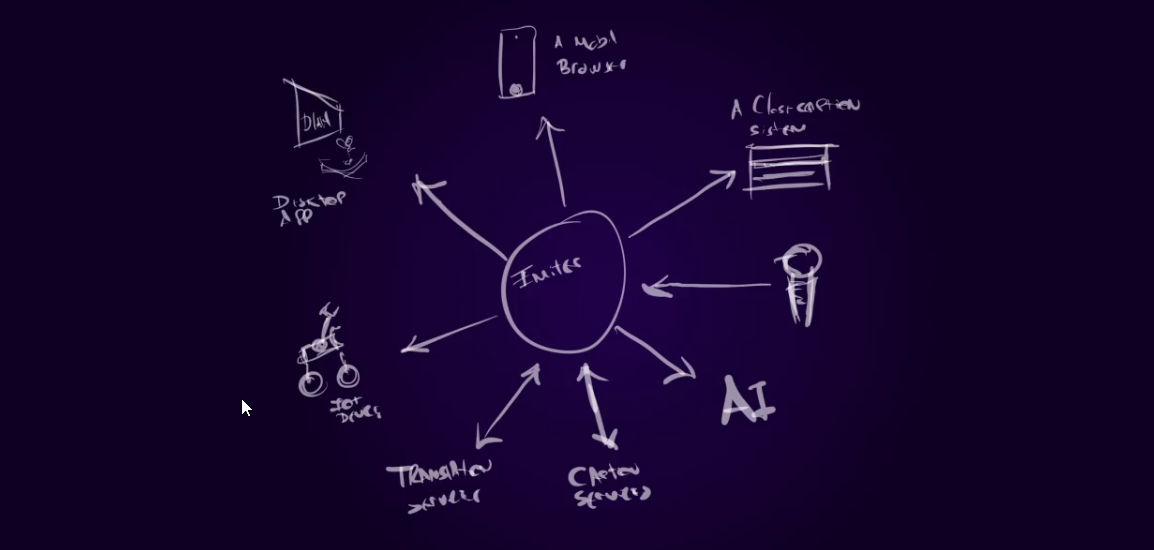

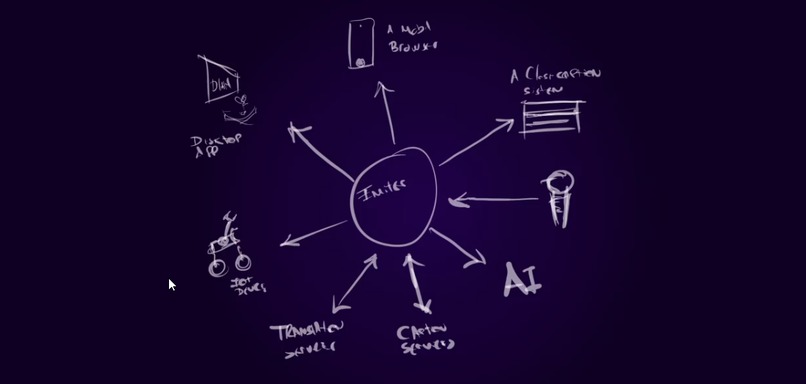

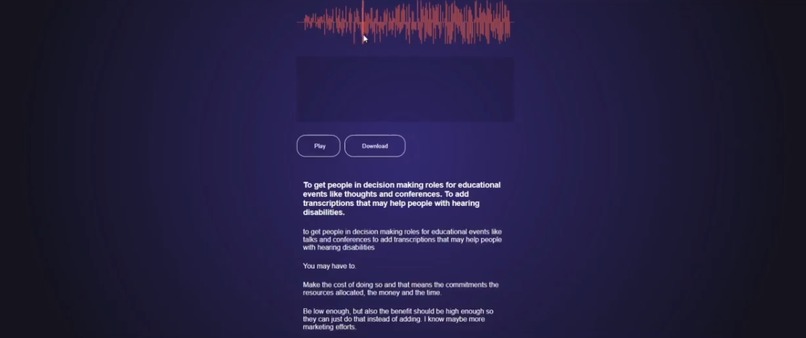

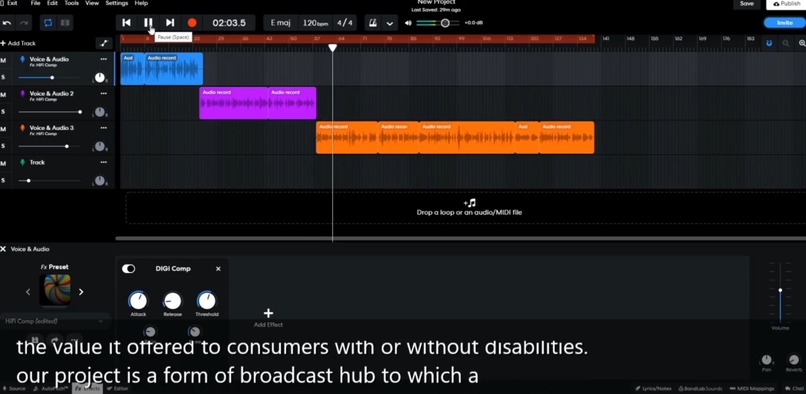

A hub for audio, transcriptions and translations, or anything else


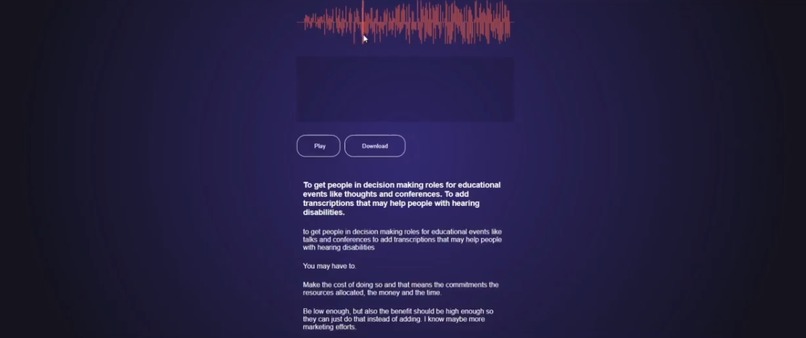

After the event, edit and publish the content elsewhere (adds value for organizers)


Different apps may use the transcription data in different ways; here a desktop app shows the transcript on top of other software


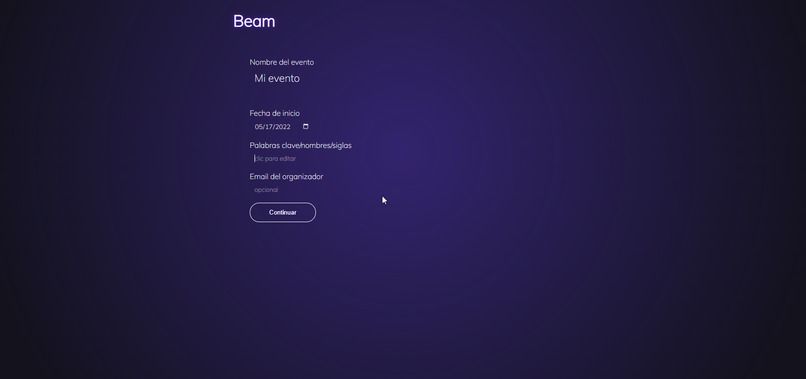

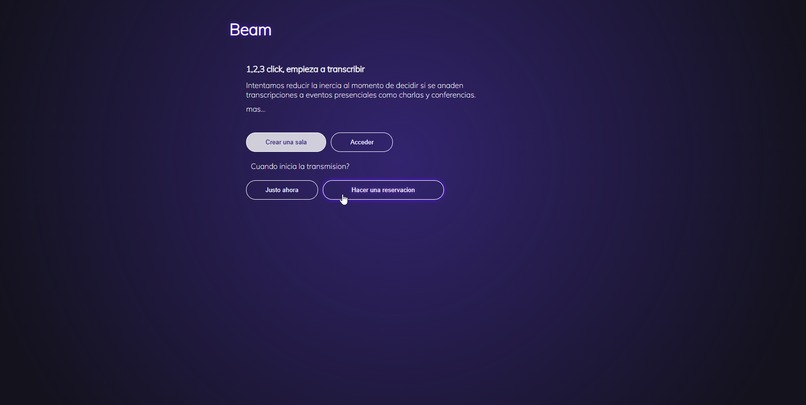

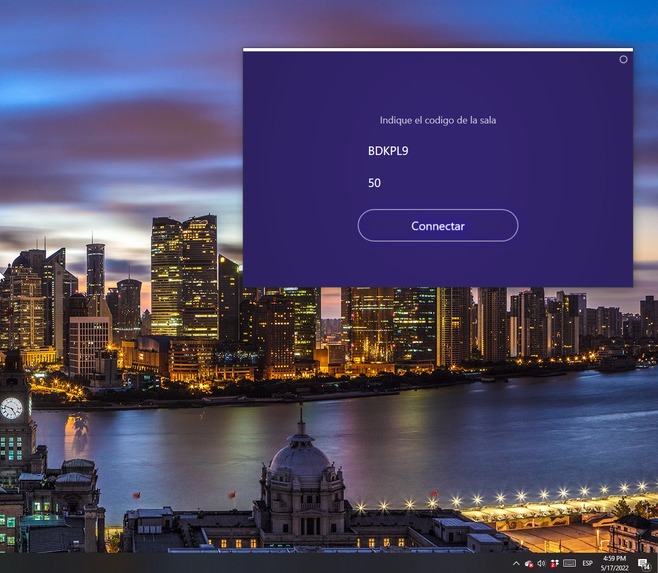

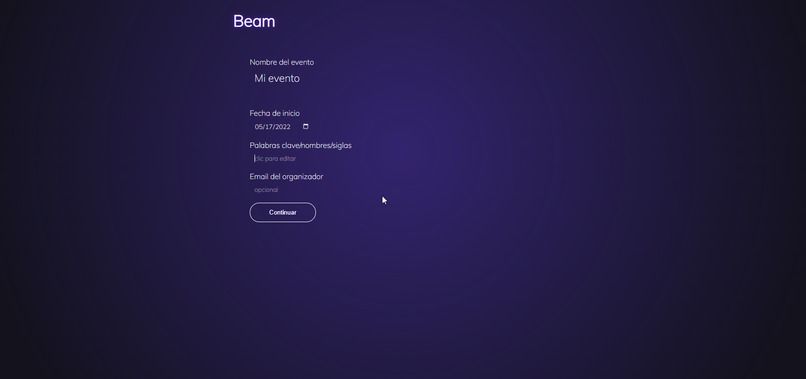

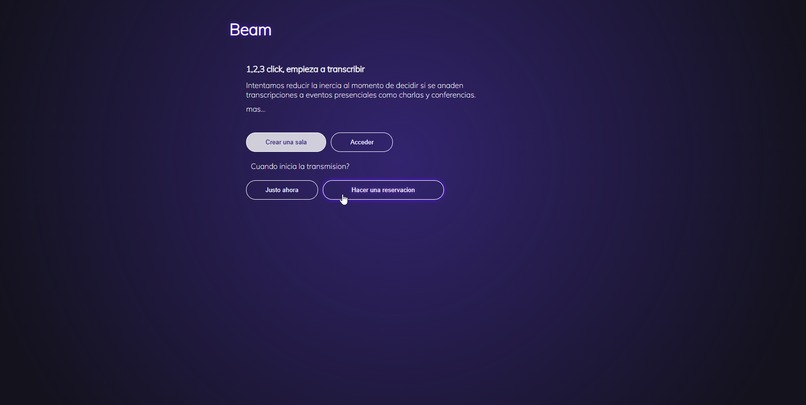

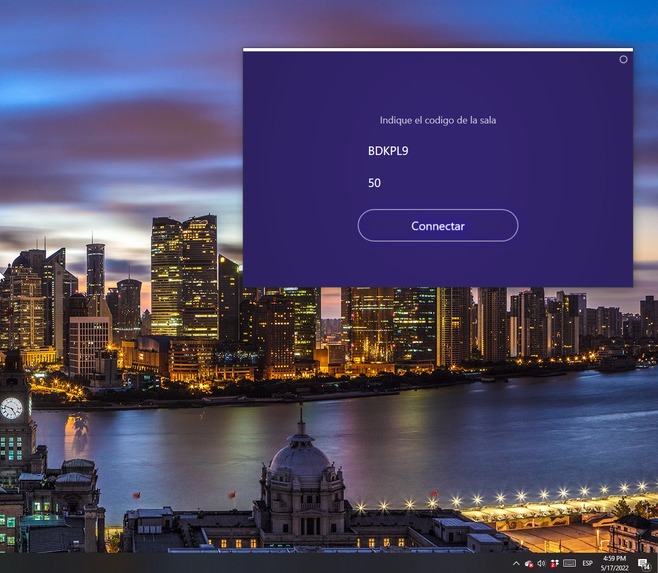

Configure the transcription session


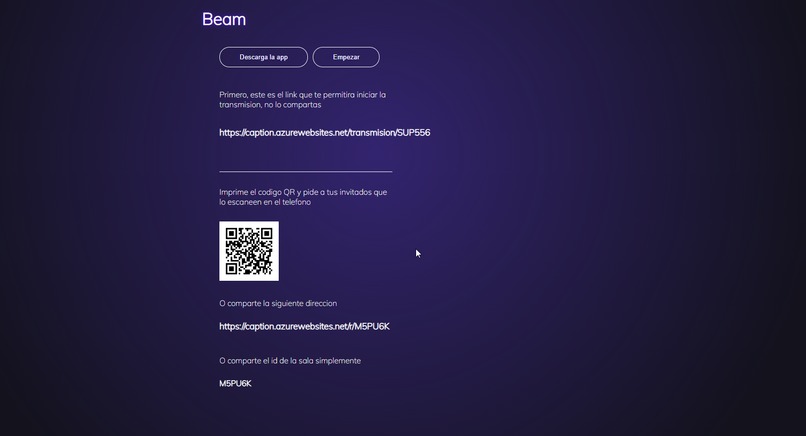

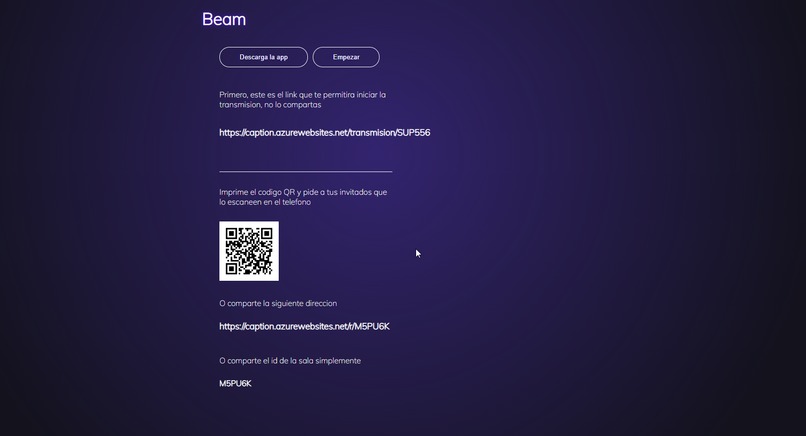

Use a QR code, link or session ID to join from any application


When and how you want to start


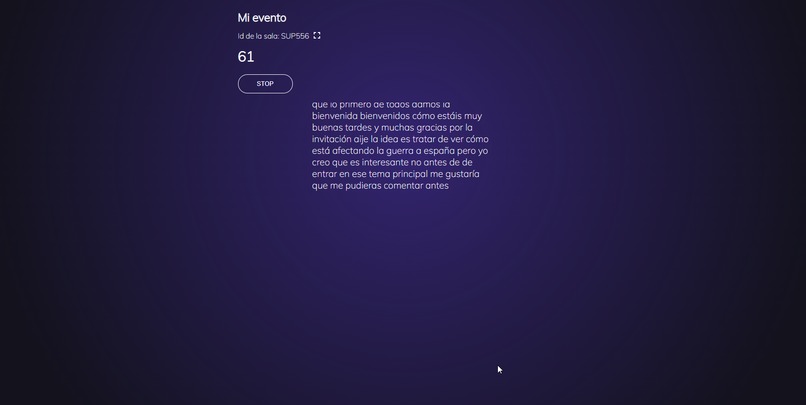

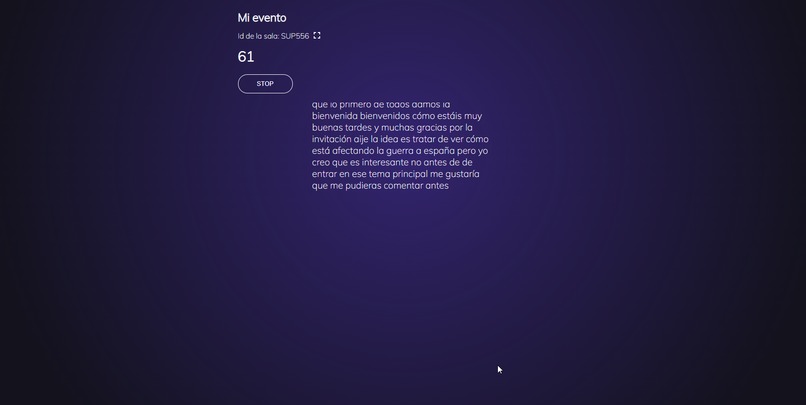

web view


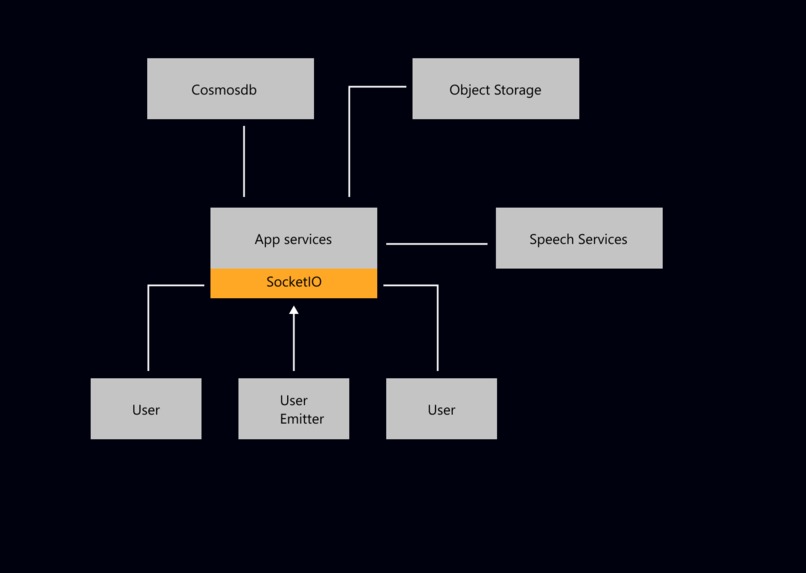

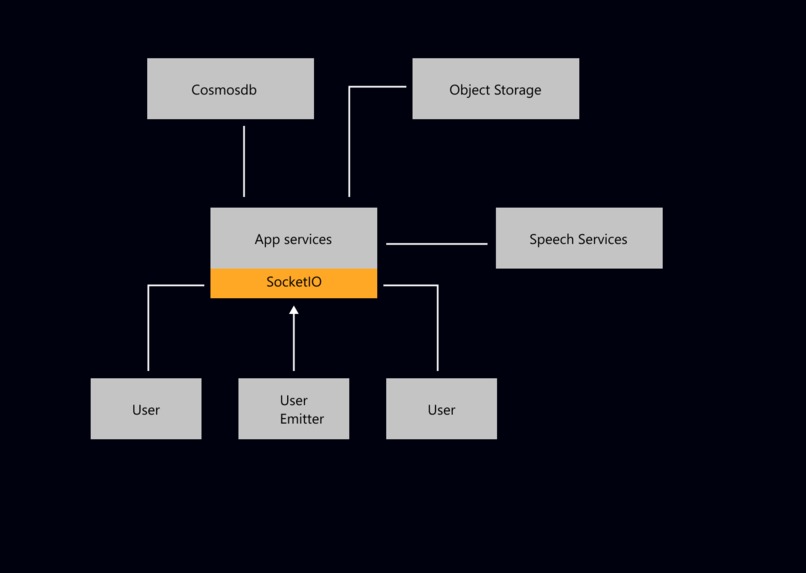

resources diagram


desktop app configuration

Inspiration

Conferences and university talks organized for in-person attendance may offer content of great value that people with hearing impairments cannot easily access.

Are real-time transcriptions the solution to this problem?

Yes, they are, but for transcriptions to be available, someone must first have decided to include them.

Organizers can decide to add live transcripts and thus make the content available to hearing-impaired people, or they can dedicate their time and resources to other work that adds different value and features to their project.

I think it's a game of incentives.

The costs must be low enough: this means that any solution must be easy to integrate and flexible enough to adapt to different scenarios.

And the benefits must be high enough: this means there must be either widespread use for transcripts or one concentrated, major use case. We can think of this as either a game-changing value that moves people to adopt the technology, or a constellation of chained uses that brings order to what might otherwise look like a chaotic system.

As a reference, consider that the film industry has long produced subtitled films for Spanish-speaking countries. That content is fully accessible to people with limited hearing; however, its availability is due not to the goodwill of content producers but to the value it offers consumers with and without disabilities.

What it does

Our project is a kind of broadcast hub: a constellation of applications can subscribe to it, participate in a transcription session, and adapt and process the content for different scenarios according to the end user's needs.

We do this with WebSockets, creating live rooms where one or several actors can contribute while any number of others listen and display the content in different media.

We envision this as a service that could offer audio enhancement, transcription or translation. Because all the data is concentrated in one service instance, costs are reduced and quality may improve compared with many people each doing the same work locally.

For this project we created a desktop application that displays the transcripts on the screen. But if the end user is far from the projection, wants to review what has already been said, or steps out to the bathroom, having the transcript on their mobile phone can be an advantage.

As you can see, both hearing-impaired and non-hearing-impaired people can take advantage of transcripts, which improves the bottom line for the event organizer.

Finally, adding value for organizers depends on providing tools that let them process, improve and share the content, in audio and text, so there is an incentive to add transcriptions to the event even when they had no plans to use them.

We believe it is worth including tools that motivate organizers to use transcription services so that they can later publish blog posts, podcasts or e-books.
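The room idea can be illustrated with a small in-memory sketch. This is not the project's code: the `RoomHub` class and its `join` and `publish` methods are hypothetical names, and plain callbacks stand in for WebSocket connections so the example runs without a server.

```javascript
// Minimal in-memory sketch of a transcription "room" hub.
// All names (RoomHub, join, publish) are hypothetical, not the project's API.
class RoomHub {
  constructor() {
    // sessionId -> { listeners: Set of callbacks, history: array of segments }
    this.rooms = new Map();
  }

  // Return the room for a session ID, creating it on first use.
  room(sessionId) {
    if (!this.rooms.has(sessionId)) {
      this.rooms.set(sessionId, { listeners: new Set(), history: [] });
    }
    return this.rooms.get(sessionId);
  }

  // Subscribe a listener callback. Returns a copy of what has been said
  // so far, plus a function to leave the room.
  join(sessionId, onSegment) {
    const room = this.room(sessionId);
    room.listeners.add(onSegment);
    return {
      history: [...room.history],
      leave: () => room.listeners.delete(onSegment),
    };
  }

  // A contributor publishes a transcript segment to everyone in the room.
  publish(sessionId, speaker, text) {
    const room = this.room(sessionId);
    const segment = { speaker, text, index: room.history.length };
    room.history.push(segment);
    for (const listener of room.listeners) listener(segment);
    return segment;
  }
}

// Usage: a desktop overlay listens live; a phone joins later and catches up.
const hub = new RoomHub();
const overlayLines = [];
hub.join("demo-session", (segment) => overlayLines.push(segment.text));
hub.publish("demo-session", "speaker-1", "Welcome to the talk.");
const phone = hub.join("demo-session", () => {});
console.log(overlayLines);         // lines the overlay received live
console.log(phone.history.length); // segments the latecomer can scroll back through
```

Keeping a per-room history is one possible way to let a listener who joins late review what has already been said; a real deployment would also need to handle disconnects and access control.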

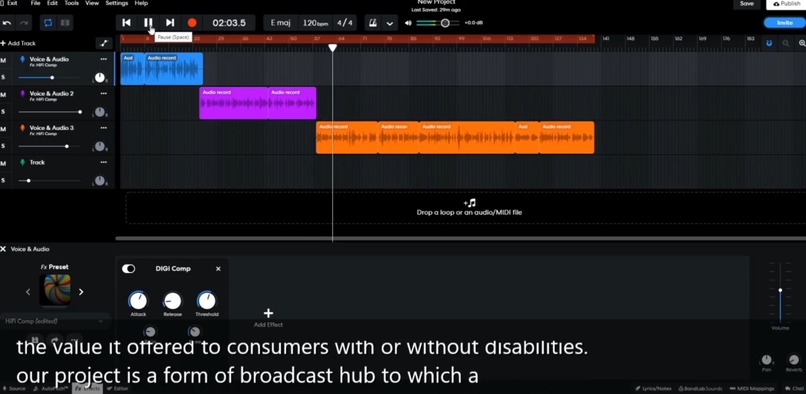

How we built it

I used WebSockets on Azure web services for simplicity, with a Node.js backend and vanilla JavaScript on the browser side. The transcriptions and audio files are saved to Azure Blob Storage, and the rooms are registered in a Cosmos DB database. For this demo, only transcription is done, using the Azure Speech to Text service.
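As a rough illustration of how the room registry and the saved transcripts could relate, here is a hedged sketch. Two `Map` objects stand in for the database and the blob container, no Azure SDK calls are shown, and every name (`createSessionStore`, the record fields, the blob paths) is an assumption made for the example rather than the project's real schema.

```javascript
// Hypothetical persistence sketch: two Maps stand in for the room database
// and the blob container. No Azure SDK calls are shown or implied.
function createSessionStore(roomsDb = new Map(), blobs = new Map()) {
  return {
    // Register a room and record where its files would live.
    registerRoom(sessionId, title) {
      const record = {
        id: sessionId,
        title,
        transcriptBlob: `${sessionId}/transcript.json`, // assumed naming scheme
        audioBlob: `${sessionId}/audio.wav`,            // assumed naming scheme
      };
      roomsDb.set(sessionId, record);
      return record;
    },

    // Serialize the transcript segments and store them under the room's blob name.
    saveTranscript(sessionId, segments) {
      const record = roomsDb.get(sessionId);
      if (!record) throw new Error(`unknown session: ${sessionId}`);
      blobs.set(record.transcriptBlob, JSON.stringify(segments));
      return record.transcriptBlob;
    },

    // Load the transcript back; an empty array means nothing was saved yet.
    loadTranscript(sessionId) {
      const record = roomsDb.get(sessionId);
      if (!record) throw new Error(`unknown session: ${sessionId}`);
      const raw = blobs.get(record.transcriptBlob);
      return raw === undefined ? [] : JSON.parse(raw);
    },
  };
}

// Usage: register a room, save one segment, and read it back.
const store = createSessionStore();
store.registerRoom("demo-session", "Opening keynote");
store.saveTranscript("demo-session", [
  { speaker: "speaker-1", text: "Welcome to the talk." },
]);
console.log(store.loadTranscript("demo-session"));
```

Injecting the two stores as parameters keeps the sketch testable in memory; real storage clients could be wrapped behind the same three functions.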

Challenges we ran into

I had to indirectly target many types of personas, each with different goals, while trying not to lose sight of the main target (people with hearing limitations).

Accomplishments that we're proud of

A simple-to-use interface and (at least for me) a self-explanatory way of using the service.

What we learned

Cosmos DB billing is done on a per-collection/table basis. I built a noise reduction model with TensorFlow to try to enhance the source audio that the Azure speech recognition service was receiving, so that it would work better in difficult environments, but to my surprise, Azure appears to do a good job without it.

What's next for Beam

Make a public API without Socket.IO. Make translations part of the application. Do a little work on the website for mobile devices so that it allows more actions and objects.

Make it a platform for content creators? Build AI models for audio processing, noise reduction and other enhancements? Share on a hosted space or embed on your site?
