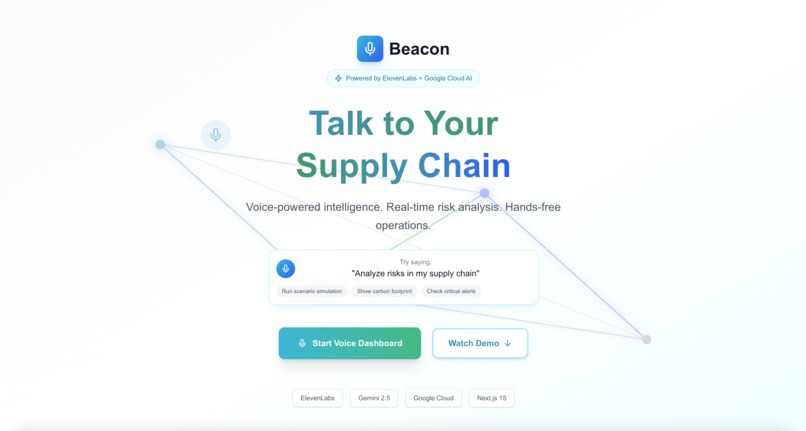

Beacon landing page showing the voice-first AI supply chain intelligence platform hero section

-

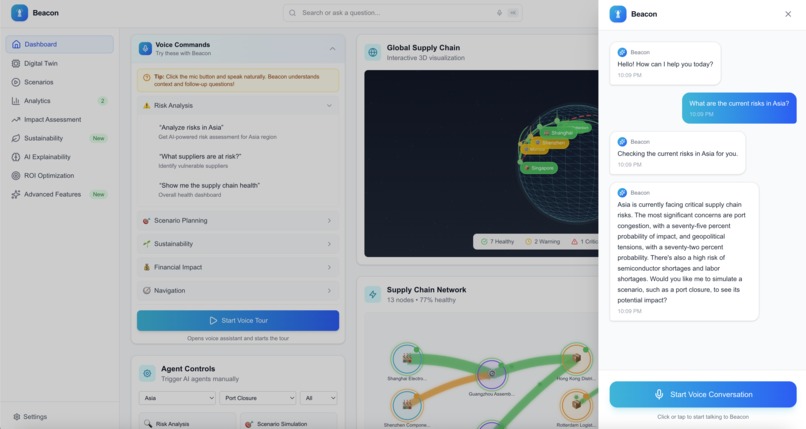

Beacon voice assistant activated with glowing microphone button and audio waveform visualization, ready to receive voice commands

-

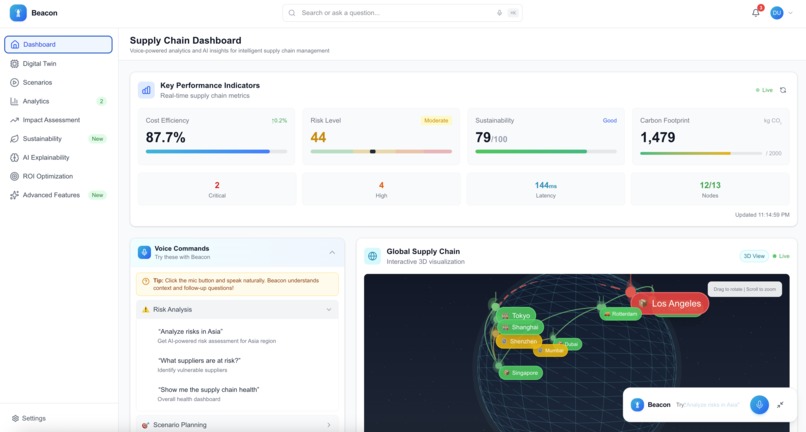

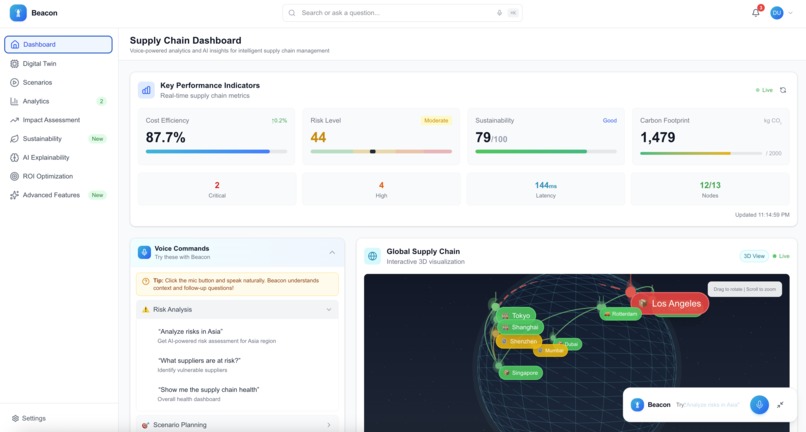

Beacon main dashboard displaying key performance indicators, supply chain health metrics, real-time alerts feed, and regional risk heatmap

-

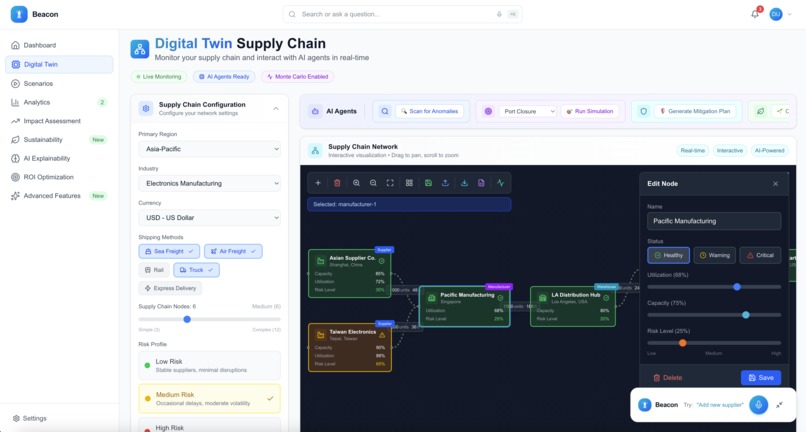

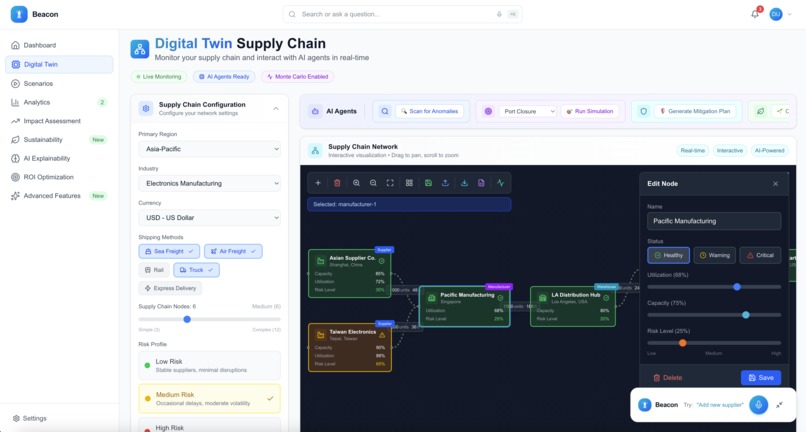

Interactive Digital Twin visualization showing supply chain network with connected nodes

-

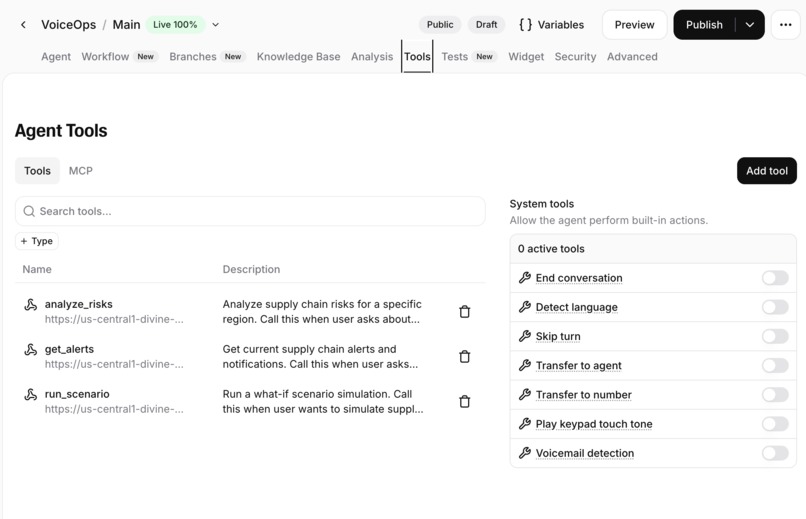

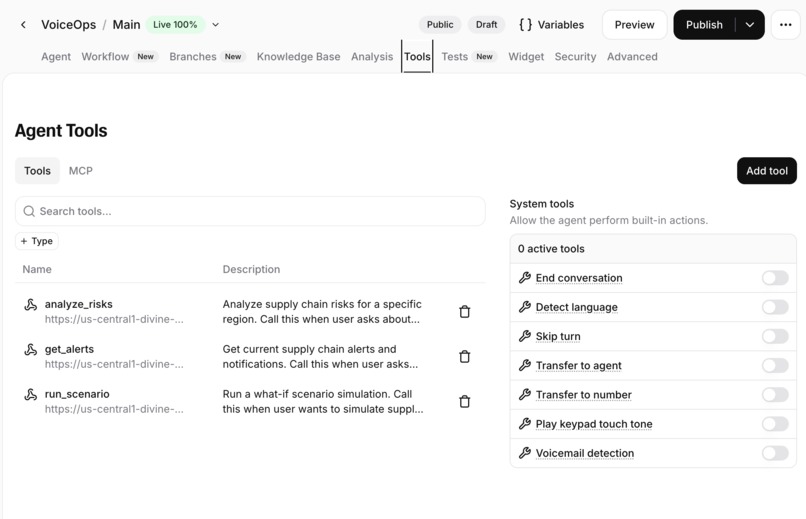

ElevenLabs voice agent configuration

-

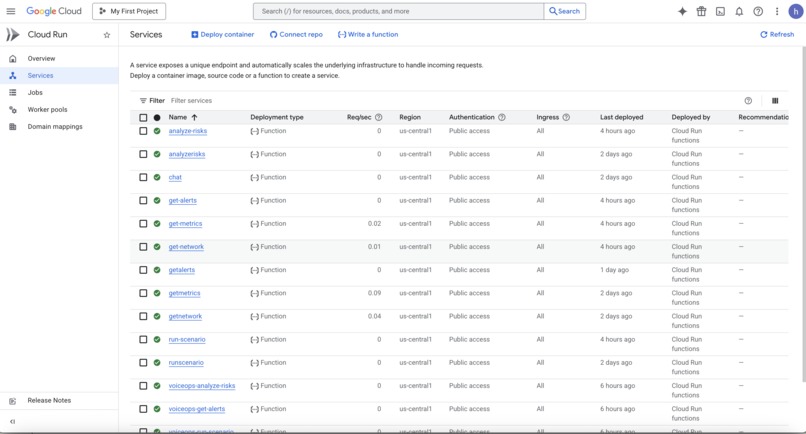

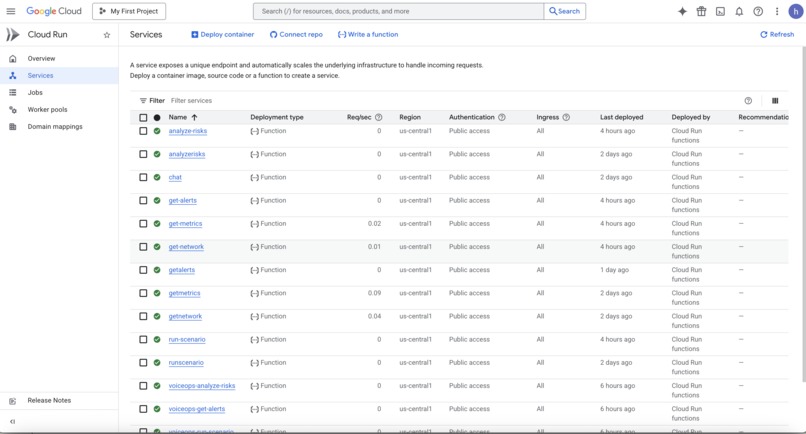

Google Cloud Functions deployed

-

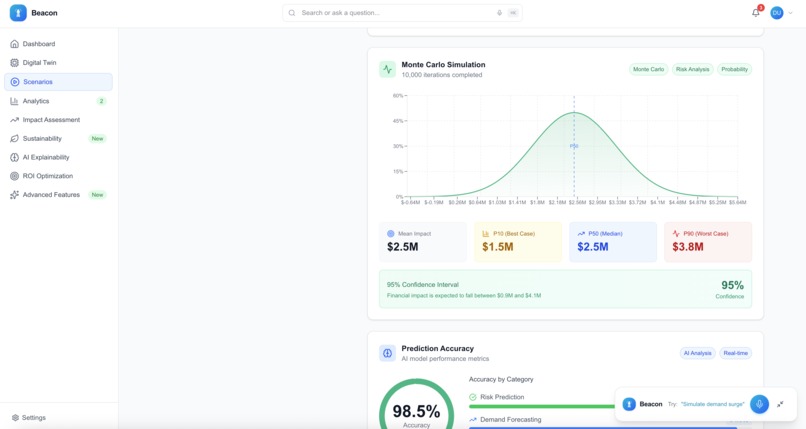

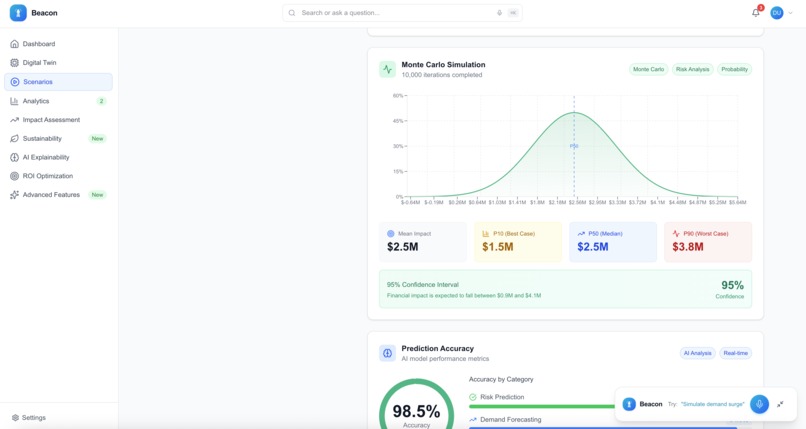

Monte Carlo simulation results displaying port closure scenario impact

-

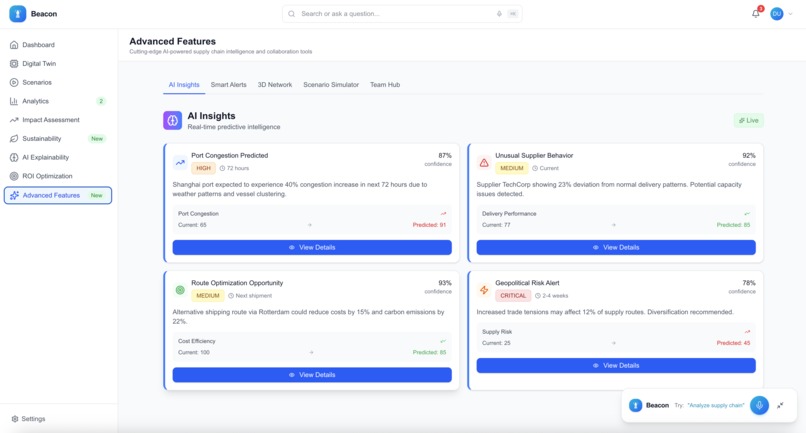

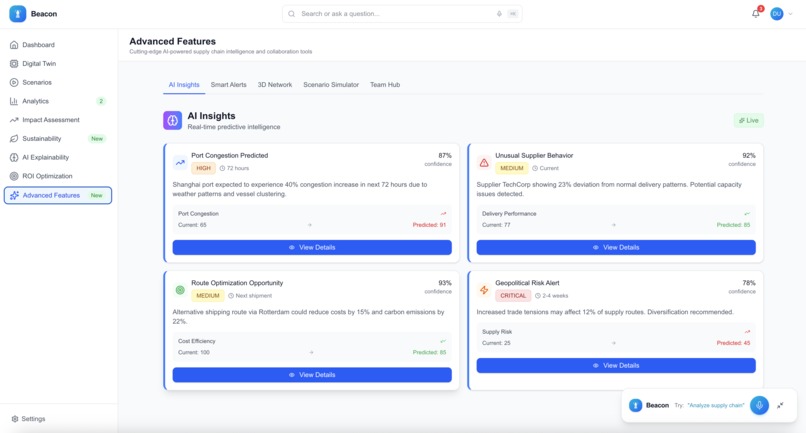

AI insights

Inspiration

Supply chain disruptions cost businesses $4 trillion annually. Managers still navigate complex dashboards, spending 10+ minutes to assess a single risk. During a crisis, this delay is costly.

I wanted to let users talk directly to their supply chain data. A warehouse manager walking the floor shouldn't need to stop and open a laptop. An executive in a meeting shouldn't need multiple screens. They should ask, "What are the risks in Asia?" and get an immediate answer.

The AI Partner Catalyst Hackathon was the right venue to build a voice-first enterprise tool.

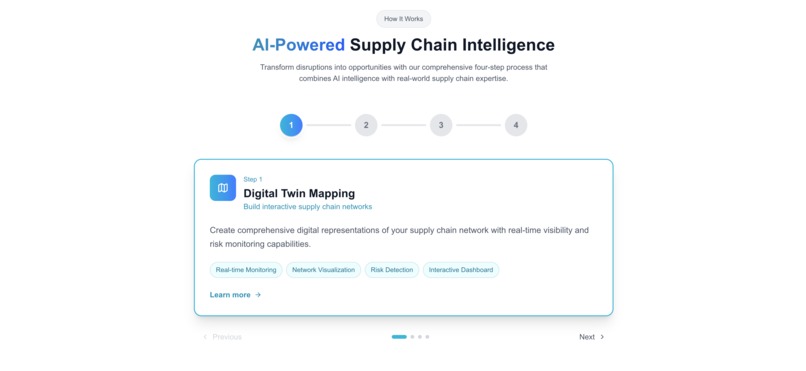

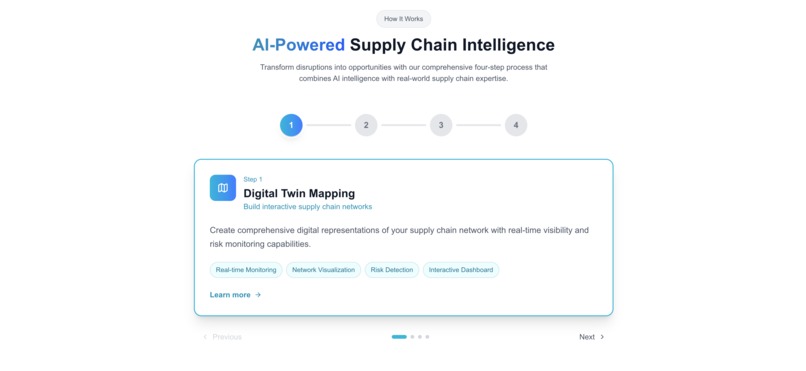

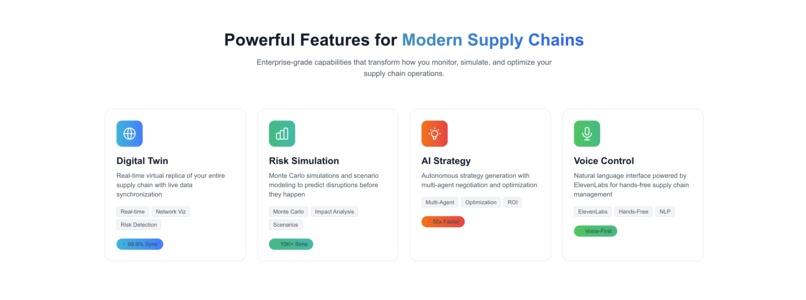

What Beacon Does

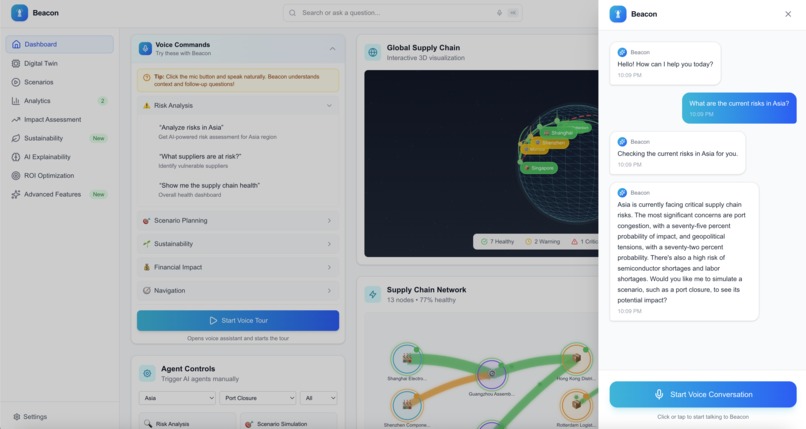

Beacon lets you talk to your supply chain data. Voice is the main interface, not an add-on.

Ask "What are the risks in Asia?" and you get a regional risk assessment with severity scores and affected suppliers, spoken back in plain English. Ask "Simulate a port closure in Europe" and Beacon runs Monte Carlo simulations, then tells you the financial impact and recovery timelines.

The voice interface handles risk analysis, scenario planning, real-time alerts, sustainability metrics, and navigation. You can say "Open digital twin" and the app navigates there. Say "Give me a tour" and Beacon walks you through the features while you do something else with your hands.

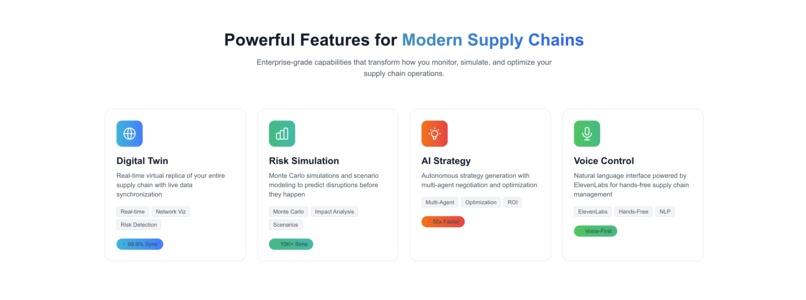

Beyond voice, there's an interactive Digital Twin - a visual network of suppliers, warehouses, ports, and factories that you can drag around and explore. The Monte Carlo engine runs thousands of scenarios and shows probability distributions with confidence intervals. The sustainability module tracks carbon footprint and ESG compliance.

How I Built It

The voice layer runs on ElevenLabs Conversational AI with custom webhook tools connecting commands to the backend. Getting multi-turn conversations to work took some effort - supply chain queries often reference previous answers ("what about that second supplier you mentioned?"), so I had to build context retention into the conversation flow.
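A minimal sketch of what context retention for follow-up questions could look like. Everything here is hypothetical (the `ConversationMemory` class, the `remember` and `resolveSupplier` methods, the ordinal-matching rule); the write-up does not describe the actual mechanism, so treat this as one possible approach rather than Beacon's implementation.

```typescript
// Hypothetical sketch: names and shapes are assumptions, not Beacon's actual code.
interface TurnContext {
  suppliers: string[]; // suppliers named in the most recent answer, in spoken order
}

const ORDINALS: Record<string, number> = { first: 0, second: 1, third: 2, fourth: 3 };

class ConversationMemory {
  private readonly turns = new Map<string, TurnContext>();

  // Record which suppliers the last answer mentioned for this conversation.
  remember(conversationId: string, suppliers: string[]): void {
    this.turns.set(conversationId, { suppliers: [...suppliers] });
  }

  // Resolve phrases like "that second supplier" to a concrete name, if possible.
  resolveSupplier(conversationId: string, utterance: string): string | undefined {
    const context = this.turns.get(conversationId);
    if (!context) return undefined;
    const match = utterance.toLowerCase().match(/\b(first|second|third|fourth)\s+supplier\b/);
    if (!match) return undefined;
    const index = ORDINALS[match[1] ?? ""];
    if (index === undefined) return undefined;
    return context.suppliers[index];
  }
}
```

A webhook handler could call `remember` after each answer and `resolveSupplier` before building the next query, so that "the second supplier" becomes a concrete name before it reaches the model.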

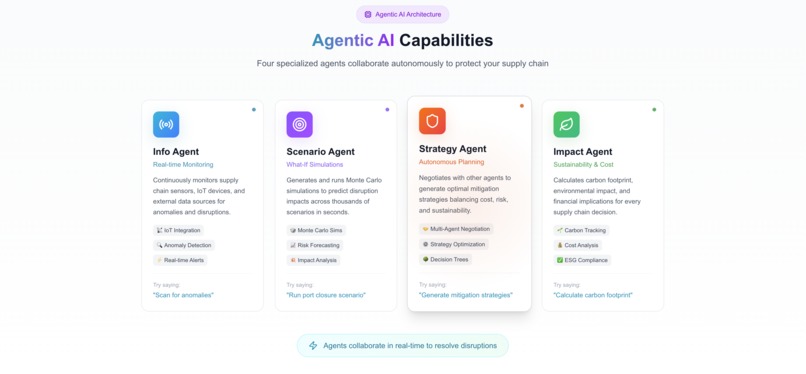

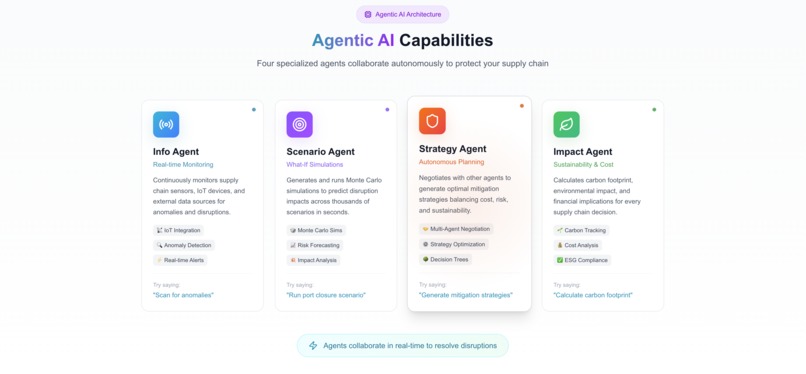

The AI backend lives on Google Cloud Functions (Gen2, Node.js 20) with Vertex AI Gemini 2.5 Flash doing the heavy lifting. I ended up with four specialized agents instead of one general-purpose model:

- Info Agent handles real-time monitoring, IoT integration, and anomaly detection

- Scenario Agent runs Monte Carlo simulations and risk forecasting

- Strategy Agent does mitigation planning and decision trees

- Impact Agent tracks carbon, costs, and ESG compliance

Splitting them up wasn't the original plan. I started with one agent and it kept getting confused about what mode it was in. Four focused agents turned out to be much more reliable than one that tried to do everything.
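The write-up does not say how a query reaches the right agent; with webhook tools, the voice agent itself may pick the tool. As a rough illustration only, a keyword-based router over the four agents might look like this. The `routeQuery` function and the keyword lists are assumptions.

```typescript
type AgentName = "info" | "scenario" | "strategy" | "impact";

// Keyword lists are illustrative assumptions, checked in order.
const ROUTES: ReadonlyArray<{ agent: AgentName; keywords: readonly string[] }> = [
  { agent: "scenario", keywords: ["simulate", "scenario", "what if", "forecast"] },
  { agent: "strategy", keywords: ["mitigate", "mitigation", "plan", "should we"] },
  { agent: "impact", keywords: ["carbon", "esg", "cost", "footprint"] },
  { agent: "info", keywords: ["risk", "alert", "status", "anomaly"] },
];

// Return the first agent whose keyword appears in the utterance.
function routeQuery(utterance: string): AgentName {
  const text = utterance.toLowerCase();
  for (const route of ROUTES) {
    if (route.keywords.some((keyword) => text.includes(keyword))) {
      return route.agent;
    }
  }
  return "info"; // default to the monitoring agent when nothing matches
}
```

Keeping the routing decision outside the model is one way to avoid the "what mode am I in" confusion: each agent only ever sees queries of its own kind.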

The frontend is Next.js 16 with React 19 and TypeScript. React Flow powers the Digital Twin visualization. Recharts and D3.js handle the analytics dashboards. Framer Motion handles animations and transitions so the interface feels responsive. The whole thing deploys to Vercel.

I also built in circuit breakers, multi-tier fallbacks (Agent → Gemini → Base data), response caching, and structured logging. AI services go down sometimes and I didn't want the app to just break.
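For readers unfamiliar with the pattern, here is a minimal circuit breaker sketch. The class name, thresholds, and injectable clock are assumptions for illustration, not Beacon's code: after a set number of consecutive failures the breaker opens and calls are skipped until a cooldown has passed.

```typescript
type BreakerState = "closed" | "open" | "half-open";

class CircuitBreaker {
  private failures = 0;
  private openedAt = 0;
  private state: BreakerState = "closed";
  private readonly failureThreshold: number;
  private readonly cooldownMs: number;
  private readonly now: () => number;

  constructor(failureThreshold: number, cooldownMs: number, now: () => number = () => Date.now()) {
    this.failureThreshold = failureThreshold;
    this.cooldownMs = cooldownMs;
    this.now = now;
  }

  // True when a call may be attempted. After the cooldown, trial calls are
  // allowed until one of them is recorded as a success or a failure.
  canRequest(): boolean {
    if (this.state === "open" && this.now() - this.openedAt >= this.cooldownMs) {
      this.state = "half-open";
    }
    return this.state !== "open";
  }

  recordSuccess(): void {
    this.failures = 0;
    this.state = "closed";
  }

  // A failed trial call, or too many consecutive failures, opens the breaker.
  recordFailure(): void {
    this.failures += 1;
    if (this.state === "half-open" || this.failures >= this.failureThreshold) {
      this.state = "open";
      this.openedAt = this.now();
    }
  }

  currentState(): BreakerState {
    return this.state;
  }
}
```

A caller would check `canRequest()` before hitting an AI service and go straight to the next fallback tier when it returns `false`, which avoids waiting on a service that is already known to be failing.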

Challenges I Ran Into

Latency. My first version took 15 seconds to respond, which is too slow for voice. Getting under 10 seconds meant optimizing everything: prompt engineering, caching, parallel API calls. I rewrote the webhook handlers twice.
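Two of those optimizations, caching and parallel calls, can be sketched as follows. The names (`TtlCache`, `loadRegionSnapshot`, `fetchRisks`, `fetchAlerts`) and the data shapes are hypothetical; the point is only that independent lookups run concurrently through `Promise.all` and that repeat questions are served from a short-lived cache.

```typescript
// Minimal TTL cache; the clock is injectable so expiry can be tested.
class TtlCache<V> {
  private readonly entries = new Map<string, { value: V; expiresAt: number }>();
  private readonly ttlMs: number;
  private readonly now: () => number;

  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry || entry.expiresAt <= this.now()) return undefined;
    return entry.value;
  }

  set(key: string, value: V): void {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }
}

interface RegionSnapshot {
  risks: string[];
  alerts: string[];
}

// Serve from cache when possible; otherwise fetch both inputs concurrently.
async function loadRegionSnapshot(
  region: string,
  cache: TtlCache<RegionSnapshot>,
  fetchRisks: (region: string) => Promise<string[]>,
  fetchAlerts: (region: string) => Promise<string[]>,
): Promise<RegionSnapshot> {
  const cached = cache.get(region);
  if (cached) return cached;
  const [risks, alerts] = await Promise.all([fetchRisks(region), fetchAlerts(region)]);
  const snapshot: RegionSnapshot = { risks, alerts };
  cache.set(region, snapshot);
  return snapshot;
}
```

With two lookups of similar duration, running them concurrently roughly halves the wait for that step, and a cache hit removes it entirely.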

ElevenLabs webhooks are picky. The response format has to be exact or the voice agent just... stops. No error. No fallback. Silence. I built validation layers and fallback responses so even when something fails, the agent says something useful instead of nothing.
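A validation layer of this kind can be quite small. The sketch below assumes the webhook must return a JSON object with a non-empty string field, here called `result`; that field name and the fallback wording are assumptions, since the exact schema ElevenLabs expects is not given in this write-up.

```typescript
// Assumed shape: the exact schema ElevenLabs expects is not given in the write-up.
interface ToolResponse {
  result: string;
}

const FALLBACK_RESPONSE: ToolResponse = {
  result: "I couldn't reach the analysis service just now. Please try again in a moment.",
};

// Type guard: accept only objects with a non-blank string `result`.
function isToolResponse(value: unknown): value is ToolResponse {
  if (typeof value !== "object" || value === null) return false;
  const candidate = (value as { result?: unknown }).result;
  return typeof candidate === "string" && candidate.trim().length > 0;
}

// Always return something speakable, even if the upstream payload is malformed.
function toSafeResponse(payload: unknown): ToolResponse {
  return isToolResponse(payload) ? { result: payload.result.trim() } : FALLBACK_RESPONSE;
}
```

Running every outgoing payload through `toSafeResponse` as the last step of the handler means a malformed or empty upstream answer turns into a spoken apology rather than silence.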

AudioWorklet CSP configuration. This one was obscure. ElevenLabs uses AudioWorklets for real-time audio processing, which requires specific Content Security Policy headers for blob: sources and worker-src directives. Took me longer than I'd like to admit to figure out why audio worked locally but not in production.
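As a rough sketch of the kind of header involved, the snippet below builds a policy string that allows `blob:` sources for scripts and workers and exposes it through the `headers()` option of a Next.js config. The directive values are assumptions: which directives are strictly required depends on the SDK version and browser, and a real policy would carry further directives (for example `connect-src`) that are omitted here.

```typescript
// Assumed directive values; merge these into the app's existing policy.
const cspDirectives: Record<string, string[]> = {
  "script-src": ["'self'", "blob:"],
  "worker-src": ["'self'", "blob:"],
};

// Serialize directives into a single Content-Security-Policy header value.
function buildCsp(directives: Record<string, string[]>): string {
  return Object.entries(directives)
    .map(([directive, sources]) => `${directive} ${sources.join(" ")}`)
    .join("; ");
}

// Shape accepted by the `headers()` option in a Next.js config file.
const nextConfig = {
  async headers() {
    return [
      {
        source: "/(.*)",
        headers: [{ key: "Content-Security-Policy", value: buildCsp(cspDirectives) }],
      },
    ];
  },
};
```

A local dev server often sends no CSP at all, which would explain audio working locally and failing only once production headers apply.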

Gen2 Cloud Functions. Migrating from Gen1 meant updating URL patterns and reconfiguring IAM permissions for Vertex AI access. The documentation exists but isn't obvious.

Voice can't handle complexity the way screens can. Early versions tried to speak full analysis reports, which ran far too long. I had to tune every Gemini prompt to deliver "headline + key insight + recommendation" and nothing more.
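One way to enforce that three-part shape is to ask for it in the prompt and then cap the spoken length in code. The instruction text, the `VoiceAnswer` type, and both function names below are illustrative assumptions, not the prompts Beacon actually uses.

```typescript
interface VoiceAnswer {
  headline: string;
  keyInsight: string;
  recommendation: string;
}

// Illustrative instruction text, not the prompt Beacon actually uses.
function buildVoicePrompt(question: string, maxWords: number): string {
  return [
    "Answer for a spoken voice assistant.",
    `Use at most ${maxWords} words in total.`,
    "Return exactly three sentences: a headline, one key insight, one recommendation.",
    `Question: ${question}`,
  ].join("\n");
}

// Join the three parts into one utterance and trim it to the word budget.
function toSpokenText(answer: VoiceAnswer, maxWords: number): string {
  const words = [answer.headline, answer.keyInsight, answer.recommendation]
    .join(" ")
    .split(/\s+/)
    .filter((word) => word.length > 0);
  return words.slice(0, maxWords).join(" ");
}
```

The hard cap in `toSpokenText` is a safety net: even if the model ignores the word limit, the spoken reply stays short.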

Accomplishments That I'm Proud Of

Voice is the primary interface, not a bolted-on feature. Every design decision started with "how does this work when you can't see a screen?"

The four-agent architecture works better than I expected. Each agent has a specific job and they don't trip over each other.

The Digital Twin came together well. React Flow handles the network graph, and users can drag nodes around and see real-time status.

Full type safety across frontend and backend. Zero TypeScript errors. Caught a lot of bugs before production.

The Monte Carlo engine gives actual probability distributions, not just single-point estimates.
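To illustrate the difference between a distribution and a point estimate, here is a minimal Monte Carlo sketch for a port closure. The loss model (a uniformly distributed closure length multiplied by a fixed daily loss), the parameter names, and the choice of 5th, 50th, and 95th percentiles are all assumptions made for the example; Beacon's actual model is not described here.

```typescript
// Small seeded PRNG (mulberry32) so runs are reproducible.
function mulberry32(seed: number): () => number {
  let state = seed >>> 0;
  return () => {
    state = (state + 0x6d2b79f5) >>> 0;
    let t = state;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

interface ClosureScenario {
  dailyLossUsd: number; // assumed loss per closed day
  minDays: number; // shortest plausible closure
  maxDays: number; // longest plausible closure
}

interface ImpactSummary {
  p5: number;
  p50: number;
  p95: number;
  mean: number;
}

// Nearest-rank percentile on an ascending-sorted, non-empty array.
function percentile(sorted: number[], p: number): number {
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.min(sorted.length - 1, Math.max(0, rank - 1))];
}

// Sample closure lengths uniformly and summarize the resulting losses.
function simulatePortClosure(scenario: ClosureScenario, runs: number, random: () => number): ImpactSummary {
  if (runs <= 0) throw new Error("runs must be positive");
  const losses: number[] = [];
  for (let i = 0; i < runs; i++) {
    const days = scenario.minDays + random() * (scenario.maxDays - scenario.minDays);
    losses.push(days * scenario.dailyLossUsd);
  }
  losses.sort((a, b) => a - b);
  const mean = losses.reduce((sum, loss) => sum + loss, 0) / runs;
  return { p5: percentile(losses, 5), p50: percentile(losses, 50), p95: percentile(losses, 95), mean };
}
```

The `p5` to `p95` range gives a 90% interval of simulated outcomes, which is the kind of spread a single-point estimate hides.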

What I Learned

Voice forces you to simplify. If you can't say it in one sentence, it doesn't work as a voice response.

Fallbacks aren't optional. AI services fail. My system degrades through four levels: Agent → Gemini → cached data → template response.
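The degradation chain can be expressed as a loop over providers that ends in a fixed template. This is a sketch under assumptions: the `Provider` type, the `answerWithFallback` function, and the rule that an empty answer counts as a failure are all hypothetical.

```typescript
type Tier = "agent" | "gemini" | "cache" | "template";

interface TieredAnswer {
  tier: Tier;
  text: string;
}

type Provider = (question: string) => Promise<string | undefined>;

// Try each tier in order; a thrown error or empty answer falls through to the next.
async function answerWithFallback(
  question: string,
  providers: ReadonlyArray<{ tier: Exclude<Tier, "template">; run: Provider }>,
  template: string,
): Promise<TieredAnswer> {
  for (const { tier, run } of providers) {
    try {
      const text = await run(question);
      if (text && text.trim().length > 0) return { tier, text };
    } catch {
      // Swallowed here for brevity; a real handler would log the failure.
    }
  }
  // Last resort: a canned response, so the voice agent never goes silent.
  return { tier: "template", text: template };
}
```

Returning the tier alongside the text makes it easy to log how often each level is actually used.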

ElevenLabs and Gemini work well together. The voice feels natural and the analysis is useful.

Enterprise software mostly ignores voice. There's probably a reason, but I think the gap is worth exploring.

In voice interfaces, every second of silence feels long. Speed matters more than I expected.

What's Next

Near-term: multi-language support, integrations with SAP and Oracle, a mobile app, and proactive alerts that speak first.

Longer-term: predictive analytics, AR visualization for warehouse walkthroughs, and team collaboration with shared voice sessions.

I'd like to see if this can become something people actually use daily, not just demo.

Try It

Live Demo: https://beacon-voiceops.vercel.app

Voice commands to try:

- "What are the risks in Asia?"

- "Run a port closure scenario"

- "Show my carbon footprint"

- "Open digital twin"

- "Give me a tour of the dashboard"

Built With

- d3.js

- elevenlabs

- gemini

- google-cloud

- react-flow

- three.js

- typescript

- vertex-ai
