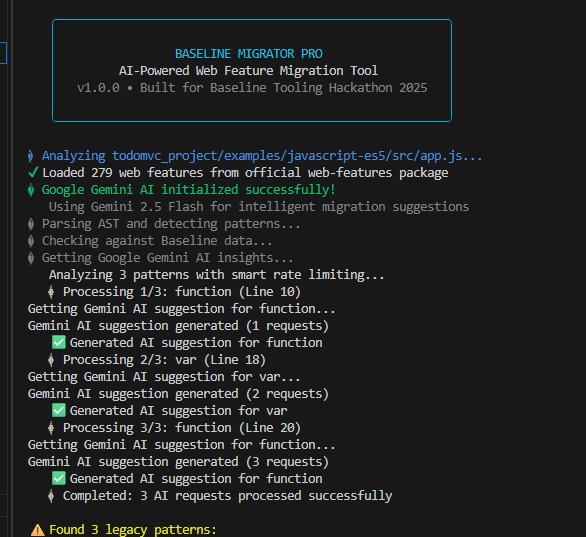

A user-friendly graphical interface inspired by Gemini.


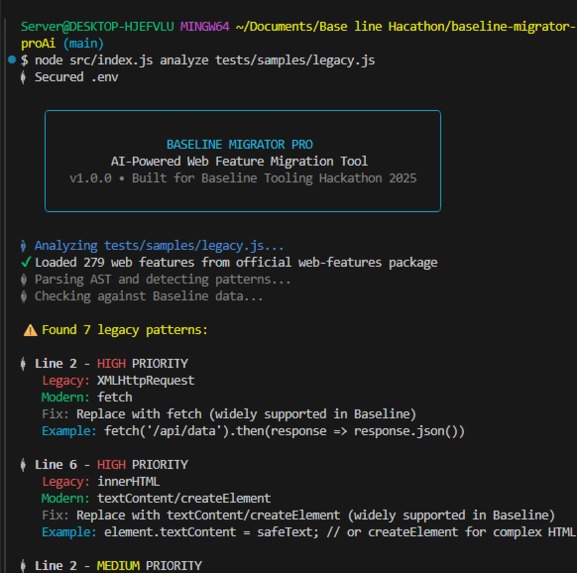

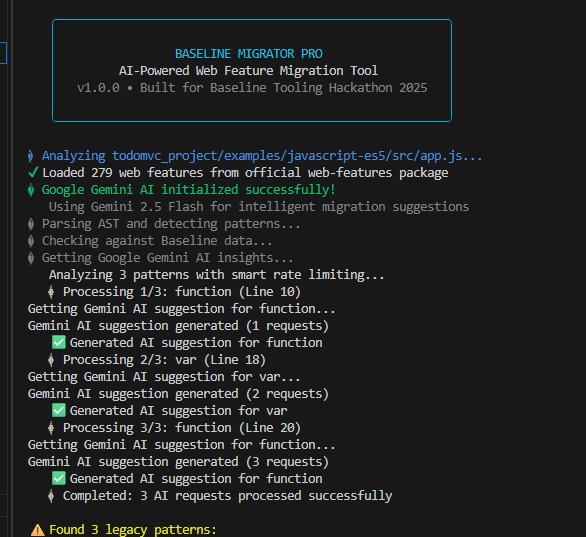

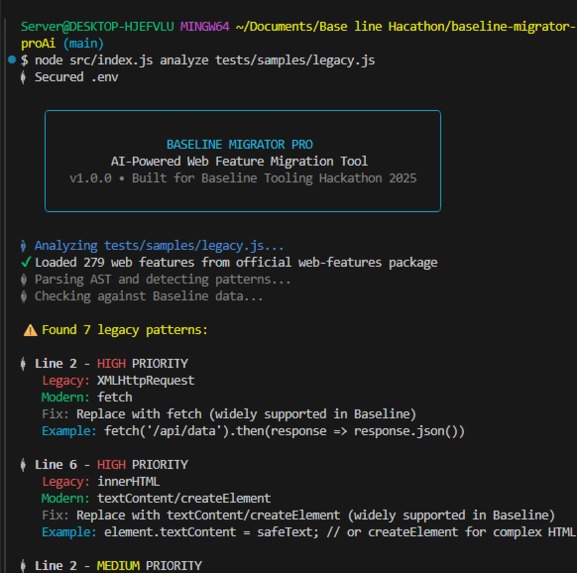

A command-line interface showing an AI-powered tool analyzing a legacy JavaScript file and recommending modern code replacements.


The AI-powered code migration tool uses Google Gemini to analyze a legacy JavaScript file and generate suggestions for modernization.

Inspiration

As a fourth-year student of Robotics and Automation Engineering, I have come to appreciate systemic efficiency and the optimization of complex processes. Alongside my studies, I freelance in Data Analytics, work that requires extracting coherent insights from large, often unwieldy datasets. This duality, the engineer's drive for automation and the analyst's quest for clarity, is the foundation on which this project was conceived.

The tool grew out of a frustration I met repeatedly in analytic and engineering preparatory tasks: the arduous, often Sisyphean effort required to parse legacy codebases or unstructured data pipelines. It frequently felt like deciphering a technical palimpsest, where layers of disparate methodologies and obsolete conventions obscured the underlying logic. I vividly recall one engagement that required integrating modern data ingestion techniques into an existing, labyrinthine processing script. The original script, with its archaic function calls and convoluted procedural logic, significantly prolonged the development cycle. It became clear that the time spent on comprehension and refactoring far exceeded the time spent on innovation and implementation.

Conceptualization and Design

This challenge shaped the project's central thesis: to engineer a diagnostic and translation utility that can automatically analyze dated or structurally inefficient technical artifacts (code, configuration files, or data schemas) and offer clear, modern, and more efficient alternatives. I envisioned a seamless transition mechanism that raises the standard of technical output. This project, therefore, is not merely a code cleaner; it is an architectural modernization agent rooted in the principles of intelligent automation.

The development phase was an invaluable learning experience. It required a deep dive into how language and data standards have evolved, moving beyond rudimentary syntax to grasp the shift toward more robust, parallel-processing-friendly paradigms (e.g., the transition from imperative scripting to functional or reactive models). Furthermore, building a custom analyzer, a component capable of recognizing and pattern-matching structural inefficiencies, gave me a much deeper understanding of the mechanics underpinning developer tools.
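To make the idea of a pattern-matching analyzer concrete, here is a minimal sketch in plain JavaScript. It is not the tool's actual analyzer: the rule table, the rule names, and the function `analyzeLegacyCode` are all hypothetical, chosen only to illustrate how legacy idioms could be paired with modern suggestions.

```javascript
// Hypothetical rule table: each rule pairs a legacy JavaScript idiom with a
// modern suggestion. These rules are illustrative, not the tool's own.
const RULES = [
  {
    id: "no-var",
    pattern: /\bvar\s+[A-Za-z_$][\w$]*/,
    suggestion: "Declare with `let` or `const` instead of `var`.",
  },
  {
    id: "xhr",
    pattern: /\bnew\s+XMLHttpRequest\s*\(/,
    suggestion: "Consider `fetch` with async/await instead of XMLHttpRequest.",
  },
  {
    id: "arguments-object",
    pattern: /\barguments\s*\[/,
    suggestion: "Use rest parameters (`...args`) instead of `arguments`.",
  },
];

// Scan the source line by line and record every rule that matches a line.
function analyzeLegacyCode(source) {
  const findings = [];
  source.split(/\r?\n/).forEach((text, index) => {
    for (const rule of RULES) {
      if (rule.pattern.test(text)) {
        findings.push({
          line: index + 1, // report 1-based line numbers
          rule: rule.id,
          suggestion: rule.suggestion,
        });
      }
    }
  });
  return findings;
}

// Example input containing one instance of each legacy idiom.
const sample = [
  "var total = 0;",
  "function sum() { return arguments[0] + arguments[1]; }",
  "const req = new XMLHttpRequest();",
].join("\n");

console.log(analyzeLegacyCode(sample));
```

For the sample above, the function reports three findings, one per line. Regular expressions are used here only for brevity; they will also match inside strings and comments, so an analyzer that needs reliable results would more likely work on a parsed syntax tree.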

Core Stack Deployment

The tool's architecture adheres to a principle of focused efficacy:

Frontend Interface: Built with HTML, CSS, and vanilla JavaScript, the interface is designed for clarity and intuitive interaction. The primary objective was a low-friction environment where a technical professional can paste an artifact and receive immediate, actionable diagnostics.

Backend Processing: The analytical core runs on a Node.js server using the Express framework. This engine does the heavy lifting, employing proprietary algorithms informed by my experience in data pattern recognition to identify suboptimal structures. The critical feature is the integration of an AI-powered inference engine that generates concise, context-aware migration strategies.
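The request flow described above (pasted code in, model-generated suggestions out) could be sketched roughly as follows. This is an illustration under stated assumptions, not the project's actual backend: the prompt wording, the names `buildMigrationPrompt` and `createAnalyzeHandler`, the JSON field names, and the status codes are all hypothetical. The model call is injected as a plain async function so the sketch runs without Express or a Gemini API key.

```javascript
// Build the prompt sent to the model. The wording is an assumption.
function buildMigrationPrompt(code) {
  return [
    "You are a code migration assistant.",
    "Identify legacy JavaScript patterns in the code below and suggest modern replacements.",
    "Explain each suggestion in one sentence.",
    "",
    "Code:",
    code,
  ].join("\n");
}

// Create a handler with the (req, res) shape that Express route handlers use.
// `generateText` is an injected async function (prompt) => string. In a real
// deployment it would wrap the Gemini client, which is not shown here.
function createAnalyzeHandler(generateText) {
  return async function analyzeHandler(req, res) {
    const code = req.body && req.body.code;
    if (typeof code !== "string" || code.trim() === "") {
      return res
        .status(400)
        .json({ error: "Request body must include a non-empty `code` string." });
    }
    try {
      const suggestions = await generateText(buildMigrationPrompt(code));
      return res.status(200).json({ suggestions });
    } catch (err) {
      // Treat any model failure as an upstream error.
      return res.status(502).json({ error: "The model request failed." });
    }
  };
}

// Minimal stand-in for an Express response, used only to exercise the handler.
function createFakeResponse() {
  return {
    statusCode: null,
    payload: null,
    status(code) {
      this.statusCode = code;
      return this;
    },
    json(body) {
      this.payload = body;
      return this;
    },
  };
}

// Stub model: reports the prompt length instead of calling a real API.
const stubModel = async (prompt) =>
  `stub suggestion for ${prompt.length} characters of prompt`;

const demoHandler = createAnalyzeHandler(stubModel);
const demoResponse = createFakeResponse();
demoHandler({ body: { code: "var x = 1;" } }, demoResponse).then(() => {
  console.log(demoResponse.statusCode, demoResponse.payload);
});
```

On an Express server with the JSON body parser enabled, a handler like this could be registered on a POST route of your choosing. Injecting the model function keeps the handler testable and makes it easy to swap the stub for a real client.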

Challenges and Perspective

Realizing this project demanded considerable intellectual rigor. One significant hurdle was the sheer variety of forms in which inefficient technical patterns manifest. Constructing an analyzer capable of reliable, idiom-agnostic identification was a complex exercise in algorithmic pattern matching, demanding a level of precision typically associated with robotics sensor fusion.

Integrating the artificial intelligence component presented its own challenges. The recommendations had to go beyond simple syntactic replacements; they had to be contextual and demonstrably superior in terms of performance and maintainability. This required extensive iteration and refinement of the AI model to achieve truly insightful recommendations.

This endeavor represents a tangible confluence of my diverse proficiencies: the precision of an engineer, the pattern recognition of an analyst, and the creative problem-solving of an artist. It is a testament to the power of applied automation to mitigate technical debt and empower the next generation of developers. I believe it will be a useful resource for any professional navigating the complexities of legacy systems.
