B.R.U — Edge AI system transforming cities in real time.

-

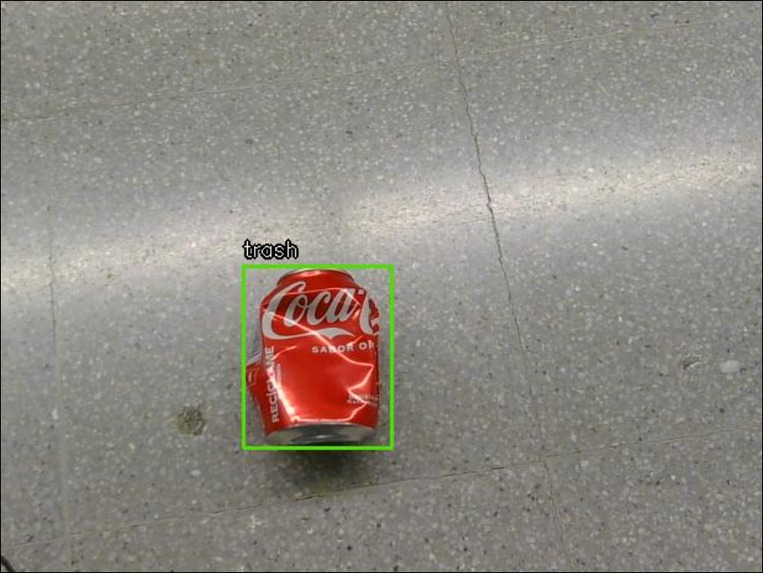

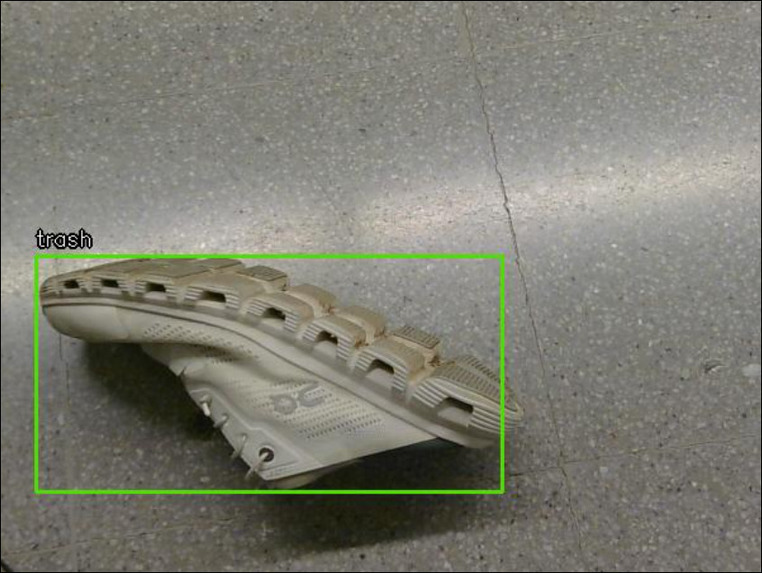

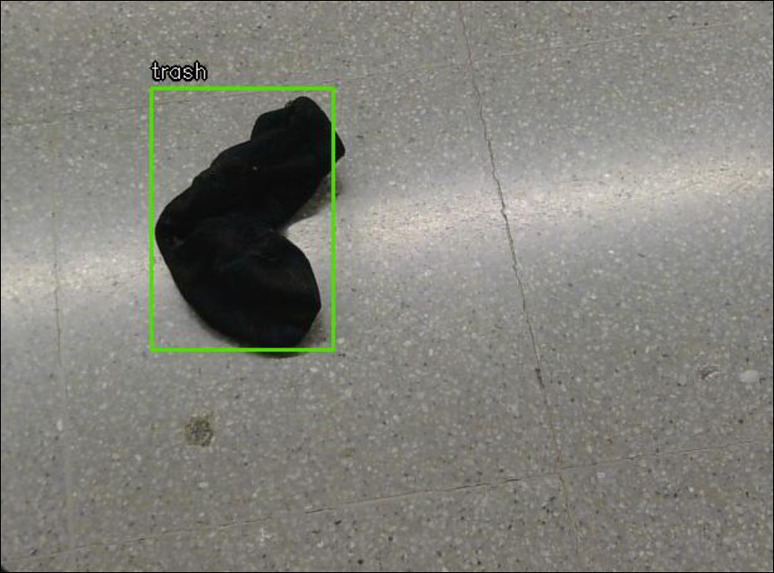

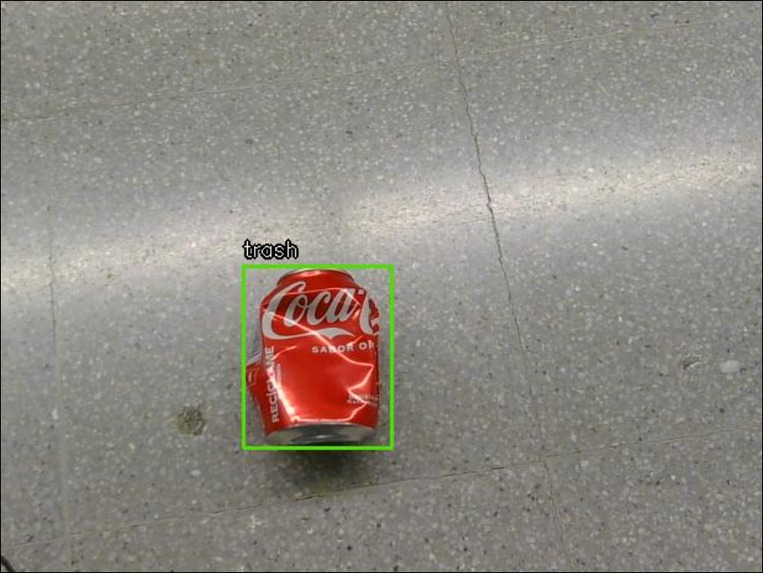

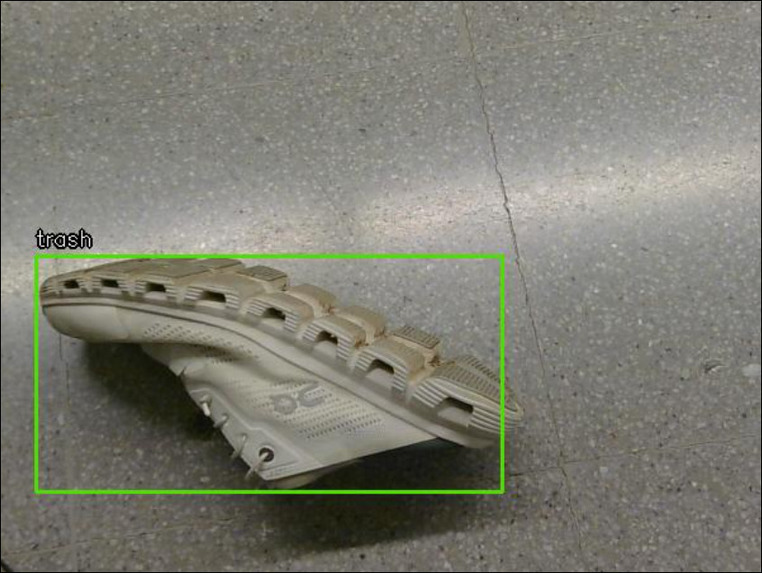

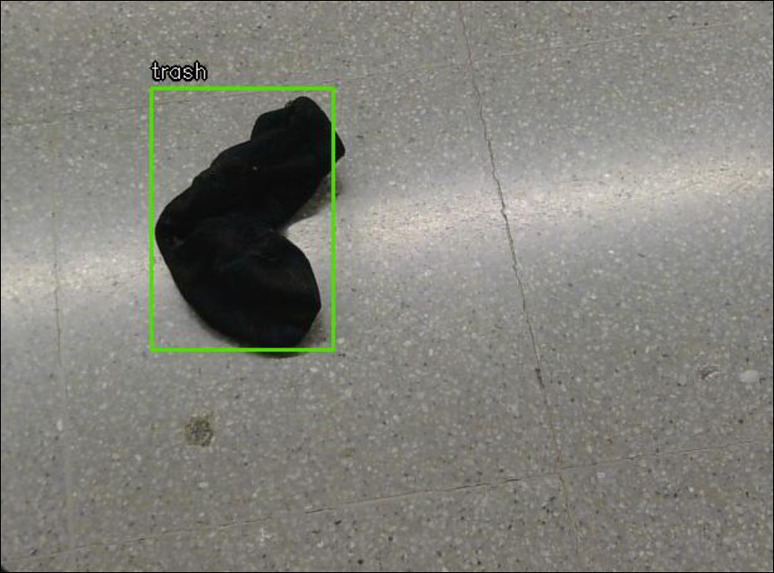

Detecting littering events in real time and triggering immediate feedback.

-

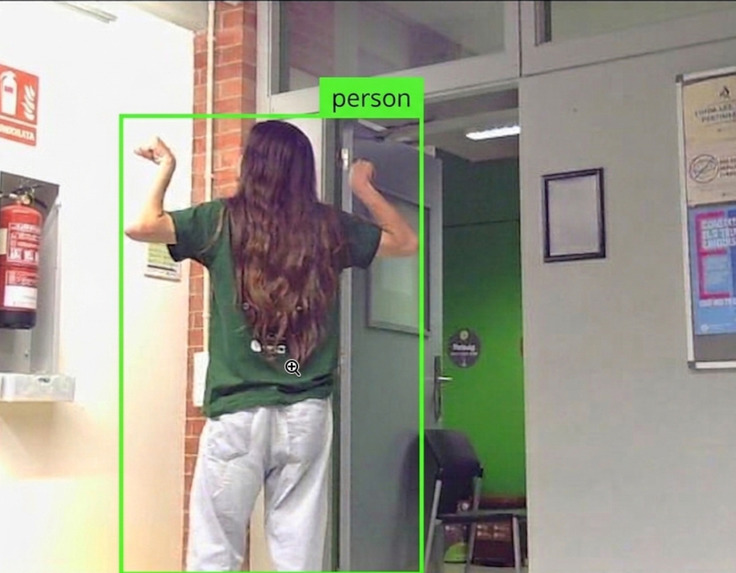

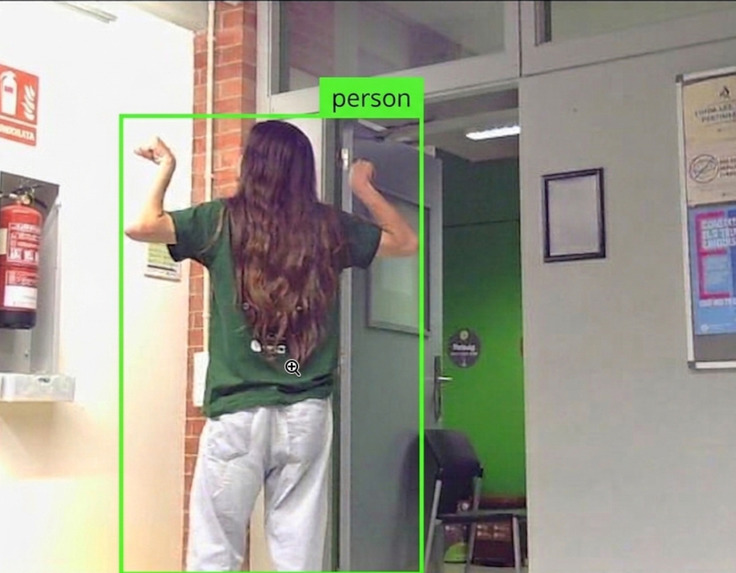

Real-time object detection running directly on-device using Edge AI.

-

Human presence detection enabling context-aware interactions.

-

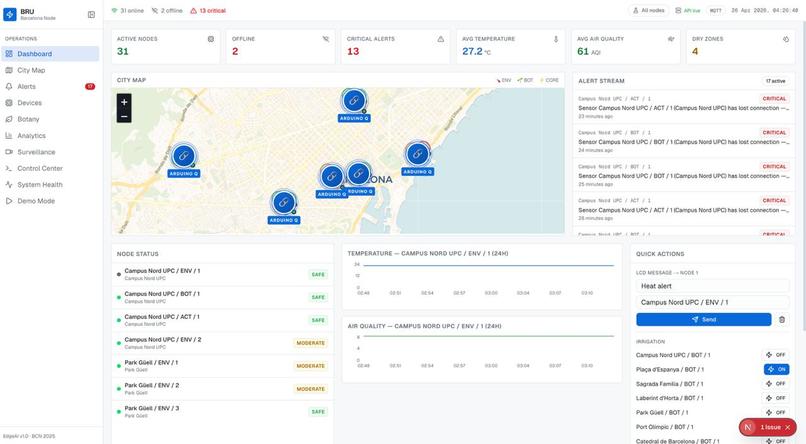

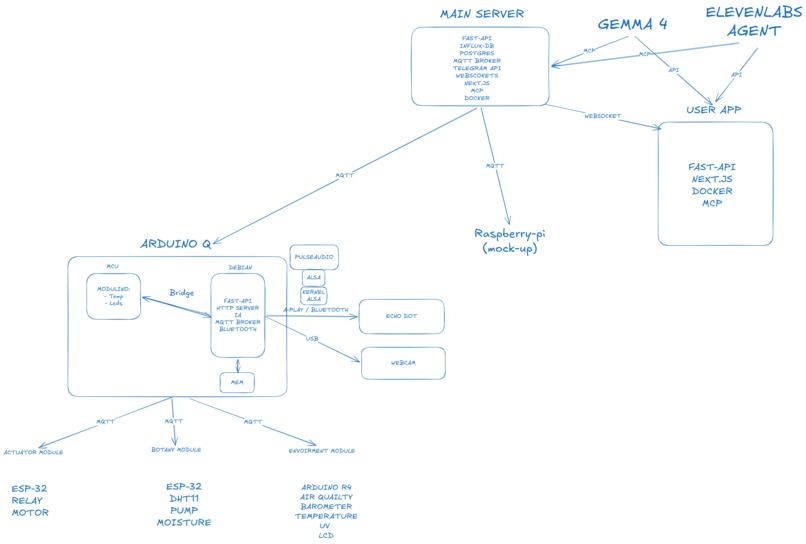

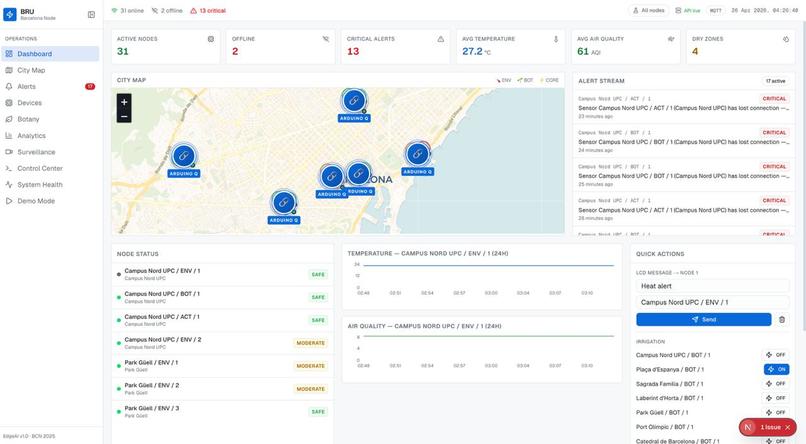

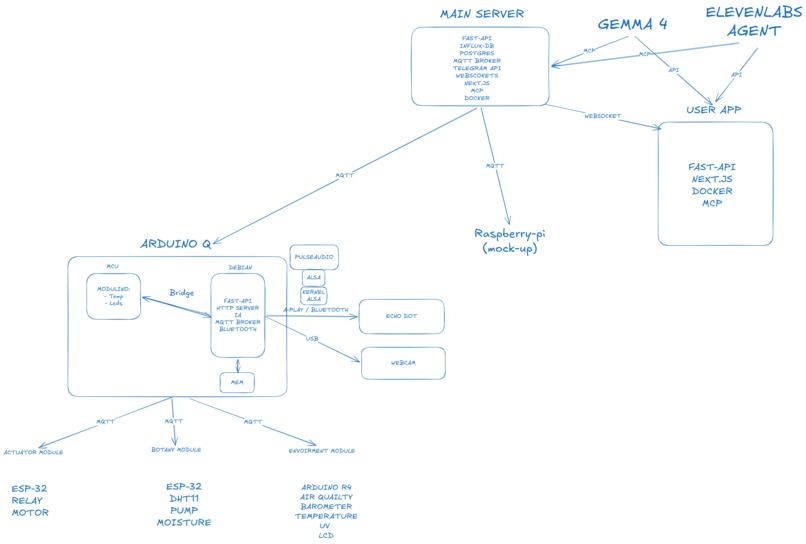

Real-time city monitoring dashboard showing system health and environmental data.

-

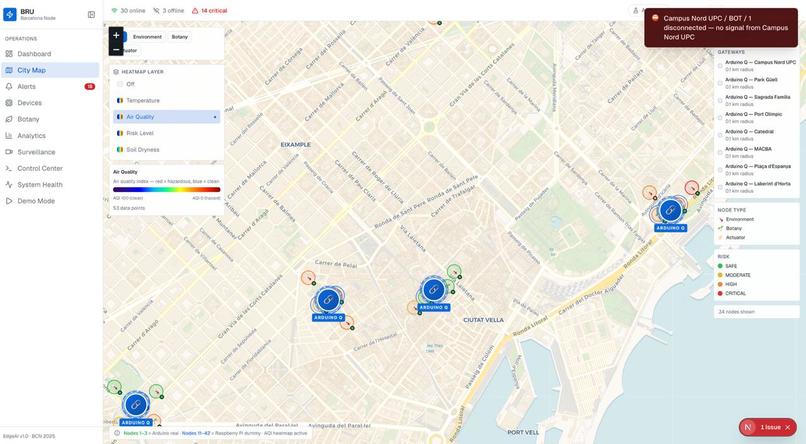

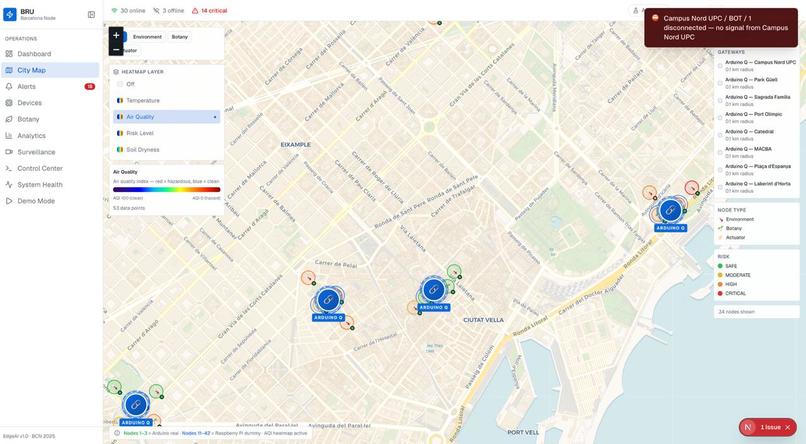

Live map of deployed nodes across Barcelona with environmental heatmaps.

-

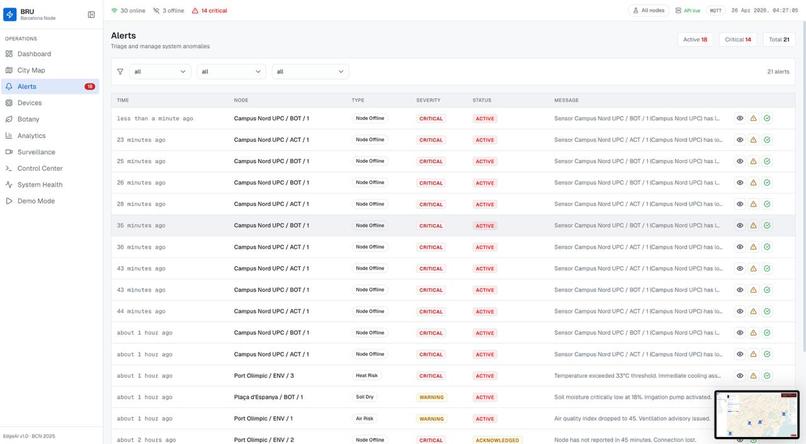

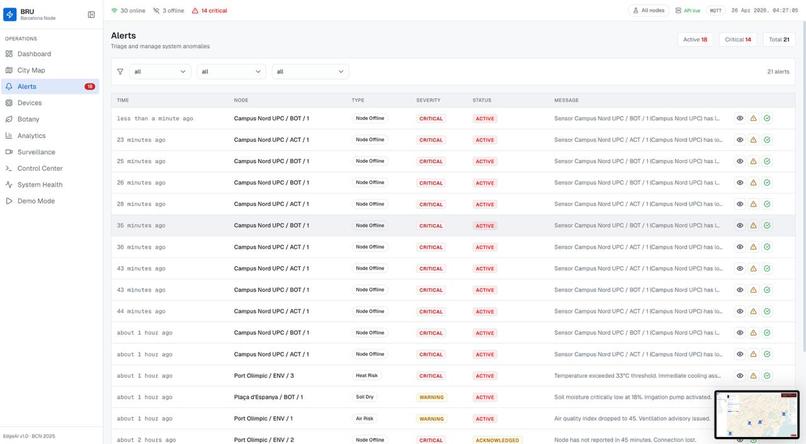

Centralized alert system for detecting and managing critical events.

-

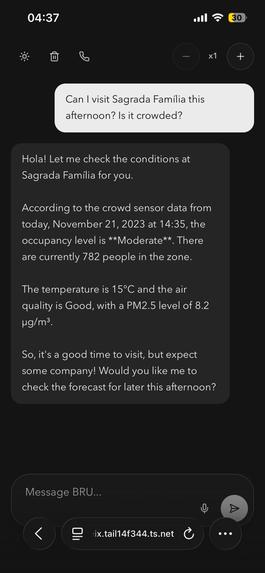

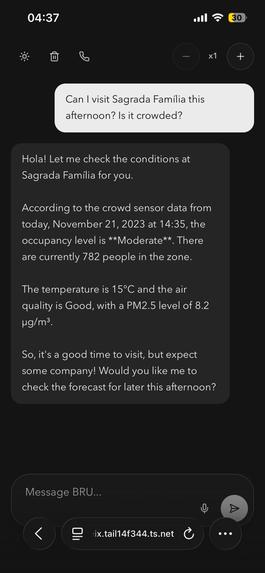

AI assistant answering real-time questions using live city data.

-

Voice interaction enabling hands-free communication with the system.

-

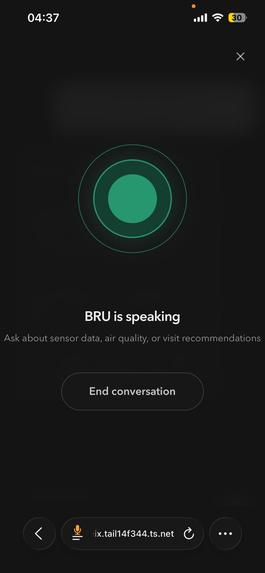

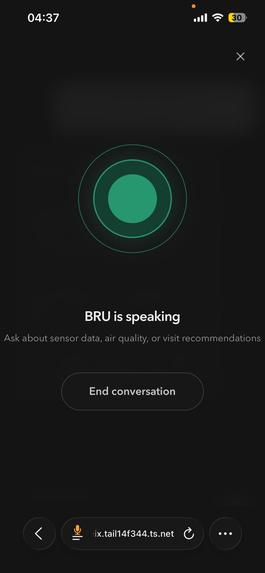

Scalable backend architecture using MQTT, APIs, and real-time data pipelines.

-

Device management view showing status, health, and connectivity of all nodes.

-

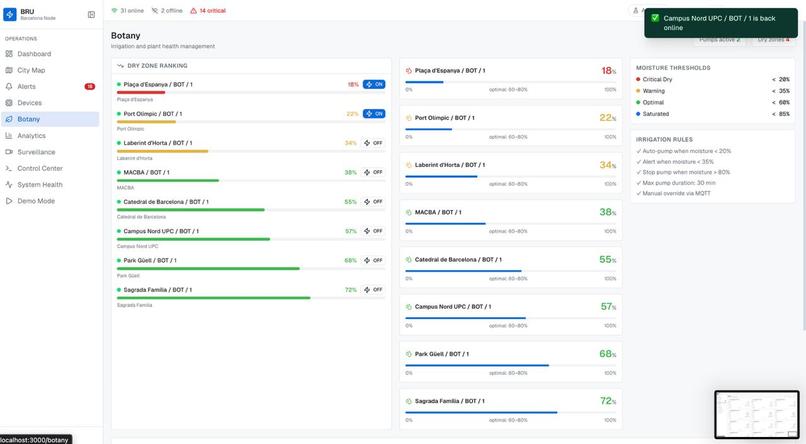

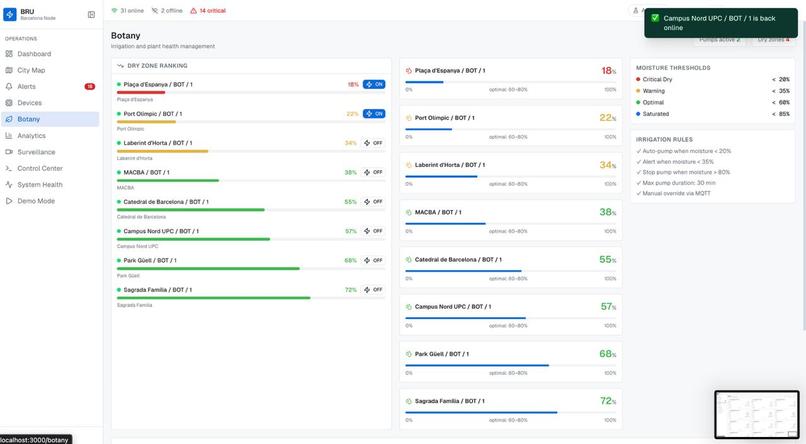

Smart irrigation system automatically adjusting water usage based on soil conditions.

-

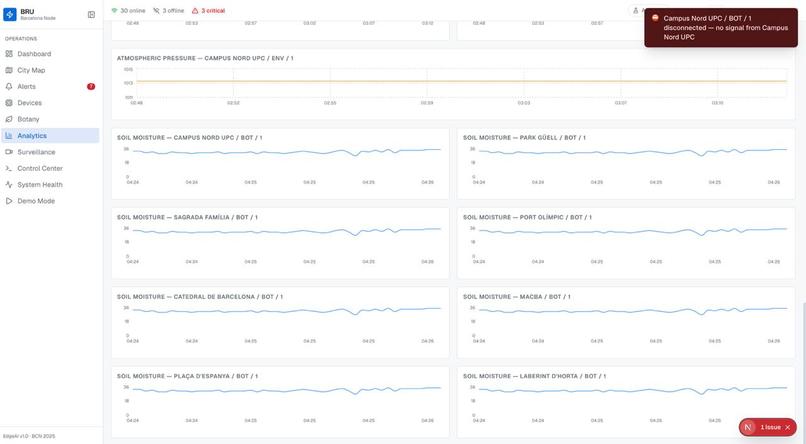

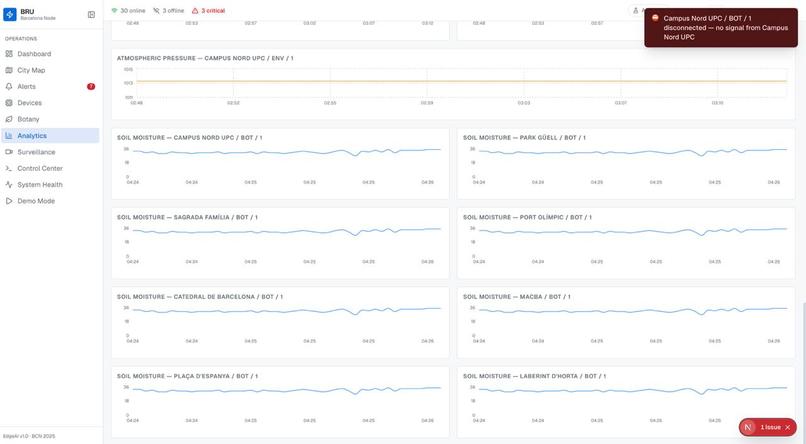

Time-series analytics for tracking environmental trends over time.

-

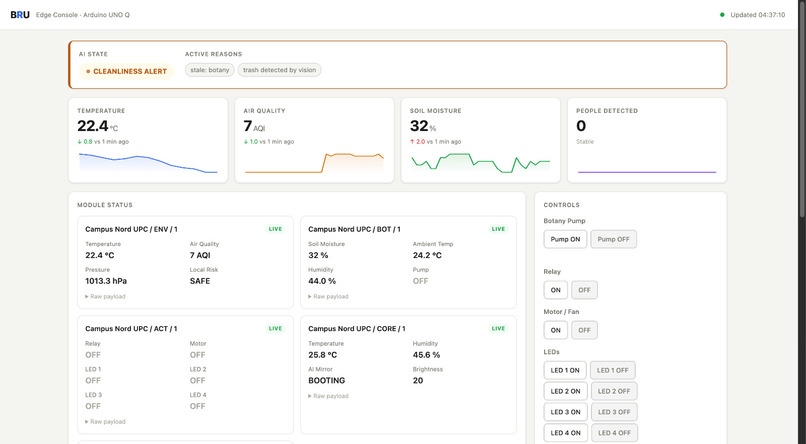

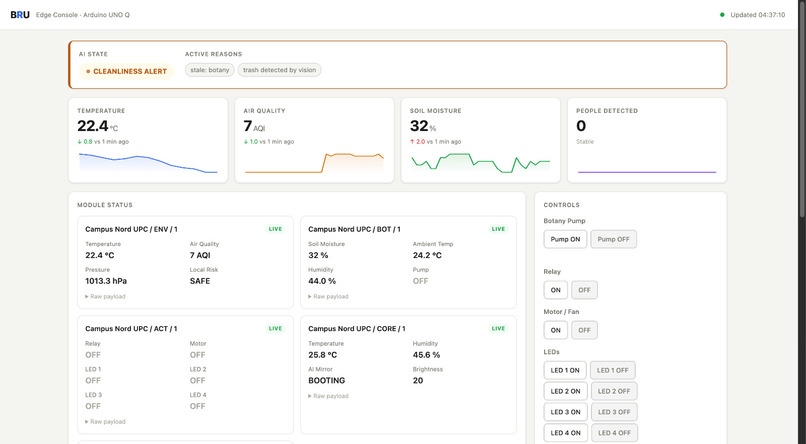

Technician interface for real-time control and debugging of individual devices.

-

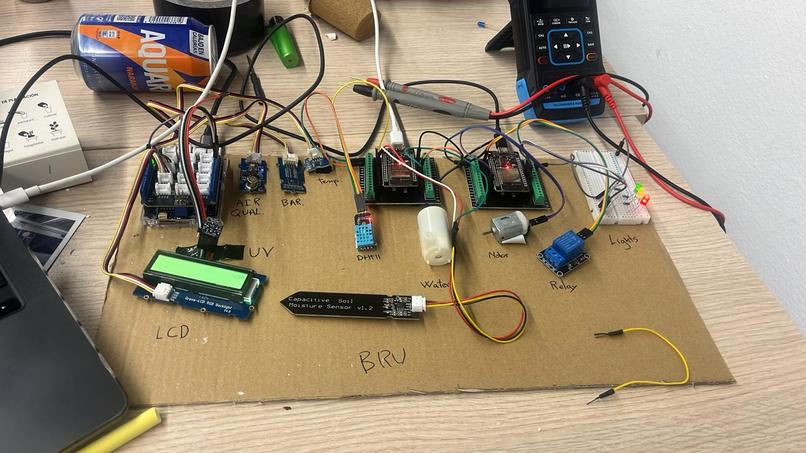

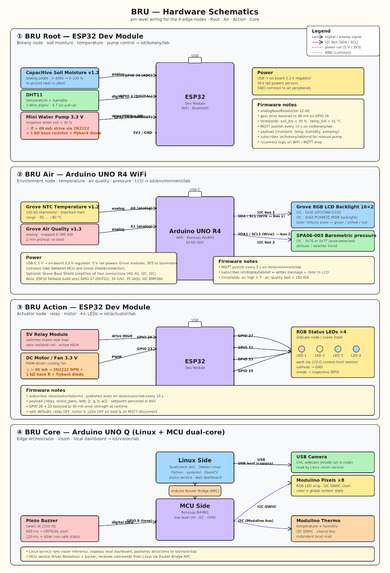

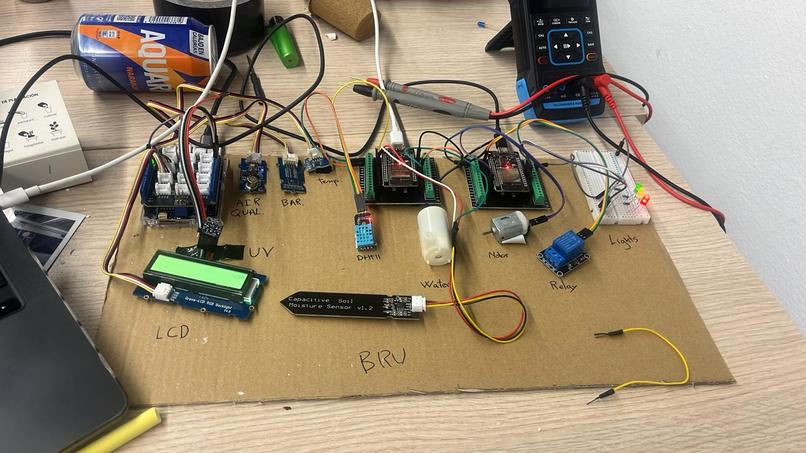

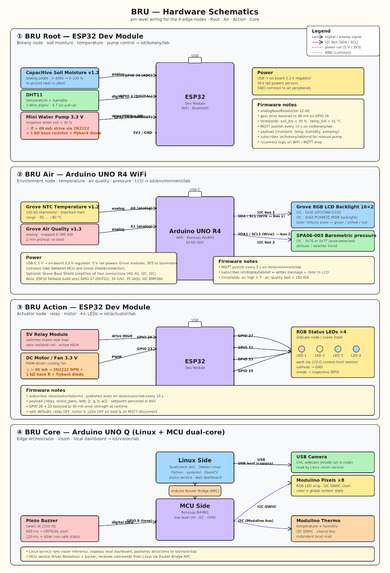

Hardware architecture of a B.R.U module, combining sensors, Edge AI, and actuators on a single device.

B.R.U - Building a Greener Barcelona

Inspiration

Barcelona is a vibrant city, but like many urban environments, it faces challenges around sustainability, air quality, and green space maintenance. We wanted to create a system that helps monitor environmental conditions in real time and empowers both citizens and technicians to take action.

Our inspiration came from combining IoT, real-time data, computer vision, and Edge AI to create a solution that is not only smart but also deployable at city scale.

What it does

B.R.U is a modular environmental monitoring system that:

- Collects real-time data from distributed sensor modules (temperature, humidity, environmental, and botany-related data)

- Uses a camera system to detect:

  - Trash being thrown in monitored areas 🚯

  - Presence of rubber ducks 🦆

- Runs Edge AI directly on-device for instant detection

- Plays spoken alerts through a speaker (simulating an Echo Dot) using text-to-speech

- Transmits all data through an MQTT broker

- Stores and processes everything in a backend powered by FastAPI

- Provides a dashboard for monitoring and maintenance

- Includes an AI chat interface for interacting with the system

Each module:

- Is based on Arduino UNO Q

- Includes sensors + a webcam

- Has its own local backend + frontend for technicians

- Can react in real time by speaking when events are detected

How we built it

Hardware Layer

- Built on Arduino UNO Q, with:

  - A Linux side

  - A microcontroller side

  - A bridge between them

- The microcontroller handles sensors, while Linux handles:

  - Vision processing

  - Local services

Edge AI (on-device intelligence)

One of the key innovations in B.R.U is the use of Edge AI, powered by Arduino + Edge Impulse.

- Models are deployed directly on each module

- The system detects:

  - Trash events

  - Objects like rubber ducks

- Inference runs locally on-device

Why this is technically strong:

- Low latency → near-instant detection and response

- High efficiency → optimized models for constrained hardware

- Privacy-preserving → no need to stream video to the cloud

- Bandwidth-efficient → only meaningful events are transmitted

This results in a system that is both responsive and resource-efficient, which is critical for real-world deployment.
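
The "only meaningful events are transmitted" idea can be sketched as a small gate that sits between the on-device model and the network. This is a minimal illustration, not the project's actual code: the `EventGate` class, the 0.6 confidence threshold, and the 5-second cooldown are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class EventGate:
    """Decides whether a raw detection should become a transmitted event."""

    min_confidence: float = 0.6  # assumed threshold; tune per model
    cooldown_s: float = 5.0      # assumed quiet period per label
    _last_sent: Dict[str, float] = field(default_factory=dict)

    def should_emit(self, label: str, confidence: float, now: float) -> bool:
        # Drop low-confidence detections outright.
        if confidence < self.min_confidence:
            return False
        # Suppress repeats of the same label inside the cooldown window.
        last = self._last_sent.get(label)
        if last is not None and now - last < self.cooldown_s:
            return False
        self._last_sent[label] = now
        return True


# Hypothetical per-frame detections: (label, confidence, timestamp in seconds).
frames = [("trash", 0.91, 0.0), ("trash", 0.88, 1.0),
          ("duck", 0.40, 2.0), ("trash", 0.93, 6.5)]
gate = EventGate()
emitted = [(label, ts) for label, conf, ts in frames
           if gate.should_emit(label, conf, ts)]
print(emitted)  # [('trash', 0.0), ('trash', 6.5)]
```

Of four frames, only two events leave the device, which is where the bandwidth saving comes from.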

Communication Layer

- Uses MQTT for lightweight, real-time messaging

- Enables a publish/subscribe architecture:

  - Modules send events

  - Backend and services consume them

- Designed to scale to hundreds or thousands of devices
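
To make the publish/subscribe flow concrete, here is a rough sketch of how a module might name its topics and how a subscriber's wildcard filter selects them. The `bru/modules/<id>/events/<kind>` layout and the JSON field names are assumptions for illustration, not the project's real schema; actual publishing would go through an MQTT client library, which is left out so the example stays dependency-free. The matcher follows the standard MQTT `+` and `#` wildcard rules but ignores the special handling of `$`-prefixed topics.

```python
import json
from typing import Any, Dict


def event_topic(module_id: str, kind: str) -> str:
    """Build a topic such as 'bru/modules/node-17/events/trash' (hypothetical layout)."""
    return f"bru/modules/{module_id}/events/{kind}"


def event_payload(module_id: str, kind: str, confidence: float, ts: int) -> str:
    """Serialize an event as compact JSON; the field names are illustrative."""
    body: Dict[str, Any] = {"module": module_id, "kind": kind,
                            "confidence": confidence, "ts": ts}
    return json.dumps(body, separators=(",", ":"), sort_keys=True)


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Match a topic against an MQTT filter with '+' and '#' wildcards."""
    f_parts = topic_filter.split("/")
    t_parts = topic.split("/")
    for i, part in enumerate(f_parts):
        if part == "#":
            # Multi-level wildcard: only valid as the last filter level.
            return i == len(f_parts) - 1
        if i >= len(t_parts):
            return False
        # '+' matches exactly one level; anything else must match literally.
        if part != "+" and part != t_parts[i]:
            return False
    return len(f_parts) == len(t_parts)


topic = event_topic("node-17", "trash")
print(topic_matches("bru/modules/+/events/trash", topic))  # True
print(topic_matches("bru/modules/+/events/duck", topic))   # False
```

With a layout like this, the backend can subscribe once to `bru/modules/+/events/#` and receive events from every module without per-device configuration.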

Backend Layer

- Built with FastAPI

- Handles data ingestion and exposes the system's APIs

Databases:

InfluxDB (time-series data)

- Optimized for continuous sensor streams

- Handles high-frequency writes at scale

- Efficient aggregation for trends and analytics
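
As an illustration of what a sensor write can look like, the sketch below formats one reading as InfluxDB line protocol (`measurement,tags fields timestamp`). The measurement name, tag keys, and field names are assumptions made up for the example; a real deployment would more likely use an InfluxDB client library than hand-built strings.

```python
from typing import Dict, Union

FieldValue = Union[float, int, bool, str]


def _escape(text: str, chars: str) -> str:
    # Prefix each listed character with a backslash.
    for ch in chars:
        text = text.replace(ch, "\\" + ch)
    return text


def _format_field(value: FieldValue) -> str:
    # bool is checked before int because bool is a subclass of int.
    if isinstance(value, bool):
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"  # integers carry an 'i' suffix
    if isinstance(value, float):
        return repr(value)
    # String fields are double-quoted; escape backslashes, then quotes.
    return '"' + _escape(value, '\\"') + '"'


def to_line_protocol(measurement: str, tags: Dict[str, str],
                     fields: Dict[str, FieldValue], ts_ns: int) -> str:
    if not fields:
        raise ValueError("at least one field is required")
    # Tags are sorted by key; commas, spaces, and '=' are escaped.
    tag_part = "".join(
        f",{_escape(k, ', =')}={_escape(v, ', =')}" for k, v in sorted(tags.items())
    )
    field_part = ",".join(
        f"{_escape(k, ', =')}={_format_field(v)}" for k, v in fields.items()
    )
    return f"{_escape(measurement, ', ')}{tag_part} {field_part} {ts_ns}"


line = to_line_protocol(
    "environment",                                  # hypothetical measurement
    {"module": "node-17", "zone": "parc guell"},    # hypothetical tags
    {"temperature_c": 21.5, "humidity_pct": 48.0, "trash_events": 2},
    1700000000000000000,
)
print(line)
```

Keeping the module and zone as tags (indexed) and the readings as fields is the usual split for this kind of data, since dashboards filter by device or area and aggregate over the values.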

PostgreSQL (relational data)

- Stores users, devices, modules

- Ensures structured and reliable system management

Dashboard

- Real-time visualization of:

  - Sensor data

  - Alerts (trash, rubber ducks, anomalies)

- Designed for operational monitoring at scale

- Helps technicians maintain large deployments efficiently
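
One way a dashboard could derive per-node health for a large deployment is to classify each module by the age of its last message. The sketch below is only an assumption about how this might work: the `NodeStatus` names and the 60-second and 300-second thresholds are invented for illustration.

```python
from enum import Enum
from typing import Dict


class NodeStatus(str, Enum):
    ONLINE = "online"
    STALE = "stale"
    OFFLINE = "offline"


def classify_node(last_seen: float, now: float,
                  stale_after_s: float = 60.0,
                  offline_after_s: float = 300.0) -> NodeStatus:
    """Classify a node by how long ago it last reported (thresholds are assumed)."""
    age = now - last_seen
    if age >= offline_after_s:
        return NodeStatus.OFFLINE
    if age >= stale_after_s:
        return NodeStatus.STALE
    return NodeStatus.ONLINE


def summarize(last_seen_by_node: Dict[str, float], now: float) -> Dict[str, int]:
    """Count nodes per status for a fleet-level overview."""
    counts = {status.value: 0 for status in NodeStatus}
    for last_seen in last_seen_by_node.values():
        counts[classify_node(last_seen, now).value] += 1
    return counts


# Hypothetical fleet: seconds-since-epoch of each node's last message.
fleet = {"node-01": 995.0, "node-02": 900.0, "node-03": 500.0}
print(summarize(fleet, now=1000.0))  # {'online': 1, 'stale': 1, 'offline': 1}
```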

AI + Web App

- Chat interface powered by Gemma 4

- Communicates via MCP

- Uses real sensor + event data as context

- Supports:

  - Text responses

  - Voice interaction (via ElevenLabs)
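
Using real sensor and event data as context usually comes down to turning recent records into a compact text block the model can read. The function below is a hedged sketch of that step only; `build_context`, the record shapes, and the wording of the output are assumptions, and how the text reaches the model (for example as an MCP tool result) is not shown.

```python
from typing import Any, Dict, List, Mapping


def build_context(readings: Mapping[str, Mapping[str, float]],
                  events: List[Dict[str, Any]],
                  max_events: int = 5) -> str:
    """Render latest readings and recent events as plain text for an LLM prompt."""
    lines = ["Latest sensor readings:"]
    for module_id in sorted(readings):
        values = ", ".join(f"{k}={v}" for k, v in sorted(readings[module_id].items()))
        lines.append(f"- {module_id}: {values}")
    lines.append("Recent events:")
    # Newest first, capped so the prompt stays small.
    recent = sorted(events, key=lambda e: e["ts"], reverse=True)[:max_events]
    if not recent:
        lines.append("- none")
    for e in recent:
        lines.append(f"- {e['kind']} at {e['module']} (ts={e['ts']})")
    return "\n".join(lines)


# Hypothetical data, shaped like the examples a module might report.
context = build_context(
    {"node-17": {"temperature_c": 21.5, "humidity_pct": 48.0}},
    [{"kind": "trash", "module": "node-17", "ts": 120},
     {"kind": "duck", "module": "node-17", "ts": 180}],
)
print(context)
```

Capping the number of events keeps the prompt short, which matters when many modules report at once.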

Why it scales

B.R.U is designed from the ground up for city-wide deployment:

- Modular architecture → each unit operates independently and can be deployed anywhere

- Edge AI processing → reduces cloud load and allows scaling without bottlenecks

- MQTT communication → lightweight and ideal for massive IoT networks

- Time-series database (InfluxDB) → built to handle large volumes of sensor data

- Self-contained modules → each unit includes its own debugging interface for maintenance

Because intelligence is pushed to the edge, adding more devices does not significantly increase central infrastructure load. This makes B.R.U highly scalable and cost-efficient for real-world deployment in public spaces.

Challenges we ran into

- Integrating Edge AI with constrained hardware

- Balancing processing between microcontroller, Linux, and cloud

- Ensuring reliable computer vision detection

- Designing a system that works both locally and globally

- Making AI responses meaningful using live environmental + vision data

Accomplishments that we're proud of

- Built a system that:

  - Sees (camera)

  - Senses (IoT sensors)

  - Thinks locally (Edge AI)

  - Speaks (audio feedback)

  - Understands (LLM)

- Real-time detection and response without cloud dependency

- Designed a system ready for real-world urban deployment

- Strong balance between technical performance and impact

What we learned

- How to deploy AI models on edge devices

- Trade-offs between edge and cloud processing

- Designing systems for scalability from day one

- Importance of efficient data pipelines in IoT systems

- How to connect AI with real-world environments

What's next for B.R.U

- Improve Edge AI models (more objects, better accuracy)

- Add behavior-based detection (pattern recognition over time)

- Expand deployment across Barcelona

- Add predictive analytics (AI-based environmental insights)

- Collaborate with city initiatives for real-world integration

🌿 B.R.U is designed not just as a prototype, but as a scalable solution for a greener Barcelona.

Built With

- arduino

- fastapi

- influxdb

- mcp

- nextjs

- postgresql

- raspberry-pi

- react-native

- sensors
