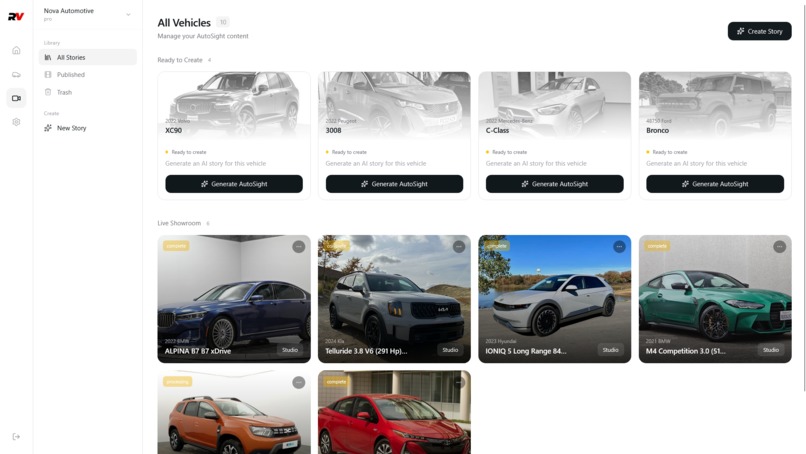

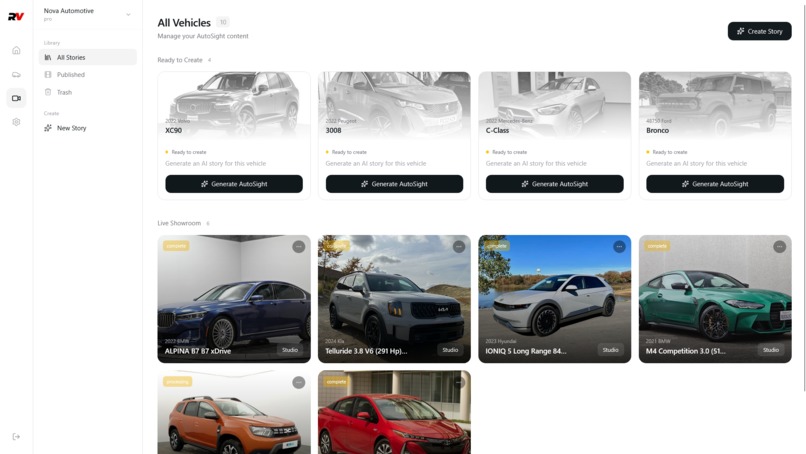

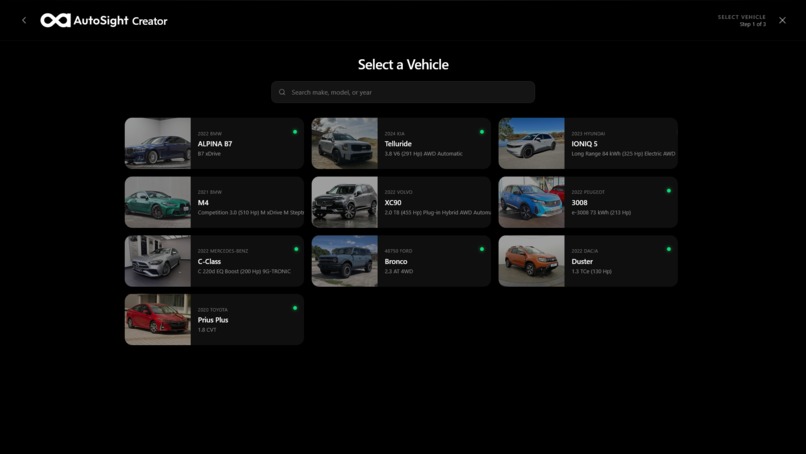

AutoSight Studio

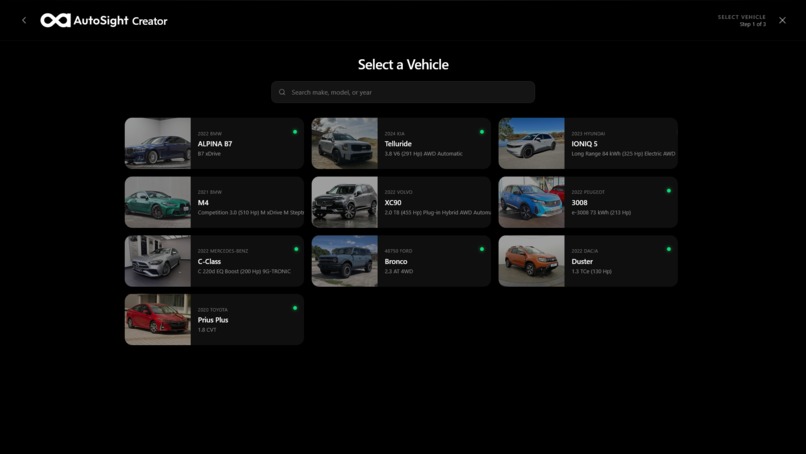

Dealer selects a car to create an AutoSight experience

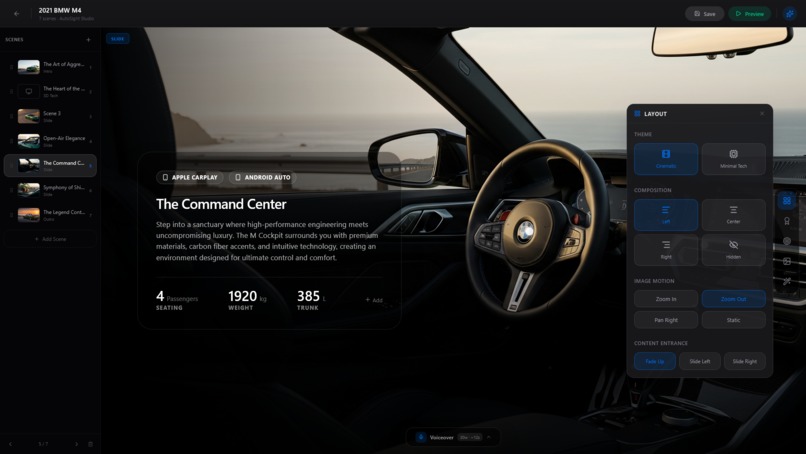

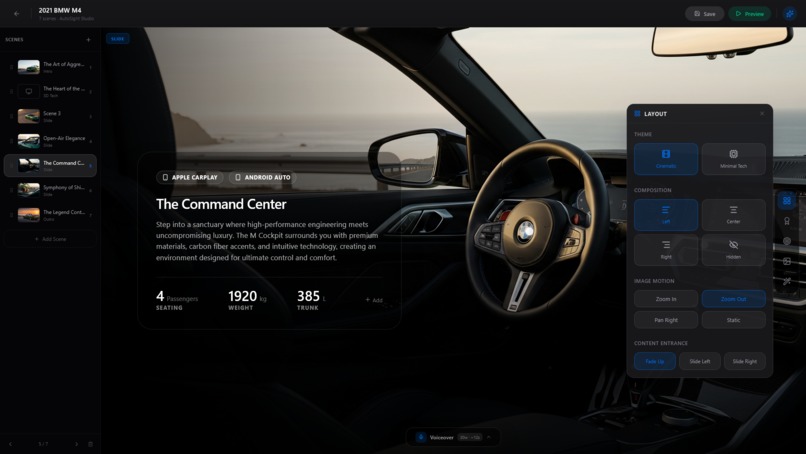

AutoSight AI-powered editor interface

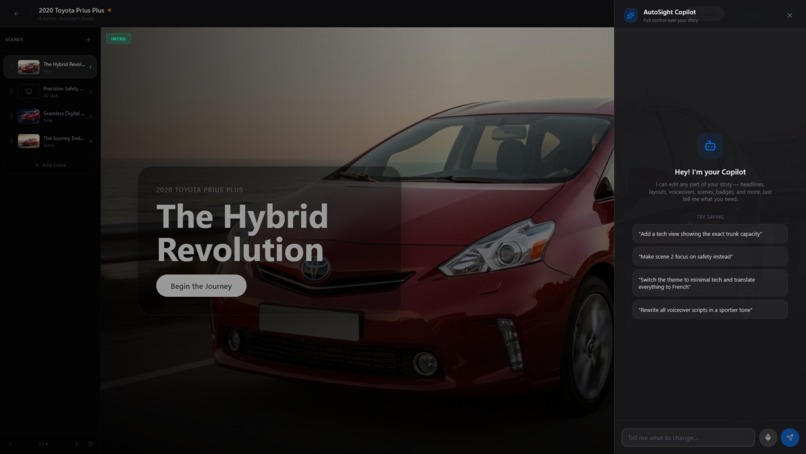

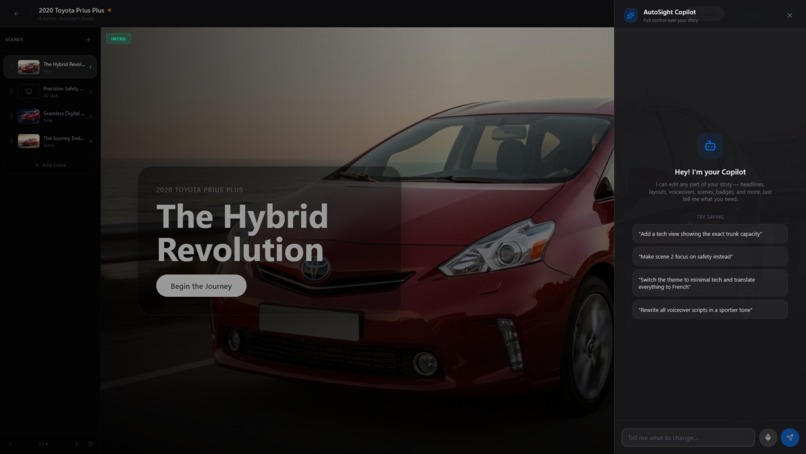

AutoSight Copilot gives the dealer full control over the experience through Nova 2 Lite tool calling

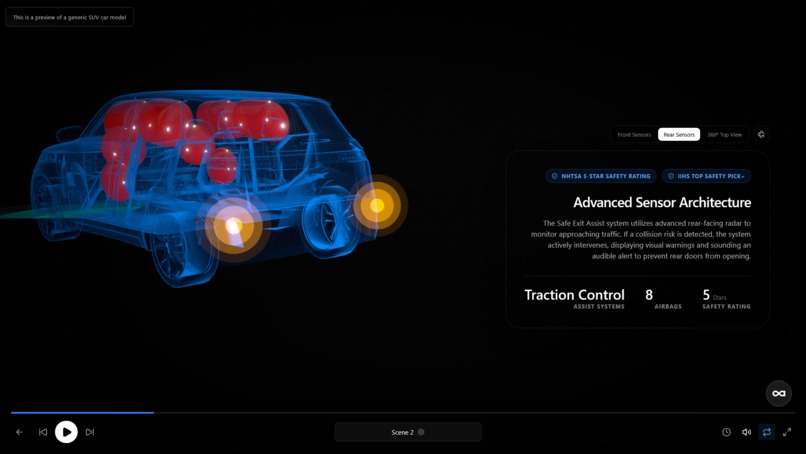

3D tech view scene: Performance mode

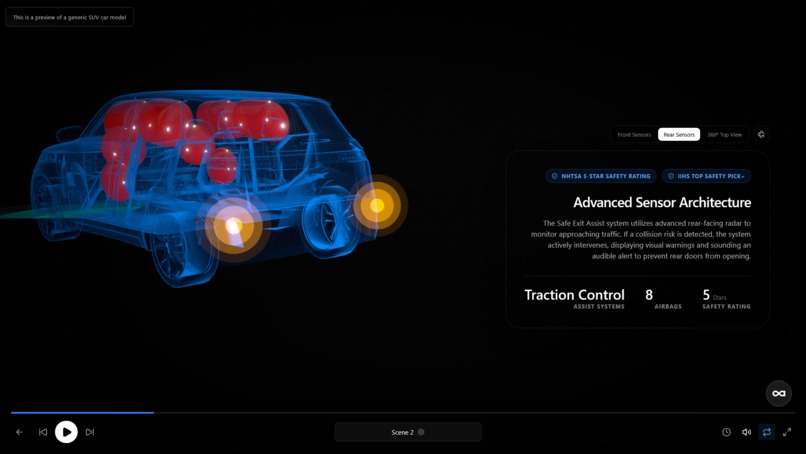

3D tech view scene: Safety mode

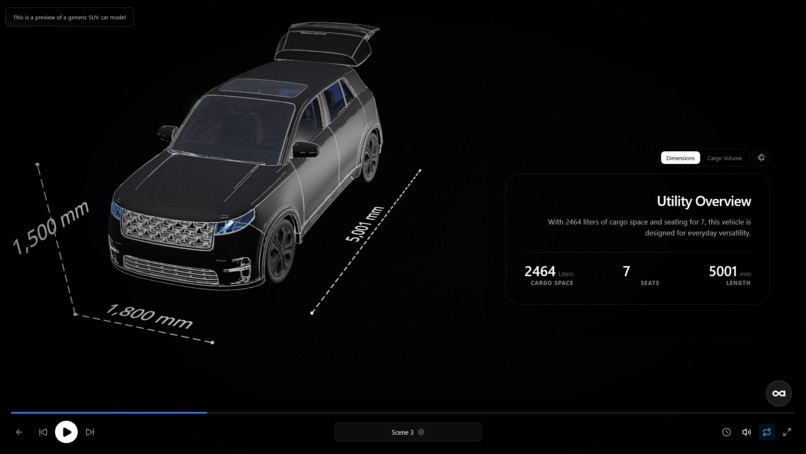

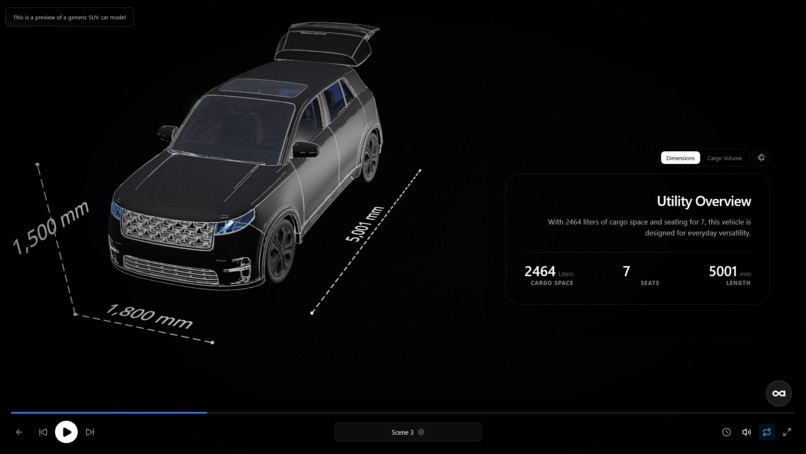

3D tech view scene: Utility mode

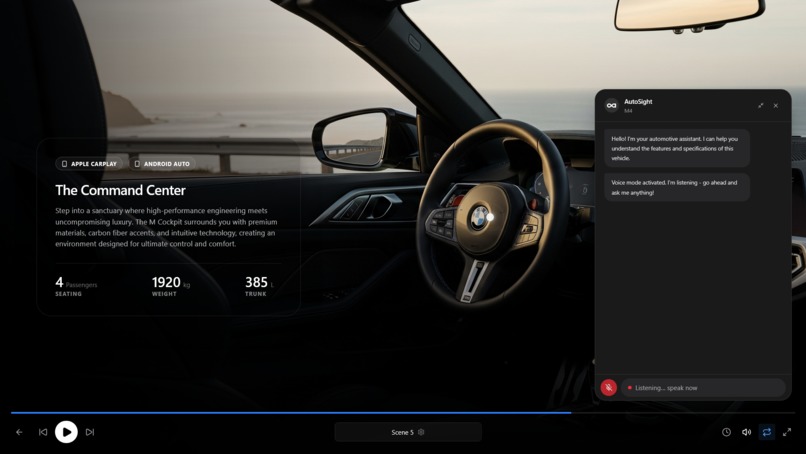

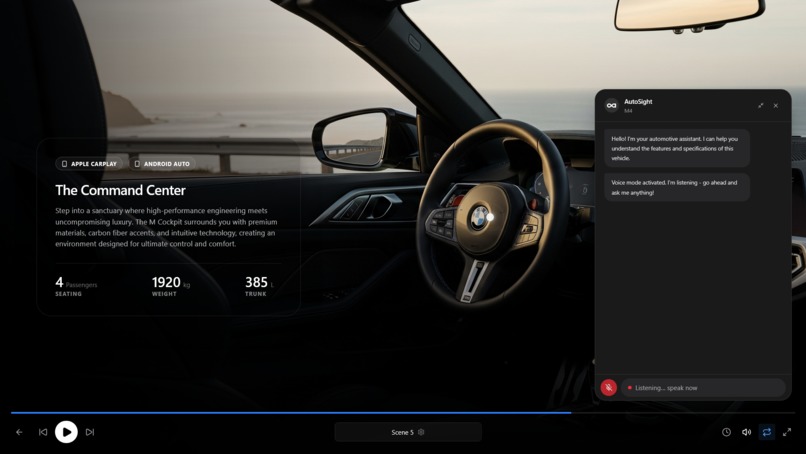

Multimodal RAG chatbot that uses Nova 2 Sonic and LiveKit for real-time speech-to-speech

About the Project

Inspiration

Car dealerships spend days photographing every vehicle on their lot, writing copy, and building web pages, and the result is still a static gallery with a spec table. We asked: what if a dealer could select a car from inventory, press one button, and get a fully produced interactive showroom experience, all generated in under two minutes? That experience would include cinematic imagery, synchronized voiceover, interactive hotspots, 3D WebGL tech views, and an AI chatbot that can answer questions from the car's manual and book test drives by voice.

That vision required not just one AI model, but an entire production crew of specialized agents working together. Amazon Nova's combination of fast reasoning (Nova 2 Lite), multimodal embeddings, video generation (Nova Reel), and real-time speech-to-speech (Nova Sonic) made it possible to build the full pipeline on a single cloud platform.

What It Does

AutoSight is a seven-agent AI pipeline that turns a car database entry into a polished, interactive digital showroom:

- Analyst Agent (Nova 2 Lite) — profiles the car and identifies a buyer persona and marketing strategy

- Director Agent (Nova 2 Lite) — plans a cinematic storyboard with layouts, camera angles, and 3D tech view placements, locking all scenes into a consistent visual world

- Scriptwriter Agent (Nova 2 Lite) — writes headlines, body copy, voiceover scripts, and suggests interactive hotspots for each scene, constrained by camera perspective (no interior parts on exterior shots)

- Visualizer Agent — generates photorealistic scene images, places interactive hotspots via OWLv2 zero-shot object detection with six accuracy-boosting techniques, and produces intro videos via Amazon Nova Reel

- Badge Collector — verifies real-world certifications (NHTSA, IIHS, EPA, awards) from three parallel sources: rule-based logic, government APIs, and AI-analyzed web search

- Audio Agent — produces neural text-to-speech voiceover (Deepgram Aura-2) with word-level subtitle alignment, using Nova Micro for intelligent subtitle grouping

- QA Agent — validates and enriches the final story JSON with real car specs for 3D rendering

Beyond generation, AutoSight provides:

- Live Dealer Editor + Copilot — dealers edit any scene in real time; the Copilot (Nova 2 Lite with tool calling) can add scenes, retheme the entire experience, translate to another language, or regenerate images through natural language

- Multimodal RAG Chatbot — a four-tier retrieval pipeline (Nova 2 Lite + Nova Multimodal Embeddings) that answers buyer questions from uploaded PDF brochures and car manuals, with inline test-drive booking via tool calling

- Speech-to-Speech Voice Agent — Amazon Nova Sonic streams bidirectional audio over LiveKit WebRTC, enabling buyers to ask questions and book test drives entirely by voice

- RAG Document Ingestion — a Python PDF shredder extracts text, tables, and images from dealer-uploaded documents; Amazon Nova Multimodal Embeddings generates 1024-dim vectors stored in Supabase pgvector
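The chatbot's tiered retrieval can be pictured as a router that tries the cheap structured lookup first and only embeds the question when that lookup misses. The following is a minimal sketch under stated assumptions, not the project's actual code: the field names, the substring matching rule, the 3-dimensional toy vectors (real ones would be 1024-dimensional), and the in-memory `chunks` array standing in for a pgvector table are all hypothetical. Only two tiers are shown.

```javascript
// Hypothetical structured record for one car (field names are illustrative).
const carJson = { make: "Example", model: "GT", horsepower: 300, seats: 5 };

// In-memory stand-in for a pgvector table: each row is a text chunk plus its embedding.
const chunks = [
  { text: "Tire pressure should be checked monthly.", embedding: [0.9, 0.1, 0.0] },
  { text: "The infotainment system supports wireless pairing.", embedding: [0.1, 0.9, 0.1] },
];

function cosineSimilarity(a, b) {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// First tier: answer from structured JSON when the question names a known field.
// Substring matching is deliberately naive and only serves the illustration.
function answerFromStructuredData(question, car) {
  const q = question.toLowerCase();
  for (const [field, value] of Object.entries(car)) {
    if (q.includes(field.toLowerCase())) return { tier: "structured", field, value };
  }
  return null;
}

// Fallback tier: rank stored chunks by cosine similarity to the query embedding.
function answerFromVectors(queryEmbedding, rows, topK = 1) {
  const ranked = rows
    .map((row) => ({ text: row.text, score: cosineSimilarity(queryEmbedding, row.embedding) }))
    .sort((a, b) => b.score - a.score);
  return { tier: "vector", matches: ranked.slice(0, topK) };
}

// embedQuery is injected so the embedding call happens only when the first tier misses.
function routeQuestion(question, car, rows, embedQuery) {
  return answerFromStructuredData(question, car) ?? answerFromVectors(embedQuery(question), rows);
}
```

Passing the embedding function in as a parameter makes the saving visible: a question answered from the structured record never triggers an embedding call.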

How We Built It

The backend is a Node.js/Express 5 server with LangChain orchestrating all Amazon Nova calls through ChatBedrockConverse. Each of the seven pipeline agents is a separate module with its own Zod schema for structured output validation. The frontend is React 19 with Zustand state management, Framer Motion animations, and React Three Fiber for the 3D WebGL tech views.
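To illustrate per-agent output validation, here is a dependency-free sketch of a schema check in which a malformed optional field is dropped rather than failing the whole parse. It is a hand-rolled stand-in, not Zod itself, and the scene fields (`headline`, `voiceover`, `techView`) are hypothetical names chosen for the example.

```javascript
// Minimal field validators. Each returns { ok, value }.
const str = (v) => ({ ok: typeof v === "string" && v.length > 0, value: v });

// Validate a nested object against a shape of validators.
const object = (shape) => (v) => {
  if (v === null || typeof v !== "object" || Array.isArray(v)) return { ok: false, value: v };
  const out = {};
  for (const [key, check] of Object.entries(shape)) {
    const res = check(v[key]);
    if (!res.ok) return { ok: false, value: v };
    if (res.value !== undefined) out[key] = res.value;
  }
  return { ok: true, value: out };
};

// Optional field that falls back to undefined instead of failing validation.
const optionalOrDrop = (check) => (v) => {
  if (v === undefined) return { ok: true, value: undefined };
  const res = check(v);
  return res.ok ? res : { ok: true, value: undefined };
};

// Hypothetical schema for one scene produced by a scriptwriting agent.
const sceneSchema = object({
  headline: str,
  voiceover: str,
  techView: optionalOrDrop(object({ mode: str })),
});

function parseScene(raw) {
  const res = sceneSchema(raw);
  if (!res.ok) throw new Error("Scene failed validation");
  return res.value;
}
```

With this shape, `parseScene({ headline: "A", voiceover: "B", techView: {} })` returns an object without a `techView` key, while a missing required field still throws.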

Amazon Nova models used:

| Model | Role |
|---|---|
| Nova 2 Lite | All 7 pipeline agents, Copilot tool calling, RAG chatbot tiers 0-3 |
| Nova Micro | Subtitle grouping (fast, cheap, short-context task) |
| Nova Reel | Image-conditioned intro video generation (6s clips) |
| Nova Sonic | Real-time speech-to-speech voice agent with tool calling |
| Nova Multimodal Embeddings | 1024-dim vectors for RAG document retrieval |

The pipeline runs asynchronously — the API returns immediately with a story ID, and the frontend polls for progress while showing which agent is currently working. Each agent's output feeds into the next, creating a true multi-agent orchestration chain rather than independent model calls.
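The start-then-poll flow described above could look roughly like the sketch below. It is an illustration under assumptions: the in-memory `jobs` map, the three placeholder agents, and the function names `startPipeline` and `getProgress` are all hypothetical, and a real server would expose them through HTTP routes and persist job state.

```javascript
// In-memory job store; a real deployment would persist this (assumption).
const jobs = new Map();
let nextId = 1;

// Hypothetical agents: each takes the accumulated story and returns an enriched copy.
const agents = [
  { name: "analyst", run: async (story) => ({ ...story, persona: "placeholder persona" }) },
  { name: "director", run: async (story) => ({ ...story, scenes: ["scene-1", "scene-2"] }) },
  {
    name: "scriptwriter",
    run: async (story) => ({ ...story, copy: story.scenes.map((s) => `copy for ${s}`) }),
  },
];

// Kick off the pipeline and return the story ID right away, without awaiting completion.
function startPipeline(car) {
  const id = `story-${nextId++}`;
  const job = { status: "running", currentAgent: null, story: null, error: null };
  jobs.set(id, job);
  job.done = runAgents(job, car); // promise kept only so callers can await completion
  return id;
}

async function runAgents(job, car) {
  try {
    let story = { car };
    for (const agent of agents) {
      job.currentAgent = agent.name; // what a polling client would display
      story = await agent.run(story); // each agent's output feeds the next
    }
    job.story = story;
    job.status = "complete";
  } catch (err) {
    job.error = String(err);
    job.status = "failed";
  } finally {
    job.currentAgent = null;
  }
}

// What a polling endpoint would return for a given story ID.
function getProgress(id) {
  const job = jobs.get(id);
  if (!job) return { status: "not_found" };
  return { status: job.status, currentAgent: job.currentAgent };
}
```

Because each agent receives the story built by its predecessors, a failure in one stage stops the chain and surfaces as a `failed` status on the next poll.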

Key architectural decisions:

- World-locking — the Director picks one setting and lighting family, and a deterministic post-processor enforces it across all scenes, preventing the "stock photo rotation" problem

- Camera perspective enforcement — the Scriptwriter is explicitly told whether the camera is EXTERIOR or INTERIOR, and interior car parts are forbidden on exterior shots (framed as physical impossibility, which LLMs respect more reliably than soft preferences)

- Vision-enriched prompting — before generating images, Nova Lite analyzes the dealer's actual inventory photo to extract physical details (rim style, grille shape, trim accents), which are injected into every image prompt

- Six-technique hotspot detection — OWLv2 object detection with caption-wrapped CLIP prompts, contrastive distractor labels, per-part confidence thresholds, bounding box area constraints, aspect-ratio padding, and two-pass interior zoom

- Tiered RAG — the chatbot checks if the question can be answered from structured car JSON before hitting the vector store, saving 60% of embedding calls
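Two of the hotspot techniques listed above, per-part confidence thresholds and bounding box area constraints, lend themselves to a short post-filter over detector output. The sketch below is illustrative only: the threshold values, the area bounds, and the detection object shape are assumptions, and the detector itself is out of scope.

```javascript
// Hypothetical per-part confidence thresholds, keyed by underscore-delimited part names.
const PART_THRESHOLDS = new Map([
  ["front_bumper", 0.35],
  ["headlight", 0.25],
  ["side_mirror", 0.2],
]);
const DEFAULT_THRESHOLD = 0.3;

// Hypothetical bounds on box area, as a fraction of the full image.
const MIN_AREA_FRACTION = 0.002;
const MAX_AREA_FRACTION = 0.5;

// "Front Bumper" -> "front_bumper", so threshold lookups hit their keys.
function normalizeLabel(label) {
  return label
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "_")
    .replace(/^_+|_+$/g, "");
}

// Keep detections that clear their part's threshold and have a plausible box size.
// Each detection: { label, score, box: { x, y, width, height } } in pixels.
function filterDetections(detections, imageWidth, imageHeight) {
  const imageArea = imageWidth * imageHeight;
  return detections
    .map((d) => ({ ...d, key: normalizeLabel(d.label) }))
    .filter((d) => {
      const threshold = PART_THRESHOLDS.get(d.key) ?? DEFAULT_THRESHOLD;
      const areaFraction = (d.box.width * d.box.height) / imageArea;
      return (
        d.score >= threshold &&
        areaFraction >= MIN_AREA_FRACTION &&
        areaFraction <= MAX_AREA_FRACTION
      );
    });
}
```

Normalizing the label before the lookup matters: without it, a label such as "Side Mirror" would miss its key and be judged against the default threshold instead.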

Challenges We Faced

- Structured output consistency: Nova 2 Lite occasionally returns empty `{}` for unused scene-type fields in the Scriptwriter output. We solved this with Zod's `.optional().catch(undefined)` pattern, which gracefully absorbs empty objects without crashing the pipeline.

- Nova Sonic tool calling: the `inputSchema.json` field must be `JSON.stringify(schema)`, not a plain object. This undocumented requirement caused `"Unable to parse input chunk"` errors until we discovered the correct serialization.

- LiveKit WebRTC + Nova Sonic pacing: Nova Sonic sends TTS audio chunks as fast as the network delivers them. Without a dedicated playback queue with `await captureFrame()` pacing, all frames dump into the AudioSource buffer instantly, causing audio speedup and glitching.

- Image format normalization: Supabase Storage serves many dealer photos as AVIF, but Amazon Bedrock only accepts JPEG/PNG/GIF/WebP. We use `sharp` metadata inspection to detect the actual byte format and transparently convert unsupported formats before sending to Nova Lite vision.

- Hotspot label normalization: LLM-generated labels like `"Front Bumper"` need underscore-delimited keys (`front_bumper`) for detection threshold lookups. The original regex didn't convert spaces, causing all lookups to silently fall through to defaults.

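The Nova Sonic serialization issue is easiest to see side by side with the schema it wraps. In this sketch the tool name, description, and schema fields are hypothetical, and the surrounding `toolSpec` wrapper shape is an assumption. The one point taken from the experience above is that `inputSchema.json` carries a JSON string rather than an object.

```javascript
// Plain JSON Schema for a hypothetical test-drive booking tool.
const bookTestDriveSchema = {
  type: "object",
  properties: {
    date: { type: "string", description: "Requested date in ISO 8601 format" },
    customerName: { type: "string" },
  },
  required: ["date", "customerName"],
};

// Build a tool entry whose inputSchema.json is a serialized string, not an object.
function buildToolSpec(name, description, schema) {
  return {
    toolSpec: {
      name,
      description,
      inputSchema: { json: JSON.stringify(schema) },
    },
  };
}

const tool = buildToolSpec(
  "bookTestDrive",
  "Book a test drive for the car currently being viewed",
  bookTestDriveSchema
);
```

Centralizing the `JSON.stringify` call in one builder keeps every tool definition consistent, so a plain object cannot slip through in one place.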
What We Learned

- Amazon Nova's tool-calling works best with MANDATORY-style prompt phrasing (physical obligation vs. soft preference) — this dramatically increases tool-call reliability

- Structured output with Zod schemas is the most reliable way to get consistent JSON from Nova 2 Lite across hundreds of pipeline runs

- Nova Multimodal Embeddings produce high-quality 1024-dim vectors that work well for cross-modal retrieval (text queries matching table and image content from PDFs)

- Nova Sonic's bidirectional streaming protocol requires careful lifecycle management — AbortController, double-stop guards, and async producer-consumer queues are essential for clean teardown
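The lifecycle pieces named in the last point (an async producer-consumer queue, an AbortController, and a double-stop guard) can be combined in a small playback wrapper. This is a sketch under assumptions rather than the project's implementation: `FrameQueue` and `createPlayback` are hypothetical names, and the injected `captureFrame` function stands in for the audio source call that resolves once a frame has been accepted.

```javascript
// Async queue: the producer pushes audio frames, the consumer awaits them one at a time.
class FrameQueue {
  constructor() {
    this.items = [];
    this.waiters = [];
    this.closed = false;
  }
  push(frame) {
    if (this.closed) return; // frames arriving after teardown are ignored
    const waiter = this.waiters.shift();
    if (waiter) waiter({ value: frame, done: false });
    else this.items.push(frame);
  }
  close() {
    this.closed = true;
    for (const waiter of this.waiters.splice(0)) waiter({ value: undefined, done: true });
  }
  next() {
    if (this.items.length > 0) return Promise.resolve({ value: this.items.shift(), done: false });
    if (this.closed) return Promise.resolve({ value: undefined, done: true });
    return new Promise((resolve) => this.waiters.push(resolve));
  }
}

function createPlayback(captureFrame) {
  const queue = new FrameQueue();
  const controller = new AbortController();
  let stopped = false;

  // Consumer loop: awaiting captureFrame paces playback one frame at a time.
  const finished = (async () => {
    while (!controller.signal.aborted) {
      const { value, done } = await queue.next();
      if (done || controller.signal.aborted) break;
      await captureFrame(value);
    }
  })();

  return {
    enqueue: (frame) => queue.push(frame),
    // Double-stop guard: a second call is a no-op instead of tearing down twice.
    stop() {
      if (stopped) return false;
      stopped = true;
      controller.abort();
      queue.close();
      return true;
    },
    finished,
  };
}
```

Awaiting `finished` after `stop()` gives the caller a single point at which it is safe to release the underlying audio resources.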

Built With

- amazon-bedrock

- amazon-nova-2-lite

- amazon-nova-micro

- amazon-nova-multimodal-embeddings

- amazon-nova-reel

- amazon-nova-sonic

- deepgram

- express.js

- framer-motion

- javascript

- langchain

- livekit

- node.js

- owlv2

- pdfplumber

- pgvector

- pollinations-ai

- postgresql

- pymupdf

- python

- react

- react-three-fiber

- sharp

- supabase

- three.js

- transformers.js

- vite

- zod

- zustand
