Aura: AI Creative Director for Small E-Commerce

Inspiration

We have a small jewelry business in the Philippines. Not at Cartier scale — small batch, handmade, sold through Facebook and an online store. And every time we needed product photos for a campaign, we hit the same wall.

Professional photoshoots? $500-2000 per session. A creative director who actually understands brand positioning? Not even in the budget conversation. So I'd end up with product-on-white shots, maybe a marble slab if I was feeling fancy. Meanwhile, the brands I'm competing against for attention have editorial spreads with mood lighting and styled props that make people stop scrolling.

I had already tried the AI tools: background removers, "AI photoshoot" apps. They either gave me a generic studio swap or regenerated my product entirely, until it no longer looked like my ring. The whole point is to sell THIS product, not a hallucinated version of it.

So I built what I actually needed: an AI creative director that thinks before it generates. One that looks at my product, understands what makes it special, plans a whole visual concept around it, debates itself on whether the plan is actually good — and only THEN creates the image. Not a filter. Not a background swap. A creative team.

What it does

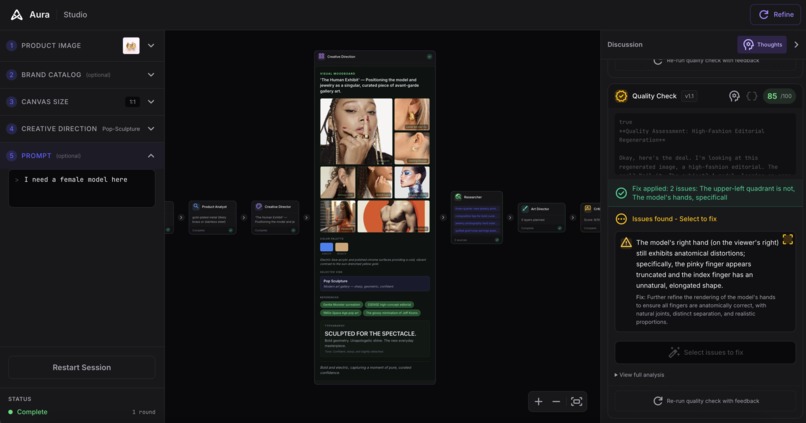

You upload a product photo. You pick a vibe — quiet luxury, gilded noir, pop sculpture, ethereal, organic modern, or high voltage. And then you watch a team of AI agents have a real creative discussion about your product.

The Product Analyst studies your piece — materials, visual qualities, what makes it photograph well. The Creative Director develops a concept ("Architectural Gold — treating the jewelry as a modern sculpture in a silent white gallery"). The Art Director translates that into a technical plan — camera angle, lighting direction, specific props, color palette. The Composition Critic reviews the plan, scores it, and sends it back for revision if it's not good enough.

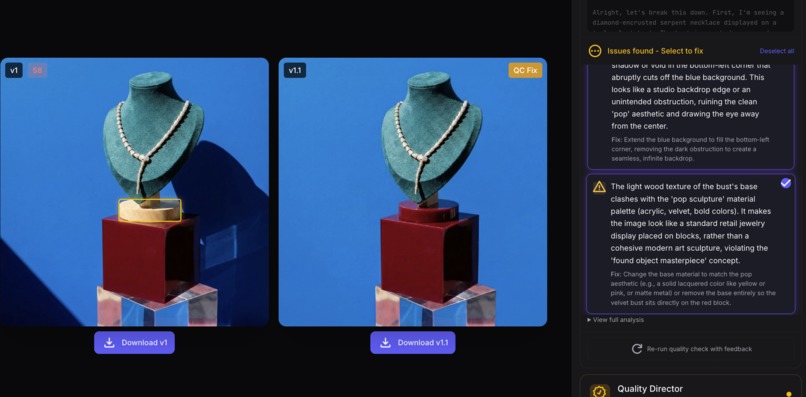

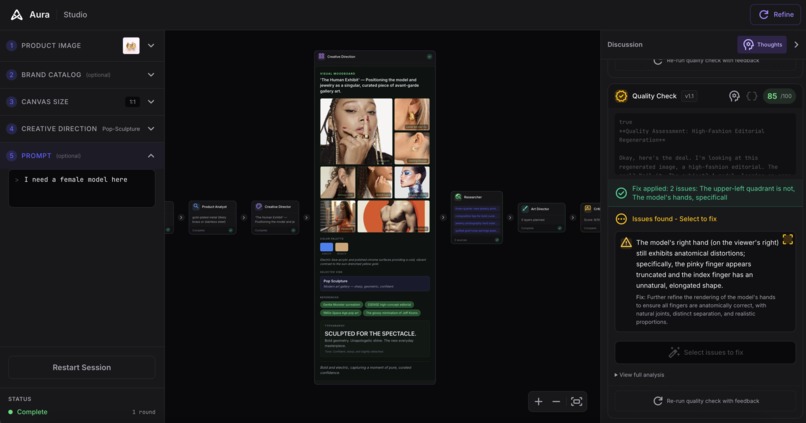

Only after the plan passes quality review does Aura generate the image. And then a Quality Director evaluates the output against what was planned — not against arbitrary rules, but against the creative team's actual intent.

Have a brand? Upload your catalog images, brand colors, and identity — the Brand Analyst extracts your visual DNA and every agent respects it. Your generated ads look like YOUR brand, not generic AI output.

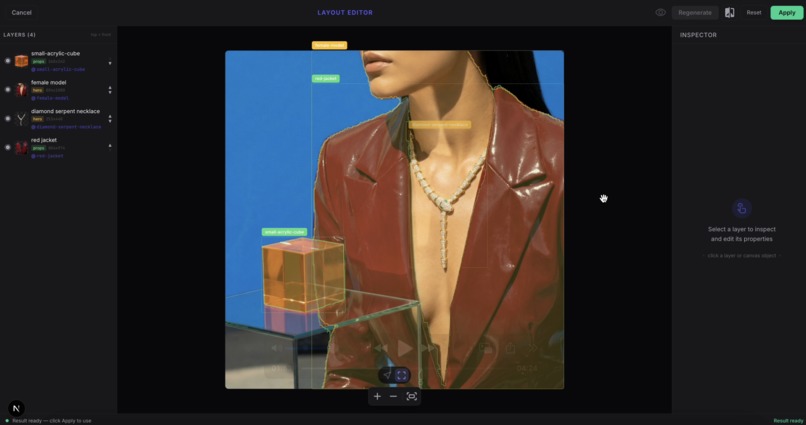

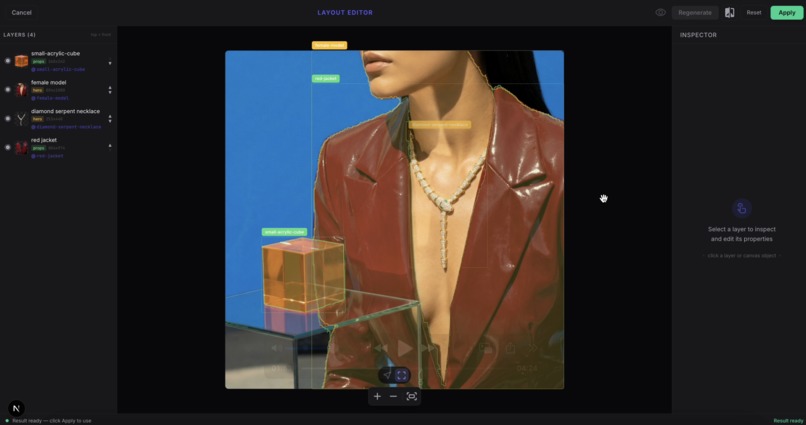

The result is a professional product photograph with editable layers. You can explode it into individual layers (background, surface, product, props), relight the scene, rearrange elements for different social formats, and run quality checks. Everything the big brands do in post-production, but automated.

How we built it

The Roundtable — 12 AI Agents That Debate

This isn't one model with one prompt. It's a collaborative system where specialized agents pass structured outputs to each other, with actual feedback loops:

- Product Analyst — Factual analysis of the product (materials, colors, features, suggested angles)

- Brand Analyst — Extracts visual DNA from brand catalog if provided

- Creative Director — Sets the vision: concept, emotion, why it works. Uses extended thinking (HIGH) for deeper creative reasoning

- Moodboard Curator — Searches the web for real reference imagery matching the creative direction

- Researcher — Validates the approach using Google Search grounding

- Art Director — Translates the vision into technical specs: camera, lighting, layers, master prompt. Also uses extended thinking

- Composition Critic — Scores the plan 1-10 and can REJECT it back to the Art Director for revision (up to 3 rounds)

- Prompt Consolidator — Merges critic suggestions into the final prompts

- Quality Director — Evaluates generated output against the creative plan

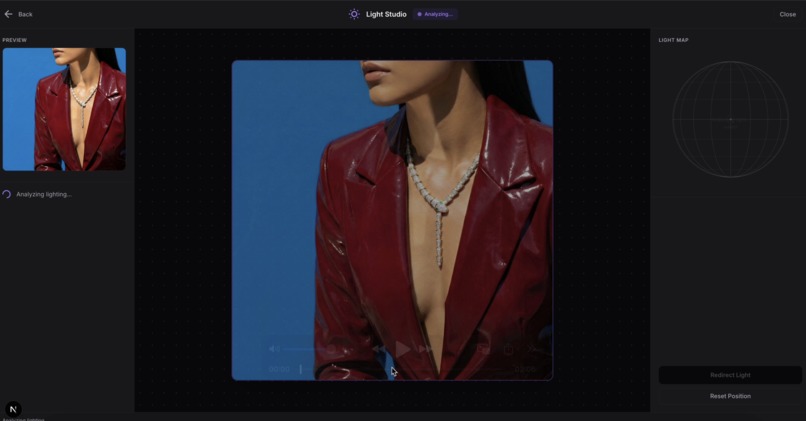

- Light Analyzer + Light Redirect — Physics-based relighting with material-aware rules

- Object Repositioner — Precise object movement with shadow/reflection consistency

- Region Generator — Add elements to specific areas of existing images

Each agent is defined in a YAML file with its own system prompt, model config, thinking level, and structured output schema. A generic agent runner loads any YAML agent and executes it with Gemini function calling.
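
As a rough illustration of that setup, here is a minimal sketch of what a parsed agent definition and a generic runner could look like. The field names, the `runAgent` helper, and the injected `callModel` function are all assumptions made for this example, not Aura's actual code. The model call is passed in as a plain synchronous function so the sketch stays independent of any SDK; a real call would be asynchronous.

```typescript
// Hypothetical shape of one parsed agent YAML file (field names are assumptions).
type ThinkingLevel = "LOW" | "HIGH";

interface AgentDefinition {
  name: string;
  model: string;
  thinkingLevel: ThinkingLevel;
  systemPrompt: string;
  // Minimal stand-in for a structured output schema: the keys the agent must return.
  outputSchema: { required: string[] };
}

// Injected model call (hypothetical). It receives the agent config and returns raw JSON text.
type ModelCall = (request: {
  model: string;
  thinkingLevel: ThinkingLevel;
  systemPrompt: string;
  input: string;
}) => string;

// Generic runner: works for any agent definition, then validates the structured output.
function runAgent(
  agent: AgentDefinition,
  input: unknown,
  callModel: ModelCall,
): Record<string, unknown> {
  const raw = callModel({
    model: agent.model,
    thinkingLevel: agent.thinkingLevel,
    systemPrompt: agent.systemPrompt,
    input: JSON.stringify(input),
  });
  const parsed = JSON.parse(raw) as Record<string, unknown>;
  for (const key of agent.outputSchema.required) {
    if (!(key in parsed)) {
      throw new Error(`${agent.name}: missing required field "${key}"`);
    }
  }
  return parsed;
}
```

Because every agent returns validated, structured output, the next agent in the chain can consume it directly instead of re-parsing free text.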

The key innovation: agents debate before expensive image generation happens. The Critic can reject the Art Director's plan and send it back. This catches bad compositions, physics errors, and off-brand choices BEFORE wasting a generation call.
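
The reject-and-revise loop can be sketched roughly as follows. This is an illustration only: the `Plan` and `Review` shapes and the `debatePlan` helper are hypothetical, and it assumes "up to 3 rounds" means at most three critic reviews in total.

```typescript
// Hypothetical shapes for the Art Director's plan and the Critic's review.
interface Plan {
  prompt: string;
  revision: number;
}

interface Review {
  score: number; // 1-10, as described above
  approved: boolean;
  notes: string[];
}

// Propose, critique, and revise until the plan is approved or the rounds run out.
function debatePlan(
  propose: (feedback: string[], revision: number) => Plan,
  critique: (plan: Plan) => Review,
  maxRounds = 3,
): { plan: Plan; review: Review; rounds: number } {
  let plan = propose([], 0);
  let review = critique(plan);
  let rounds = 1;
  while (!review.approved && rounds < maxRounds) {
    // Feed the critic's notes back into the next proposal.
    plan = propose(review.notes, rounds);
    review = critique(plan);
    rounds++;
  }
  return { plan, review, rounds };
}
```

Only the plan that comes out of this loop would go on to image generation, which is where the expensive call happens.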

Tech Stack

- Next.js 16 + React 19 + TypeScript (strict) + Tailwind + daisyUI

- Google Gemini 3: Flash Preview for vision/analysis and Pro for image generation, plus Gemini 2.5 Flash for segmentation (only model with PNG masks)

- @google/genai SDK (the new SDK, not the old @google/generative-ai)

- Three.js + React Three Fiber for 3D layer explosion view

- Sharp for server-side image processing

- Framer Motion for UI animations

- SSE streaming for real-time agent discussion updates

- Deployed on Google Cloud Run + Firebase Hosting via Cloud Build
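
For the SSE streaming item in the stack above, a minimal sketch of how one agent message could be framed for the wire. The `event:` / `data:` lines followed by a blank line are the standard server-sent events format; the `AgentMessage` shape and the `agent_message` event name are assumptions for illustration.

```typescript
// Hypothetical payload for one line of the agent discussion.
interface AgentMessage {
  agent: string;
  text: string;
}

// Frame a message as a single server-sent event.
// JSON.stringify escapes newlines, so the payload always fits on one data line.
function formatSseEvent(event: string, data: AgentMessage): string {
  return `event: ${event}\ndata: ${JSON.stringify(data)}\n\n`;
}
```

On the server, strings like this would typically be written to a response with `Content-Type: text/event-stream`, and the browser can read them with `EventSource`.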

6 Vibes — Deep Commercial Archetypes

Each vibe isn't just a color filter. It's a comprehensive creative specification covering lighting styles, surface materials, color palettes, camera angles, interaction physics, signature elements, hard boundaries, and reference brands:

- Quiet Luxury — "Expensive Nothingness" (The Row, COS, Polene)

- Gilded Noir — "Whispers in the dark" (Tom Ford, Cartier, YSL)

- Pop Sculpture — "Modern art gallery" (Swatch, Gentle Monster)

- Ethereal — "Floating in the sky" (Glossier, Dior Beauty)

- Organic Modern — "Expensive dirt" (Loewe, Jacquemus)

- High Voltage — "Paparazzi flash" (Balenciaga, Off-White)
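
One way to picture a vibe as data rather than a filter is the sketch below. The type and field names are hypothetical; only the taglines and reference brands come from the list above, and the detailed fields are deliberately left unfilled because their real contents are not shown here.

```typescript
type VibeId =
  | "quiet-luxury"
  | "gilded-noir"
  | "pop-sculpture"
  | "ethereal"
  | "organic-modern"
  | "high-voltage";

interface VibeSummary {
  tagline: string;
  referenceBrands: string[];
}

// The remaining parts of a vibe specification, named after the categories listed above.
interface VibeDetails {
  lightingStyles: string[];
  surfaceMaterials: string[];
  colorPalettes: string[];
  cameraAngles: string[];
  interactionPhysics: string[];
  signatureElements: string[];
  hardBoundaries: string[];
}

type Vibe = VibeSummary & Partial<VibeDetails>;

const VIBES: Record<VibeId, Vibe> = {
  "quiet-luxury": { tagline: "Expensive Nothingness", referenceBrands: ["The Row", "COS", "Polene"] },
  "gilded-noir": { tagline: "Whispers in the dark", referenceBrands: ["Tom Ford", "Cartier", "YSL"] },
  "pop-sculpture": { tagline: "Modern art gallery", referenceBrands: ["Swatch", "Gentle Monster"] },
  "ethereal": { tagline: "Floating in the sky", referenceBrands: ["Glossier", "Dior Beauty"] },
  "organic-modern": { tagline: "Expensive dirt", referenceBrands: ["Loewe", "Jacquemus"] },
  "high-voltage": { tagline: "Paparazzi flash", referenceBrands: ["Balenciaga", "Off-White"] },
};

// Build a short header that an agent prompt could start with (hypothetical helper).
function vibePromptHeader(id: VibeId): string {
  const vibe = VIBES[id];
  return `Vibe: ${id} ("${vibe.tagline}"). Reference brands: ${vibe.referenceBrands.join(", ")}.`;
}
```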

Challenges we ran into

Getting AI to Actually Think Before Generating

The first version was linear: analyze product, generate image, done. The results were hit-or-miss: sometimes brilliant, sometimes a ring floating in space next to random acrylic blocks with no conceptual connection.

The breakthrough was making agents argue with each other. When the Art Director proposes a plan and the Composition Critic says "why are there geometric blocks next to a romantic gold bracelet? That's visual noise, score 5/10" — the revision is always better. The debate IS the quality control.

The Floating Ring Problem

Gemini's vision model would flag products as "floating" even when they were sitting on a marble slab with a visible shadow. The Quality Director had hardcoded rules like "FLOATING PRODUCT = automatic score cap at 40" — so once triggered, the image could never pass QC, and the auto-fix loop would keep trying to fix a non-issue.

The fix was fundamental: stop hardcoding rules. The QC now evaluates against the Creative Director's actual intent. If the plan says "product resting on marble" and there's a contact shadow, that's not floating. If the plan says "ethereal levitation in clouds," floating IS the intent. Context matters more than rigid rules.
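
A minimal sketch of what "evaluate against intent" could look like in practice: the Quality Director's prompt is built from the plan itself instead of a fixed rule list. The `CreativePlan` fields, the `buildQcPrompt` helper, and the 0-100 scale are assumptions for illustration.

```typescript
// Hypothetical slice of the creative plan that quality control needs to see.
interface CreativePlan {
  concept: string;
  placement: string;
  lighting: string;
}

// Build an evaluation prompt from the plan, with no hardcoded deductions.
function buildQcPrompt(plan: CreativePlan): string {
  return [
    "You are the Quality Director. Judge the attached image against the plan below.",
    `Concept: ${plan.concept}`,
    `Intended product placement: ${plan.placement}`,
    `Intended lighting: ${plan.lighting}`,
    "Score 0-100 for how well the image realizes this intent.",
    "Only flag an issue if it contradicts the plan.",
  ].join("\n");
}
```

With this shape, the same image can be judged differently depending on the plan: a levitating product is a defect for "product resting on marble" and a success for "ethereal levitation in clouds".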

Product Retention

Generic AI image tools regenerate your product from scratch. Now your ring has different proportions, the gold tone shifted, the setting detail is wrong. That's useless for e-commerce — you need to sell THIS exact product.

Aura treats the uploaded product photo as sacred. The reference image is passed through the entire pipeline. The generation prompt explicitly references the product image. The Quality Director checks product retention — does the output still look like the same product someone would receive?

Gemini Rate Limits and Cost

With 12 agents making calls, costs add up. The single master image generation approach (instead of layer-by-layer) saves ~73% on image generation costs. Extended thinking is only enabled for agents that actually need deep reasoning (Creative Director, Art Director, Critic) — analysis agents use LOW thinking to stay fast and cheap.
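
The thinking-level split described above could be expressed as a small lookup like this. The agent identifiers and the helper name are hypothetical; only the HIGH/LOW split follows the text.

```typescript
type ThinkingLevel = "LOW" | "HIGH";

// Agents that get extended thinking; everything else stays on LOW to remain fast and cheap.
const DEEP_REASONERS: ReadonlySet<string> = new Set([
  "creative-director",
  "art-director",
  "composition-critic",
]);

function thinkingLevelFor(agentId: string): ThinkingLevel {
  return DEEP_REASONERS.has(agentId) ? "HIGH" : "LOW";
}
```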

Accomplishments that we're proud of

Agents that actually debate. You can watch the Creative Director pitch a concept, the Art Director plan the execution, and the Critic push back — in real time via SSE streaming. It feels like eavesdropping on a real creative meeting.

Quality control that checks intent, not rules. The QC evaluates "did this match what the creative team planned?" not "did this follow my hardcoded checklist." If the CD wanted floating, floating is correct.

Post-generation editing that actually works. Layer explosion (3D view of all layers), light studio (physics-based relighting), layout editor (drag/rotate layers for different formats). These aren't gimmicks — they're the tools professionals use, made accessible.

Product retention. The generated image shows YOUR product. Same shape, same color, same details. Not a hallucinated version.

A demo you can actually try. Visit https://aura-485413.web.app/studio and walk through a real captured session: see every agent's contribution, the moodboard, quality scores, and all post-generation tools. Zero API calls, fully interactive.

What we learned

AI reasoning matters more than AI generation. The quality of Aura's output comes from the PLANNING, not the image generation call. A well-reasoned prompt with clear creative intent produces better images than any amount of prompt engineering tricks.

Feedback loops are everything. A single pass through agents produces OK results. Letting the Critic reject and revise produces great results. The architecture of debate is what separates "AI tool" from "AI creative team."

Don't hardcode creative judgment. We spent weeks tuning point deductions and score caps. The model would follow them mechanically — "floating = 40 points, done." Holistic judgment with context works better than fake arithmetic.

Small businesses need control, not magic. The editable layers, the visible reasoning, the ability to override any decision — that's what makes Aura actually usable. A black box that sometimes produces great images isn't a tool you can build a brand on.

Gemini's multimodal capabilities are genuinely different. One model that can see a product, reason about creative strategy, search the web for references, and generate the final image — that eliminates entire categories of integration complexity.

What's next for Aura

Now

- Batch processing — upload 50 products, get 50 styled images. Same creative direction applied consistently across a catalog.

- One-click multi-format export from the layout editor — reframe one image for Instagram square, Stories, Facebook, Twitter without regenerating.
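
As a simplified illustration of reframing without regenerating, the sketch below computes a centered crop for a target aspect ratio. This is an assumption-heavy stand-in: the planned feature works from the layout editor's layers, while this example only crops a flat image. The `centerCrop` helper and `CropRect` type are hypothetical.

```typescript
interface CropRect {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Largest centered rectangle with the target aspect ratio (width / height) inside the source.
function centerCrop(srcWidth: number, srcHeight: number, targetRatio: number): CropRect {
  const srcRatio = srcWidth / srcHeight;
  if (srcRatio > targetRatio) {
    // Source is too wide: keep full height and trim the sides.
    const width = Math.round(srcHeight * targetRatio);
    return { x: Math.floor((srcWidth - width) / 2), y: 0, width, height: srcHeight };
  }
  // Source is too tall (or already matches): keep full width and trim top and bottom.
  const height = Math.round(srcWidth / targetRatio);
  return { x: 0, y: Math.floor((srcHeight - height) / 2), width: srcWidth, height };
}

// Two common targets: a 1:1 square and a 9:16 vertical story.
const square = centerCrop(1600, 1200, 1);
const story = centerCrop(1600, 1200, 9 / 16);
```

A rectangle like this maps directly onto Sharp's `extract({ left, top, width, height })` option for the flat-image case.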

Soon

- Video ad generation — animated layers, subtle motion, Ken Burns on the background

- Shopify/Etsy integration — sync product catalog, generate and publish directly

- BYOK (Bring Your Own Key) access — use your own Gemini API key with Aura's agent system

The Vision

Every small business owner I know has products they're proud of. What they don't have is $2000 for a photoshoot, a creative director who gets their brand, or the Photoshop skills to make it all come together.

Aura is the creative team they deserve. Not someday — now.

Built With

- gemini-api

- google-cloud

- next.js

- node.js

- react

- sharp

- tailwindcss

- typescript
