Aura – AI Navigation Assistant built to empower visually impaired individuals through real-time multimodal intelligence.

Aura’s core solution: an empathetic AI companion providing human-like guidance, awareness, and independence for users.

Key features of Aura, including intelligent navigation, safety alerts, conversational assistance, and personalized support.

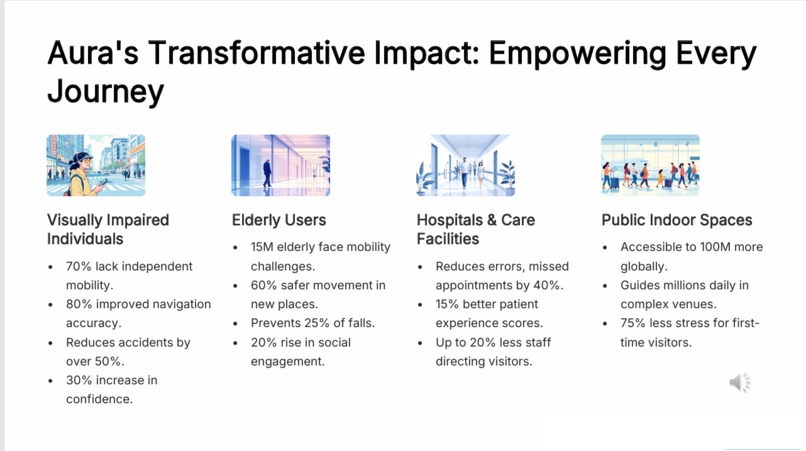

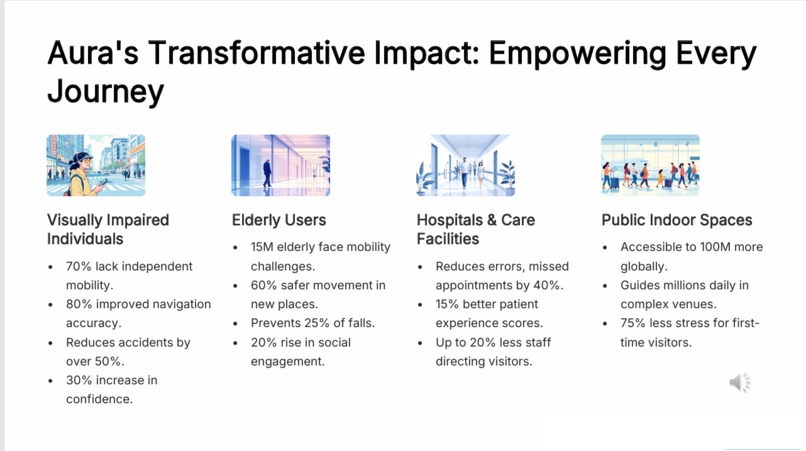

Aura’s measurable impact across users, caregivers, and public spaces, improving safety, confidence, and accessibility.

Inspiration

Millions of visually impaired individuals struggle daily with mobility, independence, and safety. Existing tools often overwhelm users with noise, confusing instructions, or slow responses. I wanted to create something that feels more human: an AI companion that guides, protects, and understands. Aura was born from that mission: to give independence back to people who navigate the world without sight.

What It Does

Aura is an AI-powered navigation assistant that uses the Gemini 3 multimodal API to provide real-time scene understanding, hazard detection, and conversational guidance for visually impaired users. With a smartphone or wearable camera, Aura can describe surroundings, detect obstacles, recognize traffic signals, and guide users safely with instant voice feedback. Users can ask natural questions like:

- “What’s ahead of me?”
- “Is it safe to cross?”
- “Which way should I go?”

Aura responds with situationally accurate, empathetic guidance, the way a caring companion would.
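A question like the ones above has to reach Gemini together with the current camera frame. The writeup does not say which client Aura uses, so the following is only a sketch, assuming the public Gemini REST `generateContent` endpoint is called directly: the frame is sent inline as base64 next to the user's question. The function name `build_generate_content_body` and the wording of `SYSTEM_PROMPT` are hypothetical.

```python
import base64
import json

# Hypothetical system instruction reflecting the concise, empathetic style
# described above; Aura's real prompt is not published.
SYSTEM_PROMPT = (
    "You are Aura, a navigation companion for a blind or low-vision user. "
    "Answer in one or two short spoken sentences and mention hazards first."
)


def build_generate_content_body(jpeg_bytes: bytes, question: str) -> dict:
    """Build a JSON body for the Gemini REST generateContent endpoint.

    The camera frame is attached as inline base64 image data, followed by
    the user's transcribed question as a text part.
    """
    return {
        "system_instruction": {"parts": [{"text": SYSTEM_PROMPT}]},
        "contents": [
            {
                "role": "user",
                "parts": [
                    {
                        "inline_data": {
                            "mime_type": "image/jpeg",
                            "data": base64.b64encode(jpeg_bytes).decode("ascii"),
                        }
                    },
                    {"text": question},
                ],
            }
        ],
    }


if __name__ == "__main__":
    fake_frame = b"\xff\xd8\xff\xe0 placeholder"  # stand-in for a real JPEG frame
    body = build_generate_content_body(fake_frame, "Is it safe to cross?")
    print(json.dumps(body, indent=2))
```

The body would then be POSTed to `https://generativelanguage.googleapis.com/v1beta/models/<model-id>:generateContent` with the API key in an `x-goog-api-key` header; `<model-id>` is left as a placeholder because the writeup does not name the exact Gemini 3 model.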

How We Built It

Live camera input is streamed into the application. Frames are sent to Gemini 3 Vision for object detection, risk assessment, and contextual reasoning, and the resulting text responses are converted into natural spoken guidance with text-to-speech. Safety-critical events (approaching vehicles, drop-offs, obstacles) are prioritized using Gemini's low-latency reasoning. Multilingual support and personalization are layered on top.
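The prioritization step can be illustrated with a small priority queue placed in front of the text-to-speech engine, so that a hazard warning is spoken before any routine description still waiting in line. This is a hypothetical sketch, not Aura's code: the `SpeechQueue` class, the category names, and their priority values are assumptions chosen to mirror the safety-critical examples above, and turning Gemini's response into a category is assumed rather than shown.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical categories and priorities (lower number = spoken sooner).
PRIORITY = {"vehicle": 0, "drop_off": 0, "obstacle": 1, "signal": 2, "description": 3}


@dataclass(order=True)
class Utterance:
    priority: int
    seq: int  # arrival counter: keeps FIFO order within one priority level
    text: str = field(compare=False)


class SpeechQueue:
    """Hypothetical queue that hands the TTS engine the most urgent message first."""

    def __init__(self) -> None:
        self._heap: List[Utterance] = []
        self._counter = itertools.count()

    def push(self, kind: str, text: str) -> None:
        # Unknown categories fall back to the routine-description priority.
        priority = PRIORITY.get(kind, PRIORITY["description"])
        heapq.heappush(self._heap, Utterance(priority, next(self._counter), text))

    def pop_next(self) -> Optional[str]:
        # Returns None when there is nothing left to say.
        if not self._heap:
            return None
        return heapq.heappop(self._heap).text


if __name__ == "__main__":
    q = SpeechQueue()
    q.push("description", "A bench is on your left.")
    q.push("obstacle", "Low branch ahead.")
    q.push("vehicle", "Stop. A car is approaching from the right.")
    while (msg := q.pop_next()) is not None:
        print(msg)  # vehicle warning first, then obstacle, then description
```

Using an arrival counter as the tie-breaker keeps two alerts of equal urgency in the order they were detected.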

Challenges We Ran Into

- Achieving real-time performance with camera frames.
- Making guidance simple, natural, and not overwhelming.
- Stabilizing scene descriptions for moving users.
- Ensuring safety alerts were fast enough to be useful.
- Designing an intuitive UX for visually impaired users.
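Two of these challenges, stabilizing descriptions while the user moves and not overwhelming them with repeats, are commonly handled with a debounce step between per-frame detections and speech. The sketch below illustrates that general technique under stated assumptions rather than Aura's actual method: the `AnnouncementFilter` name, the k-of-n voting rule, and the default window and cooldown values are all hypothetical.

```python
import time
from collections import deque
from typing import Deque, Dict, Iterable, List, Optional, Set


class AnnouncementFilter:
    """Hypothetical debounce filter for per-frame detection labels.

    A label is announced only if it appears in at least `min_hits` of the
    last `window` frames (suppresses flicker while the camera moves) and has
    not been announced within the last `cooldown_s` seconds (avoids repeats).
    """

    def __init__(self, window: int = 5, min_hits: int = 3, cooldown_s: float = 10.0) -> None:
        self.min_hits = min_hits
        self.cooldown_s = cooldown_s
        self._history: Deque[Set[str]] = deque(maxlen=window)  # oldest frame drops off
        self._last_spoken: Dict[str, float] = {}

    def update(self, labels: Iterable[str], now: Optional[float] = None) -> List[str]:
        """Record one frame's labels and return those that should be spoken now."""
        now = time.monotonic() if now is None else now
        self._history.append(set(labels))
        to_speak: List[str] = []
        for label in sorted(set().union(*self._history)):
            hits = sum(label in frame for frame in self._history)
            last = self._last_spoken.get(label, float("-inf"))
            if hits >= self.min_hits and now - last >= self.cooldown_s:
                self._last_spoken[label] = now
                to_speak.append(label)
        return to_speak


if __name__ == "__main__":
    f = AnnouncementFilter(window=3, min_hits=2, cooldown_s=5.0)
    for t, labels in enumerate([["person"], ["person", "car"], ["person", "car"]]):
        print(t, f.update(labels, now=float(t)))
```

Raising `min_hits` trades responsiveness for stability, so a real system would likely route safety-critical labels around this filter (or give them a shorter window) so that urgent alerts are never delayed.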

Accomplishments I'm Proud Of

- Built end-to-end multimodal navigation using Gemini 3.
- Achieved smooth real-time hazard detection.
- Created a friendly, companion-like interaction style.
- Designed an app that is practical, accessible, and meaningful.

What We Learned

- Multimodal AI requires careful optimization for real-time use.
- Users need concise, empathetic feedback, not technical jargon.
- Accessibility design must remove friction at every step.
- Gemini 3’s reasoning is powerful for anticipating risks before they materialize.

What’s Next for Aura

- Offline model support.
- Vibration-based direction feedback for noisy environments.
- Integration with smart glasses.
- Partnering with accessibility organizations.

Built With

- api

- fastapi

- flask

- gemini3

- googleaistudio

- html/css

- javascript

- opencv

- python

- speech-to-text

- text-to-speech

- tts
