
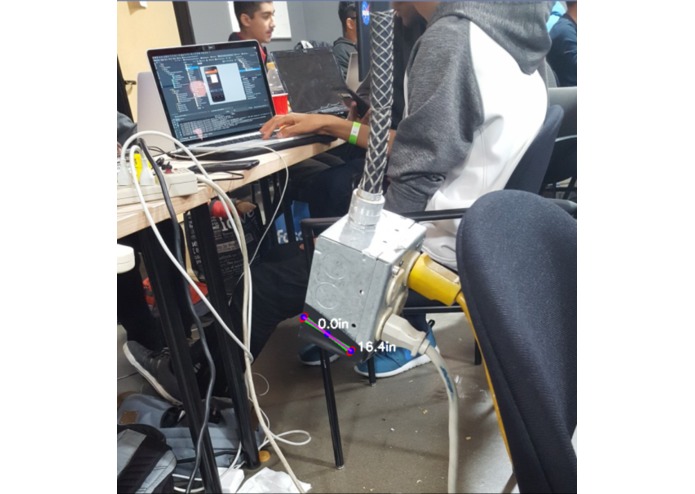

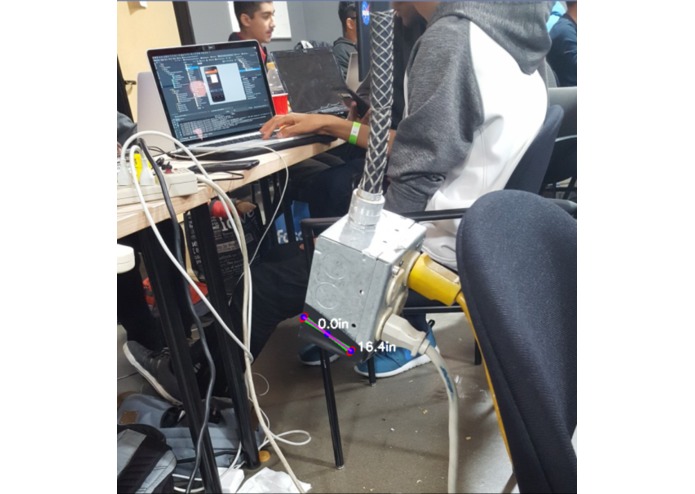

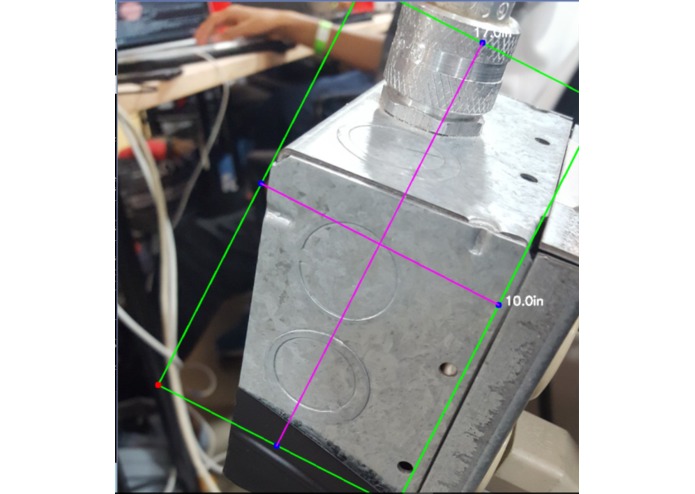

A blind person approaches a potential obstacle.


The obstacle is flagged due to its rapid size growth in the viewport, and the app sends a warning.


Minimalistic UI that features a webcam-type viewport.


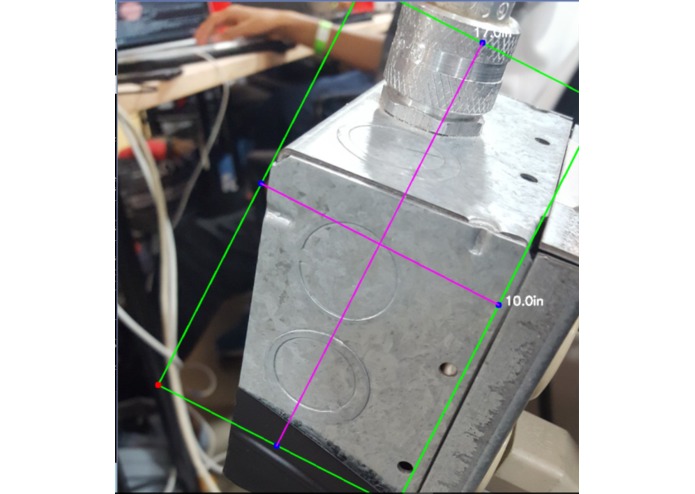

Image AI that picks out the rough features of the image so that differences can be detected in the Android client.


Original image that was processed. Note the "Doritos" logo, which was transferred.

Inspiration

What it does

Instead of walking with a cane or another aid, visually impaired people walk with their phone running Aspicio. The lightweight app, built with Angular, continuously takes pictures and sends them to a Python-based server for analysis. Using powerful GPUs in the cloud, the server processes each image almost instantly and sends the result back to the phone, which gives vibration and sound feedback if an obstacle is detected.

How we built it

We used the Angular framework for the client application, which connects to a PHP backend running on a local Apache server. This backend processes the image using Python scripts built on the OpenCV vision library and returns the size of the object.
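As a rough illustration of the "size of the object" that the backend returns, the sketch below measures the largest connected foreground region in a binary mask as a fraction of the frame. It is plain Python standing in for the OpenCV step, not the project's actual script; the function name `largest_region_fraction` and the nested-list mask format are assumptions made for this example.

```python
from collections import deque


def largest_region_fraction(mask):
    """Return the area of the largest 4-connected foreground region
    as a fraction of the whole frame (0.0 to 1.0).

    `mask` is a hypothetical input format: a list of rows, where a
    truthy cell marks a foreground (object) pixel.
    """
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    if rows == 0 or cols == 0:
        return 0.0

    seen = [[False] * cols for _ in range(rows)]
    best = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill over one connected region.
            seen[r][c] = True
            queue = deque([(r, c)])
            size = 0
            while queue:
                y, x = queue.popleft()
                size += 1
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            best = max(best, size)
    return best / (rows * cols)
```

Returning a fraction rather than a raw pixel count keeps the value comparable across frames of different resolutions, which matters if the client later compares sizes between frames.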

Challenges we ran into

We had to revise our original idea of real-time video processing because the video took too long to process. Instead, we built an app that processes still frames captured from the video stream, leaving time for backend processing while keeping latency low enough to give effective warnings.
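One way to picture the still-frame approach is a small throttle that lets the client upload a new frame only when the previous request has finished and a minimum interval has passed. The sketch below shows that logic in Python for illustration only; the real client is an Angular app, and the class name `FrameThrottle` and the 0.5-second default are hypothetical.

```python
class FrameThrottle:
    """Decide when the client may upload the next still frame."""

    def __init__(self, min_interval_s=0.5):
        # Hypothetical minimum spacing between uploads, in seconds.
        self.min_interval_s = min_interval_s
        self.last_sent_at = None
        self.in_flight = False

    def should_send(self, now_s):
        """Return True if no request is pending and enough time has passed."""
        if self.in_flight:
            return False
        if self.last_sent_at is None:
            return True
        return now_s - self.last_sent_at >= self.min_interval_s

    def mark_sent(self, now_s):
        """Record that a frame was uploaded at time now_s."""
        self.last_sent_at = now_s
        self.in_flight = True

    def mark_done(self):
        """Record that the backend has answered the pending request."""
        self.in_flight = False
```

Skipping frames while a request is in flight keeps requests from piling up when the backend is slow, so each warning reflects a recent frame rather than a stale one.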

Accomplishments that we're proud of

We were able to detect the relative sizes of objects across frames and to use intelligent image detection to find the rough boundaries of the most important objects in each frame.
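Comparing relative sizes across frames can be sketched as a simple growth-ratio check: if an object's size jumps sharply from one frame to the next, it is probably getting closer. The function name `is_approaching` and the 1.5x threshold are assumptions chosen for illustration, not values from the project.

```python
def is_approaching(sizes, growth_threshold=1.5):
    """Return True if the object's size grew by at least
    `growth_threshold` times between any two consecutive frames.

    `sizes` is a sequence of per-frame object sizes, oldest first
    (for example, fractions of the frame area).
    """
    for previous, current in zip(sizes, sizes[1:]):
        # Skip frames where nothing was detected to avoid dividing by zero.
        if previous > 0 and current / previous >= growth_threshold:
            return True
    return False
```

For example, `is_approaching([0.10, 0.11, 0.20])` returns `True` because the last step nearly doubles the size, while a slow drift such as `[0.10, 0.11, 0.12]` does not trigger a warning.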

What we learned

We learned a ton about the connection between the backend and the frontend. We also learned about computer vision and the role math plays in it, particularly in edge detection.

What's next for Aspicio

We will continue to optimize the backend so it processes images more quickly, and we plan to enable user communication through speech.
