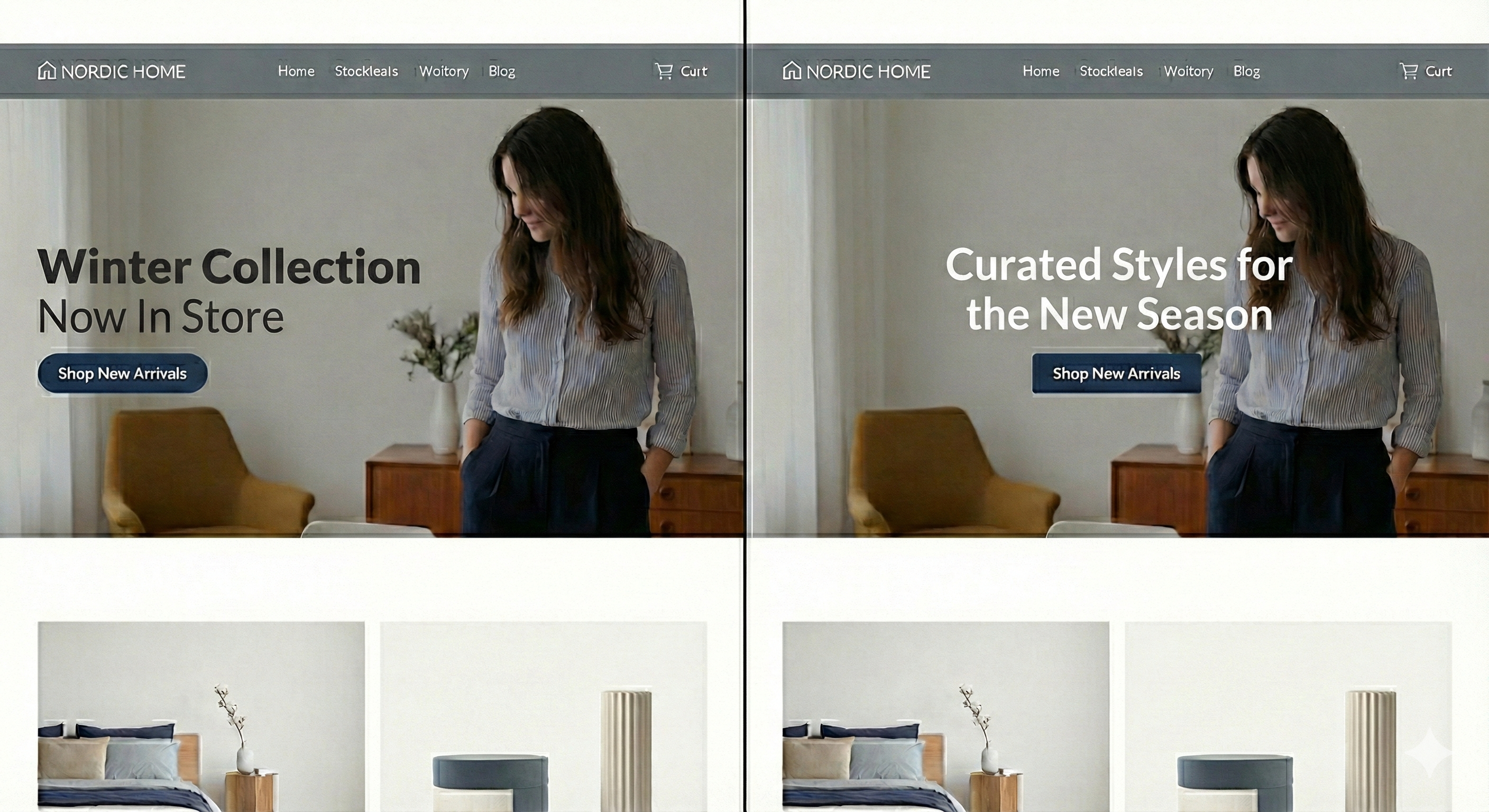

The two UIs in the image above differ in a few ways. How do we know which one is better?

Let’s examine how big tech companies address this problem. They continuously track user behavior, such as clicks, scroll depth, and time spent on different sections of a website, to understand how users interact with their UI. Product, design, and growth teams analyze this data and propose interface changes, such as adjusting button placement, layout, text, or visual hierarchy. An engineer then implements these changes, tests them, and redeploys the application. The loop has to repeat frequently, and each pass is slow and expensive, which makes continuous UI optimization difficult for small and fast-moving businesses.

Two main challenges with this approach are:

Significant and frequent UI changes often demand remote UI rendering. Implementing this requires a very strong engineering team and a large budget, something most small businesses cannot afford. The problem is sharpest on mobile clients, where, without remote rendering, users must update the app from the App Store to receive UI changes.

Collecting and analyzing user data, which in the loop described above takes dedicated product, design, and growth teams.

We built an AI agent that can handle this entire cycle autonomously and continuously to improve the UI of any application.

Our agent divides an application’s users into multiple groups and serves each group a UI with minor variations, such as a rephrased label or a repositioned text block or button (as you see in the image). It then collects analytics data such as button clicks and time spent in particular sections. Based on this data, it determines which variations perform better by a statistically significant margin and gradually moves the overall UI of the application in that direction.

This cycle can run 24 hours a day, entirely on its own (with guardrails in place to prevent it from changing things it shouldn’t).

The two main challenges we solved to make this possible are:

Remote UI rendering for all: Much of the heavy lifting here was done using Vercel's JSON rendering library. However, we believe that building a custom renderer from scratch for a specific framework would prove even more fruitful (thoughts?). What to render and how to propose meaningful changes are handled by our AI agent.
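The idea behind remote rendering can be sketched in a few lines: the server sends a JSON description of the UI, and the client turns it into real elements, refusing anything outside a fixed catalog. The sketch below is in Python only to keep it short, and it does not reproduce the API of Vercel's library. The spec shape (`type`, `props`, `children`) and the `ALLOWED_COMPONENTS` catalog are hypothetical.

```python
import html
import json

# Hypothetical catalog: the only component types and props the client will render.
ALLOWED_COMPONENTS = {
    "stack": {"direction"},
    "text": {"content"},
    "button": {"label", "action"},
}


def render(node: dict) -> str:
    """Render one spec node and its children to an HTML string."""
    kind = node.get("type")
    if kind not in ALLOWED_COMPONENTS:
        raise ValueError(f"component not in catalog: {kind!r}")
    props = node.get("props", {})
    unknown = set(props) - ALLOWED_COMPONENTS[kind]
    if unknown:
        raise ValueError(f"unsupported props for {kind}: {sorted(unknown)}")
    if kind == "text":
        return f"<p>{html.escape(str(props.get('content', '')))}</p>"
    if kind == "button":
        action = html.escape(str(props.get("action", "")), quote=True)
        label = html.escape(str(props.get("label", "")))
        return f'<button data-action="{action}">{label}</button>'
    # Remaining type is "stack": a container laying out children in a row or column.
    direction = "row" if props.get("direction") == "row" else "column"
    children = "".join(render(child) for child in node.get("children", []))
    return f'<div style="display:flex;flex-direction:{direction}">{children}</div>'


# Example spec as it might arrive from the server (illustrative content).
SPEC = json.loads("""
{"type": "stack", "props": {"direction": "column"}, "children": [
  {"type": "text", "props": {"content": "Start your free trial"}},
  {"type": "button", "props": {"label": "Sign up", "action": "signup"}}
]}
""")

print(render(SPEC))
```

Because the UI is data, moving the button above the text or rewording its label is a change to the JSON, not a redeploy. The catalog check is one simple form of guardrail: a proposed spec that uses an unknown component or prop is rejected before it reaches users.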

Analytics: After changing the UI, how do we still collect useful data? Can we only collect what was pre-programmed? Our framework can also deliver logic for collecting new types of analytics data remotely. For this, we used PostHog's analytics platform.
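One way to picture remotely delivered analytics logic is as declarative rules that ship alongside the UI spec, so a new variant can bring its own tracking. The following Python sketch is an assumption about how that could look, not a description of our framework or of PostHog's API: the rule fields (`event`, `on`, `target`, `min_seconds`) and `make_tracker` are hypothetical, and `send` stands in for whatever analytics client call is actually used.

```python
import json
from typing import Callable

# Hypothetical rule format, delivered from the server together with the UI spec.
REMOTE_RULES = json.loads("""
[
  {"event": "cta_clicked", "on": "click", "target": "signup"},
  {"event": "pricing_viewed", "on": "view", "target": "pricing", "min_seconds": 3}
]
""")


def make_tracker(rules: list[dict],
                 send: Callable[[str, dict], None]) -> Callable[[dict], int]:
    """Build a handler that turns raw UI interactions into analytics events."""

    def handle(interaction: dict) -> int:
        """Apply every matching rule; return how many events were sent."""
        sent = 0
        for rule in rules:
            if rule["on"] != interaction.get("kind"):
                continue
            if rule["target"] != interaction.get("target"):
                continue
            # Optional dwell-time threshold, e.g. only count views of 3+ seconds.
            if interaction.get("seconds", 0) < rule.get("min_seconds", 0):
                continue
            send(rule["event"], {
                "target": rule["target"],
                "variant": interaction.get("variant"),
            })
            sent += 1
        return sent

    return handle


# Usage: collect events in a list instead of calling a real analytics client.
events: list[tuple[str, dict]] = []
track = make_tracker(REMOTE_RULES, lambda name, props: events.append((name, props)))
track({"kind": "click", "target": "signup", "variant": "B"})
track({"kind": "view", "target": "pricing", "seconds": 1, "variant": "B"})
```

After these two calls, `events` holds a single `cta_clicked` event: the pricing view lasted only one second, below the rule's three-second threshold. Keeping the rules as data rather than shipped code means new event types can be added without an app update, and the client only ever evaluates a small, fixed set of conditions.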
