-

Initial state: the Kinect is not recording data and the user is making a fist.

-

The user opens the right hand in order to tell the drone where to go. The destination coordinate is calculated based on the elbow and wrist.

-

The user will reset the drone with a thumbs up to let it know that they are ready to send it somewhere else.

-

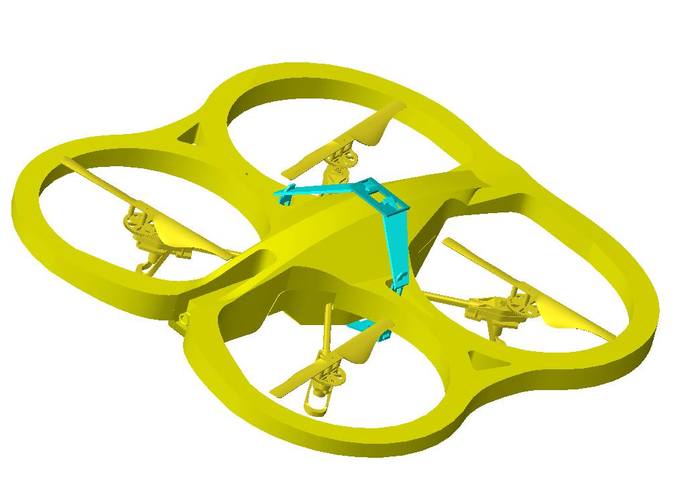

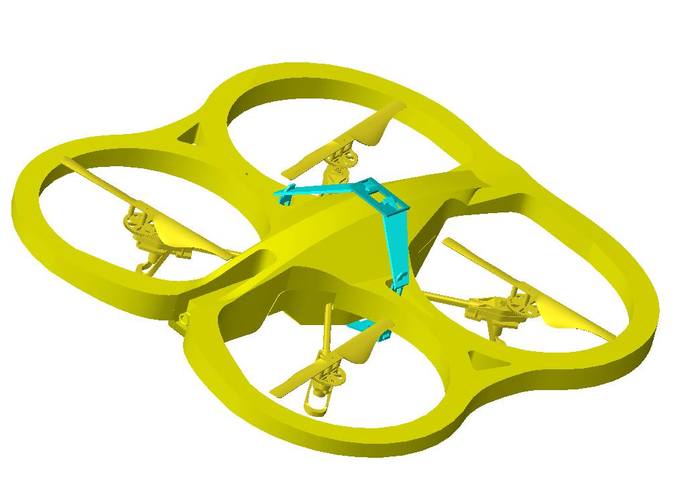

Carrying apparatus to be used to pick up items detected by the drone.

-

Drone with carrying apparatus.

Inspiration

The inspiration for ASAR started with the idea of making RC drones more intelligent. We thought about practical applications of drones and how their use cases could be improved. One significant flaw we found was in search and rescue: operators are expected to manually maneuver drones through unfamiliar terrain and natural disasters. This is extremely difficult for a human and can be simplified through intelligent automation.

What it does

In emergency situations where it is too dangerous for rescuers to reach a survivor, our drone can be deployed for a rescue mission. When the operator points to the scene of a disaster, such as a high-rise fire, hurricane, or humanitarian crisis, the drone flies to the specified location and begins searching for survivors. Using facial recognition, it tells emergency crews where survivors are located. In the future, the drone would be able to carry survivors to safety using our claw frame.

How I built it

We built this entirely in JavaScript (Node.js), using several third-party libraries, including Kinect2 and AR-Drone Autonomous. Once we had found these libraries, we connected the data into several workflows. The first started at the Kinect: we used linear algebra on the Kinect data to find the location the user was pointing to. This coordinate was passed to a function, built with the ardrone libraries, that makes the drone travel to a specified location. Once the drone reached the calculated coordinate, we used our carrying apparatus to pick up the item at that location. Afterwards, we had the drone return to the origin and land.
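The pointing calculation described above can be sketched as a ray-plane intersection. This is a minimal illustration, not the code we ran at the hackathon: the helper name `pointingTarget`, the `{x, y, z}` point shape, the assumption that y is the vertical axis, and the known floor height `floorY` are all hypothetical.

```javascript
// Hypothetical helper: extend the elbow -> wrist direction until it hits a
// horizontal floor plane at height floorY.
// Assumption: points are {x, y, z} in meters, with y as the vertical axis.
function pointingTarget(elbow, wrist, floorY) {
  // Direction of the forearm.
  const dir = {
    x: wrist.x - elbow.x,
    y: wrist.y - elbow.y,
    z: wrist.z - elbow.z,
  };

  // The arm must point downward, otherwise the ray never reaches the floor.
  if (dir.y >= 0) {
    return null;
  }

  // Solve wrist.y + t * dir.y = floorY for t, then walk along the ray.
  const t = (floorY - wrist.y) / dir.y;
  return {
    x: wrist.x + t * dir.x,
    y: floorY,
    z: wrist.z + t * dir.z,
  };
}

// Example: forearm pointing down and to the side, floor at y = 0.
const target = pointingTarget(
  { x: 0.0, y: 1.2, z: 2.0 },
  { x: 0.1, y: 1.0, z: 1.8 },
  0
);
console.log(target);
```

Returning `null` when the arm points level or upward keeps the caller from sending the drone toward a point that does not exist on the floor.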

Challenges I ran into

The first ~8 hours of the hackathon were spent dealing with problems with the AR Drone; the drone itself had an issue that we could not solve. Once we got our hands on another drone, we were able to begin development. Most of the drone manipulation tasks were not very difficult, but we ran into significant issues when trying to use the drone's video stream while flying it. Essentially, we could not fly the drone and stream and analyze video simultaneously because the drone executed its instructions synchronously. Another significant issue was that the drone's axes were different from the Kinect's axes. Finding the mapping that put both in the same coordinate system was extremely difficult.
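To illustrate the axis problem, here is a rough sketch of one possible mapping between the two frames. The axis conventions in the comments, the `KINECT_TO_DRONE` matrix, and the `kinectToDrone` helper are assumptions for illustration only; the actual mapping depends on where the sensor sits and which way the drone faces at takeoff.

```javascript
// Assumed conventions (not confirmed by this write-up):
//   Kinect frame: x = toward the sensor's left, y = up, z = away from the sensor.
//   Drone frame:  x = forward, y = right, z = up, with the drone taking off
//                 next to the sensor and facing the same direction as it.
const KINECT_TO_DRONE = [
  [0, 0, 1],  // drone x (forward) = Kinect z
  [-1, 0, 0], // drone y (right)   = -Kinect x
  [0, 1, 0],  // drone z (up)      = Kinect y
];

// Hypothetical helper: rotate a Kinect-frame point into the drone frame,
// then shift it by the drone's takeoff position relative to the sensor.
function kinectToDrone(point, offset = { x: 0, y: 0, z: 0 }) {
  const v = [point.x, point.y, point.z];
  const [x, y, z] = KINECT_TO_DRONE.map(
    (row) => row[0] * v[0] + row[1] * v[1] + row[2] * v[2]
  );
  return { x: x + offset.x, y: y + offset.y, z: z + offset.z };
}

// Example: a floor point 0.8 m in front of the sensor and 0.6 m to its left.
console.log(kinectToDrone({ x: 0.6, y: 0, z: 0.8 }));
```

Keeping the mapping in a single matrix means a wrong sign or swapped axis can be fixed in one place once it is spotted during a test flight.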

Accomplishments that I'm proud of

We are proud of effectively integrating the Parrot AR Drone with the Kinect. These technologies would have been difficult to integrate on their own, but we used the power of Node.js to make the development process simpler.

What I learned

We learned a great deal through both the workshops and the project itself. Most significantly, we learned about the inaccuracies of the drone's sensors and about abstracting lower-level actions into higher-level functions. Ultimately, the project taught us a lot about cross-discipline work. Our team consisted of a computer engineering major, an aerospace engineering major, and two computer science majors, and this project combined aspects of all of those disciplines.
