-

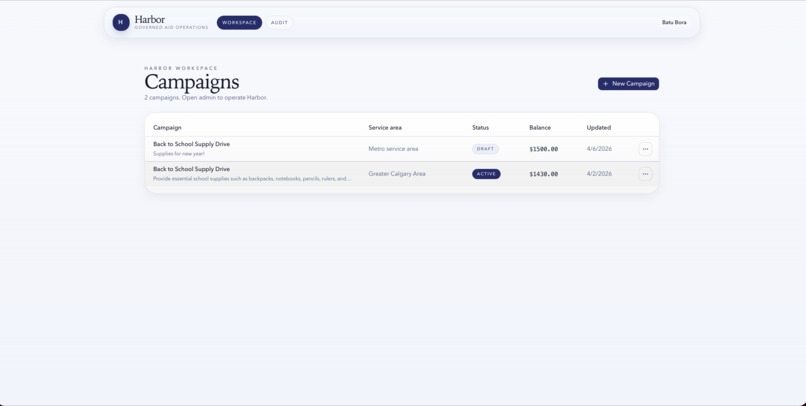

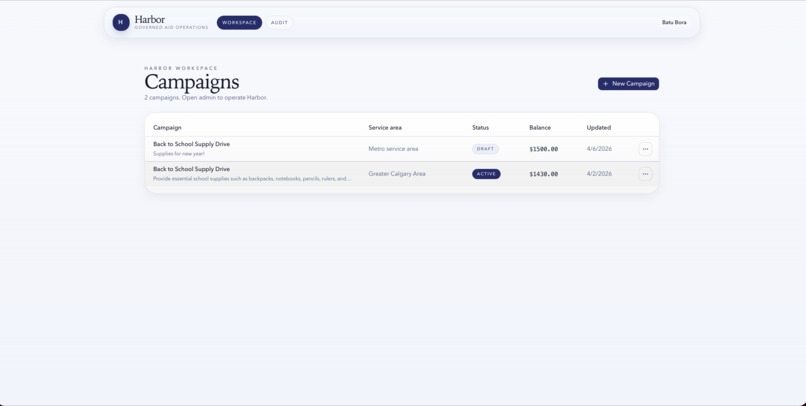

Harbor workspace showing active campaigns, balances, service areas, and quick access to campaign admin.

-

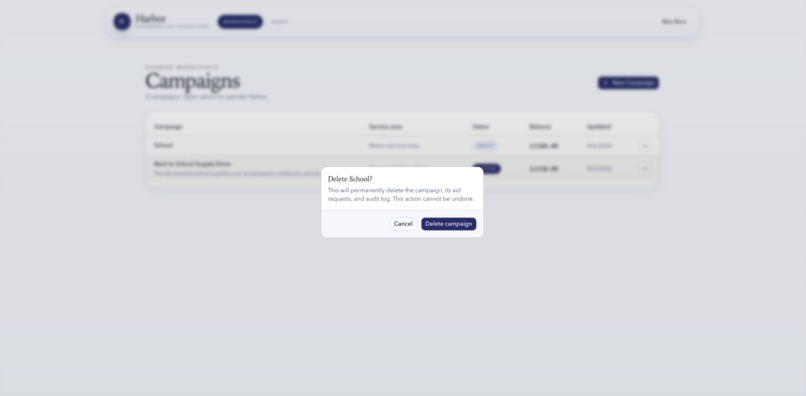

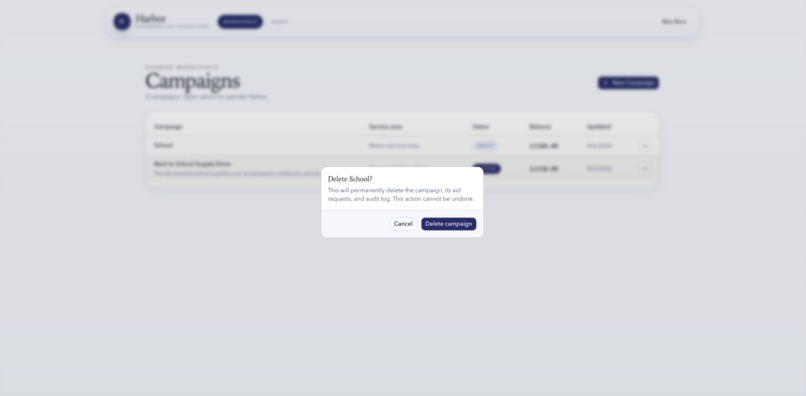

Protected campaign deletion flow that prevents accidental removal of campaign data, requests, and history.

-

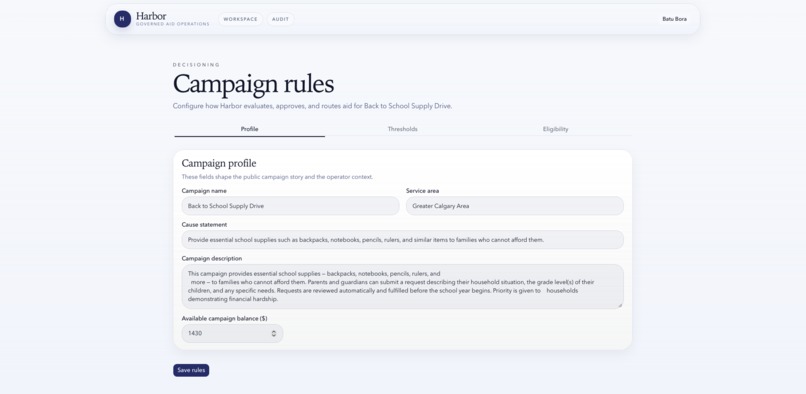

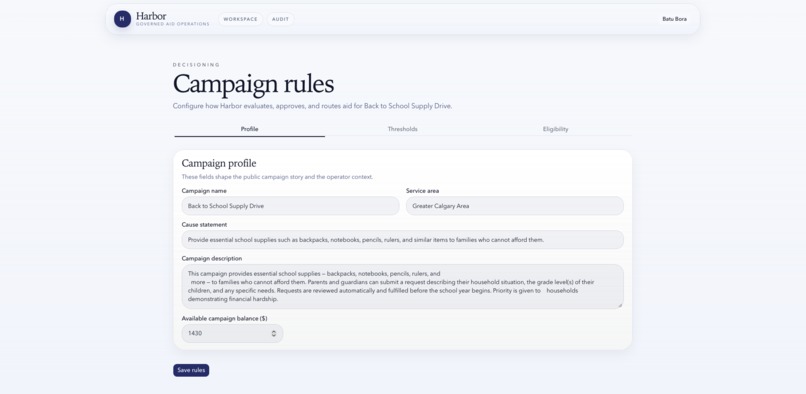

New campaign setup panel where operators define the cause, funding balance, and approval thresholds.

-

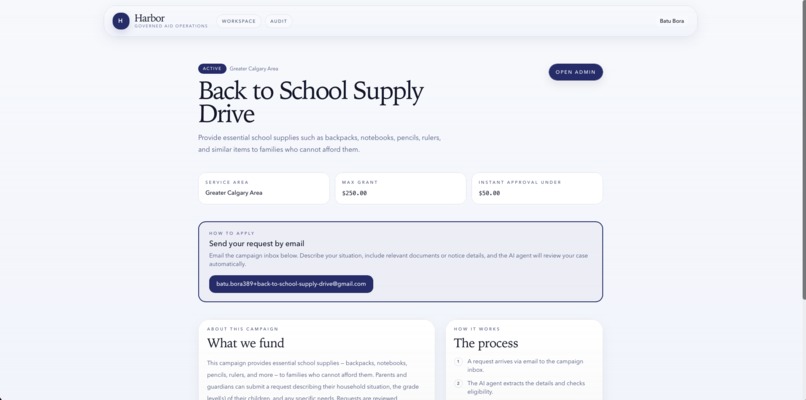

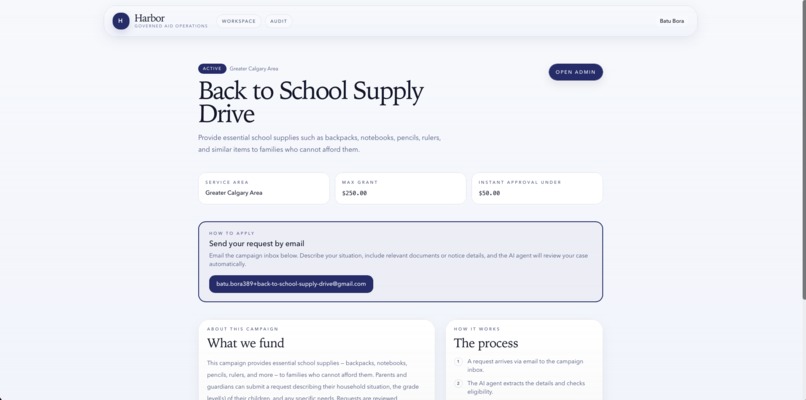

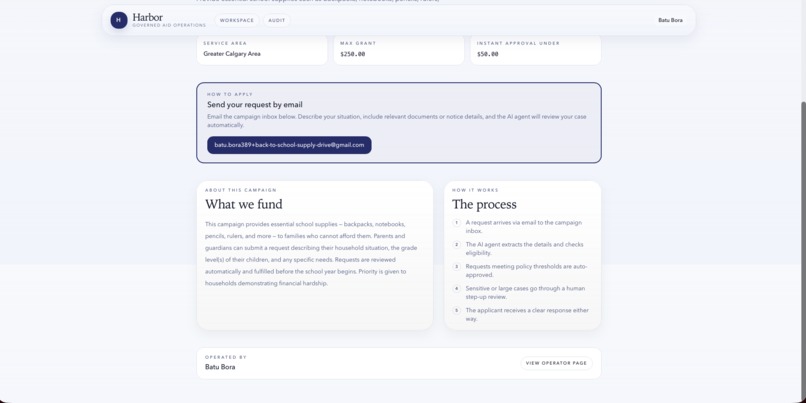

Public campaign page where applicants see what the fund covers and the dedicated intake email address.

-

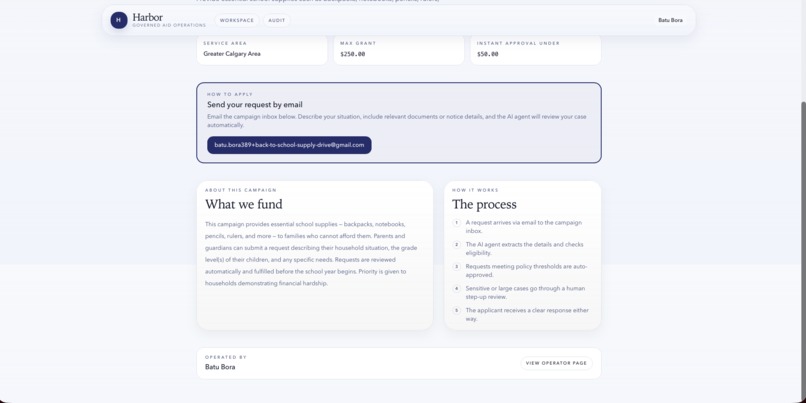

Applicant-facing campaign details and process overview, showing how emailed requests are reviewed and routed.

-

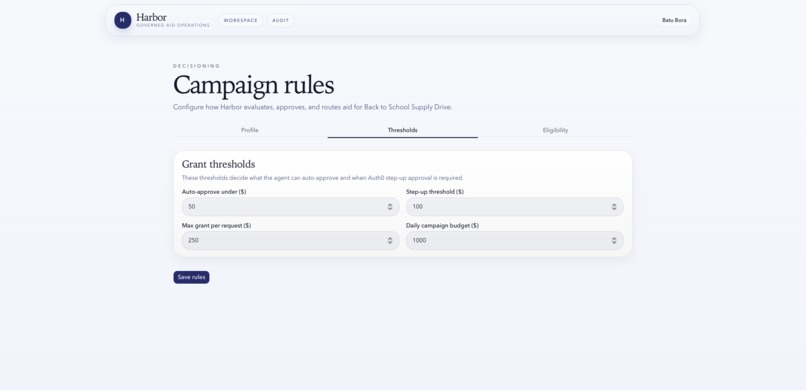

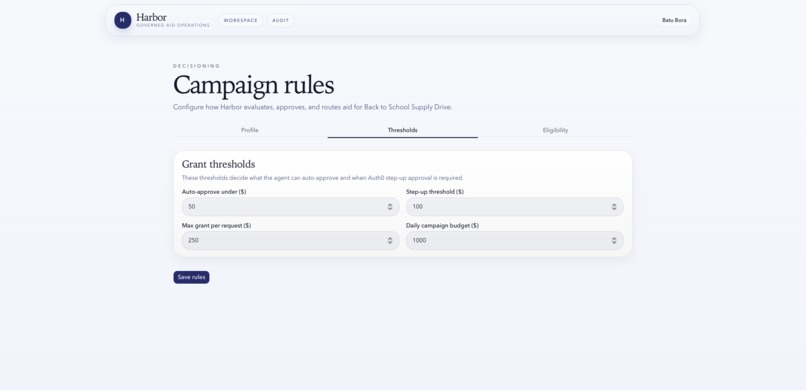

Grant threshold controls for auto-approval, supervisor step-up, max grant size, and daily budget.

-

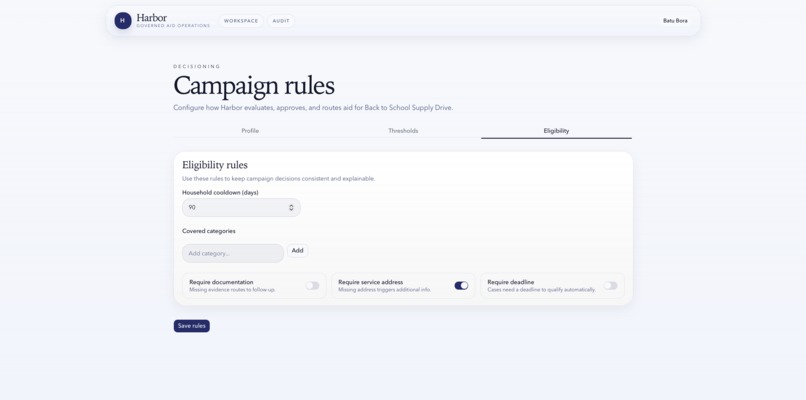

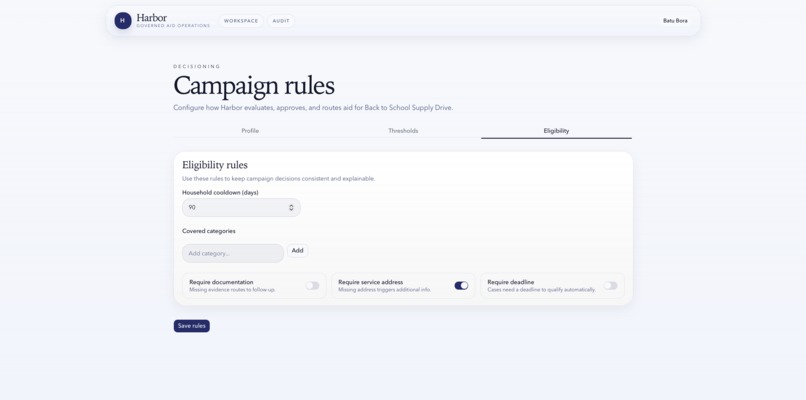

Eligibility rules for cooldown periods, covered categories, and required evidence such as documentation and service address.

-

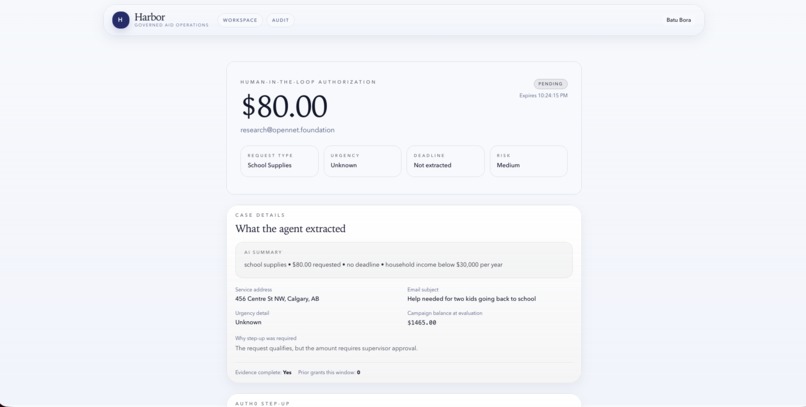

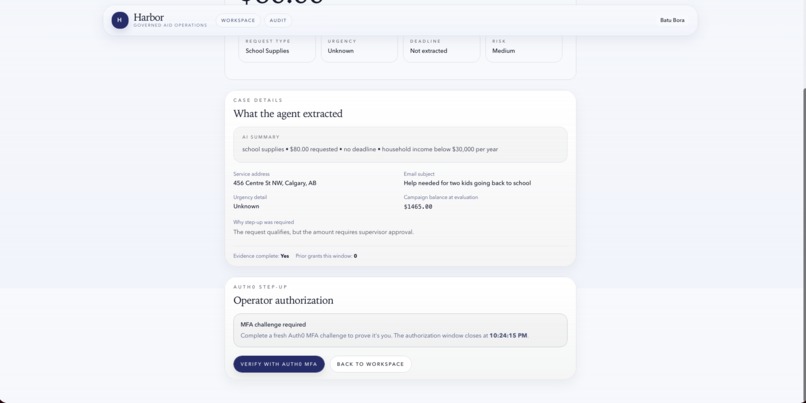

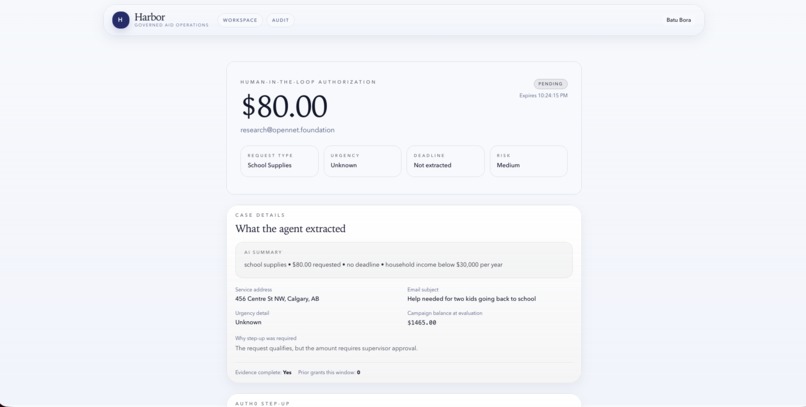

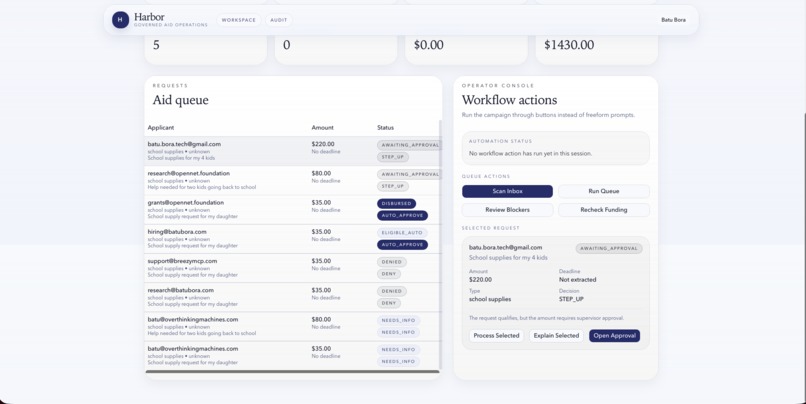

Human-in-the-loop approval view showing the requested amount, applicant context, and AI-extracted case details.

-

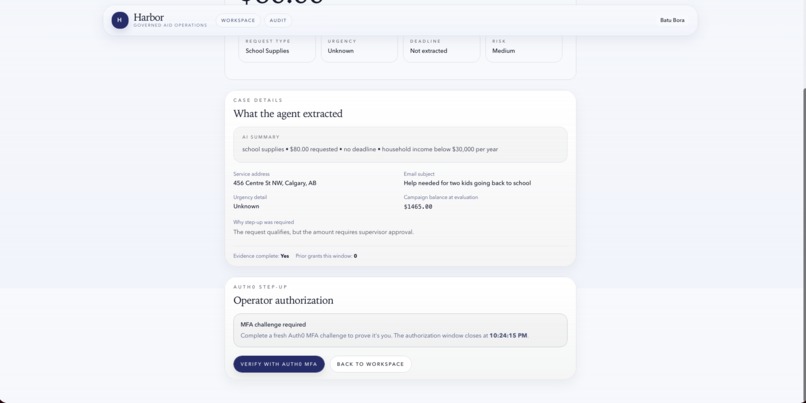

Auth0 step-up MFA flow that pauses sensitive approvals until the operator completes fresh verification.

-

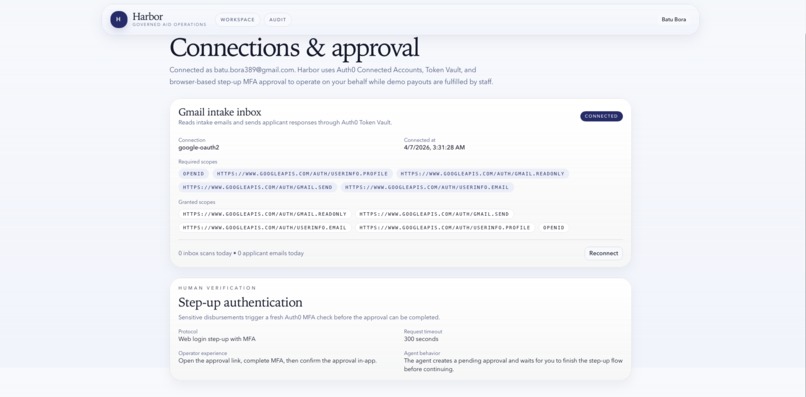

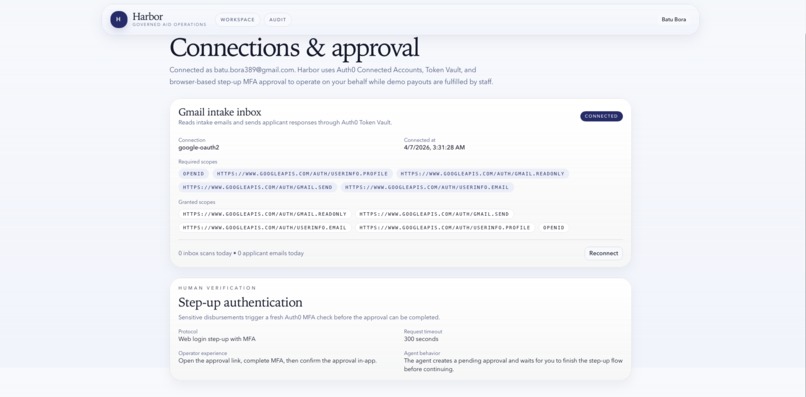

Connections and approval page showing Gmail connected through Auth0 Token Vault, granted scopes, and approval settings.

-

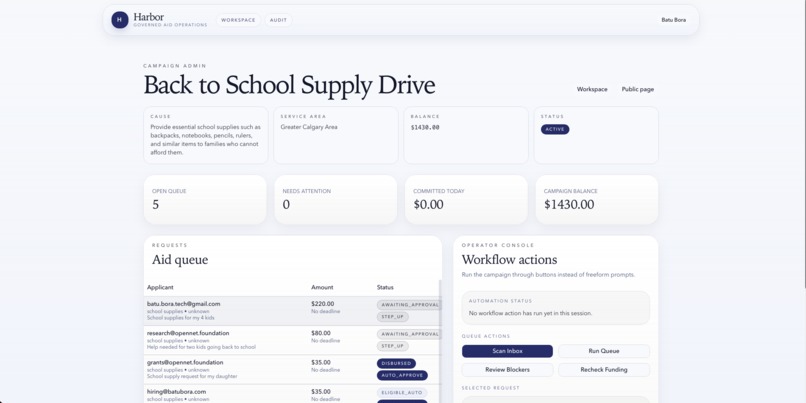

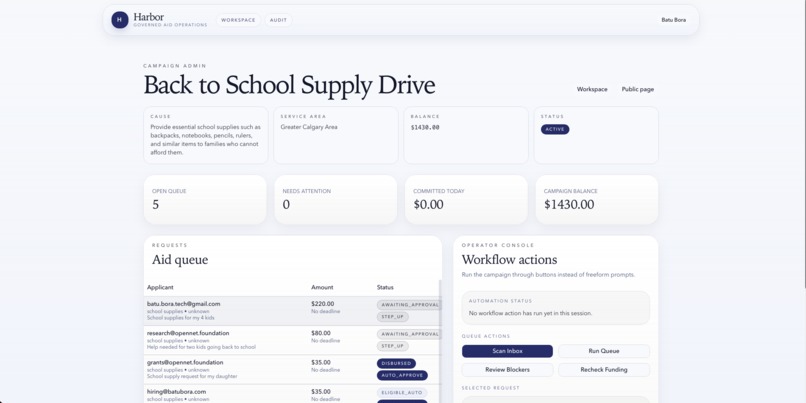

Campaign admin dashboard with live balance, queue counts, campaign status, and workflow actions for the active fund.

-

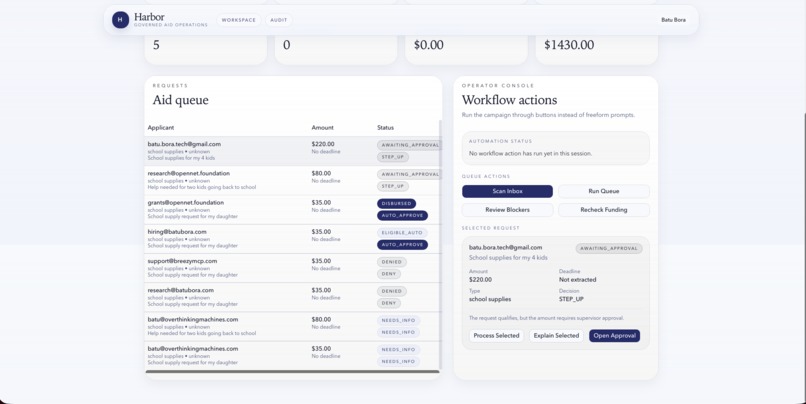

Operator console where Harbor scans the inbox, reviews requests, and routes each case to auto-approve, step-up, deny, or needs-info.

-

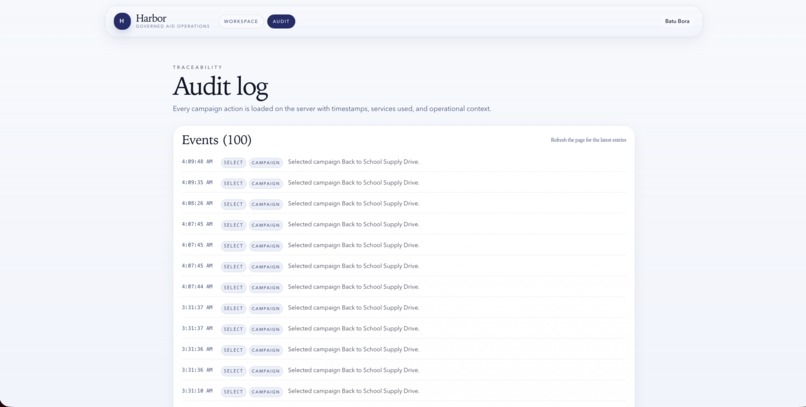

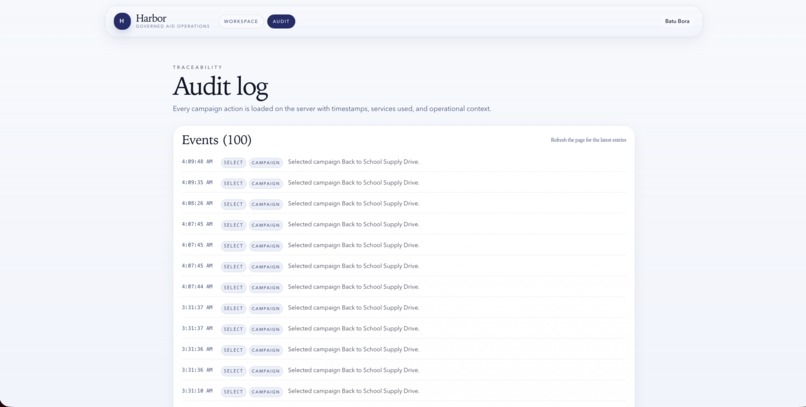

Audit log showing a timestamped record of campaign actions for traceability and review.

Inspiration

Most AI agents are pointed at low-stakes tasks like drafting emails or booking meetings. We wanted to test a harder question: can an AI agent help run a high-stakes workflow without becoming a trust problem?

Emergency aid programs often start in the inbox. Organizers read hardship emails, check documents by hand, compare requests against policy, and make judgment calls under time pressure. The work is slow, inconsistent, and hard to audit. We built Harbor to show that an AI agent can speed up the operational work while Auth0 keeps a human in control before anything sensitive happens.

What it does

Harbor is an AI operations assistant for emergency relief campaigns.

An operator creates a campaign, sets policy thresholds, connects Gmail through Auth0 Connected Accounts and Token Vault, and activates the campaign. From there, Harbor can:

- scan incoming hardship emails from the campaign inbox

- extract the applicant, requested amount, deadline, service address, and evidence status

- evaluate each request against campaign rules like max grant, cooldown period, covered categories, and auto-approve thresholds

- auto-approve low-risk cases that are complete and within policy

- pause and require Auth0 step-up MFA for higher-risk or higher-value cases

- send applicant emails on the operator's behalf through Gmail

- maintain an audit trail of scans, evaluations, approvals, denials, emails, and payout handoffs

In the hackathon demo, an approved request creates a governed payout handoff instead of silently moving money. The goal is speed without losing policy control or visibility.
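
The routing described above can be sketched as a small pure function. This is a minimal illustration, not Harbor's actual code: the type names, field names, and the order of the checks are all assumptions made for the example.

```typescript
// Hypothetical shapes: names are illustrative, not Harbor's real schema.
type Decision = "auto_approve" | "step_up" | "deny" | "needs_info";

interface CampaignPolicy {
  autoApproveLimit: number; // complete requests at or below this skip manual review
  maxGrant: number; // hard ceiling for a single request
  cooldownDays: number; // minimum days between grants to the same applicant
  coveredCategories: string[];
}

interface AidRequest {
  amount: number | null; // null when extraction could not find an amount
  category: string | null;
  hasEvidence: boolean;
  hasServiceAddress: boolean;
  daysSinceLastGrant: number | null; // null when there is no prior grant
}

interface Evaluation {
  decision: Decision;
  reason: string;
}

function evaluateRequest(req: AidRequest, policy: CampaignPolicy): Evaluation {
  // Incomplete requests go back to the applicant before any policy check.
  if (req.amount === null || req.category === null || !req.hasEvidence || !req.hasServiceAddress) {
    return { decision: "needs_info", reason: "Request is missing required details or evidence." };
  }
  if (!policy.coveredCategories.includes(req.category)) {
    return { decision: "deny", reason: `Category "${req.category}" is not covered.` };
  }
  if (req.amount > policy.maxGrant) {
    return { decision: "deny", reason: "Requested amount exceeds the maximum grant." };
  }
  if (req.daysSinceLastGrant !== null && req.daysSinceLastGrant < policy.cooldownDays) {
    return { decision: "deny", reason: "Applicant is still inside the cooldown period." };
  }
  if (req.amount <= policy.autoApproveLimit) {
    return { decision: "auto_approve", reason: "Complete, in policy, and under the auto-approve limit." };
  }
  // Everything else is in policy but too large to approve without the operator.
  return { decision: "step_up", reason: "In policy, but above the auto-approve limit." };
}
```

Returning a plain-language `reason` with every decision keeps each outcome explainable to the operator and easy to record in an audit trail.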

How we built it

We built Harbor with Next.js 16, React 19, TypeScript, and Tailwind CSS. The agent layer uses the Vercel AI SDK with Anthropic models: Claude Sonnet for workflow reasoning and decisions, and Claude Haiku for structured email extraction.
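
Structured extraction is only useful if the model's output is checked before it reaches the policy layer. The sketch below shows one way to validate it, assuming the model returns a JSON string. The `ExtractedRequest` shape and `parseExtraction` helper are hypothetical and use no SDK calls; in a real project, a schema library would likely replace the hand-written checks.

```typescript
// Hypothetical extraction result; field names are assumptions for illustration.
interface ExtractedRequest {
  applicantName: string | null;
  requestedAmount: number | null;
  deadline: string | null; // expected as YYYY-MM-DD
  serviceAddress: string | null;
  evidenceStatus: "attached" | "mentioned" | "missing";
}

// Returns a trimmed non-empty string, or null for anything else.
function asText(value: unknown): string | null {
  return typeof value === "string" && value.trim() !== "" ? value.trim() : null;
}

// Parses raw model output defensively. Returns null when the output is not a
// JSON object at all; individual bad fields are downgraded to null or "missing".
function parseExtraction(raw: string): ExtractedRequest | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof data !== "object" || data === null || Array.isArray(data)) {
    return null;
  }
  const obj = data as Record<string, unknown>;

  const rawAmount: unknown = obj["requestedAmount"];
  const requestedAmount =
    typeof rawAmount === "number" && Number.isFinite(rawAmount) && rawAmount > 0 ? rawAmount : null;

  const rawDeadline = asText(obj["deadline"]);
  const deadline = rawDeadline !== null && /^\d{4}-\d{2}-\d{2}$/.test(rawDeadline) ? rawDeadline : null;

  const rawEvidence: unknown = obj["evidenceStatus"];
  const evidenceStatus: ExtractedRequest["evidenceStatus"] =
    rawEvidence === "attached" || rawEvidence === "mentioned" ? rawEvidence : "missing";

  return {
    applicantName: asText(obj["applicantName"]),
    requestedAmount,
    deadline,
    serviceAddress: asText(obj["serviceAddress"]),
    evidenceStatus,
  };
}
```

Downgrading unusable fields to `null` rather than guessing means an incomplete email surfaces as a needs-info case instead of a wrong decision.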

Auth0 sits on the critical path of the product. We use @auth0/nextjs-auth0 for authentication, Auth0 Connected Accounts for delegated access, and Auth0 Token Vault so the agent can access Gmail on the user's behalf without us storing raw OAuth credentials. For sensitive approvals, we trigger a browser-based Auth0 MFA step-up flow and only continue after the operator completes fresh verification.
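
The "fresh verification" gate can be sketched independently of any identity provider. The example below is an assumption-laden illustration, not the Auth0 SDK: `OperatorSession`, `mfaVerifiedAt`, and the five-minute window are hypothetical, and the timestamp stands in for whatever the app records when the MFA challenge completes.

```typescript
// Hypothetical session shape; not an Auth0 type.
interface OperatorSession {
  userId: string;
  mfaVerifiedAt: number | null; // epoch seconds of the last completed MFA challenge
}

// Assumed freshness window: MFA older than this no longer counts.
const STEP_UP_MAX_AGE_SECONDS = 300;

function hasFreshStepUp(
  session: OperatorSession,
  nowSeconds: number,
  maxAgeSeconds: number = STEP_UP_MAX_AGE_SECONDS
): boolean {
  if (session.mfaVerifiedAt === null) return false;
  const age = nowSeconds - session.mfaVerifiedAt;
  // Reject timestamps from the future as well as stale ones.
  return age >= 0 && age <= maxAgeSeconds;
}

type ApprovalOutcome =
  | { status: "approved"; requestId: string; approvedBy: string }
  | { status: "step_up_required"; requestId: string };

// The approval only proceeds when MFA is fresh; otherwise the caller is told
// to send the operator through the step-up flow and retry.
function approveSensitiveRequest(
  session: OperatorSession,
  requestId: string,
  nowSeconds: number
): ApprovalOutcome {
  if (!hasFreshStepUp(session, nowSeconds)) {
    return { status: "step_up_required", requestId };
  }
  return { status: "approved", requestId, approvedBy: session.userId };
}
```

Making "step-up required" an explicit outcome, rather than an error, lets the UI explain the pause in plain language before redirecting the operator.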

On the backend, Neon Postgres and Drizzle ORM store campaign state, aid requests, approval records, policy rules, and audit events. The product includes a workspace, policy editor, permissions dashboard, approval page, audit log, and an agent chat surface that runs the workflow end to end.
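
As a rough sketch of what an audit event might look like, the example below models an append-only log in memory. It is a stand-in for the database table, not the project's Drizzle schema; the `AuditEvent` fields and the `AuditLog` class are hypothetical.

```typescript
// Hypothetical event shape; the real table columns may differ.
type AuditAction = "scan" | "evaluation" | "approval" | "denial" | "email_sent" | "payout_handoff";

interface AuditEvent {
  readonly id: number;
  readonly campaignId: string;
  readonly action: AuditAction;
  readonly actor: "agent" | "operator";
  readonly detail: string;
  readonly at: string; // ISO 8601 timestamp
}

// In-memory stand-in for an append-only audit table: events can be added and
// read, but never updated or removed.
class AuditLog {
  private readonly events: AuditEvent[] = [];

  record(entry: Omit<AuditEvent, "id" | "at">, now: Date = new Date()): AuditEvent {
    const event: AuditEvent = Object.freeze({
      ...entry,
      id: this.events.length + 1,
      at: now.toISOString(),
    });
    this.events.push(event);
    return event;
  }

  forCampaign(campaignId: string): readonly AuditEvent[] {
    return this.events.filter((event) => event.campaignId === campaignId);
  }
}
```

Recording the actor on every event makes it possible to tell, after the fact, which actions the agent took on its own and which the operator approved.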

Challenges we ran into

The hardest part was not the model. It was delegated auth.

Getting the Gmail connection, scopes, Token Vault access, and server-side request flow working together in a real Next.js App Router project took the most debugging time. Scope hygiene mattered: we needed enough access to read and send email, and no more, so that the permission story never became a vague "trust us, we have everything."
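
One way to keep scope hygiene honest is to compare the granted scopes against an explicit allowlist. The sketch below assumes the app needs Gmail's read-only and send scopes; those two scope URLs are real Gmail scopes, but whether Harbor requests exactly this set is an assumption, and `checkScopes` is a hypothetical helper.

```typescript
// Assumed minimal scope set for reading and sending mail.
const REQUIRED_GMAIL_SCOPES: readonly string[] = [
  "https://www.googleapis.com/auth/gmail.readonly",
  "https://www.googleapis.com/auth/gmail.send",
];

interface ScopeCheck {
  ok: boolean; // true when every required scope was granted
  missing: string[]; // required scopes the connection lacks
  extra: string[]; // granted scopes the app does not need
}

function checkScopes(
  granted: readonly string[],
  required: readonly string[] = REQUIRED_GMAIL_SCOPES
): ScopeCheck {
  const grantedSet = new Set(granted);
  const requiredSet = new Set(required);
  const missing = required.filter((scope) => !grantedSet.has(scope));
  const extra = granted.filter((scope) => !requiredSet.has(scope));
  return { ok: missing.length === 0, missing, extra };
}
```

Surfacing `extra` alongside `missing` supports a visible-scopes page: the operator can see both what the agent cannot do yet and what it was granted beyond its needs.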

The second challenge was product design. Letting an agent do too much makes for a flashy demo, but not a trustworthy product. We had to be strict about when the agent could proceed automatically, when it had to stop, and how to explain that stop in plain language.

Accomplishments that we're proud of

- We built a concrete, high-stakes AI workflow instead of a generic assistant.

- Auth0 is central to the user journey. Token Vault, Connected Accounts, and MFA step-up are not add-ons.

- Harbor makes decisions visible through policy rules, approval pages, and an audit log.

- The app shows a credible human-in-the-loop pattern: the agent handles the repetitive work, and the operator keeps control over consequential actions.

- We kept the demo narrow enough to understand quickly while still making it feel like a real product.

What we learned

AI agents need a different trust model than normal web apps. A normal app acts for a user who is present. An agent often acts for a user who is absent, and that changes how you think about credentials, approval, and recovery.

We also learned that step-up auth is not just a security control. It is also a UX control. Users are more comfortable delegating work when the boundary around sensitive actions is explicit.

Finally, we learned that vertical specificity beats breadth in a hackathon. A governed aid operator is a much clearer story than a broad assistant that does a little of everything.

At the same time, Harbor demonstrates a reusable pattern beyond emergency aid. Any agent that acts through a user's email or another delegated API runs into the same problem: how do you let the agent do useful work without turning delegated access into silent, unreviewable automation? The pattern here generalizes well: delegated access through Token Vault, explicit policy boundaries, visible scopes, and step-up authentication before high-stakes actions.

What's next for Harbor

Next, we want Harbor to read documents and attachments directly from email, so it can understand shutoff notices, utility bills, and other evidence. We also want richer explanations for every decision, multi-workspace support for organizations running several campaigns, and a production payout rail with reconciliation instead of a demo handoff flow.

Longer term, we see Harbor as an operating layer for small relief programs: intake, policy enforcement, approvals, auditability, and secure delegated action in one system.

Bonus Blog Post

When I first thought about building an AI agent for emergency aid, my default engineering instinct was straightforward: store OAuth tokens, encrypt them, refresh them in the background, and move on. That is how most web apps work. It also felt wrong for a product that reads personal hardship emails and acts when the user is not sitting there watching.

That is the moment Token Vault clicked for me.

Harbor is not a chatbot. It is an agent that reads a real inbox, drafts responses, and moves requests toward approval. In that kind of product, "we stored the tokens safely, trust us" is not a satisfying answer. Token Vault changed both the architecture and the product tone. Instead of building my own mini credential platform, I could stay focused on the real question: where should the human stay in control?

The technical friction was real. Calling a model was the easy part. Getting Gmail scopes, Auth0 Connected Accounts, Token Vault retrieval, and a Next.js App Router backend to line up cleanly took more time than prompting.

Once that worked, the rest of the product got clearer. I could design Harbor around one simple principle: the agent can prepare and propose, but sensitive actions should pause for fresh verification. That is why the step-up MFA flow became such an important part of the product. It is not just a safety check. It is the point where delegated action becomes acceptable.

That was the biggest lesson of the project: for AI agents, trust is not a footer policy. Trust is the product.

Built With

- anthropic-claude-haiku-4.5

- anthropic-claude-sonnet-4

- auth0-connected-accounts

- auth0-for-ai-agents

- auth0-mfa

- auth0-token-vault

- drizzle-orm

- gmail-api

- neon-postgres

- next.js

- react-19

- tailwind-css

- typescript

- vercel-ai-sdk-6
