Splash screen shows an animated music preview with MIDI jukebox playback controls.

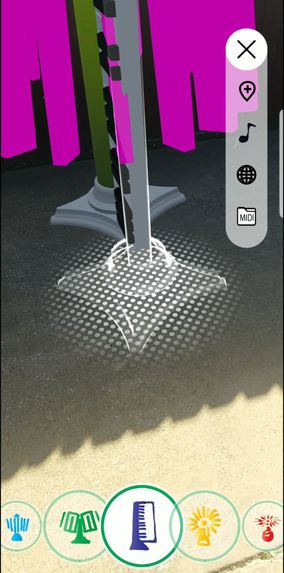

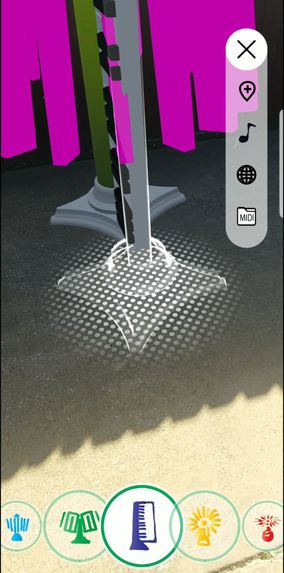

Selection of 5 unique spectrum sculptures

Select a location to place the item

Confirm placement

Animated 3D spectrum synced to musical notes

Control music playback in music mode.

Choose a folder of MIDI files to play back songs automatically.

Inspiration

My inspiration comes from Animusic, a computer-animated music video from the 2000s that showcased the stunning potential of 3D visualization of MIDI-based music. I wanted to bring my existing musical animation project into AR using the ARCore Geospatial API, allowing users to place animated spectrums in real-world locations and bringing music to life in a whole new way.

What it does

Visualizes animated musical notes in augmented reality with virtual 3D instruments and sculptures. Movement is driven by the individual note data in MIDI files.

- Users can choose from 5 unique musical spectrums and sculptures to place in the world.

- Users can play back the included sample file or choose their own MIDI folder for playback.

- Files in the folder are played back in sequence like a jukebox, with a song-skip function.
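
The jukebox behavior described above can be sketched roughly as follows. This is an illustration in Python, not the app's C# code: the `Jukebox` class, its method names, the alphabetical play order, and the wrap-around at the end of the folder are all assumptions made for the example.

```python
from pathlib import Path


class Jukebox:
    """Hypothetical playlist: steps through the MIDI files of one folder."""

    def __init__(self, folder):
        # Collect .mid/.midi files; sorting by name is an assumed play order.
        self.tracks = sorted(
            p for p in Path(folder).iterdir()
            if p.is_file() and p.suffix.lower() in {".mid", ".midi"}
        )
        self.index = 0

    def current(self):
        """Return the track to play now, or None if no MIDI files were found."""
        return self.tracks[self.index] if self.tracks else None

    def skip(self):
        """Move to the next track and return it.

        The same call can be used when a song finishes on its own.
        Wrapping back to the first track is an assumption.
        """
        if self.tracks:
            self.index = (self.index + 1) % len(self.tracks)
        return self.current()
```

A player would call `current()` to start playback and `skip()` both when the user taps the skip control and when a song ends.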

How it's built

I built the app using the Unity 3D game engine. I used the "Pocket Garden" demo app as a reference for the UI and the ARCore Geospatial API, and created custom graphics and 3D models for skinning. For the animated music, I wrote custom procedural animation in C#. To extend the app's functionality, I used additional libraries: "CsharpSynth" for MIDI audio playback, "Simple Spectrum" for visuals, and "Simple File Browser" for file selection.
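
As a rough idea of what note-driven procedural animation can look like, here is a minimal sketch. It is written in Python for brevity and is not the project's C# implementation: the `Spectrum` class, the one-bar-per-pitch-class mapping, and the linear decay rate are all assumptions. It relies only on the fact that MIDI note numbers and velocities range from 0 to 127.

```python
from dataclasses import dataclass, field


@dataclass
class Spectrum:
    """Hypothetical row of bars whose heights follow MIDI note events."""
    bar_count: int = 12            # assumption: one bar per pitch class
    decay_per_second: float = 2.0  # assumption: linear fall-off rate
    heights: list = field(init=False)

    def __post_init__(self):
        # All bars start flat.
        self.heights = [0.0] * self.bar_count

    def note_on(self, note, velocity):
        """Raise the bar for this note to a height scaled from its velocity."""
        bar = note % self.bar_count
        self.heights[bar] = max(self.heights[bar], velocity / 127.0)

    def update(self, dt):
        """Let every bar fall back toward zero; call once per frame."""
        drop = self.decay_per_second * dt
        self.heights = [max(0.0, h - drop) for h in self.heights]
```

In an engine, `note_on` would be called from the MIDI playback callback and `update` from the per-frame loop, with each height applied as the scale of one bar in the 3D model.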

Challenges I ran into

Testing the geospatial functions made iteration slower, since each test requires a device build and a suitable outdoor location with sufficient lighting. I often needed to disable the location functions for quicker test iterations indoors.

What's next for AR Animusic Synthwave

Possibly adding more customizable visual spectrums, with variations in color, size, arrangement, etc.

Built With

- arcore

- csharp

- unity
