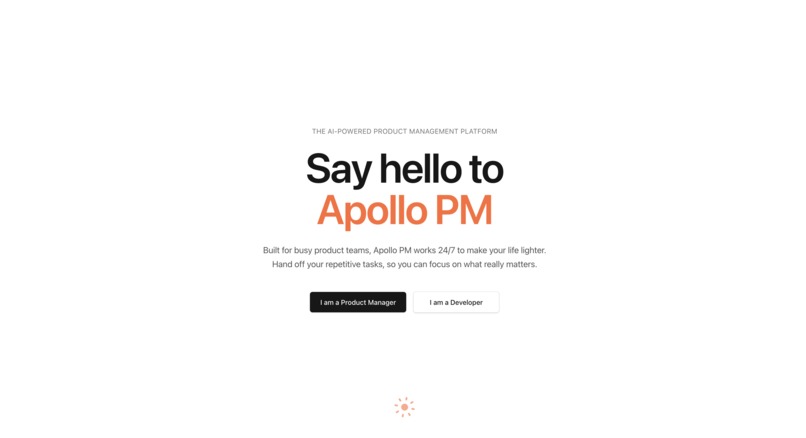

Homepage

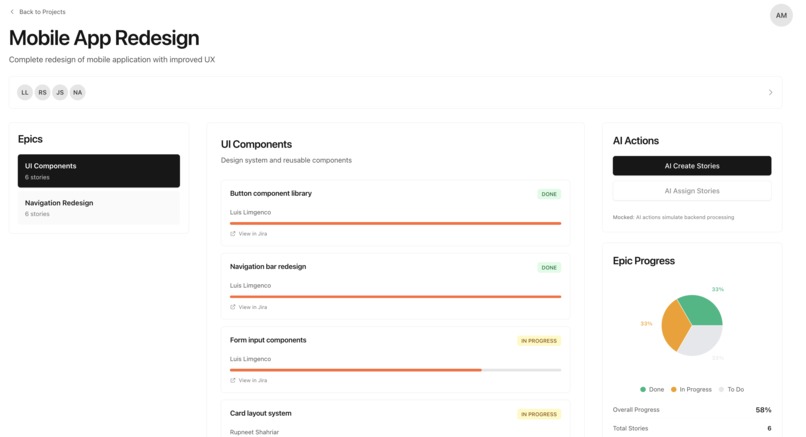

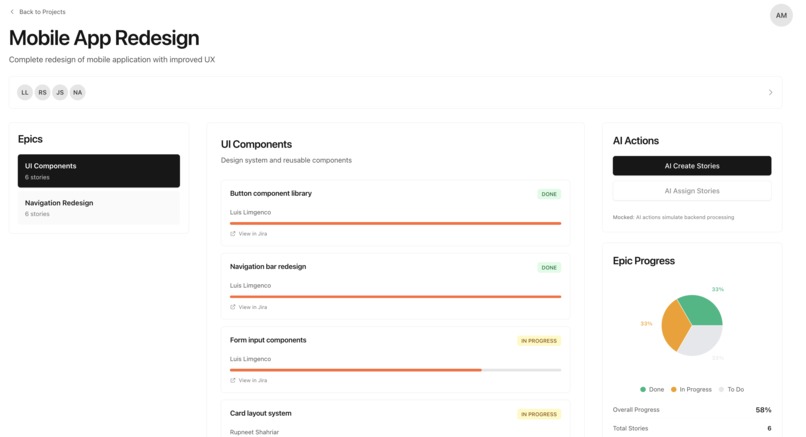

PM's project overview: lists all stories and whether each one is assigned. Apollo can assign stories based on the task and each developer's skills.

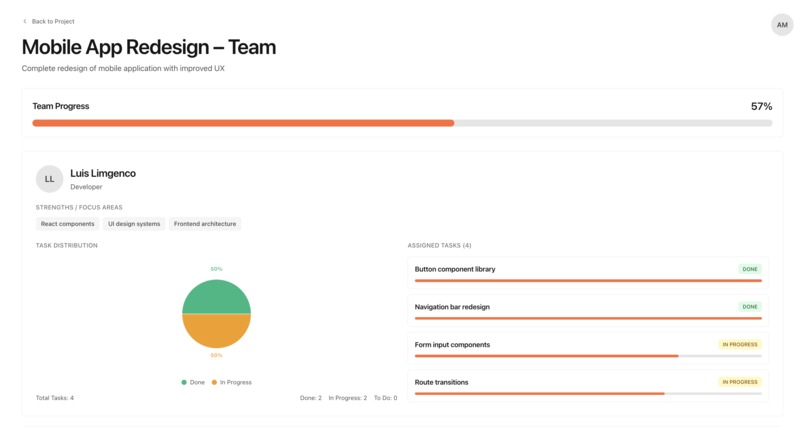

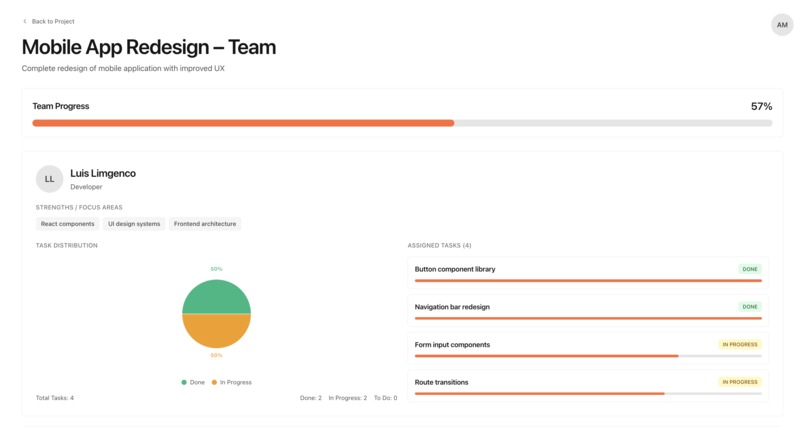

The project manager's overview of team members and their progress. Shows all currently active stories and the progress of each.

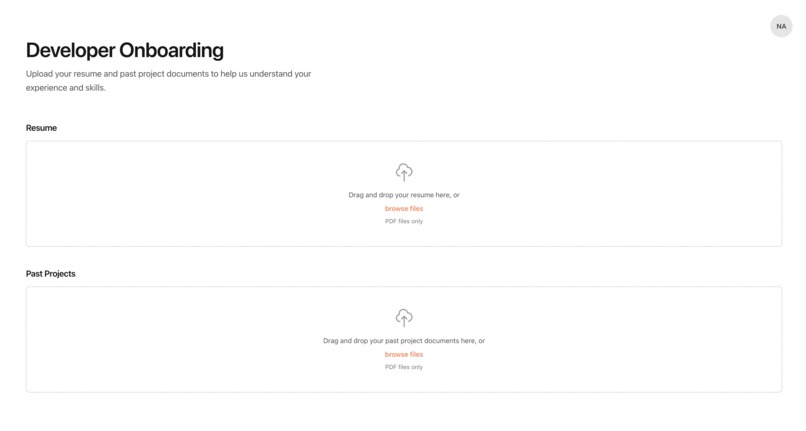

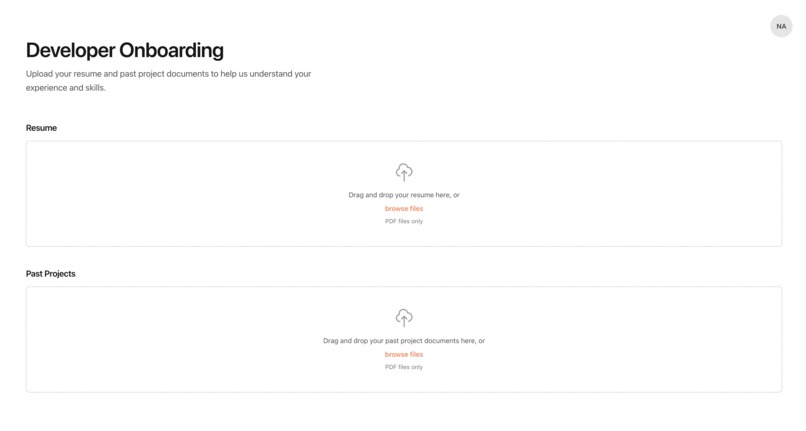

Developers upload past projects and their résumé so Apollo can determine their skill set and assign tasks more accurately.

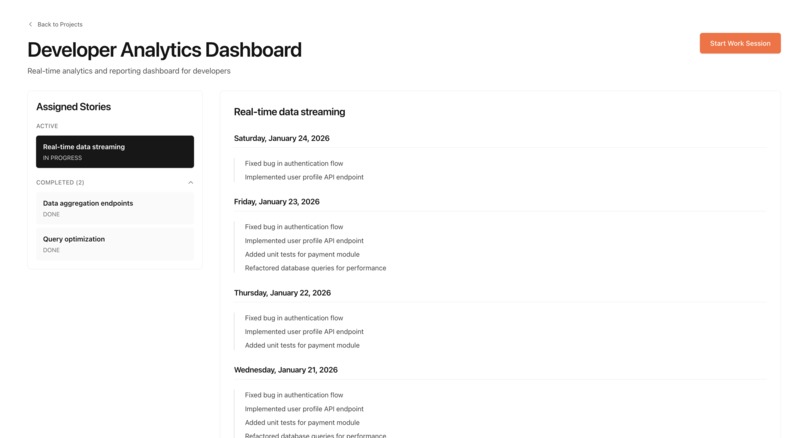

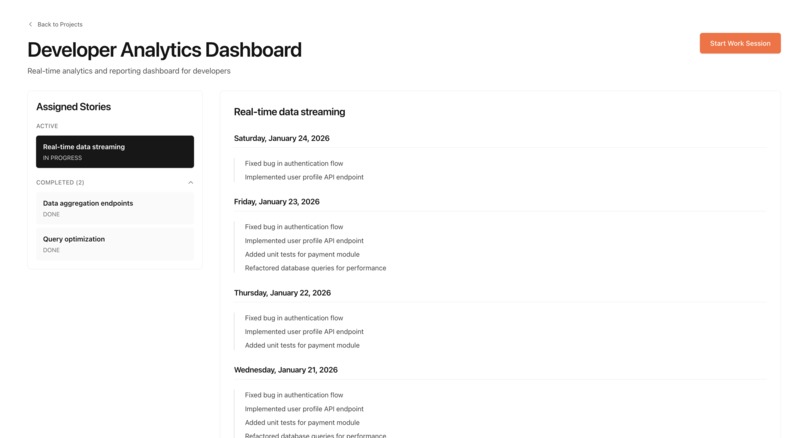

Developer's view of a project. Contains all of the developer's stories and a log of accomplishments from work sessions to track progress.

Work sessions allow Apollo to track and summarize developers' progress while prioritizing privacy.

Demo video

Inspiration

In big tech, the biggest problem for developers is unclear requirements, while PMs struggle to get transparency into progress. We set out to solve both problems with a single, elegant solution.

What it does

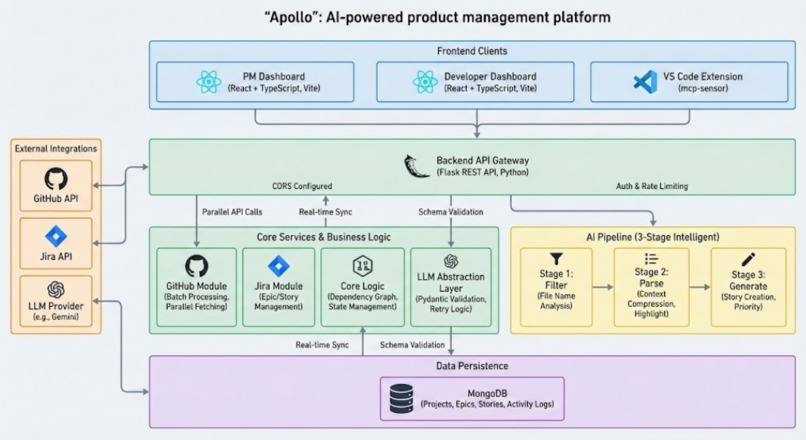

Apollo is an AI-powered product management platform.

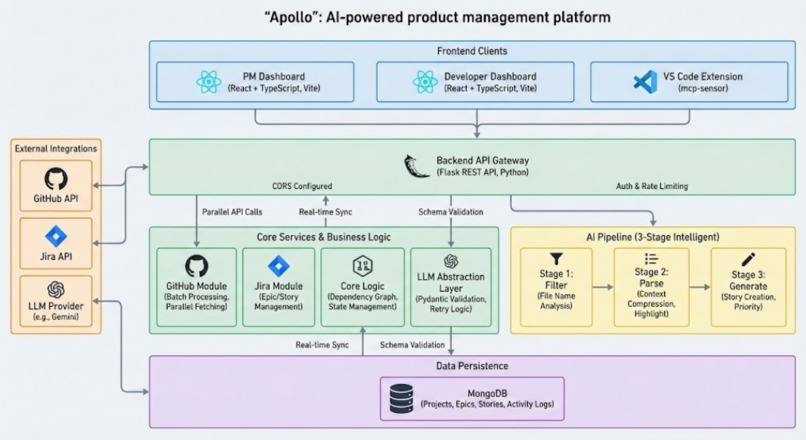

Automatically generates Jira stories from epics: Analyzes entire GitHub repositories (even those tens of thousands of lines long) in under 2 minutes using concurrent pipelines, producing actionable stories with code references.

3-stage intelligent pipeline:

- Stage 1: Filters repository files by name based on epic requirements

- Stage 2: Parses relevant files for starting points and highlights relevant code sections

- Stage 3: Generates stories grouped by priority, each with relevant code snippets
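
The three stages can be sketched roughly as follows. This is an illustration rather than Apollo's actual code: plain keyword matching stands in for the LLM calls, and `Story`, `filter_files`, `highlight_sections`, `generate_stories`, and `run_pipeline` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Story:
    title: str
    snippets: list[str] = field(default_factory=list)


def filter_files(paths: list[str], keywords: list[str]) -> list[str]:
    """Stage 1: keep files whose path mentions an epic keyword (stand-in for the LLM)."""
    lowered = [k.lower() for k in keywords]
    return [p for p in paths if any(k in p.lower() for k in lowered)]


def highlight_sections(contents: dict[str, str], keywords: list[str]) -> dict[str, list[str]]:
    """Stage 2: collect the lines of each relevant file that mention a keyword."""
    lowered = [k.lower() for k in keywords]
    highlights = {}
    for path, text in contents.items():
        hits = [line.strip() for line in text.splitlines()
                if any(k in line.lower() for k in lowered)]
        if hits:
            highlights[path] = hits
    return highlights


def generate_stories(epic: str, highlights: dict[str, list[str]]) -> list[Story]:
    """Stage 3: emit one story per highlighted file, carrying its snippets."""
    return [Story(title=f"{epic}: update {path}", snippets=lines)
            for path, lines in sorted(highlights.items())]


def run_pipeline(epic: str, keywords: list[str], repo: dict[str, str]) -> list[Story]:
    """Run the stages in order; `repo` maps file paths to file contents."""
    relevant = filter_files(list(repo), keywords)
    highlights = highlight_sections({p: repo[p] for p in relevant}, keywords)
    return generate_stories(epic, highlights)
```

Filtering by name first means only a small subset of files ever has its contents read, which keeps the later, more expensive stages cheap.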

Developer context re-entry: VS Code extension tracks activity and generates unbiased summaries to provide honest updates and help developers resume work

Dual user interface:

- PM Dashboard: View projects, epics, and AI-generated stories with analytics

- Developer Dashboard: Track assigned tasks and work progress

Seamless integrations: Direct Jira and GitHub API integration for real-time data sync

How we built it

Backend Architecture:

- Flask REST API (Python) with MongoDB for data persistence

- Modular design: Separate modules for Jira (jira/), GitHub (gh/), and core app logic

- LLM abstraction layer: Retry logic, error handling, and data format validation with Pydantic

- GitHub API client: Handles repository traversal, file content fetching, and parallel processing

- Jira API client: Creates and manages epics, stories, and projects
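
As a rough idea of what the retry logic in the LLM abstraction layer might look like, here is a minimal sketch of retrying with exponential backoff. The function name, defaults, and jitter amount are assumptions for illustration, not values from our codebase.

```python
import random
import time


def call_with_retry(fn, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fn(); after a failure wait base_delay * 2**attempt (plus jitter) and retry."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            delay = base_delay * (2 ** attempt)
            # A little random jitter keeps parallel workers from retrying in lockstep.
            sleep(delay + random.uniform(0, delay * 0.1))
```

A call such as `call_with_retry(lambda: client.generate(prompt))` would wrap a flaky request, where `client.generate` is a placeholder for whatever function talks to the model.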

Frontend:

- React + TypeScript with Vite for fast development

- Framer Motion for animations

- Tailwind CSS for styling

- Recharts for data visualization

- React Router for navigation between PM and Developer views

VS Code Extension:

- mcp-sensor: Tracks developer activity (app name, window title, workspace path) and sends heartbeat data to the backend
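
On the backend side, a heartbeat could be checked along these lines. This is a sketch under assumptions: the JSON key names and the `timestamp` field are hypothetical, and only the three tracked values (app name, window title, workspace path) come from the description above.

```python
import json
import time
from dataclasses import dataclass

REQUIRED_FIELDS = ("app_name", "window_title", "workspace_path")


@dataclass
class Heartbeat:
    app_name: str
    window_title: str
    workspace_path: str
    timestamp: float  # assumed field: seconds since the epoch


def parse_heartbeat(raw: str) -> Heartbeat:
    """Decode one heartbeat and reject payloads with missing or non-string fields."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("heartbeat must be a JSON object")
    missing = [name for name in REQUIRED_FIELDS if not isinstance(data.get(name), str)]
    if missing:
        raise ValueError(f"missing or non-string fields: {missing}")
    # Fall back to the server clock when the sender omits a timestamp.
    timestamp = float(data.get("timestamp", time.time()))
    return Heartbeat(*(data[name] for name in REQUIRED_FIELDS), timestamp=timestamp)
```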

Key Technical Components:

- Batch processing: Processes large repositories in configurable batches (default 500 files) to handle rate limits

- Parallel file fetching: Uses ProcessPoolExecutor for concurrent GitHub and Gemini API calls

- Pydantic validation: Ensures LLM responses match expected schemas

- Error handling: Retry logic with exponential backoff for API failures

- Dependency graph: Story prerequisite system with topological sorting for execution order
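
Two of these components are small enough to sketch with the standard library alone. The helper names below are hypothetical; the batch size of 500 matches the default mentioned above, and `graphlib.TopologicalSorter` (Python 3.9+) is one way to do the topological sort, not necessarily the one we used.

```python
from graphlib import TopologicalSorter


def batched(items, size=500):
    """Yield consecutive slices of at most `size` items."""
    if size < 1:
        raise ValueError("size must be positive")
    for start in range(0, len(items), size):
        yield items[start:start + size]


def execution_order(prerequisites):
    """Return story IDs ordered so every story comes after its prerequisites.

    `prerequisites` maps a story ID to the set of IDs it depends on.
    Raises graphlib.CycleError if the dependencies are circular.
    """
    return list(TopologicalSorter(prerequisites).static_order())
```

For example, `execution_order({"S3": {"S1", "S2"}, "S2": {"S1"}, "S1": set()})` places `S1` first, then `S2`, then `S3`.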

Challenges we ran into

- LLM response consistency: LLMs sometimes returned malformed JSON. Solution: Implemented robust parsing with regex fallbacks and Pydantic validation

- GitHub API rate limits: Large repositories hit rate limits quickly. Solution: Implemented batch processing and parallel execution with proper rate limit handling

- File path validation: GitHub repository URLs came in multiple formats. Solution: Built flexible URL parsing supporting https://github.com/owner/repo, owner/repo, and other variations

- Context window limits: File contents exceeded model limits. Solution: Implemented recursive context window compression

- VS Code extension integration: Tracking developer activity required careful permission handling. Solution: Designed a lightweight extension that respects user privacy while providing useful context. Developers can remove irrelevant activity that was wrongly marked as productive, but they can't make things up. This rewards honesty by automating documentation.

- CORS configuration: Frontend-backend communication required careful CORS setup. Solution: Configured Flask-CORS with proper origin whitelisting
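
Two of the fixes above, the regex fallback for malformed LLM replies and the flexible repository URL parsing, can be sketched as follows. These are illustrative stand-ins rather than our exact code: the function names are hypothetical, and accepting a trailing slash or a `.git` suffix is an assumption about what "other variations" covers.

```python
import json
import re

_REPO = re.compile(
    r"^(?:(?:https?://)?(?:www\.)?github\.com/)?"
    r"(?P<owner>[\w.-]+)/(?P<repo>[\w.-]+?)(?:\.git)?/?$"
)


def extract_json(text):
    """Parse an LLM reply as JSON, falling back to the outermost {...} span."""
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        # Greedy match from the first "{" to the last "}" drops surrounding chatter.
        match = re.search(r"\{.*\}", text, re.DOTALL)
        if match is None:
            raise ValueError("no JSON object found in reply")
        return json.loads(match.group(0))


def parse_repo(ref):
    """Return (owner, repo) from a full GitHub URL or an owner/repo shorthand."""
    match = _REPO.match(ref.strip())
    if match is None:
        raise ValueError(f"unrecognized repository reference: {ref!r}")
    return match["owner"], match["repo"]
```

The object recovered by `extract_json` would still go through schema validation afterwards, since syntactically valid JSON can be missing required fields.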

Accomplishments that we're proud of

- End-to-end automation: Successfully automated the epic-to-story pipeline, reducing manual work from hours to minutes

- Prudent LLM usage: A cheap first pass identifies relevant files by name before the more expensive step of highlighting specific code sections

- Production-ready error handling: Robust retry logic, exponential backoff, and graceful degradation

- Clean architecture: Modular design with clear separation of concerns, making the codebase maintainable and extensible

- Developer experience: VS Code extension provides seamless context re-entry, helping developers stay productive

- Beautiful UI: Modern, responsive frontend with smooth animations and intuitive navigation

- Comprehensive testing: Detailed test scripts and documentation for each pipeline stage

What we learned

- LLM prompt engineering: Crafting effective prompts is critical for consistent, useful outputs

- API integration patterns: Best practices for handling rate limits, authentication, and error recovery across multiple services

- Batch processing strategies: How to efficiently process large datasets while respecting API constraints

- Type safety with Pydantic: Using Pydantic models for validation improved reliability and developer experience

- Concurrent programming: Leveraging Python's ProcessPoolExecutor for parallel API calls significantly improved performance

- Full-stack coordination: Managing state and data flow between React frontend, Flask backend, and MongoDB database

- VS Code extension development: Understanding the extension API and creating useful developer tools

- User experience design: Balancing automation with user control, ensuring PMs and developers can review and refine AI-generated content

What's next for Apollo

- Advanced story refinement: Allow PMs to refine AI-generated stories with follow-up prompts

- Dependency detection: Automatically detect and visualize story dependencies based on code analysis

- Smart assignment: AI-powered story assignment based on developer skills and workload

- Progress tracking: Automatic progress updates by analyzing GitHub commits and pull requests

- Multi-repository support: Enhanced handling of monorepos and cross-repository dependencies

- Custom LLM fine-tuning: Fine-tune models on project-specific data for better accuracy

- Integration expansion: Support for additional tools like Linear, Asana, and Azure DevOps

- Analytics dashboard: Advanced metrics and insights for project health and team productivity

- Mobile app: Native mobile applications for PMs and developers to manage work on the go
