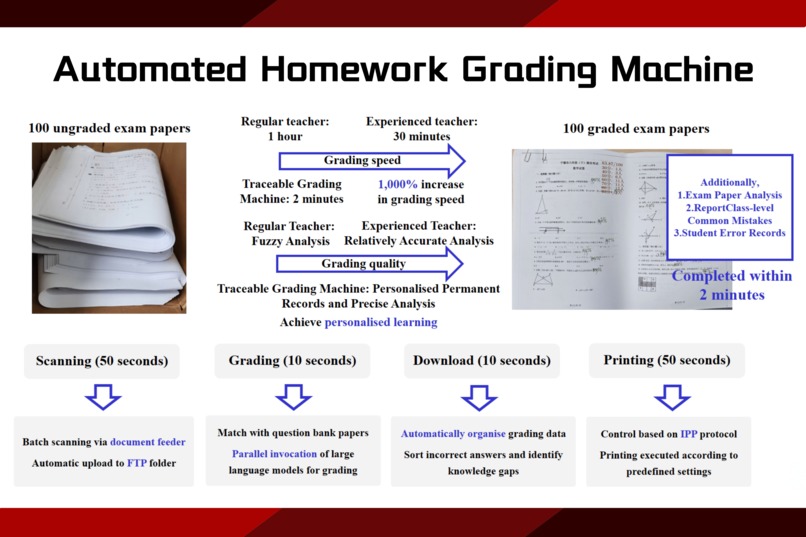

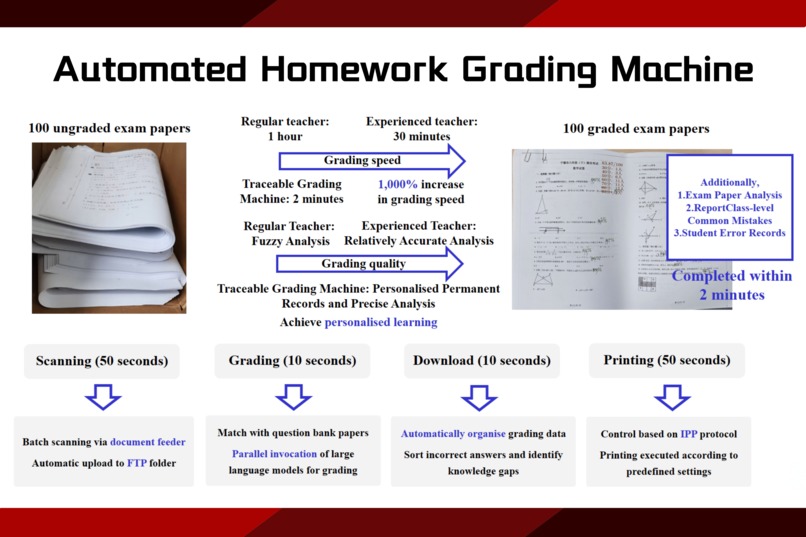

Grades 100 exam papers in 2 minutes: scan, grade, download, and print with traceability.

Grades 100 papers in 2 minutes with 99.9% accuracy. Scan, grade, print, and analyse—all in one intelligent machine.

Automated Homework Grading Machine: Grades 100 papers in 2 minutes with 99.9% accuracy. Fast, reliable, and ready for classroom use.

Inspiration

This project was inspired by a formative academic experience during the early years of university. In contrast to high school, university-level mathematics courses often condense an immense amount of content—up to ten times as much—into a single semester. Lectures are fast-paced, leaving many students struggling to digest and internalise new material in time. This disconnect between instruction and independent practice created a noticeable gap. To address this, comprehensive solution sets were compiled each week, accompanied by dedicated problem-solving sessions to support peer learning. Over time, the critical role of detailed grading and timely feedback became increasingly clear.

One particular experience proved especially impactful: after submitting a two-page exam response, every mistake was meticulously identified and corrected by the instructor. The clarity and care in this feedback were profoundly motivating, directly contributing to improved performance and deeper engagement with the material. This moment underscored the transformative power of personalised, constructive feedback.

Motivated by that experience, the idea emerged to translate such meaningful interactions into a scalable, technology-driven solution. By automating grading and error analysis through intelligent systems, the aim is to extend the benefits of personalised feedback to more students—supporting better understanding, more efficient learning, and the development of positive study habits. At its core, educational technology should bridge the gap between teacher guidance and student growth with both precision and empathy.

What it does

The Automated Homework Grading Machine is an intelligent classroom terminal that grades paper-based assignments quickly and accurately. By combining large language model (LLM) technology with dedicated hardware, it grades 100 exam papers within two minutes, a task that typically takes an experienced teacher 30 minutes or more. Beyond speed, the system provides class-level and individual-level analysis, identifying common errors and tracing specific student mistakes to support personalised learning. It automatically generates customised error sets, prints personalised paper-based review materials, and offers intuitive interfaces for teachers to access performance data. With grading accuracy exceeding 97% and over 100,000 papers already processed across ten pilot schools, the system has been shown to improve error coverage, raise class average scores, and reduce the workload of educators while enhancing learning outcomes for students.
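As a quick sanity check on the throughput figures above, the arithmetic can be written out as a short Python sketch. The variable names are illustrative only, and the teacher baseline uses the lower bound of "30 minutes or more", so the speedup shown is a minimum rather than a measured value.

```python
# Figures quoted in the text above; names are illustrative, not from the product.
PAPERS = 100
MACHINE_SECONDS = 2 * 60      # "100 exam papers within two minutes"
TEACHER_SECONDS = 30 * 60     # lower bound: "30 minutes or more"

seconds_per_paper = MACHINE_SECONDS / PAPERS          # time budget per paper
papers_per_minute = PAPERS / (MACHINE_SECONDS / 60)   # sustained throughput
min_speedup = TEACHER_SECONDS / MACHINE_SECONDS       # at least this much faster

print(f"{seconds_per_paper:.1f} s per paper")
print(f"{papers_per_minute:.0f} papers per minute")
print(f"at least {min_speedup:.0f}x faster than manual grading")
```

In other words, scanning, recognition, evaluation, and printing together have a budget of a little over one second per paper.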

How we built it

The development of the Automated Homework Grading Machine followed a hardware-software co-design approach, combining large-scale foundation models with intelligent hardware to create an efficient, accurate, and scalable system for paper-based homework grading. The system covers the full grading pipeline: paper scanning, intelligent recognition, automated evaluation, personalised feedback, and structured error sorting and analysis. By using a hybrid architecture of local intelligent terminals and cloud-based computation, the system enables real-time, automated grading of handwritten student work at classroom scale.

Technically, the system integrates the multi-modal capabilities of large language models, enabling it to process question types that traditional OCR methods struggle with—such as geometric diagram questions, matching problems, and other visual-spatial tasks. Unlike conventional solutions, the system is not limited to textual recognition; it has semantic understanding abilities that allow it to interpret students’ reasoning processes and identify weak knowledge points with deeper contextual insight.

The hardware form factor is built around a printer-grade terminal, rather than consumer devices like mobile phones or flatbed scanners. This decision was crucial: it allows batch input of physical test papers, high-speed processing, and immediate printing of grading results directly onto each paper. This not only improves operational efficiency but also ensures traceability, reduces teacher workload, and preserves clear records of each student’s learning trajectory.

The grading workflow has been significantly streamlined—test papers are fed into the device with a single action, and detailed grading outputs are automatically generated, requiring no manual intervention. Based on OCR technology and the Wenxin large model, the system accurately evaluates a wide range of question types. It does more than mark errors: it provides interpretive feedback, analytical comments, and identifies patterns of misunderstanding, enabling teachers to better understand and respond to student needs.

One of the key features is the system’s ability to automatically organise error records, generating customised error sets and blind spot reports for individual students. This facilitates targeted review and helps students strengthen weak areas effectively. These reports can be printed and distributed as personalised study materials, integrating seamlessly into everyday classroom use.
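The error-record organisation described above can be illustrated with a minimal Python sketch. It assumes each graded answer is available as a simple record; the `GradedAnswer` type, its fields, and both helper functions are hypothetical names chosen for illustration, not the system's actual data model.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class GradedAnswer:
    """One graded response (hypothetical record shape)."""
    student_id: str
    question_id: str
    topic: str
    correct: bool


def build_error_sets(answers):
    """Group the questions each student got wrong, in grading order."""
    error_sets = defaultdict(list)
    for a in answers:
        if not a.correct:
            error_sets[a.student_id].append(a.question_id)
    return dict(error_sets)


def common_errors(answers, top_n=3):
    """Rank questions by how many students missed them (class-level view)."""
    counts = Counter(a.question_id for a in answers if not a.correct)
    return counts.most_common(top_n)


answers = [
    GradedAnswer("s01", "q1", "fractions", True),
    GradedAnswer("s01", "q2", "geometry", False),
    GradedAnswer("s02", "q1", "fractions", False),
    GradedAnswer("s02", "q2", "geometry", False),
    GradedAnswer("s03", "q2", "geometry", False),
]

error_sets = build_error_sets(answers)
class_view = common_errors(answers)
print(error_sets)
print(class_view)
```

The per-student dictionary corresponds to a personalised error set that could be printed as review material, while the ranked list is the kind of class-level summary a teacher might use to choose which question to revisit.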

Importantly, the system’s model training, cloud deployment, and interface development benefited from the technical support provided through I.D.E.A. Hackathon 2025. Resources such as accessible model APIs, computing infrastructure, and expert feedback during the event played a vital role in refining our technical implementation and accelerating prototype-to-product conversion. This support helped ensure that the final solution is not only technically robust but also practically deployable in real educational environments.

With its full-stack automation, cross-modal grading capabilities, and teacher-oriented design, the Automated Homework Grading Machine offers a classroom-ready solution that embodies both technological depth and educational relevance.

Challenges we ran into

Developing the Automated Homework Grading Machine involved a range of complex challenges at both the technical and application levels. One of the most fundamental difficulties was accurately interpreting handwritten student responses. Unlike digital text, handwritten content—particularly from primary school students—varies greatly in style, spacing, structure, and clarity. To address this, we had to tightly integrate advanced optical character recognition (OCR) with large-scale language models capable of contextual reasoning. The system needed not only to recognise individual characters but also to understand the spatial layout and semantics of diagrams, matching lines, and geometric figures, which go far beyond the capabilities of conventional OCR.

A key breakthrough came from incorporating multi-modal capabilities of large foundation models, allowing the system to process visual structures alongside text. For example, the grading of geometry questions, matching problems, and visual logic puzzles required the model to “see” and “understand” visual elements—not just read them. This was made possible by leveraging technologies provided through I.D.E.A. Hackathon 2025, including access to open model APIs and computational resources that supported real-time inference and visual-semantic alignment. These tools enabled us to deploy a system capable of handling not only textual content but also complex handwritten visual input at high speed and accuracy.

Another major challenge was building a traceable and explainable grading pipeline. Teachers need more than a final score—they require insight into why an answer was incorrect, where the student went wrong, and how to provide meaningful, pedagogically aligned feedback. This necessitated a dual-layer system: a fast grading backend supported by logic rules and model prompts, and a frontend capable of displaying annotated feedback directly on the physical test paper. Designing this pipeline while ensuring real-time responsiveness and model reliability added significant complexity to both the software stack and hardware integration.
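One way to picture the dual-layer idea is a fast rule check that falls back to a model call and records which layer produced the verdict. The Python sketch below is an assumption-laden illustration: `GradeResult`, `rule_grade`, `grade`, and `stub_model` are hypothetical names, and the stub stands in for a real LLM call, which is not shown here.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class GradeResult:
    correct: bool
    source: str        # "rule" or "model": which layer decided
    explanation: str   # text that could be printed next to the answer


def _normalise(text: str) -> str:
    """Drop whitespace and case so 'X = 4' and 'x=4' compare equal."""
    return "".join(text.split()).lower()


def rule_grade(expected: str, answer: str) -> Optional[GradeResult]:
    """Fast path: accept exact matches; return None when the rule cannot decide."""
    if _normalise(answer) == _normalise(expected):
        return GradeResult(True, "rule", "Matches the answer key.")
    return None  # may still be an equivalent form, so defer to the model


def grade(expected: str, answer: str,
          model_grade: Callable[[str, str], Tuple[bool, str]]) -> GradeResult:
    """Try the rule layer first, then fall back to the model layer."""
    result = rule_grade(expected, answer)
    if result is not None:
        return result
    correct, why = model_grade(expected, answer)
    return GradeResult(correct, "model", why)


def stub_model(expected: str, answer: str) -> Tuple[bool, str]:
    """Placeholder for an LLM call (assumption: returns a verdict and a reason)."""
    return False, f"Expected {expected!r}, got {answer!r}."


fast = grade("x = 4", "X=4", stub_model)
slow = grade("x = 4", "x = 5", stub_model)
print(fast)
print(slow)
```

Keeping the `source` and `explanation` fields on every result is what makes the output traceable: a teacher can see not only the verdict but also which layer reached it and why.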

Hardware-wise, ensuring synchronisation between scanning, recognition, and printing with minimal latency presented additional engineering challenges. The device needed to process multiple papers in batches, match each to the correct student profile, apply model predictions, and print feedback with precision—all within classroom time constraints.
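The step of matching each scanned paper to a student profile can be sketched as follows. This is a simplified illustration under stated assumptions: the `ScannedPaper` type, the roster as a set of IDs, and the policy of setting aside unmatched or duplicate papers are all hypothetical, not a description of the device's actual behaviour.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass
class ScannedPaper:
    page_id: int
    recognised_student_id: str  # as read from the paper; may be blank or wrong


def match_batch(papers: List[ScannedPaper],
                roster: Set[str]) -> Tuple[Dict[str, ScannedPaper], List[ScannedPaper]]:
    """Assign each paper to a roster entry; set aside anything ambiguous."""
    matched: Dict[str, ScannedPaper] = {}
    unmatched: List[ScannedPaper] = []
    for paper in papers:
        sid = paper.recognised_student_id.strip().upper()
        if sid in roster and sid not in matched:
            matched[sid] = paper
        else:
            # Unknown ID, blank ID, or a second paper claiming the same student.
            unmatched.append(paper)
    return matched, unmatched


roster = {"S01", "S02", "S03"}
batch = [
    ScannedPaper(1, "s01"),
    ScannedPaper(2, " S02 "),
    ScannedPaper(3, ""),       # ID not recognised
    ScannedPaper(4, "S01"),    # duplicate claim on S01
]

matched, unmatched = match_batch(batch, roster)
print(sorted(matched))
print([p.page_id for p in unmatched])
```

Separating the ambiguous papers instead of guessing keeps printed feedback from landing on the wrong student's sheet, which matters when results are printed directly onto each paper.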

These challenges collectively shaped the system into a robust, field-deployable educational tool. By tackling low-level visual recognition problems, high-level semantic evaluation, and practical traceability requirements simultaneously, we gained a deeper understanding of what it means to create AI systems that truly work in real educational environments. Importantly, support from I.D.E.A. Hackathon 2025 provided us with the model access, tooling, and mentorship that accelerated our technical breakthroughs and ensured our final product could deliver real value in schools.

Accomplishments that we're proud of

We successfully transformed our concept into a fully productised solution now deployed in ten schools, where it has graded over 100,000 handwritten exam papers with an accuracy rate exceeding 97%. The system has reduced grading time from 60 minutes to just 2 minutes per 100 papers, significantly improving teaching efficiency and easing the burden on educators. The product demonstration video has received over 100,000 views, and more than 100 teachers have proactively reached out to request trial access, reflecting strong recognition from the education community.

The project has received extensive recognition in national and international competitions:

- Champion, 2024 AI + Hardware Innovation Competition
- Grand Prize, 2024 Dahua Cup University Technical Innovation Competition
- Second Prize, 3rd China Generative AI Application Innovation Challenge
- First Prize, Zhejiang University Campus Round of the 10th China Postgraduate Smart City Technology and Creative Design Competition
- Second Prize, East China Regional Competition of the China Collegiate Computing Competition (AI Creativity Track)
- Second Prize, Zhejiang University Preliminary Round of the 5th China Graduate AI Innovation Competition
- Third Prize, PaddlePaddle AGI Hackathon

The team was also recognised with a Silver Award in the 2021 China International College Students’ “Internet+” Innovation and Entrepreneurship Competition, as well as prizes in earlier years:

- First Prize, 4th Simulation Round of the ASABE Agricultural Robotics Competition (USA)
- Third Prize, China Agricultural Robotics Competition
- Third Prize, 12th National Undergraduate Mathematics Competition (Non-Mathematics Major Category)

In AI industry-related competitions, we were named an Outstanding Innovation Team in the Intel AI Innovation Application Competition, and earned the Third Prize in the Individual Track of the competition’s Grand Final. These recognitions reflect the team’s continued excellence and innovation across AI, hardware, and interdisciplinary fields.

The project has also produced a number of intellectual property outcomes, including patents such as “Comfortable Foldable Desk”, “A Method and System for Intelligent Correction of Paper-Based Assignments”, “A Smart Clock with Air Purification Function”, “A Method and System for Adaptive Measurement of Three-Dimensional Plant Morphology”, and “Smart Desk Lamp”. In addition, we have registered a software copyright titled “MeowMeow WoofWoof Homework Grading Software”, along with its electronic version.

In academic research, three papers from the project team have been accepted at international conferences. The paper “Integrated Optimization of Large Language Models: Synergizing Data Utilization and Compression Techniques” was accepted by the 2024 4th International Conference on Electronic Information Engineering and Computer Science, focusing on training large models with reduced data and storage requirements—closely aligned with our work. The paper “Rhyme-aware Chinese Lyric Generator Based on GPT”, accepted at the 4th International Conference on Advanced Algorithms and Neural Networks, connects to our grading applications in primary language education. The third paper, “BOANN: Bayesian-Optimized Attentive Neural Network for Classification”, accepted by the 2024 3rd International Conference on Image Processing, Computer Vision and Machine Learning, demonstrates improvements in model accuracy relevant to image recognition tasks.

In addition, the project was invited for a technical sharing session at the 2024 Intel AI Innovation Workshop, further affirming recognition from industry experts. These accomplishments—spanning deployment, competitions, intellectual property, and research—demonstrate the comprehensive capabilities and practical value of the system.

What we learned

Building the Automated Homework Grading Machine taught us that truly impactful educational technology must balance technical innovation with deep empathy for real classroom needs. While high accuracy and speed are critical, we learned that trust, usability, and alignment with actual teaching workflows are just as essential. Teachers need more than a powerful tool—they need one that is transparent and intuitive, one that they can understand and rely on in daily practice.

We also came to understand that automation in education should never aim to replace educators. Instead, its true value lies in empowering them—by saving time on repetitive tasks, offering clearer insights into student learning, and supporting more personalised and effective instruction. This project reaffirmed that when AI is developed with care, clarity, and purpose, it can become a meaningful partner in the learning process.

Participating in I.D.E.A. Hackathon 2025 reinforced these lessons at a deeper level. The technical resources, mentorship, and collaborative atmosphere provided by the event allowed us to sharpen both the practicality and ethical awareness of our system. Through the Hackathon, we not only improved our solution’s technical performance, but also re-anchored it in the real-world needs of educators and students. We are truly grateful for the opportunity to grow with the support of such a forward-thinking platform.

What's next for An Automated Homework Grading System

Looking ahead, we plan to expand from pilot deployments to large-scale implementation across more than 200 schools in cities such as Hangzhou and Wuhan. This next phase will focus on refining system performance in diverse teaching contexts, while enhancing the personalisation of feedback and expanding subject coverage beyond mathematics and English.

We aim to strengthen our partnerships with educational institutions and suppliers to support district-wide adoption through centralised procurement. At the same time, we will continue to invest in R&D—improving our core grading algorithms, optimising hardware usability, and deepening the integration of learning analytics into school teaching workflows.

With IP protections in place and proven classroom effectiveness, we are committed to scaling our system as a cornerstone of data-driven, equitable education. Our long-term vision is to empower every teacher with intelligent tools that not only save time, but transform how learning is supported, measured, and improved.

Built With

- gemini-2.5-pro-preview-05-06

- gemma-3-4b-it
