-

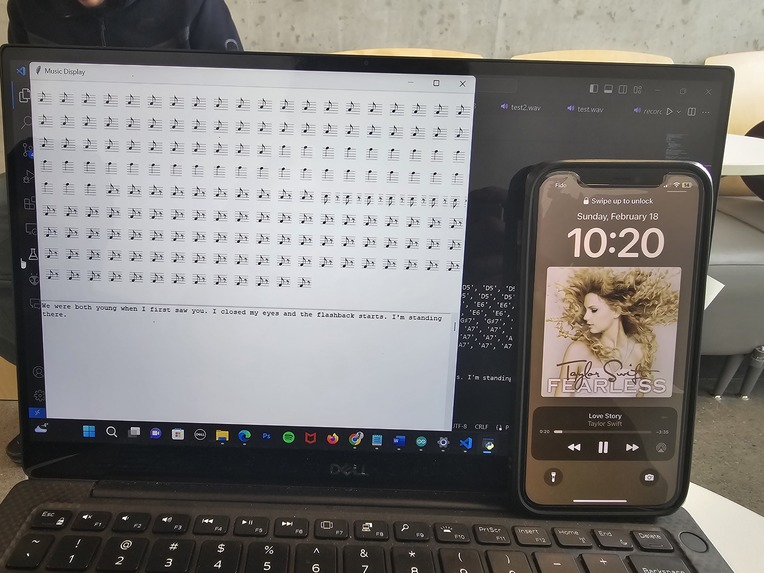

Running prototype with song audio being recorded by software for further analysis and interpretation.

-

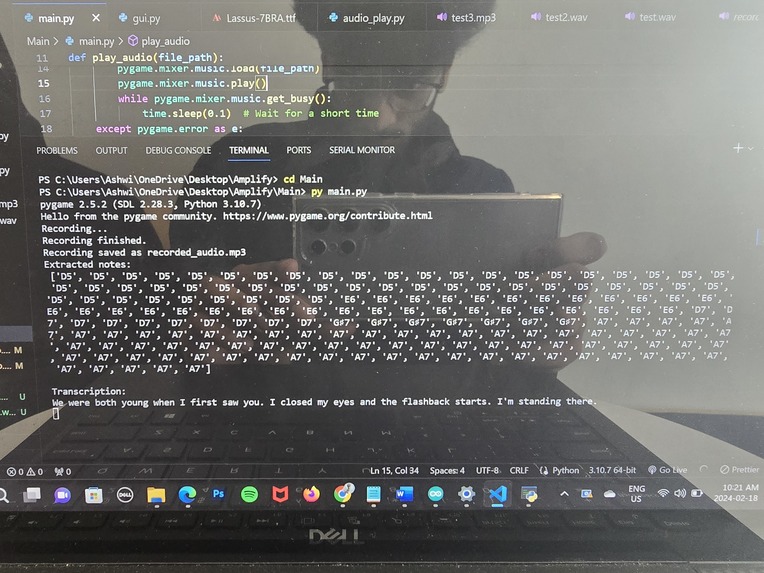

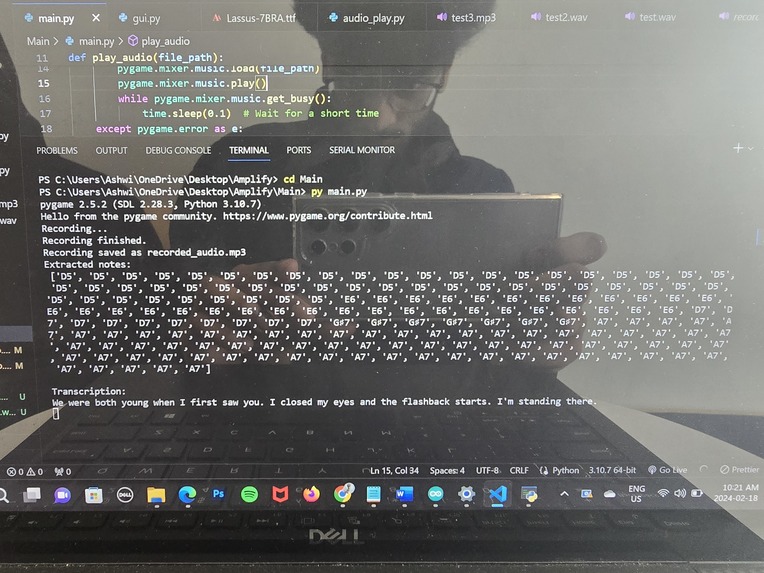

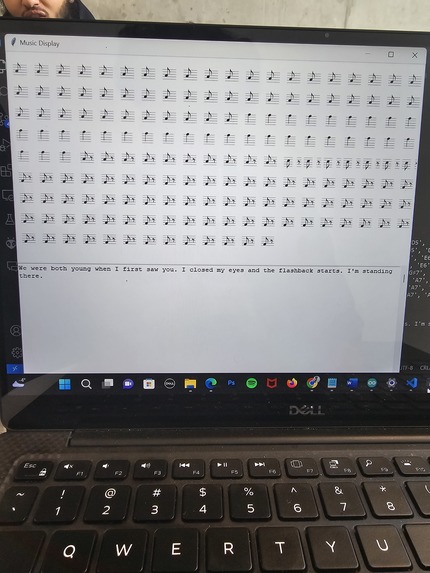

Collected music data showing the musical notes detected within sub-intervals and the transcribed lyrics. The images also include informational messages shown to the user.

-

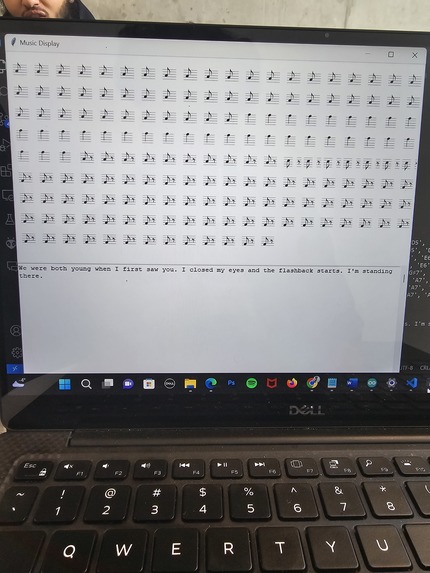

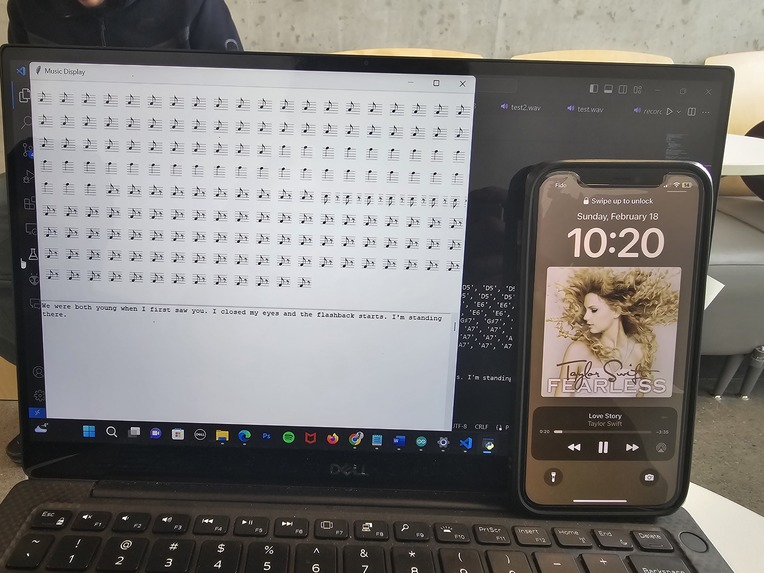

Generated music sheet and transcribed lyrics.

-

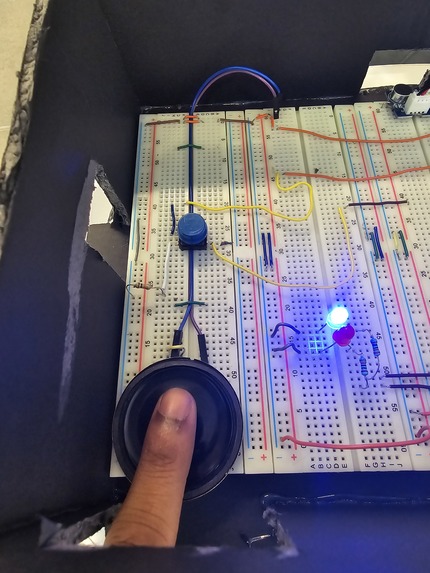

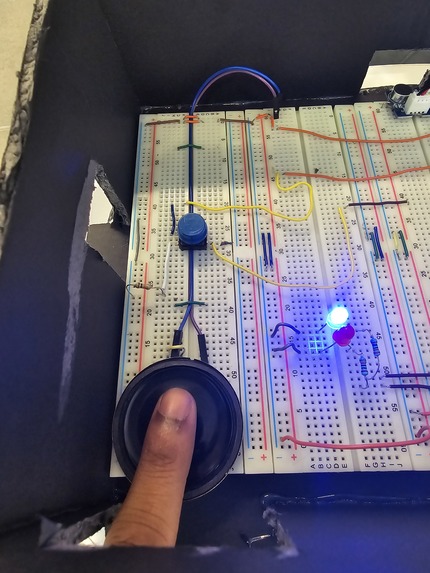

Dexterity output, which translates audio input into vibrational output so the user can "feel" the music.

-

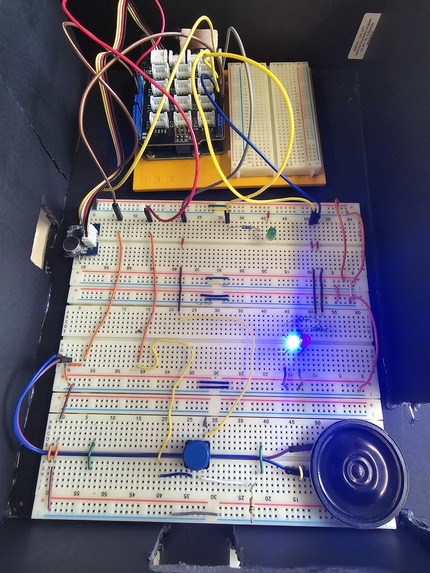

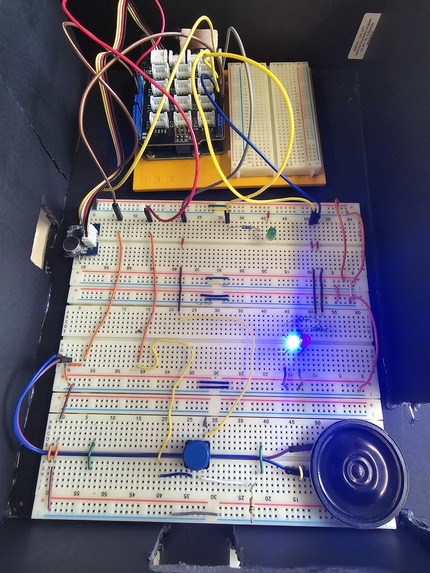

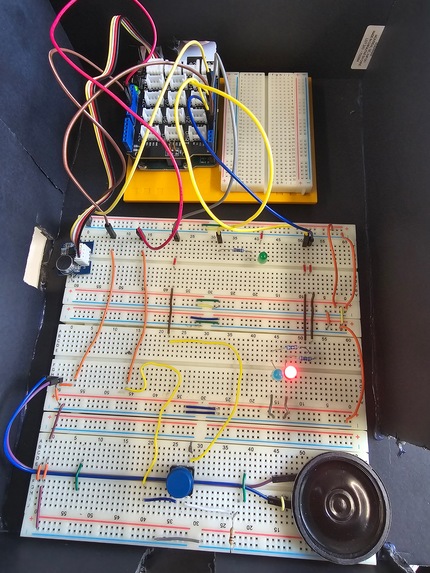

Circuit in the "ON" state, showing the audio-to-dexterity circuit, the on/off state circuit, and the audio visualizer circuit.

-

Final product portfolio packaged within a case.

-

Full implementation of the solution, with hardware and software components working in parallel.

-

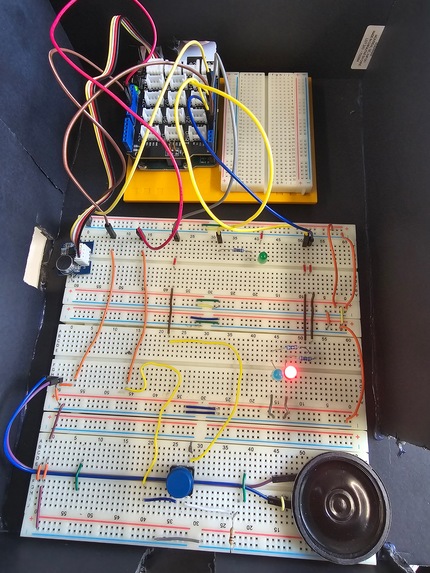

Circuit in the "OFF" state. In this state, the circuit does not collect audio or perform input-to-output conversions.

Inspiration

Our project originated from a need to help people who are hard of hearing. We personally know people who are deaf, so we wanted to create a project that makes them feel more included in the world of music.

What it does

Our project lets the user record a song and then produces a transcribed set of lyrics and a sheet of musical notes. In addition, the piezo buzzer produces vibrations that change with the music, which allows the user to feel it.
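As a rough illustration of detecting notes within sub-intervals, the sketch below splits a recording into fixed-length windows and estimates one frequency per window. It assumes a single clean tone per window and uses zero-crossing counting as a stand-in technique; the method Amplify actually uses is not described here, and real songs would likely need something more robust, such as an FFT. The function names and the 0.25 second window are hypothetical.

```python
import math


def estimate_frequency(samples, sample_rate):
    """Estimate the frequency of a roughly periodic signal.

    Counts upward zero crossings (negative to non-negative) and divides
    by the window duration. Only reasonable for a single clean tone.
    """
    crossings = 0
    for prev, cur in zip(samples, samples[1:]):
        if prev < 0 <= cur:
            crossings += 1
    duration = len(samples) / sample_rate
    return crossings / duration if duration > 0 else 0.0


def frequencies_per_interval(samples, sample_rate, interval_seconds=0.25):
    """Split the samples into equal sub-intervals and estimate one frequency each."""
    size = int(sample_rate * interval_seconds)
    return [
        estimate_frequency(samples[start:start + size], sample_rate)
        for start in range(0, len(samples) - size + 1, size)
    ]


def sine(freq_hz, seconds, sample_rate):
    """Generate a test tone (stands in for recorded audio in this sketch)."""
    count = int(sample_rate * seconds)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(count)]
```

Feeding it half a second of a 440 Hz tone followed by half a second of an 880 Hz tone should yield four estimates, two near each frequency, with a small error because crossings are counted in whole numbers.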

How we built it

We built the software side using the OpenAI Whisper API to transcribe lyrics. For the hardware, we used an Arduino with a base shield, connected to a breadboard that holds components such as a piezo buzzer, a switch, and a microphone sensor.
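A minimal sketch of the transcription step, assuming the official `openai` Python package and the `whisper-1` model. The helper names `transcribe_lyrics` and `make_openai_client` are hypothetical and not taken from the project's code; the client is passed in as a parameter so the function can be exercised without network access.

```python
def transcribe_lyrics(audio_path, client, model="whisper-1"):
    """Send a recorded audio file to the transcription endpoint and return its text.

    `client` is expected to expose `audio.transcriptions.create`, as the
    OpenAI Python client does.
    """
    with open(audio_path, "rb") as audio_file:
        result = client.audio.transcriptions.create(model=model, file=audio_file)
    return result.text


def make_openai_client():
    """Create a real API client (assumes the `openai` package is installed)."""
    # Imported lazily so the sketch still loads when the package is missing.
    from openai import OpenAI

    # The client reads the OPENAI_API_KEY environment variable by default.
    return OpenAI()
```

With an API key configured, `transcribe_lyrics("song.wav", make_openai_client())` would return the transcribed lyrics as a string; the file name `song.wav` is a placeholder.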

Challenges we ran into

We faced difficulties with wiring up our circuit, reading and mapping the frequencies to a readable scale, and adding a switch to turn the circuit on and off.
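One way to map a detected frequency to a readable scale is the standard equal-temperament formula, which converts a frequency to the nearest note name relative to A4 = 440 Hz. The sketch below shows that conversion, plus a linear rescaling helper similar in spirit to Arduino's `map()`. Both function names are hypothetical, and this is not necessarily the mapping the project used.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def frequency_to_note(freq_hz, a4_hz=440.0):
    """Return the nearest equal-temperament note name, such as "A4"."""
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    # MIDI note 69 is A4; each semitone is a factor of 2 ** (1 / 12).
    midi = round(69 + 12 * math.log2(freq_hz / a4_hz))
    octave = midi // 12 - 1
    return f"{NOTE_NAMES[midi % 12]}{octave}"


def scale_to_range(value, in_min, in_max, out_min, out_max):
    """Linearly rescale a value, clamping it to the input range first."""
    value = max(in_min, min(in_max, value))
    return out_min + (value - in_min) * (out_max - out_min) / (in_max - in_min)


print(frequency_to_note(440.0))    # A4
print(frequency_to_note(261.63))   # C4
```

The rescaling helper could, for example, turn a frequency into a vibration intensity between 0 and 255, although the input range to use is an assumption that would depend on the microphone and buzzer.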

Accomplishments that we're proud of

We are proud of correctly wiring the circuit, using the OpenAI Whisper API to transcribe lyrics, and enabling the user to view a personalized music sheet.

What we learned

We learned how to use the OpenAI Whisper API, build an Arduino circuit with various components connected to it, and record and play back audio files with Python.
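For the audio file handling, here is a small sketch using only Python's standard `wave` and `struct` modules to write and read a mono 16-bit WAV file. Capturing from a microphone or playing through speakers needs a third-party library that is not named here, so this example generates a test tone instead. The function names are hypothetical.

```python
import math
import struct
import wave


def write_tone(path, freq_hz=440.0, seconds=1.0, sample_rate=44100):
    """Write a mono 16-bit WAV file containing a sine tone at half volume."""
    count = int(sample_rate * seconds)
    frames = b"".join(
        struct.pack(
            "<h",
            int(32767 * 0.5 * math.sin(2 * math.pi * freq_hz * i / sample_rate)),
        )
        for i in range(count)
    )
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)      # mono
        wav.setsampwidth(2)      # 2 bytes per sample = 16-bit audio
        wav.setframerate(sample_rate)
        wav.writeframes(frames)


def read_samples(path):
    """Read a mono 16-bit WAV file and return (sample_rate, list of samples)."""
    with wave.open(path, "rb") as wav:
        if wav.getnchannels() != 1 or wav.getsampwidth() != 2:
            raise ValueError("this sketch only handles mono 16-bit files")
        sample_rate = wav.getframerate()
        raw = wav.readframes(wav.getnframes())
    samples = list(struct.unpack(f"<{len(raw) // 2}h", raw))
    return sample_rate, samples
```

The samples returned by `read_samples` are plain integers, so they can be passed to any later analysis step.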

What's next for Amplify

Amplify's next goal is to make the design more portable so that it is accessible to users anywhere. We also want to make the different vibrations more distinct from one another and to build an interactive GUI that gives the user a better experience.
