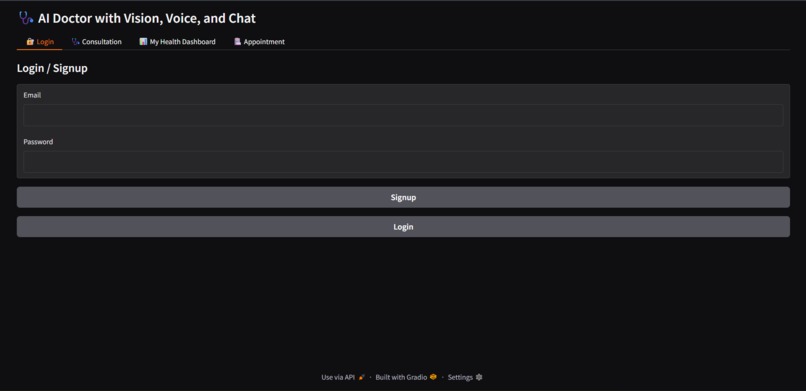

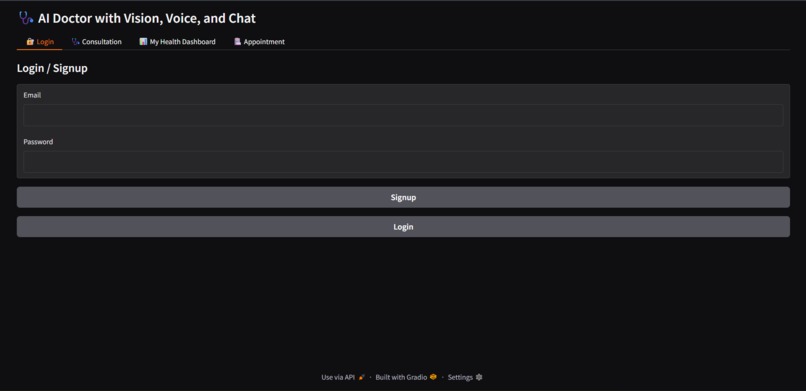

User login and secure access to the Amazon Nova Healthcare Agent platform.

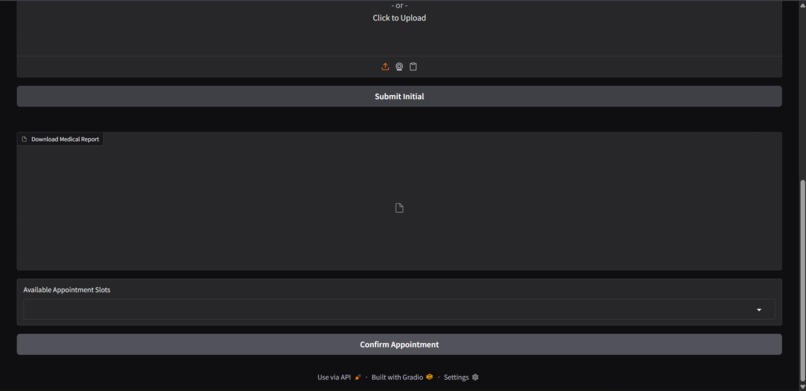

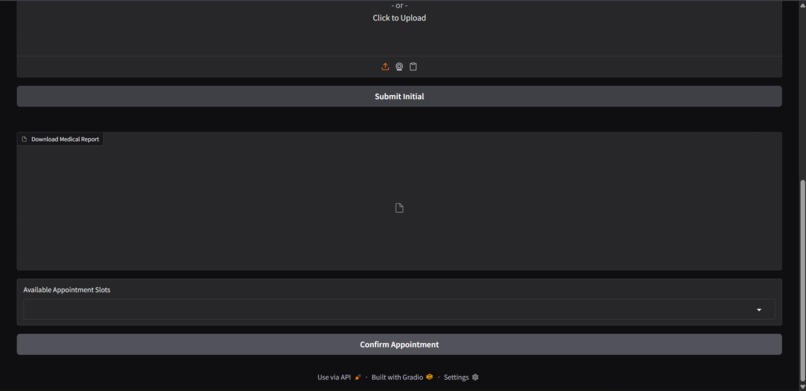

Available doctor slots displayed for booking consultations with automatic confirmation.

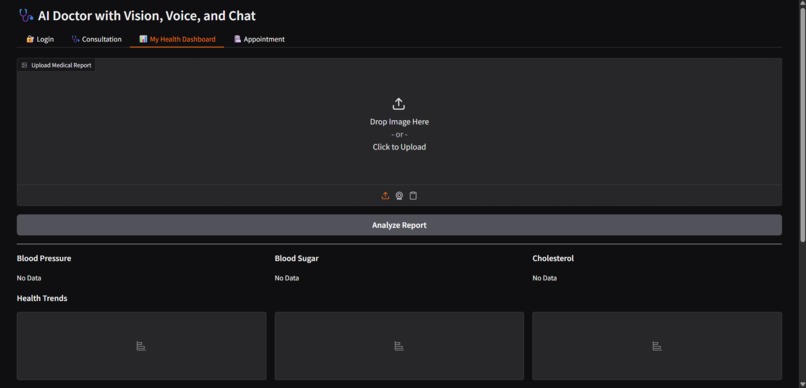

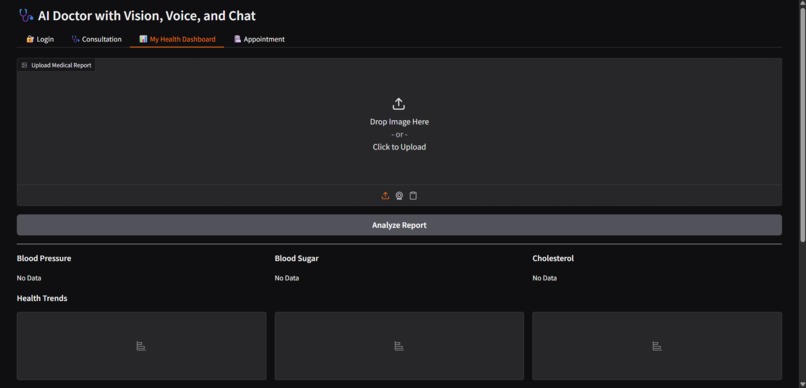

Health dashboard for tracking patient metrics such as blood pressure, blood sugar, and cholesterol over time.

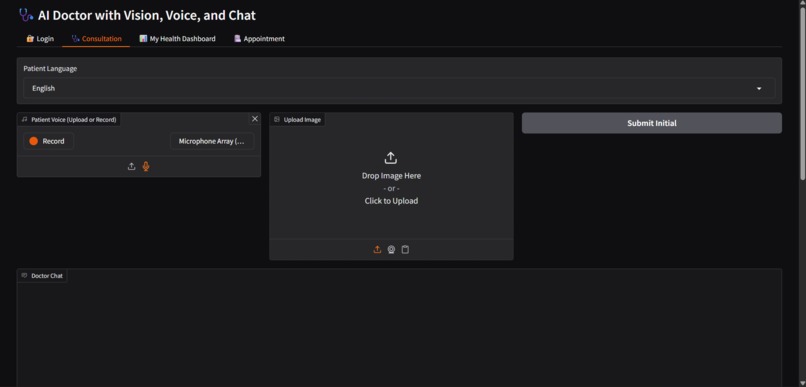

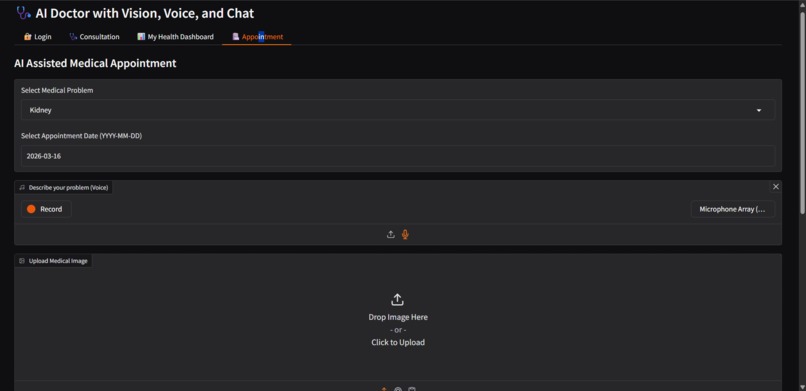

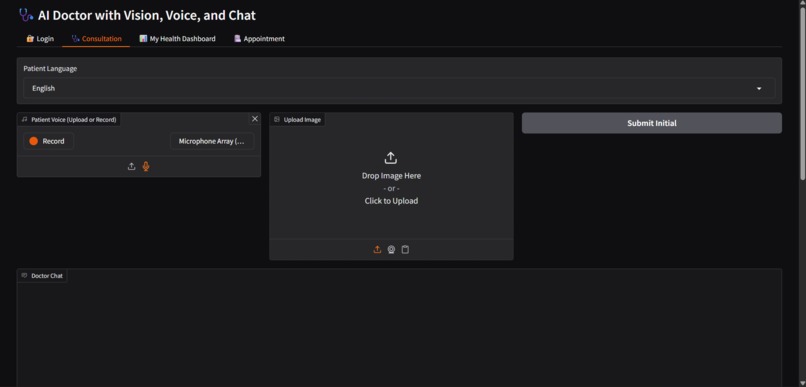

Voice-based symptom input where the patient interacts with the AI doctor using natural language.

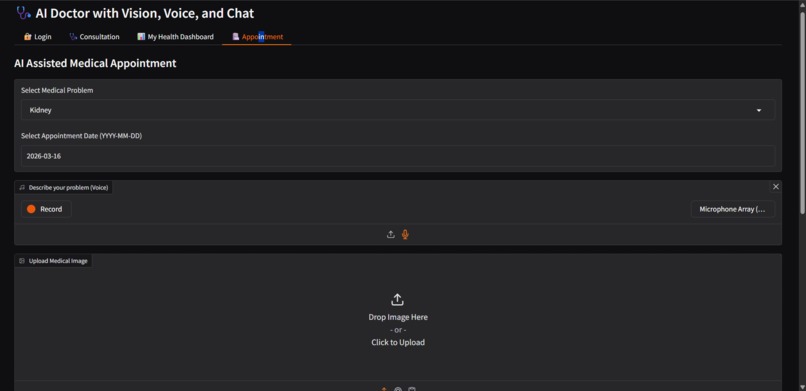

AI-assisted doctor appointment system that detects disease type and recommends the right specialist.

Inspiration

Access to quick and reliable medical guidance is still difficult for many people, especially in rural and multilingual communities. This project was inspired by the idea of building an AI assistant that can help patients understand symptoms, analyze medical data, and guide them toward proper medical care before reaching a hospital.

What it does

Amazon Nova Multimodal Healthcare Agent is an AI-powered healthcare assistant that helps patients using voice interaction, medical image analysis, and health report understanding.

Patients can describe symptoms using voice in multiple languages, upload medical images such as X-rays, and upload health reports. The system analyzes this information to provide diagnosis suggestions, medical advice, and follow-up responses.

It can also generate health dashboards, track previous reports, detect medical emergencies, and automatically schedule doctor appointments with live email notifications.
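As a rough illustration of the emergency check and specialist recommendation, the sketch below uses simple keyword matching. This is an assumption made for the example only: the phrase list, the keyword-to-specialist mapping, and the `triage` function are hypothetical, and the actual system relies on its AI models rather than fixed rules.

```python
# Hypothetical phrase and keyword tables, chosen only for illustration.
EMERGENCY_PHRASES = (
    "chest pain",
    "difficulty breathing",
    "unconscious",
    "severe bleeding",
)

SPECIALIST_BY_KEYWORD = {
    "rash": "dermatologist",
    "skin": "dermatologist",
    "blood sugar": "endocrinologist",
    "joint": "orthopedist",
}


def triage(symptom_text):
    """Flag emergencies first, then suggest a specialist by keyword."""
    text = symptom_text.lower()
    # Emergency phrases take priority over any specialist routing.
    if any(phrase in text for phrase in EMERGENCY_PHRASES):
        return {"emergency": True, "specialist": None}
    # Plain substring matching; a real system would need something more robust.
    for keyword, specialist in SPECIALIST_BY_KEYWORD.items():
        if keyword in text:
            return {"emergency": False, "specialist": specialist}
    return {"emergency": False, "specialist": "general physician"}


urgent = triage("I have chest pain and sweating")
routine = triage("Itchy rash on my arm")
```

A rule-based pre-check like this could run before the model call, so that an obvious emergency is flagged even if the model response is slow or unavailable.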

How we built it

The system uses Amazon Nova Micro for medical reasoning and multilingual conversation, Amazon Nova Lite for medical image analysis, and Amazon Titan Embeddings to build a RAG pipeline that retrieves WHO medical guidelines.
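The split between the two Nova models can be sketched as a small routing function that builds the arguments for a Bedrock Converse call. This is a minimal sketch, not the project's actual code: `build_converse_request` and the inference settings are hypothetical, and the model IDs should be checked against your own account and region (some regions expect an inference profile ID instead).

```python
from typing import Optional

# Bedrock model IDs; verify them for your account and region.
NOVA_MICRO = "amazon.nova-micro-v1:0"  # text-only reasoning and conversation
NOVA_LITE = "amazon.nova-lite-v1:0"    # multimodal, used here for images


def build_converse_request(text: str,
                           image_bytes: Optional[bytes] = None,
                           image_format: str = "png") -> dict:
    """Return keyword arguments for a Bedrock Converse call.

    Text-only turns are routed to Nova Micro; turns that carry an
    image are routed to Nova Lite.
    """
    content = [{"text": text}]
    model_id = NOVA_MICRO
    if image_bytes is not None:
        content.append(
            {"image": {"format": image_format,
                       "source": {"bytes": image_bytes}}}
        )
        model_id = NOVA_LITE
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": content}],
        # Assumed settings for the example, not the project's values.
        "inferenceConfig": {"maxTokens": 512, "temperature": 0.2},
    }


text_request = build_converse_request("I have had a dry cough for three days.")
image_request = build_converse_request("Describe this X-ray.", b"\x89PNG")
```

With AWS credentials configured, the resulting dictionary could be passed to `boto3.client("bedrock-runtime").converse(**text_request)`. The network call is left out so the sketch runs without an AWS account.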

The platform also integrates deep learning models for disease detection, Whisper-based speech recognition for voice input, and ElevenLabs for voice output. The backend is built with Python, LangChain, FAISS, SQLAlchemy, and Gradio, and email notifications are handled using SendGrid.
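The appointment booking step can be illustrated with a small database sketch. To stay self-contained it uses Python's built-in `sqlite3` module rather than SQLAlchemy, and the `slots` table, its columns, and `book_first_free_slot` are hypothetical names chosen for the example, not the project's schema.

```python
import sqlite3


def create_schema(conn):
    """Create a hypothetical table of doctor slots."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS slots ("
        " id INTEGER PRIMARY KEY,"
        " doctor TEXT NOT NULL,"
        " specialty TEXT NOT NULL,"
        " start_time TEXT NOT NULL,"
        " patient_email TEXT)"  # NULL means the slot is still free
    )


def book_first_free_slot(conn, specialty, patient_email):
    """Book the earliest free slot for a specialty; return it or None."""
    with conn:  # commits on success, rolls back on error
        row = conn.execute(
            "SELECT id, doctor, start_time FROM slots"
            " WHERE specialty = ? AND patient_email IS NULL"
            " ORDER BY start_time LIMIT 1",
            (specialty,),
        ).fetchone()
        if row is None:
            return None
        conn.execute(
            "UPDATE slots SET patient_email = ? WHERE id = ?",
            (patient_email, row[0]),
        )
    return {"slot_id": row[0], "doctor": row[1], "start_time": row[2]}


conn = sqlite3.connect(":memory:")
create_schema(conn)
conn.executemany(
    "INSERT INTO slots (doctor, specialty, start_time) VALUES (?, ?, ?)",
    [("Dr. A", "cardiology", "2025-01-10T10:00"),
     ("Dr. B", "cardiology", "2025-01-10T09:00")],
)
booking = book_first_free_slot(conn, "cardiology", "patient@example.com")
```

In the full system, a successful booking would be followed by the confirmation email sent through SendGrid; that call is omitted here because it needs an API key.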

Challenges we ran into

The biggest challenge was integrating multiple AI capabilities in one system, including voice input, medical image analysis, RAG knowledge retrieval, and AI reasoning.

Ensuring that responses were grounded in reliable medical information required implementing a WHO knowledge retrieval pipeline using Titan Embeddings.
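The retrieval step behind this grounding can be sketched in a few lines. The example is a simplification under stated assumptions: `toy_embed` is a bag-of-words stand-in for Titan Embeddings, a sorted list replaces the FAISS index, and the passages are placeholders rather than real WHO text.

```python
import math
from collections import Counter


def toy_embed(text):
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, passages, k=2):
    """Return the k passages most similar to the query."""
    q = toy_embed(query)
    ranked = sorted(passages, key=lambda p: cosine(q, toy_embed(p)),
                    reverse=True)
    return ranked[:k]


def build_grounded_prompt(query, passages, k=2):
    """Put the retrieved excerpts in front of the question."""
    context = "\n".join(f"- {p}" for p in retrieve(query, passages, k))
    return (
        "Answer using only the guideline excerpts below. "
        "If they do not cover the question, say so.\n"
        f"Guidelines:\n{context}\n\nQuestion: {query}"
    )


# Placeholder passages, not actual guideline text.
PASSAGES = [
    "placeholder passage about fever management in children",
    "placeholder passage about hypertension and blood pressure targets",
    "placeholder passage about diabetes and blood sugar monitoring",
]
top = retrieve("how to manage high blood pressure", PASSAGES, k=2)
prompt = build_grounded_prompt("how to manage high blood pressure", PASSAGES)
```

The model only sees the retrieved excerpts plus the question, which is what keeps its answer tied to the source material instead of its general training data.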

Accomplishments that we're proud of

We successfully built a complete multimodal healthcare assistant powered by Amazon Nova models.

The system combines:

- voice-based medical consultation

- medical image analysis

- WHO knowledge retrieval using Titan Embeddings

- health report analysis and dashboards

- automated doctor appointment booking with automatic email confirmation

- emergency detection and hospital guidance

What we learned

This project taught us how to build agentic AI systems using foundation models, combine multimodal inputs, and design RAG pipelines that ground AI responses in trusted medical knowledge.

What's next for Amazon Nova Multimodal Healthcare Agent

In the future, this system can be expanded into a real-world healthcare support platform. Planned improvements include integrating real-time voice conversations with Amazon Nova Sonic, connecting with hospital appointment systems, and improving medical report analysis for better patient monitoring. The goal is to make AI-assisted healthcare guidance more accessible and reliable for patients everywhere.

Built With

- amazon-bedrock

- amazon-nova-lite

- amazon-nova-micro

- amazon-titan-embeddings

- elevenlabs-text-to-speech

- faiss-vector-database

- gradio

- keras

- langchain

- python

- sendgrid-email-api

- sqlalchemy

- sqlite

- tensorflow

- whisper-speech-recognition
