-

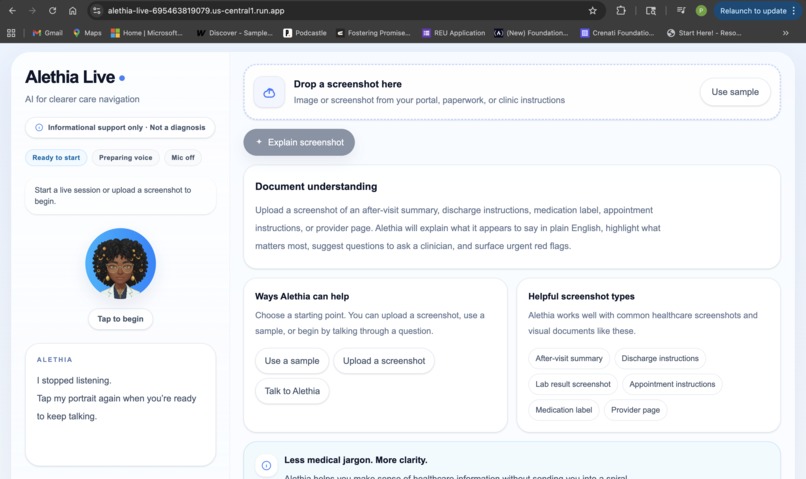

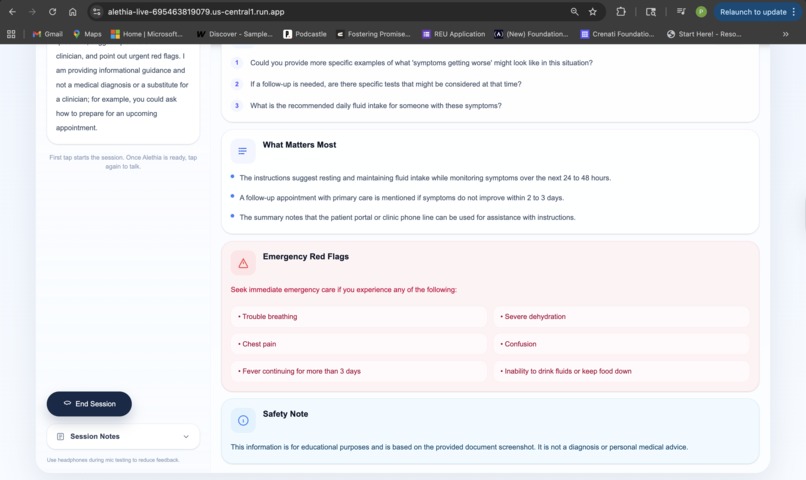

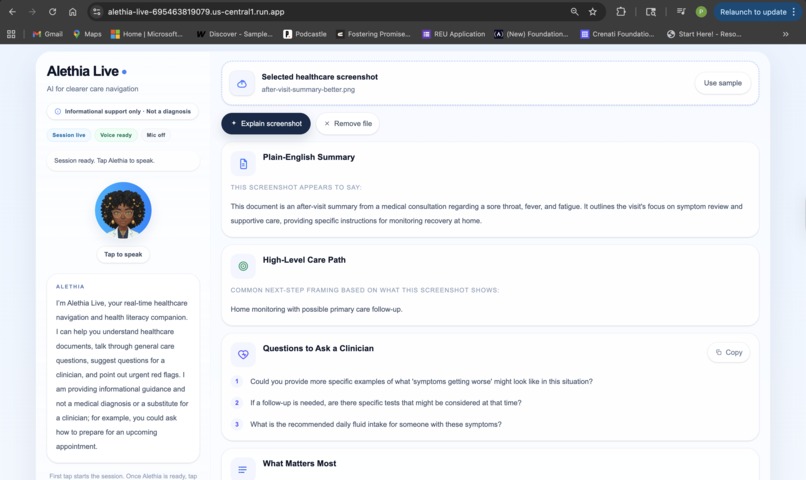

Alethia Live home screen with voice-first and screenshot-based healthcare support.

-

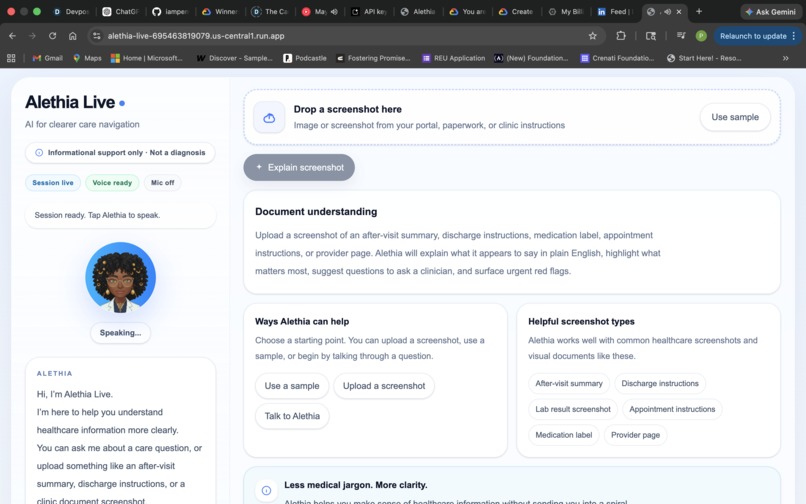

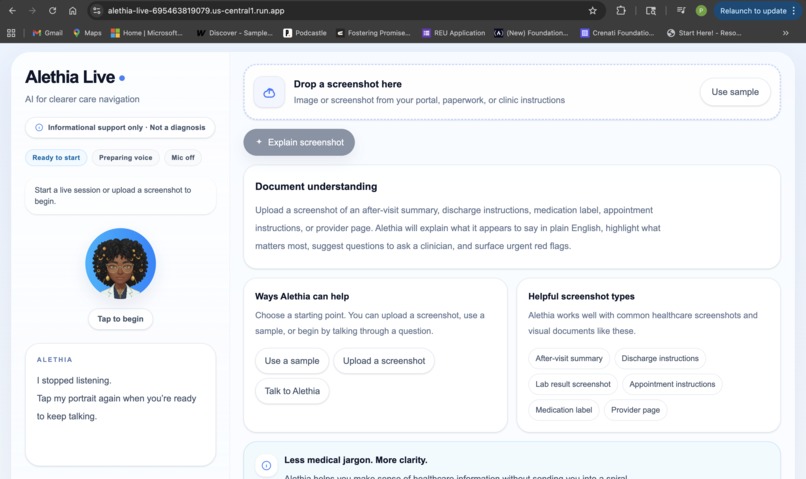

Real-time live voice interaction with Alethia during a healthcare navigation conversation.

-

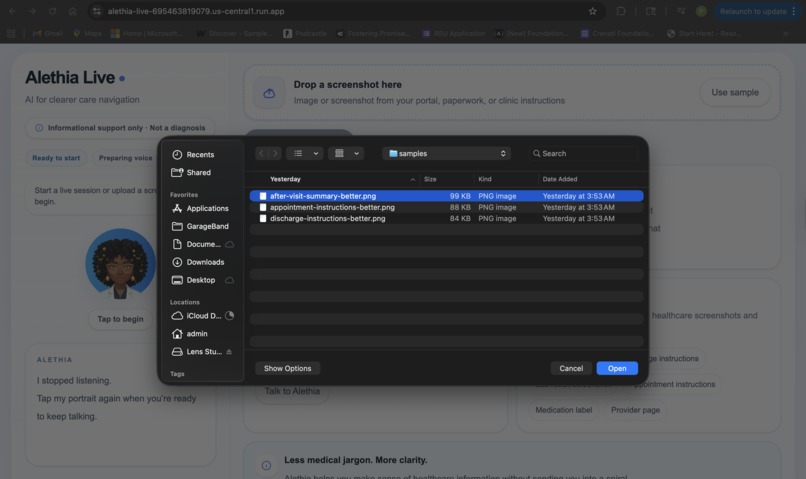

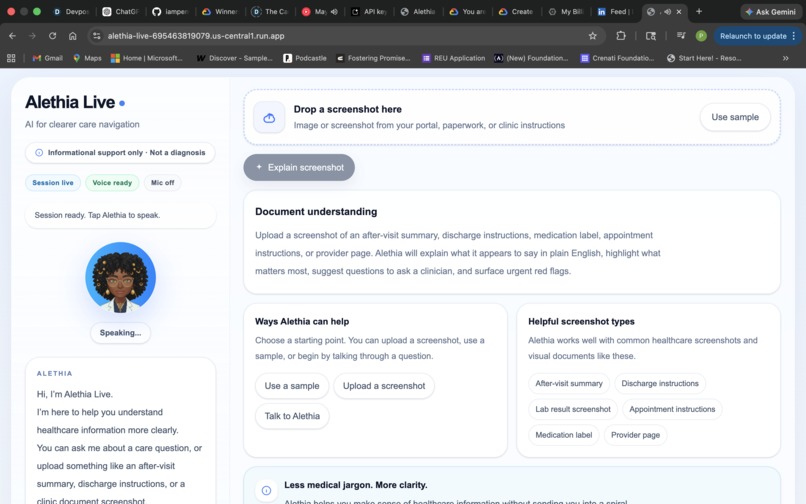

Uploading a healthcare screenshot for plain-English explanation and next-step guidance.

-

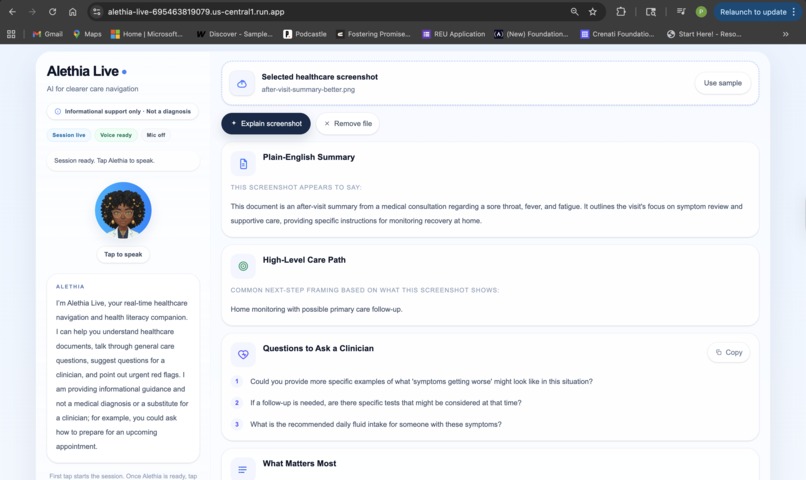

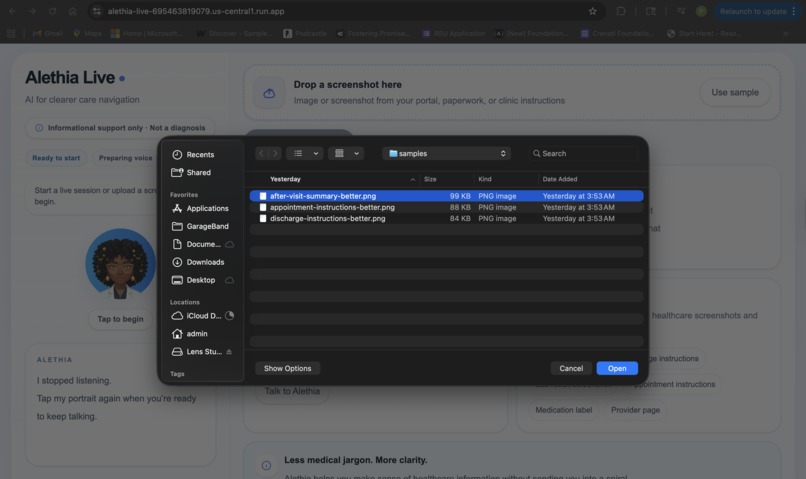

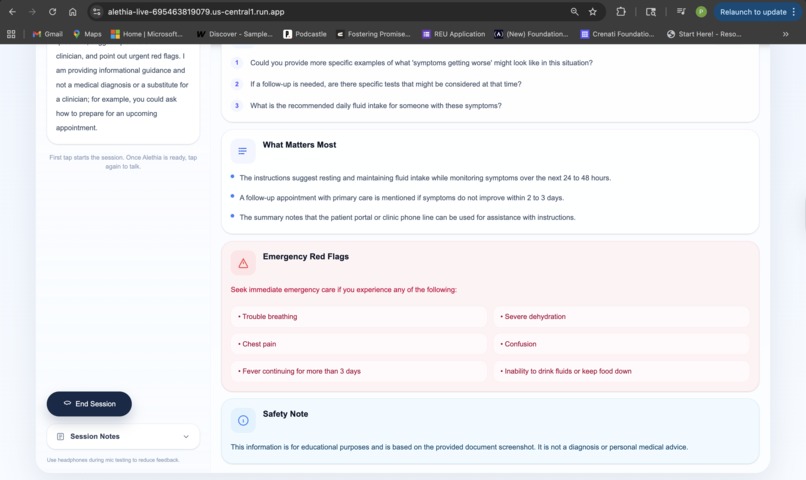

Structured results with plain-English summary, care-path framing, and clinician questions.

-

Safety-forward output that highlights emergency red flags and reinforces informational boundaries.

Inspiration

Healthcare confusion doesn't announce itself. It shows up quietly, in small and stressful moments.

Someone leaves an appointment still unsure what the paperwork actually meant. A parent lies awake wondering whether a fever can wait until morning. A patient stares at discharge instructions and realizes they understand the words, just not what they're supposed to do next.

I built Alethia Live around that gap.

Not another symptom checker. Not a fake digital doctor. Something more grounded and more human: a real-time healthcare navigation companion that helps people understand care information, think clearly about next steps, prepare better questions for their clinician, and recognize when something may need urgent attention.

As someone drawn to human-centered healthcare AI, I kept returning to one tension throughout this project: how do you build something genuinely useful without crossing into unsafe territory? That balance, usefulness without overreach, became the heart of everything.

What It Does

Alethia Live is a multimodal live AI agent for healthcare navigation and health literacy support.

Real-Time Voice Guidance

Speak naturally about a healthcare concern or a confusing care situation. Alethia Live listens, asks a few clarifying questions, and responds with:

- A plain-English summary of your concern

- A high-level care-path framing (primary care, urgent care, or emergency evaluation)

- Questions to bring to your clinician

- Emergency red flags to watch for

Screenshot & Document Understanding

Upload a healthcare screenshot or image (discharge instructions, an after-visit summary, appointment notes, a clinic page), and Alethia Live translates it into clear, structured language.

Every response is organized into the same practical sections:

- Plain-English Summary

- High-Level Care Path

- What Matters Most

- Questions to Ask a Clinician

- Emergency Red Flags

- Safety Note

Most importantly: Alethia Live doesn't diagnose, recommend treatment, or give medication advice. It's built to inform, not to impersonate a clinician.
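To make the fixed six-section structure concrete, here is a minimal sketch of how a response could be typed and checked before it reaches the UI. It assumes the model is asked to return JSON with one string per section; the key names, `SummaryCard`, and `parseSummaryCard` are hypothetical and not taken from the project's code.

```typescript
// Section names follow the list above; the camelCase keys are illustrative assumptions.
const SECTION_KEYS = [
  "plainEnglishSummary",
  "highLevelCarePath",
  "whatMattersMost",
  "questionsToAskAClinician",
  "emergencyRedFlags",
  "safetyNote",
] as const;

type SectionKey = (typeof SECTION_KEYS)[number];
type SummaryCard = Record<SectionKey, string>;

// Parse raw model output and accept it only if every section is a non-empty string.
// Returns null instead of a partial card, so the UI never shows a card without its safety note.
function parseSummaryCard(raw: string): SummaryCard | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not valid JSON
  }
  if (typeof data !== "object" || data === null) return null;

  const record = data as Record<string, unknown>;
  const card = {} as SummaryCard;
  for (const key of SECTION_KEYS) {
    const value = record[key];
    if (typeof value !== "string" || value.trim() === "") return null;
    card[key] = value.trim();
  }
  return card;
}
```

Rejecting incomplete output outright, rather than rendering whatever sections arrived, is one way to keep the Emergency Red Flags and Safety Note sections from silently going missing.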

How I Built It

I chose a single Next.js web app architecture — the fastest, cleanest, most shippable path for a strong hackathon MVP.

Stack:

- Next.js + TypeScript

- Tailwind CSS

- Gemini Live API

- @google/genai

- Google Cloud Run

The system uses a split multimodal architecture: Gemini Live API powers the real-time voice experience, while a standard @google/genai route handles screenshot and document understanding.

On the backend, I built:

- An ephemeral token route for secure browser-to-Gemini-Live connections

- A summary route that accepts uploaded images and returns structured healthcare-literacy cards
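As a rough illustration of the ephemeral token idea, the sketch below computes the limits a server might place on a short-lived token before requesting it. It is not the project's code: the field names (`uses`, `expireTime`, `newSessionExpireTime`) reflect my understanding of the Gemini ephemeral-token configuration and should be treated as assumptions, and `buildTokenConstraints` and its defaults are hypothetical.

```typescript
// Limits for a single-use, short-lived token. Field names are assumptions (see lead-in).
interface TokenConstraints {
  uses: number;                 // how many sessions the token may open
  expireTime: string;           // ISO timestamp after which the token stops working
  newSessionExpireTime: string; // ISO timestamp after which no new session may start
}

// Build constraints relative to `now`. The 30-minute and 60-second defaults are
// illustrative values, not the project's settings.
function buildTokenConstraints(
  now: Date,
  sessionMinutes: number = 30,
  startWindowSeconds: number = 60,
): TokenConstraints {
  if (sessionMinutes <= 0 || startWindowSeconds <= 0) {
    throw new RangeError("durations must be positive");
  }
  const startMs = now.getTime();
  return {
    uses: 1,
    expireTime: new Date(startMs + sessionMinutes * 60 * 1000).toISOString(),
    newSessionExpireTime: new Date(startMs + startWindowSeconds * 1000).toISOString(),
  };
}
```

In a route handler, an object like this would be handed to the SDK's token-creation call on the server, and only the resulting short-lived token would be returned to the browser, so the long-lived API key never leaves the backend.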

On the frontend, I designed a calm, premium experience: portrait-centered live interaction, microphone streaming, model audio playback, screenshot upload flow, structured result cards, and visible safety framing throughout.

One deliberate product decision I'm proud of: for document understanding, I pushed the language to stay document-anchored — phrases like "This document says…" and "The instructions shown here recommend…" — so the assistant never sounds like it's personally prescribing care.

Challenges

The hardest challenge wasn't technical. It was scope discipline.

Healthcare is a domain where it's easy to build something that sounds impressive but becomes unsafe, unfocused, or misleading. I kept asking myself: What is the strongest version of this product that is genuinely helpful, clearly bounded, and still realistic to ship?

On the technical side, getting the live voice loop working end-to-end required careful iteration through secure token generation, browser-to-Live connection, setup handshake, microphone streaming, model audio playback, and reliable turn completion.
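One small piece of the microphone streaming step can be sketched in isolation. Browser audio APIs produce 32-bit float samples in the range -1 to 1, while the Live API (as I understand its audio format) expects 16-bit PCM input, so the samples need converting before they are sent. The function name `floatTo16BitPcm` is hypothetical, and resampling, chunking, and base64 encoding are left out.

```typescript
// Convert Web Audio float samples (-1..1) into signed 16-bit PCM samples.
function floatTo16BitPcm(samples: Float32Array): Int16Array {
  const pcm = new Int16Array(samples.length);
  for (let i = 0; i < samples.length; i++) {
    // Clamp first so out-of-range input cannot wrap around when stored as 16-bit.
    const clamped = Math.max(-1, Math.min(1, samples[i]));
    // Negative values scale to -32768, positive values to 32767.
    pcm[i] = clamped < 0
      ? Math.round(clamped * 0x8000)
      : Math.round(clamped * 0x7fff);
  }
  return pcm;
}
```

Clamping matters here because a clipped microphone signal that overflows the 16-bit range would otherwise wrap and come out as loud clicks.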

On the product side, the challenge was making Alethia Live feel useful without sounding clinical or overconfident. Calm. Smart. Trustworthy, but honest about what it is.

I also dealt with real deployment and security work: catching and fixing accidental API key exposure, rotating credentials, cleaning the repo history, and resolving Cloud Run and IAM issues to get the app fully hosted rather than just running locally.

Accomplishments I'm Proud Of

Alethia Live is no longer a concept slide or a mockup. It's a working, deployed multimodal live agent — and that matters to me.

A few things I'm especially proud of:

- A functioning real-time Gemini Live voice experience running in the browser

- A practical screenshot and document understanding flow for healthcare literacy

- Keeping the product focused on navigation and understanding — not diagnosis

- A full deployment on Google Cloud Run

- Turning the UI into something calm and polished, not just a builder-facing prototype

- Making safety a visible product feature, not a hidden prompt detail

I'm also proud of the product framing itself. Alethia Live doesn't try to be everything. It solves a narrower, very real problem: helping people feel less lost as they try to understand their care.

What I Learned

Live AI products become compelling when each interaction mode has a clear role.

Voice carries emotional immediacy. It's natural, fast, and human. Screenshot understanding grounds the experience in what the user is actually seeing — it anchors the conversation in reality. Together, they create something more useful and more believable than text alone.

I also learned that safety can't be a layer you add at the end. It has to shape the product definition, the system prompt, the UI copy, the structure of the outputs, and the tone of the entire experience.

Most of all: in healthcare AI, restraint is a strength. A system becomes more trustworthy not when it claims more — but when it knows its limits, and still manages to be genuinely useful within them.

What's Next for Alethia Live

This project ends as a strong hackathon MVP. I'd like it to become something more.

Directions I'm excited about:

- Deeper understanding across a wider range of healthcare documents and forms

- Better multilingual health-literacy support

- More refined emergency red-flag handling and escalation UX

- Stronger accessibility for users under stress or with lower health literacy

- Provider and clinic workflow integrations where appropriate

- Real safety and clarity evaluation with realistic user testing

Long term, I see Alethia Live as part of a bigger vision for human-centered healthcare AI: systems that help people understand, prepare, and navigate — without pretending to replace the humans who care for them.

That's the future I wanted this project to point toward.

Built With

- browser-microphone/media-apis

- gemini-live-api

- google-cloud-run

- google-genai-sdk-(@google/genai)

- next.js

- react

- tailwind-css

- typescript

- web-audio-api
