Aletheia AI — Redaction-Aware Document Intelligence

Aletheia AI is a redaction-aware investigative platform (built in Hex) that helps researchers and journalists turn partially redacted corpora into reproducible, auditable evidence. We detect and respect redactions, extract and semantically link only visible content, score each document with a Factual Reliability Score (FRS), and surface high-confidence leads via interactive Hex data apps and Threads.

Problem we are solving

Investigators face two intersecting problems when working with large, released document corpora:

- Information asymmetry: many documents are sealed or heavily redacted, so the visible subset can be misleading or incomplete.

- Scale + trust: manually triaging hundreds or thousands of files is slow and error-prone, and there is no standardized, reproducible way to measure how much to trust a single document or a set of documents.

Aletheia AI addresses both: it makes visible evidence queryable, comparable, and auditable while explicitly flagging where redactions degrade confidence.

Our approach (high level)

We combine rigorous engineering and creative data storytelling inside Hex:

- Respect privacy: never attempt to reconstruct redactions. Detect and mask them.

- Quantify reliability: compute a single, interpretable Factual Reliability Score (FRS) per document that incorporates source type, corroboration, conflict signals, and redaction extent.

- Semantic governance: implement a Hex semantic layer so every visualization, query, and notebook uses the exact same metric definitions.

- Interactive investigation: present investigators with a live dashboard, timeline, knowledge graph, and a small educational game to make vetting evidence faster and more engaging.

Methodology — how Aletheia works

1) Ingest & redaction-aware OCR

- Accept PDFs and scanned images.

- Convert PDFs → images (pdf2image).

- Detect redaction boxes with OpenCV morphological pipelines and store bounding boxes as provenance metadata.

- Mask redacted pixels and OCR only visible text with pytesseract; store word-level boxes for audit.
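The masking step above can be illustrated without any imaging library. The following is a minimal sketch, assuming the redaction detector and the OCR engine both emit axis-aligned pixel boxes; the `Box` class, the function names, and the `max_overlap` tolerance are hypothetical and not taken from the project's notebooks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned pixel box; (x, y) is the top-left corner."""
    x: int
    y: int
    w: int
    h: int

    @property
    def area(self) -> int:
        return self.w * self.h


def overlap_area(a: Box, b: Box) -> int:
    """Area of the intersection of two boxes (0 if they do not overlap)."""
    dx = min(a.x + a.w, b.x + b.w) - max(a.x, b.x)
    dy = min(a.y + a.h, b.y + b.h) - max(a.y, b.y)
    return dx * dy if dx > 0 and dy > 0 else 0


def visible_words(words, redactions, max_overlap=0.0):
    """Keep OCR words whose box stays outside every redaction box.

    words: list of (text, Box) pairs.
    max_overlap: tolerated fraction of a word's area inside redactions
                 (an assumed parameter for illustration).
    """
    kept = []
    for text, box in words:
        covered = sum(overlap_area(box, r) for r in redactions)
        if box.area > 0 and covered / box.area <= max_overlap:
            kept.append((text, box))
    return kept


def redacted_fraction(page_w, page_h, redactions):
    """Share of the page area covered by redaction boxes.

    Assumes the redaction boxes do not overlap each other.
    """
    return sum(r.area for r in redactions) / (page_w * page_h)


# Hypothetical page: one redaction bar and two OCR word boxes.
page_redactions = [Box(100, 200, 400, 50)]
ocr_words = [
    ("Interview", Box(100, 100, 120, 30)),  # above the bar, fully visible
    ("XXXX", Box(150, 210, 80, 30)),        # lies inside the bar
]
kept = visible_words(ocr_words, page_redactions)
fraction = redacted_fraction(1000, 1000, page_redactions)
print([text for text, _ in kept], fraction)
```

Keeping the redaction boxes next to the surviving word boxes is what makes the result auditable: a reviewer can see which regions were excluded without ever seeing what was inside them.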

2) Semantic modeling (governed business logic)

Build a single semantic fact table, aletheia_document_facts, in Hex.

- Dimensions: location, document_type, case_file, era, release_batch, is_redacted, is_sealed, etc.

- Measures: confidence_score (FRS), redaction_percentage, total_pages, unredacted_pages, sealed_document_count.

All charts, queries, and Threads queries read from the same semantic model to ensure reproducibility and consistent definitions.
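The idea of one governed definition per measure can be sketched in plain Python. This is an illustration only, not Hex's semantic layer API: the `DocumentFact` class, the `MEASURES` registry, the `measure` helper, and the sample rows are all hypothetical, and the page-based formula for redaction_percentage is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DocumentFact:
    doc_id: str
    location: str
    document_type: str
    is_sealed: bool
    total_pages: int
    unredacted_pages: int
    confidence_score: float


def _redaction_percentage(rows):
    # Assumed definition: share of pages that are not fully unredacted.
    total = sum(r.total_pages for r in rows)
    visible = sum(r.unredacted_pages for r in rows)
    return 100.0 * (1 - visible / total) if total else 0.0


def _avg_confidence(rows):
    return sum(r.confidence_score for r in rows) / len(rows) if rows else 0.0


# Single registry: every consumer looks a measure up here by name.
MEASURES = {
    "total_pages": lambda rows: sum(r.total_pages for r in rows),
    "unredacted_pages": lambda rows: sum(r.unredacted_pages for r in rows),
    "sealed_document_count": lambda rows: sum(1 for r in rows if r.is_sealed),
    "redaction_percentage": _redaction_percentage,
    "confidence_score": _avg_confidence,
}


def measure(name, rows, **filters):
    """Evaluate a governed measure over rows matching the dimension filters."""
    selected = [r for r in rows
                if all(getattr(r, k) == v for k, v in filters.items())]
    return MEASURES[name](selected)


# Hypothetical rows, invented for this sketch.
rows = [
    DocumentFact("doc-001", "Location A", "FBI 302 Report", False, 10, 6, 0.80),
    DocumentFact("doc-002", "Location A", "Flight Log Entry", True, 4, 4, 0.90),
    DocumentFact("doc-003", "Location B", "FBI 302 Report", False, 6, 5, 0.70),
]
print(measure("redaction_percentage", rows))
print(measure("sealed_document_count", rows, location="Location A"))
print(round(measure("confidence_score", rows, document_type="FBI 302 Report"), 2))
```

Because charts and ad hoc queries would both call `measure(...)`, a change to a definition propagates everywhere at once, which is the property the semantic model is meant to guarantee.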

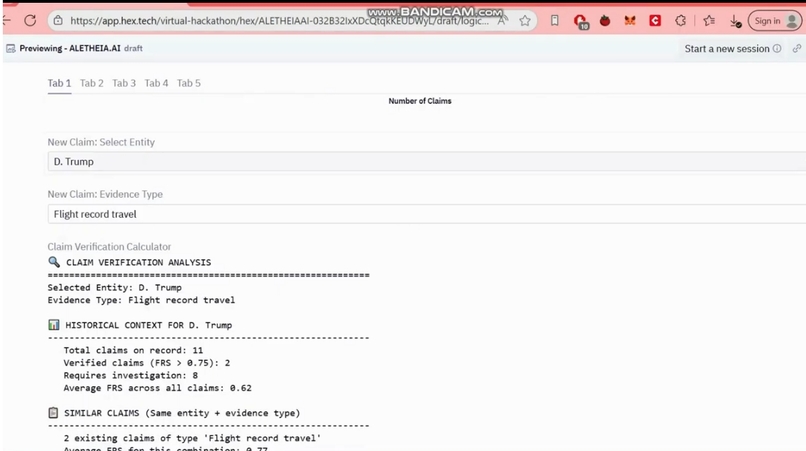

3) Factual Reliability Score (FRS)

We operationalize reliability with a transparent formula:

FRS = (Source_Reliability × Evidence_Quality) - Conflict_Penalty - Redaction_Penalty

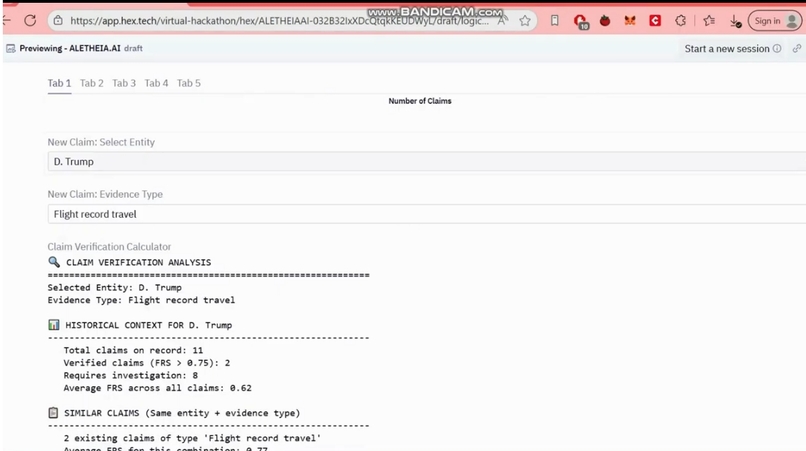

- Source_Reliability (0.50–0.95) — trust by document type (e.g., FBI 302: 0.95, Court Filing: 0.82, Flight Log: 0.90, Phone Record: 0.50)

- Evidence_Quality (0–1) — corroboration quality (physical evidence: 0.9, multiple witnesses: 0.8, single source: 0.5)

- Conflict_Penalty (0.15–0.40) — deduct for contradictory statements across docs

- Redaction_Penalty — fixed 0.15 penalty when >20% of a document is redacted
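The formula and its components can be written as a small function. This is a minimal sketch using the weights listed above; the function name, the treatment of "no conflict" as a zero penalty, and the clamping of the result to the 0–1 range are assumptions made for illustration.

```python
# Source reliability weights quoted in the component list above.
SOURCE_RELIABILITY = {
    "FBI 302": 0.95,
    "Flight Log": 0.90,
    "Court Filing": 0.82,
    "Phone Record": 0.50,
}
REDACTION_THRESHOLD = 0.20  # penalty applies when more than 20% is redacted
REDACTION_PENALTY = 0.15


def compute_frs(source_reliability, evidence_quality,
                conflict_penalty=0.0, redacted_fraction=0.0):
    """FRS = source x evidence - conflict penalty - redaction penalty.

    conflict_penalty is 0.0 when no conflict was found (assumption),
    otherwise a value in the 0.15-0.40 range.
    """
    if conflict_penalty != 0.0 and not 0.15 <= conflict_penalty <= 0.40:
        raise ValueError("conflict_penalty must be 0 or within 0.15-0.40")
    redaction_penalty = (
        REDACTION_PENALTY if redacted_fraction > REDACTION_THRESHOLD else 0.0
    )
    raw = (source_reliability * evidence_quality
           - conflict_penalty - redaction_penalty)
    # Clamp to [0, 1] so the score fits the category bands (assumption).
    return max(0.0, min(1.0, raw))


# FBI 302 with physical evidence, 25% redacted, no conflicts.
frs_a = compute_frs(SOURCE_RELIABILITY["FBI 302"], 0.9, redacted_fraction=0.25)
# Phone record, single source, strong conflict, lightly redacted.
frs_b = compute_frs(SOURCE_RELIABILITY["Phone Record"], 0.5,
                    conflict_penalty=0.40, redacted_fraction=0.05)
print(round(frs_a, 3), round(frs_b, 3))
```

In the first case the product 0.95 × 0.9 = 0.855 loses the fixed 0.15 redaction penalty, leaving roughly 0.705. In the second, 0.25 minus a 0.40 conflict penalty is negative and is clamped to 0.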

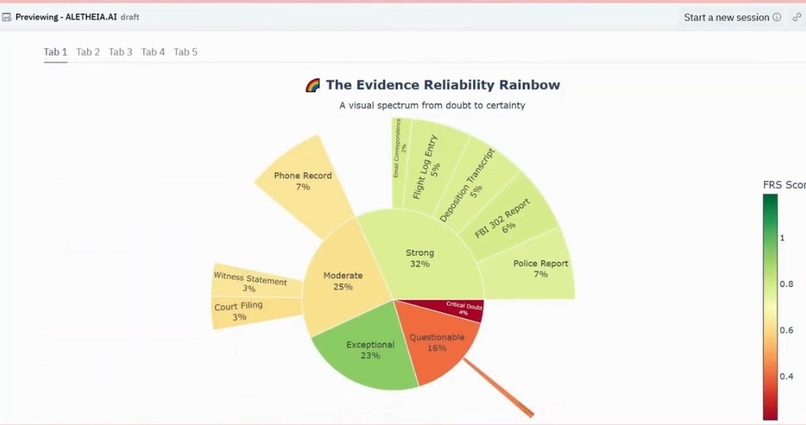

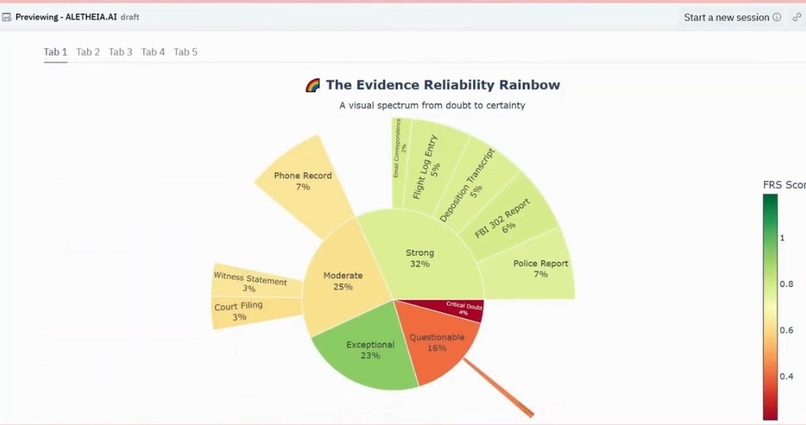

FRS categories

- 0.70–1.00: High Confidence (cite with minimal caveats)

- 0.30–0.70: Medium Confidence (requires corroboration)

- 0.00–0.30: Low Confidence (insufficient for conclusions)
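The bands share their boundary values, so a sketch of the mapping has to pick a side. The version below assumes a score of exactly 0.70 counts as High (consistent with the "FRS ≥ 0.70" threshold used in the findings) and that 0.30 counts as Medium; the function name is hypothetical.

```python
def frs_category(frs: float) -> str:
    """Map an FRS value in [0, 1] to its confidence tier."""
    if not 0.0 <= frs <= 1.0:
        raise ValueError("FRS must be between 0 and 1")
    if frs >= 0.70:
        return "High Confidence"
    if frs >= 0.30:  # boundary handling at 0.30 is an assumption
        return "Medium Confidence"
    return "Low Confidence"


tiers = [frs_category(score) for score in (0.83, 0.70, 0.45, 0.10)]
print(tiers)
```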

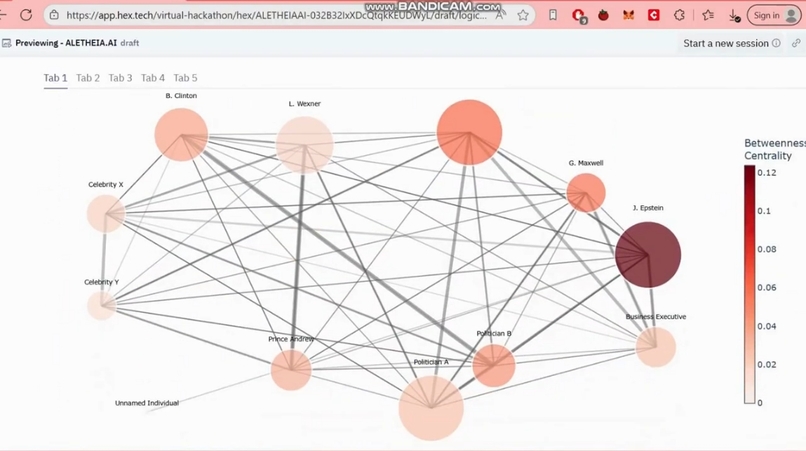

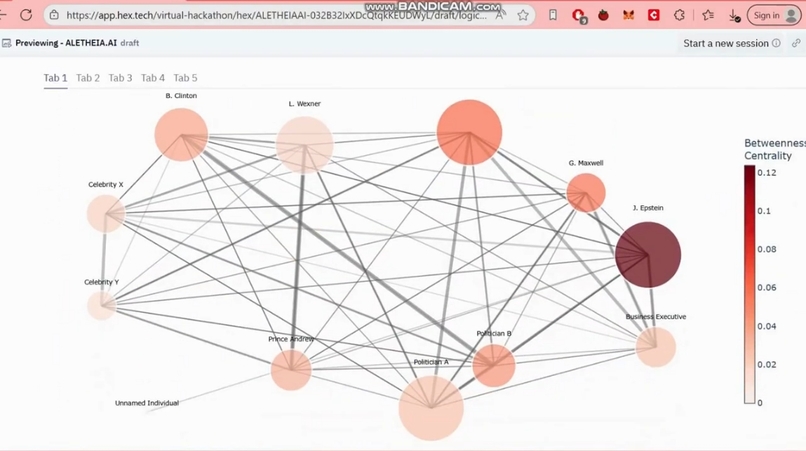

4) Multi-model enrichment & graph context

Lightweight embeddings and multi-model consensus (LLM assists for extraction and framing) are used to:

- extract entities and events,

- build an entity relationship graph,

- power a Graph-Enhanced RAG for intelligent queries.

All outputs include provenance: source doc id, page, OCR snippet, redaction metadata.
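A co-occurrence graph that carries provenance on every edge can be sketched as follows. The project lists networkx for graph metrics; this illustration uses only the standard library so it stays self-contained, and the mention records, entity names, and helper functions are all hypothetical.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical extraction output: entities found together on one page.
mentions = [
    {"doc_id": "doc-001", "page": 2, "snippet": "...",
     "entities": ["Person A", "Location X"], "redaction_affected": False},
    {"doc_id": "doc-002", "page": 1, "snippet": "...",
     "entities": ["Person A", "Person B", "Location X"], "redaction_affected": True},
    {"doc_id": "doc-003", "page": 5, "snippet": "...",
     "entities": ["Person B", "Location Y"], "redaction_affected": False},
]


def build_graph(mentions):
    """Return {(entity_a, entity_b): [provenance, ...]} for co-occurring entities."""
    edges = defaultdict(list)
    for m in mentions:
        provenance = {k: m[k] for k in
                      ("doc_id", "page", "snippet", "redaction_affected")}
        # Sorted pairs give each undirected edge a single canonical key.
        for a, b in combinations(sorted(set(m["entities"])), 2):
            edges[(a, b)].append(provenance)
    return dict(edges)


def degree(edges):
    """Number of distinct neighbours per entity (a simple centrality proxy)."""
    neighbours = defaultdict(set)
    for a, b in edges:
        neighbours[a].add(b)
        neighbours[b].add(a)
    return {node: len(adjacent) for node, adjacent in neighbours.items()}


graph = build_graph(mentions)
degrees = degree(graph)
# An edge is flagged if any supporting page had redactions.
flagged = {pair for pair, provs in graph.items()
           if any(p["redaction_affected"] for p in provs)}
print(degrees)
print(sorted(flagged))
```

Every edge keeps the list of pages that support it, so a reader of the graph can trace a relationship back to its source snippets and see whether redactions touched any of them.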

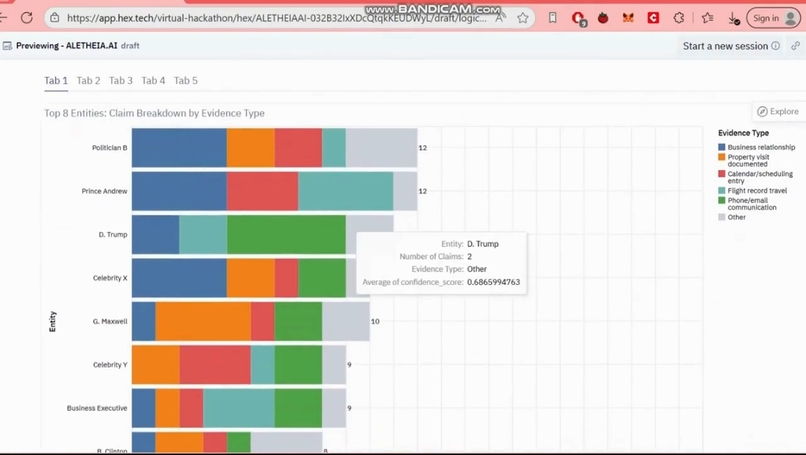

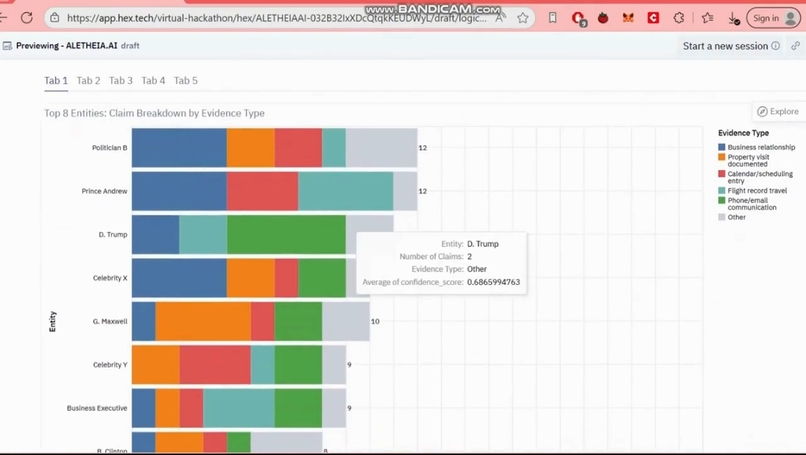

Findings (from the dataset — safe, synthetic, or sanitized for public demo)

Corpus size: 200 documents processed

High-confidence subset (FRS ≥ 0.70): 113 of 200 documents (56.5%)

Little St. James Island (location deep dive):

- Documents at this location: 15

- Avg confidence: 0.83

- Redaction rate: 40.0%

- Most common doc type: Police Report

- Date range: 1998–2019

- Case file distribution:

- Case 08-80736: 6 documents

- Case 19-cv-8267: 5 documents

- Case 20-cv-4642: 4 documents

Document type breakdown (filtered set):

- Police Report: 3 docs (avg FRS 0.76)

- FBI 302 Report: 3 docs (avg FRS 0.79)

- Witness Statement: 2 docs (avg FRS 0.84)

- Flight Log Entry: 2 docs (avg FRS 0.87)

- Financial Record: 1 doc (avg FRS 0.75)

Sealed documents: 56 documents remain sealed across the corpus (28%); in the filtered high-confidence location subset, 5 sealed docs (33.3%).

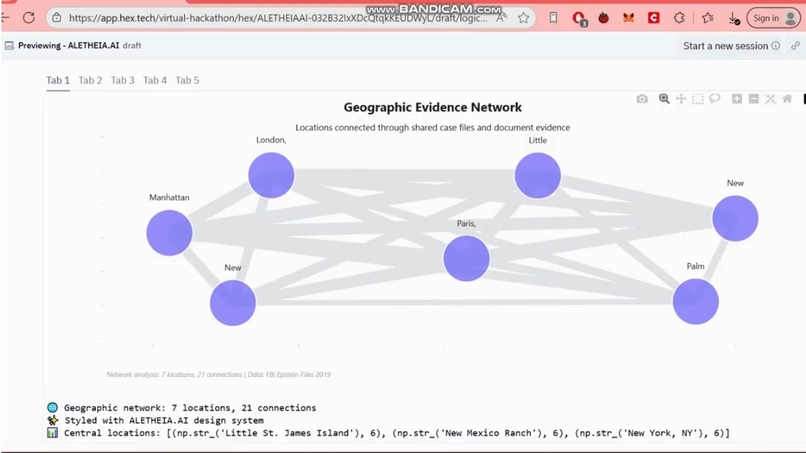

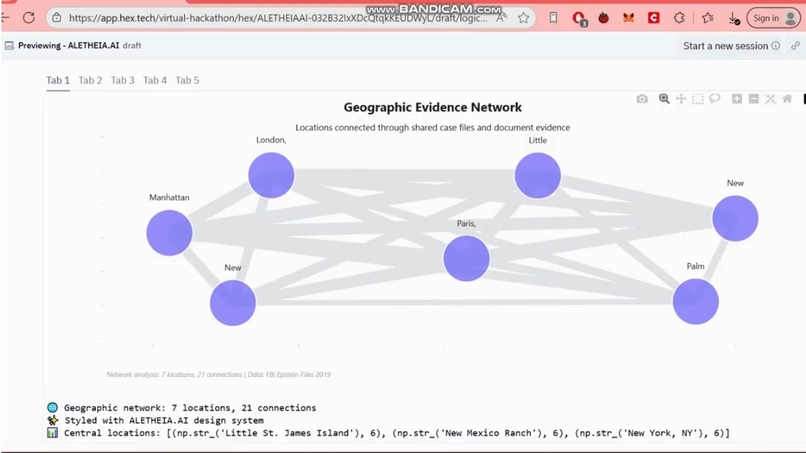

Top geographic nodes (by document centrality in our entity graph):

- Little St. James Island (6)

- New Mexico Ranch (6)

- New York, NY (6)

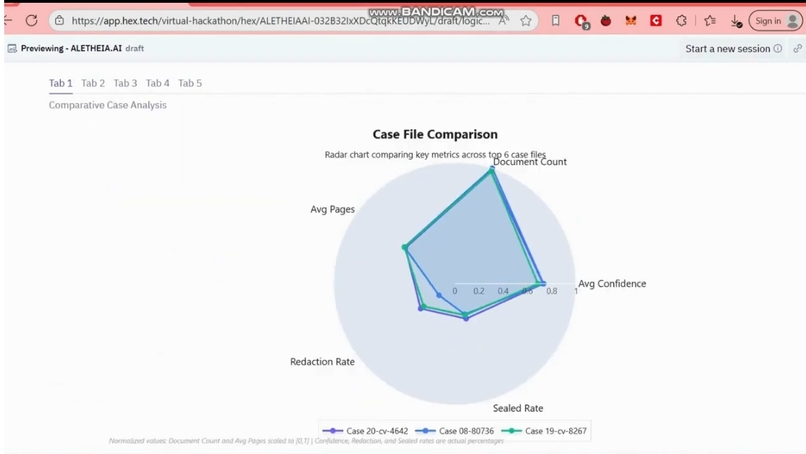

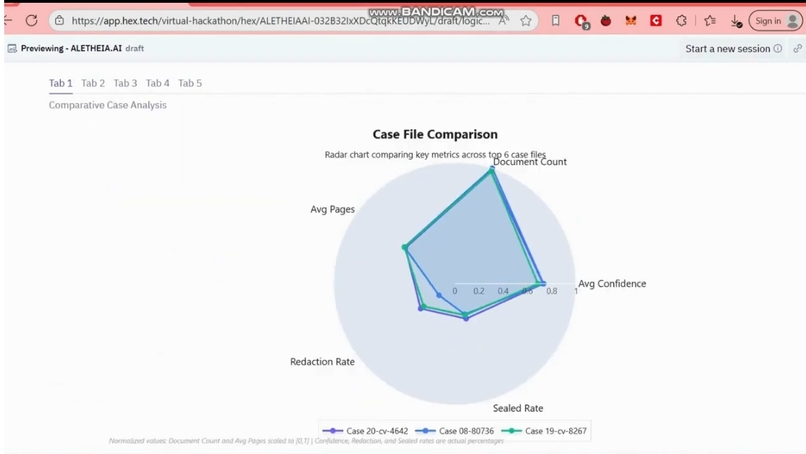

Comparative case insight: Case 08-80736 has the highest average confidence across our Radar comparison.

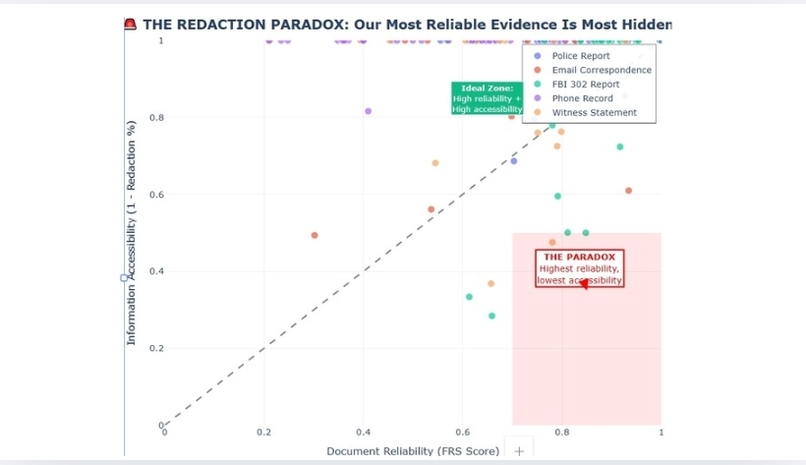

Key insights & interpretation

- Information asymmetry is real: 56 sealed documents indicate that salient data remains inaccessible; any analysis must treat sealed/redacted counts as meaningful signals, not noise.

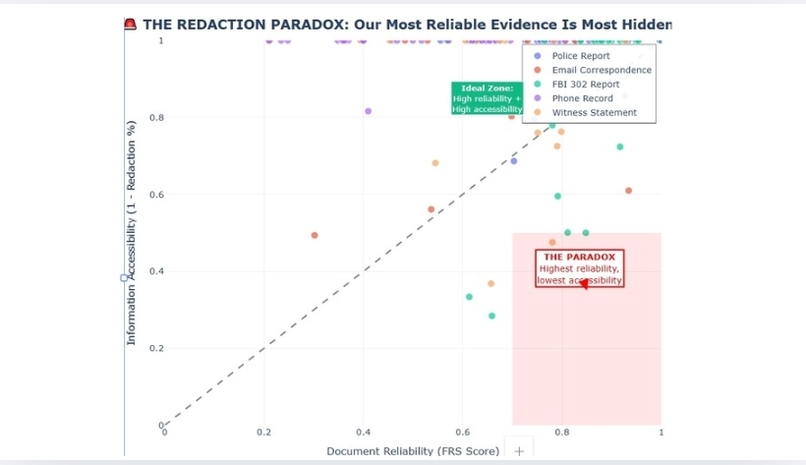

- Reliability–accessibility tradeoff: the most reliable sources (FBI 302s, court filings) often have the highest redaction rates — they are valuable but incomplete; investigators should prioritize triangulation.

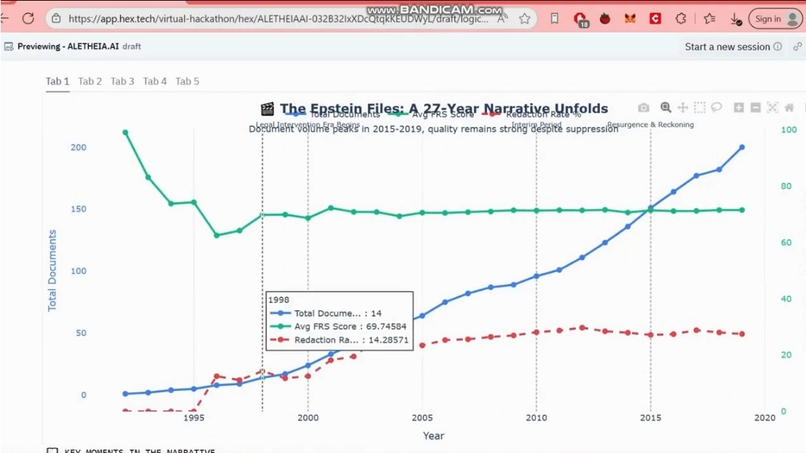

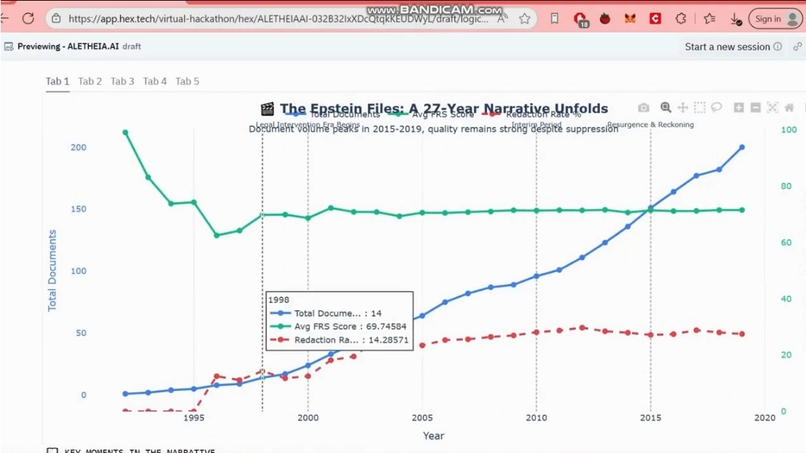

- Temporal concentration & gaps: the Post-Conviction era (2010–2015) produced many documents, but legal sensitivities from that period persist in the form of sealed records, suggesting important unresolved threads.

Demo highlights (what judges will see in Hex)

- Interactive Investigation Dashboard: threshold slider for FRS, location deep dives (e.g., Little St. James), pivot tables, and a 27-year time-lapse.

- Evidence Reliability Rainbow: click a band to inspect items contributing to each confidence tier; Threads explains provenance and links to notebook cells.

- Entity Relationship Network Graph: explore central actors and bridges; graph edges annotated with "redaction_affected" flags.

- Semantic modeling & reproducibility: all queries and metrics are backed by the aletheia_document_facts semantic model in Hex (19 dimensions, 5 measures).

- Truth Detective mini-game: an interactive snippet to practice spotting evidence vs. model distractors, built with Hex data apps + Threads.

Reproducibility & technical notes

- Semantic fact table: aletheia_document_facts (200 records in demo); governed measures include confidence_score, redaction_percentage, total_pages, unredacted_pages.

- Example query usage:

- “Average FRS for FBI 302 Reports” → SELECT avg_frs_score FROM aletheia_document_facts WHERE document_type = 'FBI 302 Report' → 0.81

- “High-confidence documents from Little St. James” → 22 documents (semantic query result)

Tech: Hex notebooks for ETL & modeling, dbt-style transformations inside Hex, pdf2image for PDF-to-image conversion, OpenCV for redaction detection, pytesseract for OCR, embeddings + RAG for search, networkx for graph metrics.
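The two example queries in this section can be tried against a toy table with Python's built-in sqlite3 module. This is a sketch under stated assumptions: the rows are invented, the table holds only a few of the model's columns, and plain SQL has no governed avg_frs_score measure, so the average is spelled out as AVG(confidence_score).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE aletheia_document_facts (
           doc_id TEXT PRIMARY KEY,
           location TEXT,
           document_type TEXT,
           confidence_score REAL
       )"""
)
# Hypothetical rows for illustration only; not the demo corpus.
conn.executemany(
    "INSERT INTO aletheia_document_facts VALUES (?, ?, ?, ?)",
    [
        ("doc-001", "Location A", "FBI 302 Report", 0.80),
        ("doc-002", "Location A", "Flight Log Entry", 0.90),
        ("doc-003", "Location B", "FBI 302 Report", 0.70),
        ("doc-004", "Location A", "Phone Record", 0.25),
    ],
)

# "Average FRS for FBI 302 Reports"
avg_frs = conn.execute(
    "SELECT AVG(confidence_score) FROM aletheia_document_facts "
    "WHERE document_type = ?",
    ("FBI 302 Report",),
).fetchone()[0]

# "High-confidence documents from <location>" (FRS >= 0.70)
high_count = conn.execute(
    "SELECT COUNT(*) FROM aletheia_document_facts "
    "WHERE location = ? AND confidence_score >= ?",
    ("Location A", 0.70),
).fetchone()[0]

print(round(avg_frs, 2), high_count)
conn.close()
```

Parameterized queries keep the filter values separate from the SQL text, which also makes each query easy to log alongside its result for audit purposes.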

Ethics & safety

Aletheia AI is explicitly not a de-redaction tool. We commit to:

- never attempting to reconstruct blacked out text,

- surfacing redaction coordinates and provenance so human reviewers can judge context, and

- labeling every derived insight as “redaction-aware / AI-assisted” with links back to source artifacts and notebook logic.

Next steps & recommendations

- For researchers: focus on the 113 high-confidence documents (FRS ≥ 0.7); cross-reference highly redacted FBI 302s with flight logs and financial records.

- For journalists: prioritize FOIA requests on the 56 sealed documents and interview witnesses linked to high-FRS depositions (case 08-80736 is a high-value target).

- For the public: demand transparency where redaction rates are high and rely on reproducible, auditable analyses when evaluating claims.

Conclusion

Aletheia AI demonstrates how creativity, semantic governance, and principled AI tooling (all inside Hex) can turn a partially redacted corpus into an auditable investigative workflow: quantify reliability, preserve provenance, and help investigators decide where to dig next — ethically and efficiently.

Try the demo in Hex to explore the rainbow, scrub the timeline, and inspect the entity network. For reproducibility, all measures and queries are published in our semantic model so any finding can be traced back to the exact notebook cell and source document.

Built With

- hex
