ASAP (Advanced Semantic AI Platform)

AI-Powered Workspace for Streamlining Software Coding and Documentation

Inspiration

I was inspired to participate in the hackathon by the chance to dive deep into the data infrastructure behind applications like OpenAI's GPT. The innovative blend of AI’s speed with the reliability of traditional databases, as seen in Azure Cosmos DB, was incredibly exciting to explore.

With three decades of experience as a software engineer, I know firsthand how overwhelming it can be to sift through vast databases for specific information. This can take hours or days, even for seasoned professionals. Letting users enter queries in natural language streamlines search and retrieval, filters out irrelevant data, saves time, and boosts productivity.

Inspired by the hackathon, I began developing a suite of AI-powered copilots under the name Advanced Semantic AI Platform (ASAP). These copilots streamline the tedious tasks of coding and generating technical documentation. The capability to conduct precise data searches using natural language, with data stored in a vectorized format in Cosmos DB, was exactly the solution I needed.

What's more, during my hackathon project, I uncovered additional major benefits of Azure Cosmos DB technology, particularly in solving fundamental issues related to code and documentation generation using AI.

The main issue with code and documentation generators is the inconsistency and unreliability of generative AI, which often introduces unexpected, sometimes faulty, variations into the generated content. Before the hackathon, I had already been applying an intuitive idea: using minimalistic yet highly efficient data formats to produce consistent, uniform output and to keep prompts compatible across different versions of language models. Vector databases have significantly improved this process by managing and retrieving large-scale data more effectively, so that the context and relevance of responses are preserved even when models are updated.

While exploring vectorization, I discovered the significance of integrating ontologies. These provide structured frameworks that enhance consistency, relevance, and adherence to industry standards in AI-generated documentation and code.

I have developed a method called COSE (Cognitive Optimized Sparse Encoding), which leverages minimalistic yet efficient data formats. This approach fits naturally with the high-dimensional representations stored in vector databases. Integrating COSE with a vector database improves retrieval speed and computational efficiency, since compact encodings need fewer tokens, and it supports adaptive, user-centric processing that makes the database more intuitive to use. The combination of the two technologies aims for strong performance while keeping the system accessible and easy to use.

I began this project to automate my own tasks, and it has evolved into an extraordinary solution that I believe will greatly benefit other professionals in the IT community.

What It Does

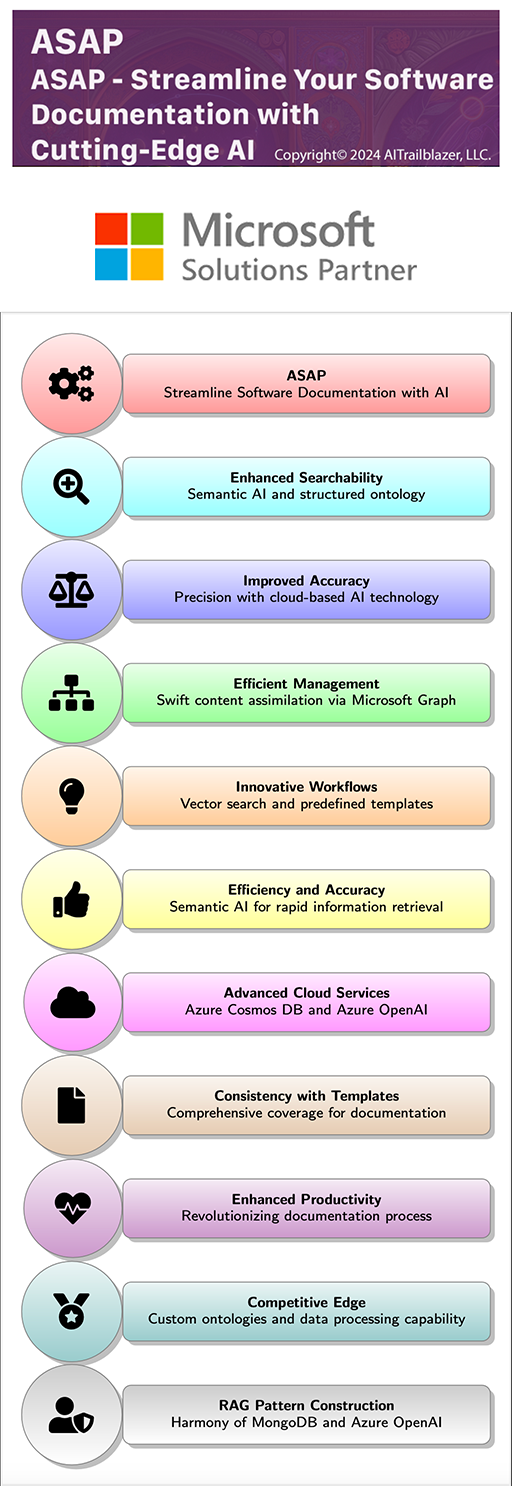

Imagine an AI that can generate code and documentation in minutes, find the information you need in seconds, and publish reports almost instantaneously! ASAP offers a suite of AI copilots transforming tech documentation and code creation using custom ontologies tailored to an organization's standards. Integrating advanced natural language search and automation within a unified workspace, ASAP combines the speed and efficiency of AI with the reliability of traditional systems.

ASAP's Suite Includes:

- OntologyGen: Creates custom ontologies aligned with organizational standards, terminologies, and data structures, ensuring adherence during the document and code generation processes.

- TechDocGen: Uses OntologyGen's customized ontologies to generate professional, consistent documentation.

- CodeGen: Deploys software ontologies to generate accurate code across multiple platforms, ensuring compatibility and optimal performance.

- ReportGen: Employs predefined ontologies to create comprehensive, standardized reports in various formats (PDF, diagrams, images).

- Natural Language Queries: Enables users to perform precise data searches using natural language, with data stored in a vectorized format in Cosmos DB.

- Unified Workspace: Designed for accessibility and ease of use, each AI copilot has its own workspace, with chat sessions stored for later retrieval using semantic search.

- Retrieval Augmented Generation (RAG): Enhances performance in knowledge-intensive tasks by retrieving and incorporating external information into prompts.
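The RAG step in the last bullet can be sketched as prompt assembly: retrieved passages are numbered and placed between the instructions and the user's question. This is a minimal illustration under stated assumptions, not ASAP's actual prompt format; the function name `build_rag_prompt` and the closing instruction are hypothetical.

```python
from typing import List


def build_rag_prompt(system_prompt: str, passages: List[str], question: str) -> str:
    """Assemble a retrieval-augmented prompt: instructions, retrieved context, then the question."""
    # Number each retrieved passage so the model (and the reader) can trace answers to sources.
    context = "\n".join(f"[{i}] {p.strip()}" for i, p in enumerate(passages, start=1))
    return (
        f"{system_prompt.strip()}\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question.strip()}\n"
        "Answer using only the context above."
    )


if __name__ == "__main__":
    print(build_rag_prompt(
        "You are TechDocGen, a documentation assistant.",
        ["Azure Cosmos DB offers five consistency levels.",
         "vCore clusters support vector search."],
        "How many consistency levels are there?",
    ))
```

Keeping the retrieved context separate from the instructions makes it easy to swap in different passages for each query without touching the system prompt.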

Each copilot is a domain expert: TechDocGen for documentation, CodeGen for coding languages and platforms. ASAP's customizable settings, such as target audience and response attributes, enable replication of the original chat context. Lifelong Learning Agents, supported by Cosmos DB and vectorization, ensure continuous improvement in performance.

Problem

Here are the key problems that ASAP addresses:

- Time Consumption: Coding and creating software documentation are extremely time-consuming. The vast amount of data developers need to sift through makes managing, organizing, and retrieving relevant information a challenge, leading to information overload and inefficiency.

- Lack of Consistency: Without a structured ontology, terms and processes might be used inconsistently across different documents, leading to confusion and misinterpretation. Collaboration across teams or departments can be hindered due to varying interpretations.

- Inefficiencies in Document and Code Management: Traditional methods of creating and retrieving documents and code are often time-consuming and labor-intensive.

- Limited Data Accessibility: Accessing and retrieving vast amounts of corporate data can be cumbersome and inefficient with conventional systems.

- Reduced Productivity: Manual document and code generation can detract from more critical tasks, lowering overall productivity.

- Lack of Expertise and Customization: Users often lack the tools to customize outputs or harness expert-level insights in their fields, particularly in non-technical domains.

- Static Performance: Many platforms do not adapt or improve over time, failing to learn from interactions or integrate new data effectively.

- Handling Complex Knowledge: Traditional platforms struggle with managing and utilizing complex, knowledge-intensive information efficiently.

These pain points collectively reduce development speed and cause frustration within teams, making the search for effective solutions a critical concern for software organizations.

Solution

Efficiency in Document and Code Management

By integrating sophisticated natural language search and automation capabilities in a unified workspace, ASAP revolutionizes how documents and code are created and retrieved, reducing time and effort.

Improved Accuracy and Relevance in Content Generation

By adopting custom ontologies, ASAP ensures that AI-generated content adheres to industry standards and semantics, addressing key pain points such as lack of uniformity, mismatched terminologies, and inconsistent quality in document and code generation.

Enhanced Data Accessibility

The platform enables precise and efficient data retrieval through Microsoft Graph, storing information in a vectorized format in Cosmos DB for enhanced usability.

Productivity Enhancement

ASAP automates essential aspects of document and code generation, allowing users to focus on more critical and strategic tasks, thereby boosting productivity.

Expertise and Customization

AI copilots such as CodeGen and TechDocGen function as domain-specific experts and are tailored with settings like target audience and response attributes, enhancing operational efficiency and making advanced AI tools accessible to non-experts.

Continuous Learning and Improvement

Lifelong Learning Agents ensure the platform continuously improves, adapting to new data and interactions.

Advanced Knowledge Integration

Leveraging Retrieval Augmented Generation (RAG), ASAP excels in knowledge-intensive tasks, effectively managing and utilizing complex information.

These solutions position ASAP as a vital tool for enhancing efficiency, productivity, and adaptability in modern business environments.

Benefits

Enhanced Efficiency in Document and Code Management

ASAP revolutionizes the creation and retrieval of documents and code by integrating sophisticated natural language search and automation capabilities within a unified workspace. This integration significantly reduces the time and effort required, streamlining workflows and operations.

Improved Data Accessibility

ASAP enhances data usability by enabling precise and efficient retrieval through Microsoft Graph, coupled with storage in a vectorized format in Cosmos DB. This ensures that users can access and utilize data more effectively, improving decision-making and response times.

Increased Productivity

By automating key aspects of document and code generation, ASAP frees users to focus on more critical and strategic tasks. This automation not only speeds up routine processes but also boosts overall productivity.

Expertise and Customization at Your Fingertips

The platform's AI copilots, such as CodeGen and TechDocGen, offer expert-level guidance tailored to specific domains. These agents adapt to user preferences and needs, including target audience and response attributes, making advanced AI tools accessible even to non-experts and enhancing operational efficiency. OntologyGen facilitates the creation of custom ontologies, allowing businesses to define internal processes in a structured format. This customizability drives interaction with the AI copilots, resulting in improved accuracy and efficiency in content generation.

Continuous Performance Enhancements

With Lifelong Learning Agents, ASAP is designed to continuously learn and improve, adapting to new data and user interactions. This ongoing development ensures that the platform remains cutting-edge and increasingly effective over time.

Superior Handling of Complex Knowledge

Through Retrieval Augmented Generation (RAG), ASAP excels in knowledge-intensive tasks, managing and leveraging complex information efficiently. This capability makes it an indispensable tool for sectors that require detailed and sophisticated knowledge management.

These benefits underscore ASAP’s role as an essential tool for boosting efficiency, productivity, and adaptability in modern business environments, making it a strategic asset for any organization.

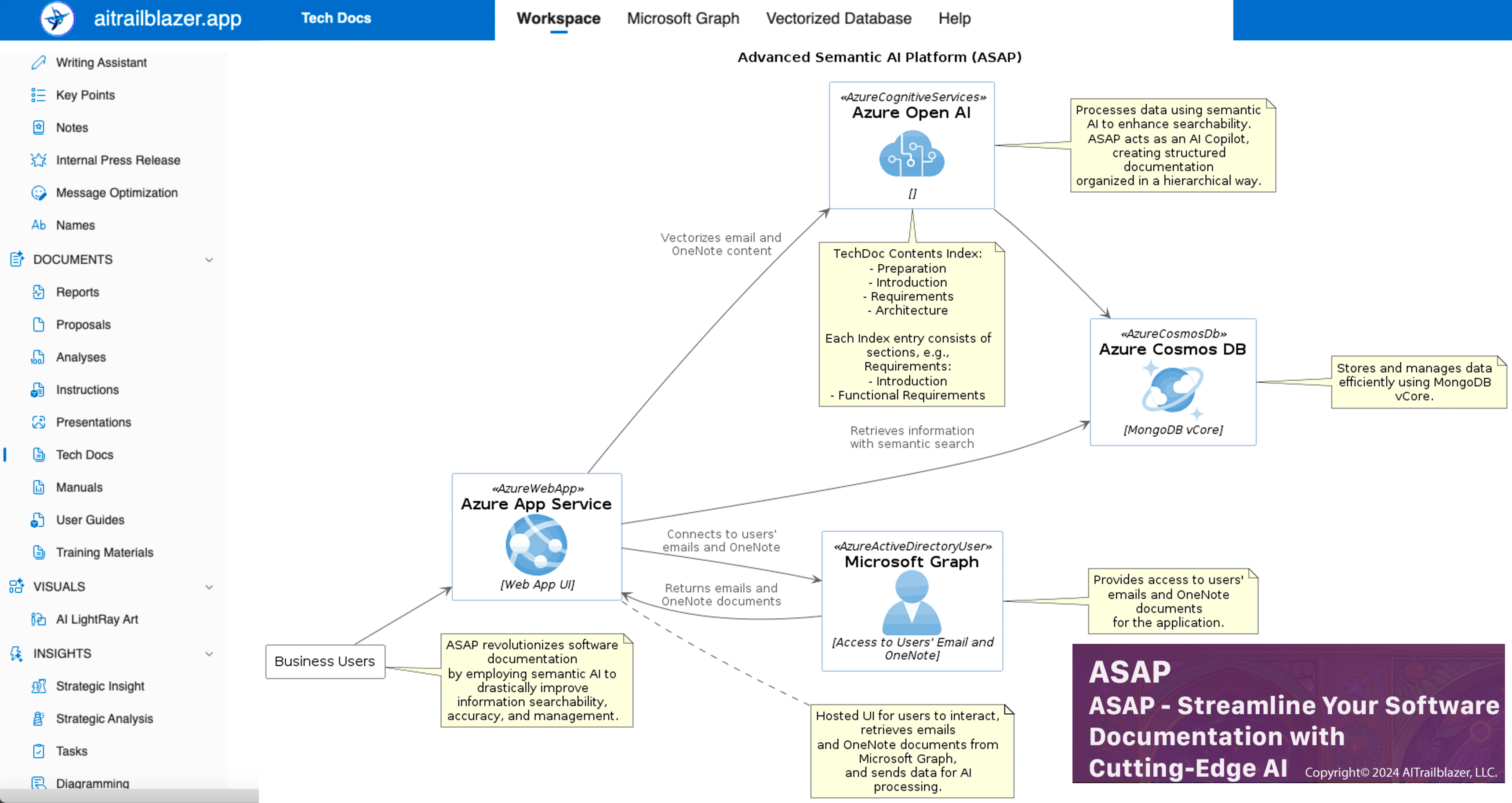

How We Built It

ASAP’s development involved designing a Retrieval Augmented Generation (RAG) pattern that combines the vector search capabilities of vCore-based Azure Cosmos DB for MongoDB with Azure OpenAI services and an AI-assisted user interface. This architecture turns stored data into readily retrievable knowledge that supports the software development lifecycle.
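As a sketch of the retrieval side of this architecture, the helpers below build the vector-index command and the `$search`/`cosmosSearch` aggregation stage documented for Azure Cosmos DB for MongoDB vCore. The collection name, the `contentVector` field, and the 1536 dimensions (the size of `text-embedding-ada-002` vectors) are assumptions for illustration; check the current service documentation before relying on these options.

```python
from typing import Any, Dict, List


def vector_index_command(collection: str, path: str, dimensions: int) -> Dict[str, Any]:
    """createIndexes command for an IVF vector index (Cosmos DB for MongoDB vCore syntax)."""
    return {
        "createIndexes": collection,  # command name must be the first key
        "indexes": [{
            "name": f"{path}_vector_idx",
            "key": {path: "cosmosSearch"},
            "cosmosSearchOptions": {
                "kind": "vector-ivf",  # inverted-file index
                "numLists": 1,         # number of IVF clusters; tune with collection size
                "similarity": "COS",   # cosine similarity
                "dimensions": dimensions,
            },
        }],
    }


def vector_search_pipeline(query_vector: List[float], path: str, k: int = 3) -> List[Dict[str, Any]]:
    """Aggregation pipeline returning the k nearest documents with their similarity scores."""
    return [
        {"$search": {
            "cosmosSearch": {"vector": query_vector, "path": path, "k": k},
            "returnStoredSource": True,
        }},
        {"$project": {"similarityScore": {"$meta": "searchScore"}, "document": "$$ROOT"}},
    ]
```

With `pymongo`, the command would be passed to `db.command(...)` and the pipeline to `collection.aggregate(...)`. The query vector must come from the same embedding model that vectorized the stored documents, otherwise the similarity scores are meaningless.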

Challenges We Ran Into

Our developmental journey presented several challenges:

- Optimizing Data Management: Achieving a balance between speed and accuracy.

- User Experience: Crafting intuitive and user-friendly interfaces.

- Data Flow Integration: Ensuring smooth integration between traditional and vector databases.

- Scalability and Reliability: Maintaining performance and scalability.

- Natural Language Understanding: Developing a sophisticated chatbot experience.

Accomplishments That We're Proud Of

We have achieved several milestones:

- Pioneering AI Integration: Leading in AI solutions for software coding and documentation.

- Advanced Functionalities: Introducing a multi-copilot system and effective chat session management.

- Technical Innovations: Leveraging vCore-based Azure Cosmos DB and Azure OpenAI services.

- Enhanced User Interaction: Creating a sophisticated chatbot experience.

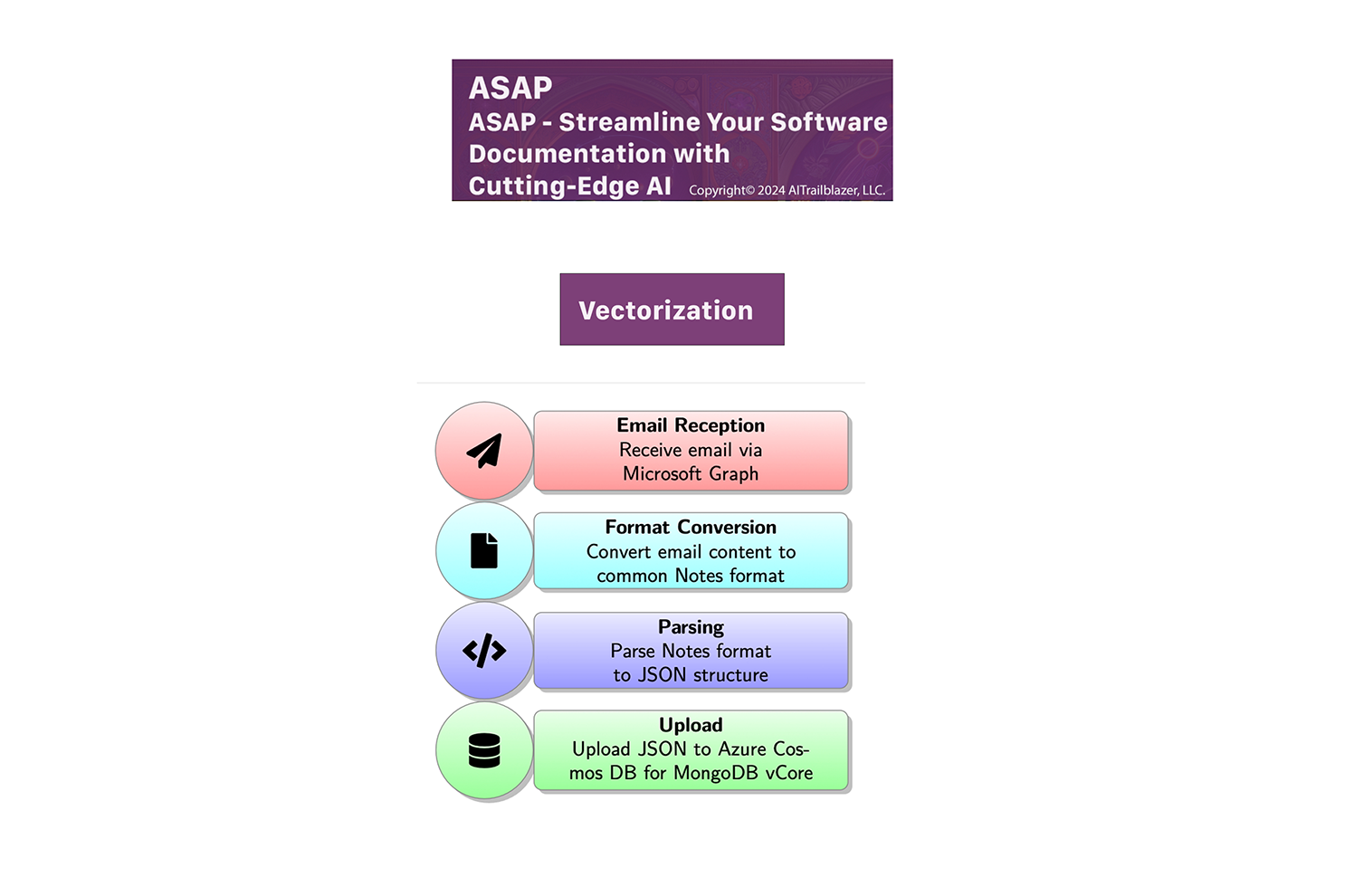

- One-Click Vectorization: Simplifying the process of vectorization.

What We Learned

Optimizing Data for RAG

One valuable insight we gained while implementing the Retrieval Augmented Generation (RAG) was the necessity of refining our datasets for better model performance. Original documents, customer requirements, and similar sources often contain verbose and redundant information. This extra content not only increases token usage but also diminishes prediction accuracy.

To address this, we transitioned from using raw content to leveraging a new framework we invented during the hackathon:

Optimizing Memory Organization in Large Language Models Using Cognitive Optimized Sparse Encoding (COSE)

Cognitive Optimized Sparse Encoding (COSE)

COSE enhances LLMs by emulating human memory for better data retrieval and memory organization.

Mimics Human Memory Efficiency:

- COSE models the way human memory works to achieve faster and more accurate recall with less computational effort.

- This efficiency allows LLMs to pull relevant information quickly, much like how the human brain recalls useful memories.

Token Efficiency:

- By focusing on using fewer tokens, COSE effectively manages larger datasets within the confines of an LLM's context window.

- This is crucial in applications where memory and processing power are limited, ensuring efficient use of resources.
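The context-window trade-off described above can be illustrated with a greedy packer that keeps the highest-scoring snippets until a token budget is exhausted. This is not COSE itself; it only shows why shorter snippets let more content fit. Word count stands in for a real tokenizer, and the function names are hypothetical.

```python
from typing import List, Tuple


def approx_tokens(text: str) -> int:
    # Crude proxy: whitespace-separated words. A real system would use the model's tokenizer.
    return len(text.split())


def pack_context(snippets: List[Tuple[float, str]], budget: int) -> List[str]:
    """Greedily keep the highest-scoring snippets that still fit within the token budget."""
    chosen: List[str] = []
    used = 0
    for score, text in sorted(snippets, key=lambda s: s[0], reverse=True):
        cost = approx_tokens(text)
        if used + cost <= budget:
            chosen.append(text)
            used += cost
    return chosen
```

Compressing each snippet lowers its cost, so the same budget admits more relevant snippets.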

Broad Applicability:

- COSE has wide-ranging applications in fields such as artificial intelligence, information management, and education.

- It enhances the performance of LLMs by improving their ability to learn, recall, and generate accurate information.

Initial Bulk Training vs. In-Context Learning:

- There is a debate over the relative effectiveness of bulk training (training models on large amounts of initial data) versus in-context learning (learning dynamically from ongoing inputs).

- COSE brings a balance by improving adaptability, enabling LLMs to perform well in dynamic scenarios where data and context constantly change.

Summary: COSE offers a human-like, token-efficient approach to improve LLMs' memory organization and data management. It effectively balances the stability of bulk training with the adaptability of in-context learning. Despite its complexities, COSE shows potential in diverse applications like AI and education.

Detailed Explanation:

Latent Space in LLMs:

High-Dimensional Vector Space:

- Latent space represents a high-dimensional vector space that encodes linguistic, semantic, and syntactic features of the input data.

- This space allows for complex computations and representations that underpin the LLM's understanding and generation of language.

Encoding and Decoding:

- Encoding: This process compresses the incoming data into a latent representation, effectively summarizing the essential features in a compact form.

- Decoding: The latent representation is then decoded back into human-readable text, which is coherent and contextually relevant.

Core Concepts:

High-Dimensional Space:

- Encodes multiple attributes of the data such as parts of speech, semantic roles, and syntactic structures.

- This dense encoding enables nuanced understanding and manipulation of language by the model.

Encoding/Decoding Mechanisms:

- These mechanisms transform raw input into a latent form and vice versa, maintaining the integrity and meaning of the information through the process.

Applications:

Semantic Search:

- COSE enhances semantic search by retrieving documents based on a deep understanding of their content, not just keywords.

- This means users can find more relevant and contextually appropriate information.
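Semantic search of this kind usually ranks stored embeddings by cosine similarity to the query embedding. A minimal sketch, assuming toy 3-dimensional vectors in place of real model embeddings and hypothetical document IDs:

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: List[float], docs: Dict[str, List[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Return the k documents most similar to the query, best first."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    docs = {"setup": [1.0, 0.0, 0.0], "scaling": [0.0, 1.0, 0.0], "mixed": [1.0, 1.0, 0.0]}
    print(top_k([1.0, 0.0, 0.0], docs))
```

Because the ranking depends on direction in embedding space rather than shared keywords, a document can match a query that uses none of its exact words.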

Text Generation:

- The model uses latent vectors to generate text that is not only contextually coherent but also stylistically appropriate.

- This results in more natural and human-like text output.

Transfer Learning:

- COSE aids in adapting pre-trained models' knowledge to new tasks, making it easier for LLMs to perform well on tasks they were not explicitly trained for.

- This adaptability is crucial for applications that require continual learning and updating.

Associative Learning:

- COSE enables LLMs to be 'primed' with new concepts and make connections between new and existing knowledge.

- This associative learning improves the model’s ability to understand and generate relevant output.

Compression Concept:

- The end result of COSE is akin to a form of data compression: it distills complex data into succinct, efficient representations (compression).

- Later, this succinct information is expanded into a full, detailed form (decompression) when needed, ensuring that the model can operate efficiently without losing the richness of information.

COSE thus represents a powerful approach to optimizing LLMs for real-world applications, balancing efficiency, complexity, and adaptability in a way that mirrors human cognitive processes.

What's Next for Advanced Semantic AI Platform (ASAP)

We plan to enhance ASAP further by boosting its semantic AI and natural language processing capabilities. Future updates will focus on introducing more customization options, improving collaboration features, and ensuring continuous adaptation to user feedback and technological advancements.

ASAP is committed to revolutionizing software coding and documentation through ongoing improvement, making advanced AI tools accessible and user-friendly, thereby enhancing operational efficiency for all users.

With over thirty years of industry insight, we aim to deliver a product that exceeds expectations and drives success for IT professionals. The success of ASAP depends on factors including market demand, technological capability, competition, and execution strategy.

In recent years, the software industry, and AI integration in particular, has grown rapidly. Developers face increasing pressure to adapt quickly to technological change and to deliver advanced solutions promptly. Demand for automation tools that streamline development workflows is rising. In 2023, 82% of IT leaders emphasized the standardization and efficiency of processes as their top priorities.

COSE proved valuable, and we plan to develop it further.

Market Demand

- High Demand: There is a strong need for efficient software coding and documentation solutions.

- Target Audience: Developers, software engineers, and technical writers are eager to adopt robust documentation tools.

Competitive Advantage

- Unique Value Proposition: Differentiating features like integrated AI copilots within a single workspace, compatibility with Microsoft Graph, predefined templates, and tailored ontologies set ASAP apart.

- Industry Trends: Staying aligned with the latest trends in AI and software development ensures relevance and competitiveness.

Technological Capability

- Advanced AI Integration: Leveraging semantic AI, structured ontologies, and cloud services positions ASAP as a cutting-edge solution.

- Scalability and Reliability: The platform’s ability to handle large datasets while maintaining high performance is crucial.

Customization and Flexibility

- Product Development: Introducing more customization options for templates and workflows to meet diverse organizational needs.

- User Feedback: Responding to user feedback and quickly iterating on the product.

Collaboration Features

- Enhanced Teamwork: Boosting collaboration features for more effective teamwork on documentation projects.

ASAP is dedicated to revolutionizing software coding and documentation by focusing on enhanced capabilities, improved collaboration, customization, and user support. By doing so, we are committed to helping organizations streamline their documentation processes and achieve greater productivity.

Case study

Streamlining Workflow with Azure Cosmos DB and ASAP Tools

Consultant’s Journey with ASAP: Optimizing Workflow with Azure Cosmos DB

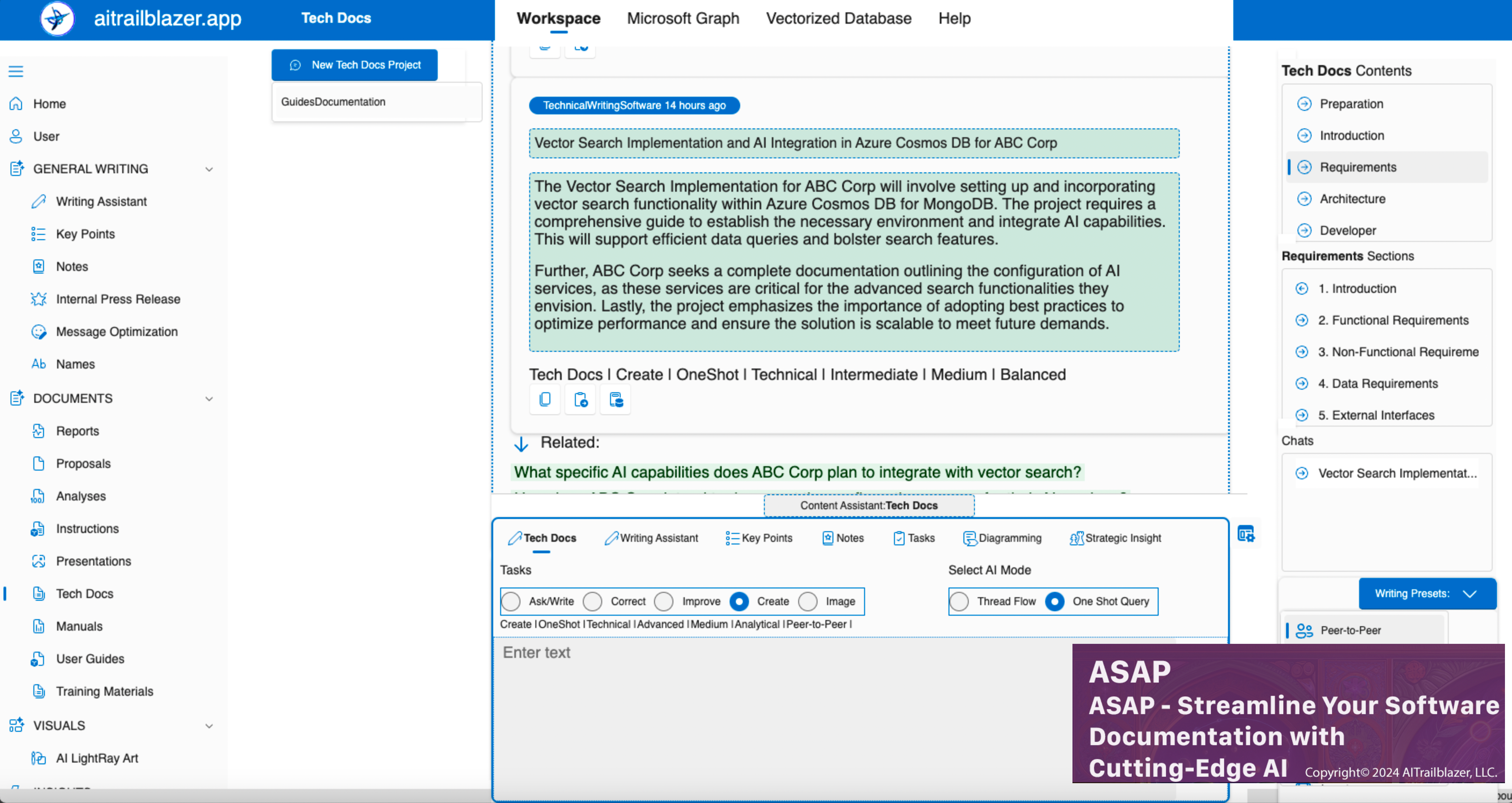

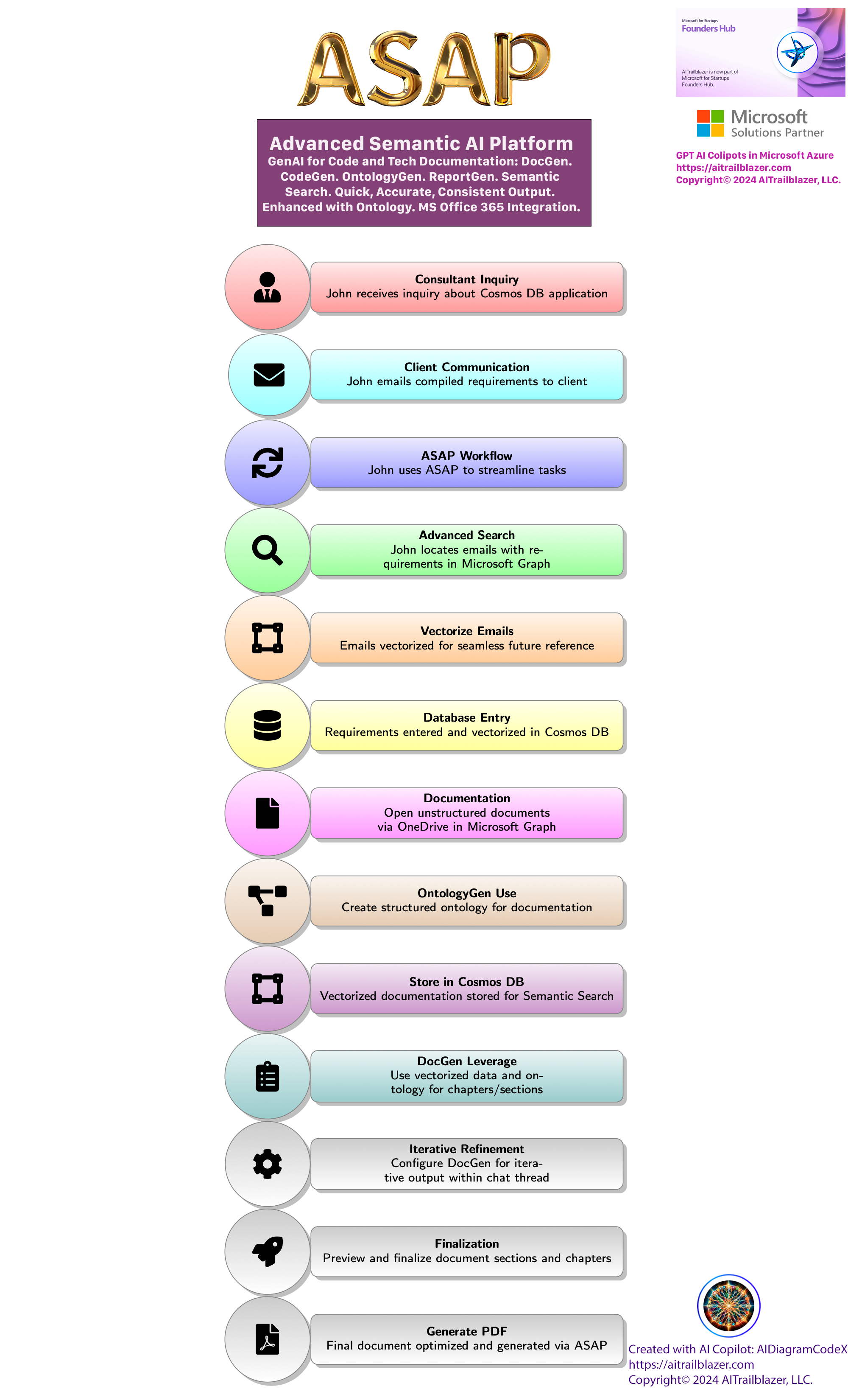

A seasoned Azure Cosmos DB consultant received an inquiry from a prospective customer about developing an application utilizing Cosmos DB. Following their conversation, she compiled the customer's requirements and sent them via email, both to the customer and as a record for herself.

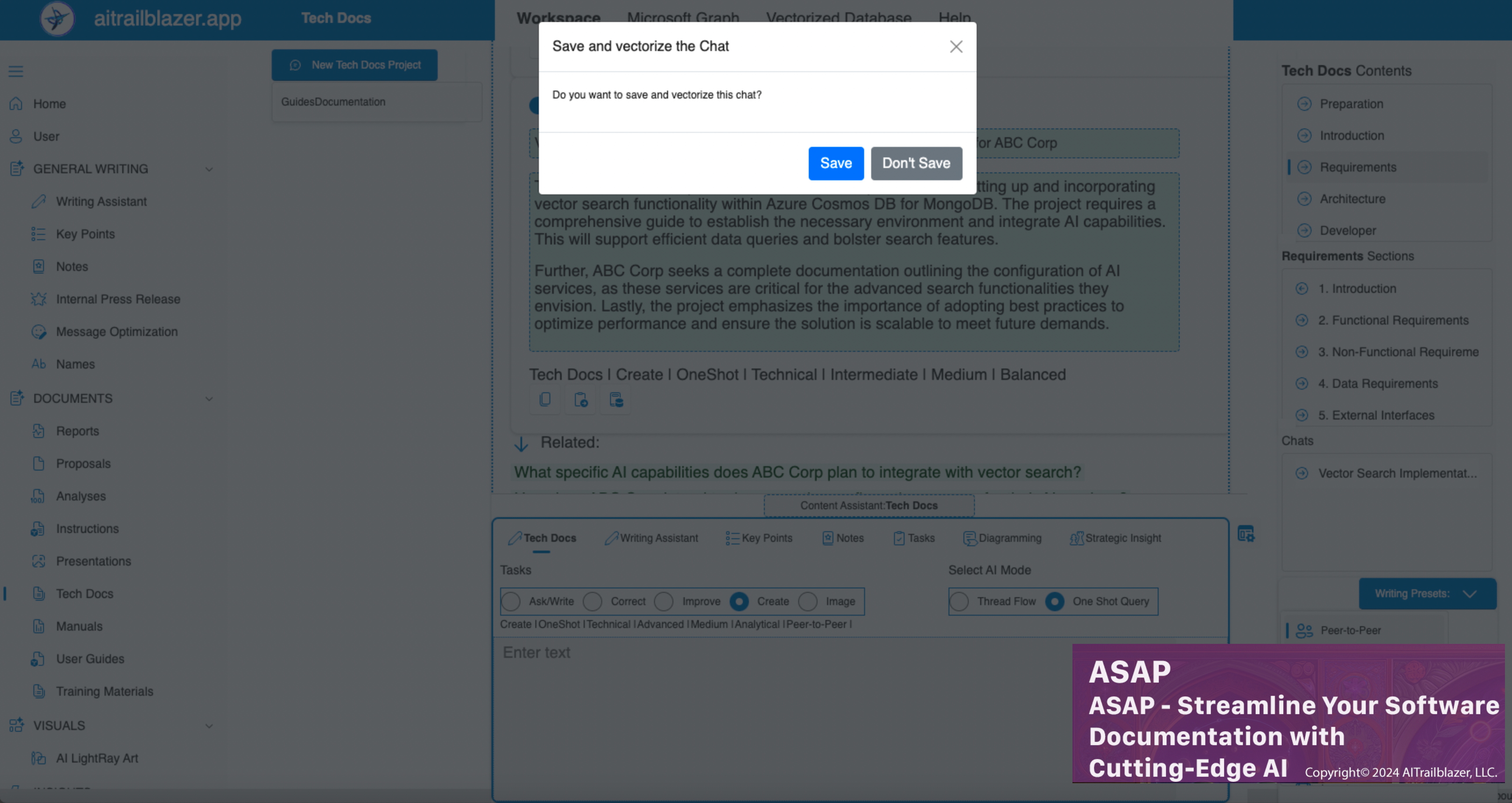

Juggling multiple clients, the consultant relies on ASAP to streamline her workflow. She opens ASAP and clicks on the Microsoft Graph tab. Using the advanced search capabilities, she locates her emails containing the requirements. By vectorizing these emails, she ensures that she won’t need to resubmit them to GPT every time; ASAP combines the system prompt with the custom prompt for seamless future reference.
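The "vectorize once, reuse later" behavior described here can be sketched as a cache keyed by a hash of each email's text, so an unchanged email is never sent to the embedding model twice. In ASAP the vectors are stored in Cosmos DB; here an in-memory dictionary stands in, and `fake_embed` is a hypothetical placeholder for a real embedding call.

```python
import hashlib
from typing import Callable, Dict, List


class EmbeddingCache:
    """Store one embedding per unique text so identical content is embedded only once."""

    def __init__(self, embed: Callable[[str], List[float]]):
        self._embed = embed
        self._store: Dict[str, List[float]] = {}
        self.calls = 0  # number of times the (expensive) embedding function actually ran

    def get(self, text: str) -> List[float]:
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if key not in self._store:
            self.calls += 1
            self._store[key] = self._embed(text)
        return self._store[key]


def fake_embed(text: str) -> List[float]:
    # Hypothetical stand-in for an embedding API call.
    return [float(len(text)), float(text.count(" "))]
```

Hashing the content rather than using, say, a message ID means an edited email gets a fresh vector, while a re-opened unchanged one does not.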

With the requirements entered into the database and vectorized, the consultant turns her attention to documentation. She accesses her internal company documents via the OneDrive interface within the Microsoft Graph tab and opens an unstructured document. Using OntologyGen, she customizes settings to create a structured ontology, storing the generated documentation in Azure Cosmos DB in vectorized form for efficient retrieval through Semantic Search.

Next, the consultant uses TechDocGen with the vectorized requirements and her custom ontology. The ontology follows a structured format of chapters and sections. Starting with one chapter, she configures TechDocGen to refine the output iteratively within a chat thread. Once satisfied with a section, she saves the final version.

After completing each section and ensuring all chapters are ready, the consultant previews the entire document and makes necessary adjustments. Satisfied with the results, she uses ASAP to generate the final document as a PDF, fully optimized for her customer’s needs.

1) Informal notes for custom project

Vector Search Implementation for ABC Corp.

Subject: Project: Vector Search Implementation for ABC Corp.
From: Constantine
To: Constantine
Received: 06/20/2024 18:08

Customer Notes
Project: Vector Search Implementation
Consultant: John Doe
Customer: ABC Corp

Note 1

Date: 2024-06-06

The customer wants to implement Vector Search & AI Assistant for Azure Cosmos DB for MongoDB vCore. They need a guide on setting up the environment and integrating vector search capabilities.

Note 2

Date: 2024-06-07

They also require detailed documentation on configuring AI services to assist with data queries and enhance search functionalities.

Note 3

Date: 2024-06-08

The customer is interested in best practices for optimizing performance and ensuring scalability of the solution.

2) Vectorization-COMPRESS

Using the COSE Copilot with the following settings:

Settings:Create |OneShot |Technical |Distinguished |Large |Focused |Downward Synthesize

Note: the Target Audience Level is Distinguished, i.e., the highest level, because the output is meant to be consumed by the model, which can communicate at the highest level of understanding.

[COMPRESS-START]

Vector Search Implementation for ABC Corp. Project Notes:

Implement Vector Search & AI Assistant on Azure Cosmos DB for MongoDB vCore—setup and integration guide needed. (2024-06-06).

Detailed documentation required for configuring AI services to support data queries and enhance search functionalities. (2024-06-07).

Provide best practices for optimizing performance and ensuring scalability. (2024-06-08).

[COMPRESS-END]
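One way to see what COMPRESS buys is to compare word counts before and after. The sketch below uses the three raw customer notes and the three compressed lines (dates omitted) and treats whitespace-separated words as a rough proxy for tokens; a real measurement would use the target model's tokenizer. The helper names are hypothetical.

```python
from typing import List

# Raw customer notes (Notes 1-3) and the COMPRESS output, with dates omitted.
RAW_NOTES: List[str] = [
    "The customer wants to implement Vector Search & AI Assistant for Azure Cosmos DB "
    "for MongoDB vCore. They need a guide on setting up the environment and integrating "
    "vector search capabilities.",
    "They also require detailed documentation on configuring AI services to assist with "
    "data queries and enhance search functionalities.",
    "The customer is interested in best practices for optimizing performance and "
    "ensuring scalability of the solution.",
]
COMPRESSED: List[str] = [
    "Implement Vector Search & AI Assistant on Azure Cosmos DB for MongoDB "
    "vCore\u2014setup and integration guide needed.",
    "Detailed documentation required for configuring AI services to support data "
    "queries and enhance search functionalities.",
    "Provide best practices for optimizing performance and ensuring scalability.",
]


def word_count(texts: List[str]) -> int:
    # Whitespace-separated words as a rough proxy for tokens.
    return sum(len(t.split()) for t in texts)


def compression_ratio(raw: List[str], compressed: List[str]) -> float:
    """Fraction of the original length that remains after compression."""
    return word_count(compressed) / word_count(raw)


if __name__ == "__main__":
    print(f"{word_count(RAW_NOTES)} -> {word_count(COMPRESSED)} words, "
          f"ratio {compression_ratio(RAW_NOTES, COMPRESSED):.2f}")
```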

3) Vectorization-DECOMPRESS

Settings:Create |OneShot |Technical |Novice |Large |Focused |Downward Synthesize

Note: the Target Audience Level is Novice, i.e., the lowest level, because the output is intended for a person who needs all the information required to start and finish the project.
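Comparing the two settings lines programmatically shows that COMPRESS and DECOMPRESS differ only in the Target Audience Level. The parser below is a hypothetical sketch that assumes settings are `|`-separated values following a `Settings:` prefix, exactly as displayed above.

```python
from typing import Dict, List, Tuple


def parse_settings(line: str) -> List[str]:
    """Split a copilot settings string into its individual values."""
    # Drop the "Settings:" prefix if present, then split on the '|' separators.
    body = line.split(":", 1)[1] if ":" in line else line
    return [part.strip() for part in body.split("|")]


def diff_settings(a: str, b: str) -> Dict[int, Tuple[str, str]]:
    """Map each position whose value differs to the (a, b) pair of values."""
    pa, pb = parse_settings(a), parse_settings(b)
    return {i: (x, y) for i, (x, y) in enumerate(zip(pa, pb)) if x != y}


COMPRESS = "Settings:Create |OneShot |Technical |Distinguished |Large |Focused |Downward Synthesize"
DECOMPRESS = "Settings:Create |OneShot |Technical |Novice |Large |Focused |Downward Synthesize"

if __name__ == "__main__":
    print(diff_settings(COMPRESS, DECOMPRESS))
```

Only the fourth value (index 3) differs: `Distinguished` versus `Novice`. Everything else about the request stays fixed, so the audience level alone switches the copilot between compressing and expanding.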

[DECOMPRESS-START]

ABC Corp. Project: Vector Search Implementation

1. Implementing Vector Search & AI Assistant:

- Platform: Azure Cosmos DB for MongoDB vCore.

- Task: Setup and integration of vector search functionalities with AI Assistant.

- Date: June 6, 2024.

- Requirements: Comprehensive setup and integration guide.

2. Configure AI Services:

- Focus: Supporting data queries.

- Enhancement: Improve search functionalities.

- Date: June 7, 2024.

- Documentation Needs: Detailed configuration instructions for AI services.

3. Performance Optimization & Scalability:

- Goal: Achieve high performance and scalability.

- Recommendations: Best practices for optimization.

- Date: June 8, 2024.

[DECOMPRESS-END]

Leveraging AI Copilot Tasks

After Vectorization-COMPRESS, use the Vectorization-DECOMPRESS output as input to the AI Copilot Tasks:

Task #1 Title: Setup Guide for Vector Search Implementation on Azure Cosmos DB for MongoDB vCore

Category: Development

Priority: High

Framework Justification: Eisenhower Matrix - The task's importance is paramount due to its direct impact on the project's foundation, making it a high-priority task.

Description: Create a comprehensive guide on setting up the environment for Vector Search and AI Assistant for Azure Cosmos DB for MongoDB vCore. Include steps for initial setup, required configurations, and integration processes.

Due Date: 2024-06-13

Assigned To: John Doe

Task #2 Title: Document Configuration of AI Services for Enhanced Search Functionalities

Category: Documentation

Priority: Medium

Framework Justification: MoSCoW Method - Essential for the project’s completion but can be scheduled after initial setup, making it a 'Should have' task.

Description: Develop detailed documentation on how to configure AI services to assist with data queries and enhance search functionalities. Provide examples and step-by-step instructions to ensure clarity.

Due Date: 2024-06-20

Assigned To: John Doe

Task #3 Title: Research and Document Best Practices for Performance Optimization

Category: Research/Documentation

Priority: Medium

Framework Justification: Pareto Principle - Identifying best practices will have a long-term impact on the project's success, focusing efforts on tasks that yield significant results.

Description: Research industry best practices for optimizing performance and ensuring the scalability of the implemented Vector Search solution. Document findings and create guidelines to be included in the overall project documentation.

Due Date: 2024-06-18

Assigned To: John Doe
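The generated tasks map naturally onto a small record type. The sketch below, with a hypothetical `Task` dataclass and an assumed High before Medium before Low ranking, orders the tasks by priority and then by due date, which places Task #3 (due 2024-06-18) ahead of Task #2 (due 2024-06-20). Titles are abbreviated.

```python
from dataclasses import dataclass
from datetime import date
from typing import List

PRIORITY_RANK = {"High": 0, "Medium": 1, "Low": 2}  # assumed ordering of priority labels


@dataclass
class Task:
    number: int
    title: str
    category: str
    priority: str
    due: date
    assignee: str


def schedule(tasks: List[Task]) -> List[Task]:
    """Order tasks by priority first, then by earliest due date."""
    return sorted(tasks, key=lambda t: (PRIORITY_RANK[t.priority], t.due))


TASKS = [
    Task(1, "Setup Guide for Vector Search", "Development", "High", date(2024, 6, 13), "John Doe"),
    Task(2, "Document Configuration of AI Services", "Documentation", "Medium",
         date(2024, 6, 20), "John Doe"),
    Task(3, "Research and Document Best Practices", "Research/Documentation", "Medium",
         date(2024, 6, 18), "John Doe"),
]

if __name__ == "__main__":
    for t in schedule(TASKS):
        print(t.number, t.priority, t.due, t.title)
```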

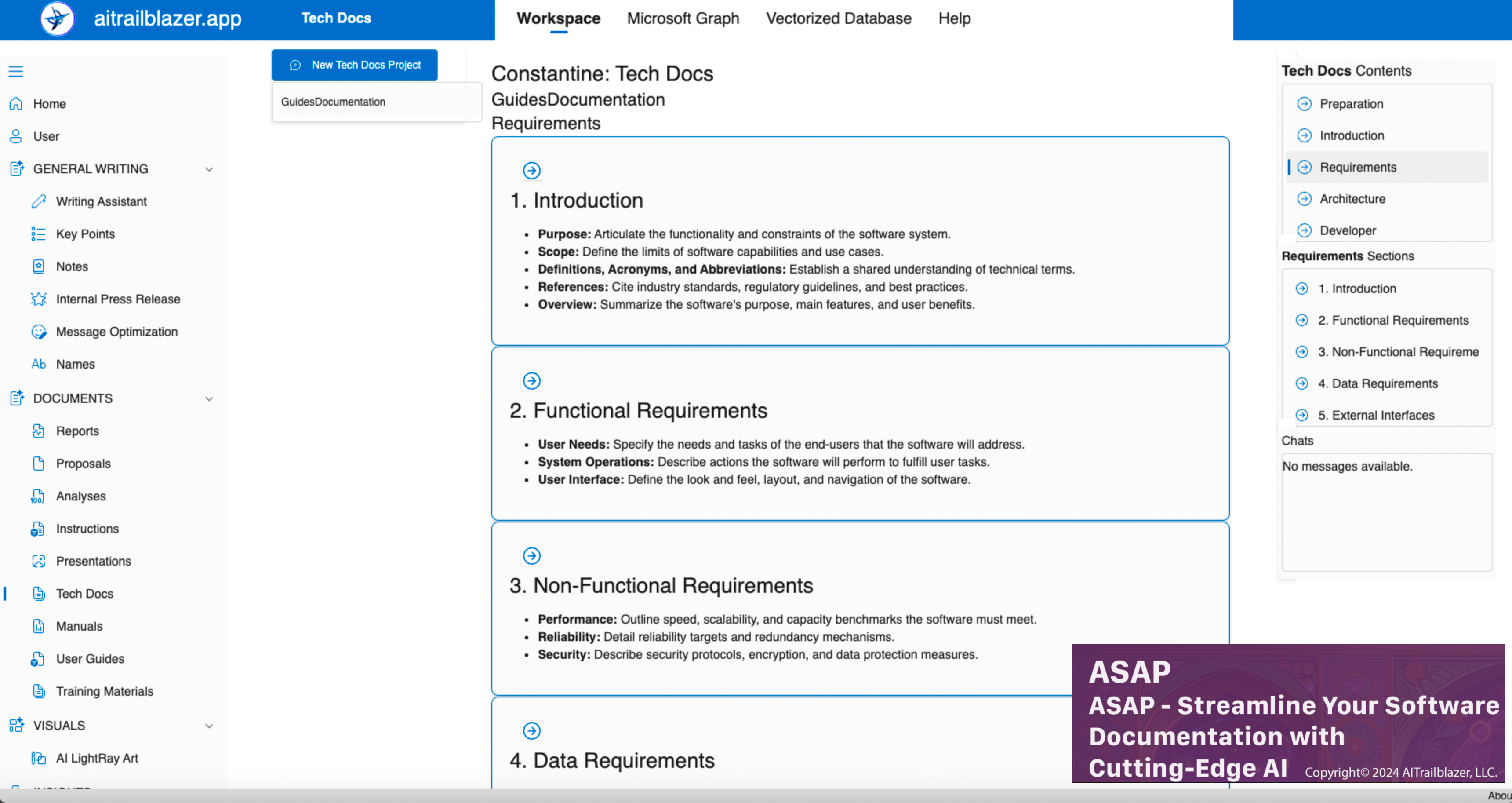

Select an Ontology for creating documentation.

Comprehensive Guide to Azure Cosmos DB Documentation

Software Documentation for Azure Cosmos DB Ontologies

1. Requirements Documentation

1. Introduction

Purpose: Explain the functionality, scalability, and performance of Azure Cosmos DB.

Scope: Cover features, limitations, and use cases.

Definitions, Acronyms, and Abbreviations: Clarify terms such as "partitioning," "throughput," and "global distribution."

References: Include links to Azure documentation, industry best practices, and regulatory standards.

Overview: Summarize the database's multi-model capabilities, global distribution, and consistency models.

2. Functional Requirements

User Needs: Describe the requirements for managing globally distributed databases.

System Operations: Specify CRUD operations, indexing, and query capabilities.

User Interface: Detail the Azure Portal and SDK interfaces.

3. Non-Functional Requirements

Performance: Define latency targets and throughput requirements.

Reliability: Describe SLAs, replication strategies, and failover mechanisms.

Security: Outline encryption standards, access controls, and compliance with data protection laws.

4. Data Requirements

Data Models: Detail support for document, graph, key-value, table, and column-family data models.

Data Storage: Define partitioning schemes, consistency levels, and backup strategies.

Data Manipulation: Specify methods for querying with SQL, Gremlin, MongoDB API, and Table API.

...
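The chapter-and-section structure of the ontology is what allows documentation to be generated one segment at a time. As a hypothetical sketch, an abbreviated copy of the ontology can be held as a nested dictionary and turned into numbered segments in the same shape as the "Segment #1" input that follows; the function and variable names are illustrative only.

```python
from typing import Dict, List

# Abbreviated copy of the ontology: chapter -> section -> guidance.
ONTOLOGY: Dict[str, Dict[str, str]] = {
    "1. Introduction": {
        "Purpose": "Explain the functionality, scalability, and performance of Azure Cosmos DB.",
        "Scope": "Cover features, limitations, and use cases.",
    },
    "2. Functional Requirements": {
        "User Needs": "Describe the requirements for managing globally distributed databases.",
        "System Operations": "Specify CRUD operations, indexing, and query capabilities.",
    },
}


def segments(title: str, ontology: Dict[str, Dict[str, str]]) -> List[str]:
    """Turn each chapter into a self-contained segment that can be generated and refined alone."""
    out = []
    for n, (chapter, sections) in enumerate(ontology.items(), start=1):
        body = "\n".join(f"{name}: {guide}" for name, guide in sections.items())
        out.append(f"Segment #{n}:\n{title}\n{chapter}\n{body}")
    return out


if __name__ == "__main__":
    for seg in segments("Comprehensive Guide to Azure Cosmos DB Documentation", ONTOLOGY):
        print(seg, end="\n\n")
```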

Start by generating the Introduction section.

For example, the input is:

Task #2 Title: Document Configuration of AI Services for Enhanced Search Functionalities

Category: Documentation

Priority: Medium

Framework Justification: MoSCoW Method - Essential for the project’s completion but can be scheduled after initial setup, making it a 'Should have' task.

Description: Develop detailed documentation on how to configure AI services to assist with data queries and enhance search functionalities. Provide examples and step-by-step instructions to ensure clarity.

Due Date: 2024-06-20

Assigned To: John Doe

It is combined with:

Segment #1:

Comprehensive Guide to Azure Cosmos DB Documentation

1. Introduction

Purpose: Explain the functionality, scalability, and performance of Azure Cosmos DB.

Scope: Cover features, limitations, and use cases.

Definitions, Acronyms, and Abbreviations: Clarify terms such as "partitioning," "throughput," and "global distribution."

References: Include links to Azure documentation, industry best practices, and regulatory standards.

Overview: Summarize the database's multi-model capabilities, global distribution, and consistency models.

Running the combined input with a custom command produces:

Comprehensive Guide to Azure Cosmos DB Documentation

1. Introduction

Purpose: This guide aims to provide an in-depth understanding of Azure Cosmos DB, focusing on its functionality, scalability, and performance.

Scope: We'll cover the database's features, limitations, and use cases to give you a comprehensive overview of its capabilities.

Definitions, Acronyms, and Abbreviations:

- Partitioning: Method to distribute data across separate storage units for better scalability.

- Throughput: Measure of data flow rate; how much data can be processed per second.

- Global Distribution: Capability of distributing data across multiple geographic regions for improved access and redundancy.

References:

- Azure Documentation: Azure Cosmos DB Documentation

- Industry Best Practices: Refer to published best practices for database management.

- Regulatory Standards: Links to GDPR and other relevant regulatory information.

Overview: Azure Cosmos DB is a globally distributed, multi-model database service. It supports various data models and offers five consistency levels. Its global distribution capabilities ensure data is available and responsive worldwide while maintaining high performance and scalability.
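The five consistency levels mentioned in the Overview form an ordered spectrum from strongest to weakest. A small sketch, using a hypothetical `Consistency` enum and helper, encodes that ordering:

```python
from enum import IntEnum


class Consistency(IntEnum):
    """Azure Cosmos DB consistency levels, ordered from strongest (1) to weakest (5)."""
    STRONG = 1
    BOUNDED_STALENESS = 2
    SESSION = 3
    CONSISTENT_PREFIX = 4
    EVENTUAL = 5


def at_least_as_strong(a: Consistency, b: Consistency) -> bool:
    """True if level a sits at or above level b on the consistency spectrum."""
    return a <= b
```

Moving down the list generally trades stronger read guarantees for lower latency and higher availability.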

Task

Document Configuration of AI Services for Enhanced Search Functionalities

Category: Documentation

Priority: Medium

Description: This section provides a step-by-step guide on configuring AI services to improve data query capabilities and enhance search functionalities.

Introduction to AI Services:

- Purpose: AI services can analyze and interpret complex data queries, providing more accurate search results.

- Scope: Covers configuration, integration, and optimization.

Step-by-Step Configuration:

- Set Up AI Service:
  - Navigate to the Azure Portal: Log in and select your subscription.
  - Create a New AI Service: Choose the service suitable for enhanced search, such as Azure Cognitive Search (now Azure AI Search).
  - Configure Basic Settings: Define your service name, location, and pricing tier.

- Integration with Cosmos DB:
  - Connect Data Source: Link your Azure Cosmos DB account as the data source.
  - Index Data: Set up indexing to ensure data is searchable.
  - Define Search Index Schema: Create fields and specify data types to be analyzed by AI.

- Enhanced Search Features:
  - Natural Language Processing (NLP): Enable NLP to improve query understanding.
  - Synonym Mapping: Add synonyms to improve search result relevance.
  - Custom Scoring Profiles: Create scoring profiles to prioritize certain data attributes.
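The schema, synonym, and scoring-profile steps above correspond to JSON definitions sent to the Azure AI Search REST API (the service formerly named Azure Cognitive Search). The sketch below builds plausible bodies as Python dictionaries; the index name, field names, synonym entries, and title weight are hypothetical.

```python
import json
from typing import Any, Dict


def synonym_map_definition(name: str) -> Dict[str, Any]:
    """Synonym map in Solr format; each line lists terms treated as equivalent."""
    return {"name": name, "format": "solr", "synonyms": "db, database\nkb, knowledge base"}


def search_index_definition(index_name: str, synonym_map: str) -> Dict[str, Any]:
    """Index body with a key field, searchable text fields, a synonym map, and a scoring profile."""
    return {
        "name": index_name,
        "fields": [
            {"name": "id", "type": "Edm.String", "key": True, "searchable": False},
            {"name": "title", "type": "Edm.String", "searchable": True},
            # Queries against 'content' are expanded using the synonym map.
            {"name": "content", "type": "Edm.String", "searchable": True,
             "synonymMaps": [synonym_map]},
        ],
        "scoringProfiles": [
            # Term matches in 'title' are weighted twice as heavily.
            {"name": "boost-title", "text": {"weights": {"title": 2}}},
        ],
    }


if __name__ == "__main__":
    print(json.dumps(synonym_map_definition("cosmos-synonyms"), indent=2))
    print(json.dumps(search_index_definition("cosmos-docs", "cosmos-synonyms"), indent=2))
```

The synonym map would be created first (`PUT /synonymmaps/{name}`), then the index (`PUT /indexes/{name}`); the scoring profile is applied at query time through the `scoringProfile` search parameter.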

Testing and Optimization:

- Run Test Queries: Perform various searches to ensure configurations are correct.

- Adjust Parameters: Fine-tune index schema and scoring profiles based on test results.

- Monitor Performance: Use Azure Monitor to track usage and performance metrics.

Conclusion:

- Ensuring AI services are correctly configured enhances the usability and relevance of search functionalities, driving better insights and data utilization.

By following this guide, users will be equipped to set up and optimize AI services with Azure Cosmos DB, thereby enhancing search functionalities and improving data retrieval efficiency.
